Navigating the Path to CyberPeace: Insights and Strategies

Featured #factCheck Blogs

Executive Summary

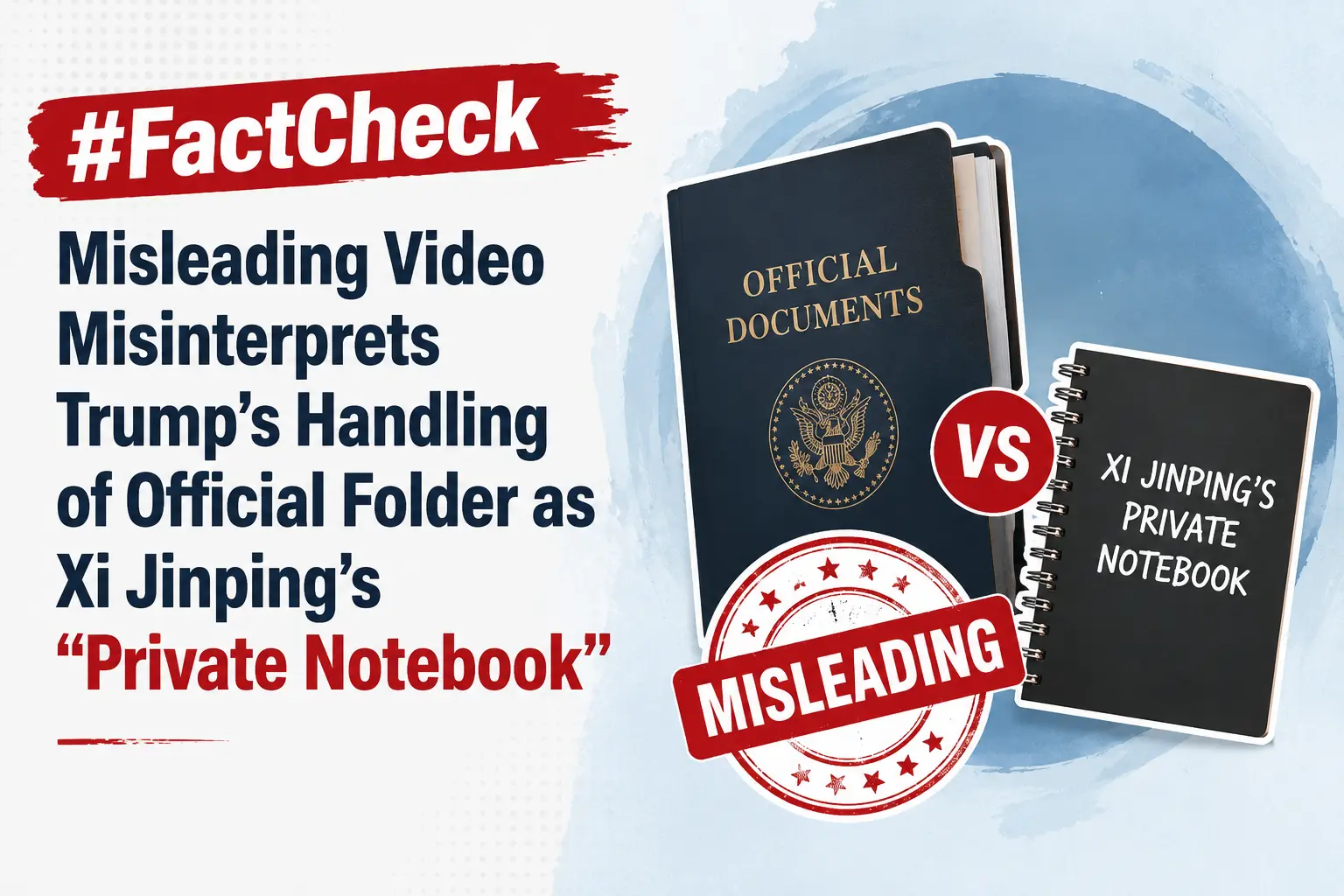

A video circulating on social media claims that during a summit in Beijing, Donald Trump was seen peeking into Chinese President Xi Jinping’s “private notebook” while Xi briefly stepped away. However, a fact-check by CyberPeace Research Wing found the claim to be baseless. A review of the full event footage clearly shows that the folder in question belonged to Donald Trump himself, not Xi Jinping. The viral interpretation is therefore misleading.

Claim

An X user shared the clip alleging, “Trump caught sneaking a peek at Xi Jinping’s private notebook during a Beijing banquet while Xi stepped away.”

Fact Check

A longer version of the video, shared by NBC News on May 14, shows the state banquet held at the Great Hall of the People in Beijing. Around the 1-minute-50-second mark, Xi Jinping, seated to Trump’s left, gets up and walks to the podium. The viral clip follows shortly after, showing Trump opening the folder placed to his left and flipping through its pages.

The White House also uploaded the full footage on its official YouTube channel, showing wider, uninterrupted shots of the event. Around the two-minute mark, the announcer says, “And now a toast by President Xi,” after which Xi Jinping stands up. Immediately after, Trump is seen opening the folder on his left and reading from it.

Later in the video, around the 12-minute mark, when Xi returns to his seat, Trump is seen standing up, taking the folder with him to the podium, turning pages, and reading from it. The same sequence can also be seen in the NBC News footage at around 11 minutes and 50 seconds. This clearly indicates that the folder belonged to the U.S. President and not Xi Jinping, and that Trump was not peeking into any private notebook. Another key detail is the embossed emblem on the folder, which closely resembles the Seal of the President of the United States. The American bald eagle, the national bird of the United States, is clearly visible at the centre. A comparison between the viral screenshot and the official seal shows they are nearly identical.

Conclusion

The viral claim is misleading and taken out of context. A detailed review of the full footage, including official recordings from NBC News and the White House, clearly shows that the folder in question belonged to Donald Trump and not Chinese President Xi Jinping. At multiple points in the video, Trump is seen opening, handling, and reading from the same folder, including while Xi Jinping is away from his seat and later after he returns. The visual evidence from the event also supports this conclusion. The embossed seal on the folder matches the official Seal of the President of the United States, further confirming that it was part of Trump’s official briefing material and not any private document belonging to Xi Jinping. Taken together, the full sequence of events and official video sources make it clear that the viral narrative has been incorrectly framed. There is no evidence to suggest that Trump was peeking into Xi Jinping’s personal notebook.

Executive Summary

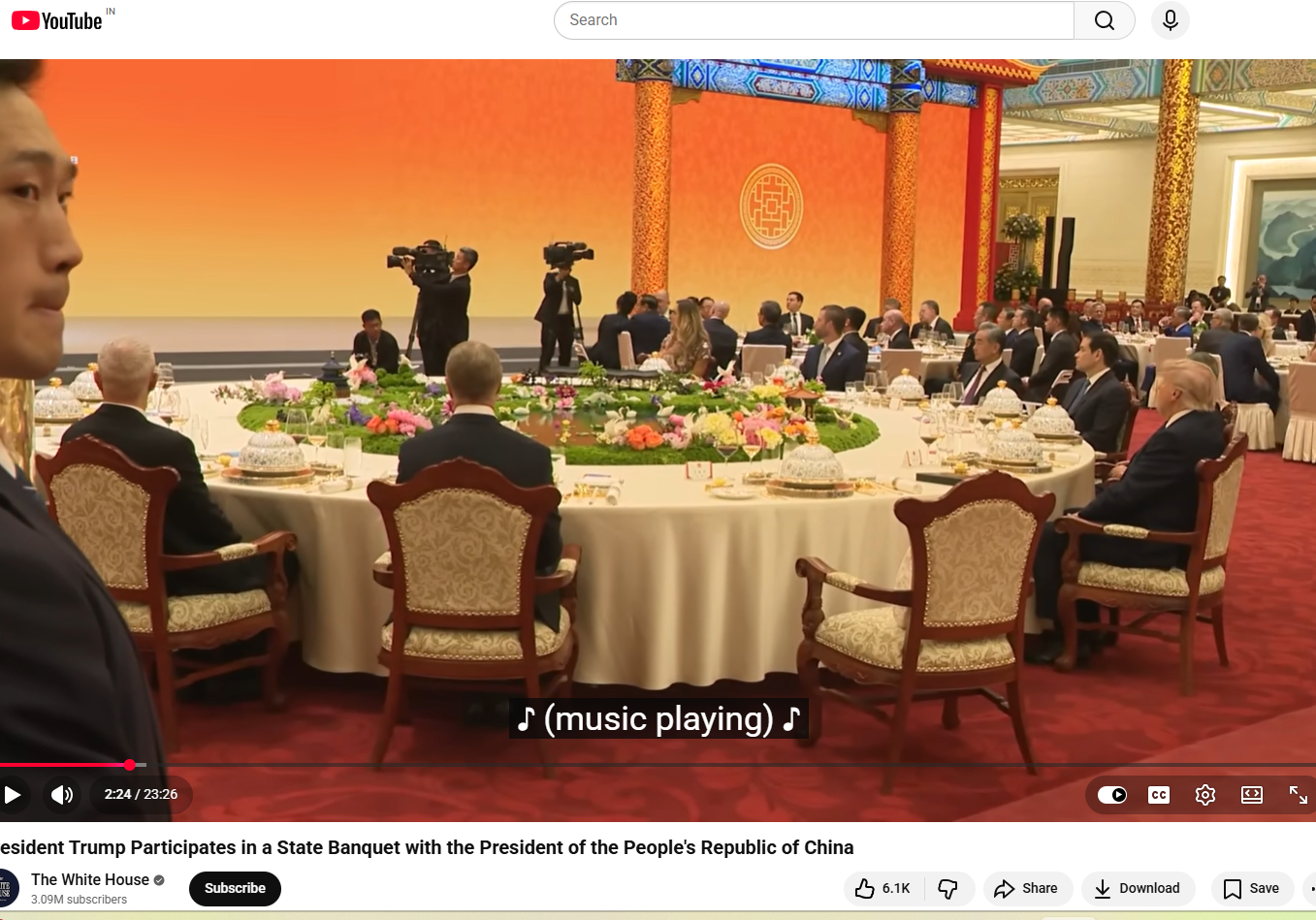

A graphic widely circulating on social media claims that Union Home Minister Amit Shah has warned, “A major crisis is coming; if possible, skip one meal a day.” The claim has been found to be false in a fact-check conducted by CyberPeace Research Wing. The research revealed that Amit Shah has not made any such statement.

Claim

A Facebook user shared the viral graphic on May 17, 2026, claiming that BJP leader and Home Minister Amit Shah issued a “warning” to the public, allegedly saying people should be prepared for a major crisis and consider skipping one meal a day. The post has been widely circulated on social media, drawing significant attention and discussion.

- https://www.facebook.com/photo.php?fbid=1509406197622070&set=pb.100056581115590.-2207520000&type=3

- https://archive.ph/Z9Tle

Fact Check

A keyword-based search on Google did not return any credible news reports supporting the claim. Further scrutiny of the official account of the Ministry of Home Affairs on X also found no mention or statement matching the viral claim.

A separate review of the official X account of Home Minister Amit Shah also did not show any such statement or post confirming the viral claim.

Conclusion

The viral claim is false. Union Home Minister Amit Shah has not made any such statement.

Executive Summary

A video circulating on social media falsely claims that Air India has cancelled all of its international flights until July due to a fuel shortage. However, research conducted by CyberPeace Research Wing found the claim to be misleading and false. Our research revealed that Air India has made no announcement regarding the cancellation of all international flights. In reality, the airline has only made temporary reductions and adjustments on select international routes due to increasing operational pressure and the impact on profitability.

Claim

An X (formerly Twitter) user shared the viral video on May 3, 2026, claiming: “Due to a fuel shortage, Air India has cancelled all its international flights until July.” The post quickly gained attention and was widely shared on social media platforms.

Fact Check

To verify the claim, we examined the official social media accounts of Air India. During the research, we found a post on X in which the airline itself described the viral claim as fake and misleading.

Taking the research further, we searched using relevant keywords and found a report published by ETNOW Swadesh on May 13, 2026. According to the report, Air India has not cancelled all international flights. Instead, due to mounting operational costs and pressure on profitability, the airline has temporarily reduced or modified services on a few select international routes.

Conclusion

Our research found that Air India has not announced the cancellation of all international flights until July. The viral claim circulating on social media is false and misleading. The airline has only implemented temporary adjustments and reductions on certain international routes, not a complete suspension of global operations.

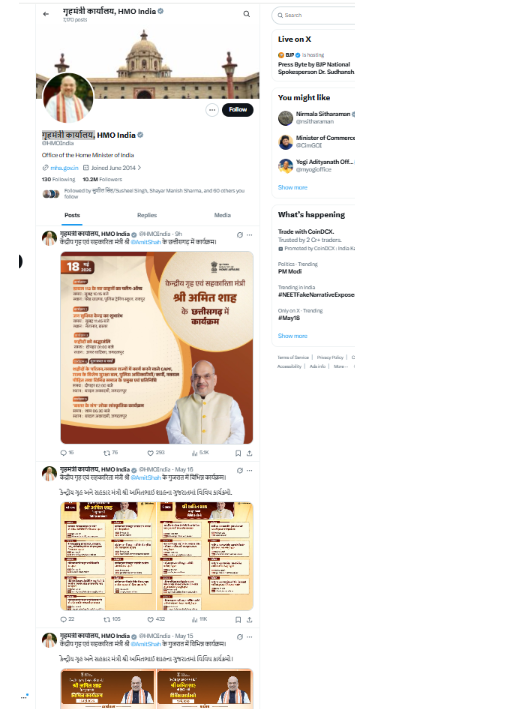

Executive Summary

A purported front page of The Hindu dated June 6, 1967, is being widely circulated on social media with the claim that then Prime Minister Indira Gandhi had urged Indians not to buy gold in any form and to follow “national discipline” by restricting gold consumption. The viral claim suggests that the appeal was part of the government’s efforts to conserve foreign exchange reserves, which were allegedly under severe pressure at the time. However, research conducted by CyberPeace Research Wing found the claim to be false. Our research revealed that the front page being circulated online is not authentic and has been digitally edited.

Claim

An X (formerly Twitter) user shared the viral newspaper clipping on May 12, 2026, and wrote: “In 1967, during a severe foreign exchange crisis, Indira Gandhi appealed to Indians not to buy gold. From ‘skip one meal’ to ‘don’t buy gold,’ Congress-era governance normalized shortages, restrictions, and sacrifice, while ordinary citizens paid the price for failed economic policies.”

Research

To verify the claim, we examined the official social media accounts of The Hindu. During the research, we found a post published on the publication’s official X account on May 12, 2026, clarifying that the viral image claiming to be the June 6, 1967 front page of The Hindu was digitally altered and not part of its official archives. The newspaper also urged readers to verify information carefully before sharing it online.

We also found an X post by B Kolappan, a journalist with The Hindu, who shared the original front page of the newspaper dated June 6, 1967, further exposing the viral image as fake.

For context, Prime Minister Narendra Modi, while addressing a public gathering on May 10, 2026, spoke about the possible economic impact of the ongoing conflict in the Middle East and advised people to avoid buying gold for a year, even during weddings or family functions. The viral claim appears to have resurfaced in this backdrop.

Conclusion

Our research found that the alleged 1967 front page of The Hindu circulating on social media is digitally edited and fake. There is no evidence that the viral newspaper page is authentic or part of The Hindu’s archival records.

Executive Summary

A video featuring former Indian cricketer Sachin Tendulkar is being widely circulated on social media with the date “12-5-2026” displayed on the screen. In the viral clip, Tendulkar appears to promote an investment scheme, allegedly saying that people investing in the scheme today could earn Rs 80 lakh by the end of the day. Throughout the video, he is seen speaking about investment opportunities and financial returns. However, research conducted by CyberPeace Research Wing found that the video is AI-generated and misleading. The original footage was actually from an event marking the centenary celebrations of Sri Sathya Sai Baba.

Claim

A Facebook user shared the viral video on May 12, 2026, claiming that Sachin Tendulkar was endorsing a high-return investment scheme. The post quickly gained traction on social media platforms.

Fact Check

To verify the claim, we searched the internet using relevant keywords but found no credible media reports suggesting that Tendulkar had endorsed any such investment scheme. As part of our research, we extracted key frames from the viral clip and conducted a reverse image search. During the search, we found the original video uploaded on November 19, 2025, on the YouTube channel of IANS. According to the video description, Tendulkar was attending an event organized to mark the centenary year celebrations of Sri Sathya Sai Baba.

We further found a similar version of the same video uploaded on November 19, 2025, on the official Facebook page of Times Now, confirming that the footage was unrelated to any investment or financial scheme.

Conclusion

Our research found that the viral video has been manipulated using AI-generated audio or editing techniques to falsely portray Sachin Tendulkar promoting an investment scheme. The original video was from a public event related to Sri Sathya Sai Baba’s centenary celebrations and had no connection to any financial investment platform.

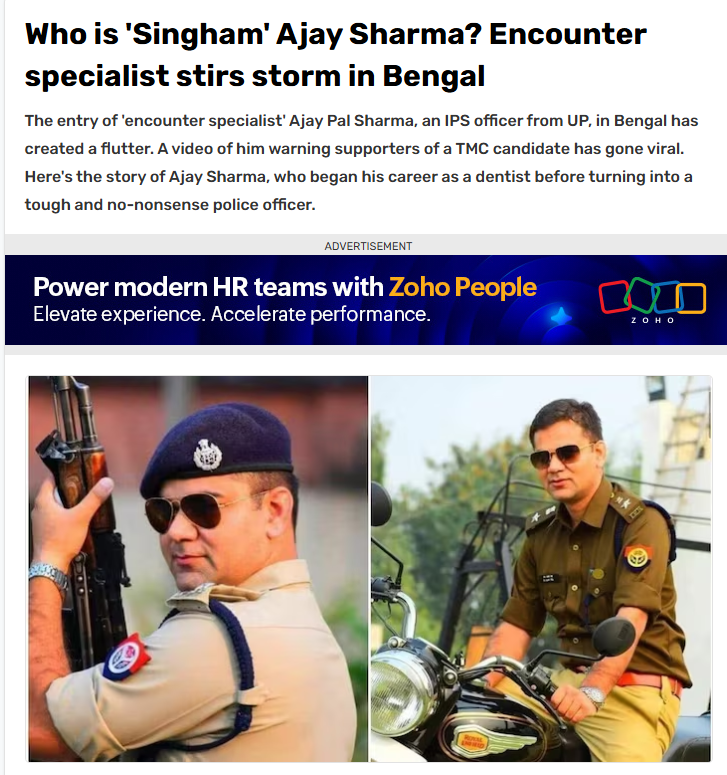

Executive Summary

Ahead of the final phase of the West Bengal Assembly elections, a claim regarding Uttar Pradesh cadre IPS officer Ajay Pal Sharma began circulating widely on social media. Users claimed that Sharma was being sent to West Bengal on deputation for a period of five years. However, research conducted by CyberPeace Research Wing found the claim to be false. Sources close to the IPS officer confirmed that no such deputation order has been issued so far and that Ajay Pal Sharma is currently posted as Additional Commissioner in Prayagraj, Uttar Pradesh. Ajay Pal Sharma had earlier been deployed as a police observer during the West Bengal elections. During that period, a video of him warning Trinamool Congress candidate Jahangir Khan from the Falta constituency had gone viral on social media.

Claim

Several users on Facebook and X claimed that Ajay Pal Sharma had been transferred to West Bengal for five years under an administrative arrangement involving experienced officers from different states. One Facebook user wrote: “This decision has been taken under an administrative arrangement through which experienced officers are deployed in different states.”

- https://www.facebook.com/photo.php?fbid=818902764628152&set=a.296761956842238&type=3

- https://perma.cc/FD8Q-CF7L?type=standard

Fact Check

Our research found that the deputation claim is false. Ajay Pal Sharma is currently serving as Additional Commissioner in Prayagraj, a position he has held since 2025. Further scrutiny revealed that the claim appears to have originated from a parody account on X. On May 4, around 6 PM, the account @abdullah_0mar posted the claim regarding Sharma’s alleged five-year deputation to Bengal. However, in the comments section, the user later clarified that the post was intended as satire.

We also reviewed several news reports regarding Ajay Pal Sharma’s role during the West Bengal elections. Reports confirmed that the Election Commission had deployed him as a police observer in South 24 Parganas district during the polls. However, none of the reports mentioned any five-year transfer or deputation to West Bengal.

Conclusion

The viral claim is false. No official order has been issued regarding IPS officer Ajay Pal Sharma’s deputation to West Bengal for five years. Sources close to the officer confirmed that he continues to serve as Additional Commissioner in Prayagraj, Uttar Pradesh. Sharma had only been deputed as a police observer during the West Bengal Assembly elections, during which a video of him warning TMC candidate Jahangir Khan went viral online.

Executive Summary

Following the results of the recent West Bengal elections, a video of former Chief Election Commissioner Rajiv Kumar has gone viral on social media. In the clip, Kumar is seen questioning television news channels over their election-result coverage and the early “trends” they allegedly show before counting actually begins. In the viral video, Rajiv Kumar can be heard saying, “When counting begins, channels start showing trends from 8:05 AM itself, which is nonsense. The first round of counting starts only at 8:30 AM. We have evidence that leads were being shown before that. Is it possible that these early trends are shown just to justify exit polls?” The video is being widely shared with the claim that Kumar made these remarks after the recently concluded West Bengal Assembly elections. Research conducted by CyberPeace Research Wing found that a 2024 video of former Chief Election Commissioner Rajiv Kumar is being misleadingly shared as a recent statement made after the West Bengal election results.

Claim

An Instagram user shared the viral clip suggesting that the former Election Commissioner made these comments in the context of the latest West Bengal poll results.

Fact Check

Using relevant keyword searches, we traced the original source of the clip to an official post shared by the Election Commission of India on Facebook on October 15, 2024. The video was part of a press conference announcing the Assembly election schedule for Maharashtra and Jharkhand.

We also found the complete live-streamed press conference on the official YouTube channel of the Election Commission.

During the press conference, around the 26:45 mark, an ANI journalist referred to discrepancies between exit polls and actual Lok Sabha election results and asked whether such situations fuel doubts over EVMs among the public. Responding to the question at around the 30:27 mark, Rajiv Kumar spoke about the need for self-regulation in electronic media and raised concerns over premature “trends” shown on counting day. He said that exit polls often create public expectations despite lacking a clear scientific basis and questioned why TV channels begin displaying leads even before the first official counting round starts.

Conclusion

The viral claim is misleading. The video of former Chief Election Commissioner Rajiv Kumar is not related to the recent West Bengal election results. The clip is from an October 15, 2024 press conference held to announce the Maharashtra and Jharkhand Assembly election schedule and is now being falsely shared in a misleading context after the West Bengal polls.

Executive Summary

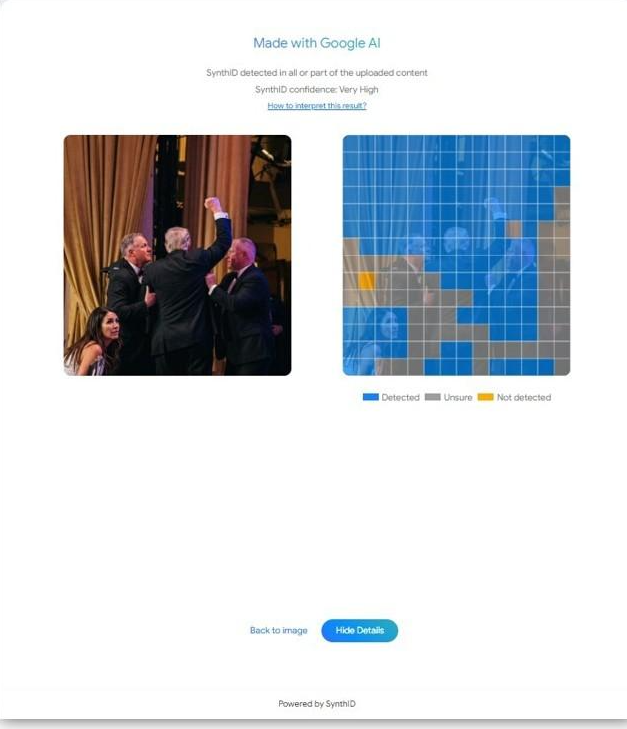

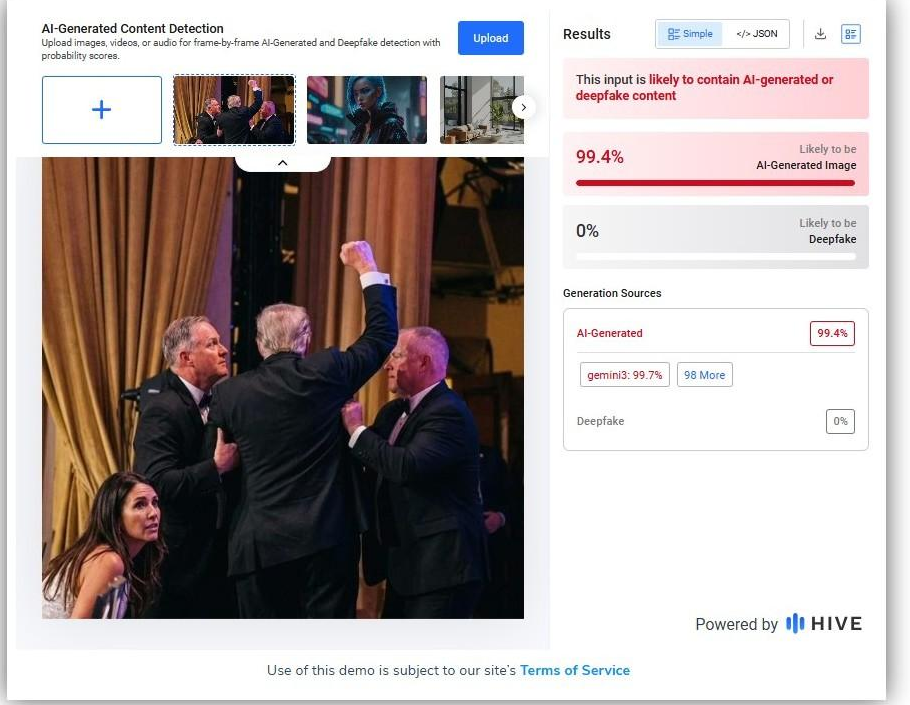

Amid rising tensions involving Iran, Israel and the United States following reports in early April 2026 that Iran had shot down an American fighter aircraft, a picture is going viral on social media claiming to show Iranian soldiers standing beside the wreckage of a destroyed helicopter while holding the Iranian flag. Research by CyberPeace Research Wing found that the viral claim is false. The image has been created using artificial intelligence and does not depict any real incident. The picture was generated using Google AI tools and is being misleadingly circulated online with different claims.

Claim

A Facebook page named “official salman 09” shared the image on May 1, 2026, along with a lengthy caption describing the scene as a symbol of Iran’s battlefield success. The post portrayed the image as evidence of a helicopter being brought down during ongoing tensions in the Middle East and suggested that the photo reflected strength, morale and victory in war.

- https://www.facebook.com/permalink.php?story_fbid=pfbid02TAac6JwZha2UU4T8QiCGq4ENmsnNSwvigaz3vKxr9UWLbhghNsnMMpZdQ3dUuQ1rl&id=100092392280139

- https://archive.ph/

Fact Check

To verify the authenticity of the image, we first conducted a reverse image search using Google Lens. The image did not appear in any credible news reports or authentic media coverage. Instead, it was found circulating mainly on social media platforms, raising suspicion about its authenticity. We then analyzed the image using Google’s SynthID detector. The analysis confirmed the presence of a SynthID watermark with a “very high confidence” score, indicating that the image had been generated using Google AI tools. SynthID is Google’s watermarking technology used to identify AI-generated content created through its models.

Further verification using another AI-detection platform, Hive Moderation, also indicated a high probability that the image had been generated using AI. The tool identified Gemini as the likely source and assessed the image as overwhelmingly AI-generated.

Conclusion

Our research confirms that the viral image is AI-generated and unrelated to any real-world event. The picture showing soldiers holding the Iranian flag near helicopter wreckage was created using Google AI tools and is being falsely shared on social media to spread misleading claims.

Executive Summary

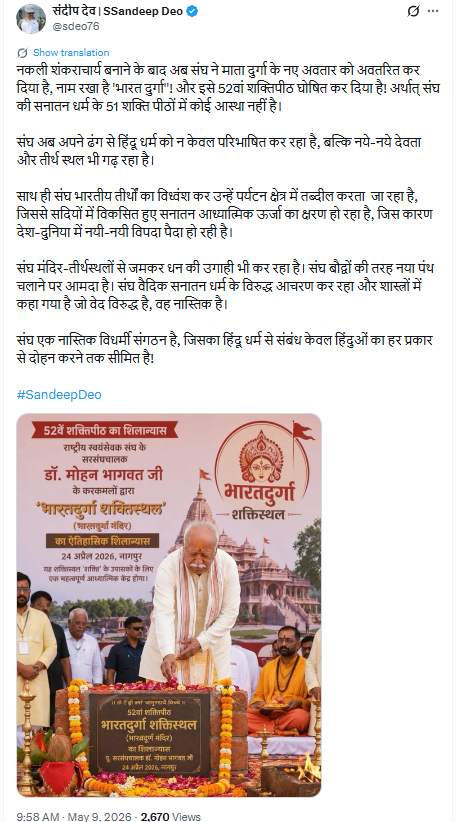

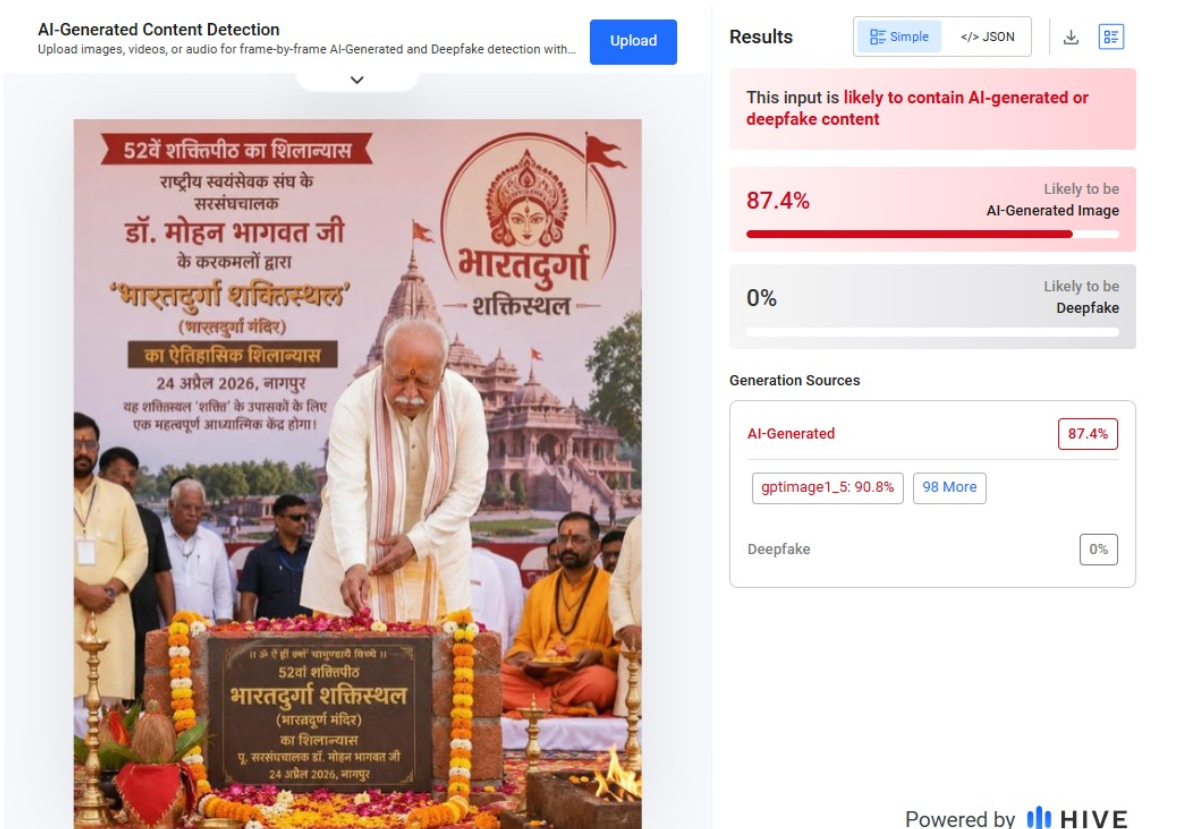

A picture of Mohan Bhagwat is going viral on social media, showing him allegedly laying the foundation stone of a so-called “52nd Shakti Peeth” named Bharatdurga. Users are claiming that the RSS chief insulted Sanatan Dharma by creating a new Shakti Peeth in Nagpur. Research by CyberPeace Research Wing found that the claim is false. The viral image is AI-generated, and Mohan Bhagwat did not inaugurate any “52nd Shakti Peeth.” In reality, he laid the foundation stone of the Bharatdurga Temple.

Claim

An X user named Sandeep Dev shared the viral image and alleged that after creating “fake Shankaracharyas,” the RSS had now introduced a new form of Goddess Durga called “Bharat Durga” and declared it the 52nd Shakti Peeth. The post further claimed that the RSS was redefining Hinduism and creating new religious sites.

Fact Check

To verify the claim, we searched relevant keywords and found reports published on April 24, 2026, by India TV and Navbharat Times. According to these reports, Mohan Bhagwat laid the foundation stone of the world’s first Bharatdurga Temple in Nagpur during a ceremony organized at the Jamtha campus of Dr. Abaji Thatte Seva and Research Institute. Several prominent saints and religious leaders attended the event. Importantly, none of the reports described the temple as the “52nd Shakti Peeth.”

Further research led us to the full video of the foundation ceremony uploaded on the YouTube channel of Devendra Fadnavis. The stage backdrop and video thumbnail clearly mention “Bharatdurga Temple Foundation Ceremony.” However, the scene shown in the viral image does not appear anywhere in the authentic footage.

We also analyzed the viral image using AI detection tools, which indicated that the image was AI-generated.

Conclusion

Our research confirms that the claim about Mohan Bhagwat laying the foundation of a “52nd Shakti Peeth” is false. The viral image is AI-generated. In reality, he participated in the foundation ceremony of the Bharatdurga Temple in Nagpur.

Executive Summary

Assembly election results for West Bengal, Assam, Kerala, Tamil Nadu and the Union Territory of Puducherry have been declared, with the Bharatiya Janata Party (BJP) set to form the government in West Bengal after defeating the Trinamool Congress (TMC). Amid celebrations and reports of violence in the state, several misleading videos and images are also circulating on social media. One such viral clip shows people waving the Indian tricolour and saffron flags during a street celebration. Social media users are claiming that the video captures people celebrating a political change and BJP’s victory in West Bengal. Research by CyberPeace Research Wing found that the claim is false. The viral video is not from West Bengal but from Prayagraj and actually shows celebrations after India’s victory in the ICC Men's T20 World Cup 2026.

Claim

An X user named “Ashok Shrivastav” shared the video on May 6, 2026, claiming that people in West Bengal were celebrating the departure of Mamata Banerjee and the TMC government. The user further claimed that people were waving only the national flag and saffron flags, not BJP flags.

Fact Check

To verify the claim, we extracted several keyframes from the viral video and conducted a reverse image search using Google Lens. The clip was found on multiple social media handles, where it was falsely linked to West Bengal.

However, the oldest version of the video was uploaded on March 8, 2026, by an Instagram page named “Streets of Sangam.” The caption identified the location as Prayagraj and included hashtags related to the World Cup and Loknath. While comparing the viral and original videos, we noticed a shop sign reading “Suman Ornaments.” Using Google Street View, we traced the location to the Baba Loknath area in Prayagraj, where the same shop could be identified near Loknath Gate.

Conclusion

Our research confirms that the viral claim is fake. The video being shared as BJP victory celebrations in West Bengal is actually from Prayagraj, Uttar Pradesh, and dates back to March 2026, when locals celebrated Team India’s T20 World Cup victory. The old clip is now being misleadingly circulated with a false political narrative.

Executive Summary

A video allegedly showing India’s Defence Secretary Rajesh Kumar Singh making remarks about Pakistan’s cyber capabilities is being widely shared on social media. The clip claims that Singh admitted Pakistan had “jammed Indian systems” on May 10 and described Pakistan’s cyber and electronic warfare capabilities as a major challenge for India. Research by CyberPeace Research Wing found that the viral clip is an AI-generated deepfake being circulated to spread misinformation. Rajesh Kumar Singh never made any such statement.

Claim

An X user shared the viral video claiming that India’s Defence Secretary had acknowledged Pakistan’s technological superiority. The post alleged that Singh admitted Pakistan successfully jammed Indian systems and claimed that India was lagging behind in cyber and electronic warfare technology.

Fact Check

To verify the claim, we searched relevant keywords on Google but found no credible media reports carrying such a statement from the Defence Secretary. We then extracted keyframes from the viral clip and conducted a reverse image search. During the research, we found the original video uploaded on the YouTube channel of ANI on April 30, 2026.

A review of the full video confirmed that Rajesh Kumar Singh never made the remarks heard in the viral clip. The original footage had been manipulated using AI-generated audio.

Conclusion

Our research confirms that the viral video is fake and AI-manipulated. The statement attributed to India’s Defence Secretary Rajesh Kumar Singh is fabricated, and the deepfake clip is being shared with misleading claims to spread disinformation.

Executive Summary

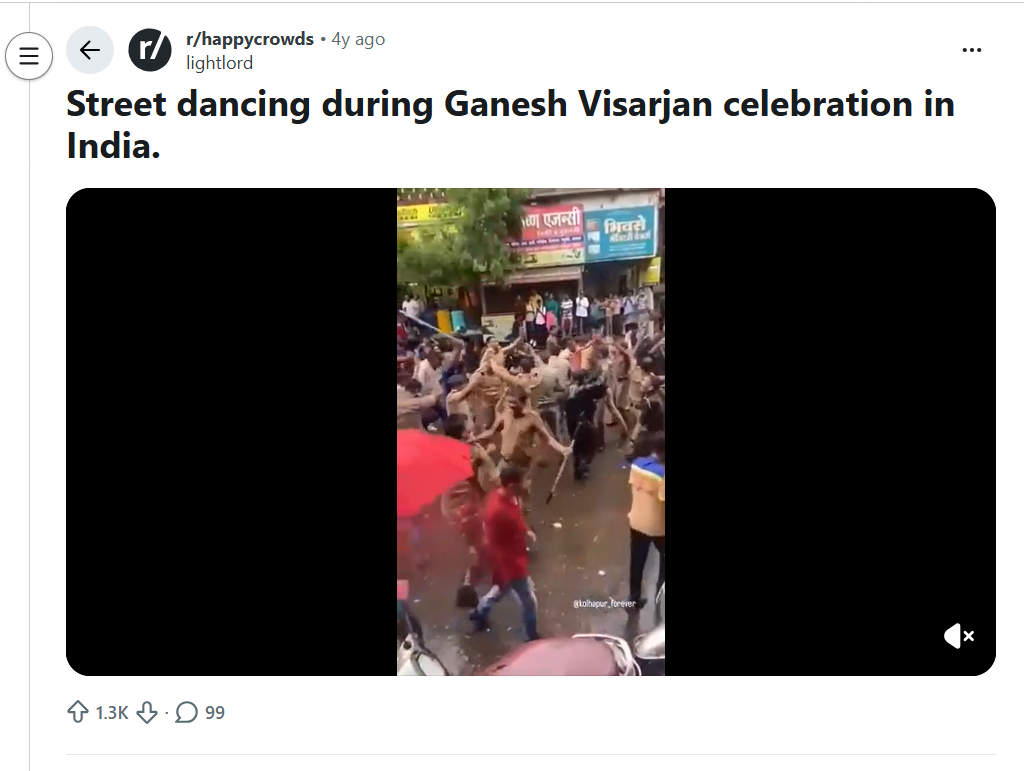

A video showing police personnel dancing on the streets along with civilians is going viral on social media. The clip is being shared with the claim that policemen in West Bengal were celebrating the defeat of Mamata Banerjee and the victory of the BJP.

Research by CyberPeace Research Wing found that the claim is misleading. The viral video is old and unrelated to the West Bengal elections.

Claim

An X user shared the video on April 26, 2026, alleging that police personnel were celebrating BJP’s victory. The post questioned those raising concerns over EVMs, suggesting that even police were openly rejoicing over the election outcome.

- https://x.com/Minakshishriyan/status/2051490074957930510

- https://archive.is/d81OT

Fact Check

To verify the claim, we extracted keyframes from the viral video and conducted a reverse image search. This led us to a Reddit post dated September 13, 2022, where the same video was shared. The post described it as footage from Ganesh Visarjan celebrations in India, and several users in the comments identified the location as Maharashtra.

Further research led us to a YouTube channel “Yash Arate Vlogs,” which uploaded the same video on September 10, 2022. The description stated that the clip was recorded during Ganpati immersion celebrations in Kolhapur.

- https://www.youtube.com/shorts/0_w5t-4rmTY

We also found media reports from September 2022 indicating that during Ganesh Visarjan in Kolhapur, the loud music and festive atmosphere led even on-duty police personnel to briefly join the celebrations.

Conclusion

Our research confirms that the viral video does not show any post-election celebration in West Bengal. It is an old clip from Maharashtra, recorded during Ganesh Visarjan festivities, and is being falsely shared with a misleading political claim.

Executive Summary

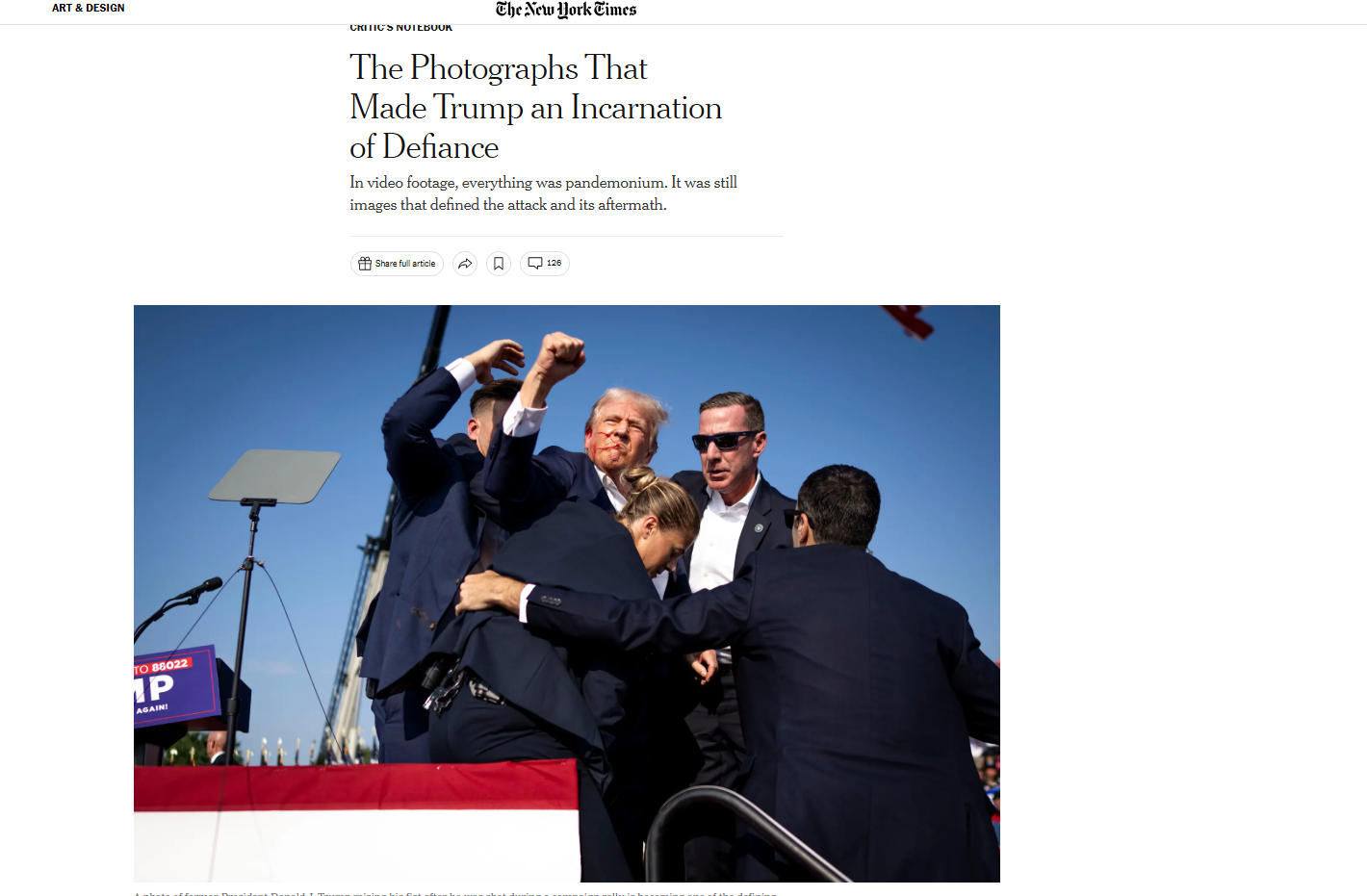

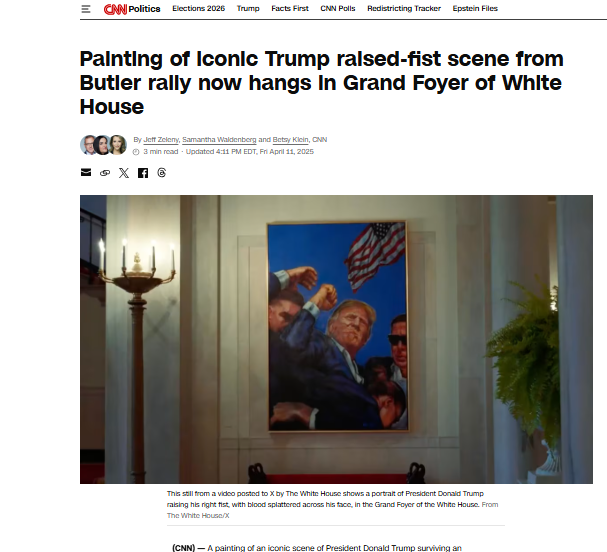

A photo of Donald Trump is going viral on social media, showing him raising his fist. Users claim the image was taken during a press event in Washington, when security personnel were escorting him out amid reports of gunfire. Research by CyberPeace Research Wing found that the viral image is AI-generated and is being shared with misleading claims.

Claim

On April 26, 2026, an X user shared the image with the caption: “Thank You, Lord our God, for protecting our President.” The post suggests that Trump made the gesture during a chaotic evacuation at a Washington event.

Fact Check

Reports confirm that Trump and senior officials were hurried away from the White House Correspondents’ Association dinner on April 25 after gunshots were reportedly heard from a floor above the ballroom. However, no authentic visuals show Trump raising his fist during the evacuation.

- https://www.nytimes.com/2024/07/14/arts/design/trump-photo-raised-fist.html

- https://edition.cnn.com/2025/04/11/politics/trump-obama-portrait-white-house

Further analysis of the viral image indicates signs of digital manipulation. Google’s SynthID detection tool flagged the file as containing a SynthID watermark, an invisible marker embedded in content generated using Google’s AI tools.

Additionally, AI detection platform Hive Moderation assessed that the image is likely AI-generated or a deepfake.

Conclusion

The research confirms that the viral image of Donald Trump raising his fist during a Washington incident is not real. It was created using AI and is being circulated with a misleading narrative.

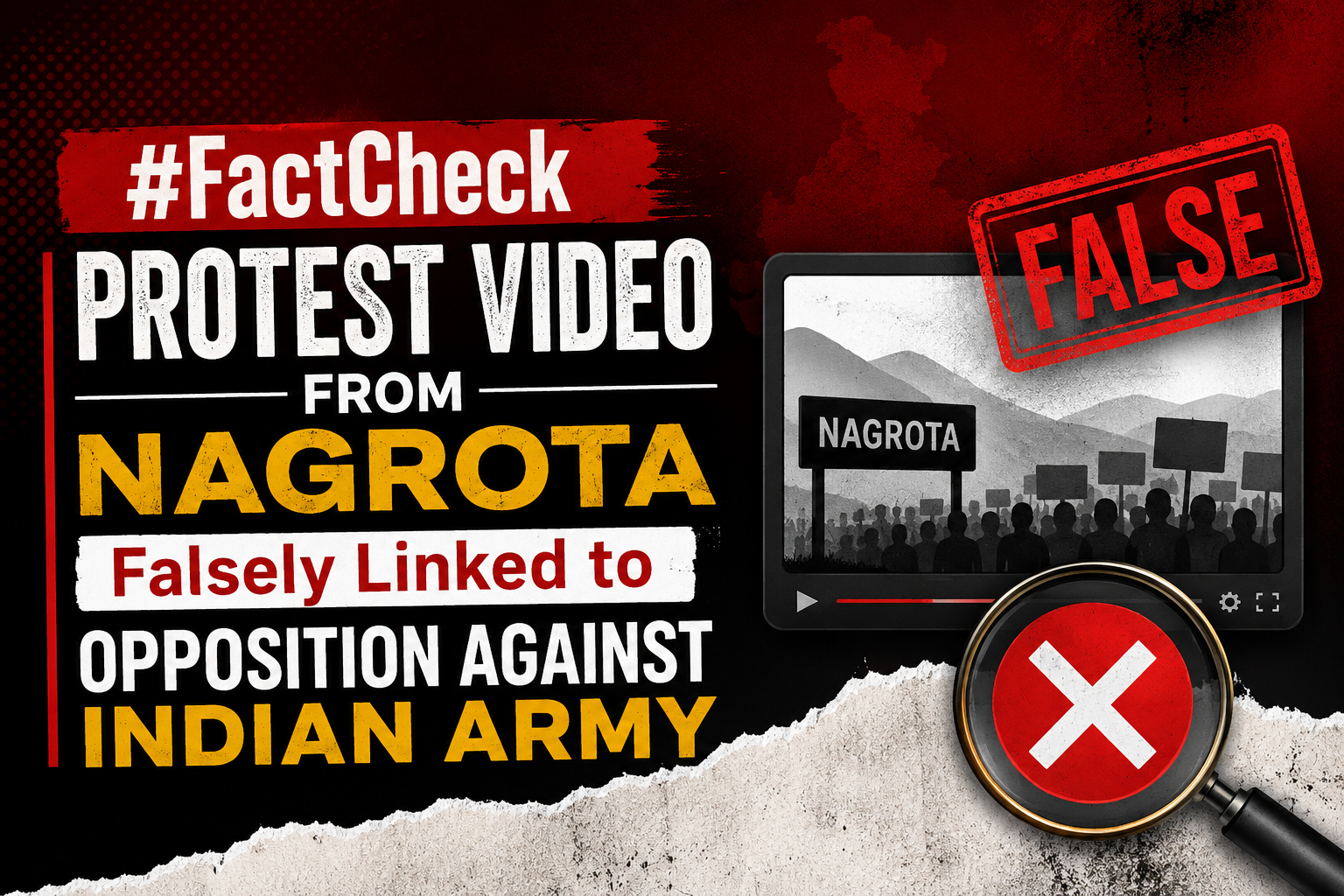

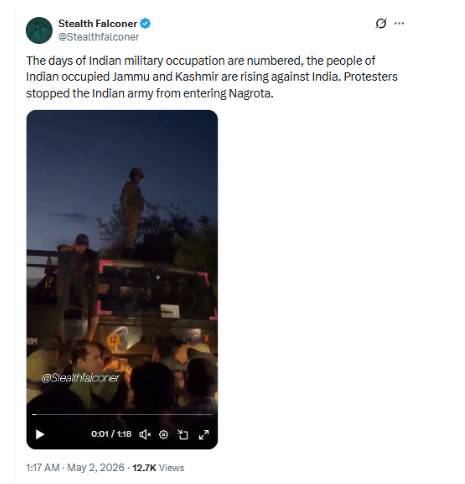

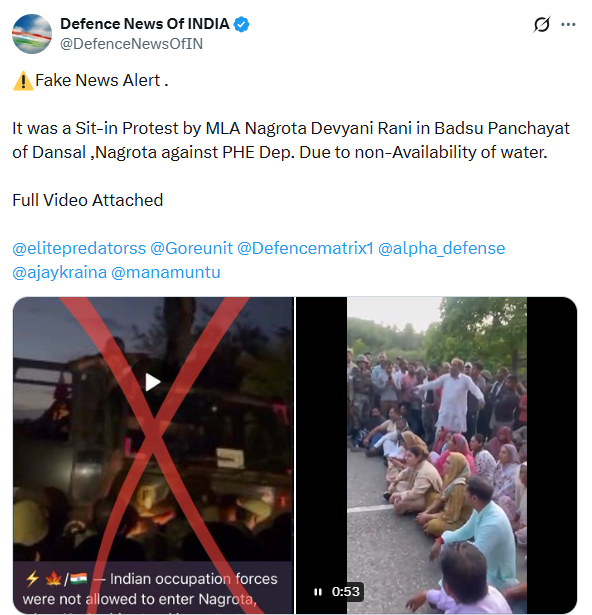

Executive Summary

A video showing a group of people protesting on a road is being widely circulated on social media by users linked to Pakistani propaganda. It is being claimed that protesters in Jammu & Kashmir stopped Indian Army personnel from entering Nagrota, indicating growing public opposition to the forces. Research by CyberPeace Research Wing found that the claim is misleading. The viral video is unrelated to any protest against the Indian Army.

Claim

A user posted the video on X, claiming: “The days of Indian military occupation are numbered; people of Jammu & Kashmir have risen against India. Protesters stopped the Indian Army from entering Nagrota.”

- https://x.com/Stealthfalconer/status/2050301106623045758?s=20

Fact Check

During the research, the CyberPeace Research Wing team found no evidence of any incident in which civilians blocked or opposed the Indian Army in Nagrota. Further probing led to a post by an X user, “Defence News Of INDIA,” which contained the full version of the viral video. The accompanying information clarified that the protest took place in Dansal’s Badsu Panchayat area of Nagrota and was led by BJP MLA Devayani Rana.

The protest was organized against the Public Health Engineering (PHE) Department over severe water shortage issues in the region. Locals, along with the MLA, staged a sit-in to highlight the lack of water supply.

We also found multiple media reports, including from KBC News – Kashmir and Jammu Links News, confirming that Devayani Rana led a road blockade protest in her constituency over water scarcity and accused the Jal Shakti Department of negligence and administrative failure. Additionally, videos of the same protest were available on social media platforms, including live streams shared from Devayani Rana’s official pages.

Conclusion

Our research confirms that the viral claim is false and misleading. The video does not show any protest against the Indian Army. It is actually from a demonstration led by Devayani Rana and local residents over water shortage issues in Nagrota.