#FactCheck-Protest Video from Nagrota Falsely Linked to Opposition Against Indian Army

Executive Summary

A video is being widely circulated on social media by Pakistani propaganda-linked users, showing a group of people protesting on a road. The posts claim that protesters in Jammu & Kashmir stopped Indian Army personnel from entering Nagrota, indicating growing public opposition against the forces. Research by the CyberPeace Research Wing found that the claim is misleading. The viral video is unrelated to any protest against the Indian Army.

Claim

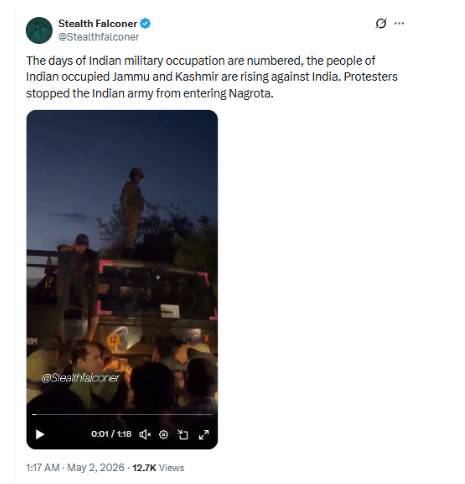

A user posted the video on X, claiming: “The days of Indian military occupation are numbered; people of Jammu & Kashmir have risen against India. Protesters stopped the Indian Army from entering Nagrota.”

- https://x.com/Stealthfalconer/status/2050301106623045758?s=20

Fact Check

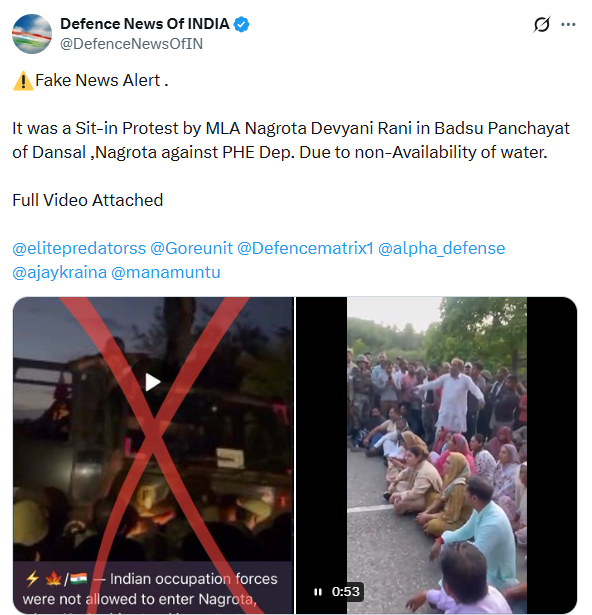

During the research, the CyberPeace Research Wing team found no evidence of any incident in which civilians blocked or opposed the Indian Army in Nagrota. Further probing led to a post by the X user “Defence News Of INDIA,” which contained the full version of the viral video. The accompanying information clarified that the protest took place in Dansal’s Badsu Panchayat area of Nagrota and was led by BJP MLA Devayani Rana.

The protest was organized against the Public Health Engineering (PHE) Department over severe water shortage issues in the region. Locals, along with the MLA, staged a sit-in to highlight the lack of water supply.

We also found multiple media reports, including from KBC News – Kashmir and Jammu Links News, confirming that Devayani Rana led a road blockade protest in her constituency over water scarcity and accused the Jal Shakti Department of negligence and administrative failure. Additionally, videos of the same protest were available on social media platforms, including live streams shared from Devayani Rana’s official pages.

Conclusion

Our research confirms that the viral claim is false and misleading. The video does not show any protest against the Indian Army. It is actually from a demonstration led by Devayani Rana and local residents over water shortage issues in Nagrota.

Related Blogs

Executive Summary

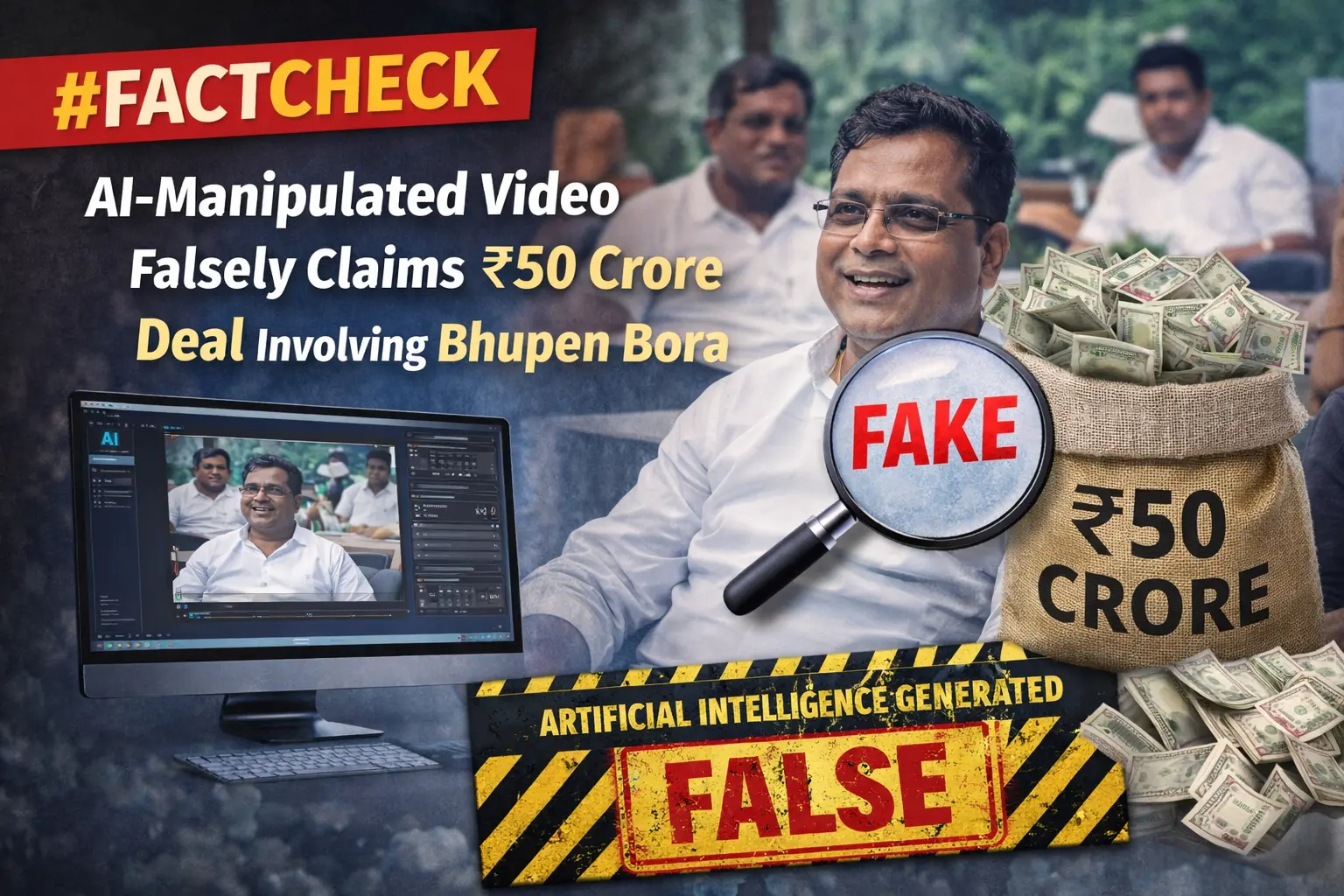

A purported news clip circulating on social media claims that the Bharatiya Janata Party (BJP) purchased Bhupen Bora, a leader of the Indian National Congress, for ₹50 crore as part of a political deal in Assam. The viral clip further alleges that the transaction took place under the leadership of Assam Chief Minister Himanta Biswa Sarma and included an agreement to induct several Congress leaders into the BJP.

However, research by CyberPeace found the viral claim to be false and revealed that the original news video had been manipulated using AI and shared with misleading claims.

Claim

On February 18, 2026, a user shared the viral video on Facebook, claiming that the Assam BJP had bought a Congress leader who had lost the last three elections for ₹50 crore, and that the alleged deal led by Himanta Biswa Sarma had drawn public criticism.

Fact Check

To verify the authenticity of the claim, we extracted key frames from the viral video and conducted a reverse image search using Google Lens. During the research, we found the original version of the video published on the website of Aaj Tak on February 16, 2026. In the original report, the anchor is only seen reporting on Bhupen Bora’s resignation from the party. The report does not mention any alleged financial transaction or political deal, contrary to the claims made in the viral clip.
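As a rough illustration of the key-frame step described above, the sketch below keeps only those frames that differ noticeably from the last frame kept, which is one common way to reduce a clip to a handful of images for a reverse image search. It assumes the frames have already been decoded into flat lists of grayscale pixel values (a video library would normally do the decoding). The function names, the threshold value, and the toy data are hypothetical and are not the tooling used in this research.

```python
from typing import List, Sequence


def mean_abs_diff(a: Sequence[int], b: Sequence[int]) -> float:
    """Average absolute per-pixel difference between two equal-sized frames."""
    if len(a) != len(b):
        raise ValueError("frames must have the same number of pixels")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def select_key_frames(frames: List[Sequence[int]], threshold: float = 20.0) -> List[int]:
    """Return indices of frames that differ from the last kept frame by more than `threshold`.

    The first frame is always kept. The threshold is an illustrative assumption
    and would need tuning for real footage.
    """
    if not frames:
        return []
    kept = [0]
    for i in range(1, len(frames)):
        if mean_abs_diff(frames[kept[-1]], frames[i]) > threshold:
            kept.append(i)
    return kept


# Toy "video": five tiny 4-pixel grayscale frames (hypothetical data).
toy_frames = [
    [10, 10, 10, 10],      # scene A
    [12, 11, 10, 9],       # scene A, almost unchanged
    [200, 190, 210, 205],  # scene B, large change
    [201, 191, 209, 204],  # scene B, almost unchanged
    [50, 60, 55, 52],      # scene C, large change
]
key_frame_indices = select_key_frames(toy_frames)
print(key_frame_indices)
```

Each selected frame could then be saved as an image and submitted manually to a reverse image search tool such as Google Lens.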

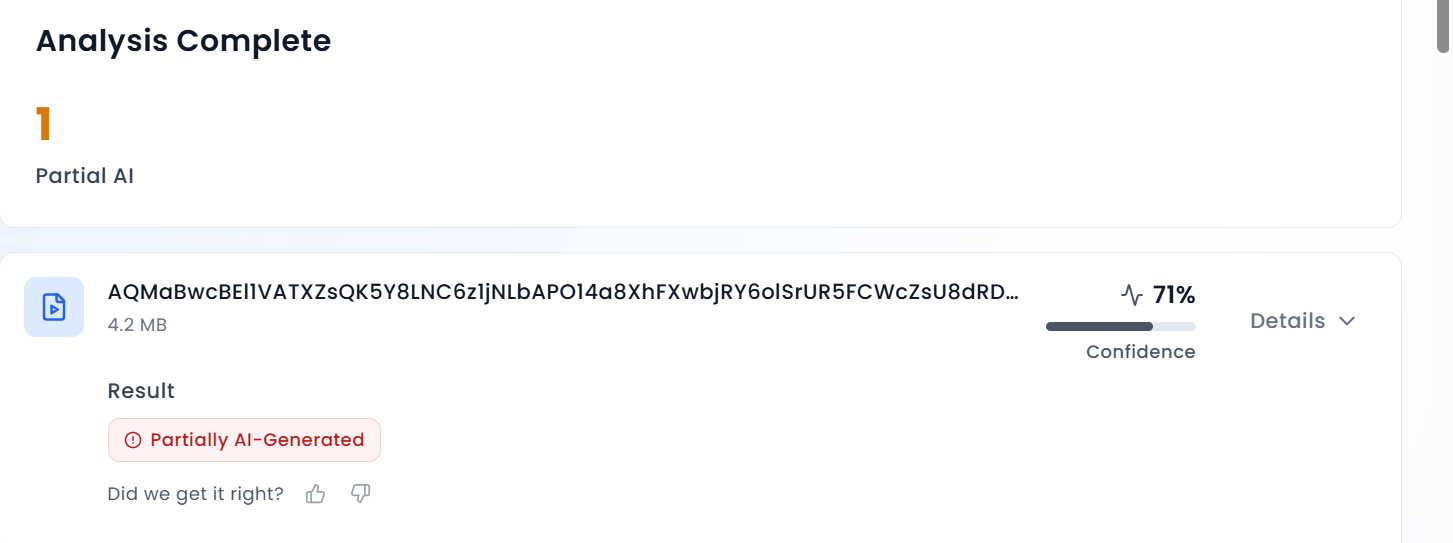

In the next stage of the research, the viral video was analysed using the AI detection tool AURGIN AI, which identified the video as AI-generated.

Conclusion

Our research found that users had manipulated the original news broadcast using AI and shared it with misleading claims. The viral clip does not show any real financial deal between Bhupen Bora and the Assam Chief Minister.

Introduction

To deal effectively with growing cybercrime and cyber threats, the Telangana Police, in partnership with industry and academia, launched the Law Enforcement CISO (Chief Information Security Officers) Council of India on 7th October 2023. Cyber incidents have increased in recent years, with concerning statistics such as a tenfold rise in password-based attacks and an increase in ransomware attacks, and the Council aims to strengthen the region's digital defenses in response. It primarily focuses on reducing vulnerability, improving resilience, and providing real-time threat intelligence. By promoting partnerships between the public and private sectors, offering legal and regulatory guidance, and facilitating networking and learning opportunities, this collaborative effort is a crucial move towards protecting critical infrastructure and businesses from cyber threats.

Chief of the Central Crime Station Stephen Ravindra said that the forum is a path-breaking initiative and that the Council represents an open platform for all the enforcement agencies in the country. The initiative involves close association with different stakeholders, including government departments, startups, centers of excellence, and international collaborations, carving a niche for a sturdy cybersecurity environment.

Enhancing Cybersecurity is the Need of the Hour:

The recent launch of the Law Enforcement CISO Council in Hyderabad, India emphasized the need for government organizations and industries to prioritize the protection of their digital space. Cyber incidents, ransomware attacks, and threats to critical infrastructure have been on the rise, making it essential to take proactive cybersecurity measures. Disturbing statistics regarding cyber threats, such as password-based attacks, BEC (Business Email Compromise) attempts, and vulnerabilities in the supply chain, highlight the importance of addressing these issues urgently. This initiative aims to provide real-time threat intelligence and legal guidance, and to encourage collaboration between public and private organizations in order to combat cybercrime. Given that every cyber attack has criminal elements, the establishment of such councils is a crucial step towards minimizing vulnerabilities, enhancing resilience, and ensuring the security of our digital world.

International Issue & Domestic Issue:

The Telangana State Police has announced the formation of a first-of-its-kind Law Enforcement CISO Council (LECC), a proactive step that is part of a State government initiative to give further impetus to cyber security. Jointly with its law enforcement partners, the Telangana Police has decided to make cyber cops more efficient and bring them on par with technological advancements. The Telangana Police has demonstrated its commitment to a secure cyber environment by recovering INR 2.2 crore and INR 6.8 crore lost by people in cyber frauds, which is the industry’s highest rate of helping victims.

The Police department complimented corporate executives for their personal interest in the subject and said that police officers’ expertise and inputs from industry professionals need to work together cohesively to prevent a further increase in the number of cybercrime cases. Data indicates that the exponential increase in cyber threats in recent times necessitates informed and prudent action, with the cooperation and collaboration of the IT Department of Telangana, centers of excellence, start-ups, white hats or ethical hackers, and international associations.

A report from the Telangana commissioner notes a surge in the number of cyber incidents and in the vulnerabilities of Government organizations, Critical Infrastructure and MSMEs, and stresses that every cyber security breach has an element of criminality in it. The Law Enforcement CISO Council is a progressive step in this direction, offering fewer cyber attacks, enhanced resilience, actionable strategic and tactical real-time threat intelligence, legal guidance, opportunities for public-private partnerships, networking, learning and much more.

The Secretary of SCSC shared some alarming statistics on the threats that are currently rampant across the digital world. In today’s era of widespread digital dependence, the program launched by the Telangana Police to combat these threats stands as a commendable step that offers a glimmer of hope. It brings together all the heroes who want to protect digital spaces and counter the growing number of threats.

Contribution of Telangana Police for carving a niche to be followed:

The launch of the Law Enforcement CISO Council in Telangana represents a pivotal step in addressing the pressing challenges posed by escalating cyber threats. As highlighted by the Director General of Police, the initiative recognizes the critical need to combat cybercrime, which is growing at an alarming rate. The Council not only acknowledges the casual approach often taken towards cybersecurity but also aims to rectify it by fostering collaboration between law enforcement, industry, and academia.

One of the most significant positive aspects of this initiative is its commitment to sharing intelligence, ensuring that the hard-earned lessons from cyber fraud victims are translated into protective measures for others. By collaborating with the IT Department of Telangana, centers of excellence, startups, and ethical hackers, the Council is poised to develop robust Standard Operating Protocols (SOPs) and innovative tools to counter cyber threats effectively.

Moreover, the Council's emphasis on Public-Private Partnerships (PPPs) underscores its proactive approach in dealing with the evolving landscape of cyber threats. It offers a platform for networking and learning, enabling information sharing, and will contribute to reducing the attack surface, enhancing resilience, and providing real-time threat intelligence. Additionally, the Council will provide legal and regulatory guidance, which is crucial in navigating the complex realm of cybercrime. This collective effort represents a promising way forward in safeguarding digital spaces, critical infrastructure, and industries against cyber threats and ensuring a safer digital future for all.

Conclusion:

The Law Enforcement CISO Council in Telangana is an innovative effort to strengthen cybersecurity in the state. With the rise in cybercrimes and vulnerabilities, the council brings together expertise from various sectors to establish a strong defense against digital threats. Its goals include reducing vulnerabilities, improving resilience, and ensuring timely threat intelligence. Additionally, the council provides guidance on legal and regulatory matters, promotes collaborations between the public and private sectors, and creates opportunities for networking and knowledge-sharing. Through these important initiatives, the CISO Council will play a crucial role in establishing digital security and protecting the state from cyber threats.

References:

- http://www.uniindia.com/telangana-police-launches-india-s-first-law-enforcement-ciso-council/south/news/3065497.html

- https://indtoday.com/telangana-police-launched-indias-first-law-enforcement-ciso-council/

- https://www.technologyforyou.org/telangana-police-launched-indias-first-law-enforcement-ciso-council/

- https://timesofindia.indiatimes.com/city/hyderabad/victims-of-cyber-fraud-get-back-rs-2-2-cr-lost-money-in-bank-a/cs/articleshow/104226477.cms?from=mdr

Introduction:

The Ministry of Civil Aviation, GOI, established the initiative ‘DigiYatra’ to ensure hassle-free and health-risk-free journeys for travellers/passengers. The initiative uses a single token of face biometrics to digitally validate identity, travel, and health along with any other data needed to enable air travel.

Cybersecurity is a top priority for the DigiYatra platform administrators, with measures implemented to mitigate risks of data loss, theft, or leakage. With over 6.5 million users, DigiYatra is an important step forward for India towards secure digital travel with seamless integration of proactive cybersecurity protocols. This blog examines the development of DigiYatra and the challenges and implications involved in securing digital travel.

What is DigiYatra? A Quick Overview

DigiYatra is a flagship initiative by the Government of India to enable paperless travel, reducing identity checks for a seamless airport experience. This technology allows the entry of passengers to be automatically processed based on a facial recognition system at all the checkpoints at the airports, including main entry, security check areas, aircraft boarding, and more.

This technology makes the boarding process quick and seamless, as each passenger needs less than three seconds to pass through every touchpoint. Passengers’ faces essentially serve as their documents (ID proof and, if required, vaccine proof) and their boarding passes.

DigiYatra has also enhanced airport security as passenger data is validated by the Airlines Departure Control System. It allows only the designated passengers to enter the terminal. Additionally, the entire DigiYatra Process is non-intrusive and automatic. In improving long-standing security and operational airport protocols, the platform has also significantly improved efficiency and output for all airport professionals, from CISF personnel to airline staff members.

Policy Origins and Framework

Rooted in the Government of India's Digital India campaign and enabled by the National Civil Aviation Policy (NCAP) 2016, DigiYatra aims to modernise air travel by integrating Aadhaar-based passenger identification. While Aadhaar is currently the primary ID, efforts are underway to include other identification methods. The platform, supported by stakeholders like the Airports Authority of India (26%) and private airports (14.8% each), must navigate stringent cybersecurity demands. Compliance with the Digital Personal Data Protection Act, 2023, ensures the secure use of sensitive facial recognition data, while the Aircraft (Security) Rules, 2023, mandate robust interoperability and data protection mechanisms across stakeholders. DigiYatra also aspires to democratise digital travel, extending its reach to underserved airports and non-tech-savvy travellers. As India refines its cybersecurity and privacy frameworks, learning from global best practices is essential to safeguarding data and ensuring seamless, secure air travel operations.
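The shareholding figures above can be sanity-checked with a little arithmetic. The sketch below assumes that the stakes mentioned (26% for the Airports Authority of India and 14.8% for each private airport) are the only ones and that together they add up to 100%. The number of private airports is not stated in the text, so the value computed here is only what those figures would imply under that assumption.

```python
from fractions import Fraction

# Shares quoted in the text, as exact fractions to avoid floating-point rounding.
aai_share = Fraction(26)                 # Airports Authority of India, in percent
private_share_each = Fraction("14.8")    # each private airport, in percent

# Assumption: the remaining stake is split equally among private airports only.
remaining = Fraction(100) - aai_share
implied_private_airports = remaining / private_share_each

print(f"Remaining share: {float(remaining)}%")
print(f"Implied number of private airports: {implied_private_airports}")
```

Under that assumption, the remaining 74% divides evenly into five stakes of 14.8%.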

International Practices

Global practices offer crucial lessons to strengthen DigiYatra's cybersecurity and streamline the seamless travel experience. Initiatives such as CLEAR in the USA and Seamless Traveller in Singapore offer actionable insights into expanding the system to its full potential. CLEAR is operational in 58 airports and has more than 17 million users. Singapore's Seamless Traveller has been active since the beginning of 2024 and aims for a 95% shift to automated lanes by 2026.

Some additional measures that India can adopt from international initiatives are regular audits and updates to cybersecurity policies. Further, India can aim for a cross-border policy for international travel. By implementing these recommendations, DigiYatra can not only improve data security and operational efficiency but also establish India as a leader in global aviation security standards, ensuring trust and reliability for millions of travellers.

CyberPeace Recommendations

Some recommendations for further improving upon our efforts for seamless and secure digital travel are:

- Strengthen the legislation on biometric data usage and storage.

- Collaborate with global aviation bodies to develop standardised operations.

- Adopt cybersecurity technologies, such as blockchain for immutable data records, alongside encryption standards, data minimisation practices, and anonymisation techniques.

- Foster a cybersecurity-first culture across aviation stakeholders.
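To make the data minimisation and anonymisation recommendation more concrete, here is a minimal sketch of how a passenger record might be reduced before storage. It is not a description of how DigiYatra works: the field names, the `ALLOWED_FIELDS` set, and the helper functions are hypothetical. Note also that keyed hashing of an identifier is pseudonymisation rather than full anonymisation, since anyone holding the key can recompute the reference.

```python
import hashlib
import hmac
import secrets
from typing import Dict

# Hypothetical minimal set of fields a downstream system is assumed to need.
ALLOWED_FIELDS = {"flight_number", "travel_date"}


def pseudonymise(identifier: str, key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 digest (HMAC), hex-encoded."""
    return hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def minimise_record(record: Dict[str, str], key: bytes) -> Dict[str, str]:
    """Keep only allowed fields and swap the passenger ID for a pseudonymous reference."""
    reduced = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    reduced["passenger_ref"] = pseudonymise(record["passenger_id"], key)
    return reduced


# Illustrative record with made-up values.
secret_key = secrets.token_bytes(32)  # in practice, kept in a secure key store
raw_record = {
    "passenger_id": "ID-0001",
    "name": "Example Passenger",
    "phone": "0000000000",
    "flight_number": "XX123",
    "travel_date": "2025-01-01",
}
stored_record = minimise_record(raw_record, secret_key)
print(sorted(stored_record))
```

The stored record keeps only the flight details and a reference that cannot be linked back to the passenger without the key, which limits what is exposed if the stored data leaks.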

Conclusion

DigiYatra represents a transformative step in modernising India’s aviation sector by combining seamless travel with robust cybersecurity. Leveraging facial recognition and secure data validation enhances efficiency while complying with the Digital Personal Data Protection Act, 2023, and Aircraft (Security) Rules, 2023.

DigiYatra must address challenges like secure biometric data storage, adopt advanced technologies like blockchain, and foster a cybersecurity-first culture to reach its full potential. Expanding to underserved regions and aligning with global best practices will further solidify its impact. With continuous innovation and vigilance, DigiYatra can position India as a global leader in secure, digital travel.

References

- https://government.economictimes.indiatimes.com/news/governance/digi-yatra-operates-on-principle-of-privacy-by-design-brings-convenience-security-ceo-digi-yatra-foundation/114926799

- https://www.livemint.com/news/india/explained-what-is-digiyatra-how-it-will-work-and-other-questions-answered-11660701094885.html

- https://www.civilaviation.gov.in/sites/default/files/2023-09/ASR%20Notification_published%20in%20Gazette.pdf