#FactCheck - No Evidence IPS Officer Ajay Pal Sharma Has Been Deputed to West Bengal for Five Years

Executive Summary

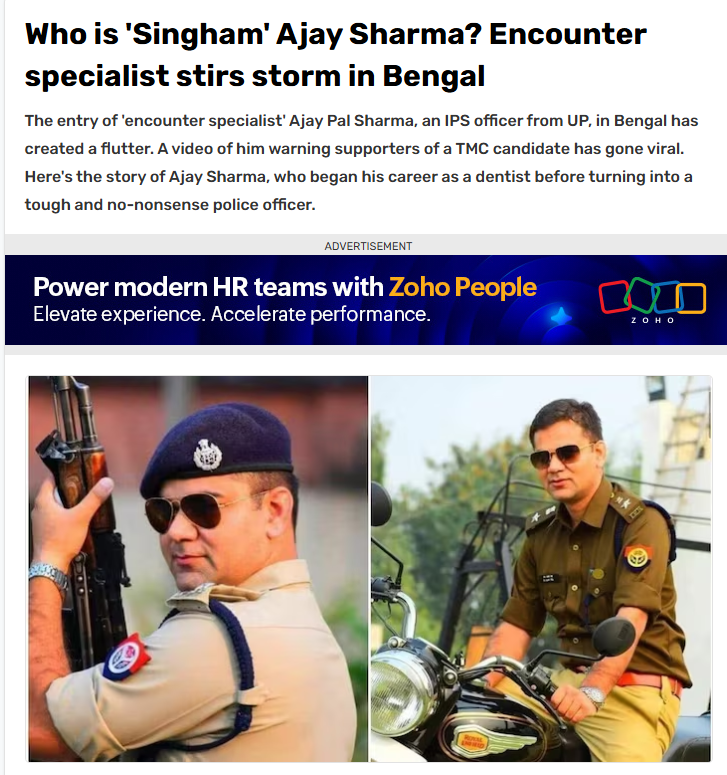

Ahead of the final phase of the West Bengal Assembly elections, a claim regarding Uttar Pradesh cadre IPS officer Ajay Pal Sharma began circulating widely on social media. Users claimed that Sharma was being sent to West Bengal on deputation for a period of five years. However, research conducted by the CyberPeace Research Wing found the claim to be false. Sources close to the IPS officer confirmed that no such deputation order has been issued so far and that Ajay Pal Sharma is currently posted as Additional Commissioner in Prayagraj, Uttar Pradesh. Sharma had earlier been deployed as a police observer during the West Bengal elections. During that period, a video of him warning Jahangir Khan, the Trinamool Congress candidate from the Falta constituency, went viral on social media.

Claim

Several users on Facebook and X claimed that Ajay Pal Sharma had been transferred to West Bengal for five years under an administrative arrangement involving experienced officers from different states. One Facebook user wrote: “This decision has been taken under an administrative arrangement through which experienced officers are deployed in different states.”

- https://www.facebook.com/photo.php?fbid=818902764628152&set=a.296761956842238&type=3

- https://perma.cc/FD8Q-CF7L?type=standard

Fact Check

Our research found that the deputation claim is false. Ajay Pal Sharma is currently serving as Additional Commissioner in Prayagraj, a position he has held since 2025. Further scrutiny revealed that the claim appears to have originated from a parody account on X. On May 4, around 6 PM, the account @abdullah_0mar posted the claim regarding Sharma’s alleged five-year deputation to Bengal. However, in the comments section, the user later clarified that the post was intended as satire.

We also reviewed several news reports regarding Ajay Pal Sharma’s role during the West Bengal elections. Reports confirmed that the Election Commission had deployed him as a police observer in South 24 Parganas district during the polls. However, none of the reports mentioned any five-year transfer or deputation to West Bengal.

Conclusion

The viral claim is false. No official order has been issued regarding IPS officer Ajay Pal Sharma’s deputation to West Bengal for five years. Sources close to the officer confirmed that he continues to serve as Additional Commissioner in Prayagraj, Uttar Pradesh. Sharma had only been deployed as a police observer during the West Bengal Assembly elections, during which a video of him warning TMC candidate Jahangir Khan went viral online.