Navigating the Path to CyberPeace: Insights and Strategies

Featured #factCheck Blogs

Executive Summary

A video circulating on social media claims that during a summit in Beijing, Donald Trump was seen peeking into Chinese President Xi Jinping’s “private notebook” while Xi briefly stepped away. However, a fact-check by CyberPeace Research Wing found the claim to be baseless. A review of the full event footage clearly shows that the folder in question belonged to Donald Trump himself, not Xi Jinping. The viral interpretation is therefore misleading.

Claim

An X user shared the clip alleging, “Trump caught sneaking a peek at Xi Jinping’s private notebook during a Beijing banquet while Xi stepped away.”

Fact Check

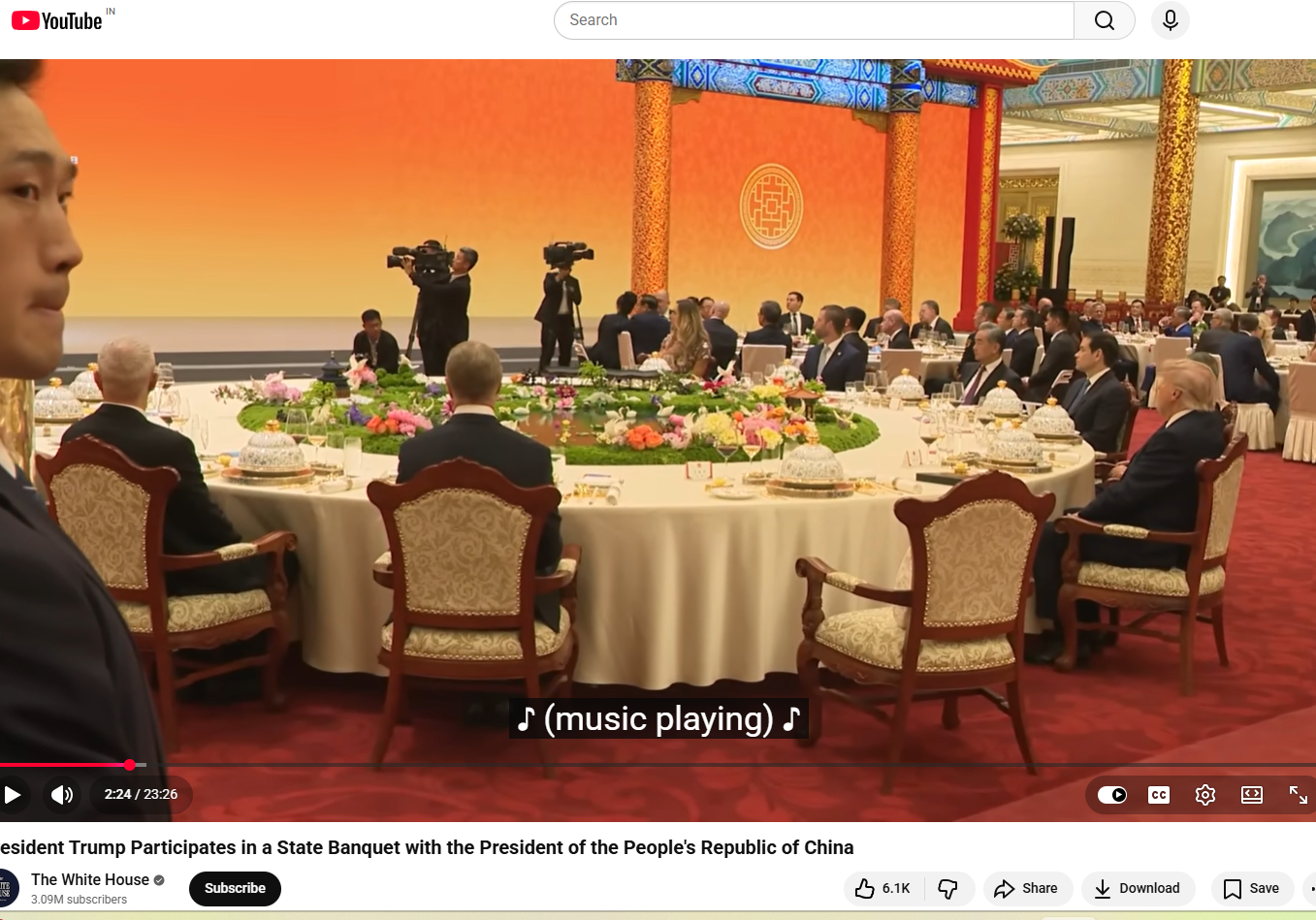

A longer version of the video, shared by NBC News on May 14, shows the state banquet held at the Great Hall of the People in Beijing. Around the 1-minute-50-second mark, Xi Jinping, seated to Trump’s left, gets up and walks to the podium. The viral clip follows shortly after, showing Trump opening the folder placed to his left and flipping through its pages.

The White House also uploaded the full footage on its official YouTube channel, showing wider, uninterrupted shots of the event. Around the two-minute mark, the announcer says, “And now a toast by President Xi,” after which Xi Jinping stands up. Immediately after, Trump is seen opening the folder on his left and reading from it.

Later in the video, around the 12-minute mark, when Xi returns to his seat, Trump is seen standing up, taking the folder with him to the podium, turning pages, and reading from it. The same sequence can also be seen in the NBC News footage at around 11 minutes and 50 seconds. This clearly indicates that the folder belonged to the U.S. President and not Xi Jinping, and that Trump was not peeking into any private notebook. Another key detail is the embossed emblem on the folder, which closely resembles the Seal of the President of the United States. The American bald eagle, the national bird of the United States, is clearly visible at the centre. A comparison between the viral screenshot and the official seal shows they are nearly identical.

Conclusion

The viral claim is misleading and taken out of context. A detailed review of the full footage, including official recordings from NBC News and the White House, clearly shows that the folder in question belonged to Donald Trump and not Chinese President Xi Jinping. At multiple points in the video, Trump is seen opening, handling, and reading from the same folder, including while Xi Jinping is away from his seat and later after he returns. The visual evidence from the event also supports this conclusion. The embossed seal on the folder matches the official Seal of the President of the United States, further confirming that it was part of Trump’s official briefing material and not any private document belonging to Xi Jinping. Taken together, the full sequence of events and official video sources make it clear that the viral narrative has been incorrectly framed. There is no evidence to suggest that Trump was peeking into Xi Jinping’s personal notebook.

Executive Summary

A graphic widely circulating on social media claims that Union Home Minister Amit Shah has warned, “A major crisis is coming; if possible, skip one meal a day.” The claim has been found to be false in a fact-check conducted by CyberPeace Research Wing. The research revealed that Amit Shah has not made any such statement.

Claim

A Facebook user shared the viral graphic on May 17, 2026, claiming that BJP leader and Home Minister Amit Shah issued a “warning” to the public, allegedly saying people should be prepared for a major crisis and consider skipping one meal a day. The post has been widely circulated on social media, drawing significant attention and discussion.

- https://www.facebook.com/photo.php?fbid=1509406197622070&set=pb.100056581115590.-2207520000&type=3

- https://archive.ph/Z9Tle

Fact Check

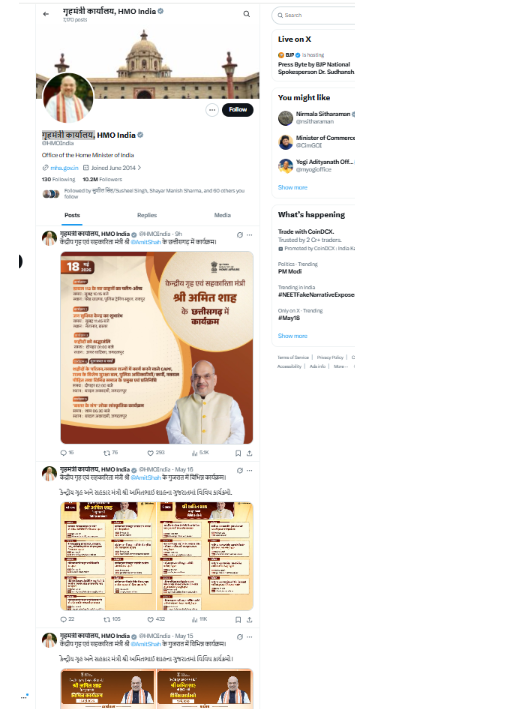

A keyword-based search on Google did not return any credible news reports supporting the claim. Further scrutiny of the official account of the Ministry of Home Affairs on X also found no mention or statement matching the viral claim.

A separate review of the official X account of Home Minister Amit Shah also did not show any such statement or post confirming the viral claim.

Conclusion

The viral claim is false. Union Home Minister Amit Shah has not made any such statement.

Social media users are widely sharing a video claiming to show an aircraft carrier being destroyed after getting trapped in a massive sea storm. In the viral clip, the aircraft carrier can be seen breaking apart amid violent waves, with users describing the visuals as a “wrath of nature.”

However, CyberPeace Foundation’s research has found this claim to be false. Our fact-check confirms that the viral video does not depict a real incident and has instead been created using Artificial Intelligence (AI).

Claim:

An X (formerly Twitter) user shared the viral video with the caption, “Nature’s wrath captured on camera.” The video shows an aircraft carrier appearing to be devastated by a powerful ocean storm. The post can be viewed here, and its archived version is available here.

https://x.com/Maailah1712/status/2011672435255624090

Fact Check:

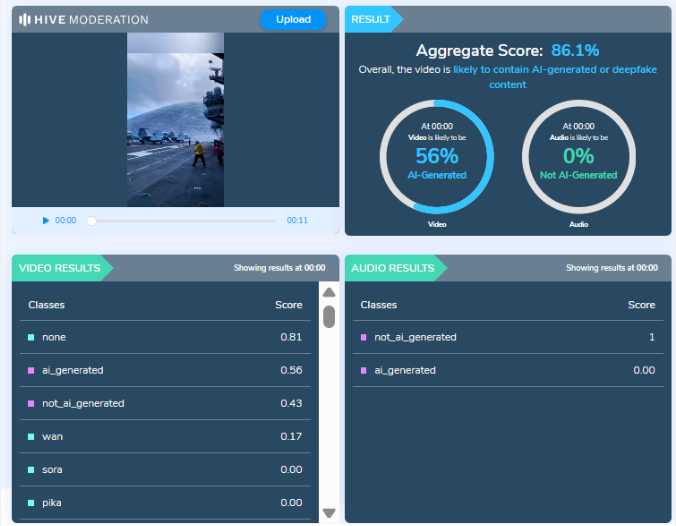

At first glance, the visuals shown in the viral video appear highly unrealistic and cinematic, raising suspicion about their authenticity. The exaggerated motion of waves, structural damage to the vessel, and overall animation-like quality suggest that the video may have been digitally generated. To verify this, we analyzed the video using AI detection tools.

The analysis conducted by Hive Moderation, a widely used AI content detection platform, indicates that the video is highly likely to be AI-generated. According to Hive’s assessment, there is nearly a 90 percent probability that the visual content in the video was created using AI.

Conclusion

The viral video claiming to show an aircraft carrier being destroyed in a sea storm is not related to any real incident. It is an AI-generated video that is being falsely shared online as footage of a real natural disaster. By circulating such fabricated visuals without verification, social media users are contributing to the spread of misinformation.

A video falsely attributed to Australian Prime Minister Anthony Albanese is being shared on social media. The video claims that, following the Bondi Beach attack, he decided to cancel the visas of Pakistani citizens.

An investigation by the CyberPeace Foundation revealed that the viral video was created using AI. In the original video, Anthony Albanese was answering questions related to the Climate Change Bill during a press conference. It is important to note that 15 people were killed in the attack that took place last Sunday (14 December) at Bondi Beach in Sydney, New South Wales, Australia. According to Australian police, the attack targeted the Jewish community. New South Wales Police Commissioner Mal Lanyon stated that the two accused involved in the attack were father and son, one aged 50 and the other 24. Media reports identified them as Sajid and Naved Akram.

Claim:

On 14 December 2025, a user on the social media platform X shared a video claiming, “After the attack by a Pakistani Islamic terrorist, the Australian Prime Minister has decided to cancel the visas of all Pakistanis. The whole world is troubled by this community, and in India it is said that Abdul cannot buy a house in a Hindu neighbourhood.”

The link to the related post, its archived version, and screenshots can be seen below:

Investigation:

Upon closely examining the viral video, we suspected it to be AI-generated. We then scanned the video using the AI detection tool aurigin.ai. According to the results provided by the tool, the video was found to be AI-generated.

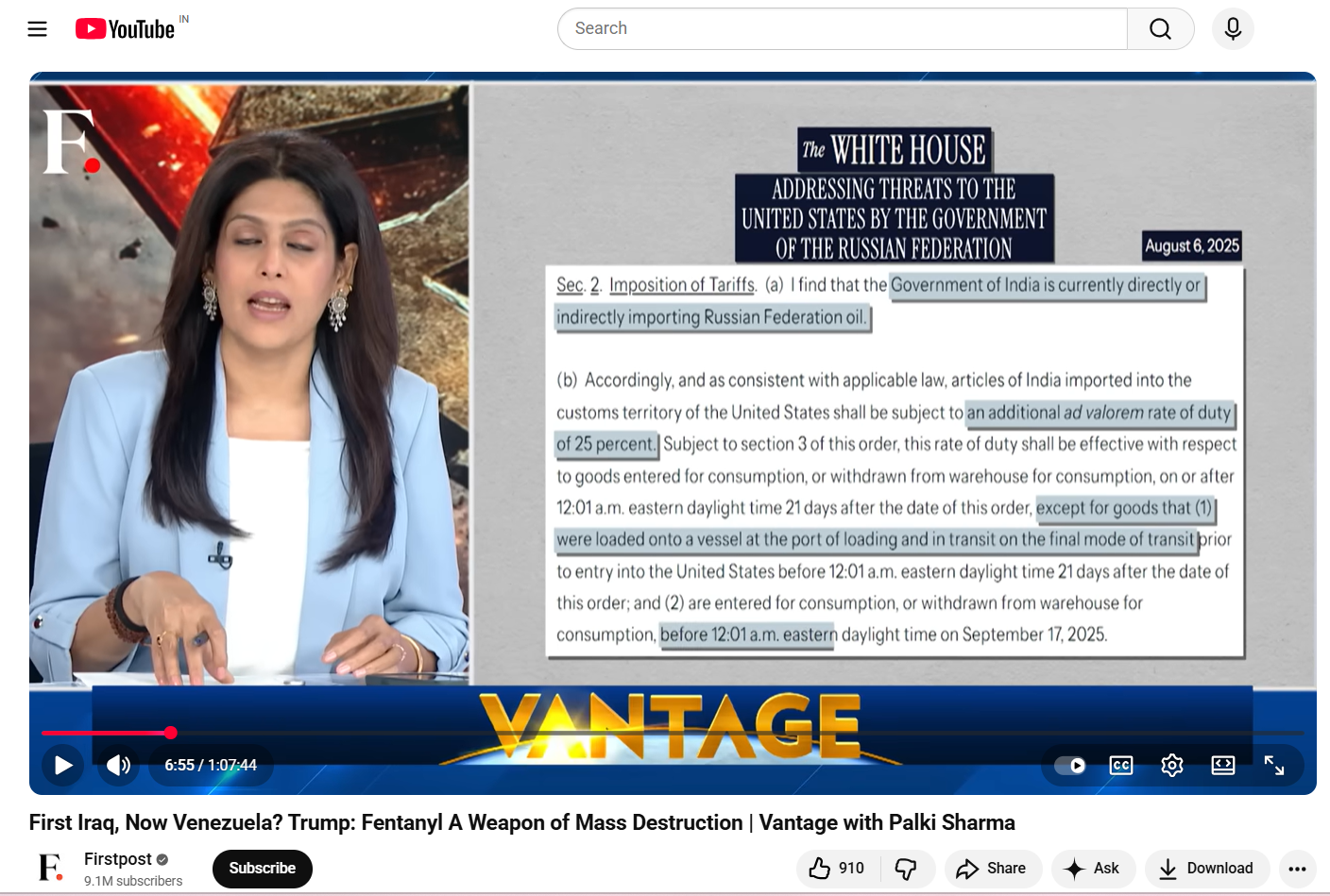

A video clip of journalist Palki Sharma is being widely shared on social media. Along with the video, it is being claimed that during Prime Minister Narendra Modi’s recent Middle East visit, she questioned Jordan’s diplomatic protocol.

In the viral clip, Palki Sharma is allegedly seen asking why Jordan’s King Abdullah II did not come to the airport to receive Prime Minister Modi, and whether this indicated a downgrade in the level of welcome.

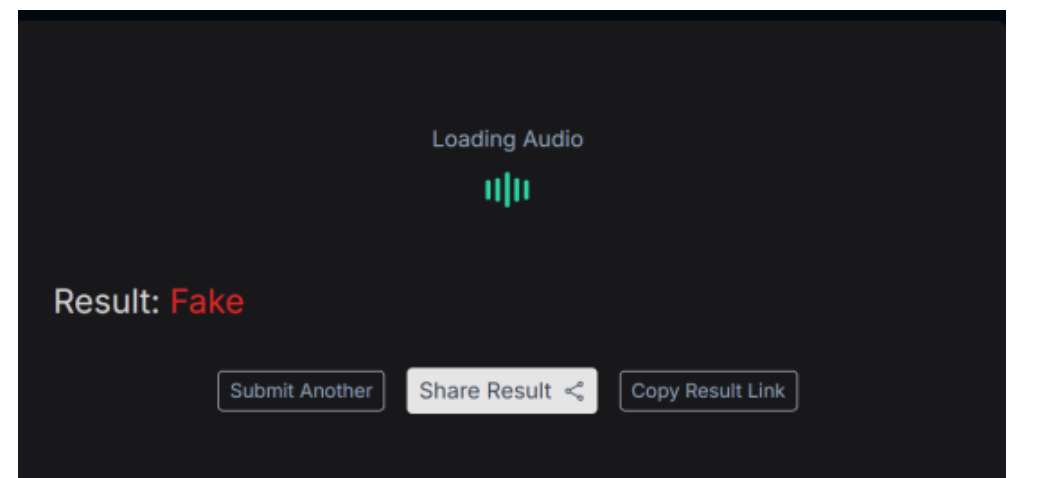

However, an investigation by the CyberPeace Foundation found this claim to be misleading. The probe revealed that while the visuals in the viral video are genuine, the audio has been altered using Artificial Intelligence (AI).

On the social media platform ‘X’, a user named “Ammar Solangi” shared this video on 18 December. The post claimed that the video was related to questions raised about Jordan’s diplomatic protocol during Prime Minister Modi’s visit. According to the post, Palki Sharma questioned why King Abdullah II did not receive Prime Minister Modi at the airport. The archive link of the viral post can be seen here: https://ghostarchive.org/archive/26aK0

Verification

During the investigation, the fact-check desk noticed the ‘Firstpost’ logo in the top-left corner of the viral video. Based on this clue, a customized Google search was conducted, which led to the original news report.

The investigation revealed that the viral video was taken from an episode of journalist Palki Sharma’s show “Vantage with Palki Sharma”, which aired on 17 December.

Analysis of the video showed that the visuals appearing at the 33 minutes 30 seconds timestamp in the original report exactly match those used in the viral clip. However, in the original broadcast, Palki Sharma neither questioned Jordan’s protocol nor made any comment about King Abdullah II not being present at the airport.

In the original video, Palki Sharma says:

“Prime Minister Modi was on a diplomatic tour of Jordan, Ethiopia, and Oman, and in Jordan he was received at the airport by the country’s Prime Minister…” The link to the original report can be seen here: https://www.youtube.com/watch?v=-VYZYe9l6Bs

AI Audio Examination

Further investigation involved separating the audio from the viral video and analyzing it using the AI voice detection tool ‘Resemble AI’. The tool’s results confirmed that fake, AI-generated audio had been added over the real footage in the viral clip to spread a misleading claim. A screenshot of the results from this examination can be seen below.

Conclusion

The video being circulated in the name of journalist Palki Sharma has been tampered with. Her voice has been altered using AI technology, and the claim made regarding the Jordan visit is completely misleading.

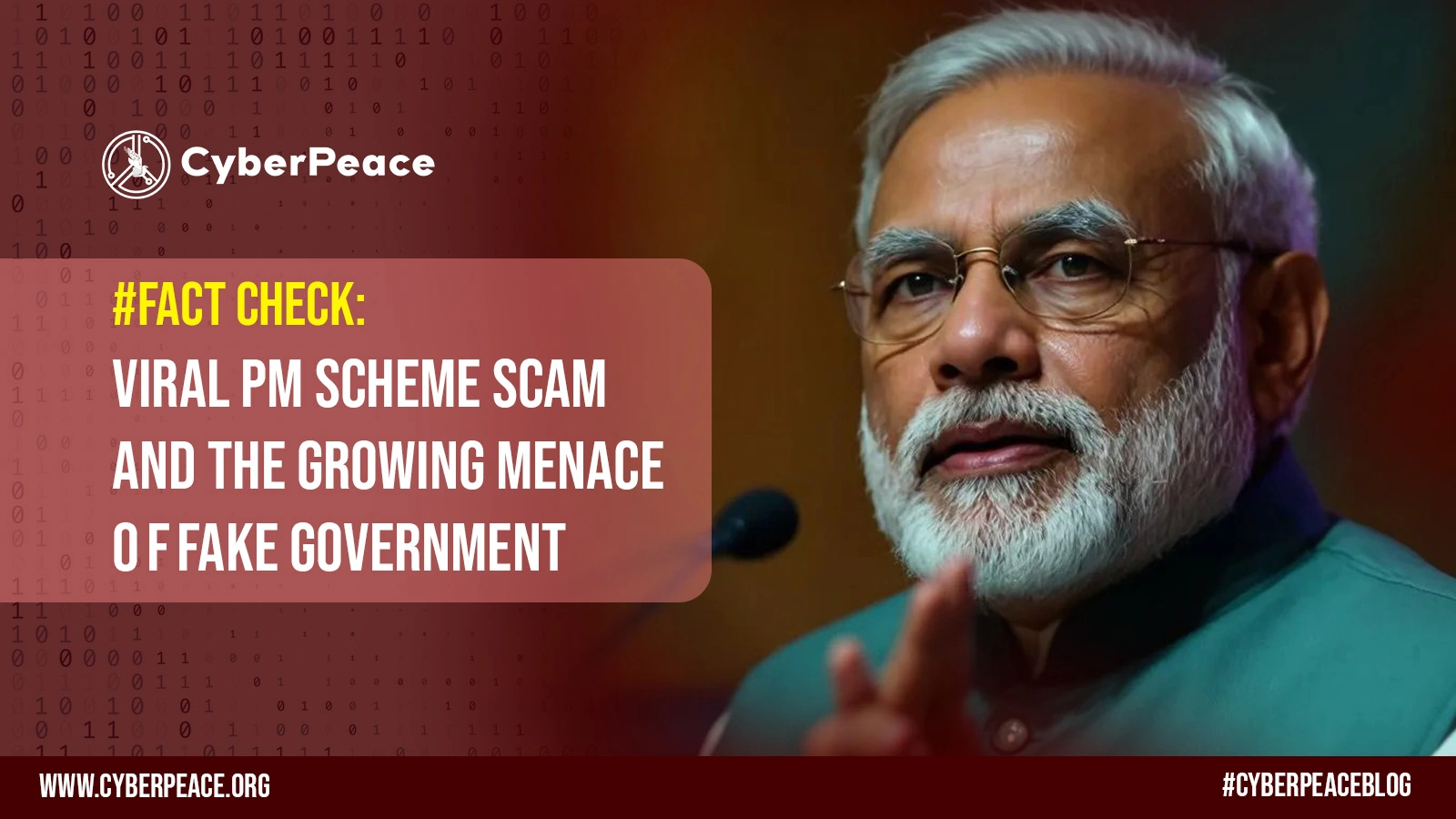

Introduction

There has been a recent surge of misinformation across social media claiming that every Indian is entitled to an allowance of ₹2,000 under a "Prime Minister's scheme." The message, circulated widely on WhatsApp, Facebook, Telegram, and other platforms, urges users to click on an unfamiliar link to claim the allowance in their bank accounts.

The offer may seem attractive, especially at a time when ordinary citizens are coping with rising costs of living. On closer examination, however, it turns out to be an outright online scam. NewsMobile fact-checked the claim and confirmed that no such scheme exists. The circulating message is therefore a scam that aims to mislead citizens.

This is not an isolated incident. Over the years, fraudulent posts falsely offering benefits in the name of the government or well-known brands have been on the rise. These scams are not just misinformation: they exploit trust and lure people into clicking links and sharing personal information, which poses serious risks to financial and personal security.

Anatomy of the Viral PM Scheme Scam

The viral message, which was written in Hindi, read:

“सभी नागरिकों को PM योजना के तहत दो हज़ार रुपए का भत्ता प्रदान किया गया है अपने bank खाते में प्राप्त करने के लिए click करें."

(English: “All citizens have been provided an allowance of ₹2000 under the PM scheme. Click to receive it in your bank account.”)

Beneath this was a suspicious link that, when checked during our investigation, turned out to be invalid and no longer working. A review of government websites, official social media handles, and similar sources found no announcement of any such allowance.

This is a textbook phishing attempt, in which a scammer creates urgency and temptation to lure citizens into clicking a malicious link. While the link may no longer be active, it could very well have once redirected users to websites that harvest personal information such as Aadhaar numbers, bank details, or login credentials.

The Broader Problem: Fake Government Scheme Scams

The ₹2,000 PM scheme hoax is part of a wider trend in which fraudsters leverage the credibility of government initiatives to scam citizens. In the past, fake promises have been made concerning free gas cylinders, cash allowances, subsidised rations, and even job opportunities.

During the COVID-19 pandemic, for instance, fake vaccination registration links and so-called relief scheme offers went viral, preying on the fears and vulnerabilities of ill-informed citizens. Likewise, false schemes associated with reputed companies such as Amazon, Flipkart, TATA Group, and Hermès have also gone viral, promising free gifts or allowances.

What makes government-themed scams especially dangerous is that they exploit people's trust in authority. Ordinary citizens are predisposed to believe in a "PM scheme" or "Government Yojana" because of the social credibility such announcements carry.

How These Scams Operate

These scams are built to deceive and, ultimately, to profit from defrauding the victim. Fraudsters first craft clickbait messages designed to resemble official communications; these often bear government logos and mix Hindi and English text with the phrase "Pradhan Mantri Yojana" to sound legitimate. The messages then redirect users to bogus websites that closely mimic government portals and ask unsuspecting visitors to enter personal information. Once the scammers have this data, they use it for identity theft or bank fraud, or sell it on the dark web. Social engineering plays a large role: urgency cues such as "limited time" and "last chance" push targets to act without thinking. For maximum reach, victims are also asked to forward the message to friends and family, which helps the scam go viral across WhatsApp, Facebook, and Telegram.

Risks to Citizens

Falling prey to these scams carries serious and varied risks. The most immediate is financial loss: divulging bank account details, an OTP, or login credentials can give attackers the means to drain funds. Identity theft is another common outcome, in which stolen Aadhaar, PAN, or other personal information is later used for fake loans or SIM activations. Beyond monetary loss, opening malicious links can infect devices with spyware or ransomware, compromising privacy and security. Victims also often suffer psychological distress from feeling betrayed or humiliated at having been deceived, which discourages them from reporting and in turn allows such scams to go undetected.

Best Practices for Prevention

It is prudent to practise good cyber hygiene and stay alert to such scams. Citizens should verify every claim against government-authorised websites such as https://www.mygov.in, or against ministry press statements, before believing it. Do not click on suspicious links offering money, gifts, or subsidies. Red flags such as poor grammar, an unofficial domain name, or too-good-to-be-true offers can help identify a scam in time. On the technical side, two-factor authentication, up-to-date antivirus software, and secured devices can drastically lower the threat. Reporting is equally important: report any suspicious activity to cybercrime.gov.in or the nearest cyber cell so that the authorities can trace patterns and issue advisories. Finally, sharing verified fact-checks within one's circles helps build resilience against misinformation and scams.
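Two of the red flags above, an unofficial domain name and urgency wording, can be sketched as a rough first-pass filter. The following Python example is only illustrative: the suffix list (`gov.in`, `nic.in`), the function names, and the phrase list are assumptions made for this sketch, not an official or complete rule set. A link that passes the check is not thereby proven safe.

```python
import re
from urllib.parse import urlparse

# Assumption: many Indian government sites sit under these suffixes.
OFFICIAL_SUFFIXES = ("gov.in", "nic.in")
# Hosts named in this article that do not fall under the suffixes above.
EXTRA_OFFICIAL_HOSTS = {"mygov.in"}
# Illustrative urgency cues only; real scam messages vary widely.
URGENCY_PHRASES = ("limited time", "last chance")
URL_PATTERN = re.compile(r"https?://\S+")


def is_official_host(url: str) -> bool:
    """Return True if the URL's host looks like an official government host."""
    if "://" not in url:
        url = "https://" + url  # allow bare hosts such as "cybercrime.gov.in"
    host = (urlparse(url).hostname or "").lower().rstrip(".")
    if host.startswith("www."):
        host = host[4:]
    if host in EXTRA_OFFICIAL_HOSTS:
        return True
    # Match the suffix only at a label boundary, so "fakegov.in" or
    # "gov.in.example.com" do not pass.
    return any(host == s or host.endswith("." + s) for s in OFFICIAL_SUFFIXES)


def red_flags(message: str) -> list[str]:
    """List simple warning signs found in a forwarded message."""
    flags = []
    for url in URL_PATTERN.findall(message):
        if not is_official_host(url):
            flags.append(f"unofficial link: {url}")
    lowered = message.lower()
    for phrase in URGENCY_PHRASES:
        if phrase in lowered:
            flags.append(f"urgency phrase: {phrase}")
    return flags


if __name__ == "__main__":
    sample = "Last chance! Claim Rs 2000 at http://pm-scheme.example.com/claim"
    for flag in red_flags(sample):
        print(flag)
```

Matching on the full host suffix rather than a substring matters because scam links often embed official-looking text (for example `gov.in`) inside an unrelated domain.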

Policy and Community Role

While individual awareness is important, collective action is also needed against fake government scheme scams. Platforms such as WhatsApp, Facebook, and X (Twitter) must strengthen their mechanisms for detecting fraudulent messages. Meanwhile, government bodies must periodically alert citizens to new scams through their official handles and through community outreach.

Civil society and fact-checking agencies play an important role in debunking viral hoaxes. This work must be amplified in regional languages, because forwarded messages tend to be trusted far more in those communities.

Conclusion

The viral ₹2,000 PM scheme scam is a reminder that not everything that goes viral online can be trusted. Scammers keep inventing new schemes to gain trust, spread misinformation, and defraud innocent citizens.

The best defence is awareness and alertness. Citizens must verify any claim through official channels before clicking on a link, sharing their data, or acting on it in any way. By practising proper cyber hygiene and avoiding suspicious messages, we can reduce the impact of these scams and collectively build a secure digital environment.

As India moves further into a digital ecosystem, empowering citizens and building resilience against cyber fraud is not just a matter of individual security but a national priority.

References

- https://www.newsmobile.in/nm-fact-checker/fact-check-viral-post-claiming-pm-scheme-offering-rs-2000-allowance-is-a-scam/

- https://timesofindia.indiatimes.com/business/financial-literacy/investing/beware-of-deepfake-scams-fraudsters-using-ai-videos-to-push-schemes-promising-unrealistic-returns-red-flags-to-watch-out-for/articleshow/124085155.cms

- https://www.business-standard.com/finance/personal-finance/invest-rs-21-000-to-earn-rs-20-lakh-monthly-viral-videos-of-fm-are-fake-125082000517_1.html

- https://www.pib.gov.in/PressReleasePage.aspx?PRID=2124728


Executive Summary:

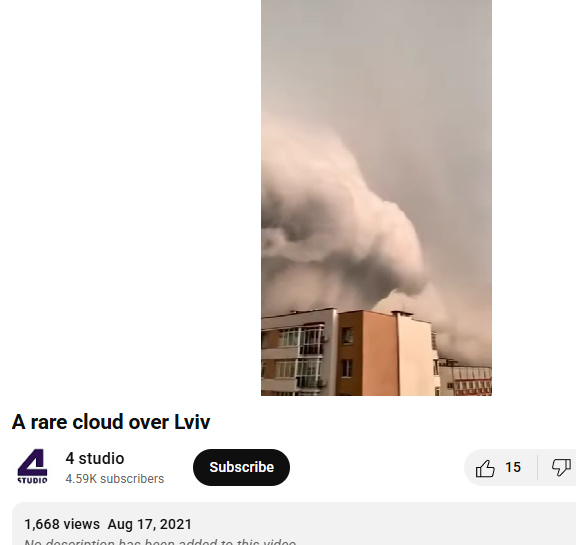

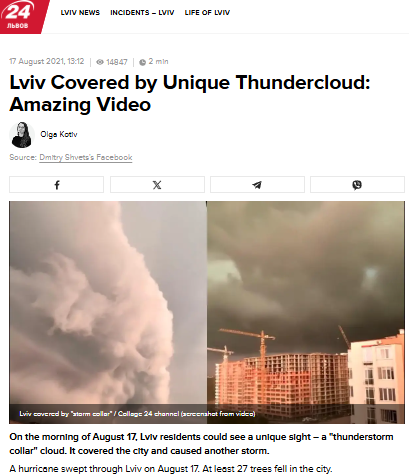

A viral video claims to show a massive cumulonimbus cloud over Gurugram, Haryana, and Delhi NCR on 3rd September 2025. However, our research reveals the claim is misleading. A reverse image search traced the visuals to Lviv, Ukraine, dating back to August 2021. The footage matches earlier reports and was even covered by the Ukrainian news outlet 24 Kanal, which published the story under the headline “Lviv Covered by Unique Thundercloud: Amazing Video”. Thus, the viral claim linking the phenomenon to a recent event in India is false.

Claim:

A viral video circulating on social media claims to show a massive cloud formation over Gurugram, Haryana, and the Delhi NCR region on 3rd September 2025. The cloud appears to be a cumulonimbus formation, which is typically associated with heavy rainfall, thunderstorms, and severe weather conditions.

Fact Check:

After conducting a reverse image search on key frames of the viral video, we found matching visuals from videos that attribute the phenomenon to Lviv, a city in Ukraine. These videos date back to August 2021, thereby debunking the claim that the footage depicts a recent weather event over Gurugram, Haryana, or the Delhi NCR region.

Further research revealed that the Ukrainian news channel 24 Kanal had reported on the Lviv thundercloud phenomenon in August 2021. The report was published under the headline “Lviv Covered by Unique Thundercloud: Amazing Video” (original in Russian, translated into English).

Conclusion:

The viral video does not depict a recent weather event in Gurugram or Delhi NCR, but rather an old incident from Lviv, Ukraine, recorded in August 2021. Verified sources, including Ukrainian media coverage, confirm this. Hence, the circulating claim is misleading and false.

- Claim: Old Thundercloud Video from Lviv, Ukraine (2021) Falsely Linked to Delhi NCR, Gurugram and Haryana.

- Claimed On: Social Media

- Fact Check: False and Misleading.

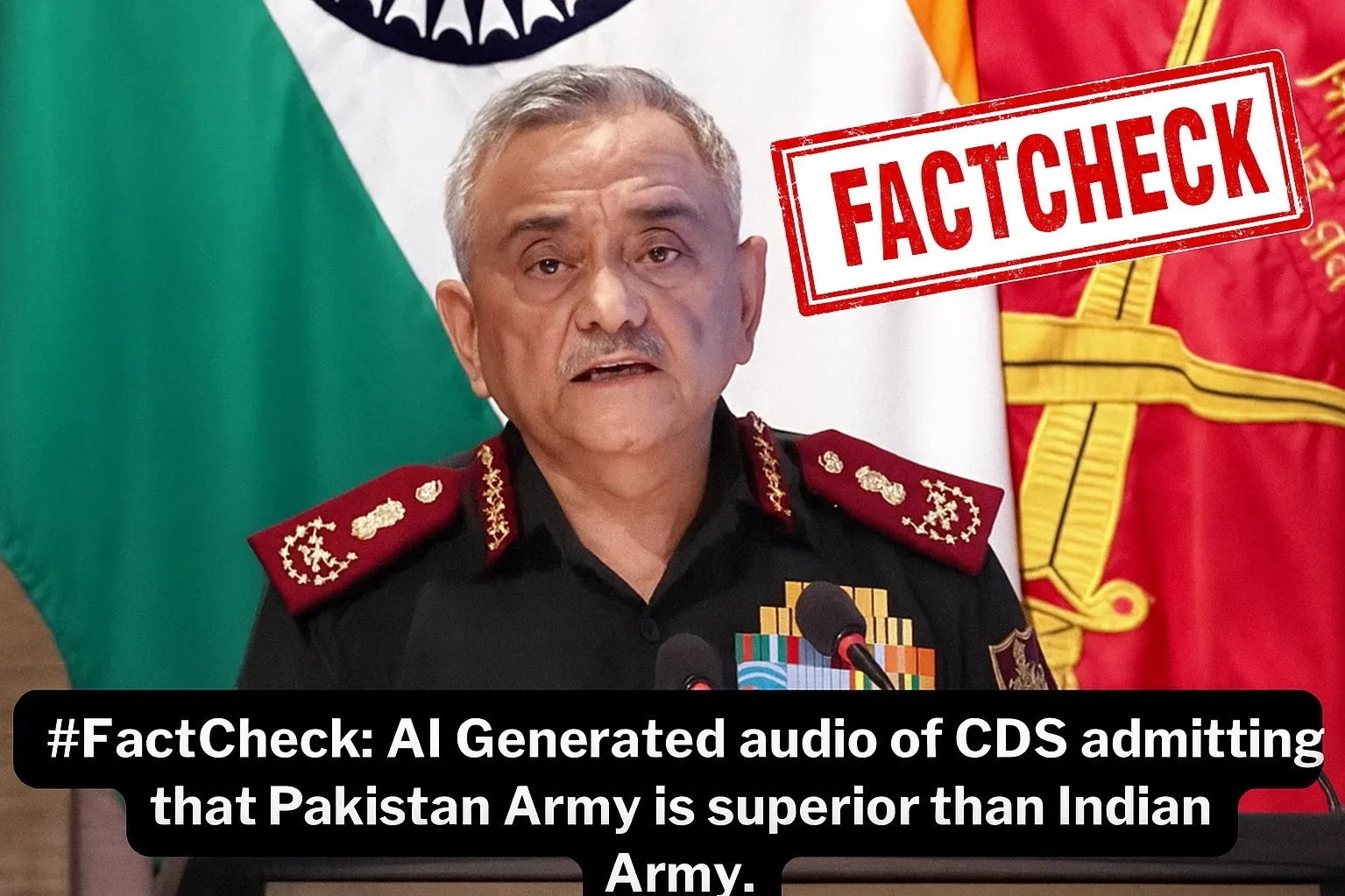

Executive Summary:

A viral social media claim alleges that India’s Chief of Defence Staff (CDS), General Anil Chauhan, praised Pakistan’s Army as superior during “Operation Sindoor.” Fact-checking confirms the claim is false. The original video, available on The Hindu’s official channel, shows General Chauhan inaugurating Ran-Samwad 2025 in Mhow, Madhya Pradesh. At the 1:22:12 mark, the genuine segment appears, proving the viral clip was altered. Additionally, analysis using Hiya AI Audio identified voice manipulation, flagging the segment as a deepfake with an authenticity score of 1/100. The fabricated statement was: “never mess with Pakistan because their army appears to be far more superior.” Thus, the viral video is doctored and misleading.

Claim:

A viral post shared on social media (archived link) falsely claims that India’s Chief of Defence Staff (CDS), General Anil Chauhan, described Pakistan’s Army as superior and more advanced during Operation Sindoor.

Fact Check:

A reverse image search led us to the full clip on the official channel of The Hindu, in which Chief of Defence Staff General Anil Chauhan inaugurates ‘Ran-Samwad’ 2025 in Mhow, Madhya Pradesh.

At the 1:22:12 timestamp, the clip shows the original segment that was manipulated in the viral video.

Analysis with the Hiya AI Audio tool also showed that the voice in this specific segment had been manipulated. The tool flagged the audio as a deepfake with an authenticity score of 1/100 and identified the fabricated statement as “was to never mess with Pakistan because their army appears to be far more superior”.

Conclusion:

The viral video attributing remarks to CDS General Anil Chauhan about Pakistan’s Army being “superior” is fabricated. The original footage from The Hindu confirms no such statement was made, while forensic analysis using Hiya AI Audio detected clear voice manipulation, identifying the clip as a deepfake with minimal authenticity. Hence, the claim is baseless, misleading, and an attempt to spread disinformation.

- Claim: AI Generated audio of CDS admitting that the Pakistan Army is superior to the Indian Army.

- Claimed On: Social Media

- Fact Check: False and Misleading

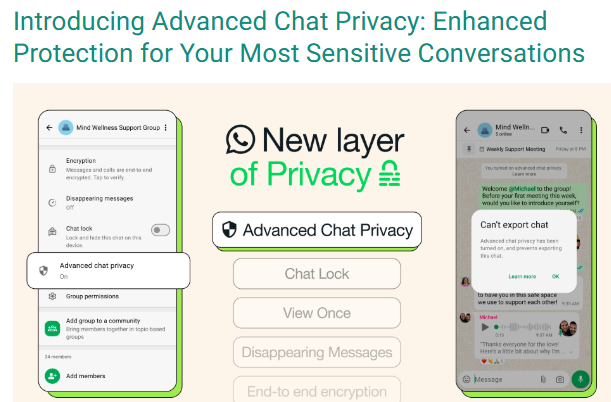

Executive Summary:

A viral social media video falsely claims that Meta AI reads all WhatsApp group and individual chats by default, and that enabling “Advanced Chat Privacy” can stop this. A reverse image search led us to a WhatsApp blog post from April 2025, which states that all personal and group chats remain protected with end-to-end (E2E) encryption and are accessible only to the sender and recipient. Meta AI can interact only with messages explicitly sent to it or tagged with @MetaAI. The “Advanced Chat Privacy” feature is designed to prevent external sharing of chats, not to restrict Meta AI access. Therefore, the viral claim is misleading and factually incorrect, aimed at creating unnecessary fear among users.

Claim:

A viral social media video [archived link] alleges that Meta AI is actively accessing private conversations on WhatsApp, including both group and individual chats, due to the current default settings. The video further claims that users can safeguard their privacy by enabling the “Advanced Chat Privacy” feature, which purportedly prevents such access.

Fact Check:

After running a reverse image search on a keyframe from the viral video, we found a WhatsApp blog post from April 2025 that explains new privacy features to help users control their chats and data. It states that Meta AI can only see messages directly sent to it or tagged with @Meta AI. All personal and group chats are secured with end-to-end encryption, so only the sender and receiver can read them. The "Advanced Chat Privacy" setting helps stop chats from being shared outside WhatsApp, for example by blocking exports or auto-downloads, but it does not affect Meta AI, which is already blocked from reading chats. This shows the viral claim is false and meant to confuse people.

Conclusion:

The claim that Meta AI is reading WhatsApp Group Chats and that enabling the "Advanced Chat Privacy" setting can prevent this is false and misleading. WhatsApp has officially confirmed that Meta AI only accesses messages explicitly shared with it, and all chats remain protected by end-to-end encryption, ensuring privacy. The "Advanced Chat Privacy" setting does not relate to Meta AI access, as it is already restricted by default.

- Claim: Viral social media video claims that WhatsApp Group Chats are being read by Meta AI due to current settings, and enabling the "Advanced Chat Privacy" setting can prevent this.

- Claimed On: Social Media

- Fact Check: False and Misleading

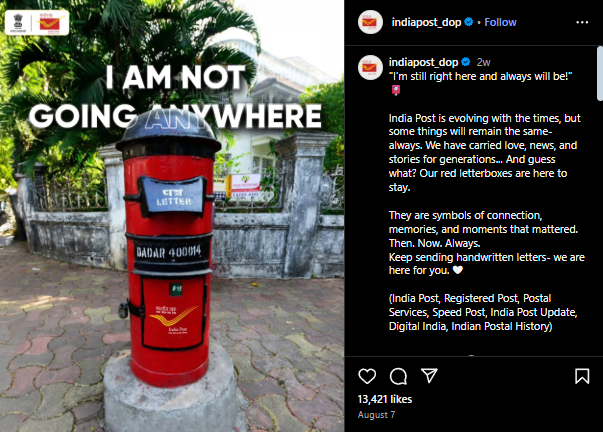

Executive Summary:

A viral social media claim suggested that India Post would discontinue all red post boxes across the country from 1 September 2025, attributing the move to the government’s Digital India initiative. However, fact-checking revealed this claim to be false. India Post’s official X (formerly Twitter) and Instagram handles clarified on 7 August 2025 that red letterboxes remain operational, calling them timeless symbols of connection and memories. No official notice or notification regarding their discontinuation exists on the Department of Posts’ website. This indicates the viral posts were misleading and aimed at creating confusion among the public.

Claim:

A claim is circulating on social media stating that India Post will discontinue all red post boxes across the country effective 1 September 2025. According to the viral posts [archived link], the move is linked to the government’s push towards Digital India, suggesting that traditional post boxes have lost their relevance in the digital era.

Fact Check:

After conducting a reverse image analysis, we found that the official X handle of India Post, in a post dated 7 August 2025, clarified that the viral claim was incorrect and misleading. The post was shared with the caption:

"I’m still right here and always will be!"

India Post is evolving with the times, but some things will remain the same- always. We have carried love, news, and stories for generations... And guess what? Our red letterboxes are here to stay.

They are symbols of connection, memories, and moments that mattered. Then. Now. Always.

Keep sending handwritten letters- we are here for you.

This directly refutes the viral claim about the discontinuation of the red post box from 1 September 2025. A similar clarification was also posted on the official Instagram handle @indiapost_dop on the same date.

Furthermore, a review of the official website of the Department of Posts, Government of India, found no notice or mention of any plan to discontinue the red post boxes. This absence of official communication reinforces the conclusion that the viral claim is a baseless and misleading rumour.

Conclusion:

The claim about the discontinuation of red post boxes from 1 September 2025 is false and misleading. India Post has officially confirmed that the iconic red letterboxes will continue to function as before and remain an integral part of India’s postal services.

- Claim: A viral claim suggests that India Post will remove all red letter boxes across the country beginning 1 September 2025.

- Claimed On: Social Media

- Fact Check: False and Misleading

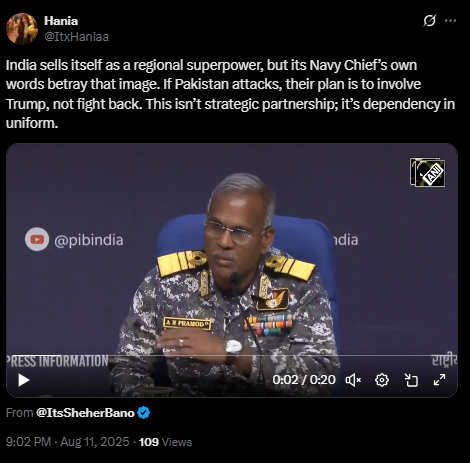

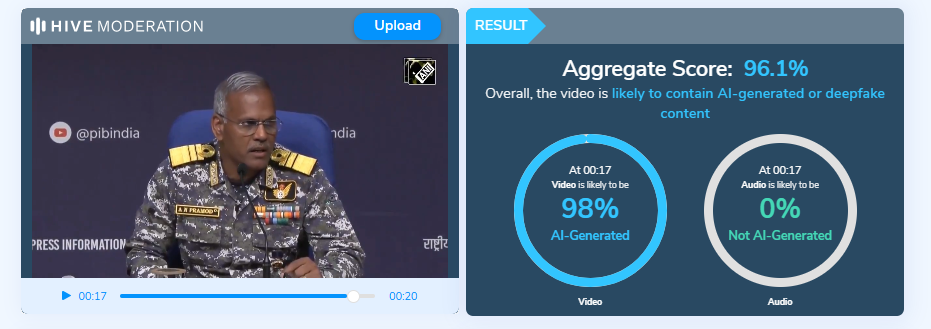

Executive Summary:

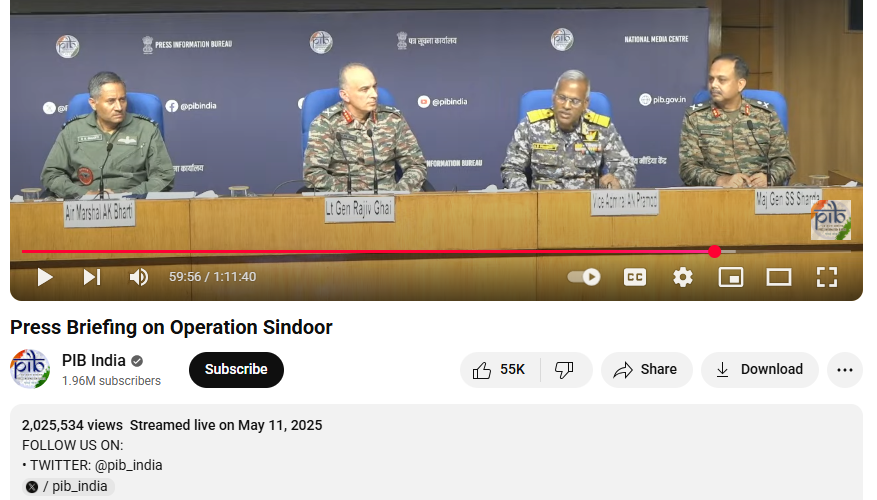

A viral video (archived link) circulating on social media claims that Vice Admiral AN Pramod stated India would seek assistance from the United States and President Trump if Pakistan launched an attack, portraying India as dependent rather than self-reliant. Research traced the extended footage to the Press Information Bureau’s official YouTube channel, published on 11 May 2025. In the authentic video, the Vice Admiral makes no such remark and instead concludes his statement with, “That’s all.” Further analysis using the Hive Moderation AI detection tool indicated that the viral clip was digitally manipulated with AI-generated audio, misrepresenting his actual words.

Claim:

An X user posted the viral video with the caption:

“India sells itself as a regional superpower, but its Navy Chief’s own words betray that image. If Pakistan attacks, their plan is to involve Trump, not fight back. This isn’t strategic partnership; it’s dependency in uniform.”

In the video, the Vice Admiral is heard saying:

“We have worked out among three services, this time if Pakistan dares take any action, and Pakistan knows it, what we are going to do. We will complain against Pakistan to the United States of America and President Trump, like we did earlier in Operation Sindoor.”

Fact Check:

Upon conducting a reverse image search on key frames from the video, we located the full version of the video on the official YouTube channel of the Press Information Bureau (PIB), published on 11 May 2025. In this video, at the 59:57 mark, the Vice Admiral can be heard saying:

“This time if Pakistan dares take any action, and Pakistan knows it, what we are going to do. That’s all.”

Further analysis was conducted using the Hive Moderation tool to examine the authenticity of the circulating clip. The results indicated that the video had been artificially generated, with clear signs of AI manipulation. This suggests that the content was not genuine but rather created with the intent to mislead viewers and spread misinformation.

Conclusion:

The viral video attributing remarks to Vice Admiral AN Pramod about India seeking U.S. and President Trump’s intervention against Pakistan is misleading. The extended speech, available on the Press Information Bureau’s official YouTube channel, contained no such statement. Instead of the alleged claim, the Vice Admiral concluded his comments by saying, “That’s all.” AI analysis using Hive Moderation further indicated that the viral clip had been artificially manipulated, with fabricated audio inserted to misrepresent his words. These findings confirm that the video is altered and does not reflect the Vice Admiral’s actual remarks.

- Claim: Fake viral video claims Vice Admiral AN Pramod said that if Pakistan attacks again, India will complain to the US and President Trump.

- Claimed On: Social Media

- Fact Check: False and Misleading

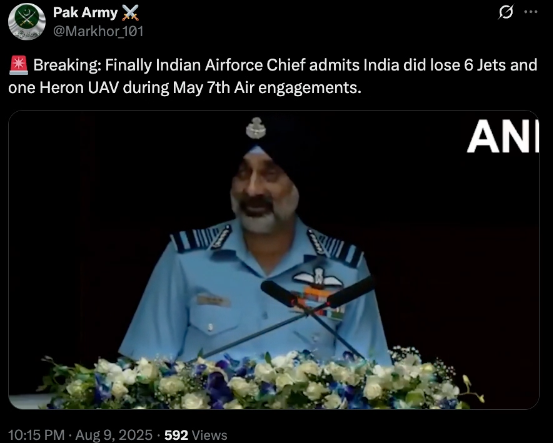

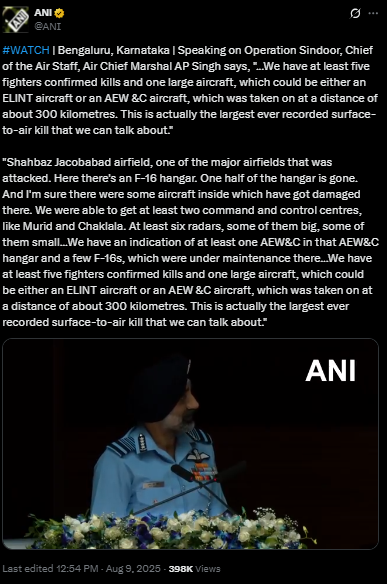

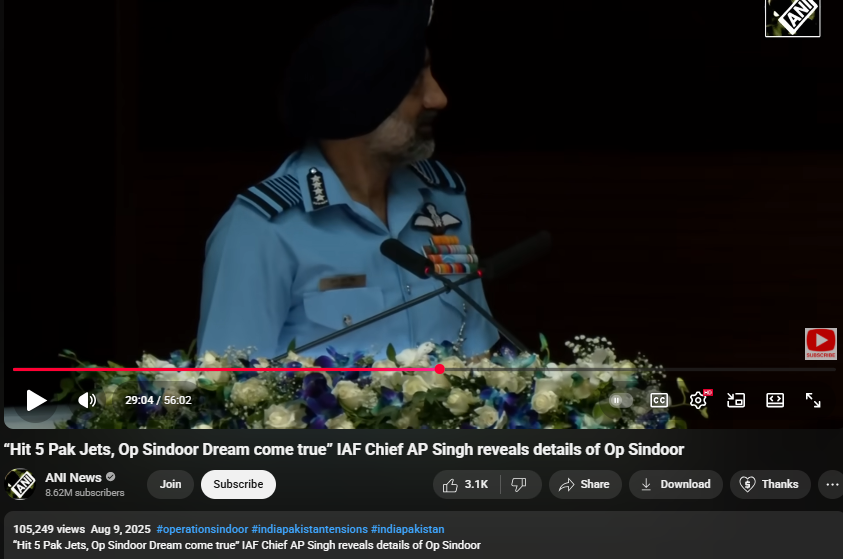

Executive Summary:

A video circulating on social media falsely claims to show Indian Air Chief Marshal AP Singh admitting that India lost six jets and a Heron drone during Operation Sindoor in May 2025. Our research found that the footage was digitally manipulated by inserting an AI-generated voice clone of Air Chief Marshal Singh into his recent speech, which was streamed live on August 9, 2025.

Claim:

A viral video (archived video) (another link) was shared by an X user with the caption, “Breaking: Finally Indian Airforce Chief admits India did lose 6 Jets and one Heron UAV during May 7th Air engagements.” The post presents the clip as showing the Air Chief Marshal admitting these losses during Operation Sindoor.

Fact Check:

A reverse image search on key frames from the video led us to a clip posted by ANI's official X handle. After watching the full clip, we found no mention of the alleged losses.

Further research turned up an extended version of the video on ANI's official YouTube channel, published on 9 August 2025. Speaking at the 16th Air Chief Marshal L.M. Katre Memorial Lecture in Marathahalli, Bengaluru, Air Chief Marshal AP Singh did not mention any loss of six jets or a drone in relation to the conflict with Pakistan. The discrepancies observed in the viral clip suggest that portions of the audio may have been digitally manipulated.

The audio in the viral video, particularly the segment at the 29:05 mark alleging the loss of six Indian jets, appeared to be manipulated and displayed noticeable inconsistencies in tone and clarity.

Conclusion:

The viral video claiming that Air Chief Marshal AP Singh admitted to the loss of six jets and a Heron UAV during Operation Sindoor is misleading. A reverse image search traced the footage to a clip posted by ANI's official X handle, in which no such remarks were made. Further, an extended version on ANI’s official YouTube channel confirmed that, during the 16th Air Chief Marshal L.M. Katre Memorial Lecture, no reference was made to the alleged losses. Additionally, the viral video’s audio, particularly around the 29:05 mark, showed signs of manipulation with noticeable inconsistencies in tone and clarity.

- Claim: Viral Video Claiming IAF Chief Acknowledged Loss of Jets Found Manipulated

- Claimed On: Social Media

- Fact Check: False and Misleading

Executive Summary:

A viral video (archive link) claims General Upendra Dwivedi, Chief of Army Staff (COAS), admitted to losing six Air Force jets and 250 soldiers during clashes with Pakistan. Verification revealed the footage is from an IIT Madras speech, with no such statement made. AI detection confirmed parts of the audio were artificially generated.

Claim:

The claim in question is that General Upendra Dwivedi, Chief of Army Staff (COAS), admitted to losing six Indian Air Force jets and 250 soldiers during recent clashes with Pakistan.

Fact Check:

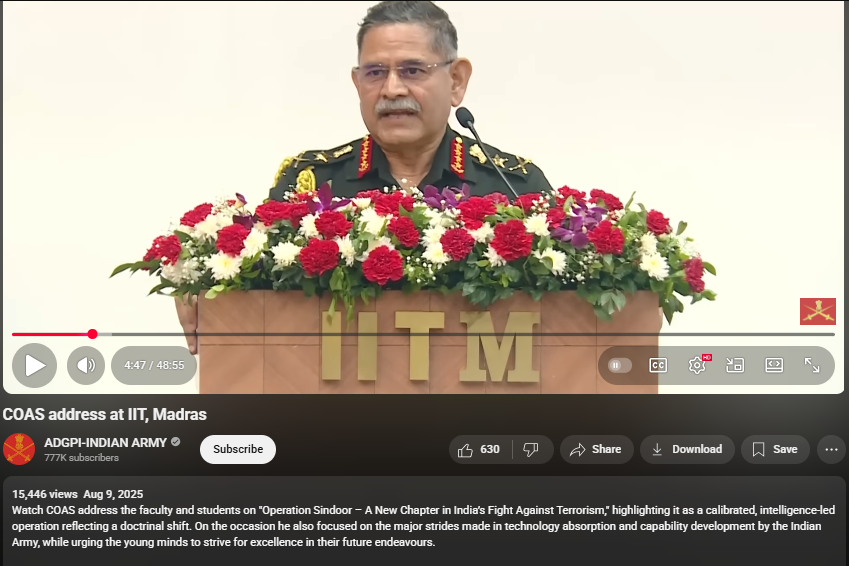

Upon conducting a reverse image search on key frames from the video, it was found that the original footage is from IIT Madras, where the Chief of Army Staff (COAS) was delivering a speech. The video is available on the official YouTube channel of ADGPI – Indian Army, published on 9 August 2025, with the description:

“Watch COAS address the faculty and students on ‘Operation Sindoor – A New Chapter in India’s Fight Against Terrorism,’ highlighting it as a calibrated, intelligence-led operation reflecting a doctrinal shift. On the occasion, he also focused on the major strides made in technology absorption and capability development by the Indian Army, while urging young minds to strive for excellence in their future endeavours.”

A review of the full speech revealed no reference to the destruction of six jets or the loss of 250 Army personnel. This indicates that the circulating claim is not supported by the original source and may contribute to the spread of misinformation.

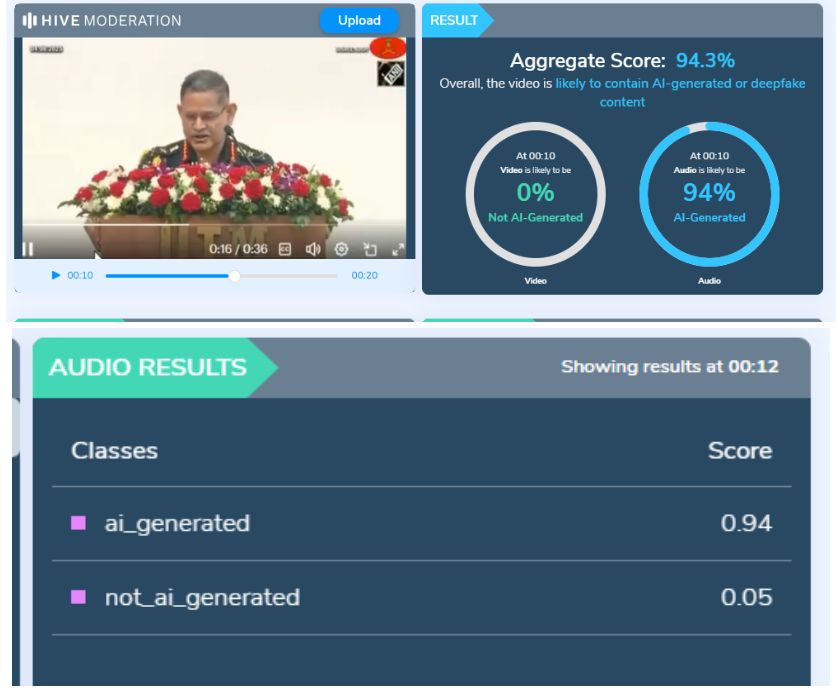

Further, using AI detection tools such as Hive Moderation, we found that portions of the voice track in the viral clip are AI-generated.

Conclusion:

The claim is baseless. The video is a manipulated creation that combines genuine footage of General Dwivedi’s IIT Madras address with AI-generated audio to fabricate a false narrative. No credible source corroborates the alleged military losses.

- Claim: AI-Generated Audio Falsely Claims COAS Admitted to Loss of 6 Jets and 250 Soldiers

- Claimed On: Social Media

- Fact Check: False and Misleading

Executive Summary:

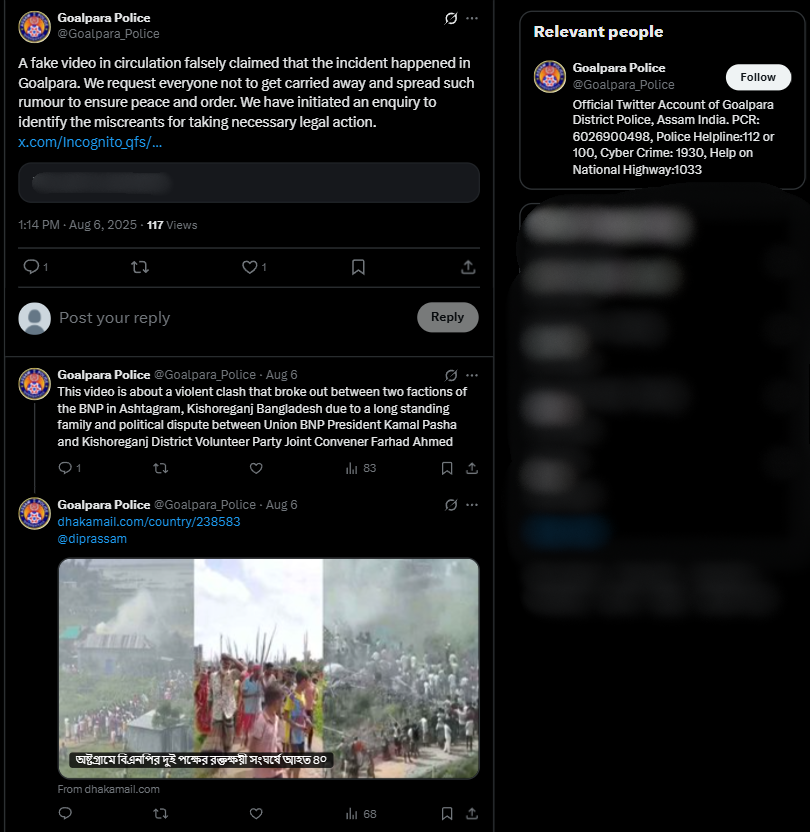

We fact-checked a viral social media video using reverse image search. The video is circulating with the claim that it shows illegal Bangladeshi immigrants in Assam's Goalpara district carrying homemade spears and attacking police or government officials. Our findings show that this claim is false. The video was filmed in Kishoreganj district, Bangladesh, on July 1, 2025, during a political clash between two rival factions of the Bangladesh Nationalist Party (BNP). The footage has been deliberately misrepresented as an incident in Assam to spread false information.

Claim:

The viral video shows illegal Bangladeshi immigrants armed with spears marching in Goalpara, Assam, with the intention of attacking police or officials.

Fact Check:

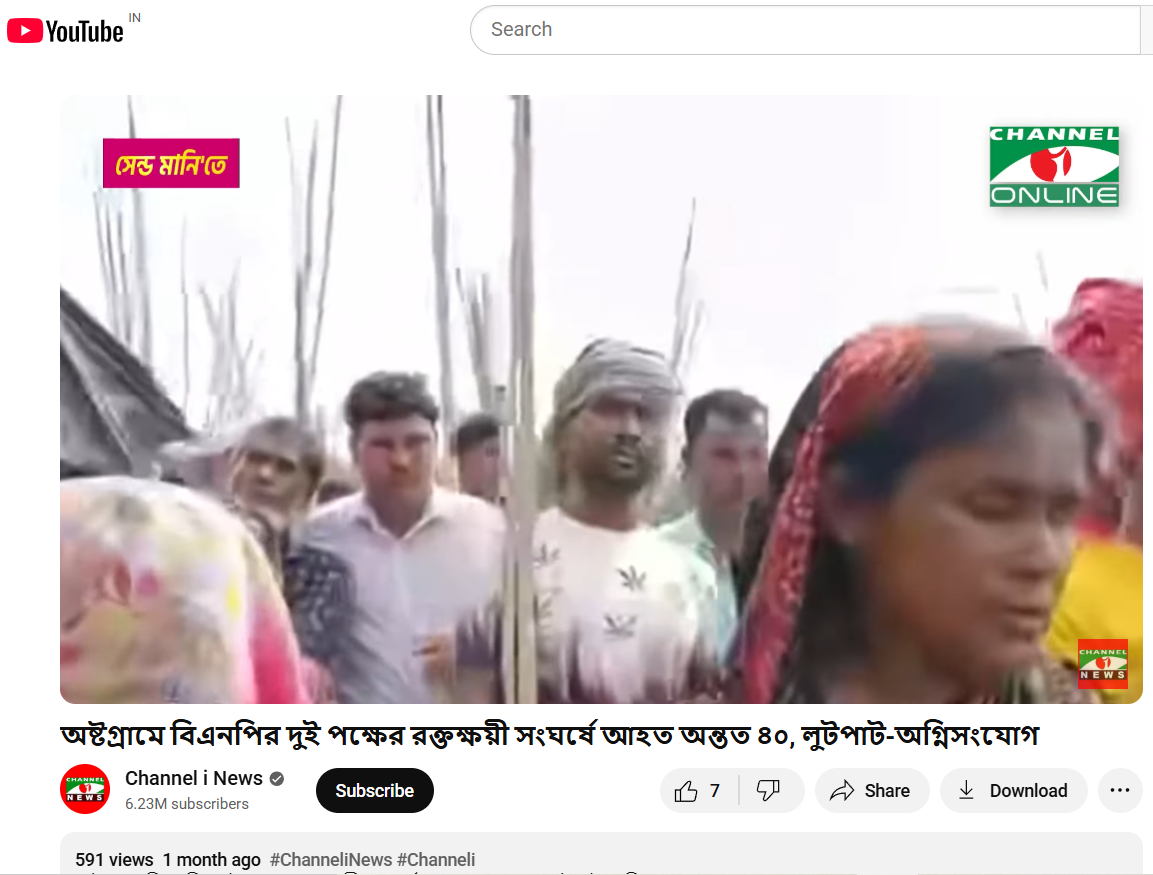

To verify the claim, we performed a reverse image search on key frames from the video and reviewed a number of news articles and social media posts from Bangladeshi sources. These reports confirmed that the events took place in Ashtagram, Kishoreganj district, Bangladesh, during a violent political confrontation between factions of the Bangladesh Nationalist Party (BNP) on July 1, 2025, which resulted in about 40 injuries.

Local media, in particular Channel i News, carried detailed reports of the incident that included images matching the viral video. The individuals seen in the video were wielding makeshift spears in a political fight, not taking part in a cross-border attack. The Assam Police also denied the claim in an official response on X (formerly Twitter), noting that no such incident had occurred in Goalpara or any other district of Assam.

Conclusion:

Based on our research, we conclude that the viral video does not show illegal Bangladeshi immigrants in Assam. It depicts a political clash in Kishoreganj, Bangladesh, on July 1, 2025. The claim attached to the video is completely untrue and misleads the public about both where the incident took place and what it was.

Claim: Video shows illegal migrants with spears moving in groups to assault police!

Claimed On: Social Media

Fact Check: False and Misleading