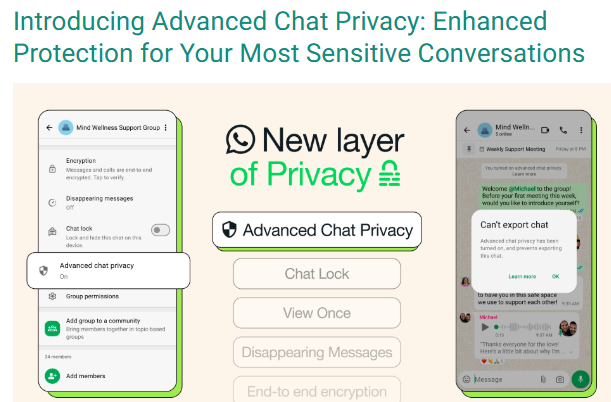

#FactCheck: A viral claim suggests that turning on Advanced Chat Privacy stops Meta AI from reading WhatsApp chats.

Executive Summary:

A viral social media video falsely claims that Meta AI reads all WhatsApp group and individual chats by default, and that enabling “Advanced Chat Privacy” can stop this. A reverse image search led us to a WhatsApp blog post from April 2025. The post states that all personal and group chats remain protected by end-to-end (E2E) encryption and can be read only by the sender and recipient. Meta AI can interact only with messages explicitly sent to it or tagged with @Meta AI. The “Advanced Chat Privacy” feature is designed to prevent chats from being shared outside WhatsApp, not to restrict Meta AI access. The viral claim is therefore misleading and factually incorrect, and it is aimed at creating unnecessary fear among users.

Claim:

A viral social media video [archived link] alleges that Meta AI is actively accessing private conversations on WhatsApp, including both group and individual chats, due to the current default settings. The video further claims that users can safeguard their privacy by enabling the “Advanced Chat Privacy” feature, which purportedly prevents such access.

Fact Check:

A reverse image search on a keyframe of the viral video led us to a WhatsApp blog post from April 2025. The post explains new privacy features that help users control their chats and data. According to the post, Meta AI can see only messages sent directly to it or tagged with @Meta AI. All personal and group chats are secured with end-to-end encryption, so only the sender and recipient can read them. The "Advanced Chat Privacy" setting helps stop chats from being shared outside WhatsApp, for example by blocking chat exports and media auto-downloads. It does not change Meta AI's access, because Meta AI is already unable to read chats. This shows that the viral claim is false and meant to confuse people.

Conclusion:

The claim that Meta AI is reading WhatsApp group chats, and that enabling the "Advanced Chat Privacy" setting can prevent this, is false and misleading. WhatsApp has officially confirmed two points. First, Meta AI accesses only messages explicitly shared with it. Second, all chats remain protected by end-to-end encryption. The "Advanced Chat Privacy" setting is unrelated to Meta AI access, which is already restricted by default.

- Claim: A viral social media video claims that WhatsApp group chats are being read by Meta AI because of current settings, and that enabling the "Advanced Chat Privacy" setting can prevent this.

- Claimed On: Social Media

- Fact Check: False and Misleading

Related Blogs

Introduction

Election misinformation poses a major threat to democratic processes all over the world. Misleading information can spread rampantly during an election cycle, whether intentionally (disinformation) or unintentionally (misinformation). This can confuse voters and affect election results, and it can also incite harassment, bullying, and even physical violence. The attack on the United States Capitol in Washington, D.C., in 2021 is a classic example of this phenomenon: the spread of dis/misinformation snowballed into a riot.

Election Dis/Misinformation

Election dis/misinformation is false or misleading information that influences public understanding of voting, candidates, and election integrity. The internet, particularly social media, is the foremost source of false information during elections. It hosts fabricated news articles, posts or messages with miscaptioned pictures and videos, fake websites, synthetic media and memes, and distorted truths or outright lies.

During the 2024 US elections, for example, fake videos bearing the insignia of the Federal Bureau of Investigation (FBI) circulated online. They alleged voter fraud in collusion with a political party and warned of terrorist attacks. According to polling data collected by Brookings, false claims influenced how voters saw candidates and shaped their opinions on major issues such as the economy, immigration, and crime. They also affected how voters viewed the news media’s coverage of the campaigns. By shaping public perceptions in this way, misinformation can directly influence election outcomes. It can also increase polarisation, degrade the quality of democratic discourse, and cause disenfranchisement. More broadly, pervasive and persistent misinformation during the electoral process can erode public trust in democratic institutions and destabilise social order in the long run.

Challenges In Combating Dis/Misinformation

- Platform Limitations: Current content moderation practices by social media companies struggle to identify and flag misinformation effectively. To address this, further adjustments are needed, including platform design improvements, algorithm changes, enhanced content moderation, and stronger regulations.

- Speed and Spread: Driven by increasingly powerful algorithms, misinformation can now spread at unprecedented speed and scale. Content moderation and fact-checking, by contrast, are reactive and more time-consuming. In addition, incendiary material, which is often the subject of fake news, tends to provoke stronger emotional engagement and therefore spreads faster (virality).

- Geopolitical Influences: Foreign actors seeking to benefit from an erosion of public trust in the USA pose a challenge to the country's governance, administration, and security machinery. In 2018, a federal grand jury indicted 11 Russian military officials for allegedly hacking computers to gain access to files during the 2016 elections. Similarly, high-ranking officials such as White House national security spokesman John Kirby and Attorney General Merrick Garland have alleged Russian involvement in the 2024 elections.

- Lack of a Targeted Plan to Combat Election Dis/Misinformation: In the USA, dis/misinformation is addressed only indirectly, through laws on commercial advertising, fraud, defamation, and similar areas. At the state level, some laws target election dis/misinformation directly, such as California's AB 730, AB 2655, AB 2839, and AB 2355. Federal and state laws criminalize certain false claims about election procedures. However, the Constitution requires “breathing space” that protects even some false statements in election speech. This makes it difficult for the government to regulate election-related falsehoods.

CyberPeace Recommendations

- Strengthening Election Cybersecurity Infrastructure: To build public trust in the electoral process and its institutions, authorities can adopt security measures such as updated data protection protocols, publicized audits of election results, and encryption of voter data. In 2022, the US Congress passed the Electoral Count Reform and Presidential Transition Improvement Act (ECRA). The act introduced three main reforms:
  - only a state’s governor or another designated executive official may submit official election results;
  - state legislatures may not alter the rules for appointing electors after Election Day;
  - it is more difficult for members of Congress to overturn election results.
  Further investment in training, scenario planning, and fact-checking can make the mitigation of election-related malpractices online more robust.

- Regulating Transparency on Social Media Platforms: Measures such as transparent labeling of election-related content and clear disclosure of political advertising to increase accountability can make it easier for voters to identify potential misinformation. This type of transparency is a necessary first step in the regulation of content on social media and is useful in providing disclosures, public reporting, and access to data for researchers. Regulatory support is also required in cases where popular platforms actively promote election misinformation.

- Increasing Focus on ‘Prebunking’ and Debunking Information: ‘Prebunking’ should serve as the primary defence, strengthening public resilience ahead of time rather than addressing misinformation only after it spreads. At the same time, misinformation that does spread needs to be debunked repeatedly through trusted channels. Psychological inoculation techniques against dis/misinformation can be scaled to reach millions on social media through short videos or messages.

- Focused Interventions on Contentious Themes by Social Media Platforms: Because platforms prioritize user growth, the burden of verifying the accuracy of posts largely rests with users. To take on a share of this responsibility, social media platforms can identify critical themes with large-scale impact, such as anti-vax content. They can then censor or ban such content, or tweak their recommendation algorithms to reduce exposure and weaken online echo chambers.

- Addressing Dis/Misinformation through a Socio-Psychological Lens: Dis/misinformation and its impact on domains such as health, education, the economy, and politics need to be understood through psychological and sociological lenses, not only a technological one. A holistic understanding of how false information propagates should inform digital literacy training in schools and public awareness campaigns that empower citizens to evaluate online information critically.

Conclusion

According to the World Economic Forum’s Global Risks Report 2024, the link between misleading or false information and societal unrest will be a focal point during elections in several major economies over the next two years. Democracies must employ a mixed approach of immediate tactical solutions, such as large-scale fact-checking and content labelling, and long-term evidence-backed countermeasures, such as digital literacy, to curb the spread and impact of dis/misinformation.

Sources

- https://www.cbsnews.com/news/2024-election-misinformation-fbi-fake-videos/

- https://www.brookings.edu/articles/how-disinformation-defined-the-2024-election-narrative/

- https://www.fbi.gov/wanted/cyber/russian-interference-in-2016-u-s-elections

- https://indianexpress.com/article/world/misinformation-spreads-fear-distrust-ahead-us-election-9652111/

- https://academic.oup.com/ajcl/article/70/Supplement_1/i278/6597032#377629256

- https://www.brennancenter.org/our-work/policy-solutions/how-states-can-prevent-election-subversion-2024-and-beyond

- https://www.bbc.com/news/articles/cx2dpj485nno

- https://msutoday.msu.edu/news/2022/how-misinformation-and-disinformation-influence-elections

- https://misinforeview.hks.harvard.edu/article/a-survey-of-expert-views-on-misinformation-definitions-determinants-solutions-and-future-of-the-field/

- https://reutersinstitute.politics.ox.ac.uk/sites/default/files/2023-06/Digital_News_Report_2023.pdf

- https://www.weforum.org/stories/2024/03/disinformation-trust-ecosystem-experts-curb-it/

- https://www.apa.org/topics/journalism-facts/misinformation-recommendations

- https://mythvsreality.eci.gov.in/

- https://www.brookings.edu/articles/transparency-is-essential-for-effective-social-media-regulation/

- https://www.brookings.edu/articles/how-should-social-media-platforms-combat-misinformation-and-hate-speech/

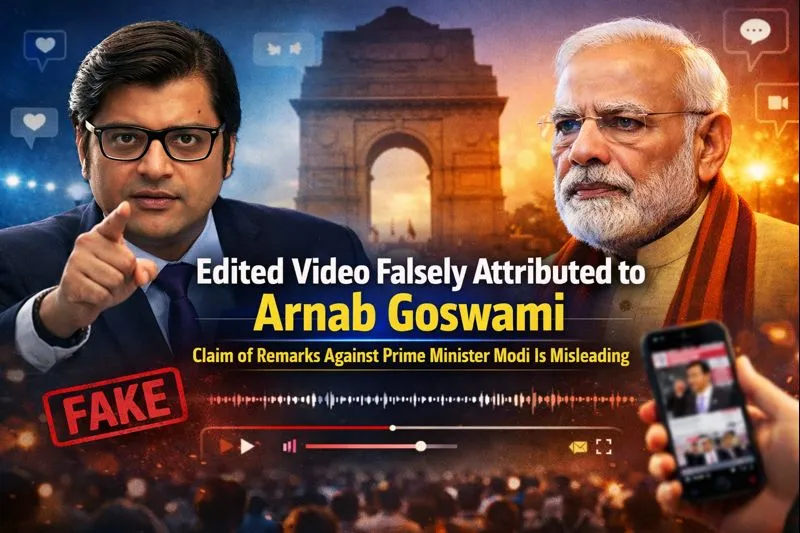

A video has been going viral on social media in recent days in which Republic TV’s Editor-in-Chief and anchor Arnab Goswami can allegedly be heard using objectionable language against Prime Minister Narendra Modi. Users sharing the video claim that Arnab Goswami publicly made controversial remarks about the Prime Minister.

An investigation by the CyberPeace Foundation found this claim to be completely false. Our probe revealed that the viral video has been edited and is being circulated on social media with a misleading narrative. In the original video, Arnab Goswami was not making a personal statement; he was referring to an old statement made by Congress leader Rahul Gandhi.

Viral Claim

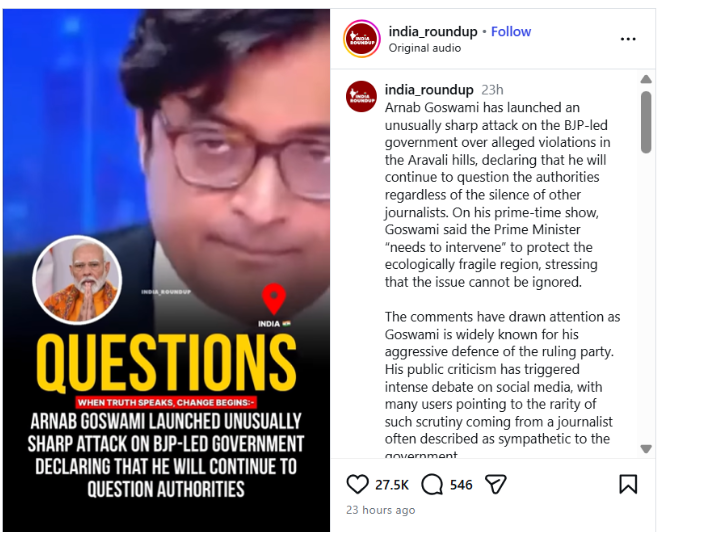

An Instagram user posted this video on 5 January 2026. In the video, a voice resembling Arnab Goswami's is heard saying, “Ye jo Narendra Modi hain, ye chhe mahine baad ghar se nahi nikal paayenge aur Hindustan ke log inhein danda maarenge.”

(Translation: “This Narendra Modi will not be able to step out of his house after six months, and the people of India will beat him with sticks.”)

The post link, its archive link, and screenshots can be seen below:

- Instagram link: https://www.instagram.com/reel/DTHrO7bk7Rf/?igsh=MThzbzBlcm82eWN0ZA%3D%3D

- Archive link: https://archive.ph/oaYsf

Fact Check

To verify the viral claim, we first examined the video using Google Lens. This led us to a video published on 18 July 2024 on the official YouTube channel of Republic Bharat, which turned out to be a longer version of the viral clip.

A careful viewing of the full video made it clear that Arnab Goswami was not making the statement himself. He was referring to a remark that Congress leader Rahul Gandhi had made against Prime Minister Narendra Modi during the 2020 Delhi Assembly elections. This confirms that the viral video was clipped and presented out of context.

The related video link can be seen below: https://www.youtube.com/shorts/KlQV25-3l8s

Next, to verify whether Rahul Gandhi had indeed made such a statement, we ran a custom keyword search on Google. This led us to a video published on 6 February 2020 on the official YouTube channel of India Today.

In this video, recorded during a public event ahead of the 2020 Delhi Assembly elections, Rahul Gandhi is seen sharply attacking Prime Minister Narendra Modi, stating that if the Prime Minister fails to resolve the issue of unemployment in the country, the youth would beat him with sticks.

The video link is given below: https://www.youtube.com/watch?v=t5qCSA5nG9Y

Conclusion

The CyberPeace Foundation’s investigation found this claim to be completely false. The viral video has been edited and is being shared in a misleading context. In the original video, Arnab Goswami was referring to an old statement by Rahul Gandhi. That segment was selectively clipped and presented in a way that falsely suggests Arnab Goswami himself made objectionable remarks against Prime Minister Narendra Modi.

Introduction

The most significant change in Indian cyber law this year was the passage of the Digital Personal Data Protection (DPDP) Act, 2023, by Parliament. The DPDP Act is India's first dedicated legislation for protecting the digital personal data of Indian netizens, and it is broadly analogous to the GDPR in Europe. The act lays down heavy compliance mandates for intermediaries and data fiduciaries. To implement it fully, tech companies will have to make substantial policy, legal, and technical changes. Recently, big tech companies wrote to the Minister and the Minister of State of MeitY seeking an extension of the act's implementation timeline. In other news, the Union Cabinet has given the green light to the much-awaited Memorandum of Cooperation (MoC) with Japan focused on establishing a long-term semiconductor supply chain partnership.

Letter to MeitY

This week, the big tech lobby wrote to the Ministry of Electronics and Information Technology (MeitY) through the trade body Asia Internet Coalition (AIC). The letter, addressed to Minister Ashwini Vaishnaw and Minister of State (MoS) Rajeev Chandrasekhar, recommended a 12-18 month extension for implementing the Digital Personal Data Protection Act. The request comes at a time when the government has been stressing the urgency of implementing the act to safeguard Indian data at the earliest. The trade body represents big names, including Meta, Google, Microsoft, and Apple. Because of the sheer volume of data they process, host, and store, these companies make up the segment likely to be designated as Significant Data Fiduciaries under the DPDP Act. The act is designed largely to prevent the exploitation of Indian netizens' personal data by big tech companies, so these companies form an integral part of the Indian data ecosystem it regulates. The letter highlighted the following concerns about implementing the act:

- Unrealistic Timelines: The AIC said the current implementation timeline is unrealistic for big tech companies. It does not leave them enough time to set up the technological, policy, and legal mechanisms needed to comply with section 5 of the act, which deals with the obligations of a Data Fiduciary and the notice that must be given to Data Principals.

- Technical Requirements: AIC members said the implementation period is much shorter than the time tech companies need to set up and deploy the relevant critical technical infrastructure, standard operating procedures (SoPs), and capacity building. They argued that this would seriously hinder effective implementation of the act.

- Data Rights: The DPDP Act guarantees rights such as the rights to correction, erasure (deletion), and nomination. Big tech companies are unsure how to implement these rights efficiently and say that doing so will require fundamental changes to their platforms' technology architecture. Hence, they are concerned about early implementation of the act.

- Equivalence to the GDPR: The DPDP Act is often considered congruent with the European GDPR. However, it places additional emphasis on aspects such as cross-border data flows and compliance mandates for the right to erasure. Many GDPR-compliant big tech companies will therefore still need to establish more robust mechanisms to comply with the Indian DPDP Act.

Indo-Japan MoC

A Memorandum of Cooperation (MoC) on the Japan-India Semiconductor Supply Chain Partnership was signed in July 2023 between India's Ministry of Electronics and Information Technology (MeitY) and Japan's Ministry of Economy, Trade and Industry (METI). The MoC was placed before the Union Cabinet, chaired by Prime Minister Narendra Modi. It aims to expand collaboration between Japan and India to strengthen the semiconductor supply chain, since semiconductors are critical to the development of industries and digital technologies. The MoC takes effect on the date of signature and remains in force for five years. It envisages bilateral cooperation at the business-to-business (B2B) and government-to-government (G2G) levels on developing a robust semiconductor supply chain and making use of complementary skills. The cooperation is aimed at harnessing indigenous talent and creating more employment opportunities.

MeitY's mandate also includes promoting international cooperation within bilateral and regional frameworks in frontier and emerging fields of information technology. MeitY has entered into Memoranda of Understanding (MoUs), Memoranda of Cooperation (MoCs), and agreements with counterpart organisations and agencies of other nations to foster bilateral collaboration and information sharing. MeitY also aims to build supply chain resilience, which would enable India to become a reliable partner. This MoC strengthens collaboration between Japanese and Indian enterprises, and is a further step towards mutually advantageous semiconductor-related commercial prospects and collaborations between India and Japan.

The “India-Japan Digital Partnership” (IJDP), launched during PM Modi's visit to Japan in October 2018, was created in light of the two countries' complementary and synergistic efforts. Its goal is to advance both existing areas of cooperation and new initiatives within the scope of S&T/ICT cooperation, with a particular emphasis on “Digital ICT Technologies.”

Conclusion

As we move further into the digital age, it is important to stay informed about the latest technological advancements, new forms of cybercrime and threats, and the legal aspects of digital rights and responsibilities. Both the recommendation to extend the implementation of the DPDP Act and the Indo-Japan MoC affect Indian netizens and their interests. Indian netizens therefore need to take a keen interest in protecting the Indian cyber-ecosystem to create a safer future. In the fight against technology-driven threats, our best weapons are technology and awareness, so applying both in our daily digital lives and routines is a must.

References

- https://www.eetindia.co.in/cabinet-approves-moc-on-japan-india-semiconductor-supply-chain-partnership/

- https://www.moneycontrol.com/news/business/startup/trade-body-representing-big-tech-urges-govt-to-extend-dpdp-act-implementation-by-1-5-years-11605431.html
