#FactCheck: Old Thundercloud Video from Lviv, Ukraine (2021) Falsely Linked to Delhi NCR, Gurugram and Haryana

Executive Summary:

A viral video claims to show a massive cumulonimbus cloud over Gurugram, Haryana, and Delhi NCR on 3rd September 2025. However, our research reveals the claim is misleading. A reverse image search traced the visuals to Lviv, Ukraine, dating back to August 2021. The footage matches earlier reports and was even covered by the Ukrainian news outlet 24 Kanal, which published the story under the headline “Lviv Covered by Unique Thundercloud: Amazing Video”. Thus, the viral claim linking the phenomenon to a recent event in India is false.

Claim:

A viral video circulating on social media claims to show a massive cloud formation over Gurugram, Haryana, and the Delhi NCR region on 3rd September 2025. The cloud appears to be a cumulonimbus formation, which is typically associated with heavy rainfall, thunderstorms, and severe weather conditions.

Fact Check:

After conducting a reverse image search on key frames of the viral video, we found matching visuals from videos that attribute the phenomenon to Lviv, a city in Ukraine. These videos date back to August 2021, thereby debunking the claim that the footage depicts a recent weather event over Gurugram, Haryana, or the Delhi NCR region.

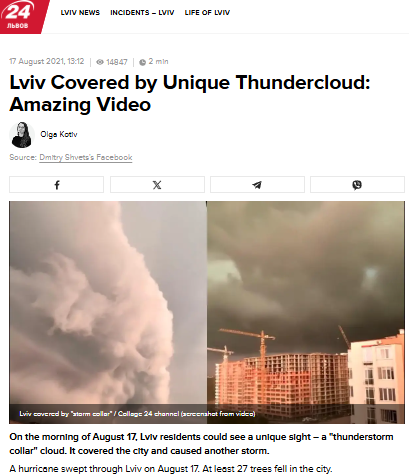

Further research revealed that the Ukrainian news channel 24 Kanal had reported on the Lviv thundercloud in August 2021. The report was published under the headline “Lviv Covered by Unique Thundercloud: Amazing Video” (original in Russian, translated into English).

Conclusion:

The viral video does not depict a recent weather event in Gurugram or Delhi NCR, but rather an old incident from Lviv, Ukraine, recorded in August 2021. Verified sources, including Ukrainian media coverage, confirm this. Hence, the circulating claim is misleading and false.

- Claim: Old Thundercloud Video from Lviv, Ukraine (2021) Falsely Linked to Delhi NCR, Gurugram and Haryana.

- Claimed On: Social Media

- Fact Check: False and Misleading.

Related Blogs

Introduction

There is growing demand for artificial intelligence (AI) laws that limit threats to public safety and protect human rights while leaving room for flexibility and innovation. Most AI policies prioritize the use of AI for the public good. The most compelling case for treating AI innovation as a valid goal of public policy is its promise to improve people's lives by helping to resolve some of the world's most difficult problems and inefficiencies, and to emerge as a transformational technology similar to mobile computing. This blog explores the complex interplay between AI and internet governance from an Indian standpoint, examining the challenges, opportunities, and the necessity for a well-balanced approach.

Understanding Internet Governance

Before examining the connection between the two, let's establish what Internet Governance means. It comprises the regulations, guidelines, and criteria that influence the global operation and management of the Internet. Because the internet is a shared resource, governance is crucial to ensure its accessibility, security, and equitable distribution of benefits.

The Indian Digital Revolution

India has witnessed an unprecedented digital revolution, with a massive surge in internet users and a burgeoning tech ecosystem. The government's Digital India initiative has played a crucial role in fostering a technology-driven environment, making technology accessible to even the remotest corners of the country. As AI applications become increasingly integrated into various sectors, the need for a comprehensive framework to govern these technologies becomes apparent.

AI and Internet Governance Nexus

The intersection of AI and Internet governance raises several critical questions. How should data, the lifeblood of AI, be governed? What role does privacy play in the era of AI-driven applications? How can India strike a balance between fostering innovation and safeguarding against potential risks associated with AI?

- AI's Role in Internet Governance:

Artificial Intelligence has emerged as a powerful force shaping the dynamics of the internet. From content moderation and cybersecurity to data analysis and personalized user experiences, AI plays a pivotal role in enhancing the efficiency and effectiveness of Internet governance mechanisms. Automated systems powered by AI algorithms are deployed to detect and respond to emerging threats, ensuring a safer online environment.

A comprehensive strategy for managing the interaction between AI and the internet is required to stimulate innovation while limiting hazards. Multistakeholder models including input from governments, industry, academia, and civil society are gaining appeal as viable tools for developing comprehensive and extensive governance frameworks.

The usefulness of multistakeholder governance stems from its adaptability and flexibility: it requires collaboration among all players with a possible stake in an issue. The approach is imperfect, but its flaws can be remedied as participants build shared knowledge. As AI advances, this trait will become increasingly important in ensuring that all relevant aspects are covered.

The Need for Adaptive Regulations

While AI's potential for good is essentially endless, so is its potential for damage, whether intentional or unintentional. The technology's highly disruptive nature calls for a strong, human-led governance framework and rules that ensure it is used in a positive and responsible manner. The fast emergence of GenAI, in particular, underscores the critical need for strong frameworks. Concerns about the use of GenAI may strengthen efforts to resolve issues around digital governance and hasten the development of risk management measures.

Several AI governance frameworks have been published around the world in recent years, with the goal of offering high-level guidelines for safe and trustworthy AI development. Multinational organizations have released their own principles, including the OECD's "Principles on Artificial Intelligence" (OECD, 2019), the EU's "Ethics Guidelines for Trustworthy AI" (EU, 2019), and UNESCO's "Recommendations on the Ethics of Artificial Intelligence" (UNESCO, 2021). The advancement of GenAI has since prompted additional recommendations, such as the OECD's "G7 Hiroshima Process on Generative Artificial Intelligence" (OECD, 2023).

Several guidance documents and voluntary frameworks have emerged at the national level in recent years. These include the "AI Risk Management Framework" from the United States National Institute of Standards and Technology (NIST), voluntary guidance published in January 2023, and the White House's "Blueprint for an AI Bill of Rights," a set of high-level principles published in October 2022 (The White House, 2022). These voluntary policies and frameworks are frequently used as guidelines by regulators and policymakers around the world. More than 60 nations in the Americas, Africa, Asia, and Europe had issued national AI strategies as of 2023 (Stanford University).

Conclusion

Monitoring AI will be one of the most daunting tasks confronting the international community in the years ahead. As vital as the need to govern AI is the need to regulate it appropriately. Current AI policy debates too often fall into a false dichotomy of progress versus doom (or geopolitical and economic benefits versus risk mitigation). Instead of reflecting creative thinking, proposed solutions all too often resemble paradigms for yesterday's problems. It is imperative that we foster a relationship between AI and Internet Governance that prioritizes innovation, ethical considerations, and inclusivity. Striking the right balance will empower us to harness the full potential of AI within the boundaries of responsible and transparent Internet Governance, ensuring a digital future that is secure, equitable, and beneficial for all.

References

- The Key Policy Frameworks Governing AI in India - Access Partnership

- AI in e-governance: A potential opportunity for India (indiaai.gov.in)

- India and the Artificial Intelligence Revolution - Carnegie India - Carnegie Endowment for International Peace

- Rise of AI in the Indian Economy (indiaai.gov.in)

- The OECD Artificial Intelligence Policy Observatory - OECD.AI

- Artificial Intelligence | UNESCO

- Artificial intelligence | NIST

Introduction

Have you ever wondered how the internet works? Yes, there are screens and wires, but what’s going on beneath the surface? Every time you open a website, send an email, chat on messaging apps, or stream movies, you’re relying on something you probably don’t think about: the TCP/IP protocol suite. Without it, the internet as we know it wouldn’t exist. Let’s take a look at why this unassuming set of rules allows us to connect to anyone anywhere in the world.

The Problem: Networks That Couldn't Talk to Each Other

The internet is widely called a network of networks. A network is a group of devices that are connected and can share data with each other.

Researchers and governments began building early computer networks in the 1960s and 70s. But as the Cold War intensified, the U.S. military felt the need to establish a robust data-sharing infrastructure through interconnected networks that could withstand attacks. At the time, each network had different standards and protocols, which meant getting networks to communicate wasn’t easy or efficient. One network would have to be subsumed into another. This would lead to major problems in the reliability of data relay, flexibility of including more nodes, scalability of the interconnected network, and innovation.

The Breakthrough: Open Architecture Networking

This changed in the 1970s, when Bob Kahn proposed the concept of open architecture networking. It was a simple but revolutionary idea. He envisioned a system where all networks could talk to each other as equals. In this conceptualisation, all networks, even though unique in design and interface, could connect as peers to facilitate end-to-end communication. End-to-end communication helps deliver data between the source and destination without relying on intermediate nodes to control or modify it. This helps to make data relay more reliable and less prone to errors.

Along with Vint Cerf, Kahn developed a network protocol, the TCP/IP suite, that would go on to enable different networks across satellite, wired, and wireless domains to communicate with one another.

What Is TCP/IP?

TCP/IP stands for Transmission Control Protocol / Internet Protocol. It’s a set of communication rules that allow computers and devices to exchange information across different networks.

It’s powerful because:

- Layered and open architecture: Each function (like data delivery or routing) is handled by a specific layer. This modular design makes it easy to build new technologies like the World Wide Web or streaming services on top of it.

- Decentralisation: There's no single point of control. Any device can connect to another across the internet, making it scalable and resilient.

- Standardisation: TCP/IP works across all kinds of hardware and operating systems, making it truly universal.
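The layered design described in the list above can be illustrated with a minimal Python sketch. It is a simplification under stated assumptions: the function names `encapsulate` and `decapsulate`, the dictionary fields, and the example addresses are hypothetical, and real headers are compact binary structures rather than dictionaries.

```python
def encapsulate(app_data, src_port, dst_port, src_ip, dst_ip):
    """Wrap application data in simplified transport and internet headers.

    All field names here are illustrative assumptions, not real header layouts.
    """
    # Transport layer: adds ports so the right application receives the data.
    segment = {"src_port": src_port, "dst_port": dst_port, "data": app_data}
    # Internet layer: adds addresses so the packet can be routed between networks.
    packet = {"src_ip": src_ip, "dst_ip": dst_ip, "payload": segment}
    return packet


def decapsulate(packet):
    """Unwrap the layers in reverse order and return the application data."""
    segment = packet["payload"]   # internet layer hands its payload upward
    return segment["data"]        # transport layer hands its data to the app


# The application data can be a web request, a mail command, or anything else;
# the lower layers wrap it the same way without knowing what it means.
web_packet = encapsulate("GET / HTTP/1.1", 50000, 80, "192.0.2.1", "198.51.100.7")
mail_packet = encapsulate("EHLO example.org", 50001, 25, "192.0.2.1", "198.51.100.9")

print(decapsulate(web_packet))
print(decapsulate(mail_packet))
```

Because each layer touches only its own header, a new application can be introduced at the top without changing how the lower layers address or carry the data.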

The Core Components

- TCP (Transmission Control Protocol): Ensures that data is delivered accurately and in order. If a piece is lost, TCP retransmits it; if a piece is duplicated, TCP discards the extra copy.

- IP (Internet Protocol): Handles addressing and routing. It decides where each packet of data should go and how it gets there.

- UDP (User Datagram Protocol): A lightweight, connectionless alternative to TCP that does not guarantee delivery or ordering. It is used when speed is more important than reliability, such as for video calls or online gaming.
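The three roles listed above can be sketched in a few lines of Python. This is a toy model under stated assumptions, not how any real network stack is implemented: the routing table and gateway names are hypothetical, and sequence numbers here count whole segments, whereas real TCP numbers individual bytes and also handles retransmission timers and acknowledgements.

```python
import ipaddress

# Hypothetical routing table: (destination network, next hop). Illustrative only.
ROUTES = [
    ("0.0.0.0/0", "gateway-default"),
    ("10.0.0.0/8", "gateway-a"),
    ("10.1.0.0/16", "gateway-b"),
]


def next_hop(destination, routes=ROUTES):
    """IP-style forwarding: pick the most specific (longest-prefix) matching route."""
    addr = ipaddress.ip_address(destination)
    matches = []
    for net, hop in routes:
        network = ipaddress.ip_network(net)
        if addr in network:
            matches.append((network.prefixlen, hop))
    if not matches:
        raise LookupError(f"no route to {destination}")
    return max(matches)[1]  # highest prefix length wins


def udp_style_delivery(arrivals):
    """UDP-like behaviour: hand payloads to the application exactly as they arrive."""
    return [payload for _seq, payload in arrivals]


def tcp_style_delivery(arrivals):
    """TCP-like behaviour: drop duplicates, restore order, stop at the first gap.

    Returns (in-order payloads, first missing sequence number or None).
    """
    received = {}
    for seq, payload in arrivals:
        received.setdefault(seq, payload)  # keep only the first copy of each segment
    delivered = []
    expected = 0
    while expected in received:
        delivered.append(received[expected])
        expected += 1
    # A gap exists if some later segment arrived while `expected` did not.
    missing = expected if any(seq > expected for seq in received) else None
    return delivered, missing


# Segments arrive out of order, one is duplicated, and segment 2 is lost.
arrivals = [(1, "lo "), (0, "Hel"), (1, "lo "), (3, "ld")]
print(next_hop("10.1.2.3"))
print(udp_style_delivery(arrivals))
print(tcp_style_delivery(arrivals))
print(tcp_style_delivery(arrivals + [(2, "wor")]))  # after segment 2 is resent
```

In this sketch the UDP-style receiver passes along whatever arrives, including the duplicate, while the TCP-style receiver withholds data past the gap until the missing segment shows up.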

Why It Matters

The TCP/IP protocol suite introduced a set of standardised guidelines that enable networks to communicate, thereby laying the foundation of the Internet. It has made the Internet global, open, reliable, interoperable, scalable, and resilient. These features have made the Internet the backbone of modern communication systems. So the next time you open a browser or send a message, remember: it’s TCP/IP quietly making it all possible.

References

- https://www.techtarget.com/searchnetworking/definition/ARPANET

- https://www.internetsociety.org/internet/history-internet/brief-history-internet/

- https://www.geeksforgeeks.org/tcp-ip-model/

- https://www.oreilly.com/library/view/tcpip-network-administration/0596002971/ch01.html

Executive Summary:

A video circulating online claims to show a man being assaulted by BSF personnel in India for selling Bangladesh flags at a football stadium. The footage has stirred strong reactions and cross-border concerns. However, our research confirms that the video does not show any incident that occurred in India. The content has been wrongly framed and shared with misleading claims, misrepresenting the actual incident.

Claim:

It is being claimed through a viral post on social media that a Border Security Force (BSF) soldier physically attacked a man in India for allegedly selling the national flag of Bangladesh in West Bengal. The viral video further implies that the incident reflects political hostility towards Bangladesh within Indian territory.

Fact Check:

After conducting thorough research, including visual verification, reverse image searching, and confirming elements in the video background, we determined that the video was filmed outside Bangabandhu National Stadium in Dhaka, Bangladesh, during the crowd buildup prior to an AFC Asian Cup match between Bangladesh and Singapore.

Further research confirmed that the man seen being assaulted is a local flag-seller named Hannan. Eyewitness accounts and local news sources indicate that Bangladeshi Army officials were present to manage the crowd on the day in question. During the crowd-control effort, a soldier assaulted the vendor with excessive force. The incident sparked outrage, and the Army responded by identifying the officer responsible and taking disciplinary measures. The victim was reported to have been offered reparations for the misconduct.

Conclusion:

Our research confirms that the viral video does not depict any incident in India. The claim that a BSF officer assaulted a man for selling Bangladesh flags is completely false and misleading. The real incident occurred in Bangladesh, and involved a local army official during a football event crowd-control situation. This case highlights the importance of verifying viral content before sharing, as misinformation can lead to unnecessary panic, tension, and international misunderstanding.

- Claim: Viral video claims BSF personnel thrashed a person selling the Bangladesh national flag in West Bengal

- Claimed On: Social Media

- Fact Check: False and Misleading