#FactCheck - Viral Video of Aircraft Carrier Destroyed in Sea Storm Is AI-Generated

Social media users are widely sharing a video claiming to show an aircraft carrier being destroyed after getting trapped in a massive sea storm. In the viral clip, the aircraft carrier can be seen breaking apart amid violent waves, with users describing the visuals as a “wrath of nature.”

However, CyberPeace Foundation’s research has found this claim to be false. Our fact-check confirms that the viral video does not depict a real incident and has instead been created using Artificial Intelligence (AI).

Claim:

An X (formerly Twitter) user shared the viral video with the caption, “Nature’s wrath captured on camera.” The video shows an aircraft carrier appearing to be devastated by a powerful ocean storm. The post can be viewed here, and its archived version is available here.

https://x.com/Maailah1712/status/2011672435255624090

Fact Check:

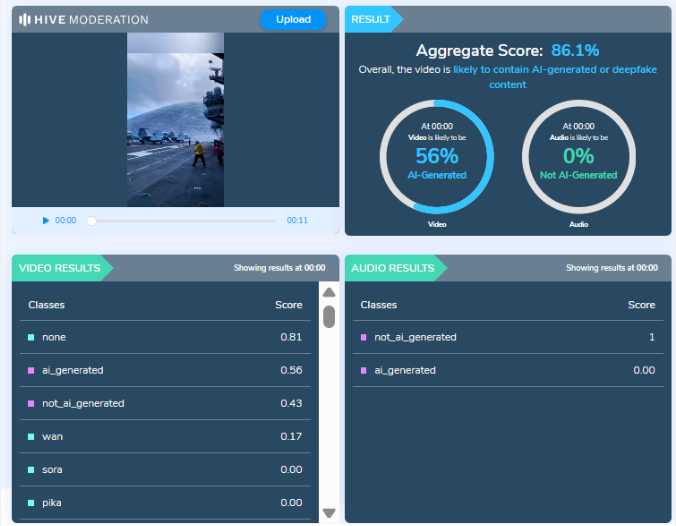

At first glance, the visuals shown in the viral video appear highly unrealistic and cinematic, raising suspicion about their authenticity. The exaggerated motion of waves, structural damage to the vessel, and overall animation-like quality suggest that the video may have been digitally generated. To verify this, we analyzed the video using AI detection tools.

The analysis conducted by Hive Moderation, a widely used AI content detection platform, indicates that the video is highly likely to be AI-generated. According to Hive’s assessment, there is nearly a 90 percent probability that the visual content in the video was created using AI.

Conclusion

The viral video claiming to show an aircraft carrier being destroyed in a sea storm is not related to any real incident. It is an AI-generated video that is being falsely shared online as footage of a real natural disaster. By circulating such fabricated visuals without verification, social media users are contributing to the spread of misinformation.

Related Blogs

Executive Summary

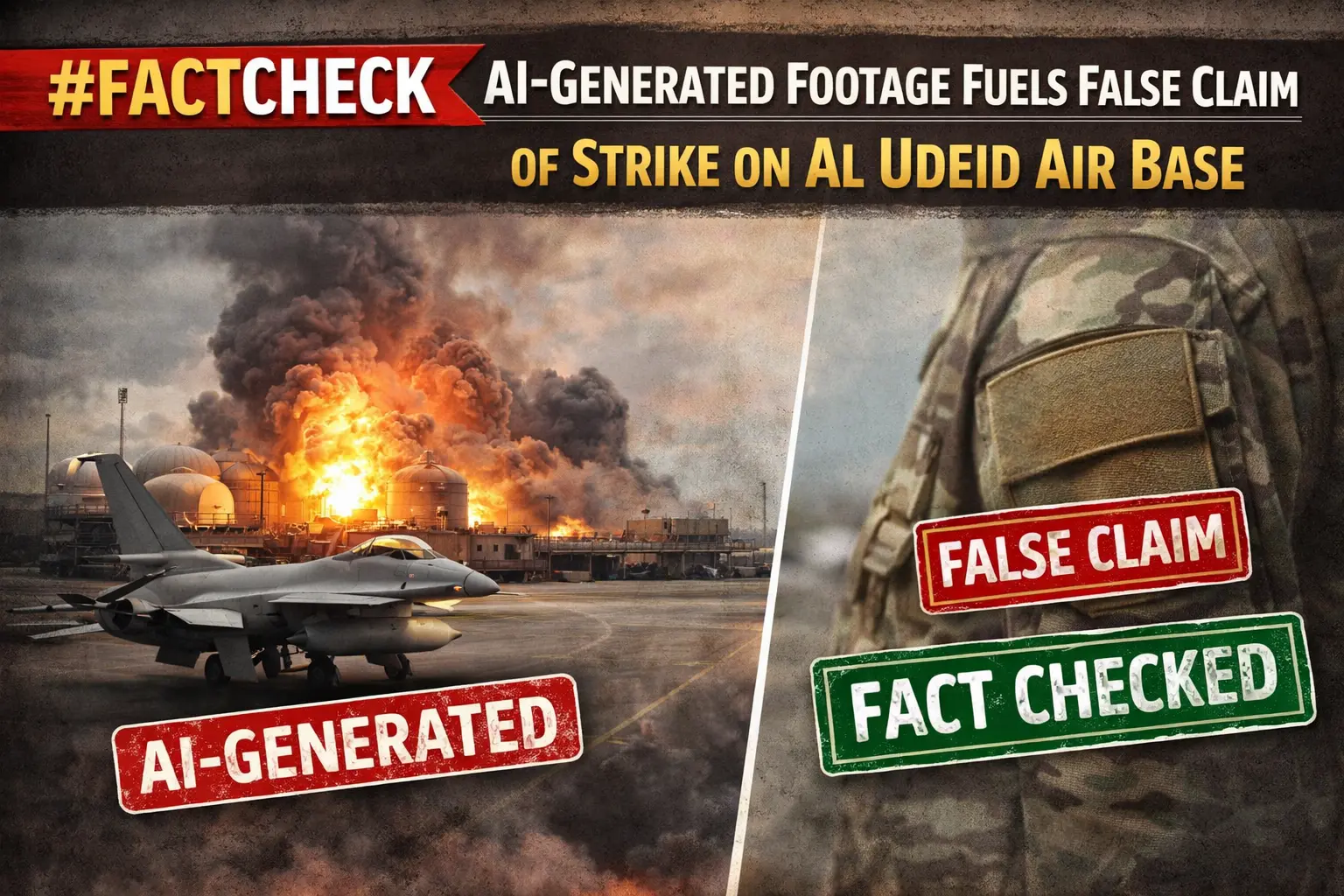

The ongoing US-Israel conflict with Iran has entered its third week. During this period, Iran reportedly targeted the US military base at Al Udeid in Qatar. Amid this, a video is going viral on social media showing people, vehicles, and chaos following an alleged attack. Some users are sharing it as footage of an Iranian missile strike on the Al Udeid Air Base. However, research by CyberPeace found that the viral video is not real but AI-generated.

Claim:

An Instagram user “thenewscartel” shared the video on March 17, 2026, with the caption: “Al Udeid Air Base, Qatar (March 16, 2026): Iran launched ballistic missiles and drones at the US military’s largest Middle East base near Doha as retaliation for US-Israel strikes in Tehran. Qatar’s Defense Ministry confirmed multiple launches. Most were intercepted by Qatari air defense. One missile landed near the base or in an uninhabited area. No casualties or major damage reported. Explosions were heard in Doha, and smoke was seen in the sky.”

Fact Check:

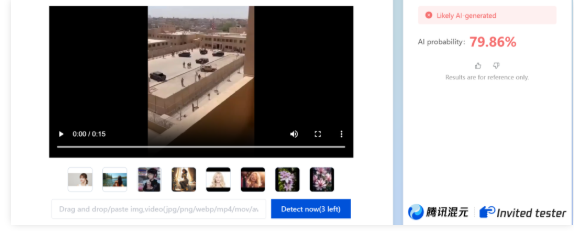

To verify the claim, we closely examined the viral video. We observed multiple visual inconsistencies—one person appears to be walking in reverse, another disappears and reappears, and the body shapes of people distort as they begin to run. These anomalies strongly indicate AI manipulation. We then analyzed the video using the AI detection tool Zhuque AI, which indicated an approximately 80 percent likelihood that the video is AI-generated.

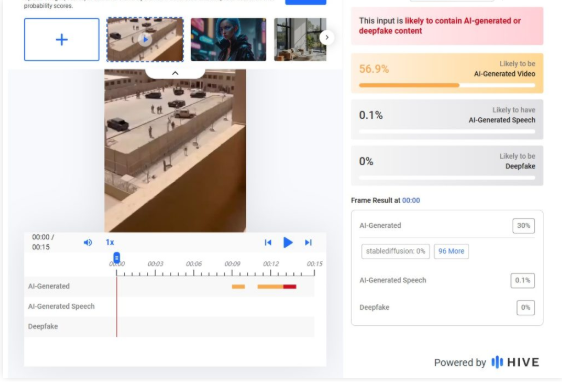

Further analysis using Hive Moderation showed around a 57 percent probability of the video being AI-generated.
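The two readings above (roughly 80 percent from Zhuque AI and 57 percent from Hive Moderation) can be put side by side with a small helper. This is only a minimal sketch: it assumes the scores are typed in by hand as probabilities, and the function name `summarise_scores` and the 0.5 cut-off are illustrative assumptions rather than part of either tool.

```python
from statistics import mean


def summarise_scores(scores: dict[str, float], flag_at: float = 0.5) -> dict:
    """Summarise AI-likelihood scores (0.0 to 1.0) reported by several detectors.

    `flag_at` is an assumed cut-off for illustration, not a value
    published by any detection tool.
    """
    if not scores:
        raise ValueError("at least one detector score is required")
    for name, value in scores.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"score for {name!r} must be between 0 and 1")

    # Detectors whose score meets or exceeds the assumed cut-off.
    flagged = sorted(name for name, value in scores.items() if value >= flag_at)
    return {
        "mean": mean(scores.values()),
        "min": min(scores.values()),
        "max": max(scores.values()),
        "flagged_by": flagged,
        "all_flag": len(flagged) == len(scores),
    }


# Scores as reported in this fact-check, entered manually.
summary = summarise_scores({"Zhuque AI": 0.80, "Hive Moderation": 0.57})
print(summary)
```

A gap this wide between detectors is one reason automated scores are best read alongside the manual observations described earlier, such as people walking in reverse or distorting as they run.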

Conclusion:

Our research found that the viral video being shared as footage of an Iranian attack on the US military base at Al Udeid in Qatar is AI-generated and not related to any real incident.

Executive Summary:

Social media is buzzing with a link that claims to offer an iPhone 15 as a gift from LuLu Hypermarket, presented as part of Holi celebrations. This article examines the deceptive tactics behind this fraudulent offer and provides guidance on recognizing and avoiding such scams.

False Claim:

The link being shared is misleading and falsely claims that LuLu Hypermarket is giving away free iPhone 15 phones. This is taking advantage of the Holi festival to trick unsuspecting people. When users click on the link, they are redirected multiple times and end up on a page with LuLu Hypermarket's photo and some simple questions. Fake comments are also used to make the offer seem genuine, but it is all a deception.

The Deceptive Scheme:

The plan uses psychological tricks by linking the offer to a famous brand and a popular celebration. The landing page's simplicity and phoney comments try to make users trust it and feel like they need to act fast, so they'll join the scam.

The Fraudulent Campaign Analysed:

The scammers are using psychological tactics to manipulate people. They're exploiting the trust people have in LuLu Hypermarket and the excitement around the new iPhone 15 during the Holi festival. The fake questionnaire serves no real purpose, but it's a way to engage users and make the scam seem legitimate. Testimonials claiming people have successfully received the iPhone 15 are also fake, designed to create a false sense of credibility. Users are prompted to select a "gift box," which adds an interactive element to draw them in further. When a user selects a box, they're falsely congratulated on winning the iPhone 15, giving them a sense of accomplishment. Finally, users are urged to share the link via WhatsApp to "claim" the gift, spreading the scam to more potential victims.

What Do We Analyse?

- We analyse the deceptive tactics employed by the scam, including psychological manipulation, false engagement techniques, and fake testimonials, all aimed at convincing users of the offer's legitimacy.

Link: https://sophisticateddistort[.]top/nTiwpTTTT526?llue1696559991144

- It is important to note that, at this point, LuLu Hypermarket has made no official announcement or confirmation of any such offer. People should therefore be very careful when they encounter such messages, because they are often used as lures in phishing attacks or misinformation campaigns. Before engaging with or forwarding such claims, it is always advisable to verify the information with trustworthy sources, both to protect oneself online and to prevent the spread of false information.

- The campaign is hosted on a third-party domain rather than on any official LuLu Hypermarket website, which raises suspicion. The domain was also registered only last year.

- The intercepted request revealed a backend connection to Baidu, a China-linked analytics service.

- Domain Name: sophisticateddistort.top

- Registry Domain ID: D20230629G10001G_04181852-top

- Registrar WHOIS Server: whois.west263.com

- Registrar URL: www.west263.com

- Updated Date: 2023-07-01T02:55:34Z

- Creation Date: 2023-06-29T06:05:00Z

- Registry Expiry Date: 2024-06-29T06:05:00Z

- Registrar: Chengdu west dimension digital

- Registrant State/Province: Shan Xi

- Registrant Country: CN (China)

- Name Server: curt.ns.cloudflare.com

- Name Server: harlee.ns.cloudflare.com

Note: The cybercriminals used Cloudflare to mask the actual IP address of the fraudulent website.
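The registration dates in the WHOIS record above can be turned into concrete numbers, which makes the "recently registered, short-lived domain" signal easier to see. The following is a minimal sketch using only the Python standard library. The three timestamps are copied from the record, while the helper names `parse_whois_date` and `domain_age_days` are illustrative assumptions.

```python
from datetime import datetime, timezone


def parse_whois_date(value: str) -> datetime:
    """Parse a WHOIS timestamp such as '2023-06-29T06:05:00Z' as UTC."""
    return datetime.strptime(value, "%Y-%m-%dT%H:%M:%SZ").replace(tzinfo=timezone.utc)


def domain_age_days(created: datetime, as_of: datetime) -> int:
    """Whole days between domain creation and a chosen reference date."""
    return (as_of - created).days


# Values copied from the WHOIS record for sophisticateddistort.top.
created = parse_whois_date("2023-06-29T06:05:00Z")
updated = parse_whois_date("2023-07-01T02:55:34Z")
expires = parse_whois_date("2024-06-29T06:05:00Z")

# Length of the registration period in days.
registration_days = (expires - created).days
print("Registration period (days):", registration_days)

# Age of the domain as of the moment the script runs.
print("Domain age today (days):", domain_age_days(created, datetime.now(timezone.utc)))
```

A registration of only about one year fits the pattern of a throwaway domain, although on its own it does not prove that a site is fraudulent.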

CyberPeace Advisory:

- Do not open messages received on social platforms if they seem suspicious or unsolicited. Your own discretion is your first line of defence.

- Falling prey to such scams could compromise your entire system, potentially granting unauthorised access to your microphone, camera, text messages, contacts, pictures, videos, banking applications, and more. Keep your cyber world safe against any attacks.

- Never reveal sensitive data such as your login credentials and banking details to entities you have not verified as reliable.

- Before sharing any content or clicking on links within messages, always verify the legitimacy of the source. Protect not only yourself but also those in your digital circle.

- To confirm whether an offer or message is genuine, check official sources or contact the company directly. Verify the authenticity of alluring offers before taking any action.

Conclusion:

During the festive season, as we engage in merrymaking and online activities, we should be mindful of fraudsters' exploitation strategies. The illegitimate LuLu Hypermarket offer of an iPhone 15 is one such instance. With knowledge and care, we can recognise and report suspicious activity and avoid becoming victims of this kind of fraud. Keep in mind that legitimate offers come from trustworthy sources, and that an offer that looks too good to be true is most likely a scam.

Introduction

There has been a recent surge of misinformation across social media claiming that every Indian is to receive an allowance of ₹2,000 under a "Prime Minister's scheme." The message, which has circulated widely on almost all platforms, including WhatsApp, Facebook, and Telegram, urges users to click on an unfamiliar link to claim the allowance in their bank accounts.

It would seem like a very attractive offer, especially at a time when common citizens are coping with rising costs of living. But upon further examination, it turns out to be an outright online scam. NewsMobile fact-checked the claim and confirmed that no such scheme exists. Thus, the message circulating is a scam that aims to mislead common citizens.

This incident is not isolated. Over the years, fraudulent posts falsely offering benefits in the name of the government or well-known brands have been on the rise. These scams are not just misinformation: they take advantage of trust and lure people into clicking links and sharing personal information, which poses serious risks to their financial and personal security.

Anatomy of the Viral PM Scheme Scam

The viral message, written in Hindi, read:

“सभी नागरिकों को PM योजना के तहत दो हज़ार रुपए का भत्ता प्रदान किया गया है अपने bank खाते में प्राप्त करने के लिए click करें."

(English: “All citizens have been provided an allowance of ₹2000 under the PM scheme. Click to receive it in your bank account.”)

Beneath this was a suspicious link that, when checked during the investigation, turned out to be non-functional and invalid. A review of government websites and official social media handles found no announcement of any such allowance.

This is a typical phishing attempt, in which a scammer uses urgency and temptation to lure citizens into clicking a malicious link. While the link may no longer be active, it could very well have once redirected users to websites that harvest personal information such as Aadhaar numbers, bank details, or login credentials.

The Broader Problem: Fake Government Scheme Scams

The ₹2,000 PM scheme hoax is part of a wider trend in which scammers leverage the credibility of government initiatives to defraud citizens. In the past, fake promises have involved free gas cylinders, cash allowances, subsidised rations, and even job opportunities.

During the COVID-19 pandemic, for instance, fake vaccination registration links and so-called relief scheme offers went viral, preying on the fears and vulnerabilities of ill-informed citizens. Likewise, false schemes associated with reputed companies such as Amazon, Flipkart, TATA Group, and Hermès have also gone viral, promising free gifts or allowances.

What makes government-related scams especially dangerous is that they exploit people's trust in authority. Ordinary citizens are predisposed to believe in a "PM scheme" or "Government Yojana" because of the credibility such announcements carry.

How These Scams Operate

These scams are designed to deceive and, ultimately, to profit from defrauding the victim. Fraudsters first create clickbait messages that are carefully crafted to resemble official communications; these often carry government logos and mix Hindi and English text with phrases such as "Pradhan Mantri Yojana" to sound legitimate. The messages then redirect users to bogus websites that closely resemble government portals and ask unsuspecting users to enter personal information. Once the scammers have obtained this data, they use it for identity theft or bank fraud, or sell it on the dark web. Social engineering plays a large role: terms of urgency such as "limited time" and "last chance" push targets to act without thinking. For maximum reach, victims are also asked to forward the message to friends and family, causing the scam to go viral across WhatsApp, Facebook, and Telegram.
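The traits described above (scheme branding, urgency wording, an embedded link, and a request to forward) can be expressed as a simple checklist. The sketch below is a rough illustration only: the phrase lists are drawn from this article, the function name `message_red_flags` and the flag labels are assumptions, and plain keyword matching will miss reworded messages and can flag harmless ones.

```python
import re

# Phrases taken from the examples in this article; a real filter would need far more.
URGENCY_PHRASES = ("limited time", "last chance")
SCHEME_PHRASES = ("pradhan mantri yojana", "pm scheme", "pm योजना")


def message_red_flags(message: str) -> list[str]:
    """Return labels for the warning signs found in a forwarded message."""
    text = message.lower()
    flags = []
    if any(phrase in text for phrase in SCHEME_PHRASES):
        flags.append("government_scheme_claim")
    if any(phrase in text for phrase in URGENCY_PHRASES):
        flags.append("urgency")
    if re.search(r"https?://|www\.", text):
        flags.append("contains_link")
    if re.search(r"\b(forward|share)\b", text):
        flags.append("asks_to_forward")
    return flags


# Hypothetical message written for illustration, not a real sample.
sample = (
    "Last chance! Claim Rs 2000 under Pradhan Mantri Yojana: "
    "http://example.com/claim. Forward to 10 friends."
)
print(message_red_flags(sample))
```

A message that triggers several flags at once deserves verification through official channels before anyone clicks or forwards it.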

Risks to Citizens

The risks of falling prey to these scams are serious and varied. The most immediate is financial loss: divulging bank account details, an OTP, or credentials can give attackers the power to drain funds. Identity theft is also common, with stolen Aadhaar, PAN, or other personal information later used for fake loans or SIM activations. Beyond monetary losses, opening malicious links can infect devices with spyware or ransomware, compromising privacy and security. Victims also often experience psychological distress from feelings of betrayal or humiliation at being deceived, which discourages them from reporting and in turn allows such scams to go undetected.

Best Practices for Prevention

It is prudent to practise good cyber hygiene and stay alert to such scams. Citizens should verify every claim against government-authorised websites such as https://www.mygov.in or through ministry press statements before believing it. One should not click on suspicious links offering money, gifts, or subsidies. Red flags such as poor grammar, an unofficial domain name, or too-good-to-be-true offers can help identify a scam in time. On the technical side, two-factor authentication, up-to-date antivirus software, and secured devices can drastically lower the threat. Reporting is equally important: always report suspicious activity to cybercrime.gov.in or the nearest cyber cell so that authorities can trace patterns and issue advisories. Finally, sharing verified fact-checks within one's circles helps build resilience against misinformation and scams.
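The "unofficial domain name" red flag can be checked mechanically by comparing a link's hostname with a list of known official domains. The following is a minimal sketch: the two domains come from this article, while the function names, the allowlist itself, and the lookalike address are illustrative assumptions. A real check would need a maintained list of official portals.

```python
from urllib.parse import urlsplit


def hostname_of(url: str) -> str:
    """Extract the lowercase hostname from a URL, with or without a scheme."""
    if "://" not in url:
        # Without a scheme, urlsplit needs a leading '//' to see a network location.
        url = "//" + url
    return (urlsplit(url).hostname or "").lower()


def is_official(url: str, official_domains: set[str]) -> bool:
    """True if the hostname is an official domain or a subdomain of one."""
    host = hostname_of(url)
    return any(
        host == domain or host.endswith("." + domain)
        for domain in official_domains
    )


# Assumed allowlist for illustration, built from the sites named in this article.
OFFICIAL = {"mygov.in", "cybercrime.gov.in"}

print(is_official("https://www.mygov.in", OFFICIAL))
# Hypothetical lookalike: the official name appears only as a prefix of another domain.
print(is_official("https://mygov.in.example.com/claim", OFFICIAL))
```

Matching on the end of the hostname, rather than searching for the official name anywhere in the link, is what catches lookalike addresses that merely embed a trusted name.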

Policy and Community Role

While individual awareness is important, collective action must be taken against fake government scheme scams. Platforms such as WhatsApp, Facebook, and X (Twitter) must strengthen their mechanisms for detecting fraudulent messages. Meanwhile, government bodies must periodically alert citizens to new scams through their official handles and schemes, and through community outreach.

Civil society and fact-checking agencies play an important role in debunking viral hoaxes. This work must be amplified to reach people in regional languages, because forwarded messages tend to be trusted much more in those communities.

Conclusion

The viral ₹2,000 PM scheme scam is a reminder that not everything that goes viral online can be trusted. Scammers keep inventing new schemes to gain trust, spread misinformation, and defraud innocent citizens.

The best defence is awareness and alertness. Citizens must verify any claim through official channels before clicking on a link, sharing their data, or acting on it in any way. By practising proper cyber hygiene and avoiding suspicious messages, we can reduce the impact of these scams and collaboratively build a secure digital environment.

As India moves further into a digital ecosystem, empowering citizens and building resilience to cyber fraud is not just a matter of individual security but a national priority.

References

- https://www.newsmobile.in/nm-fact-checker/fact-check-viral-post-claiming-pm-scheme-offering-rs-2000-allowance-is-a-scam/

- https://timesofindia.indiatimes.com/business/financial-literacy/investing/beware-of-deepfake-scams-fraudsters-using-ai-videos-to-push-schemes-promising-unrealistic-returns-red-flags-to-watch-out-for/articleshow/124085155.cms

- https://www.business-standard.com/finance/personal-finance/invest-rs-21-000-to-earn-rs-20-lakh-monthly-viral-videos-of-fm-are-fake-125082000517_1.html

- https://www.pib.gov.in/PressReleasePage.aspx?PRID=2124728