Navigating the Path to CyberPeace: Insights and Strategies

Featured #factCheck Blogs

Executive Summary

A video circulating on social media claims that during a summit in Beijing, Donald Trump was seen peeking into Chinese President Xi Jinping’s “private notebook” while Xi briefly stepped away. However, a fact-check by CyberPeace Research Wing found the claim to be baseless. A review of the full event footage clearly shows that the folder in question belonged to Donald Trump himself, not Xi Jinping. The viral interpretation is therefore misleading.

Claim

An X user shared the clip alleging, “Trump caught sneaking a peek at Xi Jinping’s private notebook during a Beijing banquet while Xi stepped away.”

Fact Check

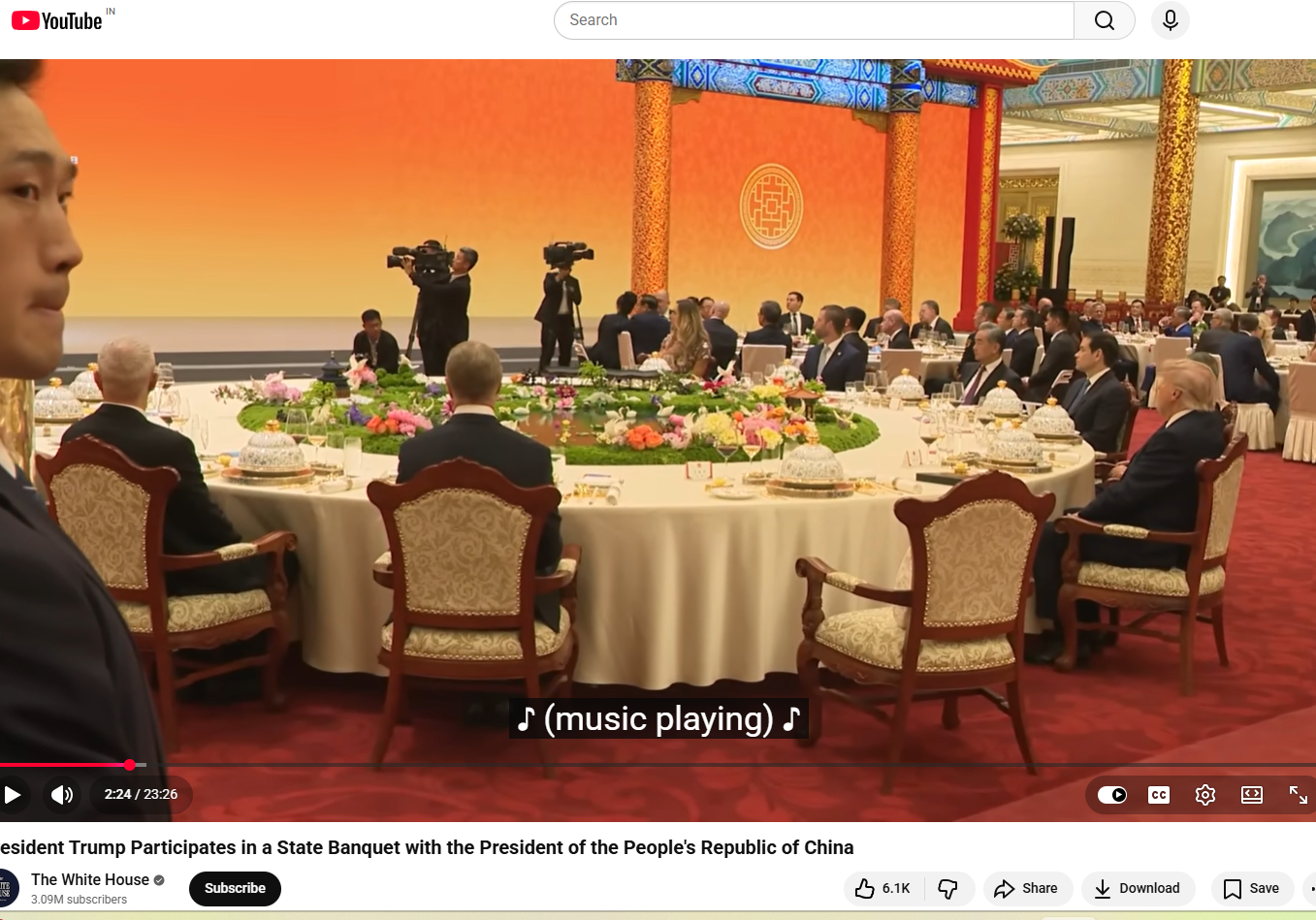

A longer version of the video, shared by NBC News on May 14, shows the state banquet held at the Great Hall of the People in Beijing. Around the 1-minute-50-second mark, Xi Jinping, seated to Trump’s left, gets up and walks to the podium. The viral clip follows shortly after, showing Trump opening the folder placed to his left and flipping through its pages.

The White House also uploaded the full footage on its official YouTube channel, showing wider, uninterrupted shots of the event. Around the two-minute mark, the announcer says, “And now a toast by President Xi,” after which Xi Jinping stands up. Immediately after, Trump is seen opening the folder on his left and reading from it.

Later in the video, around the 12-minute mark, when Xi returns to his seat, Trump is seen standing up, taking the folder with him to the podium, turning pages, and reading from it. The same sequence can also be seen in the NBC News footage at around 11 minutes and 50 seconds. This clearly indicates that the folder belonged to the U.S. President and not Xi Jinping, and that Trump was not peeking into any private notebook. Another key detail is the embossed emblem on the folder, which closely resembles the Seal of the President of the United States. The American bald eagle, the national bird of the United States, is clearly visible at the centre. A comparison between the viral screenshot and the official seal shows they are nearly identical.

Conclusion

The viral claim is misleading and taken out of context. A detailed review of the full footage, including official recordings from NBC News and the White House, clearly shows that the folder in question belonged to Donald Trump and not Chinese President Xi Jinping. At multiple points in the video, Trump is seen opening, handling, and reading from the same folder, including while Xi Jinping is away from his seat and later after he returns. The visual evidence from the event also supports this conclusion. The embossed seal on the folder matches the official Seal of the President of the United States, further confirming that it was part of Trump’s official briefing material and not any private document belonging to Xi Jinping. Taken together, the full sequence of events and official video sources make it clear that the viral narrative has been incorrectly framed. There is no evidence to suggest that Trump was peeking into Xi Jinping’s personal notebook.

Executive Summary

A graphic widely circulating on social media claims that Union Home Minister Amit Shah has warned, “A major crisis is coming; if possible, skip one meal a day.” The claim has been found to be false in a fact-check conducted by CyberPeace Research Wing. The research revealed that Amit Shah has not made any such statement.

Claim

A Facebook user shared the viral graphic on May 17, 2026, claiming that BJP leader and Home Minister Amit Shah issued a “warning” to the public, allegedly saying people should be prepared for a major crisis and consider skipping one meal a day. The post has been widely circulated on social media, drawing significant attention and discussion.

- https://www.facebook.com/photo.php?fbid=1509406197622070&set=pb.100056581115590.-2207520000&type=3

- https://archive.ph/Z9Tle

Fact Check

A keyword-based search on Google did not return any credible news reports supporting the claim. Further scrutiny of the official account of the Ministry of Home Affairs on X also found no mention or statement matching the viral claim.

A separate review of the official X account of Home Minister Amit Shah also did not show any such statement or post confirming the viral claim.

Conclusion

The viral claim is false. Union Home Minister Amit Shah has not made any such statement.

Executive Summary

Recent claims circulating on social media allege that an Indian Air Force MiG-29 fighter jet was shot down by Pakistani forces during "Operation Sindoor." These reports suggest the incident involved a jet crash attributed to hostile action. However, these assertions have been officially refuted. No credible evidence supports the existence of such an operation or the downing of an Indian aircraft as described. The Indian Air Force has not confirmed any such event, and the claim appears to be misinformation.

Claim

A social media rumor has been circulating, suggesting that an Indian Air Force MiG-29 fighter jet was shot down by the Pakistan Air Force during "Operation Sindoor." The claim is accompanied by images purported to show the wreckage of the aircraft.

Fact Check

The social media posts falsely claim that the Pakistan Air Force shot down an Indian Air Force MiG-29 during "Operation Sindoor." This claim has been confirmed to be untrue. The image being circulated is not related to any recent IAF operations and has previously been used in unrelated contexts. The content being shared is misleading and does not reflect any verified incident involving the Indian Air Force.

By extracting key frames from the video and running reverse image searches, we traced the original visuals, which were first published in 2024 and appear in news articles from The Hindu and Times of India.

According to those reports, a MiG-29 fighter jet of the Indian Air Force (IAF), engaged in a routine training mission, crashed near Barmer, Rajasthan, on Monday evening (September 2, 2024). Fortunately, the pilot ejected safely and escaped unscathed. The claim is therefore false and serves only to spread misinformation.

Conclusion

The claims regarding the downing of an Indian Air Force MiG-29 during "Operation Sindoor" are unfounded and lack any credible verification. The image being circulated is outdated and unrelated to current IAF operations. There has been no official confirmation of such an incident, and the narrative appears to be misleading. People are advised to rely on verified sources for accurate information regarding defence matters.

- Claim: Pakistan shot down an Indian fighter jet, a MiG-29

- Claimed On: Social Media

- Fact Check: False and Misleading

Executive Summary:

A widely circulated social media post claims that the Government of India has opened an account named Army Welfare Fund Battle Casualty at Canara Bank to support the modernization of the Indian Army and assist injured or martyred soldiers. According to the post, citizens can voluntarily contribute starting from ₹1, with no upper limit. The fund is said to have been launched on a suggestion by actor Akshay Kumar, which was later acknowledged by the Prime Minister of India through Mann Ki Baat and social media platforms. In fact, the cabinet has taken no such decision recently, and none has been officially announced.

Claim:

A viral social media post claims that the Government of India has launched a new initiative aimed at modernizing the Indian Army and supporting battle casualties through public donations. According to the post, a special bank account has been created to enable citizens to contribute directly toward the procurement of arms and equipment for the armed forces.

It further states that this initiative was introduced following a Cabinet decision and was inspired by a suggestion from Bollywood actor Akshay Kumar, which was reportedly acknowledged by the Prime Minister during his Mann Ki Baat address.

The post encourages individuals to donate any amount starting from ₹1, with no upper limit, and estimates that widespread public participation could generate up to ₹36,000 crore annually to support the armed forces. It also lists two bank accounts—one at Canara Bank (Account No: 90552010165915) and another at State Bank of India (Account No: 40650628094)—allegedly designated for the "Armed Forces Battle Casualties Welfare Fund."

The statement said, “The government established a range of welfare schemes for soldiers killed or disabled while undertaking military operations in recent combat. In 2020, the government established the 'Armed Forces Battle Casualty Welfare Fund (AFBCWF)', which is used to provide immediate financial assistance to families of soldiers, sailors and airmen who lose their lives or sustain grievous injury as a result of active military service.”

We also found a similar post from the past, which can be seen here.

Fact Check:

The Press Information Bureau (PIB) has responded to the viral post, stating that it is misleading and that the Government has not issued any message inviting public donations towards the modernisation of the Indian Army or the purchase of weapons. The only known official initiative by the Ministry of Defence is the "Armed Forces Battle Casualties Welfare Fund", which was set up to support the families of soldiers who have been martyred or grievously disabled in the line of duty, not to buy military equipment.

In addition, the bank account details mentioned in the viral post are false, and donations submitted to the account have been dishonoured.

The other false claim is that actor Akshay Kumar is promoting or heading this initiative. There is no official record or announcement of him leading or sponsoring it. That said, in 2017 Akshay Kumar encouraged public contributions of just one rupee per month to support the armed forces through a web portal called “Bharat Ke Veer”. The platform was developed in partnership with the Ministry of Home Affairs.

Citizens should rely only on official government sources and ignore misleading messages on social media platforms.

Conclusion:

The viral social media post suggesting that the Government of India has initiated a donation drive for the modernisation of the Indian Army and the purchase of weapons is misleading and inaccurate. According to the Press Information Bureau (PIB), no such initiative has been launched by the government, and the bank account details provided in the post are false, with reported cases of dishonoured transactions. The only legitimate initiative is the Armed Forces Battle Casualties Welfare Fund (AFBCWF), which provides financial assistance to the families of soldiers who are martyred or seriously injured in the line of duty. While actor Akshay Kumar played a key role in launching the Bharat Ke Veer portal in 2017 to support paramilitary personnel, he has no official connection to the viral claims.

- Claim: The government has launched a public donation message to fund Army weapon purchases.

- Claimed On: Social Media

- Fact Check: False and Misleading

Executive Summary:

A widely circulated claim on social media, including a post from the official X account of Pakistan, alleges that the Pakistan Air Force (PAF) carried out an airstrike on India, supported by a viral video. However, according to our research, the video used in these posts is actually footage from the video game Arma-3 and has no connection to any real-world military operation. The use of such misleading content contributes to the spread of false narratives about a conflict between India and Pakistan and has the potential to create unnecessary fear and confusion among the public.

Claim:

Viral social media posts, including one from the official Government of Pakistan X handle, claim that the PAF launched a successful airstrike against Indian military targets. The footage accompanying the claim shows jets firing missiles and explosions on the ground. The video is presented as recent and factual evidence of heightened military tensions.

Fact Check:

As per our research using reverse image search, the videos circulating online that claim to show Pakistan launching an attack on India under the name 'Operation Sindoor' are misleading. There is no credible evidence or reliable reporting to support the existence of any such operation. The Press Information Bureau (PIB) has also verified that the video being shared is false and misleading. During our research, we also came across footage from the video game Arma-3 on YouTube, which appears to have been repurposed to create the illusion of a real military conflict. This strongly indicates that fictional content is being used to propagate a false narrative. The likely intention behind this misinformation is to spread fear and confusion by portraying a conflict that never actually took place.

Conclusion:

The widely shared videos, including one posted from an official Pakistani X account, are being used to target India with false information. There is no reliable evidence to support the claim, and the videos are misleading and irrelevant. Such false information must be stopped right away because it has the potential to cause needless panic. According to authorities and fact-checking groups, no such operation is occurring.

- Claim: Viral social media posts claim PAF attack on India

- Claimed On: Social Media

- Fact Check: False and Misleading

Executive Summary:

A social media video claims that India's Udhampur Air Force Station was destroyed by Pakistan's JF-17 fighter jets. According to official sources, the Udhampur base is still fully operational, and our research proves that the video was produced by artificial intelligence. The growing problem of AI-driven disinformation in the digital age is highlighted by this incident.

Claim:

A viral video alleges that Pakistan's JF-17 fighter jets successfully destroyed the Udhampur Air Force Base in India. The footage shows aircraft engulfed in flames, accompanied by narration claiming the base's destruction during recent cross-border hostilities.

Fact Check:

The viral video's claim that Pakistani JF-17 fighter jets destroyed the Udhampur Air Force Station has been shown to be completely untrue. Analysis using AI detection tools such as Hive Moderation identified the audio and visuals in the video as AI-generated. Hive Moderation found synthetic elements in the footage, confirming that the images were altered to deceive viewers. The Press Information Bureau (PIB) of India further undermines the claim, having clearly declared that the Udhampur Airbase is still fully operational and has not been the scene of any such attack.

The deliberate misattribution of the video to a military attack reflects a growing concern seen in our analysis of recent disinformation campaigns: AI-generated content is being weaponized to spread misinformation and incite panic.

Conclusion:

It is untrue that the Udhampur Air Force Station was destroyed by Pakistan's JF-17 fighter jets. The claim rests on an AI-generated video that is presented as real footage of an attack. The Udhampur base is still intact and fully functional, according to official sources. This incident emphasizes how crucial it is to confirm information from reliable sources, particularly during periods of elevated geopolitical tension.

- Claim: Recent video footage shows destruction caused by Pakistani jets at the Udhampur Airbase.

- Claimed On: Social Media

- Fact Check: False and Misleading

Executive Summary:

A viral message is circulating claiming the Reserve Bank of India (RBI) has banned the use of black ink for writing cheques. This information is incorrect. The RBI has not issued any such directive, and cheques written in black ink remain valid and acceptable.

Claim:

The Reserve Bank of India (RBI) has issued new guidelines prohibiting using black ink for writing cheques. As per the claimed directive, cheques must now be written exclusively in blue or green ink.

Fact Check:

Upon thorough verification, it has been confirmed that the claim regarding the Reserve Bank of India (RBI) issuing a directive banning the use of black ink for writing cheques is entirely false. No such notification, guideline, or instruction has been released by the RBI in this regard. Cheques written in black ink remain valid, and the public is advised to disregard such unverified messages and rely only on official communications for accurate information.

As stated by the Press Information Bureau (PIB), this claim is false. The Reserve Bank of India has not prescribed specific ink colors to be used for writing cheques. Ink color is mentioned only in point number 8, which discusses the care customers should take while writing cheques, and it does not ban black ink.

Conclusion:

The claim that the Reserve Bank of India has banned the use of black ink for writing cheques is completely false. No such directive, rule, or guideline has been issued by the RBI. Cheques written in black ink are valid and acceptable. The RBI has not prescribed any specific ink color for writing cheques, and the public is advised to disregard unverified messages. While general precautions for filling out cheques are mentioned in RBI advisories, there is no restriction on the color of the ink. Always refer to official sources for accurate information.

- Claim: The new RBI ink guidelines are mandatory from a specified date.

- Claimed On: Social Media

- Fact Check: False and Misleading

Executive Summary:

Our research has determined that a widely circulated social media image purportedly showing astronaut Sunita Williams with U.S. President Donald Trump and entrepreneur Elon Musk following her return from space is AI-generated. There is no verifiable evidence to suggest that such a meeting took place or was officially announced. The image exhibits clear indicators of AI generation, including inconsistencies in facial features and unnatural detailing.

Claim:

It was claimed on social media that after returning to Earth from space, astronaut Sunita Williams met with U.S. President Donald Trump and Elon Musk, as shown in a circulated picture.

Fact Check:

Following a comprehensive analysis using Hive Moderation, the image has been verified as fake and AI-generated. Distinct signs of AI manipulation include unnatural skin texture, inconsistent lighting, and distorted facial features. Furthermore, no credible news sources or official reports substantiate or confirm such a meeting. The image is likely a digitally altered post designed to mislead viewers.

While reviewing the accounts that shared the image, we found that former Indian cricketer Manoj Tiwary had also posted the same image and a video of a space capsule returning, congratulating Sunita Williams on her homecoming. Notably, the image featured a Grok watermark in the bottom right corner, confirming that it was AI-generated.

Additionally, we discovered a post from Grok on X (formerly known as Twitter) featuring the watermark, stating that the image was likely AI-generated.

Conclusion:

As per our research, the viral image of Sunita Williams with Donald Trump and Elon Musk is AI-generated. Indicators such as unnatural facial features, lighting inconsistencies, and a Grok watermark suggest digital manipulation. No credible sources validate the meeting, and a post from Grok on X further supports this finding. This case underscores the need for careful verification before sharing online content to prevent the spread of misinformation.

- Claim: Sunita Williams met Donald Trump and Elon Musk after her space mission.

- Claimed On: Social Media

- Fact Check: False and Misleading

Executive Summary:

A viral video purportedly shows mass cheating during the UPSC Civil Services Exam conducted in Uttar Pradesh. The video claims to show students being filmed while copying answers. However, our research found that the incident happened during an LLB exam, not the UPSC Civil Services Exam. This is a case of misleading content being shared to spread misinformation.

Claim:

Mass cheating took place during the UPSC Civil Services Exam in Uttar Pradesh, as shown in a viral video.

Fact Check:

Upon careful verification, it has been established that the viral video being circulated does not depict the UPSC Civil Services Examination, but rather an incident of mass cheating during an LLB examination. Reputable media outlets, including Zee News and India Today, have confirmed that the footage is from a law exam and is unrelated to the UPSC.

The video in question was reportedly live-streamed by one of the LLB students during an exam held in February 2024 at City Law College in Lakshbar Bajha, located in the Safdarganj area of Barabanki, Uttar Pradesh.

The misleading attempt to associate this footage with the highly esteemed Civil Services Examination is not only factually incorrect but also unfairly casts doubt on a process that is known for its rigorous supervision and strict security protocols. It is crucial to verify the authenticity and context of such content before disseminating it, in order to uphold the integrity of our institutions and prevent unnecessary public concern.

Conclusion:

The viral video purportedly showing mass cheating during the UPSC Civil Services Examination in Uttar Pradesh is misleading and not genuine. Upon verification, the footage has been found to be from an LLB examination, not related to the UPSC in any manner. Spreading such misinformation not only undermines the credibility of a trusted examination system but also creates unwarranted panic among aspirants and the public. It is imperative to verify the authenticity of such claims before sharing them on social media platforms. Responsible dissemination of information is crucial to maintaining trust and integrity in public institutions.

- Claim: A viral video shows UPSC candidates copying answers.

- Claimed On: Social Media

- Fact Check: False and Misleading

Executive Summary:

A viral post currently circulating on various social media platforms claims that Reliance Jio is offering a ₹700 Holi gift to its users, accompanied by a link for individuals to claim the offer. This post has gained significant traction, with many users engaging with it in good faith, believing it to be a legitimate promotional offer. However, after careful investigation, it has been confirmed that this post is, in fact, a phishing scam designed to steal personal and financial information from unsuspecting users. This report seeks to examine the facts surrounding the viral claim, confirm its fraudulent nature, and provide recommendations to minimize the risk of falling victim to such scams.

Claim:

Reliance Jio is offering a ₹700 reward as part of a Holi promotional campaign, accessible through a shared link.

Fact Check:

Upon review, it has been verified that this claim is misleading. Reliance Jio has not announced any promotional deal for Holi at this time. The link being forwarded is a phishing scam intended to steal users' personal and financial details. There are no reports of this offer on Jio’s official website or verified social media accounts. The URL included in the message does not end in the official Jio domain, indicating a fake website. The website asks individuals for personal information, which could then be used for cybercrime. Additionally, we checked the link with the ScamAdviser website, which flagged it as suspicious and unsafe.
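The domain check described above can be sketched in a few lines of Python. This is a minimal illustration, not the method used in the fact-check: the function name `is_official_link` is hypothetical, and treating `jio.com` as the official domain is an assumption made for the example. The sketch compares the hostname of a link against an allowlist and accepts only exact matches or true subdomains, so look-alike hosts that merely contain the brand name are rejected.

```python
from urllib.parse import urlparse

# Assumption for illustration: jio.com is treated as the official domain.
OFFICIAL_DOMAINS = {"jio.com"}


def is_official_link(url: str, official_domains=OFFICIAL_DOMAINS) -> bool:
    """Return True only if the URL's host is an official domain or a subdomain of one."""
    # urlparse only fills in the hostname when the URL carries a scheme (https://...).
    host = (urlparse(url).hostname or "").lower().rstrip(".")
    # Require an exact match or a ".domain" suffix so that hosts such as
    # "jio.com.holi-gift.example" or "notjio.com" do not pass.
    return any(host == d or host.endswith("." + d) for d in official_domains)


print(is_official_link("https://www.jio.com/offers"))              # True
print(is_official_link("https://jio.com.holi-gift.example/claim"))  # False
```

A passing check only shows that the host matches the allowlist. It says nothing about whether the offer itself exists, which still has to be confirmed through the company's official channels.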

Conclusion:

The viral post claiming that Reliance Jio is offering a ₹700 Holi gift is a phishing scam. There is no legitimate offer from Jio, and the link provided leads to a fraudulent website designed to steal personal and financial information. Users are advised not to click on the link and to report any suspicious content. Always verify promotions through official channels to protect personal data from cybercriminal activities.

- Claim: Users can claim ₹700 by participating in Jio's Holi offer.

- Claimed On: Social Media

- Fact Check: False and Misleading

Executive Summary:

A viral claim circulating on social media suggests that the Indian government is offering a 50% subsidy on tractor purchases under the so-called "Kisan Tractor Yojana." However, our research reveals that the website promoting this scheme, allegedly under the Ministry of Agriculture and Farmers Welfare, is misleading. This report aims to inform readers about the deceptive nature of this claim and emphasize the importance of safeguarding personal information against fraudulent schemes.

Claim:

A website has been circulating misleading information, claiming that the Indian government is offering a 50% subsidy on tractor purchases under the so-called "Kisan Tractor Yojana." Additionally, a YouTube video promoting this scheme suggests that individuals can apply by submitting certain documents and paying a small, supposedly refundable application fee.

Fact Check:

Our research has confirmed that there is no scheme by the Government of India named 'PM Kisan Tractor Yojana.' The circulating announcement is false and appears to be an attempt to defraud farmers.

While the government does provide various agricultural subsidies under recognized schemes such as the PM Kisan Samman Nidhi and the Sub-Mission on Agricultural Mechanization (SMAM), no such initiative under the name 'PM Kisan Tractor Yojana' exists. This misleading claim is, therefore, a phishing attempt aimed at deceiving farmers and unlawfully collecting their personal or financial information.

Farmers and stakeholders are advised to rely only on official government sources for scheme-related information and to exercise caution against such deceptive practices.

To assess the authenticity of the “PM Kisan Tractor Yojana” claim, we reviewed the websites farmertractoryojana.in and tractoryojana.in. Our analysis revealed several inconsistencies, indicating that these websites are fraudulent.

As part of our verification process, we evaluated tractoryojana.in using Scam Detector to determine its trustworthiness. The results showed a low trust score, raising concerns about its legitimacy. Similarly, we conducted the same check for farmertractoryojana.in, which also appeared untrustworthy and risky. The detailed results of these assessments are attached below.

Given that these websites falsely present themselves as government-backed initiatives, our findings strongly suggest that they are part of a fraudulent scheme designed to mislead and exploit individuals seeking genuine agricultural subsidies.

During our research, we examined the "How it Works" section of the website, which outlines the application process for the alleged “PM Kisan Tractor Yojana.” Notably, applicants are required to pay a refundable application fee to proceed with their registration. It is important to emphasize that no legitimate government subsidy program requires applicants to pay a refundable application fee.

Our research found that the address listed on the website, “69A, Hanuman Road, Vile Parle East, Mumbai 400057,” is not associated with any government office or agricultural subsidy program. This further confirms the website’s fraudulent nature. Farmers should verify subsidy programs through official government sources to avoid scams.

A key inconsistency is the absence of a verified social media presence. Most legitimate government programs maintain official social media accounts for updates and communication. However, these websites fail to provide any such official handles, further casting doubt on their authenticity.

Upon attempting to log in, both websites redirect to the same page, suggesting they may be operated by the same entity or individual. This further raises concerns about their legitimacy and reinforces the likelihood of fraudulent activity.

Conclusion:

Our research confirms that the "PM Kisan Tractor Yojana" claim is fraudulent. No such government scheme exists, and the websites promoting it exhibit multiple red flags, including low trust scores, a misleading application process requiring a refundable fee, a false address, and the absence of an official social media presence. Additionally, both websites redirect to the same page, suggesting they are operated by the same entity. Farmers are advised to rely on official government sources to avoid falling victim to such scams.

- Claim: The government is offering a subsidy on tractors under the PM Kisan Tractor Yojana.

- Claimed On: Social Media

- Fact Check: False and Misleading

Executive Summary:

A video that has gone viral on Facebook claims that Union Finance Minister Nirmala Sitharaman endorsed the government’s new investment project. The video has been widely shared. However, our research indicates that the video has been altered using AI and is being used to spread misinformation.

Claim:

The claim in this video suggests that Finance Minister Nirmala Sitharaman is endorsing an automotive system that promises daily earnings of ₹15,00,000 with an initial investment of ₹21,000.

Fact Check:

To check the genuineness of the claim, we ran a keyword search for “Nirmala Sitharaman investment program” but did not find any such investment scheme. We observed that the lip movements appeared unnatural and did not align with the speech, leading us to suspect that the video may have been AI-manipulated.

A reverse search of the video led us to this DD News live-stream of Sitharaman’s press conference after presenting the Union Budget on February 1, 2025. Sitharaman never mentioned any investment or trading platform during the press conference, showing that the viral video was digitally altered. Technical analysis using Hive Moderation further found that the viral clip was manipulated through voice cloning.
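As a rough sanity check on the figures quoted in the claim, the implied return can be worked out directly. The sketch below is only illustrative arithmetic: the function name `implied_daily_return` is hypothetical, and it assumes the ₹15,00,000 daily earnings are measured against the one-time ₹21,000 investment.

```python
def implied_daily_return(investment_inr: float, daily_earnings_inr: float) -> float:
    """Daily earnings expressed as a multiple of the initial investment."""
    return daily_earnings_inr / investment_inr


# Figures quoted in the viral claim: Rs 21,000 invested, Rs 15,00,000 (15 lakh) earned per day.
multiple = implied_daily_return(21_000, 1_500_000)
print(f"{multiple:.1f}x per day, about {multiple * 100:.0f}% daily")
# prints: 71.4x per day, about 7143% daily
```

A promised return of roughly 71 times the investment every day is far outside anything a legitimate investment product offers, which is itself a strong signal of a scam.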

Conclusion:

The viral video on social media that shows Union Finance Minister Nirmala Sitharaman endorsing the government’s new investment project is voice-cloned, manipulated, and false. This highlights the risk of online manipulation, making it crucial to verify news with credible sources before sharing it. With the growing risk of AI-generated misinformation, promoting media literacy is essential in the fight against false information.

- Claim: Fake video falsely claims FM Nirmala Sitharaman endorsed an investment scheme.

- Claimed On: Social Media

- Fact Check: False and Misleading

Executive Summary:

A misleading video claiming to show fireworks at Dubai International Cricket Stadium following India’s 2025 ICC Champions Trophy win has gone viral, causing confusion among viewers. Our investigation confirms that the video is unrelated to the cricket tournament. It actually depicts the fireworks display from the December 2024 Arabian Gulf Cup opening ceremony at Kuwait’s Jaber Al-Ahmad Stadium. This incident underscores the rapid spread of outdated or misattributed content, particularly in relation to significant sports events, and highlights the need for vigilance in verifying such claims.

Claim:

The circulated video claims fireworks and a drone display at Dubai International Cricket Stadium after India's win in the ICC Champions Trophy 2025.

Fact Check:

A reverse image search of the most prominent keyframes in the viral video traced it back to the opening ceremony of the 26th Arabian Gulf Cup, which was held at Jaber Al-Ahmad International Stadium in Kuwait on December 21, 2024. The fireworks seen in the video match the imagery from this event. A closer look at the architecture of the stadium also confirms that the venue is not Dubai International Cricket Stadium, as asserted. Official sources and media outlets further verify that there was no such fireworks celebration in Dubai after India's ICC Champions Trophy 2025 win. The video has therefore been misattributed and shared with incorrect context.

Fig: Claimed Stadium Picture

Conclusion:

A viral video claiming to show fireworks at Dubai International Cricket Stadium after India's 2025 ICC Champions Trophy win is misleading. Our research confirms the video is from the December 2024 Arabian Gulf Cup opening ceremony at Kuwait’s Jaber Al-Ahmad Stadium. A reverse image search and architectural analysis of the stadium debunk the claim, with official sources verifying no such celebration took place in Dubai. The video has been misattributed and shared out of context.

- Claim: Fireworks in Dubai celebrate India’s Champions Trophy win.

- Claimed On: Social Media

- Fact Check: False and Misleading

Executive Summary:

We have identified a post addressing a scam email that falsely claims to offer a download link for an e-PAN Card. This deceptive email is designed to mislead recipients into disclosing sensitive financial information by impersonating official communication from Income Tax Department authorities. Our report aims to raise awareness about this fraudulent scheme and emphasize the importance of safeguarding personal data against such cyber threats.

Claim:

Scammers are sending fake emails, asking people to download their e-PAN cards. These emails pretend to be from government authorities like the Income Tax Department and contain harmful links that can steal personal information or infect devices with malware.

Fact Check:

Through our research, we have found that scammers are sending fake emails, posing as the Income Tax Department, to trick users into downloading e-PAN cards from unofficial links. These emails contain malicious links that can lead to phishing attacks or malware infections. Genuine e-PAN services are only available through official platforms such as the Income Tax Department's website (www.incometaxindia.gov.in) and the NSDL/UTIITSL portals. Despite repeated warnings, many individuals still fall victim to such scams. To combat this, the Income Tax Department has a dedicated page for reporting phishing attempts: Report Phishing - Income Tax India. It is crucial for users to stay cautious, verify email authenticity, and avoid clicking on suspicious links to protect their personal information.

Conclusion:

The emails currently in circulation claiming to provide e-PAN card downloads are fraudulent and should not be trusted. These deceptive messages often impersonate government authorities and contain malicious links that can result in identity theft or financial fraud. Clicking on such links may compromise sensitive personal information, putting individuals at serious risk. To ensure security, users are strongly advised to verify any such communication directly through official government websites and avoid engaging with unverified sources. Additionally, any phishing attempts should be reported to the Income Tax Department and also to the National Cyber Crime Reporting Portal to help prevent the spread of such scams. Staying vigilant and exercising caution when handling unsolicited emails is crucial in safeguarding personal and financial data.

- Claim: Fake emails claim to offer e-PAN card downloads.

- Claimed On: Social Media

- Fact Check: False and Misleading