#Fact Check: Pakistan’s Airstrike Claim Uses Video Game Footage

Executive Summary:

A widely circulated claim on social media, including a post from the official X account of Pakistan, alleges that the Pakistan Air Force (PAF) carried out an airstrike on India, supported by a viral video. However, according to our research, the video used in these posts is actually footage from the video game Arma-3 and has no connection to any real-world military operation. The use of such misleading content contributes to the spread of false narratives about a conflict between India and Pakistan and has the potential to create unnecessary fear and confusion among the public.

Claim:

Viral social media posts, including one from the official Government of Pakistan X handle, claim that the PAF launched a successful airstrike against Indian military targets. The footage accompanying the claim shows jets firing missiles and explosions on the ground. The video is presented as recent and factual evidence of heightened military tensions.

Fact Check:

As per our research using reverse image search, the videos circulating online that claim to show Pakistan launching an attack on India under the name 'Operation Sindoor' are misleading. There is no credible evidence or reliable reporting to support the existence of any such operation. The Press Information Bureau (PIB) has also verified that the video being shared is false and misleading. During our research, we also came across footage from the video game Arma-3 on YouTube, which appears to have been repurposed to create the illusion of a real military conflict. This strongly indicates that fictional content is being used to propagate a false narrative. The likely intention behind this misinformation is to spread fear and confusion by portraying a conflict that never actually took place.

Conclusion:

Pakistan is using widely shared misinformation videos to target India with false information. There is no reliable evidence to support the claim, and the videos are misleading and unrelated to any real event. Such false information should be countered promptly because it can cause needless panic. According to authorities and fact-checking groups, no such operation is occurring.

- Claim: Viral social media posts claim PAF attack on India

- Claimed On: Social Media

- Fact Check: False and Misleading

Related Blogs

Introduction

As the sun rises on the Indian subcontinent, a nation teeters on the precipice of a democratic exercise of colossal magnitude. The Lok Sabha elections, held every five years and mobilising the will of over a billion souls, are not just a testament to the robustness of India's democratic fabric but also a crucible where the veracity of information is put to the sternest of tests. In this context, the World Economic Forum's 'Global Risks Report 2024' emerges as a harbinger of a disconcerting trend: the spectre of misinformation and disinformation that threatens to distort the electoral landscape.

The report, a carefully crafted document that shares the insights of 1,490 experts from across academia, government, business and civil society, paints a tableau of the global risks that loom large over the next decade. These risks, spawned by the churning cauldron of rapid technological change, economic uncertainty, a warming planet, and simmering conflict, are not just abstract threats but tangible realities that could shape the future of nations.

India’s Electoral Malice

India, as it strides towards the general elections scheduled in the spring of 2024, finds itself in the vortex of this hailstorm. The WEF survey positions India at the zenith of vulnerability to disinformation and misinformation, a dubious distinction that underscores the challenges facing the world's largest democracy. The report depicts misinformation and disinformation as the chimaeras of false information—whether inadvertent or deliberate—that are dispersed through the arteries of media networks, skewing public opinion towards a pervasive distrust in facts and authority. This encompasses a panoply of deceptive content: fabricated, false, manipulated and imposter.

The United States, the European Union, and the United Kingdom, too, are ensnared in this web, facing varying degrees of misinformation. South Africa, another nation on the cusp of its own electoral journey, is ranked 22nd, a reflection of the global reach of this phenomenon. The findings, derived from a survey conducted over the autumnal weeks of September to October 2023, reveal a world grappling with the shadowy forces of untruth.

Global Scenario

The report prognosticates that as close to three billion individuals across diverse economies—Bangladesh, India, Indonesia, Mexico, Pakistan, the United Kingdom, and the United States—prepare to exercise their electoral rights, the rampant use of misinformation and disinformation, and the tools that propagate them, could erode the legitimacy of the governments they elect. The repercussions could be dire, ranging from violent protests and hate crimes to civil confrontation and terrorism.

Beyond the electoral arena, the fabric of reality itself is at risk of becoming increasingly polarised, seeping into the public discourse on issues as varied as public health and social justice. As the bedrock of truth is undermined, the spectre of domestic propaganda and censorship looms large, potentially empowering governments to wield control over information based on their own interpretation of 'truth.'

The report further warns that disinformation will become increasingly personalised and targeted, homing in on specific groups such as minority communities and spreading through more opaque messaging platforms like WhatsApp or WeChat. This tailored approach to deception signifies a new frontier in the battle against misinformation.

In a world where societal polarisation and economic downturn are seen as central risks in an interconnected 'risks network,' misinformation and disinformation have ascended rapidly to the top of the threat hierarchy. The report's respondents—two-thirds of them—cite extreme weather, AI-generated misinformation and disinformation, and societal and/or political polarisation as the most pressing global risks, followed closely by the 'cost-of-living crisis,' 'cyberattacks,' and 'economic downturn.'

Current Situation

In this unprecedented year for elections, the spectre of false information looms as one of the major threats to the global populace, according to the experts surveyed for the WEF's 2024 Global Risk Report. The report offers a nuanced analysis of the degrees to which misinformation and disinformation are perceived as problems for a selection of countries over the next two years, based on a ranking of 34 economic, environmental, geopolitical, societal, and technological risks.

India, the land of ancient wisdom and modern innovation, stands at the crossroads where the risk of disinformation and misinformation is ranked highest. Out of all the risks, these twin scourges were most frequently selected as the number one risk for the country by the experts, eclipsing infectious diseases, illicit economic activity, inequality, and labor shortages. The South Asian nation's next general election, set to unfurl between April and May 2024, will be a litmus test for its 1.4 billion people.

The spectre of fake news is not a novel adversary for India. The 2019 election was rife with misinformation, with reports of political parties weaponising platforms like WhatsApp and Facebook to spread incendiary messages, stoking fears that online vitriol could spill over into real-world violence. The COVID-19 pandemic further exacerbated the issue, with misinformation once again proliferating through WhatsApp.

Other countries facing a high risk of the impacts of misinformation and disinformation include El Salvador, Saudi Arabia, Pakistan, Romania, Ireland, Czechia, the United States, Sierra Leone, France, and Finland, all of which consider the threat to be one of the top six most dangerous risks out of 34 in the coming two years. In the United Kingdom, misinformation/disinformation is ranked 11th among perceived threats.

The WEF analysts conclude that the presence of misinformation and disinformation in these electoral processes could seriously destabilise the real and perceived legitimacy of newly elected governments, risking political unrest, violence, and terrorism, and a longer-term erosion of democratic processes.

The World Economic Forum's 'Global Risks Report 2024' ranks India first in the world for the risk of misinformation and disinformation, in a year when the country holds general elections. The report, the 19th edition of the series, was released in early January together with the Global Risks Perception Survey. It sets out the varying degrees to which misinformation and disinformation are rated as problems for a selection of analysed countries over the next two years, based on a ranking of 34 economic, environmental, geopolitical, societal, and technological risks.

Some governments and platforms aiming to protect free speech and civil liberties may fail to act effectively to curb falsified information and harmful content, making the definition of 'truth' increasingly contentious across societies. State and non-state actors alike may leverage false information to widen fractures in societal views, erode public confidence in political institutions, and threaten national cohesion and coherence.

Trust in specific leaders will confer trust in information, and the authority of these actors—from conspiracy theorists, including politicians, and extremist groups to influencers and business leaders—could be amplified as they become arbiters of truth.

False information could not only be used as a source of societal disruption but also of control by domestic actors in pursuit of political agendas. The erosion of political checks and balances and the growth in tools that spread and control information could amplify the efficacy of domestic disinformation over the next two years.

Global internet freedom is already in decline, and access to more comprehensive sets of information has dropped in numerous countries. The implication: Falls in press freedoms in recent years and a related lack of strong investigative media are significant vulnerabilities set to grow.

Advisory

Here are specific best practices for citizens to help prevent the spread of misinformation during electoral processes:

- Verify Information: Double-check the accuracy of information before sharing it. Use reliable sources and fact-checking websites to verify claims.

- Cross-Check Multiple Sources: Consult multiple reputable news sources to ensure that the information is consistent across different platforms.

- Be Wary of Social Media: Social media platforms are susceptible to misinformation. Be cautious about sharing or believing information solely based on social media posts.

- Check Dates and Context: Ensure that information is current and consider the context in which it is presented. Misinformation often thrives when details are taken out of context.

- Promote Media Literacy: Educate yourself and others on media literacy to discern reliable sources from unreliable ones. Be skeptical of sensational headlines and clickbait.

- Report False Information: Report instances of misinformation to the platform hosting the content and encourage others to do the same. Utilise fact-checking organisations or tools to report and debunk false information.

- Critical Thinking: Foster critical thinking skills among your community members. Encourage them to question information and think critically before accepting or sharing it.

- Share Official Information: Share official statements and information from reputable sources, such as government election commissions, to ensure accuracy.

- Avoid Echo Chambers: Engage with diverse sources of information to avoid being in an 'echo chamber' where misinformation can thrive.

- Be Responsible in Sharing: Before sharing information, consider the potential impact it may have. Refrain from sharing unverified or sensational content that can contribute to misinformation.

- Promote Open Dialogue: Promote open discussions within your community about the significance of factual information and the dangers of misinformation.

- Stay Calm and Informed: During critical periods, such as election days, stay calm and rely on official sources for updates. Avoid spreading unverified information that can contribute to panic or confusion.

- Support Media Literacy Programs: Promote media literacy programs in schools to give individuals the essential skills to navigate the sea of information.

Conclusion

Preventing misinformation requires a collective effort from individuals, communities, and platforms. By adopting these best practices, citizens can play a vital role in reducing the impact of misinformation during electoral processes.

References:

- https://thewire.in/media/survey-finds-false-information-risk-highest-in-india

- https://thesouthfirst.com/pti/india-faces-highest-risk-of-disinformation-in-general-elections-world-economic-forum/

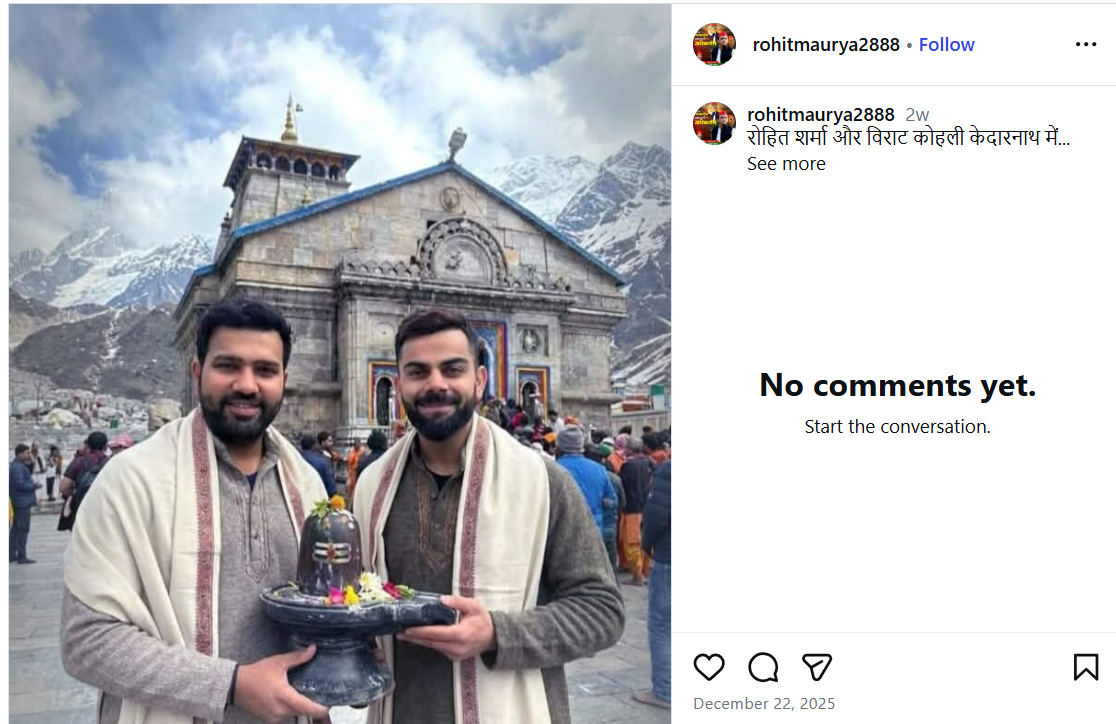

A photo featuring Indian cricketers Virat Kohli and Rohit Sharma is being widely shared on social media. In the image, both players are seen holding a Shivling, with the Kedarnath temple visible in the background. Users sharing the image claim that Virat Kohli and Rohit Sharma recently visited Kedarnath.

However, CyberPeace Foundation’s investigation found the claim to be false. Our verification established that the viral image is not real but has been created using Artificial Intelligence (AI) and is being circulated with a misleading narrative.

The Claim

An Instagram user shared the viral image on December 22, 2025, with the caption stating that Rohit Sharma and Virat Kohli are in Kedarnath. The post has since been widely reshared by other users, who assumed the image to be authentic.

Fact Check

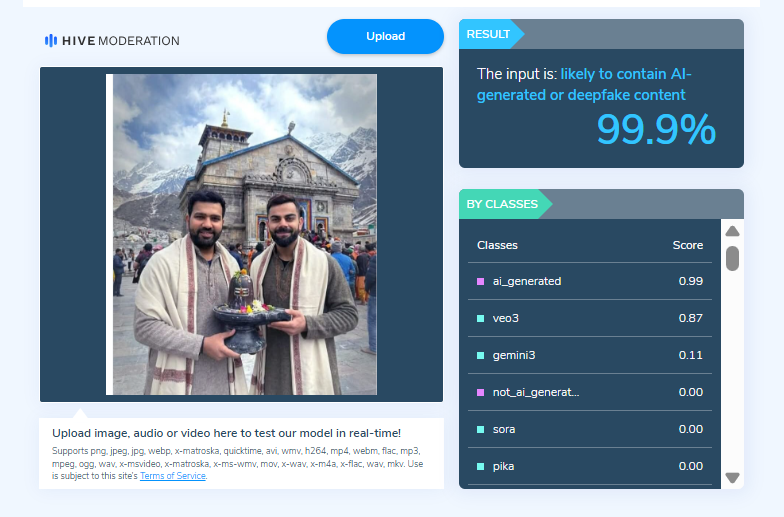

On closely examining the viral image, the Desk noticed visual inconsistencies suggesting that it may be AI-generated. To verify this, the image was scanned using the AI detection tool HIVE Moderation. According to the results, the image was found to be 99 per cent AI-generated.

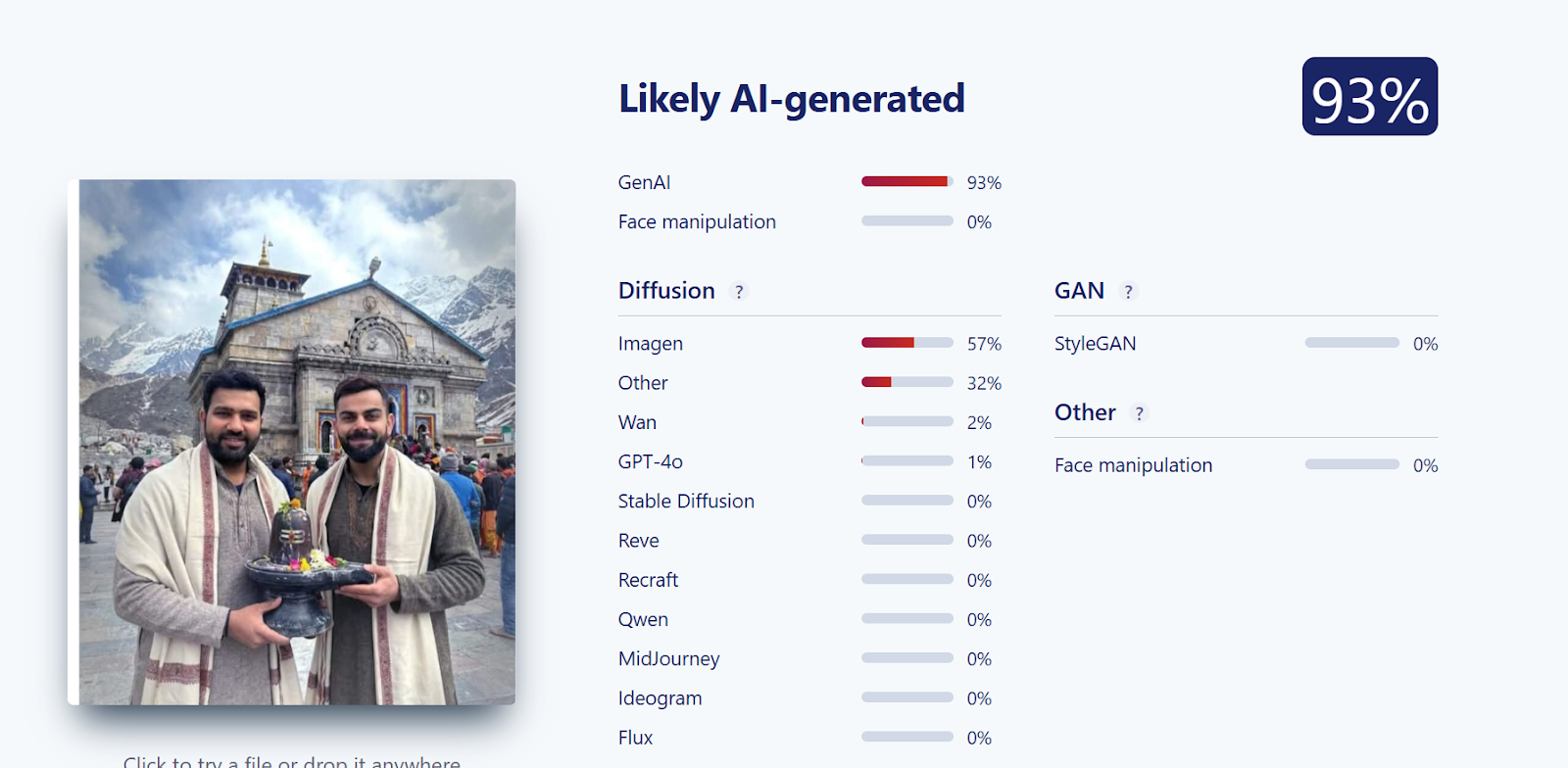

Further verification was conducted using another AI detection tool, Sightengine. The analysis revealed that the image was 93 per cent likely to be AI-generated, reinforcing the findings from the previous tool.
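The two-tool cross-check described above can be expressed as a simple decision rule. The sketch below is a hypothetical illustration, not the Desk's actual workflow: it works on scores that have already been read off each tool (it does not call Hive Moderation or Sightengine), and the function name and the 0.90 threshold are assumptions chosen for the example.

```python
from typing import Mapping


def all_detectors_agree(scores: Mapping[str, float], threshold: float = 0.90) -> bool:
    """Return True only if every detector's AI-likelihood score meets the threshold.

    `scores` maps a detector name to a score between 0 and 1.
    The threshold is an illustrative assumption, not a published standard.
    """
    if not scores:
        raise ValueError("at least one detector score is required")
    for name, score in scores.items():
        if not 0.0 <= score <= 1.0:
            raise ValueError(f"{name}: score must be between 0 and 1")
    return all(score >= threshold for score in scores.values())


if __name__ == "__main__":
    # Scores reported in this fact check, expressed as fractions.
    reported = {"Hive Moderation": 0.99, "Sightengine": 0.93}
    print(all_detectors_agree(reported))
```

Requiring agreement between independent tools is a conservative choice: a single high score is treated as a lead to investigate rather than as a verdict.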

Conclusion

CyberPeace Foundation’s research confirms that the viral image claiming Virat Kohli and Rohit Sharma visited Kedarnath is fabricated. The image has been generated using AI technology and is being falsely shared on social media as a real photograph.

Introduction

In an era of digital trust and technological innovation, artificial intelligence has added a new dimension to how people communicate and how they create and consume content. However, like any powerful tool, AI can lead to terrible consequences when misused. One recent example is a cybercrime in Brazil: a sophisticated online scam that used deepfake technology to impersonate globally known celebrities, including supermodel Gisele Bündchen, in misleading Instagram ads. The scheme brought in millions of reais and highlights the concern that generative AI content is increasingly serving criminals.

Scam in Motion

According to Brazil's federal police, the scheme has been in circulation since 2024, with ads that used AI-generated video and images to appear genuine. The ads showed Gisele Bündchen and other celebrities endorsing skincare products, promotional giveaways, or time-limited discounts. Victims were tricked into making small payments, mostly under 100 reais (about $19), for these fake products, or were lured into paying "shipping costs" for prizes that never arrived.

The criminals scaled up the scheme by taking only a small amount from each victim, an approach investigators called "statistical immunity". Because each victim lost only a few dollars, most did not bother to file a complaint, which allowed the group to keep operating. Over time, authorities estimated that the group had gathered over 20 million reais ($3.9 million) through this elaborate con.
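The scale implied by these figures can be checked with some quick arithmetic. The sketch below is illustrative only: it assumes every payment was at the 100-reais level mentioned earlier, which gives a rough lower bound on the number of payments, and it derives the exchange rate implied by the article's own dollar conversion. The function names are hypothetical.

```python
# Figures quoted in the article.
TOTAL_REAIS = 20_000_000     # estimated total proceeds in reais
TOTAL_USD = 3_900_000        # the article's dollar conversion of that total
MAX_PAYMENT_REAIS = 100      # most individual payments were below this amount


def min_payments(total: int, max_payment: int) -> int:
    """Fewest payments needed to reach `total` if none exceeds `max_payment`.

    Uses ceiling division so that a partial final payment still counts.
    """
    return -(-total // max_payment)


def implied_rate(usd: float, reais: float) -> float:
    """US dollars per real implied by a pair of quoted amounts."""
    return usd / reais


if __name__ == "__main__":
    print(min_payments(TOTAL_REAIS, MAX_PAYMENT_REAIS))
    print(round(implied_rate(TOTAL_USD, TOTAL_REAIS), 3))
```

Under that assumption, the total corresponds to at least 200,000 separate payments, which illustrates why so few individual complaints could coexist with such a large overall loss.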

The scam came to light when a victim reported that an Instagram advertisement featuring a deepfake video of Gisele Bündchen was false. The video, which appeared to show Gisele recommending a skincare company, was a particularly well-produced fake. Further investigation uncovered a whole network of deceptive social media pages, payment gateways, and laundering channels spread over five states in Brazil.

The Role of AI and Deepfakes in Modern Fraud

This is one of the first large-scale cases in Brazil in which AI-generated deepfakes have been used to perpetrate financial fraud. Deepfake technology, aided by machine learning algorithms, can realistically mimic human appearance and speech and has become increasingly accessible and sophisticated. Whereas a level of expertise and computing resources was once needed, an online tool or app now suffices.

Deepfakes give criminals a psychological advantage: audiences are more willing to accept an ad as genuine when they see a familiar and trusted face, a celebrity known for integrity and success. The human brain is wired to trust certain visual cues, and deepfakes exploit this cognitive bias. Unlike phishing emails brimming with spelling and grammatical errors, deepfake videos are immersive, emotional, and visually convincing.

This is the growing terrain: AI-enabled misinformation. From financial scams to political propaganda, manipulated media is killing trust in the digital ecosystem.

Legalities and Platform Accountability

The Brazilian government has taken a proactive stance on the issue. In June 2025, the country's Supreme Court held that social media platforms could be held liable for failing to expeditiously remove criminal content, even in the absence of a formal court order. The judgment is expected to go a long way in shaping platform accountability in Brazil, and potentially worldwide, as jurisdictions adopt processes to deal with AI-generated fraud.

Meta, the parent company of Instagram, has said its policies forbid "ads that deceptively use public figures to scam people." Meta claims to use advanced detection mechanisms, trained review teams, and user tools to report violations. The persistence of such scams shows that enforcement mechanisms still lag behind the pace and scale of AI-based deception.

Why These Scams Succeed

There are many reasons for the success of these AI-powered scams.

- Trust Due to Familiarity: Human beings tend to believe anything put forth by a known individual.

- Micro-Fraud: Keeping the amount taken from each victim small keeps the number of complaints about these crimes low.

- Speed To Create Content: Using AI tools, criminals generate new ads faster than platforms can review and remove them.

- Cross-Platform Propagation: Once a deepfake ad starts gaining traction, it is reshared on various other social networking platforms, worsening the problem.

- Absence of Public Awareness: Most users still cannot discern manipulated media, especially when high-quality deepfakes come into play.

Wider Implications on Cybersecurity and Society

The Brazilian case is but a microcosm of a much bigger problem. With deepfake technology evolving, AI-generated deception threatens not only individuals but also institutions, markets, and democratic systems. From investment scams and fake charters to synthetic IDs for corporate fraud, the possibilities for abuse are endless.

Moreover, as cybercriminals adopt generative AI, law enforcement faces obstacles in attribution, evidence validation, and digital forensics. Telling what is real from what is manipulated now calls for forensic AI models, and their deployment prompts attackers to improve their own tools in turn, fuelling a technological arms race between the two sides.

Protecting Citizens from AI-Powered Scams

Public awareness remains the best defence against such scams. Gisele Bündchen's team encouraged members of the public to verify any advertisement through official brand or celebrity channels before engaging with it. Consumers need to be wary of offers that appear "too good to be true" and double-check the URL for authenticity before sharing any kind of personal information.

At the individual level, a few simple steps go a long way towards reducing the risk:

- Verify an advertisement's origin before clicking or sharing it

- Never share any monetary or sensitive personal information through an unverifiable link

- Enable two-factor authentication on all your social accounts

- Periodically check transaction history for any unusual activity

- Report any deepfake or fraudulent advertisement immediately to the platform or cybercrime authorities
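The advice to double-check a link before sharing personal information can be partly automated. The sketch below is a minimal illustration under stated assumptions: the allowlist is hypothetical and would need to be filled with domains confirmed through official brand or celebrity channels, and the function name is invented for this example. It checks only the scheme and hostname, so it cannot prove that a page is trustworthy.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: replace with domains confirmed through official channels.
OFFICIAL_DOMAINS = frozenset({"example.com"})


def is_official_link(url: str, official_domains=OFFICIAL_DOMAINS) -> bool:
    """Return True if `url` uses HTTPS and its host is an official domain
    or a subdomain of one. Lookalike hosts are rejected."""
    try:
        parts = urlsplit(url)
        host = (parts.hostname or "").lower().rstrip(".")
    except ValueError:
        # Malformed URLs are treated as untrusted.
        return False
    if parts.scheme != "https" or not host:
        return False
    return any(host == d or host.endswith("." + d) for d in official_domains)


if __name__ == "__main__":
    for link in (
        "https://shop.example.com/offer",
        "https://example.com.prize-claim.io/offer",
        "http://example.com/offer",
    ):
        print(link, is_official_link(link))
```

Matching on the full hostname rather than searching for the brand name anywhere in the URL is deliberate: scam links often place a trusted name at the start of an unrelated domain.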

Collaboration will be the way ahead for governments and technology companies. Investing in AI-based detection systems, cooperating on international law enforcement, and building capacity for digital literacy programs will enable us to stem this rising tide of synthetic media scams.

Conclusion

The deepfake case in Brazil involving Gisele Bündchen is a clarion call for citizens and legislators alike. It shows how cybercrime has evolved to profit from the very AI technologies that were once hailed for innovation and creativity. In this new digital frontier, the line between authenticity and manipulation grows fainter by the day.

While public safety in this new environment will still require strong cybersecurity measures, it will demand equal measures of vigilance, awareness, and ethical responsibility. Deepfakes are not only a technology problem but a societal one, calling for global cooperation, media literacy, and accountability at every level of the digital ecosystem.