#FactCheck-Fake Video of Mass Cheating at UPSC Exam Circulates Online

Executive Summary:

A video that has gone viral purportedly shows mass cheating during the UPSC Civil Services Exam conducted in Uttar Pradesh. It claims to show students copying answers on camera. However, our research found that the incident took place during an LLB exam, not the UPSC Civil Services Exam. This is a case of misleading content being shared to spread misinformation.

Claim:

Mass cheating took place during the UPSC Civil Services Exam in Uttar Pradesh, as shown in a viral video.

Fact Check:

Upon careful verification, it has been established that the viral video being circulated does not depict the UPSC Civil Services Examination, but rather an incident of mass cheating during an LLB examination. Reputable media outlets, including Zee News and India Today, have confirmed that the footage is from a law exam and is unrelated to the UPSC.

The video in question was reportedly live-streamed by one of the LLB students during the examination, which was held in February 2024 at City Law College in Lakshbar Bajha, located in the Safdarganj area of Barabanki, Uttar Pradesh.

The misleading attempt to associate this footage with the highly esteemed Civil Services Examination is not only factually incorrect but also unfairly casts doubt on a process that is known for its rigorous supervision and strict security protocols. It is crucial to verify the authenticity and context of such content before disseminating it, in order to uphold the integrity of our institutions and prevent unnecessary public concern.

Conclusion:

The viral video purportedly showing mass cheating during the UPSC Civil Services Examination in Uttar Pradesh is misleading and not genuine. Upon verification, the footage has been found to be from an LLB examination, not related to the UPSC in any manner. Spreading such misinformation not only undermines the credibility of a trusted examination system but also creates unwarranted panic among aspirants and the public. It is imperative to verify the authenticity of such claims before sharing them on social media platforms. Responsible dissemination of information is crucial to maintaining trust and integrity in public institutions.

- Claim: A viral video shows UPSC candidates copying answers.

- Claimed On: Social Media

- Fact Check: False and Misleading

Related Blogs

Executive Summary

In the Philippines, social media users have been sharing a video and an image following complaints over sharp increases in electricity bills. The posts claim that, in exchange for higher electricity charges, low-income households are being provided free electricity and air conditioning units. However, a fact-check by CyberPeace Research Wing found no evidence to support these claims. A closer examination of the visuals revealed several inconsistencies and visual indicators suggesting that the content is likely AI-generated.

Claim

An image was also shared on Instagram on April 29, showing purported beneficiaries holding new air conditioning units allegedly provided “free by the government.”

Fact Check

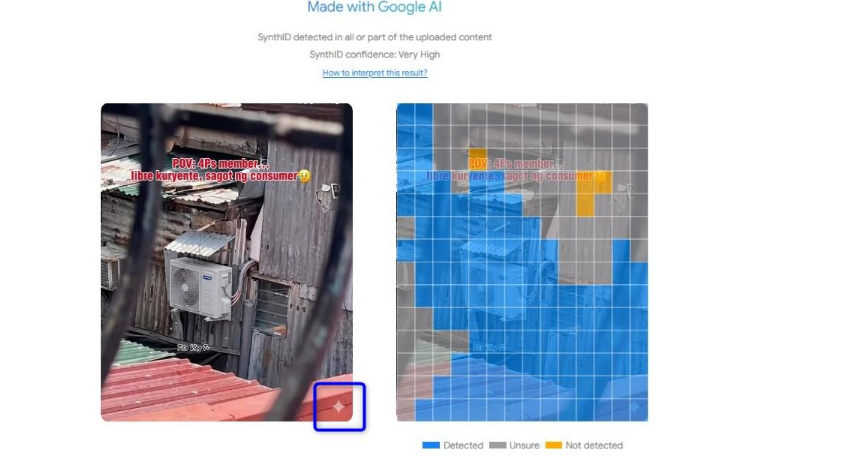

However, the video and image circulating on social media do not actually show beneficiaries receiving any subsidy. A closer analysis of the visuals indicates that the content is AI-generated. In the initial frames of the misrepresented video, a diamond-shaped icon can be seen in the bottom-right corner, which is the watermark associated with Google’s Gemini AI model.

Further analysis using Google’s SynthID Detector, a tool designed to identify AI-generated content, indicated with a “very high” level of confidence that the material was created using the company’s AI technology.

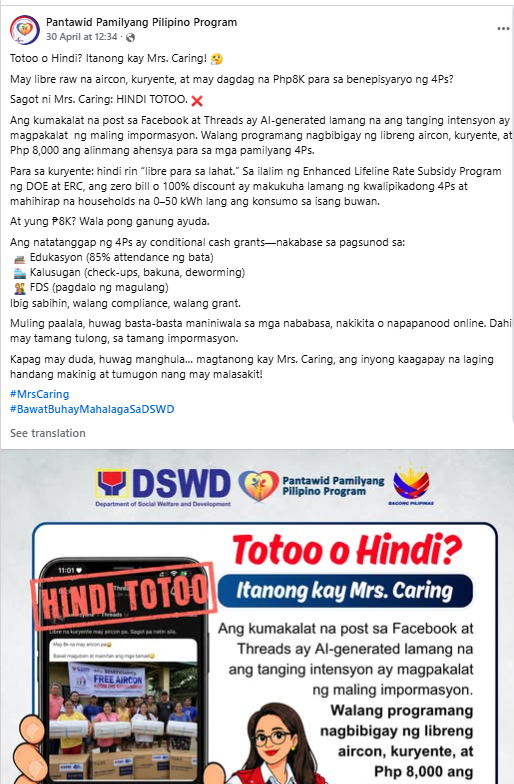

The country’s social welfare agency also issued a statement on Facebook on April 30, rejecting the claim and saying it is “not true” and intended only to “propagate wrong information.”

Social welfare agency statement on Facebook:

- https://www.facebook.com/photo?fbid=1373756198132587&set=a.665386155636265

Conclusion

The viral video and image are misleading. Multiple inconsistencies and visual cues suggest that the content is likely AI-generated, and the claim that low-income households are receiving free electricity and air conditioners is false.

Introduction

In the rapidly evolving landscape of cyber threats, a novel menace has surfaced: the concept of "digital arrest". Impostors posing as law enforcement officers deceive victims into believing that their bank account, SIM card, Aadhaar card, or bank card has been used unlawfully, and then coerce them into paying money. Digital arrest involves the virtual restraint of individuals. The restraint can range from restricted access to accounts and digital platforms, to measures that prevent further digital activity, to being held or monitored on a video call. In an era of digitisation, where technology is growing at an exponential pace, wrongdoers are exploiting existing loopholes, which has given rise to this sinister trend known as “digital arrest fraud”. In this scam, the fraudsters impersonate law enforcement officials, manipulate their victims, and trap them in a web of deception involving threats of imminent digital restraint and coerced financial transactions.

Recognizing the Danger of Digital Arrest

A recent case involving an interactive voice response (IVR) call that targeted a victim sheds light on the complexities of the "digital arrest" cybercrime. The scammers, who were pretending to be law enforcement officers, told the victim that a SIM card in her name had apparently been used in a criminal incident in Mumbai. The call then moved to a video conversation with a purported FBI agent, who falsely accused her of being involved in money laundering. The victim was drawn into a web of deception because she now believed she was implicated in a criminal case, which underscores the psychological manipulation these scammers rely on.

Recent incidents of digital arrest fraud

- Recently, a 50-year-old victim registered a complaint at the Noida Cyber Crime Police Station after being defrauded of over Rs 11 lakh and subjected to "digital arrest". Using the identities of an IPS officer in the CBI and the founder of a grounded airline, the attackers, masquerading as law enforcement officers, falsely accused the victim of being involved in a fake money-laundering case. She was told that another SIM card in her name had been used for fraudulent activities in Mumbai. According to the complaint, the victim's call was transferred to a person who identified himself as a Mumbai Police officer. He conducted the initial interrogation over the call and then on a Skype video call, where she stayed from 9:30 AM to around 7 in the evening. The woman ended up transferring around ₹11.11 lakh. The scammers then ended contact with her, after which she realised she had been scammed.

- Another recent case of digital arrest fraud came from Faridabad, where a 23-year-old woman got a call from a fraudster posing as a Lucknow customs officer. The caller said that a package being shipped to Cambodia contained cards and passports associated with the victim's Aadhaar number. The victim was led to believe that she was part of illegal activity, including human trafficking. Posing as police officials, the fraudsters made up allegations before extorting money from her. She was then told by a man acting as a CBI official that she needed to pay five per cent of the total, which was Rs 15 lakh. She said the cybercriminals instructed her not to log off Skype. In the meantime, she ended up transferring Rs 2.5 lakh to a bank account shared by the cybercriminals.

Measures to protect oneself from digital arrest

A practical and vigilant approach to cybersecurity is the key to lowering the risk of being targeted and subjected to digital arrest. The following are some best practices:

- Cyber Hygiene: Maintain cyber hygiene by regularly updating passwords and software, and by enabling two-factor authentication to reduce the chances of unauthorized access.

- Phishing Attempts: Avoid these by not clicking on dubious links or downloading attachments from unknown sources, and by verifying the legitimacy of emails and messages before sharing any personal information.

- Secured devices: Install reputable antivirus and anti-malware solutions, and keep operating systems and applications up to date with the latest security updates.

- Virtual Private Networks (VPNs): VPNs can be used to encrypt internet connections, enhancing privacy and security. However, one must be cautious of free VPN services and opt only for trustworthy providers.

- Monitor online services: A regular review of online accounts for any unauthorized or unlawful activities and setting up alerts for any changes to account settings or login attempts may help in the early detection of cybercrime and coping with it.

- Secure communication channels: Use secure communication techniques such as encryption to protect sensitive information. Share passwords and other information with caution, especially in public forums.

- Awareness: The increasing prevalence of the cybercrime known as "digital arrest" underscores the need for preventive measures and increased public awareness. Educational initiatives that draw attention to prevalent cyber threats, especially those involving law enforcement impersonation, can enable people to identify and fend off scams of this kind. Collaboration between law enforcement agencies and telecommunication companies can effectively limit the access points used by fraudsters by identifying and blocking suspicious calls.
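The two-factor authentication mentioned under cyber hygiene is often implemented with time-based one-time passwords, the six-digit codes shown by authenticator apps. The sketch below follows the publicly documented HOTP (RFC 4226) and TOTP (RFC 6238) schemes to show why a stolen password alone is not enough. It is a minimal illustration only: the function names `hotp` and `totp` are chosen here for readability and do not describe how any particular service implements its second factor.

```python
import hashlib
import hmac
import struct


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password, as described in RFC 4226."""
    # Sign the 8-byte big-endian counter with the shared secret.
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte pick an offset.
    offset = digest[-1] & 0x0F
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, unix_time: int, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over a time counter."""
    return hotp(secret, unix_time // step, digits)


# Test secret published in the RFCs; never use it for a real account.
rfc_secret = b"12345678901234567890"
code_now = totp(rfc_secret, unix_time=59)
code_next_window = totp(rfc_secret, unix_time=59 + 30)
```

Because the code depends on both a shared secret and the current 30-second window, a code read out to a scammer stops working shortly afterwards. That protection fails only if the victim is persuaded to share fresh codes, which is why awareness remains essential.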

Conclusion

The rise of digital arrest presents a noteworthy and novel threat to cybersecurity, exploiting people's weaknesses through deceitful impersonation and coercion. The case in Noida is a prime example of the boldness and skill of cybercriminals, who use fear and false information to convince victims that they face harsh legal repercussions and then extract large amounts of money from them. To combat this growing cybercrime, people need to take a proactive and watchful stance on cybersecurity. Cyber hygiene techniques, such as two-factor authentication and frequent password changes, are essential for lowering the possibility of unauthorized access. Important precautions include being aware of phishing attempts, protecting devices with reliable antivirus software, and using Virtual Private Networks (VPNs) to increase privacy. Cybercriminals and fraudsters often use fear as a powerful tool to manipulate people and exploit their vulnerabilities for illicit gain. To protect themselves against the insidious threat of digital arrest, netizens must navigate the constantly changing cyber threat landscape with collective knowledge, informed practices, and strong cybersecurity measures.

References:

- https://www.business-standard.com/india-news/new-cyber-crime-trend-unravelled-in-up-woman-held-under-digital-arrest-123120200485_1.html

- https://www.businessinsider.in/india/news/noida-woman-scammed-11-lakh-in-digital-arrest-scam-everything-you-need-to-know/articleshow/105727970.cms

- https://m.timesofindia.com/life-style/parenting/moments/23-year-old-faridabad-girl-on-digital-arrest-for-17-days-how-to-protect-your-children-from-cyber-crime/photostory/105442556.cms


On 16th February 2025, Google changed its advertising platform program policy. Organizations that use its advertising services are now permitted to employ device fingerprinting techniques to track their users. Originally announced on 18th December 2024, this rule change has sparked yet another debate regarding privacy and profits.

The Issue

Fingerprinting is a technique that collects information about a user’s device and browser, ultimately enabling the creation of a profile of the user. Such data is not limited to targeting advertisements; private entities and even government organizations can use it to identify individuals who access their services. When information on customization options, such as language settings and a user’s screen size, is combined with data points like browser type, time zone, battery status, and even IP address, it becomes easier to identify an individual.
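The way individually harmless attributes combine into an identifier can be sketched in a few lines. This is a simplified illustration, not how Google or any specific advertiser computes fingerprints. The function name `device_fingerprint` and every attribute value below are hypothetical.

```python
import hashlib
import json


def device_fingerprint(attributes: dict[str, str]) -> str:
    """Hash a set of device and browser attributes into one stable identifier."""
    # Sort keys so the same attributes always serialize the same way.
    canonical = json.dumps(attributes, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical attribute values, for illustration only.
visitor_a = {
    "browser": "Firefox 128",
    "language": "en-IN",
    "screen": "1920x1080",
    "timezone": "Asia/Kolkata",
}
# A second visitor who differs in a single attribute.
visitor_b = dict(visitor_a, screen="1366x768")

fp_a = device_fingerprint(visitor_a)
fp_b = device_fingerprint(visitor_b)

# The same attributes collected in a different order give the same identifier.
fp_a_again = device_fingerprint(dict(reversed(list(visitor_a.items()))))
```

No single value here identifies anyone, but the combined hash distinguishes the two visitors and stays the same for the first visitor on every visit. The more attributes are included, the fewer devices share the same combination.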

What makes this technique contentious at the moment is the lack of awareness regarding the information being collected from the user and the inability to opt out once permissions are granted.

This is unlike Google’s standard system of data collection through permission requests, such as accepting website cookies, which are small text files sent to the browser when a user visits a particular website. While contextual and first-party cookies limit data collection to enhancing the user experience, third-party cookies follow users as they browse different platforms so that advertisements can be tailored to them. This functionality is what allows companies to engage in targeted advertising.

This issue has been addressed in laws like the General Data Protection Regulation (GDPR) of the European Union (EU) and the Digital Personal Data Protection (DPDP) Act, 2023 (India), which mandate strict rules and regulations regarding advertising, data collection, and consent, among other things. One of the major requirements in both laws is obtaining clear, unambiguous consent. This also includes the option to opt out of previously granted permissions for cookies.

However, in the case of fingerprinting, the mechanism of data collection relies on signals that users cannot easily erase. While clearing all data from the browser or refusing cookies might seem like appropriate steps to take, they do not prevent tracking through fingerprinting, as users can still be identified using system details that a website has already collected. This applies to all IoT products as well. People do not change the devices they use very often, and once a system is identified, there are no available options to stop tracking, as fingerprinting relies on device characteristics rather than data-collecting text files that could otherwise be blocked.
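The difference between the two tracking mechanisms can be shown with a toy model. The sketch below is an assumption-laden illustration, not real browser or advertiser code. The `Browser` class, the `cookie_id` and `fingerprint_id` functions, and the trait values are all hypothetical.

```python
import hashlib
import uuid


class Browser:
    """Toy model of a browser: an erasable cookie jar plus fixed device traits."""

    def __init__(self, traits: dict[str, str]):
        self.traits = traits   # device characteristics the user cannot clear
        self.cookies = {}      # text-file style storage the user can clear

    def clear_cookies(self) -> None:
        self.cookies.clear()


def cookie_id(browser: Browser) -> str:
    """Cookie-based tracking: store a random ID if none is present yet."""
    if "visitor_id" not in browser.cookies:
        browser.cookies["visitor_id"] = uuid.uuid4().hex
    return browser.cookies["visitor_id"]


def fingerprint_id(browser: Browser) -> str:
    """Fingerprint-based tracking: derive the ID from the device traits."""
    joined = "|".join(f"{key}={value}" for key, value in sorted(browser.traits.items()))
    return hashlib.sha256(joined.encode("utf-8")).hexdigest()


# Hypothetical device traits, for illustration only.
browser = Browser({"screen": "1920x1080", "timezone": "Asia/Kolkata", "language": "en-IN"})

cookie_before, fp_before = cookie_id(browser), fingerprint_id(browser)
browser.clear_cookies()  # the user tries to opt out
cookie_after, fp_after = cookie_id(browser), fingerprint_id(browser)

print("cookie ID changed:     ", cookie_before != cookie_after)
print("fingerprint ID changed:", fp_before != fp_after)
```

Clearing the cookie jar makes the cookie-based ID start over, while the fingerprint-based ID is recomputed from the same traits and comes out identical. Nothing the user deleted was needed to recognise the device.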

Google’s Changing Stance

According to Statista, Google’s revenue is largely made up of the advertisement services it provides (amounting to 264.59 billion U.S. dollars in 2024). Any change in its advertisement program policies draws significant attention due to its economic impact.

In 2019, Google claimed in a blog post that fingerprinting was a technique that “subverts user choice and is wrong.” It is in this context that the recent policy shift comes as a surprise. In response, the ICO (Information Commissioner’s Office), the UK’s data privacy watchdog, has stated that this change is irresponsible. Google, however, is eager to have further discussions with the ICO regarding the policy change.

Conclusion

The debate regarding privacy in targeted advertising has been ongoing for quite some time. Concerns about digital data collection and storage have led to new and evolving laws that mandate strict fines for non-compliance.

Google’s shift in policy raises pressing concerns about user privacy and transparency. Fingerprinting, unlike cookies, offers no opt-out mechanism, leaving users vulnerable to continuous tracking without consent. This move contradicts Google’s previous stance and challenges global regulations like the GDPR and DPDP Act, which emphasize clear user consent.

With regulators like the ICO expressing disapproval, the debate between corporate profits and individual privacy intensifies. As digital footprints become harder to erase, users, lawmakers, and watchdogs must scrutinize such changes to ensure that innovation does not come at the cost of fundamental privacy rights.

References

- https://www.techradar.com/pro/security/profit-over-privacy-google-gives-advertisers-more-personal-info-in-major-fingerprinting-u-turn

- https://www.ccn.com/news/technology/googles-new-fingerprinting-policy-sparks-privacy-backlash-as-ads-become-harder-to-avoid/

- https://www.emarketer.com/content/google-pivot-digital-fingerprinting-enable-better-cross-device-measurement

- https://www.lewissilkin.com/insights/2025/01/16/google-adopts-new-stance-on-device-fingerprinting-102ju7b

- https://www.lewissilkin.com/insights/2025/01/16/ico-consults-on-storage-and-access-cookies-guidance-102ju62

- https://www.bbc.com/news/articles/cm21g0052dno

- https://www.techradar.com/features/browser-fingerprinting-explained

- https://fingerprint.com/blog/canvas-fingerprinting/

- https://www.statista.com/statistics/266206/googles-annual-global-revenue/#:~:text=In%20the%20most%20recently%20reported,billion%20U.S.%20dollars%20in%202024