#FactCheck - Viral Video of ‘Hatha Yogi’ Meditating on Snowy Mountain Is AI-Generated

A video claiming to show a Hatha yogi performing extreme penance on a snow-covered mountain amid strong icy winds is going viral on social media. In the clip, the ascetic is seen balancing on one hand in a yoga posture, while users portray the visuals as a rare example of extraordinary spiritual endurance in harsh climatic conditions.

However, an investigation by the CyberPeace Foundation has found the claim to be false. Our analysis confirms that the viral video is AI-generated and does not depict a real person or an actual event.

Claim:

An Instagram user shared the video with the caption:

“Hatha yogi, what kind of soil are these people made of?” The post suggests that the visuals show a real yogi performing intense meditation on a frozen mountain.

- https://www.instagram.com/reels/DTK32TvDGIJ/

- (Archive link as provided) https://perma.cc/H84M-MGXZ

Fact Check:

To verify the claim, the CyberPeace Foundation conducted a detailed examination of the viral video. No credible or verifiable news reports were found to support the claim that such an incident ever occurred.

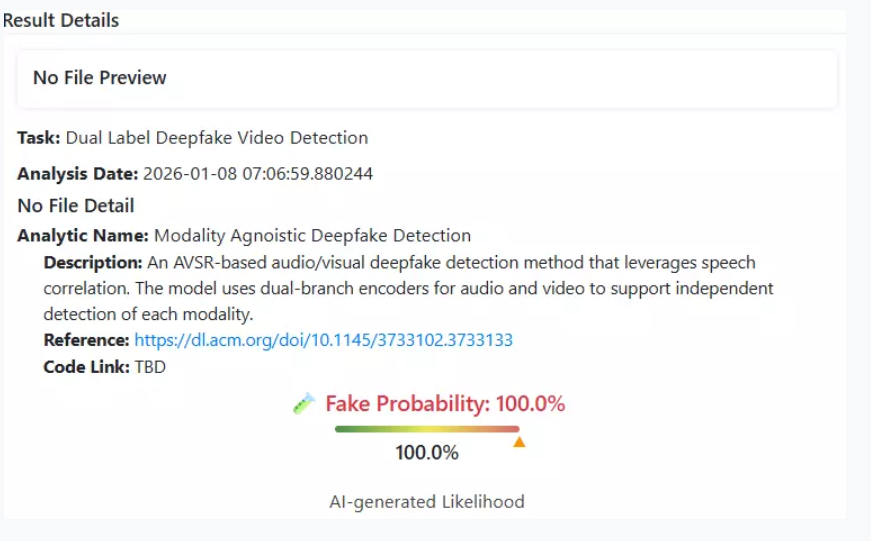

The viral video was analysed using the AI detection tool Deepfake-O-Meter. Its AVSRDD (2025) module flagged the video as AI-generated, confirming that the visuals were digitally created and not recorded in real life.

Multiple indicators within the footage, such as unnatural body balance, environmental inconsistencies, and visual artifacts, are consistent with AI-generated content.

Conclusion

The viral video purportedly showing a yogi meditating on a frozen mountain is not real. It has been created using artificial intelligence and is being circulated on social media with a misleading narrative. Users are advised to exercise caution and verify content before sharing such sensational claims.

Related Blogs

Introduction

The online lottery scam involves a scammer reaching out through email, phone or SMS to inform you that you have won a significant amount of money in a lottery, and instructing you to contact an agent at a specific phone number or email address that actually belongs to the fraudster. Once the recipient contacts the agent, they are told to pay processing charges upfront in order to claim the lottery reward. Genuine rewards, however, come at no cost. Additionally, such fraudulent 'offers' often carry phishing attacks that trick users into clicking on malicious links.

Modus Operandi

The common lottery fraud starts with a message stating that the receiver has won a large lottery prize. These messages are frequently crafted to imitate official correspondence from reputable institutions, sweepstakes, or foreign administrations. The scammers request the receiver to give personal information like name, address, and banking details, or to make a payment for taxes, processing fees, or legal procedures. After the victim sends the money or discloses their personal details, the scammers may vanish or persist in requesting more payments for different reasons.

Tactics and Psychological Manipulation

These fraudulent techniques rely mostly on psychological manipulation. Fraudsters create a false sense of urgency, persuading victims that they must act quickly to claim the lottery prize. They also prey on people's hopes for a better life by convincing them that this unexpected gain has the power to change their destiny. Many people fall prey to the scam because they are driven by the desire to get wealthy and fail to recognise the warning signs. Fraudsters also frequently use convincing language and fictitious documentation that appears authentic, so users need to be extra cautious and recognise the early signs of such online fraud.

Festive Season and Uptick in Deceptive Online Scams

As the festive season begins, there is a surge in deceptive online scams that target innocent internet users. A few examples of such scams include free Navratri garba passes, quiz participation opportunities, coupons offering freebies, fake offers of cheap jewellery, counterfeit product sales, festival lotteries, fake lucky draws and charity appeals. Most of these scams are designed to lure victims for the scammers' financial gain.

In 2023, CyberPeace released a research report on the Navratri festivities scam, in which we highlighted the ‘Tanishq iPhone 15 Gift’ scam. It involved fraudsters posing as Tanishq, a well-known jewellery brand, and offering fake iPhone 15 handsets as Navratri gifts. Victims were lured into clicking on malicious links. CyberPeace issued a detailed advisory within the report, highlighting that the public must exercise vigilance, scrutinise the legitimacy of such offers, and take precautionary measures to shield themselves from falling prey to such deceptive cyber schemes.

Preventive Measures for Lottery Scams

To avoid lottery scams, users should ignore messages or calls about fake lottery wins, verify the source of the lottery and ask probing questions, keep sensitive personal details confidential, approach unexpected windfalls with scepticism, refuse upfront payment requests, and recognise the manipulative tactics scammers use. Users are also advised not to click on unsolicited links about lottery prizes received in emails or messages, as such links can be phishing attempts. These best practices can help protect users against scammers who pressure victims to act quickly, which is what leads them to fall prey to such scams.

Must-Know Tips to Prevent Lottery Scams

● It is advised to steer clear of any communication that offers lotteries or giveaways, as such offers are usually too good to be true.

● It is advised to refrain from transferring money to individuals/entities who are unknown without verifying their identity and credibility.

● If you have already given the fraudsters your bank account details, it is crucial to alert your bank immediately.

● Report any such incidents on the National Cyber Crime Reporting Portal at cybercrime.gov.in or Cyber Crime Helpline Number 1930.
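As a rough illustration of the warning signs described above, the Python sketch below flags common red flags in a message. The pattern names, keyword lists and the `red_flags` function are hypothetical and chosen only for illustration; they are not part of any CyberPeace tool, and a simple keyword check like this will miss many scams and misfire on some genuine messages.

```python
import re

# Hypothetical red-flag patterns, loosely based on the warning signs above.
RED_FLAGS = {
    "unexpected_win": re.compile(r"\b(won|winner|lottery|lucky draw|prize)\b", re.I),
    "upfront_payment": re.compile(r"\b(processing (fee|charge)s?|pay|payment|tax|taxes)\b", re.I),
    "urgency": re.compile(r"\b(urgent|urgently|immediately|act now|hurry)\b", re.I),
    "personal_details": re.compile(r"\b(bank (account|details)|otp|pin|password)\b", re.I),
    "link": re.compile(r"https?://\S+", re.I),
}


def red_flags(message):
    """Return the names of all red-flag patterns found in the message."""
    return [name for name, pattern in RED_FLAGS.items() if pattern.search(message)]


sample = ("Congratulations! You have won a lottery prize. "
          "Pay the processing fee immediately: http://example.test/claim")
print(red_flags(sample))
```

A message that trips several of these flags at once deserves extra scepticism, but the absence of flags does not make a message safe.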

Executive Summary:

In a recent advisory, the Indian Computer Emergency Response Team (CERT-In) issued a high-severity warning about older versions of software across Apple devices. The high-severity rating reflects multiple vulnerabilities reported in Apple products, which could allow an attacker to access sensitive information and execute arbitrary code on the targeted system. The warning is a useful reminder of the need to keep software up to date to guard against cyber threats. Users should update to the latest software versions and follow good cyber hygiene practices.

Devices Affected:

CERT-In advisory highlights significant risks associated with outdated software on the following Apple devices:

- iPhones and iPads: iOS and iPadOS versions prior to 18 and prior to 17.7.

- Mac Computers: macOS builds earlier than 14.7 (20G71) for Sonoma, 13.7 (20H34) for Ventura, and 20.2 for Sequoia.

- Apple Watches: watchOS versions prior to 11

- Apple TVs: tvOS versions prior to 18

- Safari Browsers: versions prior to 18

- Xcode: versions prior to 16

- visionOS: versions prior to 2
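To make the "versions prior to" wording concrete, here is a minimal Python sketch that compares an installed version against a threshold. It assumes plain dotted numeric version strings; the `MINIMUMS` table simply copies the single-threshold entries from the list above, and the function names are hypothetical. iOS, iPadOS and macOS are left out because the list gives more than one threshold for them.

```python
def parse_version(text):
    """Turn a dotted version string such as '17.6.1' into a tuple of ints."""
    return tuple(int(part) for part in text.split("."))


def is_older(installed, minimum):
    """Return True if `installed` is strictly older than `minimum`."""
    a, b = parse_version(installed), parse_version(minimum)
    # Pad the shorter tuple with zeros so that '18' and '18.0.0' compare equal.
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a < b


# Thresholds copied from the list above (single-threshold products only).
MINIMUMS = {"watchOS": "11", "tvOS": "18", "Safari": "18", "Xcode": "16", "visionOS": "2"}


def needs_update(product, installed):
    """Return True if the installed version falls below the listed threshold."""
    return is_older(installed, MINIMUMS[product])


print(needs_update("watchOS", "10.6.1"))
print(needs_update("Safari", "18.0"))
```

In practice the simplest check is still the device's own software update screen; the sketch only shows how the comparison works.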

Details of the Vulnerabilities:

The vulnerabilities discovered in these Apple products could potentially allow attackers to perform the following malicious activities:

- Access sensitive information: Attackers could access sensitive information stored in other parts of the compromised device.

- Execute arbitrary code: A compromised web page could deliver malicious code that runs on the targeted system, which in the worst case would give the intruder full administrator privileges on the device.

- Bypass security restrictions: Measures meant to safeguard the device and the information on it could be bypassed, leaving the system open to further compromise.

- Cause denial-of-service (DoS) attacks: The vulnerabilities could be used to make the targeted device or service unavailable to its legitimate users.

- Perform spoofing attacks: Attackers could create fake entities, users or accounts to gain access to important information or carry out other unauthorized activities.

- Elevate privileges: The weaknesses might be exploited to grant the attacker a higher level of privileges on the system they are targeting.

- Engage in cross-site scripting (XSS) attacks: Some of the vulnerabilities leave the associated web applications or sites prone to XSS attacks, in which hostile scripts are injected into web page code.
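For readers unfamiliar with XSS, the short Python sketch below shows the general idea of script injection and one standard defence, escaping user-supplied text before placing it in a page. It illustrates XSS in general and is not a reproduction of the specific Apple vulnerabilities; the `render_comment` function is a hypothetical example, while `html.escape` is part of Python's standard library.

```python
import html


def render_comment(user_text):
    """Wrap user-supplied text in a paragraph, escaping HTML special characters."""
    # html.escape converts <, >, & and quotes to entities, so the browser
    # displays the text instead of interpreting it as markup or script.
    return "<p>" + html.escape(user_text) + "</p>"


hostile = "<script>alert(1)</script>"
print(render_comment(hostile))
# prints: <p>&lt;script&gt;alert(1)&lt;/script&gt;</p>
```

Without the escaping step, the hostile string would be inserted into the page as a live script tag.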

Vulnerabilities:

CVE-2023-42824

- The flaw could allow a local attacker to elevate their privileges and potentially execute arbitrary code.

Affected System

- Apple's iOS and iPadOS software

CVE-2023-42916

- An out-of-bounds read issue, which was later resolved with improved input validation.

Affected System

- Safari, iOS, iPadOS, macOS, and Apple Watch Series 4 and later devices running watchOS 10.2

CVE-2023-42917

- The flaw can lead to arbitrary code execution, and there have been reports of it being exploited in earlier versions of iOS.

Affected System

- Apple's Safari browser, iOS, iPadOS, and macOS Sonoma systems

Recommended Actions for Users:

To mitigate these risks, users should take immediate action:

- Update Software: Ensure all your devices are on the most current version of the operating systems they use. Regular updates include important security fixes for identified weaknesses or flaws in the system.

- Monitor Device Activity: Stay vigilant for anything that doesn’t seem right, such as signs that your devices have been accessed by someone other than you.

- Use strong, distinct passwords and enable two-factor authentication.

- Install antivirus and firewall software and keep it updated.

- Avoid downloading applications or clicking links from unknown sources.

Conclusion:

The advisory from CERT-In clearly demonstrates the fundamental need to keep the software on all Apple devices up to date. Consumers need to act right away to patch their devices and apply security best practices such as multi-factor authentication and regular system scans. The advisory comes just as Apple has released new products, such as the iPhone 16 series, in India. When consumers embrace new technologies, it is important for them to observe the relevant security precautions. Maintaining good cyber hygiene is critical for protection against new threats.

Reference:

- https://www.cert-in.org.in/s2cMainServlet?pageid=PUBVLNOTES02&VLCODE=CIAD-2023-0043

- https://www.cve.org/CVERecord?id=CVE-2023-42916

- https://www.cve.org/CVERecord?id=CVE-2023-42917

- https://www.bizzbuzz.news/technology/gadjets/cert-in-issues-advisory-on-vulnerabilities-affecting-iphones-ipads-and-macs-1337253#google_vignette

- https://www.wionews.com/videos/india-warns-apple-users-of-high-severity-security-risks-in-older-software-761396

The World Wide Web was created as a portal for communication, to connect people from far away, and while it started with electronic mail, mail moved to instant messaging, which let people have conversations and interact with each other from afar in real-time. But now, the new paradigm is the Internet of Things and how machines can communicate with one another. Now one can use a wearable gadget that can unlock the front door upon arrival at home and can message the air conditioner so that it switches on. This is IoT.

WHAT EXACTLY IS IoT?

The term ‘Internet of Things’ was coined in 1999 by Kevin Ashton, a computer scientist who put Radio Frequency Identification (RFID) chips on products in order to track them in the supply chain while he worked at Procter & Gamble (P&G). And after the launch of the iPhone in 2007, there were already more connected devices than people on the planet.

Fast forward to today and we live in a more connected world than ever. So much so that even our handheld devices and household appliances can now connect and communicate through a vast network that has been built so that data can be transferred and received between devices. There are currently more IoT devices than users in the world and according to the WEF’s report on State of the Connected World, by 2025 there will be more than 40 billion such devices that will record data so it can be analyzed.

IoT finds use in many parts of our lives. It has helped businesses streamline their operations, reduce costs, and improve productivity. IoT also helped during the Covid-19 pandemic, with devices that could help with contact tracing and wearables that could be used for health monitoring. All of these devices are able to gather, store and share data so that it can be analyzed. The information is gathered according to rules set by the people who build these systems.

APPLICATION OF IoT

IoT is used by both consumers and the industry.

Some of the widely used examples of CIoT (Consumer IoT) are wearables like health and fitness trackers, smart rings with near-field communication (NFC), and smartwatches. Smartwatches gather a lot of personal data. Smart clothing, with sensors on it, can monitor the wearer’s vital signs. There is even smart jewelry, which can monitor sleeping patterns and stress levels.

With the advent of virtual and augmented reality, the gaming industry can now make the experience even more immersive and engrossing. Smart glasses and headsets are used, along with armbands fitted with sensors that can detect the movement of arms and replicate the movement in the game.

At home, there are smart TVs, security cameras, smart bulbs, home control devices, and other IoT-enabled ‘smart’ appliances like coffee makers that can be turned on through an app, or at a particular time in the morning so that they act as an alarm. There are also voice-command assistants like Alexa and Siri, and these work with software written by manufacturers that can understand simple instructions.

Industrial IoT (IIoT) mainly uses connected machines for the purposes of synchronization, efficiency, and cost-cutting. For example, smart factories gather and analyze data as the work is being done. Sensors are also used in agriculture to check soil moisture levels, and these then automatically run the irrigation system without the need for human intervention.
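The soil-moisture example can be sketched as a simple control rule. The Python snippet below is a minimal illustration that assumes a sensor reporting moisture as a percentage and uses two made-up thresholds (`start_below` and `stop_above`) so the pump does not flick on and off around a single value; the function name and all numbers are hypothetical, not taken from any real irrigation product.

```python
def irrigation_command(moisture_percent, pump_on, start_below=30.0, stop_above=45.0):
    """Decide whether the pump should run, given the latest moisture reading."""
    if moisture_percent < start_below:
        return True   # soil is too dry: start (or keep) watering
    if moisture_percent > stop_above:
        return False  # soil is wet enough: stop watering
    return pump_on    # in between: keep doing whatever we were doing


# Simulate a series of sensor readings with no human intervention.
readings = [50, 35, 28, 33, 44, 46, 40]
pump_on = False
states = []
for reading in readings:
    pump_on = irrigation_command(reading, pump_on)
    states.append(pump_on)
print(states)
# prints: [False, False, True, True, True, False, False]
```

The pump starts when the reading drops to 28, keeps running while the soil recovers, and stops once the reading passes 45.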

Statistics

- The IoT device market is poised to reach $1.4 trillion by 2027, according to Fortune Business Insights.

- The number of cellular IoT connections is expected to reach 3.5 billion by 2023. (Forbes)

- The amount of data generated by IoT devices is expected to reach 73.1 ZB (zettabytes) by 2025.

- 94% of retailers agree that the benefits of implementing IoT outweigh the risk.

- 55% of companies believe that 3rd party IoT providers should have to comply with IoT security and privacy regulations.

- 53% of all users acknowledge that wearable devices will be vulnerable to data breaches, viruses,

- Companies could invest up to 15 trillion dollars in IoT by 2025 (Gigabit)

CONCERNS AND SOLUTIONS

- Two of the biggest concerns with IoT devices are the privacy of users and the devices being secure in order to prevent attacks by bad actors. This makes knowledge of how these things work absolutely imperative.

- It is worth noting that these devices all work with a central hub, like a smartphone. Each device pairs with the smartphone through an app and can act as a gateway to it, so a hacker who targets the IoT device could compromise the smartphone as well.

- With technology like smart television sets that have cameras and microphones, the major concern is that hackers could hack and take over the functioning of the television as these are not adequately secured by the manufacturer.

- A hacker could control the camera and cyberstalk the victim, and therefore it is very important to become familiar with the features of a device and ensure that it is well protected from any unauthorized usage. Even simple steps help, like keeping the camera covered when it is not being used.

- There is also the concern that since IoT devices gather and share data without human intervention, they could be transmitting data that the user does not want to share. This is true of health trackers. Users who wear heart and blood pressure monitors have their data sent to the insurance company, who may then decide to raise the premium on their life insurance based on the data they get.

- IoT devices often keep functioning as normal even if they have been compromised. Most devices do not log an attack or alert the user, and changes like higher power or bandwidth usage go unnoticed after the attack. It is therefore very important to make sure the device is properly protected.

- It is also important to keep the software of the device updated as vulnerabilities are found in the code and fixes are provided by the manufacturer. Some IoT devices, however, lack the capability to be patched and are therefore permanently ‘at risk’.
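Because a compromised device may show little more than higher power or bandwidth usage, one way to notice it is to compare current usage against the device's normal baseline. The Python sketch below is a minimal illustration under stated assumptions: the usage figures are invented, the `is_unusual` function and its threshold of three standard deviations are hypothetical choices, and a real monitoring setup would need far more care.

```python
from statistics import mean, stdev


def is_unusual(baseline, latest, threshold=3.0):
    """Return True if `latest` is far above the typical values in `baseline`."""
    mu = mean(baseline)
    sigma = stdev(baseline)  # needs at least two baseline readings
    if sigma == 0:
        return latest > mu
    # Flag readings more than `threshold` standard deviations above the mean.
    return (latest - mu) / sigma > threshold


# Invented hourly bandwidth figures (in MB) for a smart camera.
normal_hours = [10, 12, 11, 9, 10, 11, 12, 10]
print(is_unusual(normal_hours, 11))
print(is_unusual(normal_hours, 40))
```

A sudden jump such as the second reading does not prove an attack, but it is the kind of change that otherwise goes unnoticed.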

CONCLUSION

Humanity inhabits this world that is made up of all these nodes that talk to each other and get things done. Users can harmonize their devices so that everything runs like a tandem bike – completely in sync with all other parts. But while we make use of all the benefits, it is also very important that one understands what they are using, how it is functioning, and how one can tackle issues should they come up. This is also important to understand because once people get used to IoT, it will be that much more difficult to give up the comfort and ease that these systems provide, and therefore it would make more sense to be prepared for any eventuality. A lot of times, good and sensible usage alone can keep devices safe and services intact. But users should be aware of any issues because forewarned is forearmed.