#FactCheck: Old Ukraine Blast Video Falsely Shared as Iran Strike on Israeli Nuclear Site

Executive Summary

A video showing a massive fire and explosion is going viral on social media. The clip shows a large plume of smoke followed by a sudden blast. It is being shared with the claim that it depicts Iran attacking a nuclear reactor in Israel amid the ongoing Iran-Israel conflict. However, research by CyberPeace found that the claim is misleading. The viral video is actually from 2017 and shows a massive explosion at an ammunition depot in Ukraine.

Claim:

On the social media platform X (formerly Twitter), a user shared the video on March 21, 2026, with the caption: “Israel’s nuclear reactor was targeted with Fateh and Khyber missiles. Well done Iran! The whole world is with you.”

Fact Check:

To verify the viral claim, we extracted keyframes from the video and conducted a reverse image search. During this process, we found the same video uploaded on March 23, 2017, on a YouTube channel named “null.” According to the upload, the video shows a massive explosion at an ammunition depot in Balakliya, Ukraine. Using these clues, we performed a keyword search and found a report published on March 24, 2017, by Global News.

According to the report, a major fire and explosion broke out at a large military ammunition depot in Balakliya, located in Ukraine’s Kharkiv region. The incident resulted in one death, while nearly 20,000 people from surrounding areas were evacuated to safer locations.

Conclusion:

The claim that the video shows Iran attacking a nuclear reactor in Israel is misleading. The viral footage is actually from 2017 and depicts an explosion at an ammunition depot in Ukraine.

Related Blogs

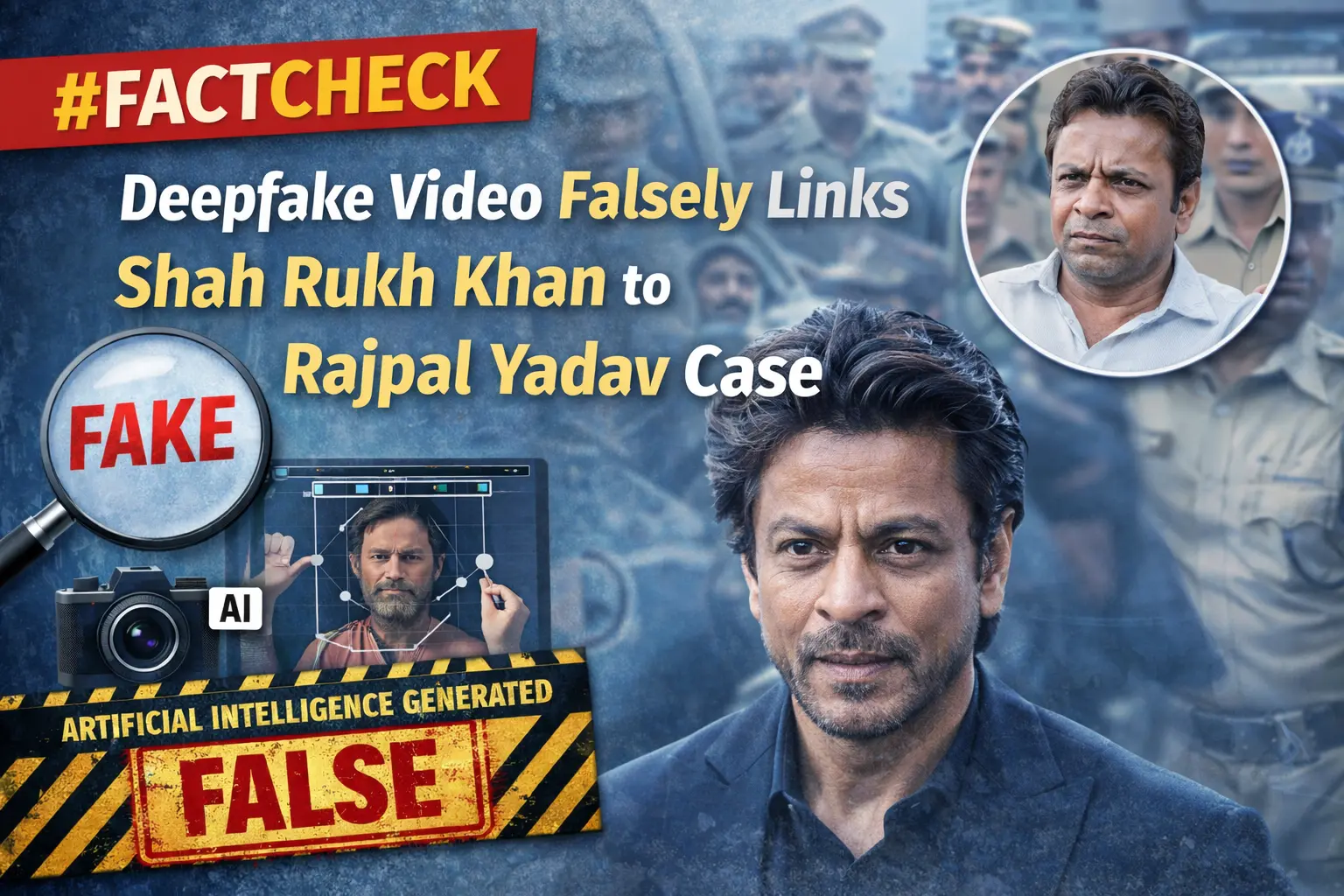

Executive Summary

A video featuring popular comedian Rajpal Yadav has recently gone viral on social media, with claims that he is currently lodged in Tihar Jail in connection with a loan default and cheque bounce case. Against this backdrop, another video of Bollywood superstar Shah Rukh Khan is being widely shared online. In the viral clip, Khan is purportedly heard saying that he would help Rajpal Yadav get out of jail and offer him a role in his upcoming film. However, research by CyberPeace found the viral video to be fake. The clip is a deepfake in which the audio has been manipulated using artificial intelligence. In the original video, Shah Rukh Khan is speaking about his life and personal experiences. Although several prominent Bollywood personalities have expressed support for Rajpal Yadav, the claims made in this viral video are misleading.

Claim

An Instagram user named “ayubeditz” shared the viral video on February 11, 2026, with the caption: “Rajpal Yadav bhai, stay strong, we are all with you — Shah Rukh Khan.”

Fact Check

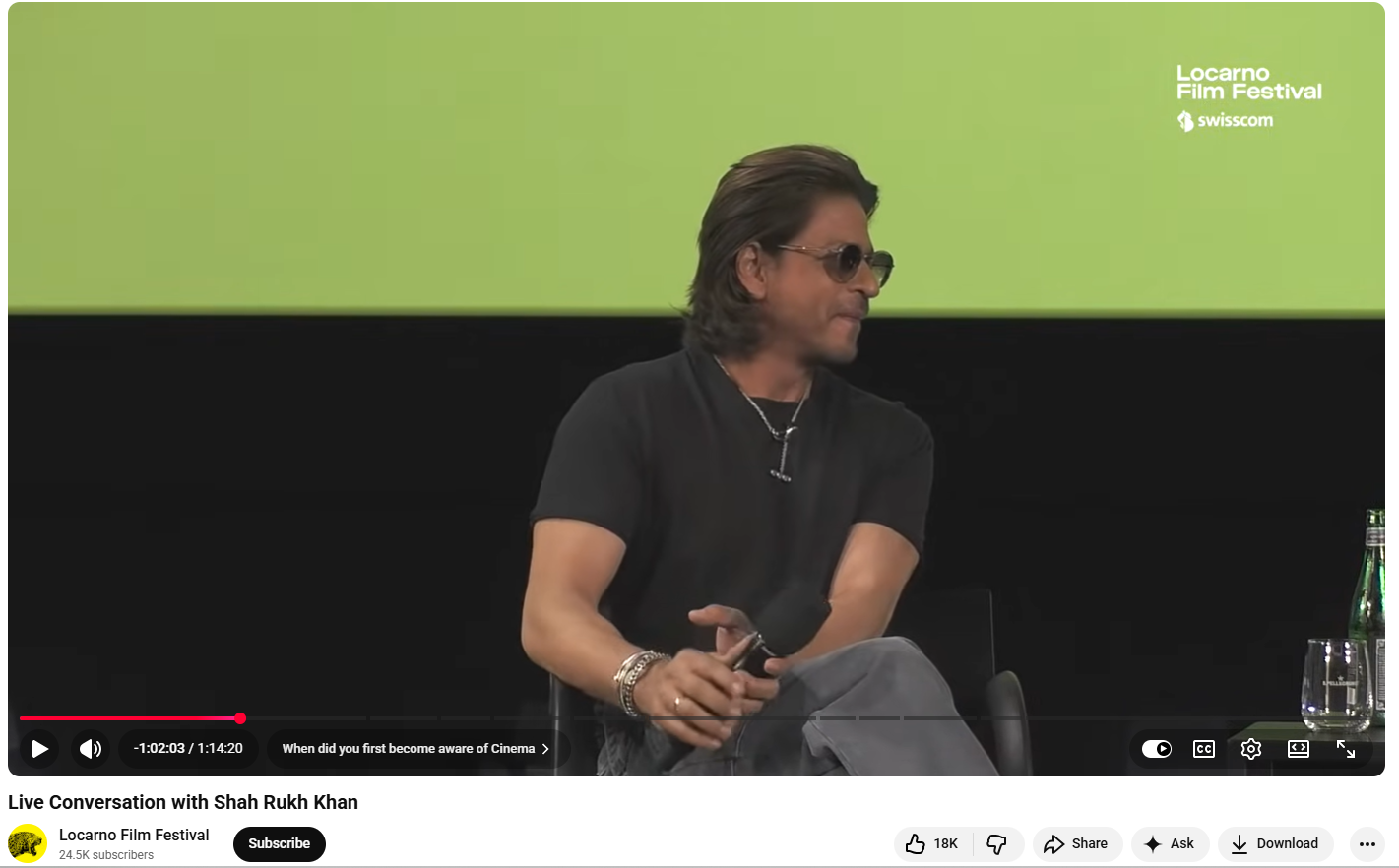

To verify the claim, we extracted key frames from the viral video and conducted a Google reverse image search. This led us to the original video, uploaded on August 11, 2024, to a YouTube channel titled “Locarno Film Festival.” According to the available information, Shah Rukh Khan was sharing insights about his life and career during a conversation with the festival’s Artistic Director, Giona A. Nazzaro. The original footage contains no reference to Rajpal Yadav, which raised strong suspicion that the audio in the viral video had been altered using AI.

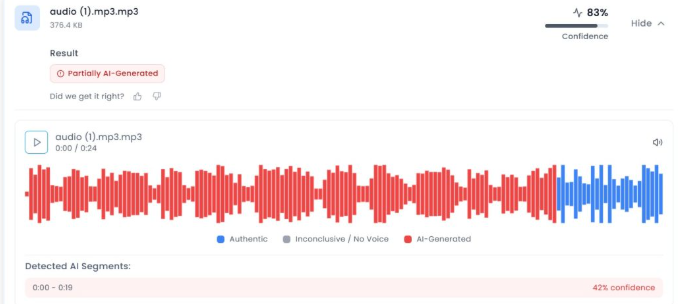

To further examine the authenticity of the audio, we analysed it using AI detection tools. The audio was first checked using Aurigin.ai, which indicated an 83 percent probability that the voice in the viral clip was AI-generated.

Conclusion

CyberPeace’s research confirmed that the claim associated with Shah Rukh Khan’s viral video is false. The video is a deepfake in which the audio has been altered using artificial intelligence. In the original footage, Khan was discussing his life and experiences, and he did not make any statement about helping Rajpal Yadav.

Introduction

“an intermediary, on whose computer resource the information is stored, hosted or published, upon receiving actual knowledge in the form of an order by a court of competent jurisdiction or on being notified by the Appropriate Government or its agency under clause (b) of sub-section (3) of section 79 of the Act, shall not host, store or publish any unlawful information, which is prohibited under any law for the time being in force in relation to the interest of the sovereignty and integrity of India; security of the State; friendly relations with foreign States; public order; decency or morality; in relation to contempt of court; defamation; incitement to an offence relating to the above, or any information which is prohibited under any law for the time being in force”

Law grows by confronting its absences; it heals itself through its own gaps. The most recent notification from MeitY, G.S.R. 775(E) dated October 22, 2025, illustrates that self-correction. The Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Amendment Rules, 2025, come into effect on November 15, 2025. They accomplish two crucial things: they restrict who can trigger “actual knowledge” to initiate takedowns, and they require senior-level scrutiny of those directives. In doing so, they preserve genuine security requirements while steering India’s content governance system towards more transparent due process.

When Regulation Learns Restraint

To understand the jurisprudence of revision, one must first recognise that regulation, in its truest form, must know when to pause. The 2025 amendment marks that rare moment when the government chooses precision over power, when regulation learns restraint. The amendment revises Rule 3(1)(d) of the 2021 Rules. Social media sites, hosting companies, and other digital intermediaries are still required to act within 36 hours of receiving “actual knowledge” that a piece of content is unlawful (e.g., content that threatens public order, sovereignty, decency, or morality). However, “actual knowledge” now arises only in the following situations:

(i) a court order from a court of competent jurisdiction, or

(ii) a reasoned written intimation from a duly authorised government officer not below the rank of Joint Secretary (or equivalent).

Where the police are the authorised agency, the officer “must not be below the rank of Deputy Inspector General of Police (DIG)”. This replaces a diffuse trigger with a well-defined, senior-accountable channel.

The amendment adds two further structural guardrails. First, the Rules establish a monthly review of all takedown intimations by a Secretary-level officer of the relevant government, testing necessity, proportionality, and compliance with India’s safe harbour provision under Section 79(3) of the IT Act. Second, so that platforms can act precisely rather than expansively, takedown requests must set out the legal basis, describe the unlawful act, and identify the exact URLs or other identifiers. Together, these guardrails ensure that every removal carries both a proportionality check and a paper trail.

Due Process as the Law’s Conscience

Indian jurisprudence has been debating what constitutes “actual knowledge” for over a decade. In Shreya Singhal (2015), the Supreme Court tied an intermediary’s removal obligation to court orders or notifications through official channels rather than to vague notice. Over time, however, that line blurred through enforcement practice and some court rulings, raising concerns about over-removal and the loss of safe harbour under Section 79(3). Even as more recent decisions scrutinised the “reasonable efforts” of intermediaries, the 2025 amendment institutionally reaffirms the ethos of Shreya Singhal by refocusing “actual knowledge” on formal, reviewable communications from senior state officials or courts.

By mandating monthly Secretary-level audits, the amendment also brings a form of internal constitutionalism to executive orders. The state must re-justify its own orders on a rolling basis, assessing them against necessity and proportionality, the criteria Indian courts increasingly demand of speech restrictions. For intermediaries, the result is clearer triggers, better logs, and fewer vague “please remove” communications of the kind that previously left compliance teams in legal limbo.

The Court’s Echo in the Amendment

The essence of this amendment is echoed in the Karnataka High Court’s ruling that the Sahyog Portal, a government portal used to coordinate takedown orders under Section 79(3)(b), is constitutional. In September 2025, the High Court rejected X’s (formerly Twitter’s) challenge to the legitimacy of the portal. X had argued that, by empowering nodal officers to issue takedown orders without judicial review, the portal enabled arbitrary content removal. The court disagreed, holding that the officers’ actions were in accordance with Section 79(3)(b) and that they were “not dropping from the air but emanating from statutes.” By aligning with the Sahyog Portal verdict, the amendment turns compliance into conscience. It reiterates that due process is the moral grammar of governance rather than a mere formality.

Conclusion: The Necessary Restlessness of Law

Law cannot afford stillness; it survives through self-doubt and reinvention. The 2025 amendment, too, is not a destination but a pause before the next question, a reminder that justice breathes through revision. As befits a constitutional democracy, India’s path to content governance has been combative and iterative. Each successive rule-making cycle has been sharpened by the stays, split judgments, and strike-downs produced by strategic litigation over the IT Rules, safe harbour, government fact-checking, and blocking orders. The 2025 amendment reflects the lessons learnt: review triumphs over opacity, specificity triumphs over vagueness, and due process triumphs over discretion. This is how a digital republic balances freedom and force.

Sources

- https://pressnews.in/law-and-justice/government-notifies-amendments-to-it-rules-2025-strengthening-intermediary-obligations/

- https://www.meity.gov.in/static/uploads/2025/10/90dedea70a3fdfe6d58efb55b95b4109.pdf

- https://www.pib.gov.in/PressReleasePage.aspx?PRID=2181719

- https://www.scobserver.in/journal/x-relies-on-shreya-singhal-in-arbitrary-content-blocking-case-in-karnataka-hc/

- https://www.medianama.com/2025/10/223-content-takedown-rules-online-platforms-36-hr-deadline-officer-rank/#:~:text=It%20specifies%20that%20government%20officers,Deputy%20Inspector%20General%20of%20Police%E2%80%9D.

Executive Summary:

A viral video claiming to show Israelis pleading with Iran to "stop the war" is not authentic. According to our research, the footage is AI-generated, created using tools such as Google’s Veo, and is not evidence of a real protest. The video features unnatural visuals and errors typical of AI fabrication. It is part of a broader wave of misinformation surrounding the Israel-Iran conflict, in which AI-generated content is widely used to manipulate public opinion. This incident underscores the growing challenge of distinguishing real events from digital fabrications in global conflicts, and it highlights the importance of media literacy and fact-checking.

Claim:

A verified X user with the handle "Iran, stop the war, we are sorry" posted a video of people holding placards and the Israeli flag. The caption suggests that Israeli citizens are calling for peace and expressing remorse: "Stop the war with Iran! We apologize! The people of Israel want peace." The user further claims that Israel, having allegedly initiated the conflict by attacking Iran, is now seeking reconciliation.

Fact Check:

The bottom-right corner of the video displays a "VEO" watermark, suggesting it was generated using Google's AI tool Veo 3. The video also shows several noticeable inconsistencies, including robotic, unnatural speech, a lack of natural human gestures, and illegible text on the placards. In one frame, a person wearing a blue T-shirt is seen holding nothing. In the next frame, an Israeli flag suddenly appears in their hand, a glitch typical of AI-generated video.

We further analyzed the video using the AI detection tool HIVE Moderation, which indicated a 99% probability that the video was generated using artificial intelligence. To validate this finding, we separately examined a keyframe from the video, which likewise showed a 99% probability of being AI-generated. These results strongly indicate that the video is not authentic and was most likely created using advanced AI tools.

Conclusion:

The video is highly likely to be AI-generated, as indicated by the VEO watermark, visual inconsistencies, and a 99% probability from HIVE Moderation. This highlights the importance of verifying content before sharing, as misleading AI-generated media can easily spread false narratives.

- Claim: AI-generated video of Israelis saying "Stop the War, Iran We are Sorry".

- Claimed On: Social Media

- Fact Check: AI-Generated, Misleading