#FactCheck-Bangladesh Video Falsely Shared as Security Forces Action During West Bengal Elections 2026

Executive Summary

As West Bengal heads for vote counting on May 4, 2026, following the second phase of Assembly polling held on April 29, a video is being widely shared on social media. The clip shows security personnel baton-charging civilians, with users claiming it depicts force being used during the West Bengal Assembly Elections 2026. Research by CyberPeace Research Wing found that the viral claim is misleading. The video is actually from Bangladesh and is being falsely linked to the West Bengal elections to spread confusion.

Claim

A Facebook user named “Adv Mohd Salman” shared the clip on April 29, 2026, using Bengal-related hashtags and claiming that voters standing in line were beaten to influence the election outcome. The post alleged that free and fair voting rights were being suppressed.

Fact Check

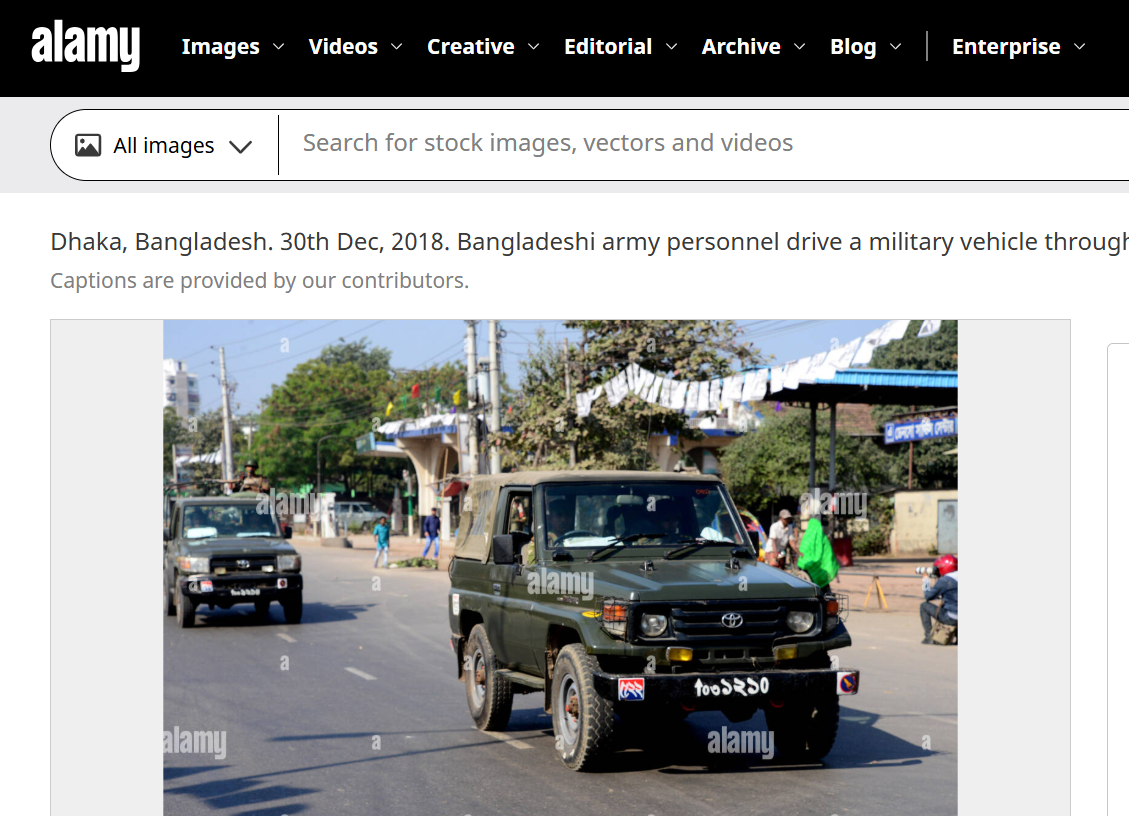

To verify the claim, we closely examined the viral video. A vehicle visible in the footage had a registration number written in a non-Hindi script. Using Google Lens reverse image search, we found a matching image uploaded on Alamy on December 30, 2018. The image showed a military vehicle with the same script and registration style seen in the viral clip.

According to the description on the platform, the image was taken in Dhaka during Bangladesh’s national elections and showed Bangladeshi army personnel moving through a street near a polling station. This confirms that the viral footage is not related to the 2026 West Bengal Assembly elections.

Conclusion

Our research confirms that the video showing security personnel baton-charging civilians is from Bangladesh, not West Bengal. It is being falsely shared as footage from the 2026 West Bengal Assembly elections to mislead users.

Related Blogs

Introduction

CERT-In (Indian Computer Emergency Response Team) has recently issued the “Guidelines on Information Security Practices” for Government Entities for Safe & Trusted Internet. The guidelines come at a critical time, when the Draft Digital India Bill, which aims to revamp the legal framework of Indian cyberspace, is about to be released. They lay down the policy framework and the requirements for critical infrastructure for all government organisations and institutions to improve the overall cyber security of the nation.

What is CERT-In?

A Computer Emergency Response Team (CERT) is a group of information security experts responsible for the protection against, detection of and response to an organisation’s cybersecurity incidents. A CERT may focus on resolving data breaches and denial-of-service attacks and providing alerts and incident handling guidelines. CERTs also conduct ongoing public awareness campaigns and engage in research aimed at improving security systems. The Ministry of Electronics and Information Technology (MeitY) oversees CERT-In. It regularly releases alerts to help individuals and companies safeguard their data, information, and ICT (Information and Communications Technology) infrastructure.

The Indian Computer Emergency Response Team (CERT-In) has been established and appointed as the national agency for cyber incidents and cyber security incidents under section 70B of the Information Technology (IT) Act, 2000.

CERT-In requests information from service providers, intermediaries, data centres, and body corporates to coordinate response actions and emergency procedures regarding cyber security incidents. It is a focal point for incident reporting and offers round-the-clock security services. It handles the cyber incidents that are tracked and reported, while continuously analysing cyber risks. It strengthens the security defences of the Indian Internet domain.

Background

India is fast becoming one of the world’s largest connected nations, with over 80 crore Indians (Digital Nagriks) presently connected and using the Internet and cyberspace, and this number is expected to touch 120 crore in the coming few years. The Digital Nagriks of the country are using the Internet for business, education, finance and various applications and services, including Digital Government services. The Internet provides growth and innovation, and at the same time it has seen a rise in cybercrimes, user harm and other challenges to online safety. The policies of the Government are aimed at ensuring an Open, Safe & Trusted and Accountable Internet for its users. The Government is fully cognizant of the growing cyber security threats and attacks.

It is the Government of India’s objective to ensure that Digital Nagriks experience a Safe & Trusted Internet. Along with ubiquitous applications of Information & Communication Technologies (ICT) in almost all facets of service delivery and operations, continuously evolving cyber threats have become a concern for the Government. Cyber-attacks can come in the form of malware, ransomware, phishing, data breaches and so on, which adversely affect an organisation’s information and systems. Cyber threats leading to cyber-attacks or incidents can compromise the confidentiality, integrity, and availability of an organisation’s information and systems and can have a far-reaching impact on essential services and national interests. To protect against cyber threats, it is important for government entities to implement strong cybersecurity measures and follow best practices. As the ICT infrastructure of Government entities is one of the preferred targets of malicious actors, the responsibility for implementing good cyber security practices to protect computers, servers, applications, electronic systems, networks, and data from digital attacks also rests with the ICT assets’ owner, i.e. the Government entity.

What are the new Guidelines about?

The guidelines apply to all Ministries, Departments, Secretariats, and Offices listed in the First Schedule to the Government of India (Allocation of Business) Rules, 1961, along with their attached and subordinate offices. They also cover all government institutions, public sector enterprises, and other government agencies under their administrative control.

“The government has launched a number of steps to guarantee an accessible, trustworthy, and accountable digital environment. With a focus on capabilities, systems, human resources, and awareness, we are extending and speeding our work in the area of cyber security,” according to Rajeev Chandrasekhar, Minister of State for Electronics, Information Technology, Skill Development, and Entrepreneurship.

The Recommendations

- Various security domains are covered in the standards, including network security, identity and access management, application security, data security, third-party outsourcing, hardening procedures, security monitoring, incident management, and security audits.

- For instance, on desktop, laptop, and printer security in the workplace, the guidelines advise using only a Standard User (non-administrator) account on computers and laptops for regular work. Users may be granted administrative access only with the CISO’s consent.

- They also advise using long passwords of at least eight characters that combine capital letters, lowercase letters, numerals, and special characters, and never saving usernames, passwords, or payment-related data in a web browser.

- They include guidelines created by the National Informatics Centre for Chief Information Security Officers (CISOs) and staff members of Central government Ministries/Departments to improve cyber security and cyber hygiene in addition to adhering to industry best practises.
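The password rule in the list above can be expressed as a small check. The following is only a minimal sketch, not part of the CERT-In guidelines: the function name `password_problems` is hypothetical, and "special character" is assumed here to mean ASCII punctuation.

```python
import string

MIN_LENGTH = 8  # minimum length stated in the summary of the guidelines above


def password_problems(password: str) -> list[str]:
    """Return the rule violations found; an empty list means the password passes."""
    problems = []
    if len(password) < MIN_LENGTH:
        problems.append(f"shorter than {MIN_LENGTH} characters")
    if not any(c.isupper() for c in password):
        problems.append("no capital letter")
    if not any(c.islower() for c in password):
        problems.append("no lowercase letter")
    if not any(c.isdigit() for c in password):
        problems.append("no numeral")
    # Assumption: "special character" is interpreted as ASCII punctuation.
    if not any(c in string.punctuation for c in password):
        problems.append("no special character")
    return problems
```

Returning the list of violations, rather than a single true/false value, lets a sign-up form tell the user exactly which part of the rule is not yet met.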

Conclusion

The government has been proactive in recent times in tackling the menace of cybercrimes and threats in Indian cyberspace, and hence we have seen a series of new bills and policies introduced by the Ministry of Electronics and Information Technology and other government organisations such as CERT-In and TRAI. These policies aim to stay relevant to the times and to current technologies. The threats from emerging technologies like Web 3.0 cannot be ignored, and active netizen participation and synergy between government and corporates will lead to a better and improved cyber ecosystem in India.

Overview:

Millions of Windows users around the world are facing the Blue Screen of Death (BSOD) problem, which causes systems to shut down or restart. This has been attributed to a recently released CrowdStrike update and has impacted many organizations, financial institutions, and government agencies across the globe. Indian airlines have also reported disruptions on X (formerly Twitter), informing passengers about the issue.

Understanding Blue Screen of Death:

Blue Screen errors, also known as black screen errors or STOP code errors, can occur due to critical issues forcing Windows to shut down or restart. You may encounter messages like "Windows has been shut down to prevent damage to your computer." These errors can be caused by hardware or software problems.

Impact on Industries

Several large U.S. airlines, including American Airlines, Delta Air Lines, and United Airlines, had to issue ground stops because of communication problems. On Friday, several airports also faced a major technical issue with check-in kiosks for IndiGo, Akasa Air, SpiceJet, and Air India Express.

The Widespread Issue

The issue is widespread and is causing disruption across the board, as Windows PCs are deployed at workplaces and public-facing organizations such as airlines, banks, and even media companies. Many of these Windows PCs run a cybersecurity solution from CrowdStrike, and an update to that solution appears to be the cause of the outage.

Microsoft's Response

Microsoft acknowledged the issue and said mitigations are underway. Through its verified X handle, Microsoft 365 Status, the company shared a series of updates on the outage and said the issue is under investigation.

In one post, Microsoft Azure said it was aware of an issue affecting Virtual Machines (VMs) running Windows Client and Windows Server with the CrowdStrike Falcon agent installed. These VMs may encounter a bug check (BSOD) and become stuck in a restarting state. Its analysis indicates that the issue started at approximately 19:00 UTC on July 18th. It provided the following recommendations:

Restore from Backup: Customers who have backups from before 19:00 UTC on July 18th should recover VM data from those backups. Customers using Azure Backup can find the exact steps for restoring VM data in the Azure portal.

Offline OS Disk Repair: Alternatively, customers can attempt an offline repair of the OS disk by attaching the affected disk to a separate VM used for repair. Encrypted disks may require additional steps to unlock before repair. Once the disk is attached, delete the following file:

Windows/System32/drivers/CrowdStrike/C-00000291*.sys

After deletion, reattach the disk to the original VM.
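The deletion step can be sketched in Python. This is an illustration only, not Microsoft's or CrowdStrike's tooling: the function name `remove_channel_files` is hypothetical, the file pattern is the one quoted in CrowdStrike's statement (`C-00000291*.sys`), and the caller is assumed to pass the CrowdStrike drivers folder on the attached disk. The sketch defaults to a dry run so that nothing is deleted until the matches have been reviewed.

```python
from pathlib import Path

# File name pattern as quoted in CrowdStrike's statement.
CHANNEL_FILE_PATTERN = "C-00000291*.sys"


def remove_channel_files(crowdstrike_dir: Path, dry_run: bool = True) -> list[Path]:
    """List matching channel files in the folder and delete them unless dry_run is set."""
    matches = sorted(crowdstrike_dir.glob(CHANNEL_FILE_PATTERN))
    if not dry_run:
        for path in matches:
            path.unlink()  # remove the matching channel file
    return matches
```

For example, if the repaired disk were mounted under a hypothetical drive letter `F:`, the call would be `remove_channel_files(Path(r"F:\Windows\System32\drivers\CrowdStrike"))`, followed by a second call with `dry_run=False` once the listed files look right.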

Microsoft Azure said it is actively investigating additional mitigation options for affected customers and will provide updates as it gathers more information.

Resolving Blue Screen Errors in Windows

Windows 11 & Windows 10:

Blue Screen errors can stem from both hardware and software issues. If new hardware was added before the error, try removing it and restarting your PC. If restarting is difficult, start your PC in Safe Mode.

To Start in Safe Mode:

From Settings:

Open Settings > Update & Security > Recovery (on Windows 11, open Settings > System > Recovery).

Under "Advanced startup," select Restart now.

After your PC restarts to the Choose an option screen, select Troubleshoot > Advanced options > Startup Settings > Restart.

After your PC restarts, you'll see a list of options. Select 4 or press F4 to start in Safe Mode. If you need to use the internet, select 5 or press F5 for Safe Mode with Networking.

From the Sign-in Screen:

Restart your PC. When you get to the sign-in screen, hold the Shift key down while you select Power > Restart.

After your PC restarts, follow the steps above.

From a Black or Blank Screen:

Press the power button to turn off your device, then turn it back on. Repeat this two more times.

After the third time, your device will start in the Windows Recovery Environment (WinRE).

From the Choose an option screen, follow the steps to enter Safe Mode.

Additional Help:

Windows Update: Ensure your system has the latest patches.

Blue Screen Troubleshooter: In Windows, open Get Help, type Troubleshoot BSOD error, and follow the guided walkthrough.

Online Troubleshooting: Visit Microsoft's support page and follow the recommendations under "Recommended Help."

If none of those steps help to resolve your Blue Screen error, please try the Blue Screen Troubleshooter in the Get Help app:

- In Windows, open Get Help.

- In the Get Help app, type Troubleshoot BSOD error.

- Follow the guided walkthrough in the Get Help app.

[Note: If you're not on a Windows device, you can run the Blue Screen Troubleshooter on your browser by going to Contact Microsoft Support and typing Troubleshoot BSOD error. Then follow the guided walkthrough under "Recommended Help."]

For detailed steps and further assistance, please refer to the Microsoft support portal or contact their support team.

CrowdStrike’s Response:

In its statement, CrowdStrike said that this is not a cyberattack and that its teams are working to fix the issue on Windows. It added that the deployment issue has been identified and fixed. CrowdStrike describes the problematic and reverted versions as follows:

- “Channel file "C-00000291*.sys" with timestamp of 0527 UTC or later is the reverted (good) version.

- Channel file "C-00000291*.sys" with timestamp of 0409 UTC is the problematic version.

Note: It is normal for multiple "C-00000291*.sys" files to be present in the CrowdStrike directory - as long as one of the files in the folder has a timestamp of 0527 UTC or later, that will be the active content.”
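The timestamp rule quoted above can be sketched as a small classifier. This is a hedged illustration rather than CrowdStrike's own tooling: the function names are hypothetical, the quoted statement gives only times of day, so the date of the update is passed in as the parameter `incident_date`, and timestamps not covered by the statement are reported as unknown.

```python
from datetime import date, datetime, time, timezone


def classify_channel_file(modified: datetime, incident_date: date) -> str:
    """Classify a channel file by its timezone-aware modification timestamp."""
    modified = modified.astimezone(timezone.utc)
    problematic_at = datetime.combine(incident_date, time(4, 9), tzinfo=timezone.utc)
    reverted_from = datetime.combine(incident_date, time(5, 27), tzinfo=timezone.utc)
    if modified >= reverted_from:
        return "reverted (good)"  # 0527 UTC or later
    if modified.replace(second=0, microsecond=0) == problematic_at:
        return "problematic"  # stamped 0409 UTC
    return "unknown"  # not covered by the quoted statement


def active_content_is_good(timestamps: list[datetime], incident_date: date) -> bool:
    """Per the note, one file stamped 0527 UTC or later is enough."""
    return any(
        classify_channel_file(ts, incident_date) == "reverted (good)"
        for ts in timestamps
    )
```

The second function mirrors the note in the statement: several matching files may coexist in the folder, and the folder is considered fine as long as at least one of them carries the later timestamp.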

CrowdStrike says it will continue to provide the latest updates and advises customers and organizations to communicate with its representatives through official channels for accurate information. Customers are encouraged to refer to the support portal for further help.

Stay safe and ensure regular backups to mitigate the impact of such issues.

References:

https://status.cloud.microsoft/

https://www.crowdstrike.com/blog/statement-on-falcon-content-update-for-windows-hosts/

Executive Summary

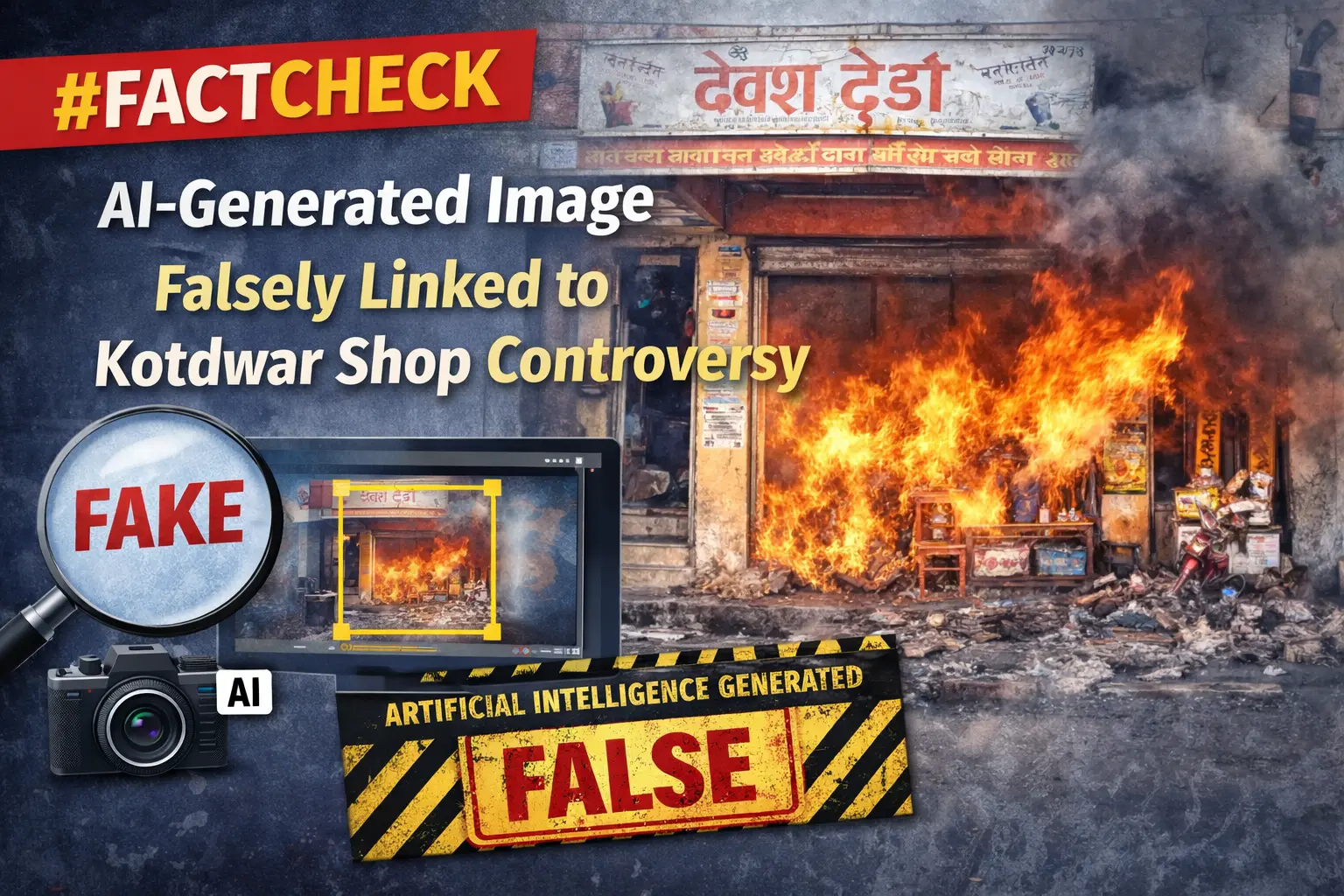

A dispute recently emerged in Kotdwar, Uttarakhand, over the name of a shop. During the controversy, a local youth, Deepak Kumar, came forward in support of the shopkeeper. The incident subsequently became a subject of discussion on social media, with users expressing varied reactions. Meanwhile, a photo began circulating on social media showing a burqa-clad woman presenting a bouquet to Deepak Kumar. The image is being shared with the claim that All India Majlis-e-Ittehadul Muslimeen (AIMIM)’s women’s president, Rubina, welcomed “Mohammad Deepak Kumar” by presenting him with a bouquet. However, research conducted by CyberPeace found the viral claim to be false. The research revealed that users are sharing an AI-generated image with a misleading claim.

Claim:

On social media platform Instagram, a user shared the viral image claiming that AIMIM’s women’s president Rubina welcomed “Mohammad Deepak Kumar” by presenting him with a bouquet. The link to the post, its archived version, and a screenshot are provided below.

Fact Check:

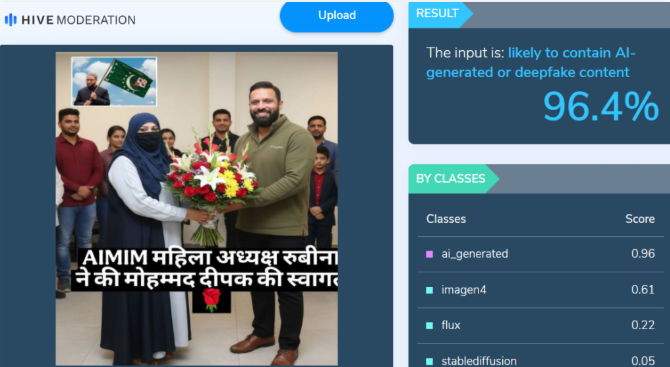

Upon closely examining the viral image, certain inconsistencies raised suspicion that it could be AI-generated. To verify its authenticity, the image was analysed using the AI detection tool Hive Moderation, which indicated a 96 percent probability that the image was AI-generated.

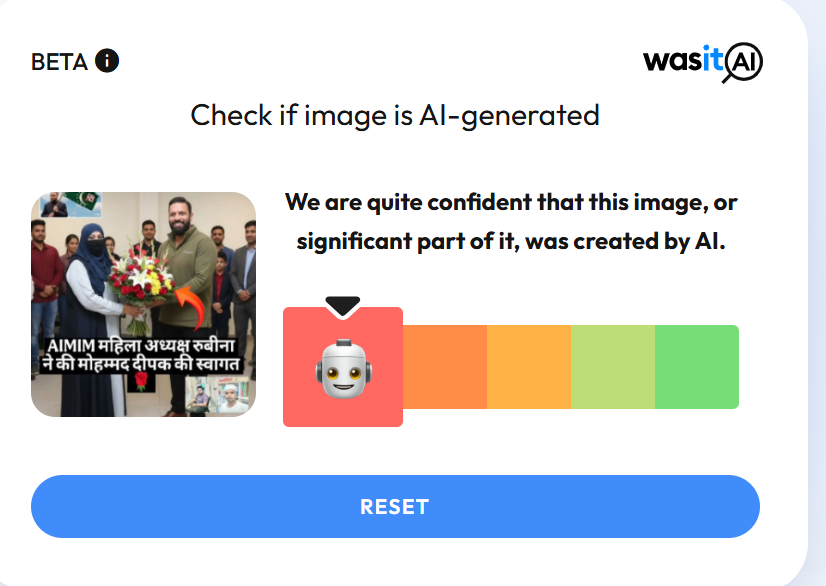

In the next stage of the research, the image was also analysed using another AI detection tool, Wasit AI, which likewise identified the image as AI-generated.

Conclusion

The research establishes that users are circulating an AI-generated image with a misleading claim linking it to the Kotdwar controversy.