#FactCheck - Viral Humanoid Robot Video Actually Filmed at the Museum of the Future

Executive Summary

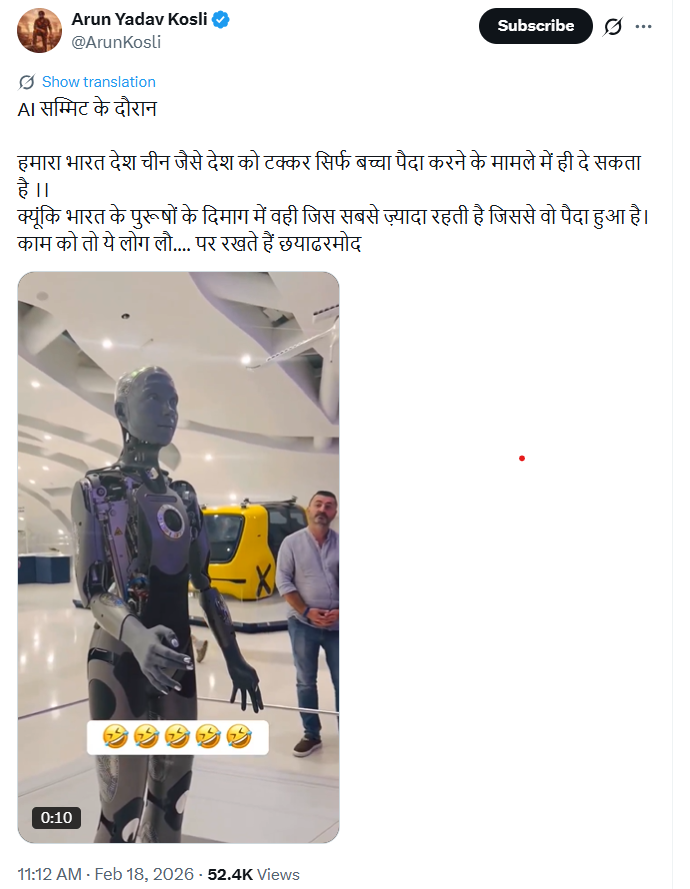

A video circulating widely on social media shows a man interacting with a humanoid robot and using abusive language, after which the robot asks him to maintain politeness. Several users shared the clip claiming that the incident took place during a recent AI summit in New Delhi. The video triggered strong reactions online, with some users demanding legal action against the individual. However, research by CyberPeace found the claim to be misleading.

Claim

Social media users claimed that the viral video showing a man abusing a robot was recorded during an AI summit in New Delhi, India.

Fact Check

To verify the claim, we conducted a reverse image search of the individual seen in the video. The search led us to an Instagram post uploaded by a Pakistani account identifying the individual as Kashif Zameer.

Further keyword searches helped us locate his Instagram profile, where the same video had been uploaded on February 17, 2026. The post included hashtags such as “Dubai,” indicating the actual location of the incident. The profile also lists Lahore, Pakistan, as the user’s location and describes him as a businessman and social media personality.

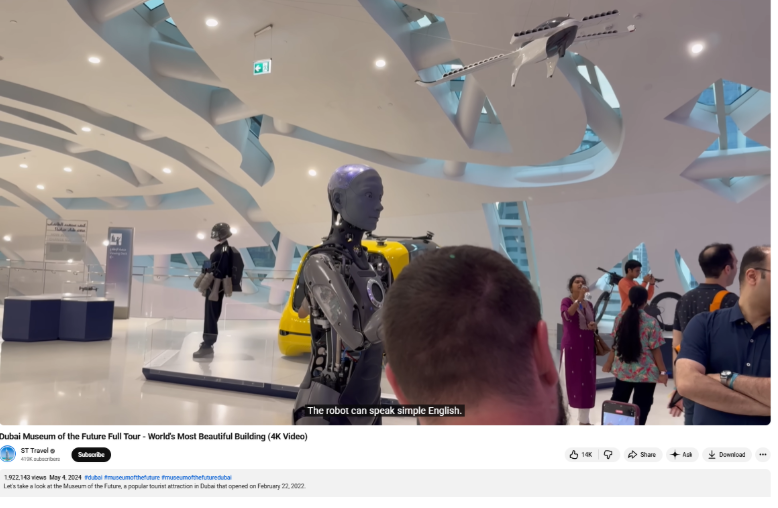

To confirm the location shown in the video, we conducted additional searches using keywords such as “Dubai” and “humanoid robot.” The research revealed that the robot featured in the clip is “Ameca,” located at the Museum of the Future in Dubai.

Conclusion

The viral claim is false. The video is not related to any AI summit held in New Delhi. The incident occurred in Dubai, and the person seen in the video is not an Indian citizen.

Related Blogs

Introduction

The Information Technology (IT) Ministry has tested a new parental control app called ‘SafeNet’ that is intended to be pre-installed on all mobile phones, laptops and personal computers (PCs). The government's approach is collaborative, involving cooperation between Internet service providers (ISPs), the Department of School Education, and technology manufacturers to address online safety concerns. Campaigns and the proposed SafeNet application aim to educate parents about the resources available for online protection and for safeguarding their children.

The Need for SafeNet App

SafeNet Trusted Access is an access management and authentication service that ensures no user is a target by allowing you to expand authentication to all users and apps with diverse authentication capabilities. SafeNet is, therefore, an arsenal of tools, each meticulously crafted to empower guardians in the art of digital parenting. With the finesse of a master weaver, it intertwines content filtering with the vigilant monitoring of live locations, casting a protective net over the vulnerable online experiences of the children. The ability to oversee calls and messages adds another layer of security, akin to a watchful sentinel standing guard over the gates of communication. Some pointers regarding the parental control app that can be taken into consideration are as follows.

1. Easy to use and set up: The app should be useful, intuitive, and easy to use. The interface plays a significant role in achieving this goal. The setup process should be simple enough for parents to access the app without any technical issues. Parents should be able to modify settings and monitor their children's activity with ease.

2. Privacy and data protection: Considering the sensitive nature of children's data, strong privacy and data protection measures are paramount. From the app’s point of view, strict privacy standards include encryption protocols, secure data storage practices, and transparent data handling policies with the right of erasure to protect and safeguard the children's personal information from unauthorized access.

3. Features for Time Management: Effective parental control applications frequently include capabilities for regulating screen time and establishing usage limits. An evaluation of the app should check whether it enables parents to set time limits for certain applications or devices, thereby promoting good digital habits and preventing excessive screen time.

4. Comprehensive Features of SafeNet: The app's commitment to addressing the multifaceted aspects of online safety is reflected in its robust features. It allows parents to set content filters with surgical precision, manage the time their children spend in the digital world, and block content that is deemed age-inappropriate. This reflects a deep understanding of the digital ecosystem's complexities and the varied threats that lurk within its shadows.

5. Adaptable to the needs of the family: In a stroke of ingenuity, SafeNet offers both parent and child versions of the app for shared devices. This adaptability to diverse family dynamics is not just a nod to inclusivity but a strategic move that enhances its usability and effectiveness in real-world scenarios. It acknowledges the unique tapestry of family structures and the need for tools that are as flexible and dynamic as the families they serve.

6. Strong Support From Government: The initiative enjoys a chorus of support from both government and industry stakeholders, a symphony of collaboration that underscores the collective commitment to the cause. Recommendations for the pre-installation of SafeNet on devices by an industry consortium resonate with the directives from the Prime Minister's Office (PMO), creating a harmonious blend of policy and practice. The involvement of major telecommunications players and Internet service providers underscores the industry's recognition of the importance of such initiatives, emphasising a collaborative approach towards deploying digital safeguarding measures at scale.

Recommendations

The efforts by the government to implement parental controls are commendable as they align with societal goals of child welfare and protection. This includes providing parents with tools to manage and monitor their children's Internet usage to address concerns about inappropriate content and online risks. The following suggestions are made to further support the government's initiative:

1. The administration can consider creating a verification mechanism similar to how identities are verified when mobile SIMs are issued. While this certainly makes for a longer process, it will help address concerns about the app being misused for stalking and surveillance if it is made available to everyone as a default on all digital devices.

2. Parental controls are available on several platforms and are designed to shield, not fetter. Finding the right balance between protection and allowing for creative exploration is thus crucial to ensuring children develop healthy digital habits while fostering their curiosity and learning potential. It might be helpful to the administration to establish updated policies that prioritise the privacy-protection rights of children so that there is a clear mandate on how and to what extent the app is to be used.

3. Policy reforms can be further supported through workshops, informational campaigns, and resources that educate parents and children about the proper use of the app, the concept of informed consent, and the importance of developing healthy, transparent communication between parents and children.

Conclusion

Safety is a significant step towards child protection and development. Children have to rely on adults for protection and cannot always identify or sidestep risk. In this context, the United Nations Convention on the Rights of the Child emphasises protection efforts for children and notes that children have the "right to protection". Therefore, the parental safety app is expected to bring significant focus to the general well-being and health of children, besides preventing drug misuse. On the whole, while technological solutions can be helpful, one also needs to focus on educating people on digital safety, responsible Internet use, and parental supervision.

References

- https://www.hindustantimes.com/india-news/itministry-tests-parental-control-app-progress-to-be-reviewed-today-101710702452265.html

- https://www.htsyndication.com/ht-mumbai/article/it-ministry-tests-parental-control-app%2C-progress-to-be-reviewed-today/80062127

- https://www.varindia.com/news/it-ministry-to-evaluate-parental-control-software

- https://www.medianama.com/2024/03/223-indian-government-to-incorporate-parental-controls-in-data-usage/

Executive Summary

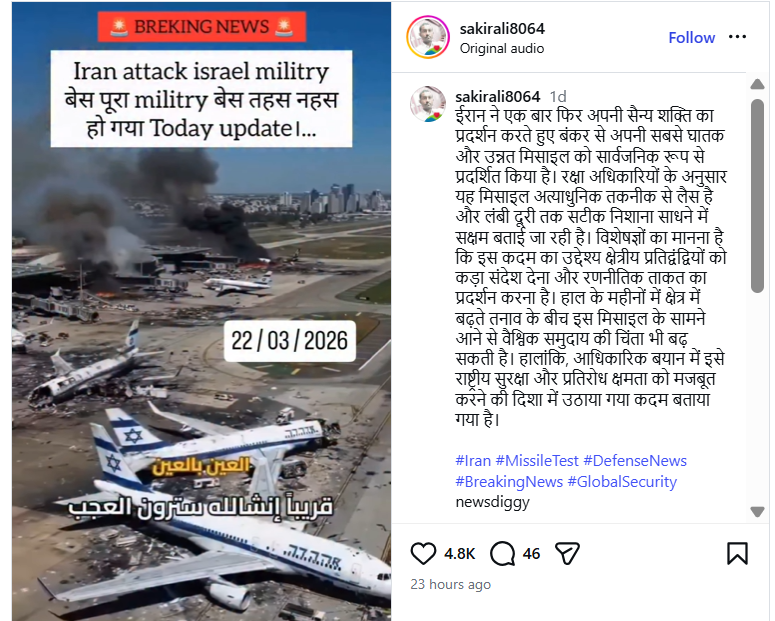

Amid the ongoing conflict involving the US-Israel and Iran in West Asia, a video showing destroyed aircraft at an airport is going viral on social media. The clip is being shared with the claim that it shows an Israeli military base destroyed in an Iranian attack. However, research by CyberPeace found that the viral video is not real but AI-generated.

Claim:

An Instagram user “sakirali8064” shared the video on March 22, 2026, claiming that Iran had demonstrated its military strength by deploying advanced missiles capable of long-range precision strikes. The video also carries a “Breaking News” overlay stating: “Iran attack Israel military base… the entire base destroyed.”

Post link and archive link:

Fact Check:

To verify the claim, we extracted keyframes from the viral clip and conducted a reverse image search using Google Lens. We found a longer version of the same video posted on March 5, 2026, by a Facebook user named “With INC,” where it was also falsely linked to an Iranian attack on Israel’s Ben Gurion Airport.

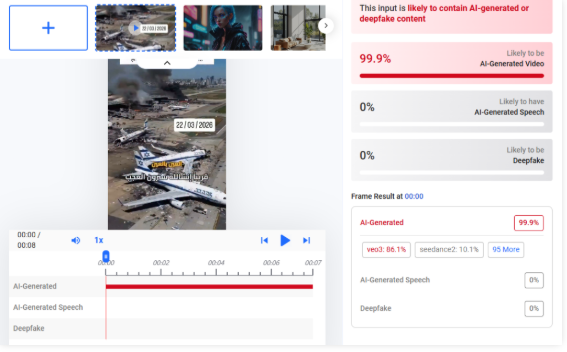

Upon closely examining the video, we observed inconsistencies such as fire changing positions unnaturally, which raised suspicion of AI manipulation. We then analyzed the video using Hive Moderation, which indicated a probability of over 99% that the content is AI-generated.

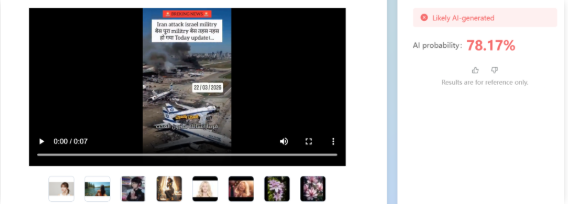

Additionally, analysis using Tencent’s “Zhuque AI” detection tool suggested more than 78% likelihood of the video being AI-generated.

Conclusion:

The viral video claiming that an Iranian attack destroyed an Israeli military base is AI-generated and misleading. While Iran has claimed to have targeted Israel’s Ben Gurion International Airport using drones, the viral footage does not depict a real event.


Introduction

The link between social media and misinformation is undeniable. Misinformation, particularly the kind that evokes emotion, spreads like wildfire on social media and has serious consequences, like undermining democratic processes, discrediting science, and promulgating hateful discourses which may incite physical violence. If left unchecked, misinformation propagated through social media has the potential to incite social disorder, as seen in countless ethnic clashes worldwide. This is why social media platforms have been under growing pressure to combat misinformation and have been developing models such as fact-checking services and community notes to check its spread. This article explores the pros and cons of the models and evaluates their broader implications for online information integrity.

How the Models Work

- Third-Party Fact-Checking Model (formerly used by Meta): Meta initiated this program in 2016 after claims of extraterritorial election tampering through dis/misinformation on its platforms. It entered partnerships with third-party organizations like AFP and specialist sites like Lead Stories and PolitiFact, which are certified by the International Fact-Checking Network (IFCN) for meeting neutrality, independence, and editorial quality standards. These fact-checkers identify misleading claims that go viral on platforms and publish verified articles on their websites, providing correct information. They also submit these articles to Meta through an interface, which may link the fact-checked article to the social media post that contains factually incorrect claims. The post then gets flagged for false or misleading content, and a link to the article appears under the post for users to refer to. The flagged content is demoted in the platform algorithm, though not removed entirely unless it violates Community Standards. However, in January 2025, Meta announced it was scrapping this program and beginning to test X’s Community Notes Model in the USA, before rolling it out in the rest of the world. Meta alleges that the independent fact-checking model is riddled with personal biases, lacks transparency in decision-making, and has evolved into a censoring tool.

- Community Notes Model (used by X and being tested by Meta): This model relies on crowdsourced contributors who can sign up for the program, write contextual notes on posts and rate the notes made by other users on X. The platform uses a bridging algorithm to publicly display those notes that receive cross-ideological consensus from voters across the political spectrum. It does this by boosting notes that receive support regardless of the political leaning of the voters, which it measures through their engagement with previous notes. The benefit of this system is that it is less likely for biases to creep into the flagging mechanism. Further, the process is relatively more transparent than an independent fact-checking mechanism since all Community Notes contributions are publicly available for inspection, and the ranking algorithm can be accessed by anyone, allowing for external evaluation of the system.
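To make the bridging idea concrete, here is a minimal sketch in Python. It is not X's actual ranking algorithm, which is more involved. It is a toy rule built on assumptions: every rater is given a hypothetical group label (standing in for a leaning inferred from past ratings), and a note is shown only if each group independently rates it helpful. The function name `bridged_notes`, the parameters `min_per_group` and `threshold`, and the sample data are all illustrative.

```python
from collections import defaultdict


def bridged_notes(ratings, rater_group, min_per_group=2, threshold=0.6):
    """Return the ids of notes rated helpful by every rater group.

    ratings: iterable of (rater_id, note_id, helpful) tuples.
    rater_group: dict mapping rater_id to a group label. The labels are a
        hypothetical stand-in for a leaning inferred from past ratings.
    """
    # tallies[note][group] = [helpful_count, total_count]
    tallies = defaultdict(lambda: defaultdict(lambda: [0, 0]))
    for rater, note, helpful in ratings:
        counts = tallies[note][rater_group[rater]]
        counts[0] += int(helpful)
        counts[1] += 1

    groups = set(rater_group.values())

    def group_agrees(counts):
        helpful_count, total = counts
        # Require at least one rating and the configured minimum per group.
        if total == 0 or total < min_per_group:
            return False
        return helpful_count / total >= threshold

    # A note is shown only when every group clears the bar on its own,
    # so broad support from a single group is not enough.
    return sorted(
        note
        for note, by_group in tallies.items()
        if all(group_agrees(by_group[g]) for g in groups)
    )


# Illustrative data: n1 is supported by both groups, n2 by group A only.
sample_group = {"a1": "A", "a2": "A", "b1": "B", "b2": "B"}
sample_ratings = [
    ("a1", "n1", True), ("a2", "n1", True),
    ("b1", "n1", True), ("b2", "n1", True),
    ("a1", "n2", True), ("a2", "n2", True),
    ("b1", "n2", False), ("b2", "n2", False),
]
result = bridged_notes(sample_ratings, sample_group)
print(result)
```

In this toy data, note `n2` has as many helpful ratings from group A as `n1` does, yet it is withheld because group B disagrees. The same property explains why notes on divisive topics can struggle to reach public display.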

CyberPeace Insights

Meta’s uptake of a crowdsourced model signals social media’s shift toward decentralized content moderation, giving users more influence in what gets flagged and why. However, the model’s reliance on agreement across diverse viewpoints can make it slow. A study (by Wirtschafter & Majumder, 2023) shows that only about 12.5 per cent of all submitted notes are seen by the public, which leaves most misleading content unchecked. Further, many notes on divisive issues like politics and elections may not see the light of day since reaching a consensus on such topics is hard. This means that many misleading posts may not be publicly flagged at all, thereby hindering risk mitigation efforts. This casts doubt on the model’s ability to check the virality of posts which can have adverse societal impacts, especially on vulnerable communities. On the other hand, the fact-checking model suffers from a lack of transparency, which has damaged user trust and led to allegations of bias.

Since both models have their advantages and disadvantages, the future of misinformation control will require a hybrid approach. Data accuracy and polarization through social media are issues bigger than an exclusive tool or model can effectively handle. Thus, platforms can combine expert validation with crowdsourced input to allow for accuracy, transparency, and scalability.

Conclusion

Meta’s shift to a crowdsourced model of fact-checking is likely to have bigger implications for public discourse since social media platforms hold immense power in terms of how their policies affect politics, the economy, and societal relations at large. This change comes against the background of sweeping cost-cutting in the tech industry, political changes in the USA and abroad, and increasing attempts to make Big Tech platforms more accountable in jurisdictions like the EU and Australia, which are known for their welfare-oriented policies. These co-occurring contestations are likely to inform the direction that misinformation-countering tactics will take. Until then, the crowdsourcing model is still in development, and its efficacy is yet to be seen, especially regarding polarizing topics.

References

- https://www.cyberpeace.org/resources/blogs/new-youtube-notes-feature-to-help-users-add-context-to-videos

- https://en-gb.facebook.com/business/help/315131736305613?id=673052479947730

- http://techxplore.com/news/2025-01-meta-fact.html

- https://about.fb.com/news/2025/01/meta-more-speech-fewer-mistakes/

- https://communitynotes.x.com/guide/en/about/introduction

- https://blogs.lse.ac.uk/impactofsocialsciences/2025/01/14/do-community-notes-work/?utm_source=chatgpt.com

- https://www.techpolicy.press/community-notes-and-its-narrow-understanding-of-disinformation/

- https://www.rstreet.org/commentary/metas-shift-to-community-notes-model-proves-that-we-can-fix-big-problems-without-big-government/

- https://tsjournal.org/index.php/jots/article/view/139/57