#FactCheck : AI Video Falsely Shows Iran Destroying Israeli Military Base

Executive Summary

Amid the ongoing conflict involving the US, Israel, and Iran in West Asia, a video showing destroyed aircraft at an airport is going viral on social media. The clip is being shared with the claim that it shows an Israeli military base destroyed in an Iranian attack. However, research by CyberPeace found that the viral video is not real but AI-generated.

Claim:

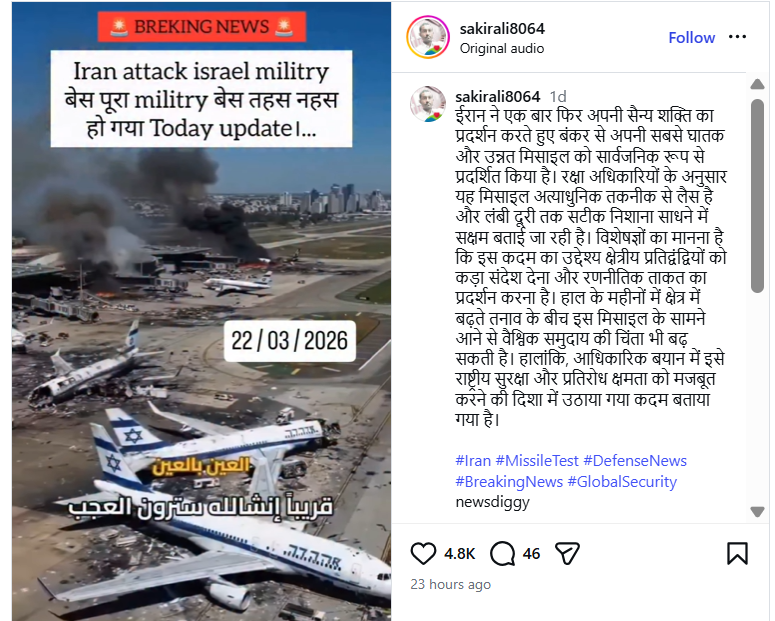

An Instagram user “sakirali8064” shared the video on March 22, 2026, claiming that Iran had demonstrated its military strength by deploying advanced missiles capable of long-range precision strikes. The video also carries a “Breaking News” overlay stating: “Iran attack Israel military base… the entire base destroyed.”

Post link and archive link:

Fact Check:

To verify the claim, we extracted keyframes from the viral clip and conducted a reverse image search using Google Lens. We found a longer version of the same video posted on March 5, 2026, by a Facebook user named “With INC,” where it was also falsely linked to an Iranian attack on Israel’s Ben Gurion Airport.

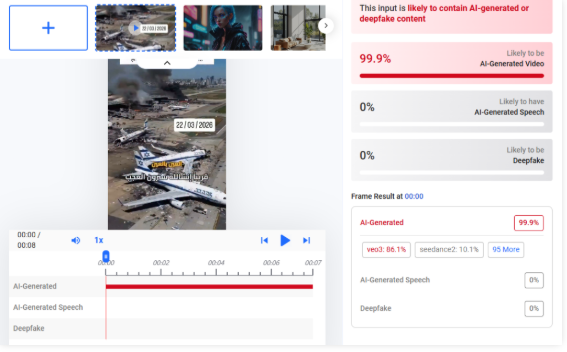

Upon closely examining the video, we observed inconsistencies such as fire changing positions unnaturally, which raised suspicion of AI manipulation. We then analyzed the video using Hive Moderation, which indicated a probability of over 99% that the content is AI-generated.

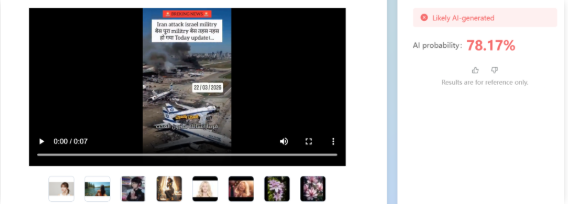

Additionally, analysis using Tencent’s “Zhuque AI” detection tool suggested a likelihood of more than 78% that the video is AI-generated.

Conclusion:

The viral video claiming that an Iranian attack destroyed an Israeli military base is AI-generated and misleading. While Iran has claimed to have targeted Israel’s Ben Gurion International Airport using drones, the viral footage does not depict a real event.

Related Blogs

Introduction

Courts in India have repeatedly emphasised the importance of “enhanced customer protection” and “limited liability” for customers. The rationale is to protect customers against exploitation by institutions that have every means to manipulate them. India, with financial literacy gaps that have yet to be addressed, needs to curb any manipulation on the part of banking institutions. Various studies have highlighted this gap in recent times; for example, according to the National Centre for Financial Education, only 27% of Indian people are financially literate, well below the 42% global average. The gap is especially worrying among millennials: only 19% exhibit sufficient financial awareness, yet they express high trust in their own financial skills. The increasing number of financial frauds intensifies the issue.

Zero Liability in Cyber Frauds: Regulatory Safeguards for Digital Banking Customers

In light of the growing emphasis on financial inclusion and consumer protection, and in response to the recent rise in complaints regarding unauthorised debits from customer accounts and cards, the framework for assessing customer liability in such cases has been re-evaluated. The RBI’s circular dated July 6, 2017, titled “Customer Protection-Limited Liability of Customers in Unauthorised Electronic Banking Transactions”, serves as the foundation for regulatory protections for Indian customers of digital banking. The circular acknowledges the exponential increase in electronic transactions and related scams, and outlines a clear and organised framework for determining customer liability. It apportions liability for unauthorised transactions depending on whether they result from system-level breaches, customer negligence, or contributory negligence by the bank. Most importantly, it establishes the zero liability principle, which protects customers from monetary losses when the bank or another system component is at fault and the customer promptly reports the breach.

This directive’s sophisticated approach to consumer protection is what makes it unique. It requires banks to set up strong fraud prevention systems, proactive alerting systems, and round-the-clock reporting systems. Furthermore, it significantly alters the power dynamics between financial institutions and customers by placing the onus of demonstrating customer negligence completely on the bank. The circular emphasises prompt reversal of funds to impacted customers and requires banks to implement Board-approved policies on customer liability and redress. As a result, it is a consumer rights charter rather than just a compliance document, promoting confidence and financial accountability in India’s digital banking sector.

Judicial Endorsement in Reinforcing the Zero Liability Principle

In Suresh Chandra Negi & Anr. v. Bank of Baroda & Ors. (Writ (C) No. 24192 of 2022), the Allahabad High Court held that the burden of proving customer liability rests firmly on the banking institution, thereby reaffirming the zero liability principle in cases of unauthorised electronic banking transactions. The Division Bench emphasised the regulatory requirement that banks provide adequate proof before assigning blame to customers, citing Clause 12 of the RBI’s circular dated July 6, 2017, “Customer Protection-Limited Liability of Customers in Unauthorised Electronic Banking Transactions”. In a similar scenario, the Bombay HC held that a customer is entitled to zero liability when an unauthorised transaction occurs due to a third-party breach, where the deficiency lies neither with the bank nor the customer, provided the fraud is promptly reported.

The zero liability principle, as envisaged under Clause 8 of the RBI circular, has emerged as a cornerstone of consumer protection in India’s digital banking ecosystem.

Another landmark judgment that has brought this principle to the forefront in addressing banking frauds is Hare Ram Singh vs RBI & Ors. (W.P. (C) 13497/2022), decided by the Delhi HC. It marks an important legal turning point in the development of the zero liability principle under the RBI’s 2017 framework. The court held the State Bank of India (SBI) liable for a cyber fraud incident even though the transactions were authenticated by OTP, and reiterated the need to evaluate customer diligence in light of new fraud tactics like phishing and vishing. The ruling made it clear that when complex social engineering or technical manipulation is used, banks remain accountable and cannot rely on OTP validation alone. The court’s emphasis on the bank bearing the burden of proof, in accordance with RBI standards, strengthens the legal protection provided to victims of unauthorised electronic banking transactions.

Importantly, this ruling places the full burden of securing digital banking systems on financial organisations and supports the judiciary’s increasing acknowledgement of the digital asymmetry between banks and consumers. It emphasises that prompt reporting by the consumer, the consumer’s non-disclosure of important credentials, and the bank’s own operational errors must all be taken into consideration when determining culpability. As a result, this decision establishes a strong precedent that will increase consumer confidence, promote systemic advancements in digital risk management, and better integrate the zero liability standard into Indian digital banking law. In a time when cyber vulnerabilities are growing, it acts as a beacon for financial accountability.

Conclusion

The Zero Liability Principle serves as a vital safety net for customers navigating an increasingly intricate and precarious financial environment in a time when digital transactions are the foundation of contemporary banking. In addition to codifying strong safeguards against unauthorised electronic transactions, the RBI’s 2017 framework rebalanced the relationship between banks and customers by placing responsibility squarely on financial institutions. Through significant rulings, the courts have upheld this protective culture and emphasised that banks, not the victims of cybercrime, bear the burden of proof.

As India transitions to a more digital economy, it will be crucial to apply these principles consistently, review them frequently, and raise public awareness. To ensure that consumers are not only protected but also empowered, the Zero Liability Principle must become more than just a policy on paper.

References

- https://www.business-standard.com/content/specials/making-money-vs-managing-money-india-s-critical-financial-literacy-gap-125021900786_1.html

- https://www.livelaw.in/high-court/allahabad-high-court/allahabad-high-court-ruling-bank-liability-unauthorized-electronic-transaction-and-customer-fault-297962

- https://www.mondaq.com/india/white-collar-crime-anti-corruption-fraud/1635616/cyber-law-series-2-issue-10-the-zero-liability-principle-in-cyber-fraud-hare-ram-singh-v-reserve-bank-of-india-ors-case

Executive Summary

A dispute over water balloons on the day of Holi in the Uttam Nagar area of Delhi reportedly turned violent, resulting in the brutal murder of a young man named Tarun Khatik. Following the incident, a video is being widely shared on social media and linked to the murder case. In the viral video, Yogi Adityanath, Chief Minister of Uttar Pradesh, can be seen walking alongside Ravi Kishan, Member of Parliament from Gorakhpur. People standing along the route are seen showering flowers on them. Several users claim that the video shows the chief minister visiting the house of Tarun Khatik to meet his family.

However, research by CyberPeace found the viral claim to be misleading. Our research revealed that the video has no connection to the Tarun Khatik murder case. In fact, the video is from a Holika Dahan celebration held in Gorakhpur, which is now being shared on social media with a misleading claim.

Claim Post:

An Instagram user shared the viral video on March 10, 2026, writing: “Tarun bhai ko insaaf dilane ke liye aage aaye maananiya mukhyamantri Shri Yogi Adityanath ji.”

Fact Check:

To verify the claim, we extracted several key frames from the viral video and conducted a reverse image search using Google Lens. During the search, we found the same video posted on a Facebook account on March 2, 2026.

According to the caption of the Facebook post, the video shows a grand procession organised by the Shri Shri Holika Dahan Utsav Samiti, Pandeyhata in Gorakhpur. Yogi Adityanath attended the procession and was seen celebrating Holi with people by playing with flowers and coloured powder. During the procession, flower petals were showered on devotees, and the entire area witnessed a festive atmosphere filled with colours, devotion, and enthusiasm. A large number of people participated in the celebration, and the festival was celebrated with traditional drums and music. During further research, we also found images related to the viral video on the official X (formerly Twitter) account of Ravi Kishan. These images were shared on March 2, 2026, and the caption confirmed that they were taken during the Holika Dahan celebration in Gorakhpur.

At the end of the research, we also found the same video uploaded on March 3, 2026 on the Instagram page Local News Gorakhpur. According to the information in the post, Yogi Adityanath and several other leaders participated in the grand procession organised by the Shri Shri Holika Dahan Utsav Samiti, Pandeyhata. People celebrated Holi with flowers and coloured powder during the event.

Conclusion:

Our research found that the viral video has no connection with the Tarun Khatik murder case in Uttam Nagar, Delhi. The video actually shows Yogi Adityanath participating in a Holika Dahan celebration in Gorakhpur. Therefore, the video is being shared on social media with a misleading claim.

Executive Summary:

False information is spreading across social media after users shared a mistranslated video, claiming that it shows Italian Prime Minister Giorgia Meloni congratulating Indian Hindus on the inauguration of the Ram Temple in Ayodhya, Uttar Pradesh. The CyberPeace Research Team’s investigation found that these claims are false. In the video, Meloni is actually thanking those who wished her a happy birthday.

Claims:

An X (formerly known as Twitter) user shared a 13-second video of Italian Prime Minister Giorgia Meloni speaking in Italian, claiming that she was congratulating India on the construction of the Ram Mandir. The caption reads:

“Italian PM Giorgia Meloni Message to Hindus for Ram Mandir #RamMandirPranPratishta. #Translation : Best wishes to the Hindus in India and around the world on the Pran Pratistha ceremony. By restoring your prestige after hundreds of years of struggle, you have set an example for the world. Lots of love.”

Fact Check:

The CyberPeace Research Team first translated the video. We generated a transcript of the video using an AI transcription tool and ran it through Google Translate; the result did not match the viral claim.

The translation reads, “Thank you all for the birthday wishes you sent me privately with posts on social media, a lot of encouragement which I will treasure, you are my strength, I love you.”

This confirms that the video is not a congratulatory message but a thank-you message to all those who sent birthday wishes to the Prime Minister.

We then ran a reverse image search on frames from the video and found the original video on the Prime Minister’s official X handle, uploaded on 15 Jan, 2024, with the caption “Grazie. Siete la mia”, which translates to “Thank you. You are my strength!”

Conclusion:

The 13-second video shared by the user gained wide reach on X, and as a result many users shared it with similar captions. A misunderstanding that starts with one post can spread widely. The claim made in the X user’s caption is misleading and has no connection with the actual post, in which Italian Prime Minister Giorgia Meloni is speaking in Italian. Hence, the post is fake and misleading.

- Claim: Italian Prime Minister Giorgia Meloni congratulated Hindus in the context of Ram Mandir

- Claimed on: X

- Fact Check: Fake