#FactCheck - Viral Video of Anti-RSS Slogans Is From 2022 Telangana, Not Uttar Pradesh

Executive Summary

A video showing a group of people wearing Muslim caps raising provocative slogans against the Rashtriya Swayamsevak Sangh (RSS) is being widely shared on social media. Users sharing the clip claim that the incident took place recently in Uttar Pradesh. However, CyberPeace research found the claim to be false. The probe established that the video is neither recent nor related to Uttar Pradesh. In fact, the footage dates back to 2022 and is from Telangana. The slogans heard in the video were raised during a protest against Goshamahal MLA T. Raja Singh, and the clip is now being circulated with a misleading claim.

Claim

On January 21, 2026, a user on the social media platform X (formerly Twitter) shared the video, claiming it showed people in Uttar Pradesh chanting slogans such as “Kaat daalo saalon ko, RSS walon ko” and “Gustakh-e-Nabi ka sar chahiye.” The post suggested that such slogans were being raised openly in Uttar Pradesh despite strict law enforcement. Links to the post and its archive are provided below.

Fact Check

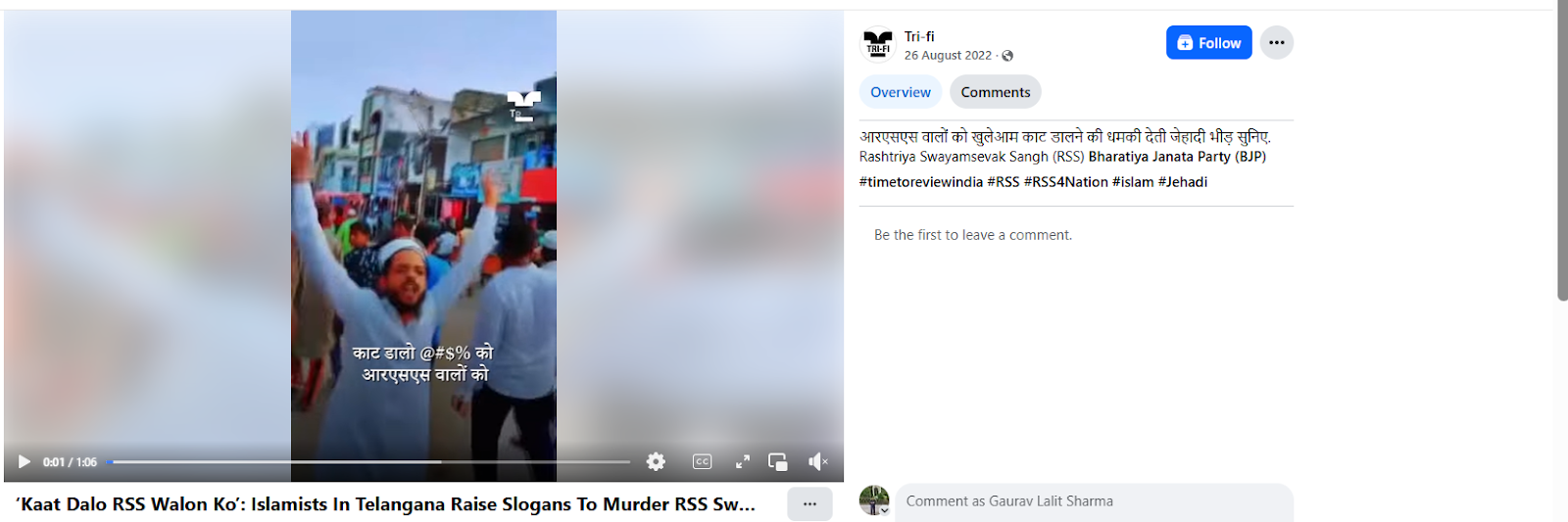

To verify the claim, the CyberPeace research team ran a reverse image search on keyframes from the viral video. The same footage was found on a Facebook account, where it had been uploaded on August 26, 2022, indicating that the video is not recent.

Further verification led the team to a report published by news portal OpIndia on August 25, 2022, which featured identical visuals from the viral clip. According to the report, the video showed a protest march organised against BJP MLA T. Raja Singh following his alleged controversial remarks about Prophet Muhammad. The report identified one of the individuals in the video as Kaleem Uddin, who was allegedly heard raising the slogan “Kaat daalo saalon ko,” to which the crowd responded “RSS walon ko.” The slogan was linked to incitement against RSS members.

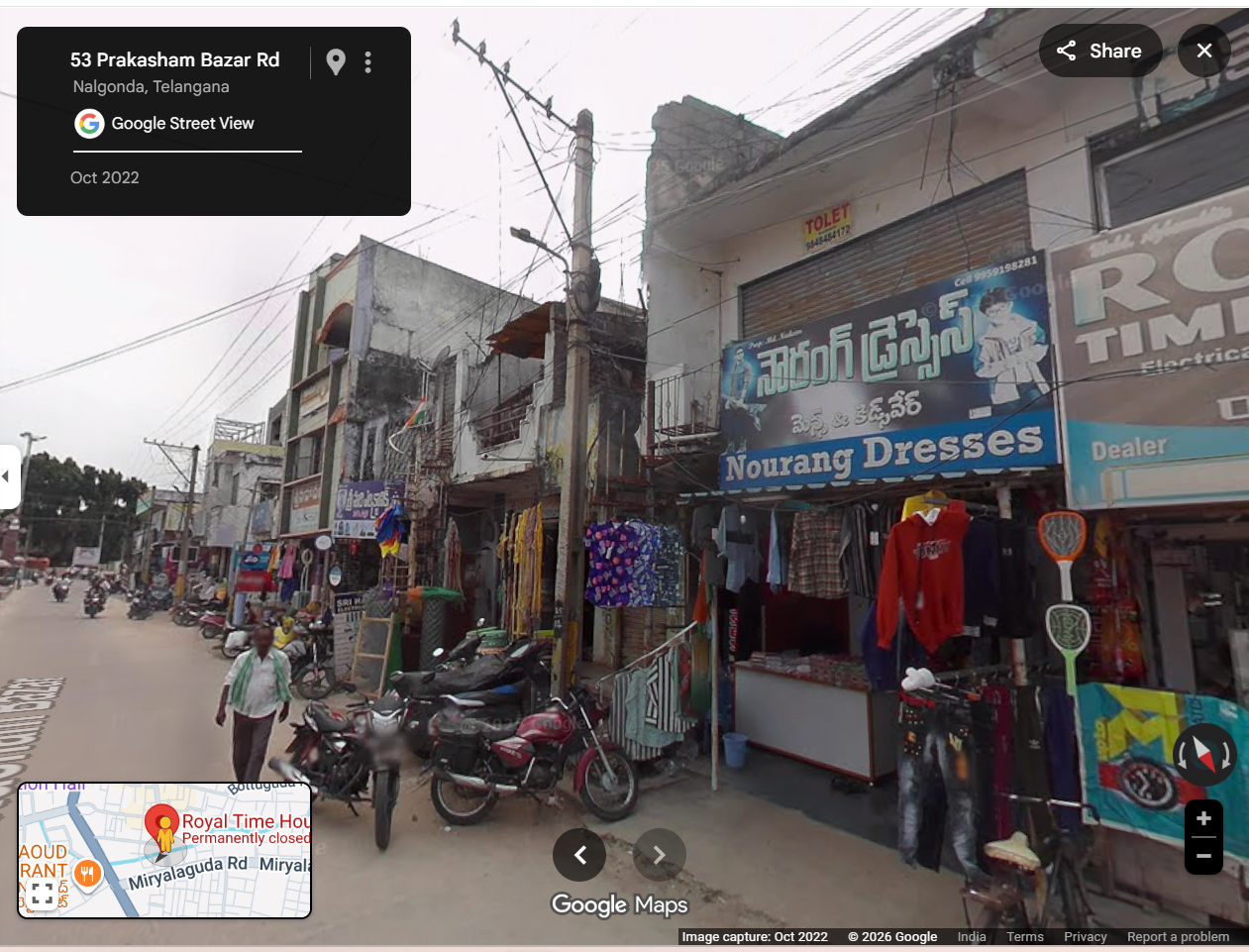

To confirm the location, the video was examined closely. A shop sign reading “Royal Time House” was visible in the footage. Using Google Street View, the same shop was located in Nalgonda, Telangana, conclusively establishing that the video was filmed there and not in Uttar Pradesh.

Conclusion

CyberPeace research confirmed that the viral video is from 2022 and was recorded in Telangana, not Uttar Pradesh. The clip is being circulated with a misleading claim that gives it a communal and political angle.