# Factcheck: Viral Image of Men Riding an Elephant Next to a Tiger in Bihar is Misleading

Executive Summary:

An image widely shared on X (formerly Twitter) with misleading captions claims to show men riding an elephant next to a tiger in Bihar, India. The post has sparked both fascination and skepticism on social media. However, our investigation found that the image is misleading: it is not a recent photograph but comes from an incident in 2011. Always verify claims before sharing.

Claims:

An image purporting to depict men riding an elephant next to a tiger in Bihar has gone viral, implying that this astonishing event truly took place.

Fact Check:

A reverse image search of the viral image shows that it comes from an older video. The footage shows a tiger that was shot by forest guards after it became a man-eater. The tiger had killed six people and caused panic in local villages in the Ramnagar division of Uttarakhand in January 2011.

Before sharing viral posts, take a brief moment to verify the facts. Misinformation spreads quickly and it’s far better to rely on trusted fact-checking sources.

Conclusion:

The claim that men rode an elephant alongside a tiger in Bihar is false. The image presented as recent actually comes from footage of a 2011 incident in the Ramnagar division of Uttarakhand and does not depict a current event. Social media users should exercise caution and verify sensational claims before sharing them.

- Claim: The image shows men riding an elephant next to a tiger in Bihar

- Claimed On: Instagram and X (formerly known as Twitter)

- Fact Check: False and Misleading

Related Blogs

Executive Summary

A video of senior Congress leader Shashi Tharoor is circulating widely on social media, allegedly showing him praising Pakistan’s diplomatic stance over the ICC T20 World Cup issue. Many users are sharing the clip believing it to be genuine. However, research by CyberPeace found the claim to be false. The viral video of Tharoor is a deepfake, and the Congress leader himself has described it as fabricated and fake.

Claim

A Facebook page named “Vok Sports” shared the video on February 11, 2026, claiming that Tharoor praised Pakistan. In the viral clip, he is purportedly heard saying in English that Pakistan’s diplomatic handling of the matter was “brilliant” and that it had outmanoeuvred the Indian cricket board, adding that good diplomacy could make a weak nation appear powerful.

The video was widely shared by social media users as authentic. (Archive links and post details provided.)

Fact Check

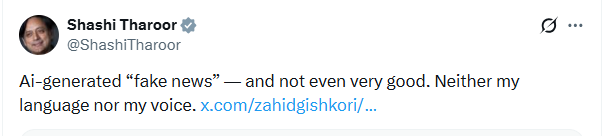

To verify the claim, we first scanned Tharoor’s official X (formerly Twitter) handle. We found a post dated February 12 in which he responded to a Pakistani journalist who had shared the video. Tharoor stated that the clip was AI-generated “fake news,” adding that neither the language nor the voice in the video was his.

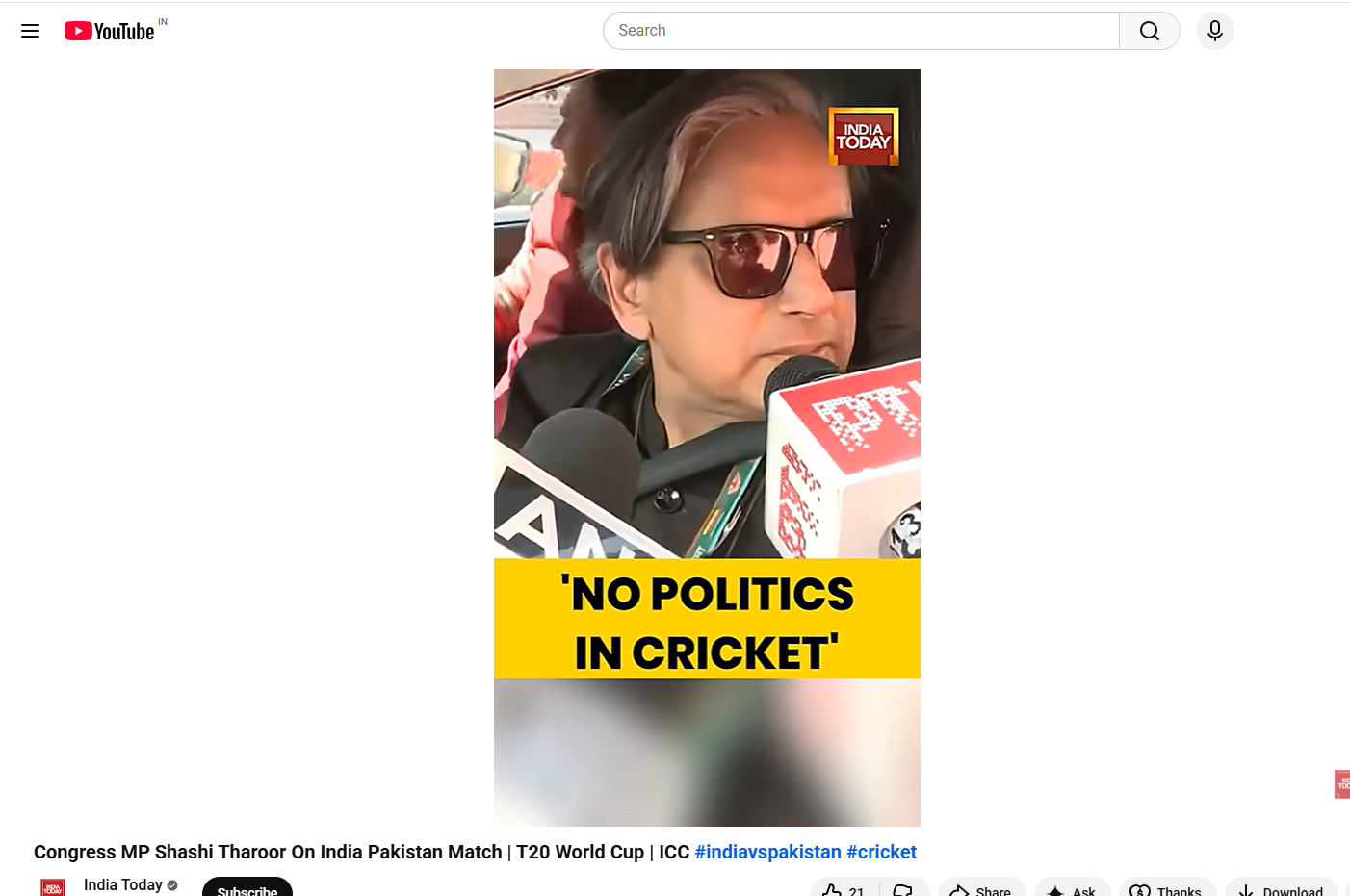

A reverse image search using Google Lens led us to a video uploaded on February 10, 2026, by India Today on its official YouTube channel. The visuals in this original video exactly matched those in the viral clip showing Tharoor speaking to the media. In the original footage, however, Tharoor was speaking in Hindi about the controversy surrounding the T20 World Cup. He said that politics should not be mixed with cricket or sports: politicians should conduct politics separately, diplomats should handle diplomacy, and cricket players should focus on the game. He expressed hope that cricket would move forward with the match, and at no point did he praise Pakistan or the Pakistan Cricket Board. This indicates that the audio in the viral clip had been manipulated and replaced.

- https://www.youtube.com/watch?v=GkA1mLlAT8Q&t=3s

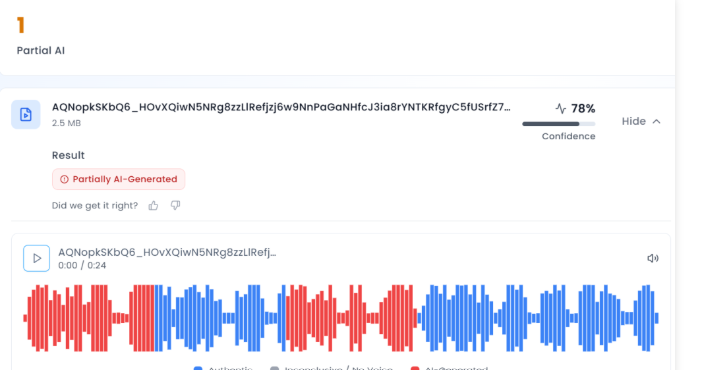

To further verify the authenticity of the video, several AI detection tools were used. Analysis through Aurigin.ai suggested a 78 percent probability that the audio in the viral clip was AI-generated.

Conclusion

CyberPeace confirmed that the viral video is a deepfake. Tharoor did not praise Pakistan’s diplomatic stance during the T20 World Cup controversy, and the circulating clip has been digitally manipulated.

Introduction

" सर्वे भवन्तु सुखिनः, सर्वे सन्तु निरामयाः " May all be happy, may all be free from suffering. This timeless invocation reflects a vision of collective well-being, where progress is meaningful only when shared, and protection extends to every individual in society. This very philosophy lies at the heart of Corporate Social Responsibility, which seeks to ensure that growth is not isolated or unequal, but inclusive, ethical, and mindful of the broader social good.

At its core, Corporate Social Responsibility is not merely a statutory obligation; it is a reflection of a deeper ethical commitment, an acknowledgement that growth must carry with it a sense of duty towards society. In many ways, CSR embodies the idea that progress without responsibility is incomplete, and that corporations, as key actors shaping modern life, must help safeguard the very communities they engage with.

Reframing Digital Literacy Through Cyber Safety in CSR Frameworks

In India, this moral vision has been given a legal structure under the Companies Act, 2013. Section 135 of the Act requires companies meeting specified financial thresholds to allocate a portion of their profits to CSR initiatives, guided by principles of inclusivity, sustainability, and social welfare. The underlying values are clear: CSR is intended not as charity, but as a strategic and accountable contribution to societal development.

Schedule VII of the Act further outlines the broad areas that qualify as CSR, including “Education and Digital Literacy”, gender equality, rural development, and measures for reducing inequalities. Within this framework, promoting “digital literacy” has increasingly been recognised as a legitimate and necessary CSR activity, especially in the context of a rapidly digitising society like India.

However, the current understanding of digital literacy within CSR remains incomplete. It often emphasises access and usage, teaching individuals how to navigate digital platforms, use devices, and engage with online services. What remains insufficiently addressed is the question of safety. In an environment where cyber fraud, data breaches, online harassment, and identity theft are becoming increasingly common, digital literacy without cyber awareness risks becoming a partial and potentially harmful intervention.

Explicitly embedding cyber awareness and capacity building within “digital literacy” is therefore not optional; it is essential. This includes equipping individuals with the ability to recognise online threats, protect personal data, understand digital consent, and respond effectively to cyber risks. It also requires recognising that vulnerable populations, including first-time internet users, women, and marginalised communities, often face disproportionate exposure to cyber harm.

“It is pertinent to note that cybersecurity awareness training is relevant to CSR but is not yet consistently implemented as an explicit CSR activity. It is often included indirectly within digital literacy programs, highlighting the need for a more structured, progressive and integrated approach.”

Given this reality, there is a strong case for explicitly recognising cyber awareness as a distinct and integral component of CSR activities, rather than treating it as an implicit subset of digital literacy. Doing so would not only align CSR with contemporary societal risks but also ensure that corporate interventions move beyond enabling access to actively ensuring safety.

In a digital society, empowerment without protection is incomplete. If CSR is to truly reflect its foundational values, it must evolve to address not just the opportunities of the digital age, but also its risks.

Why Cyber Safety Must Be Central to CSR

Digital ecosystems, once secondary systems, now function as essential infrastructure supporting government operations, banking, education, and social communication. Their vulnerabilities create direct dangers for people in society. The elderly, first-time internet users, and rural communities face higher cyber threat risks because they often lack knowledge of responsible use and access to protective resources. CSR initiatives that give these groups digital access together with training in how to handle risks will do more for their safety than access alone. Organisations should therefore include cyber safety training in their CSR programs, which creates value while fulfilling their ethical obligations. Under the "do no harm" principle, empowerment is complete only when it also protects people from the dangers that access introduces.

Key Components of CyberPeace-Aligned Digital Literacy

To make CSR initiatives more effective and future-ready, organisations should incorporate the following elements into their digital literacy programs:

- Cyber Awareness and Risk Recognition: Teach participants to recognise common threats, including phishing, scams, deepfakes, and misinformation.

- Data Protection and Privacy Literacy: Teach users how to protect their personal information, how consent works, and how to manage their online presence.

- Responsible Digital Behaviour: Teach people to use the internet responsibly, making ethical decisions grounded in respect and accountability and understanding the legal consequences of their actions.

- Incident Response and Reporting Mechanisms: Teach users how to respond to a cyber incident, including the available reporting channels and support resources.

- Inclusion-Focused Design: Tailor content to the particular vulnerabilities of different demographic groups while keeping the program accessible and relevant.

Policy and Institutional Alignment

Integrating cyber safety into corporate social responsibility helps organisations contribute to national objectives, which include:

- Strengthening digital trust and resilience

- Supporting safe digital inclusion initiatives

- Complementing the efforts of institutions working on cybersecurity awareness and capacity building

A structured approach requires organisations to take three specific steps:

- Partnering with cybersecurity organisations and civil society

- Developing standardised cyber awareness modules

- Evaluating impact through behavioural change indicators rather than relying on access metrics

The Way Forward

Digital-era Corporate Social Responsibility needs to move from providing access to digital resources toward ensuring that users can go online safely. Digital literacy, in turn, needs to be understood not only as a technical ability but as a social competency that encompasses safety, responsibility, and resilience.

Companies need to recognise that their digital transformation efforts carry an obligation to manage the associated risks. Building cyber safety into corporate social responsibility frameworks will enable organisations to develop a secure and trustworthy digital environment that includes all users.

Conclusion

Corporate social responsibility needs to fulfil its core mission of creating societal benefit through inclusive practices that address both the opportunities of the digital age and its security threats. Digital literacy requires a new framework that combines digital safety practices with its existing educational content.

Digital safety ensures that people gain the knowledge and skills they need to use digital resources securely. Achieving shared prosperity requires a framework that benefits every person through both social advancement and the protection of their rights.

References

- https://upload.indiacode.nic.in/schedulefile?aid=AC_CEN_22_29_00008_201318_1517807327856&rid=79

- https://www.allresearchjournal.com/archives/2025/vol11issue4/PartF/11-5-60-511.pdf

- https://www.unesco.org/en/dtc-finance-toolkit-factsheets/corporate-social-responsibility-csr

- https://www.investopedia.com/terms/c/corp-social-responsibility.asp

- https://digitalmarketinginstitute.com/blog/corporate-16-brands-doing-corporate-social-responsibility-successfully

- https://www.imd.org/blog/sustainability/csr-strategy/

Executive Summary:

Amid escalating tensions between Afghanistan and Pakistan, a video is being widely shared on social media claiming that Afghanistan has shot down a Pakistani fighter jet. The posts further allege that the incident marks the formal beginning of a war between the two countries. However, research conducted by CyberPeace found the viral claim to be false: the circulating video is not authentic but AI-generated.

Claim

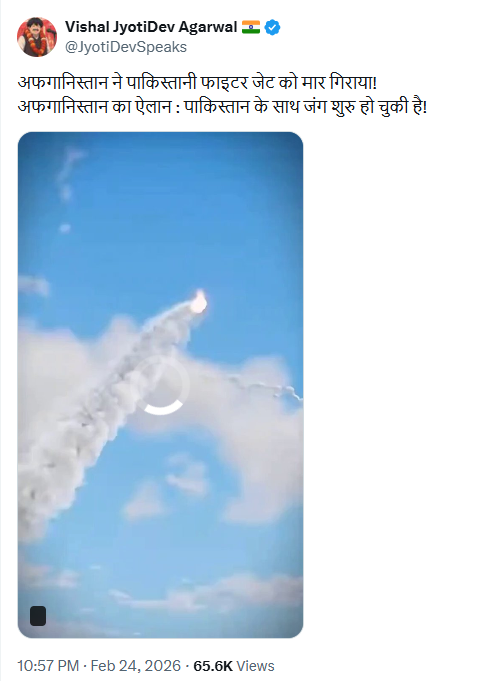

On February 24, 2026, a user on X (formerly Twitter) shared the viral video with the caption: “Afghanistan has shot down a Pakistani fighter jet! Afghanistan announces that war with Pakistan has begun.”

- Original post link: https://x.com/JyotiDevSpeaks/status/2026348257186545914

- Archived link: https://ghostarchive.org/archive/7l00Y

Fact Check:

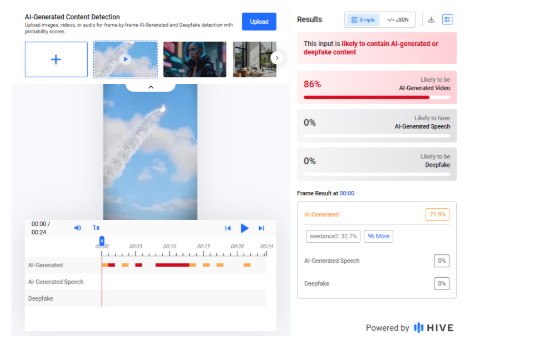

A careful review of the viral video revealed unusual visual patterns and artificial-looking effects, raising suspicions that it may have been created using artificial intelligence. We analyzed the video using the AI detection tool Hive Moderation, which indicated an 86 percent probability that the video was AI-generated.

To further verify the findings, we scanned the footage using another AI detection platform, Sightengine. The results showed a 99 percent likelihood that the video was AI-generated.

To understand the broader context of the ongoing tensions, we conducted a keyword search and found a report published on February 22, 2026, by BBC Hindi. According to the report, Pakistan claimed it had targeted “seven terrorist hideouts and camps” along the Pakistan–Afghanistan border based on intelligence inputs. Meanwhile, a spokesperson for the Taliban government in Afghanistan stated that Pakistani airstrikes in Nangarhar and Paktika provinces resulted in the deaths of dozens of people, including women and children.

- https://www.bbc.com/hindi/articles/clyz8141397o

Conclusion

Our research confirms that the viral video claiming Afghanistan shot down a Pakistani fighter jet and formally declared war on Pakistan is fake. The footage is AI-generated and is being circulated with a false and misleading narrative.