#FactCheck - Suryakumar Yadav–Salman Ali Agha Handshake Row: Viral Image Found AI-Generated

Executive Summary

An image circulating on social media claims to show Suryakumar Yadav, captain of the Indian cricket team, extending his hand to greet Pakistan’s skipper Salman Ali Agha, who allegedly refused the gesture during the India–Pakistan T20 World Cup match held on February 15. Users shared the image as evidence of a real incident from the high-profile clash. However, research by CyberPeace found that the image is AI-generated and was falsely circulated to mislead viewers.

Claim

On February 15, an X account named “@iffiViews,” reportedly operated from Pakistan, shared the image claiming it was taken during the India–Pakistan T20 World Cup match at the R. Premadasa Stadium in Colombo. The viral image appeared to show Yadav attempting to shake hands with Agha, who seemed to decline the gesture. The post quickly gained significant traction online, attracting around one million views at the time of reporting. Here is the link and archive link to the post, along with a screenshot.

- https://x.com/iffiViews/status/2023024665770484206?s=20

- https://archive.ph/xvtBs

Fact Check:

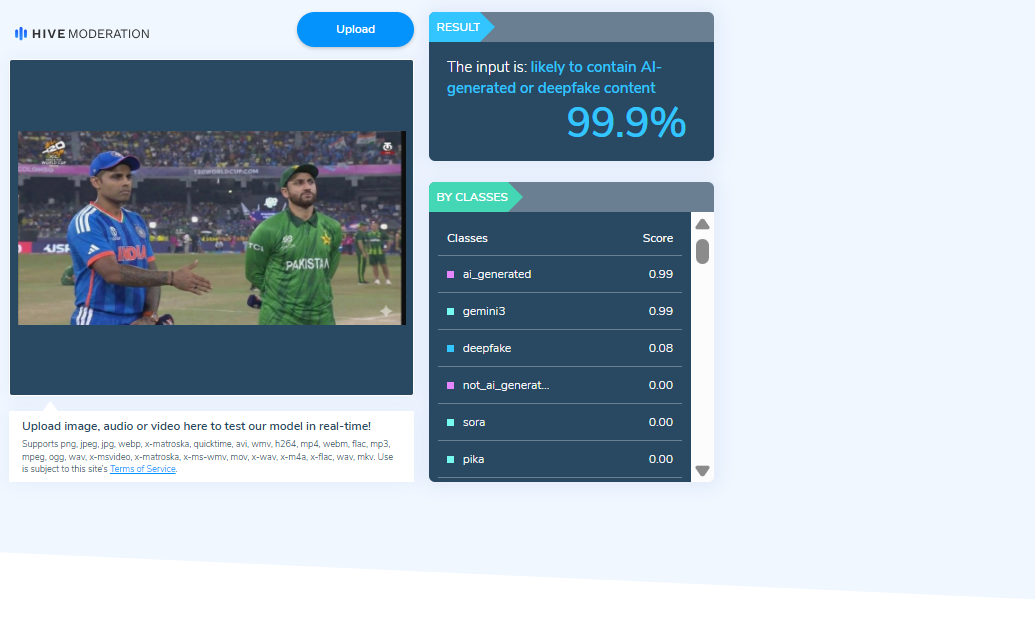

To verify the authenticity of the image, researchers closely examined the visual and identified a watermark associated with an AI image-generation tool. This strongly indicated that the image was digitally created and did not depict an actual event.

The image was further analysed using an AI detection tool, which indicated a 99.9 percent probability that the content was artificially generated or manipulated.

Researchers also conducted keyword searches to check whether the two captains had exchanged a handshake during the match. The search revealed media reports confirming that the traditional handshake between players has been discontinued since the Asia Cup 2025 in both men’s and women’s cricket. A report published by The Times of India on February 15 confirmed that no such customary exchange took place during the match between the two teams in Colombo.

Conclusion

The viral image claiming to show Suryakumar Yadav attempting to shake hands with Salman Ali Agha is not authentic. The visual is AI-generated and has been shared online with misleading claims.

Related Blogs


Introduction

The AI Action Summit is a global forum that brings together world leaders, policymakers, technology experts, and industry representatives to discuss AI governance, ethics, and the role of AI in society. This year's week-long summit in Paris concluded on February 11, 2025, and was co-chaired by Indian Prime Minister Narendra Modi and French President Emmanuel Macron. Alongside the summit, the Indian delegation took part in the 2nd India-France AI Policy Roundtable, an official side event, and the 14th India-France CEOs Forum. These discussions covered diverse sectors, including defense, aerospace, and technology.

Prime Minister Modi’s Address

During the AI Action Summit in Paris, Prime Minister Narendra Modi drew attention to the transformative effect of AI on politics, the economy, security, and society. Stressing the need for international cooperation, he called for strong governance frameworks to address AI-related risks and thereby build public confidence in new technologies. He also acknowledged the need for action on cybersecurity issues such as deepfakes and disinformation.

He noted that democratising AI and sharing its benefits, particularly with the Global South, both aligns with the Sustainable Development Goals (SDGs) and affirms India’s resolve to share expertise and best practices. He also highlighted India’s Digital Public Infrastructure, which serves a population of 1.4 billion through open and accessible technology.

Among the key announcements, India revealed plans to build its own Large Language Model (LLM) reflecting the country's linguistic diversity, strengthening its AI aspirations. India will also host the next AI Action Summit, reaffirming its position in international AI leadership. The Prime Minister welcomed France's initiatives, such as the launch of the "AI Foundation" and the "Council for Sustainable AI", initiated by President Emmanuel Macron. He emphasized the need to expand the Global Partnership for AI and make it more representative and inclusive, so that Global South voices are genuinely incorporated into AI innovation and governance.

Other Perspectives

Although 58 countries, including India, France, and China, signed the international agreement on a more open, inclusive, sustainable, and ethical approach to AI development, the UK and the US declined to sign it, citing concerns about global governance and national security. The UK pointed to a lack of sufficient detail on how global AI governance would be established and on AI’s effect on national security, while the US expressed reservations about overly broad AI regulation that could hamper a transformative industry. Meanwhile, the US is also looking ahead to ‘Stargate’, its $500 billion AI infrastructure project with OpenAI, SoftBank, and Oracle.

CyberPeace Insights

The Summit gained added significance against the backdrop of releases such as DeepSeek R1, a Chinese AI assistant comparable to OpenAI’s ChatGPT. On its release, it became the top-rated free application on Apple’s App Store and sent technology stocks tumbling. Investors the world over also took note that the model was reportedly built for roughly $5 million, while other AI companies have spent more, despite the constraints imposed by chip export controls affecting China. This breakthrough challenges the conventional notion that massive funding is a prerequisite for innovation, offering hope for India’s burgeoning AI ecosystem. With the IndiaAI mission and fewer geopolitical restrictions, India stands at a pivotal moment to drive responsible AI advancements.

References:

- https://www.mea.gov.in/press-releases.htm?dtl/39023/Prime_Minister_cochairs_AI_Action_Summit_in_Paris_February_11_2025

- https://indianexpress.com/article/explained/explained-sci-tech/what-is-stargate-trumps-500-billion-ai-project-9793165/

- https://pib.gov.in/PressReleasePage.aspx?PRID=2102056

- https://pib.gov.in/PressReleasePage.aspx?PRID=2101947

- https://pib.gov.in/PressReleasePage.aspx?PRID=2101896

- https://www.timesnownews.com/technology-science/uk-and-us-decline-to-sign-global-ai-agreement-at-paris-ai-action-summit-here-is-why-article-118164497

- https://www.thehindu.com/sci-tech/technology/india-57-others-sign-paris-joint-statement-on-inclusive-sustainable-ai/article69207937.ece

Introduction

In an era where digitalization is transforming every facet of life, protecting personal data has become crucial. The Digital Personal Data Protection Act, 2023 (DPDP Act), enacted by the Indian Parliament, is a significant step that sets out a comprehensive framework for digital personal data. The Draft Digital Personal Data Protection Rules, 2025 were recently released for public consultation to supplement the Act and ensure its smooth implementation once finalised. While the draft rules have certain positive aspects, they also leave gaps that require attention.

The DPDP Act, 2023 recognises the individual’s right to protect their personal data and provides control over the processing of personal data for lawful purposes. The Act applies to data that is available in digital form as well as data that is collected in non-digital form and digitised subsequently. While the Act is intended to give individuals (Data Principals) wide control over their personal information, its impact on vulnerable groups such as ‘Persons with Disabilities’ requires closer scrutiny.

Persons with Disabilities as Data Principals

Section 2(j) of the DPDP Act defines ‘data principal’ as the individual to whom the personal data relates, which includes a person with a disability. The definition also covers a lawful guardian acting on behalf of a person with a disability. As a result, such a lawful guardian has the same rights and responsibilities as a data principal under the Act.

- Section 9 of the DPDP Act, 2023 states that before processing the personal data of a person with a disability who has a lawful guardian, the data fiduciary must obtain verifiable consent from that guardian, ensuring proper protection of the person with disability's data privacy.

- Under Section 11, the data principal has the right to access information about the personal data being processed by the data fiduciary.

- Section 12 provides the right to correction and erasure of personal data by making a request in a manner prescribed by the data fiduciary.

- Under Section 13, the data principal must be given a means of grievance redressal in respect of any act or omission by the data fiduciary or the consent manager in the performance of their obligations.

- Under Section 14, the data principal has the right to nominate any other person to exercise the rights provided under the Act in case of death or incapacity.

Provision of consent and its implication

Three key components of consent can be identified under the DPDP Act:

- Explicit and Informed Consent: Consent given for the processing of data by the data principal or a lawful guardian in case of persons with disabilities must be clear, free and informed as per section 6 of the Act. The data fiduciary must specify the itemised description of the personal data required along with the specified purpose and description of the goods or services that would be provided by such processing of data. (Rule 3 under Draft Digital Personal Data Protection Rules)

- Verifiable Consent: Section 9 of the DPDP Act provides that the data fiduciary must obtain verifiable consent from the lawful guardian before processing any personal data of a person with a disability. Rule 10 of the Draft Rules obligates the data fiduciary to adopt measures to ensure that the consent given by the lawful guardian is verifiable before the data is processed.

- Withdrawal of Consent: The data principal or the lawful guardian can withdraw consent for the processing of data at any point by making a request to the data fiduciary.

Although the Act includes certain provisions aimed at the inclusion of persons with disabilities, a closer reading of these sections suggests otherwise.

Concerns related to provisions for Persons with Disabilities under the DPDP Act:

- Lack of definition of ‘persons with disabilities’: Neither the DPDP Act nor the Draft Rules define the term ‘persons with disabilities’. This creates confusion as to which categories of disability are included and up to what percentage. The Rights of Persons with Disabilities Act, 2016 clearly defines ‘person with benchmark disability’, ‘person with disability’ and ‘person with disability having high support needs’. This categorisation, which is missing from the DPDP Act, is essential for determining the extent to which a person with a disability needs a lawful guardian.

- Lack of autonomy: Although the definition of data principal includes persons with disabilities, decision-making authority has been given to their lawful guardians. Because ‘persons with disabilities’ is not clearly defined, the section creates ambiguity for people who have a lower percentage of disability and are capable of making their own decisions, yet are left without autonomy over the processing of their personal data.

- Safeguards for abuse of power by lawful guardian: The lawful guardian once verified by the data fiduciary can make decisions for the persons with disabilities. This raises concerns regarding the potential abuse of power by lawful guardians in relation to the handling of personal data. The DPDP Act does not provide any specific protection against such abuse.

- Difficulty in verification of consent: The consent obtained by the data fiduciary must be verified. Under Rule 10 of the Draft Rules, the verification process is left to the discretion of the data fiduciary. Because this is a complex process with no standard format, the authenticity of consent is difficult to determine. Technological advancements also make it harder to tell whether the information supplied to verify consent is genuine.

CyberPeace Recommendations

The DPDP Act, 2023 is a major step towards a more comprehensive data protection framework. However, the provisions on persons with disabilities, and the powers given to lawful guardians acting on their behalf, still need clarity and refinement.

- Consonance of DPDP with Rights of Persons with Disabilities (RPWD) Act, 2016: The RPWD Act and the DPDP Act should supplement each other and can be used to clear up the existing ambiguities. For example, the definition of ‘persons with disabilities’ under the RPWD Act can be applied in the context of the DPDP Act, 2023.

- The Act should include mechanisms and safeguards to prevent abuse of power by the lawful guardian. Where abuse is suspected, the affected individual should be able to file a complaint with the Data Protection Board, which can then take the necessary steps to determine whether an abuse of power has occurred.

- Regulatory oversight and additional safeguards are required to ensure that consent is obtained in a manner that respects the rights of all individuals, including those with disabilities.

References:

- https://www.meity.gov.in/writereaddata/files/Digital%20Personal%20Data%20Protection%20Act%202023.pdf

- https://www.meity.gov.in/writereaddata/files/259889.pdf

- https://www.indiacode.nic.in/bitstream/123456789/15939/1/the_rights_of_persons_with_disabilities_act%2C_2016.pdf

- https://www.deccanherald.com/opinion/consent-disability-rights-and-data-protection-3143441

- https://www.pacta.in/digital-data-protection-consent-protocols-for-disability.pdf

- https://www.snrlaw.in/indias-new-data-protection-regime-tracking-updates-and-preparing-for-compliance/

Executive Summary

A video circulating on social media claims that a Jaguar fighter jet of the Indian Air Force (IAF) failed to land during a takeoff and landing exercise held on April 22, 2026, at the Purvanchal Expressway in Uttar Pradesh. The claim suggests that the incident disrupted preparations for “Operation Sindoor.” However, research by the CyberPeace Research Wing has found the claim to be false.

Claim

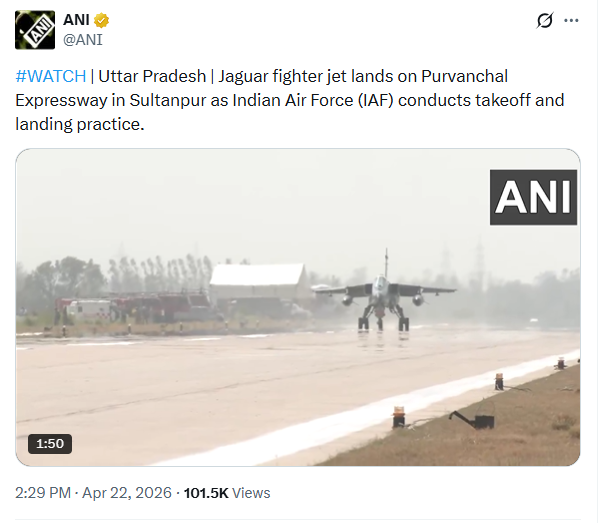

The video was shared by a Facebook user, ‘Meera MJ,’ alleging that the Jaguar aircraft could not land during the exercise conducted near Sultanpur. To verify the authenticity of the video, multiple keyframes were extracted and analyzed using reverse image search tools. This led to the original footage shared by ANI on its official X (formerly Twitter) handle on April 22, 2026. The authentic video of the air show does not show any such incident of a failed landing.
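The keyframe step described above can be illustrated with a minimal sketch. It is not the tooling the researchers used, which the report does not name. It only shows one plausible way to decide which frames of a clip to pull out before running them through a reverse image search. The function names, the fixed sampling interval, and the default of two seconds are all assumptions made for illustration.

```python
import math


def keyframe_timestamps(duration_s: float, interval_s: float = 2.0) -> list[float]:
    """Return evenly spaced timestamps (in seconds) that fall inside the clip.

    Hypothetical helper: fixed-interval sampling is an assumption, not the
    method described in the report.
    """
    if duration_s <= 0 or interval_s <= 0:
        raise ValueError("duration_s and interval_s must be positive")
    # Sample at 0, interval, 2*interval, ... and stop before the clip ends.
    count = math.ceil(duration_s / interval_s)
    return [i * interval_s for i in range(count)]


def keyframe_indices(duration_s: float, fps: float, interval_s: float = 2.0) -> list[int]:
    """Convert the sampled timestamps into frame numbers for a given frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    # Clamp to the last valid frame so rounding never points past the clip.
    last_frame = max(int(duration_s * fps) - 1, 0)
    return [
        min(round(t * fps), last_frame)
        for t in keyframe_timestamps(duration_s, interval_s)
    ]
```

For a 10-second clip at 30 frames per second, sampling every 2.5 seconds selects frames 0, 75, 150 and 225. Each selected frame would then be exported as a still image and submitted to a reverse image search tool by hand or through whatever interface that tool provides.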

Fact Check

A detailed review of ANI’s social media posts also revealed no evidence supporting the viral claim. This strongly indicates that the circulating clip has been digitally manipulated by altering the original footage.

Further corroboration came from a report published by Bhaskar.com, which extensively covered the air show. According to the report, the event featured successful operations by multiple aircraft, including the C-295 transport aircraft landing on the expressway airstrip, followed by Jaguar jets taking off. Sukhoi and Mirage fighter jets also performed takeoff and landing drills, while M17 helicopters carried out commando mock operations. Additionally, the M32 Bhishma aircraft conducted ‘touch and go’

Conclusion:

The viral claim that a Jaguar fighter jet failed to land during the Indian Air Force drill is baseless. The video being circulated is digitally manipulated and does not reflect any real incident.