#FactCheck - Old Wedding Fire Video Misleadingly Shared as Iranian Hypersonic Missile Strike in Tel Aviv

Executive Summary:

Amid the ongoing conflict involving the United States, Israel, and Iran, a video showing a building engulfed in flames is being widely circulated on social media. In the clip, a large fire can be seen inside a building while several people appear to be running in panic. The video is being shared with the claim that Iran fired a hypersonic missile targeting a ceremony in Tel Aviv, Israel, allegedly killing several Israeli military generals and other prominent figures.

However, research by CyberPeace found that the claim is false. The video being circulated as footage of an attack in Israel actually predates the current conflict and shows a fire that broke out during a wedding ceremony.

Claim

A Facebook user named “Syed Asif Raza Jafri” shared the video on March 13, 2026, claiming that an Iranian hypersonic missile had struck a grand ceremony in Tel Aviv, where several Israeli military officers, generals, soldiers, and other important personalities were present. According to the post, the attack resulted in multiple casualties.

Source:

- https://www.facebook.com/reel/902182825912364

- https://ghostarchive.org/archive/rZryr

Fact Check

To verify the claim, we began our research using the Google Lens reverse image search tool. Several key frames from the viral video were extracted and searched online.

During the search, we found the same video shared earlier on multiple foreign social media accounts. A Facebook user named “Es de Bombero” from Chile had posted the video on January 17, 2026, describing it in Spanish as footage of a fire that broke out during a wedding celebration.

Our research shows that the viral video had been circulating on social media since at least January 15, 2026, well before the escalation of the current conflict. According to a report published by the BBC on March 1, 2026, the large-scale attacks on Iran by the United States and Israel began on February 28, 2026, after which Iran’s Supreme Leader Ali Khamenei was reported dead.

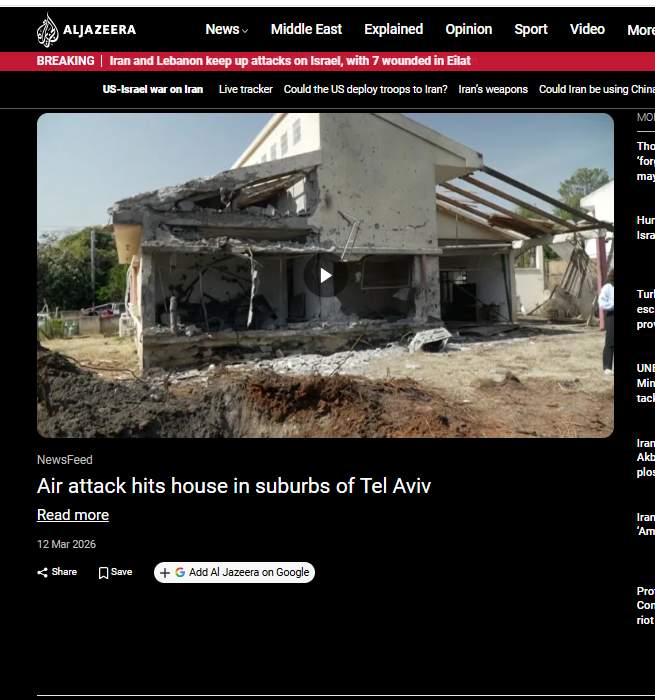
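The timeline reasoning above can be checked with simple date arithmetic. The following is a minimal Python sketch that uses only the dates cited in this report. The variable and function names are our own illustrative choices and are not part of any tool used in the research.

```python
from datetime import date

# Dates as stated in this fact check.
earliest_circulation = date(2026, 1, 15)  # earliest known circulation of the video
conflict_start = date(2026, 2, 28)        # start of the attacks, per the cited BBC report
viral_claim_post = date(2026, 3, 13)      # date of the Facebook post making the claim


def predates(footage_date: date, event_date: date) -> bool:
    """Return True if the footage was online strictly before the event."""
    return footage_date < event_date


# Gap between the earliest known circulation and each later date.
days_before_conflict = (conflict_start - earliest_circulation).days
days_before_claim = (viral_claim_post - earliest_circulation).days

print(predates(earliest_circulation, conflict_start))  # True
print(days_before_conflict)  # 44
print(days_before_claim)     # 57
```

Under these dates, the footage was already online 44 days before the reported start of the attacks and 57 days before the viral post, so it cannot show an event from the current conflict.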

Additionally, a March 12, 2026 report by Al Jazeera stated that a house near Tel Aviv in central Israel was damaged by a rocket reportedly fired by Hezbollah, which has previously carried out joint attacks in coordination with Iran.

Conclusion

The viral video being shared as footage of an Iranian hypersonic missile strike in Tel Aviv is misleading. The clip is an older video of a fire that reportedly broke out during a wedding ceremony and was circulating online before the current conflict began.

While the exact location of the incident shown in the video cannot be independently verified, it is clear that the footage has no connection to the ongoing war between the United States, Israel, and Iran.