#FactCheck: Viral video of Unrest in Kenya is being falsely linked with J&K

Executive Summary:

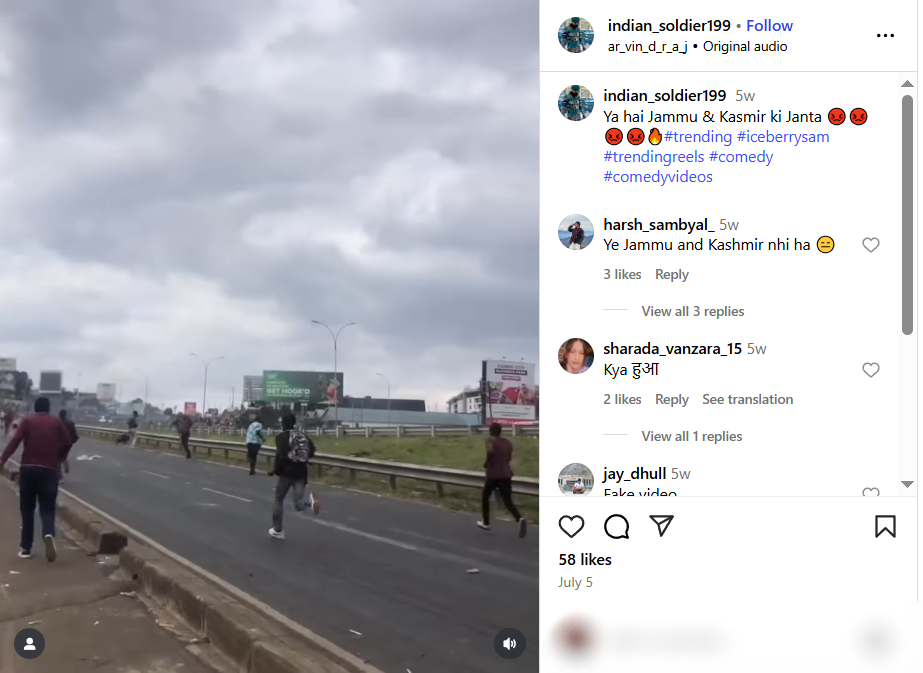

A video of people throwing rocks at vehicles is being shared widely on social media, claiming an incident of unrest in Jammu and Kashmir, India. However, our thorough research has revealed that the video is not from India, but from a protest in Kenya on 25 June 2025. Therefore, the video is misattributed and shared out of context to promote false information.

Claim:

The viral video shows people hurling stones at army or police vehicles and is claimed to be from Jammu and Kashmir, implying ongoing unrest and anti-government sentiment in the region.

Fact Check:

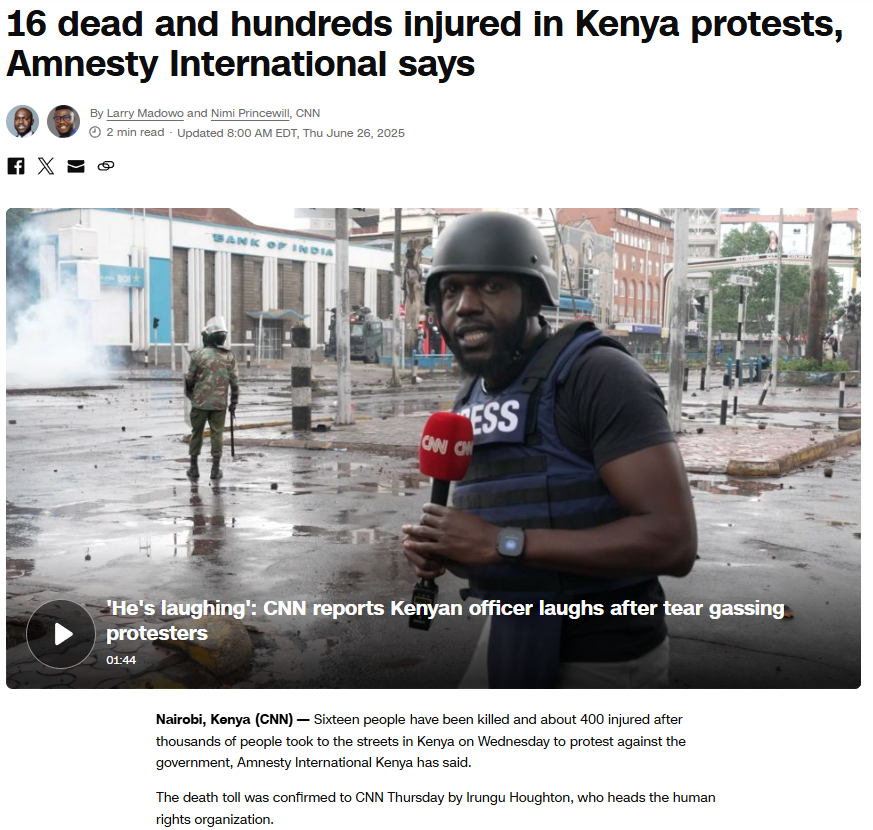

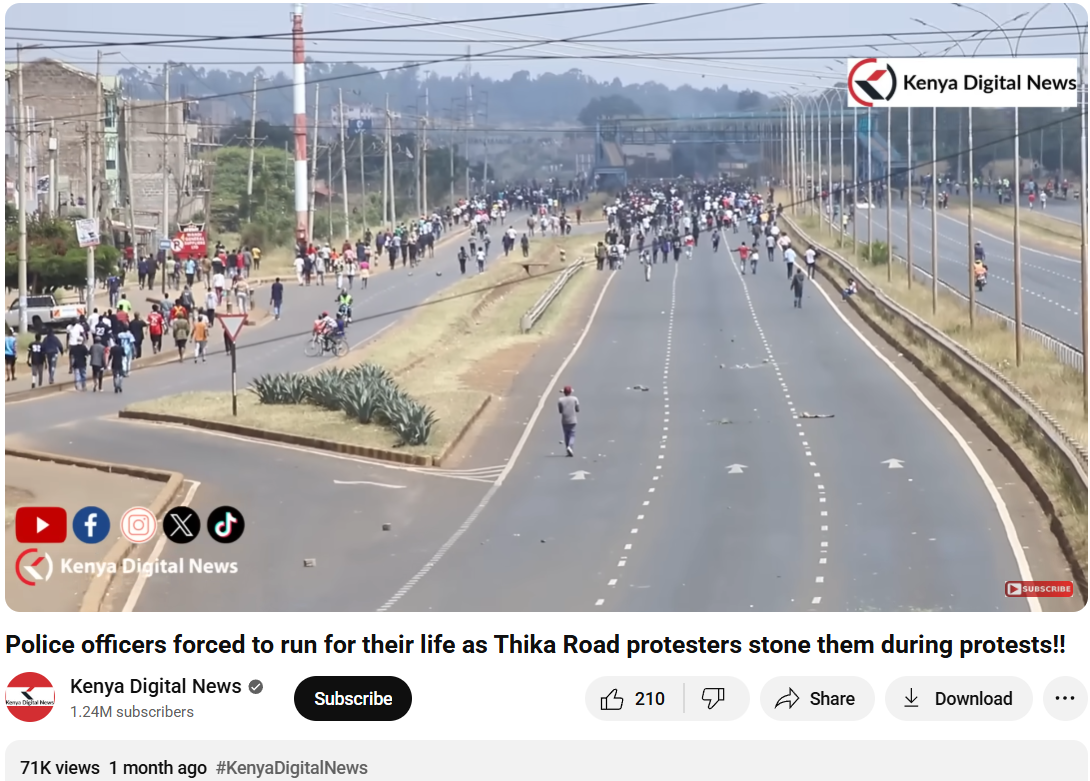

To verify the validity of the viral statement, we did a reverse image search by taking key frames from the video. The results clearly demonstrated that the video was not sourced from Jammu and Kashmir as claimed, but rather it was consistent with footage from Nairobi, Kenya, where a significant protest took place on 25 June 2025. Protesters in Kenya had congregated to express their outrage against police brutality and government action, which ultimately led to violent clashes with police.
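The key-frame step described above can be sketched as follows. This is only an illustration of one common approach (sampling frames at evenly spaced points in a clip), not necessarily the method used in this fact check; the function name `keyframe_timestamps` and the 30-second duration are hypothetical.

```python
def keyframe_timestamps(duration_s: float, count: int) -> list[float]:
    """Return `count` timestamps (in seconds) at the midpoints of equal segments.

    Assumption: evenly spaced sampling is good enough to capture distinct
    scenes for a reverse image search; real workflows may pick frames by hand.
    """
    if duration_s <= 0 or count <= 0:
        raise ValueError("duration_s and count must be positive")
    segment = duration_s / count
    # Take the midpoint of each segment so the first and last frames
    # (often black or transitional) are avoided.
    return [round(segment * (i + 0.5), 2) for i in range(count)]


if __name__ == "__main__":
    # Hypothetical 30-second clip sampled at 5 points.
    for t in keyframe_timestamps(30.0, 5):
        print(f"grab frame at {t:.2f}s")
```

Each timestamp can then be passed to a frame-extraction tool (for example `ffmpeg -ss 3 -i clip.mp4 -frames:v 1 frame.png`, where `clip.mp4` is a placeholder file name), and the resulting still uploaded to a reverse image search engine.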

We also came across a YouTube video with similar news and frames. The protests were part of a broader anti-government movement and marked its one-year anniversary.

To support the context, we ran a keyword search for any mob violence or recent unrest in J&K on a reputable Indian news source, but the search did not turn up any mention of protests or similar events in J&K around the relevant time. Based on this evidence, it is clear that the video has been intentionally misrepresented and is being circulated with false context to mislead viewers.

Conclusion:

The assertion that the viral video shows a protest in Jammu and Kashmir is incorrect. The video appears to be taken from a protest in Nairobi, Kenya, in June 2025. Labeling the video incorrectly only serves to spread misinformation and stir up uncalled-for political emotions. Always verify where content comes from before you believe it or share it.

- Claim: Army faces heavy resistance from Kashmiri youth — the valley is in chaos.

- Claimed On: Social Media

- Fact Check: False and Misleading

Related Blogs

Introduction

The most recent cable outages in the Red Sea slowed traffic across the Middle East and South Asia, including India and Pakistan, and users of the Etisalat and Du networks in the UAE experienced comparable internet outages. The incident is a reminder that the physical backbone of the internet is both routine and extremely important. When systems like SMW4 and IMEWE malfunction close to Jeddah, cloud platforms reroute traffic, e-commerce stalls, financial transactions stutter, and governments face the fragility of something they long believed to be seamless. The incident has raised concerns over the susceptibility of undersea information highways, particularly given the ongoing conflict in the Red Sea region, where Yemen’s Houthi rebels have been waging a campaign against commercial shipping in retaliation for the Israel-Hamas war in Gaza. The effects are seen immediately. These recent failures, which compelled key providers to reroute flows, reignited the argument over whether global connection is genuinely robust or just operating on borrowed time.

What looks like a “technical glitch” is a geopolitical signal. Accidents in contested waters are rarely simply accidents, and the inability to quickly assign blame highlights how brittle this ostensibly flawless digital world is.

The Paradox of Essential yet Exposed Infrastructure

This is not an isolated accident. Undersea cables carry more than 97% of all internet traffic worldwide, connect continents at the speed of light, and support the cloud infrastructures that contemporary societies rely on; they are the brains of the digital economy, as NATO’s Cooperative Cyber Defence Centre of Excellence has cautioned. In a sense, they are our unseen electrical grid; without them, connectivity breaks down. However, they remain incredibly fragile in spite of their significance. Cables are no thicker than a garden hose, are frequently damaged by anchors and fishing gear, and break more than a hundred times annually on average. Most faults can be swiftly repaired or routed around, but when several cuts happen in strategic areas, as in the 2022 Tonga eruption or the current Red Sea crisis, nations and economies risk being isolated for days.

The geopolitical risks are far more urgent. Subsea cables traverse disputed waters, land in hostile regimes, and cross oceans without regard for political boundaries. This makes them appealing for espionage, where state actors can tap or alter flows covertly, as well as sabotage, when service is interrupted to prevent access. Deliberate cable strikes have been likened by NATO specialists to the destruction of bridges or highways: if you choke the arteries, you choke the economy. Ironically, the most susceptible locations are not far below the surface but rather where cables emerge. These landing sites, which handle billions of dollars’ worth of trade, can have less security than a conventional bank office.

The New Theatre of Geopolitics

Legal frameworks exist, but they are patchwork. Intentional damage is illegal under the UN Convention on the Law of the Sea and previous agreements, but attribution is still notoriously challenging. In hybrid warfare scenarios, covert sabotage and intelligence operations fall into legal grey areas. Even during times of peace, the burden of protection is shared among national governments, which rely on the cables’ continuous operation but find it difficult to extend sovereignty into international waters, private telecom consortia, and content giants like Google and Amazon that now finance their own cables.

Cables convey influence in addition to data. Strategic leverage belongs to whoever can secure, tap, or cut them during a conflict. Even though landing stations are the entry points for billions of dollars’ worth of international trade, they frequently have less security than a commercial bank branch.

India at the Crossroads of Digital Geopolitics

India’s reliance on underwater cables presents both advantages and disadvantages. With more than 95% of its international data traffic routed through a 6-km coastal stretch close to Versova, Mumbai, India faces a classic single-point-of-failure risk. Red Sea disruptions have previously demonstrated how swiftly chokepoints located far from India’s coast can impede its digital arteries, placing a burden on government functions, defence communications, and financial flows. However, this same vulnerability also makes India a crucial player in the global discussion around digital sovereignty. Diversifying landing places, expediting clearances, and developing indigenous repair capability is not only an infrastructure exercise; it is also a strategic and constitutional necessity.

India’s geographic location also presents opportunities. As the Indo-Pacific becomes the defining region of geopolitics in the twenty-first century, India’s position along East-West cable routes makes it an ideal hub for robust connectivity. India can change from being a passive recipient of connectivity to a shaper of its governance by investing in distributed cable architecture and strengthening partnerships through initiatives like Quad and IPEF. To do so, it must reconcile its aspirations for global influence with its domestic regulatory inertia. By doing this, India can secure not only bandwidth but also sovereignty itself, converting subsea cables from hidden liabilities into tools of economic might and geopolitical leverage.

CyberPeace Insights

If cables are considered essential infrastructure, then their safety demands the same level of attention that we give to ports, airports, and electrical grids. Stronger landing station defences, route redundancy, and genuine public-private collaborations are now a necessity rather than an option.

The Red Sea incident is a call to action rather than a singular disruption. The robustness of underwater cables will determine whether the internet is a sustainable resource or a brittle luxury susceptible to the next outage as reliance on the cloud grows and 5G spreads.

References

- https://forumias.com/blog/answered-assess-the-strategic-significance-of-undersea-cable-networks-for-indias-digital-economy-and-national-security-discuss-the-vulnerabilities-of-this-infrastructure-and-suggest-measures-to-e/

- https://www.reuters.com/world/middle-east/red-sea-cable-cuts-disrupt-internet-across-asia-middle-east-2025-09-07/

- https://pulse.internetsociety.org/blog/what-can-we-learn-from-africas-multiple-submarine-cable-outages

Introduction

The world has been witnessing various advancements in cyberspace, and one of the major changes is the speed with which we gain and share information. Cyberspace has been declared as the fifth dimension of warfare, and hence, the influence of technology will go a long way in safeguarding ourselves and our nation. Information plays a vital role in this scenario, and due to the easy access to information, the instances of misinformation and disinformation have been rampant across the globe. In the recent Russia-Ukraine crisis, it was clearly seen how instances of misinformation can lead to major loss and harm to a nation and its subjects. All nations and global leaders are deliberating upon this aspect and efficient sharing of information among friendly nations and inter-government organisations.

What is IW?

Information warfare (IW) is a critical aspect of defending our cyberspace. Information warfare, in its broadest sense, is a struggle over the information and communications process, a struggle that began with the advent of human communication and conflict. Over the past few decades, the rapid rise in information and communication technologies and their increasing prevalence in our society has revolutionised the communications process and, with it, the significance and implications of information warfare. Information warfare is the application of destructive force on a large scale against information assets and systems, against the computers and networks that support the four critical infrastructures (the power grid, communications, finance, and transportation). However, protecting against computer intrusion, even on a smaller scale, is in the national security interests of the country and is important in the current discussion about information warfare.

IW in India

The effects of misinformation have been seen recently in India in the violence in Manipur and Nuh, which resulted in a massive loss of property and even human lives. Miscreants or anti-national elements often seed misinformation in our daily news feed, and this is often magnified by social media platforms such as Instagram or X (formerly known as Twitter) and OTT-based messaging applications like WhatsApp or Telegram. During the pandemic, nearly every week some new way to treat COVID-19 was shared on social media, and these claims were false and inaccurate, especially in regard to the vaccination drive. Many posts and messages claimed that the vaccine was not safe, but much of this was part of misinformation propaganda. Most of the time, such episodes of misinformation spread rapidly, often through social media platforms and OTT messaging applications.

IW and Indian Army

Former Meta employees have recently alleged that the Chinar Corps of the Indian Army had approached the social media giant to suppress some pages and channels which propagated content that may be objectionable. It is alleged that the formation made such a request to further its counterintelligence operations against Pakistan. The Chinar Corps is one of the most prestigious formations of the Indian Army, and its operational area is the Kashmir Valley. Online grooming and brainwashing by anti-national elements from Pakistan have been common, and a faction of youth has been engaged in terrorist activities directly or indirectly. Bad actors use various messaging and social media apps to lure in innocent youth on the fake and fabricated pretext of religion or some other social issue. The Indian Army had launched an anti-misinformation campaign in Kashmir, which aimed to protect Kashmiris from the propaganda of fake news and misinformation, which often led to radicalisation or even riots or attacks on defence forces. Net neutrality is often misused by bad actors in areas which are socially sensitive, critical or unstable. The Indian Army has created special offices focusing on IW at all levels of formations, and these are also used to counter fake news or fake propaganda against the Indian Army.

Conclusion

Information has been a source of power since the days of the Roman Empire. The control, dissemination, moderation and mode of sharing of information play a vital role for any nation, both in terms of safety from external threats and in maintaining national security. Information warfare is part of the fifth dimension of warfare, i.e., cyberwar, and is a growing concern for developed as well as developing nations. Information warfare is a critical aspect which needs to be incorporated into basic training for defence personnel and law enforcement agencies. The anti-misinformation operation in Kashmir was primarily focused on eradicating the bad elements from cyberspace after the abrogation of Article 370, and on ensuring harmony, peace, stability and prosperity in the region.

References

- https://irp.fas.org/eprint/snyder/infowarfare.htm

- https://www.thehindu.com/news/national/metas-india-team-delayed-action-against-army-led-misinfo-op-in-kashmir-us-news-report/article67352470.ece

- https://www.indiatoday.in/india/story/facebook-instagram-block-handles-of-chinar-corps-no-response-from-company-over-a-week-says-officials-1910445-2022-02-08

Executive Summary:

Amid the ongoing war involving the United States, Israel, and Iran, a video clip circulating on social media claims to show Iran unveiling a drone resembling the US B-2 stealth bomber. In the viral clip, an aircraft-like object can be seen emerging from a cave before taking off. Several users are sharing the video with the claim that Iran has deployed a B-2-style drone in the conflict.

However, research by CyberPeace found that the viral video is not real and was generated using artificial intelligence. While the United States has reportedly used B-2 stealth bombers in strikes against Iran during the conflict, the viral clip does not show an actual Iranian drone.

Claim

X user “Muslim_Voice_Space” posted the video on March 3, 2026, claiming that Iran had rolled out a drone resembling the B-2 bomber for use in the war.

Fact Check

To verify the claim, we first closely examined the viral video. In the opening moments of the clip, the wing of the alleged drone appears to hit the side of the cave while exiting. Despite the apparent collision, the aircraft continues flying smoothly without any visible damage. This unusual detail raised doubts about the authenticity of the footage.

We then analyzed the video using the AI detection tool Hive Moderation, which flagged the clip as likely AI-generated.

Further analysis using the Sightengine AI detection tool also suggested that the video was artificially created. The tool estimated a 75% probability that the footage was generated using AI. It also indicated a 70% likelihood that the clip may have been created using Sora, an AI video-generation tool.
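The idea of cross-checking more than one detector can be sketched as below. This is a hedged illustration only: the `DetectorResult` type, the `summarize` function, and the 0.5 threshold are assumptions, and neither tool's real API is called. The 0.75 figure is the Sightengine estimate reported above; the second score is a labeled placeholder, since no numeric score for the other tool is given in this article.

```python
from dataclasses import dataclass


@dataclass
class DetectorResult:
    tool: str              # name of the detection tool
    ai_probability: float  # estimated probability (0.0 to 1.0) that the clip is AI-generated


def summarize(results: list[DetectorResult], threshold: float = 0.5) -> str:
    """Combine several detector scores into a cautious verdict.

    Assumption: a clip is called 'likely AI-generated' only when every
    detector meets the threshold; mixed results are reported as inconclusive.
    """
    if not results:
        raise ValueError("at least one detector result is required")
    flagged = [r.tool for r in results if r.ai_probability >= threshold]
    if len(flagged) == len(results):
        return "likely AI-generated"
    if flagged:
        return "inconclusive"
    return "no AI signal"


if __name__ == "__main__":
    results = [
        DetectorResult("Sightengine", 0.75),              # figure reported in the article
        DetectorResult("second detector (placeholder)", 0.80),  # hypothetical value
    ]
    print(summarize(results))
```

Automated scores like these are best treated as supporting evidence alongside visual inconsistencies such as the wing collision noted earlier, not as proof on their own.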

Conclusion

The viral video claiming to show an Iranian drone resembling the US B-2 stealth bomber emerging from a cave is not authentic. Analysis indicates that the clip was created using AI tools and is being misleadingly shared in the context of the ongoing conflict.