#FactCheck - Viral Clip Claiming PM Modi Pushed BJP President Nitin Nabin Is Misleading

Executive Summary:

A video clip featuring Prime Minister Narendra Modi and the newly elected Bharatiya Janata Party (BJP) national president, Nitin Nabin, is going viral on social media. In the clip, PM Modi appears to push Nitin Nabin, prompting claims that Nabin had accidentally stepped between the Prime Minister and the camera, after which Modi allegedly pushed him out of the frame. CyberPeace’s research found that the viral clip is cropped and misleading. The original, unedited video shows Prime Minister Modi gesturing for Nitin Nabin to move ahead and offer floral tributes to the statues of Bharatiya Jana Sangh founder Syama Prasad Mukherjee and Pandit Deendayal Upadhyaya at the BJP headquarters in Delhi.

On 20 January 2026, BJP leader Nitin Nabin was elected as the party’s national president. Several senior BJP leaders, including Prime Minister Narendra Modi, were present at the event. During his address, PM Modi remarked, “Nitin Nabin ji is my boss, and I am a party worker.” The statement received widespread attention, after which multiple videos linked to the remark began circulating on social media.

A Facebook user shared the viral clip with a Hindi caption alleging that despite calling himself a “party worker,” PM Modi pushed his “boss” out of the camera frame. The post further mocked the position of BJP president, claiming it to be merely ceremonial. (Archived link)

To verify the claim, we conducted a reverse image and video search, which led us to a longer version of the video uploaded on news agency INS’s official X handle on 20 January 2026. The caption stated that PM Modi, BJP president Nitin Nabin, Defence Minister Rajnath Singh, Home Minister Amit Shah, Union Minister Nitin Gadkari and senior leader J.P. Nadda paid tributes to Syama Prasad Mukherjee and Pandit Deendayal Upadhyaya at the BJP headquarters.

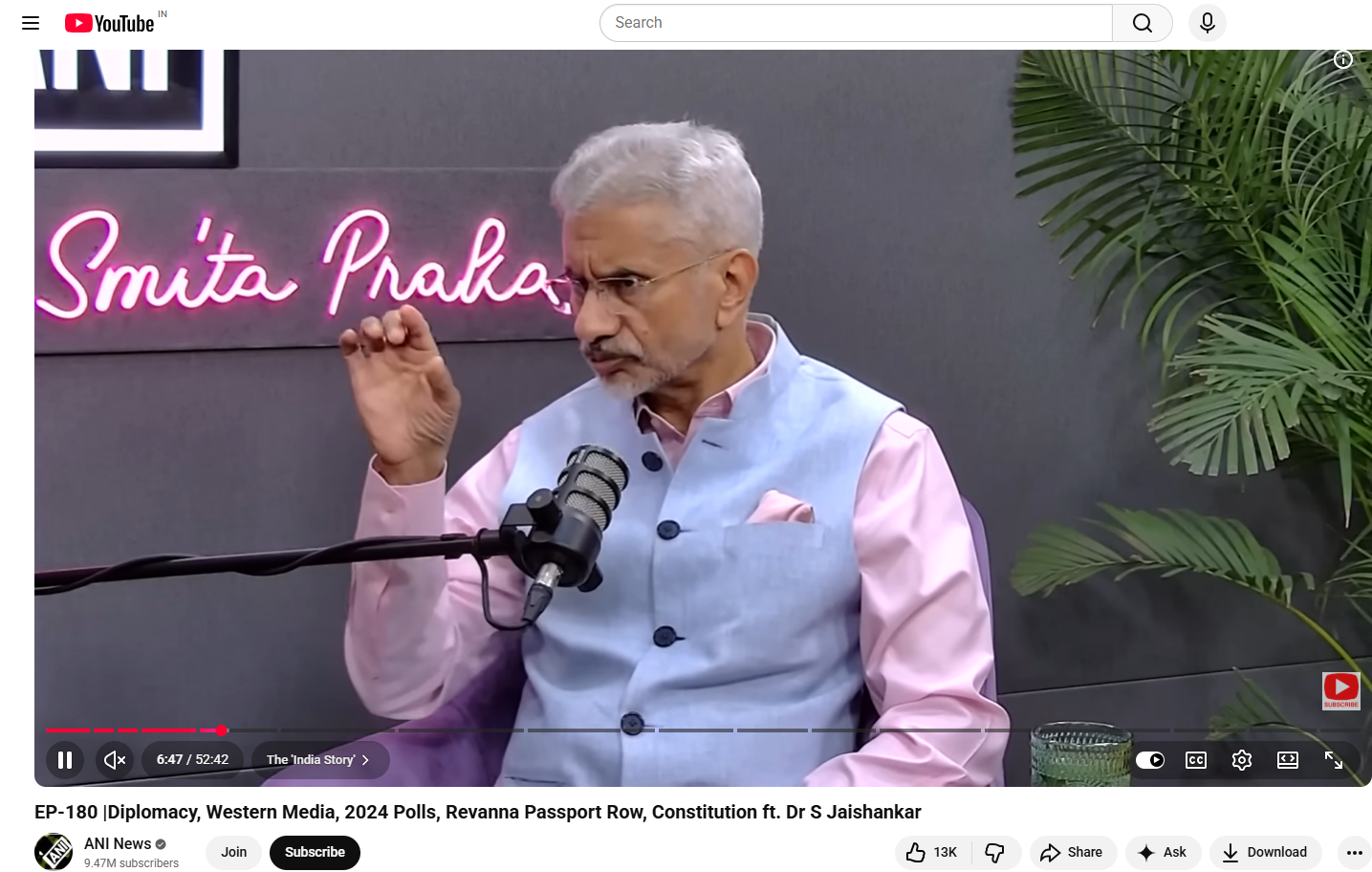

In the full video, PM Modi and Nitin Nabin are seen walking together. PM Modi then asks Nitin Nabin to proceed first for the floral tribute, placing his hand on Nabin’s back as a gesture to move forward. The viral clip isolates this moment, strips it of context, and loops it to create a misleading impression. The complete footage clearly shows that PM Modi asked Nitin Nabin to offer tributes first, after which other leaders followed. There is no indication that Nitin Nabin was pushed out of the camera frame, as claimed in the viral posts.

We also found the live broadcast of the ‘Bharatiya Janata Party Sangathan Parv’ on BJP’s official YouTube channel. The same visuals appear at the end of the live stream, further confirming that PM Modi was merely gesturing for Nitin Nabin to proceed first.

Additionally, photographs available on Nitin Nabin’s official X handle show him offering floral tributes ahead of PM Modi, who is seen standing behind and waiting.

Conclusion:

CyberPeace’s research confirms that the viral clip has been cropped and shared with a false narrative. In the original context, Prime Minister Narendra Modi was respectfully inviting BJP national president Nitin Nabin to move ahead and pay tributes, not pushing him out of the camera frame.