#FactCheck - Old Video of US Soldiers’ Coffins Mislinked to Iran War

Executive Summary

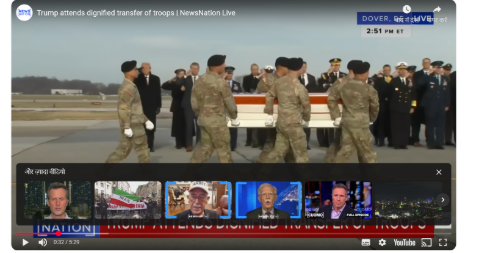

A video is being widely shared on social media showing soldiers carrying coffins with full military honours. Users are claiming that the footage shows the bodies of American soldiers who were killed in the war with Iran being brought back to the United States.

However, research by CyberPeace found the viral claim to be misleading. Our research revealed that the video has no connection to the recent Iran-Israel conflict. The footage actually dates back to December 2025, when an Islamic State gunman in Syria killed two US soldiers and a US civilian.

Following that incident, the bodies of the victims were transported with military honours, and the ceremony was recorded in the viral video. The clip is now being circulated online with a false claim.

Claim

On March 1, 2026, an Instagram user shared the viral video claiming that it shows the bodies of American soldiers returning to the US after being killed in the war against Iran. The caption of the post reads: “Bodies of American soldiers martyred against Iran are returning to the United States. War always brings destruction, which we are now witnessing.”

The link to the post and its archived version can be seen below.

Fact Check

To verify the claim, we extracted key frames from the viral video and performed a reverse image search using Google Lens. During the search, we found the full version of the video in a report published by the BBC on December 18, 2025. This confirms that the footage predates the conflict referenced in the claim.

According to the BBC report, US President Donald Trump attended a dignified transfer ceremony for two members of the US National Guard and a US civilian who were killed in Syria. The somber ceremony took place at Dover Air Force Base in Delaware, United States. The US Central Command (Centcom) stated that the two soldiers and a civilian interpreter were killed in an ambush carried out by an Islamic State (IS) gunman in Syria. The US Army identified the two soldiers as Sgt. Edgar Brian Torres-Tovar (25) of Des Moines and Sgt. William Nathaniel Howard (29) of Marshalltown. A US civilian interpreter, Ayad Mansoor Sakat, was also killed in the attack. Officials said that three other service members were injured during the attack, and the gunman was engaged and killed. Syria’s state media also reported that two Syrian security personnel were injured in the incident.

Further research led us to a report published on the News Nation YouTube channel on December 18, 2025, which also featured the same footage related to the incident.

Conclusion

Our research found that the viral video is not related to the recent Iran-Israel conflict. The footage dates back to December 2025, when two US soldiers and a US civilian were killed in an Islamic State attack in Syria. The video shows the dignified transfer of their remains and is now being shared on social media with a misleading claim.