#FactCheck - Misleading Video of Dubai Airport Attack Circulates Online, Found AI-Generated

Executive Summary

Amid rising tensions in the Middle East following attacks on Iran by the United States and Israel, a video is being shared on social media with the claim that it shows a recent attack at Dubai International Airport. Research by CyberPeace found the viral claim to be false. Our research revealed that the viral video is not real but was created using artificial intelligence technology.

Claim:

An Instagram user shared the viral video on March 1, 2026, claiming it shows an attack at Dubai Airport. The link to the post, the archive link, and a screenshot are provided below.

Fact Check:

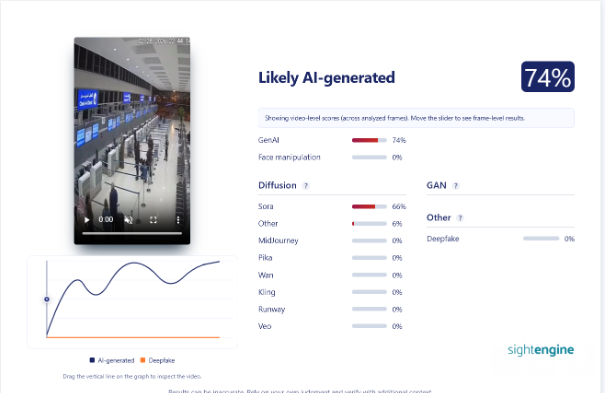

To verify the viral claim, we searched Google using relevant keywords. However, we did not find any credible media report confirming the claim. On closely examining the viral video, we noticed several unusual visuals and technical inconsistencies, raising suspicion that it might be AI-generated. To verify this, we scanned the video using the AI detection tool Sightengine. According to the results, there is a likelihood of around 74 percent that the video is AI-generated.

Conclusion:

Our research found that the viral video is not real but has been created using artificial intelligence technology.

Related Blogs

Introduction

Efforts to counter the spread of misinformation can take various forms and involve differing degrees of engagement from different stakeholders. Artificial Intelligence, user awareness, wider public action, and innovation that facilitates clear communication can all play a part in this fight. This becomes even more important in spaces that deal with matters of national security, such as the Indian Army.

IIT Indore’s Intelligent Communication System

As per a report in Hindustan Times on 14th November 2024, IIT Indore has achieved a breakthrough in its project on Intelligent Communication Systems. The project is supported by the Department of Telecommunications (DoT), the Ministry of Electronics and Information Technology (MeitY), and the Council of Scientific and Industrial Research (CSIR), as part of a specialised 6G research initiative (Bharat 6G Alliance) for innovation in 6G technology.

Professors at IIT Indore claim that the system they are working on has features different from the ones currently in use. They state that the receiver can jointly recognise coding, interleaving (a technique used to enhance existing error-correcting codes), and modulation methods in difficult environments. This makes it useful for transmitting information efficiently and securely, so it could be used not only for telecommunication but by the army as well. They also mention that different receivers were previously required for different scenarios, whereas they aim to build a single receiver that can adapt to any situation.
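To illustrate what interleaving does, here is a minimal sketch of a generic block interleaver. It is not IIT Indore's design, which the reports do not describe in detail; the function names, the 3 x 4 grid, and the sample codewords are assumptions made purely for illustration. Symbols are written into a grid row by row and read out column by column, so that a burst of consecutive channel errors ends up spread across several codewords after de-interleaving.

```python
def interleave(symbols, rows, cols):
    """Write symbols row by row into a rows x cols grid, read column by column."""
    if len(symbols) != rows * cols:
        raise ValueError("input length must equal rows * cols")
    return [symbols[r * cols + c] for c in range(cols) for r in range(rows)]


def deinterleave(symbols, rows, cols):
    """Invert interleave(): write column by column, read row by row."""
    if len(symbols) != rows * cols:
        raise ValueError("input length must equal rows * cols")
    return [symbols[c * rows + r] for r in range(rows) for c in range(cols)]


# Three hypothetical 4-symbol codewords, one per grid row.
codewords = list("AAAABBBBCCCC")
sent = interleave(codewords, rows=3, cols=4)

# Simulate a burst that corrupts three consecutive symbols on the channel.
received = sent.copy()
for i in range(3, 6):
    received[i] = "x"

# After de-interleaving, count the corrupted symbols in each codeword.
restored = deinterleave(received, rows=3, cols=4)
errors_per_codeword = [restored[r * 4:(r + 1) * 4].count("x") for r in range(3)]
```

In this sketch the three-symbol burst leaves only one corrupted symbol in each codeword, which is the kind of isolated error that an error-correcting code typically handles more easily than a concentrated burst.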

Previously, in another move that addressed the issue of misinformation in the army, the Ministry of Defence designated the Additional Directorate General of Strategic Communication in the Indian Army as the authorised officer to issue take-down notices for posts containing illegal content and misinformation concerning the Army.

Recommendations

Here are a few policy implications and deliberations one can explore with respect to innovations geared toward tackling misinformation within the army:

- Research and Development: In this context, investment in communication research at institutes has produced a system intended to provide encrypted and secure communication, which can help the army combat misinformation.

- Strategic Deployment: Relevant innovations can be tested through separate pilot studies involving sensitive data in military areas to assess their effectiveness.

- Standardisation: Once tested, a set of standards for the intelligent communication systems in use can be encouraged.

- Cybersecurity integration: As misinformation is largely spread online, innovation in such fields can encourage further exploration of integration with cybersecurity.

Conclusion

The spread of misinformation during modern warfare can have severe repercussions. With a lot at stake, sensitive data must be communicated clearly, safely, and efficiently. Innovations that are geared toward combating such issues must be encouraged, for they not only ensure efficiency and security in matters related to defence but also combat misinformation as a whole.

References

- https://timesofindia.indiatimes.com/city/indore/iit-indore-unveils-groundbreaking-intelligent-receivers-for-enhanced-6g-and-military-communication-security/articleshow/115265902.cms

- https://www.hindustantimes.com/technology/6g-technology-and-intelligent-receivers-will-ease-way-for-army-intelligence-operations-iit-official-101731574418660.html

Executive Summary

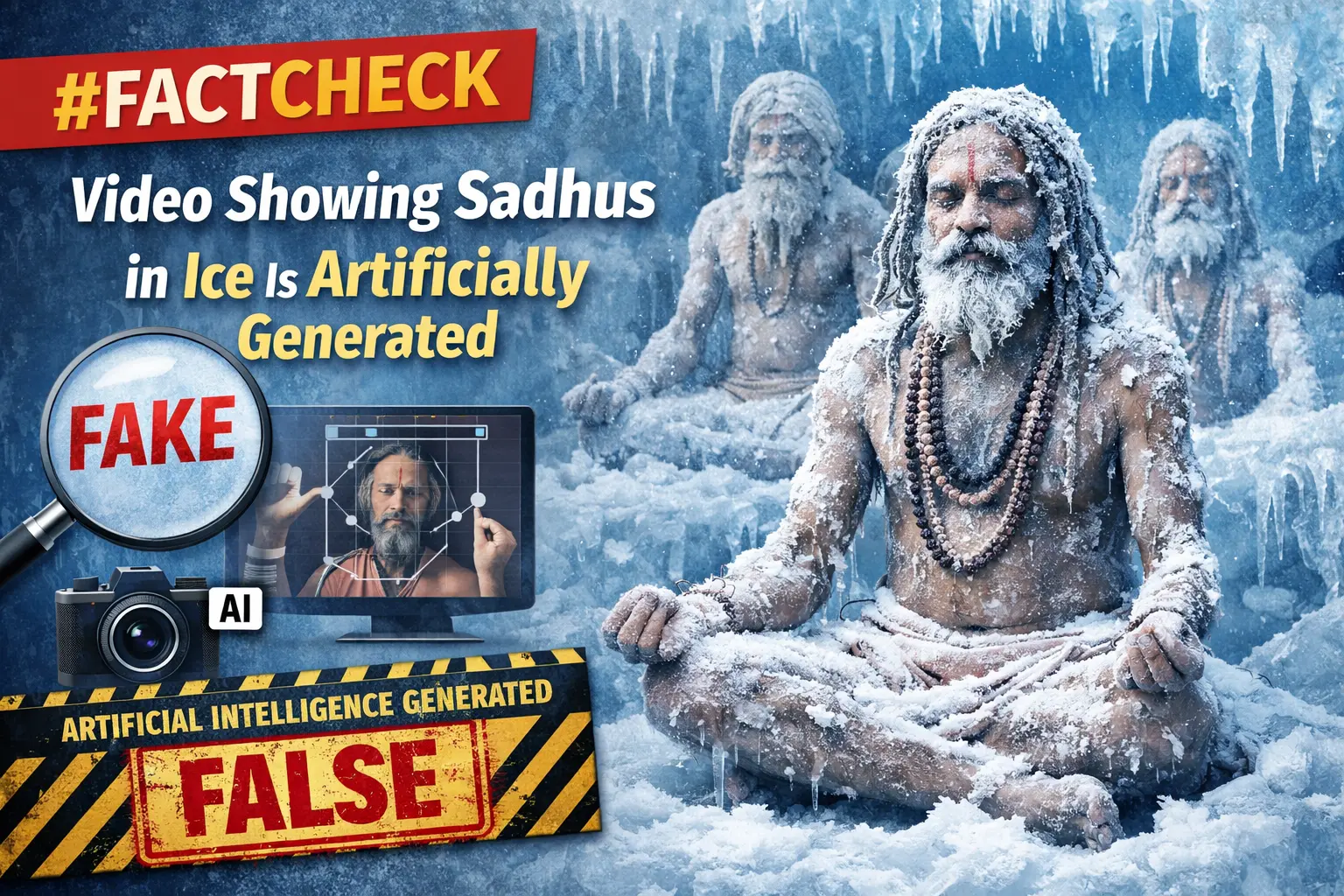

A video showing a group of Hindu ascetics (sadhus) allegedly performing intense penance while their bodies appear to be covered in ice is being widely shared on social media. Users are circulating the video as real and claiming that it represents an ancient tradition of Sanatan Dharma. CyberPeace research found the viral claim to be false. The research revealed that the video circulating on social media is not real but has been generated using artificial intelligence (AI).

Claim

A Facebook user shared the viral video on January 16, 2026. The video shows several ascetics engaged in penance, with their bodies seemingly covered in ice. Users shared the video while claiming that it depicts an authentic spiritual practice rooted in Sanatan Dharma.

Links to the post, archive link, and screenshots can be seen below.

Fact Check:

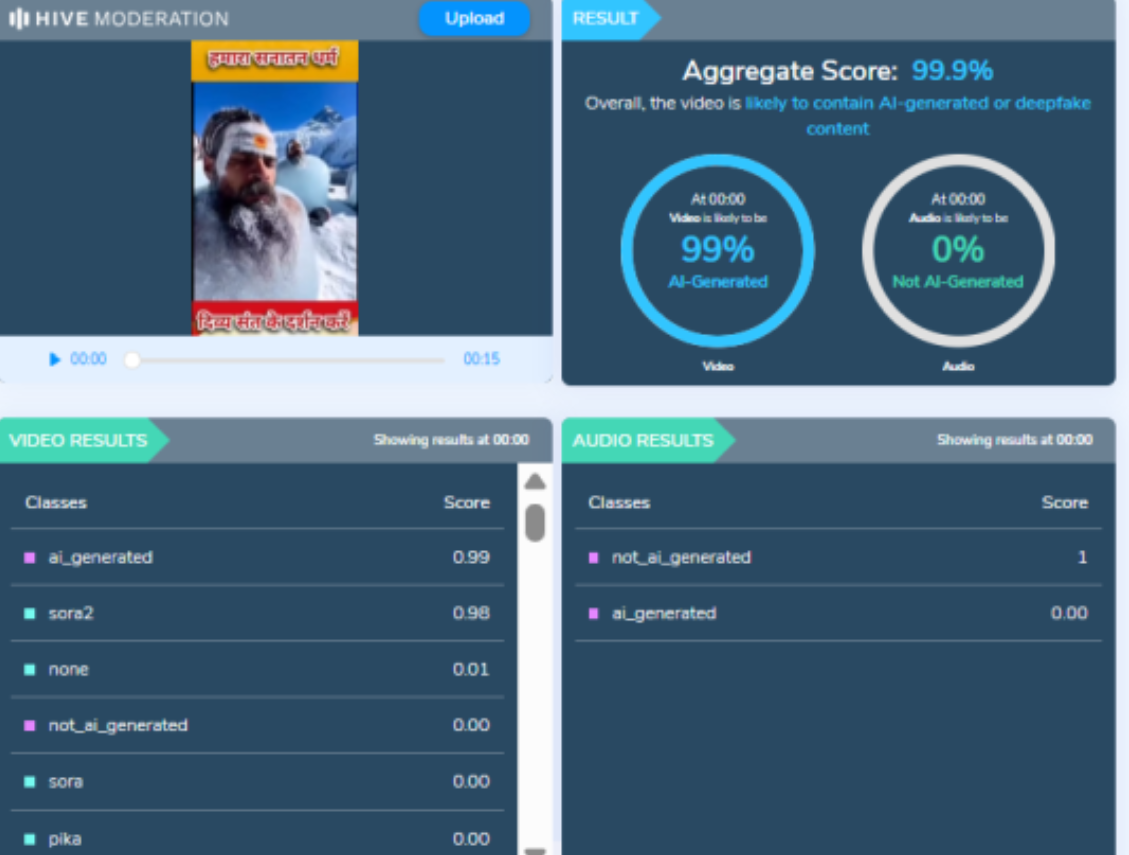

To verify the authenticity of the viral claim, CyberPeace searched relevant keywords on Google. However, no credible or reliable media reports supporting the claim were found. A close examination of the viral video raised suspicion that it may have been AI-generated. To verify this, the video was analysed using the AI detection tool Hive Moderation. According to the results, the tool rated the video as 99 percent likely to be AI-generated.

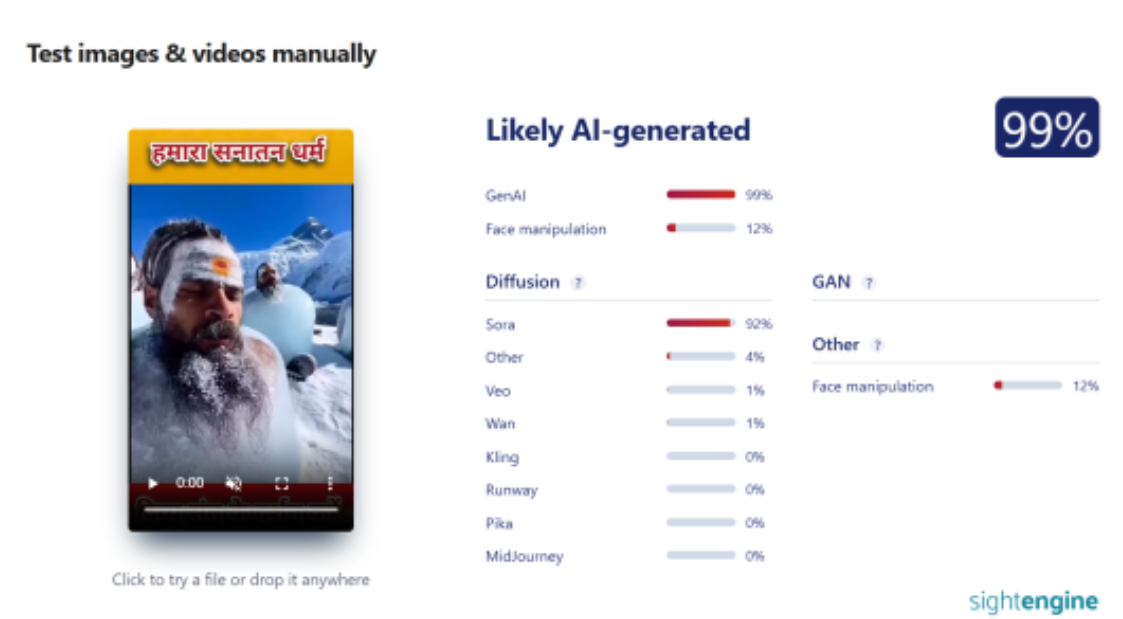

In the next step of the research, the same video was analysed using another AI detection tool, Sightengine. The results again indicated a 99 percent likelihood that the video was AI-generated.

Conclusion

CyberPeace concludes that the video circulating on social media is not real. The viral video showing ascetics covered in ice was generated using artificial intelligence and does not depict an actual religious or spiritual practice.

Apple has recently withdrawn the Advanced Data Protection feature for its customers in the UK. It did so following a request from the UK’s Home Office, which, using powers under the Investigatory Powers Act (IPA), demanded access to encrypted data stored in Apple’s cloud service. The Act compels firms to provide information to law enforcement. The move and its consequences have raised concerns and brought out differing perspectives on the balance between privacy and security, as well as on the roles of government authorities and tech firms.

What is Advanced Data Protection?

Advanced Data Protection is an opt-in feature, so users are not required to activate it. It is Apple’s strongest data security tool, providing end-to-end encryption for the data that the user chooses to protect. This differs from the standard (default) encryption that Apple provides for photos, back-ups, and notes, among other things. The flip side of such a strong security feature, from a user’s perspective, is that an account holder who loses access to the account loses the data as well, since Apple does not hold the keys needed to recover it.

As part of withdrawing the feature, new sign-ups have been halted, and the company is working on removing existing users’ access at a later date (yet to be confirmed). For UK users who had not enabled the feature, nothing changes. Those who now try to enable it, however, are met with a notification on the Advanced Data Protection settings page stating that the feature can no longer be enabled. It therefore remains unclear what will happen to the data stored by UK users who had already enabled the feature, since even Apple does not have access to it. It is important to note that withdrawing the feature does not ensure compliance with the Investigatory Powers Act (IPA), as the Act applies to tech firms worldwide that have a UK market. Apple has previously refused similar requests for access to data in the US.

Apple’s Stand on Encryption and Government Requests

The tech giant has resisted court orders, rejecting requests (made in 2016 and 2020) to write software that would allow officials to access iPhones used by gunmen. The UK Home Office’s demand is reportedly motivated by the role end-to-end encryption can play in concealing criminal activities such as child sexual abuse and terrorism, which hampers the efforts of security officials to catch the perpetrators. Over the years, Apple has repeatedly emphasised its reluctance to create a backdoor to its encrypted data, arguing that once such a pathway exists, the data becomes more vulnerable to attackers. The Salt Typhoon attack on the US telecommunication system is a recent example that has alerted officials, who now encourage the use of end-to-end encryption. Beyond this, such requests could set a dangerous precedent for how tech firms and governments operate together. This comes against the backdrop of the Paris AI Action Summit, where US Vice President J.D. Vance raised concerns regarding regulation. As per reports, Apple has now filed a legal complaint with the Investigatory Powers Tribunal, the UK’s judicial body that handles complaints about the use of surveillance powers by public authorities.

The Broader Debate on Privacy vs. Security

This standoff raises critical questions about how tech firms and governments should collaborate without compromising fundamental rights. Striking the right balance between privacy and regulation is imperative, ensuring security concerns are addressed without dismantling individual data protection. The outcome of Apple’s legal challenge to the demand made under the IPA may set a significant precedent for how encryption policies evolve in the future.

References

- https://www.bbc.com/news/articles/c20g288yldko

- https://www.bbc.com/news/articles/cgj54eq4vejo

- https://www.bbc.com/news/articles/cn524lx9445o

- https://www.yahoo.com/tech/apple-advanced-data-protection-why-184822119.html

- https://indianexpress.com/article/technology/tech-news-technology/apple-advanced-data-protection-removal-uk-9851486/

- https://www.techtarget.com/searchsecurity/news/366619638/Apple-pulls-Advanced-Data-Protection-in-UK-sparking-concerns

- https://www.computerweekly.com/news/366619614/Apple-withdraws-encrypted-iCloud-storage-from-UK-after-government-demands-back-door-access?_gl=1*1p1xpm0*_ga*NTE3NDk1NzQxLjE3MzEzMDA2NTc.*_ga_TQKE4GS5P9*MTc0MDc0MTA4Mi4zMS4xLjE3NDA3NDEwODMuMC4wLjA.

- https://www.theguardian.com/technology/2025/feb/21/apple-removes-advanced-data-protection-tool-uk-government

- https://proton.me/blog/protect-data-apple-adp-uk#:~:text=Proton-,Apple%20revoked%20advanced%20data%20protection%20

- https://www.theregister.com/2025/03/05/apple_reportedly_ipt_complaint/

- https://www.computerweekly.com/news/366616972/Government-agencies-urged-to-use-encrypted-messaging-after-Chinese-Salt-Typhoon-hack