#FactCheck

Executive Summary:

A viral video has circulated on social media, wrongly showing lawbreakers surrendering to the Indian Army. However, the verification performed shows that the video is of a group surrendering to the Bangladesh Army and is not related to India. The claim that it is related to the Indian Army is false and misleading.

Claims:

A viral video falsely claims that a group of lawbreakers is surrendering to the Indian Army, linking the footage to recent events in India.

Fact Check:

Upon receiving the viral posts, we analysed the keyframes of the video through Google Lens search. The search directed us to credible news sources in Bangladesh, which confirmed that the video was filmed during a surrender event involving criminals in Bangladesh, not India.

We further verified the video by cross-referencing it with official military and news reports from India. None of the sources supported the claim that the video involved the Indian Army. Instead, the video was linked to another similar Bangladesh Media covering the news.

No evidence was found in any credible Indian news media outlets that covered the video. The viral video was clearly taken out of context and misrepresented to mislead viewers.

Conclusion:

The viral video claiming to show lawbreakers surrendering to the Indian Army is footage from Bangladesh. The CyberPeace Research Team confirms that the video is falsely attributed to India, misleading the claim.

- Claim: The video shows miscreants surrendering to the Indian Army.

- Claimed on: Facebook, X, YouTube

- Fact Check: False & Misleading

Executive Summary:

A misleading video of a child covered in ash allegedly circulating as the evidence for attacks against Hindu minorities in Bangladesh. However, the investigation revealed that the video is actually from Gaza, Palestine, and was filmed following an Israeli airstrike in July 2024. The claim linking the video to Bangladesh is false and misleading.

Claims:

A viral video claims to show a child in Bangladesh covered in ash as evidence of attacks on Hindu minorities.

Fact Check:

Upon receiving the viral posts, we conducted a Google Lens search on keyframes of the video, which led us to a X post posted by Quds News Network. The report identified the video as footage from Gaza, Palestine, specifically capturing the aftermath of an Israeli airstrike on the Nuseirat refugee camp in July 2024.

The caption of the post reads, “Journalist Hani Mahmoud reports on the deadly Israeli attack yesterday which targeted a UN school in Nuseirat, killing at least 17 people who were sheltering inside and injuring many more.”

To further verify, we examined the video footage where the watermark of Al Jazeera News media could be seen, We found the same post posted on the Instagram account on 14 July, 2024 where we confirmed that the child in the video had survived a massacre caused by the Israeli airstrike on a school shelter in Gaza.

Additionally, we found the same video uploaded to CBS News' YouTube channel, where it was clearly captioned as "Video captures aftermath of Israeli airstrike in Gaza", further confirming its true origin.

We found no credible reports or evidence were found linking this video to any incidents in Bangladesh. This clearly implies that the viral video was falsely attributed to Bangladesh.

Conclusion:

The video circulating on social media which shows a child covered in ash as the evidence of attack against Hindu minorities is false and misleading. The investigation leads that the video originally originated from Gaza, Palestine and documents the aftermath of an Israeli air strike in July 2024.

- Claims: A video shows a child in Bangladesh covered in ash as evidence of attacks on Hindu minorities.

- Claimed by: Facebook

- Fact Check: False & Misleading

Executive Summary:

A viral picture on social media showing UK police officers bowing to a group of social media leads to debates and discussions. The investigation by CyberPeace Research team found that the image is AI generated. The viral claim is false and misleading.

Claims:

A viral image on social media depicting that UK police officers bowing to a group of Muslim people on the street.

Fact Check:

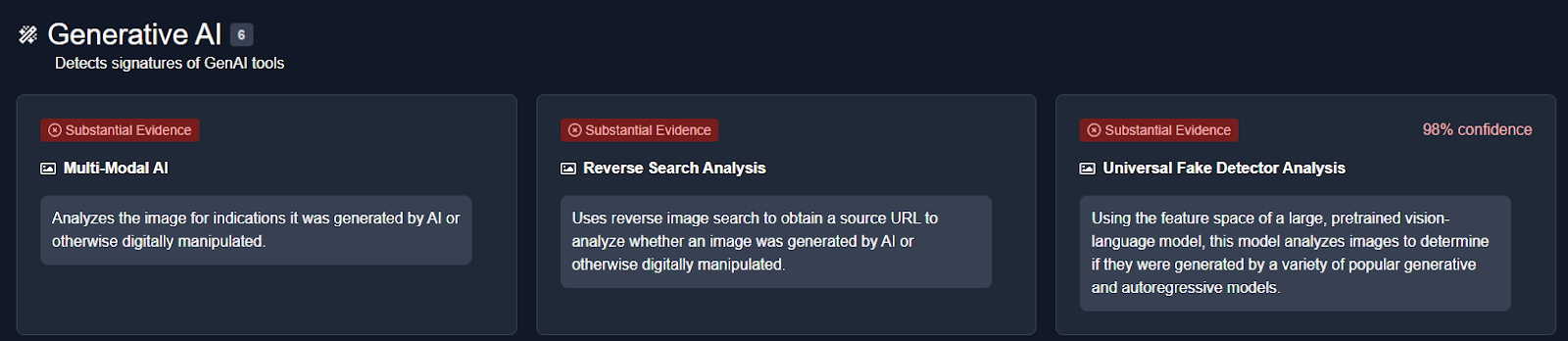

The reverse image search was conducted on the viral image. It did not lead to any credible news resource or original posts that acknowledged the authenticity of the image. In the image analysis, we have found the number of anomalies that are usually found in AI generated images such as the uniform and facial expressions of the police officers image. The other anomalies such as the shadows and reflections on the officers' uniforms did not match the lighting of the scene and the facial features of the individuals in the image appeared unnaturally smooth and lacked the detail expected in real photographs.

We then analysed the image using an AI detection tool named True Media. The tools indicated that the image was highly likely to have been generated by AI.

We also checked official UK police channels and news outlets for any records or reports of such an event. No credible sources reported or documented any instance of UK police officers bowing to a group of Muslims, further confirming that the image is not based on a real event.

Conclusion:

The viral image of UK police officers bowing to a group of Muslims is AI-generated. CyberPeace Research Team confirms that the picture was artificially created, and the viral claim is misleading and false.

- Claim: UK police officers were photographed bowing to a group of Muslims.

- Claimed on: X, Website

- Fact Check: Fake & Misleading

Executive Summary:

A video of Pakistani Olympic gold medalist and Javelin player Arshad Nadeem wishing Independence Day to the People of Pakistan, with claims of snoring audio in the background is getting viral. CyberPeace Research Team found that the viral video is digitally edited by adding the snoring sound in the background. The original video published on Arshad's Instagram account has no snoring sound where we are certain that the viral claim is false and misleading.

Claims:

A video of Pakistani Olympic gold medalist Arshad Nadeem wishing Independence Day with snoring audio in the background.

Fact Check:

Upon receiving the posts, we thoroughly checked the video, we then analyzed the video in TrueMedia, an AI Video detection tool, and found little evidence of manipulation in the voice and also in face.

We then checked the social media accounts of Arshad Nadeem, we found the video uploaded on his Instagram Account on 14th August 2024. In that video, we couldn’t hear any snoring sound.

Hence, we are certain that the claims in the viral video are fake and misleading.

Conclusion:

The viral video of Arshad Nadeem with a snoring sound in the background is false. CyberPeace Research Team confirms the sound was digitally added, as the original video on his Instagram account has no snoring sound, making the viral claim misleading.

- Claim: A snoring sound can be heard in the background of Arshad Nadeem's video wishing Independence Day to the people of Pakistan.

- Claimed on: X,

- Fact Check: Fake & Misleading

Executive Summary:

The viral video circulating on social media about the Indian men’s 4x400m relay team recently broke the Asian record and qualified for the finals of the world Athletics championship. The fact check reveals that this is not a recent event but it is from the World World Athletics Championships, August 2023 that happened in Budapest, Hungary. The Indian team comprising Muhammed Anas Yahiya, Amoj Jacob, Muhammed Ajmal Variyathodi, and Rajesh Ramesh, clocked a time of 2 minutes 59.05 seconds, finishing second behind the USA and breaking the Asian record. Although they performed very well in the heats, they only got fifth place in the finals. The video is being reuploaded with false claims stating its a recent record.

Claims:

A recent claim that the Indian men’s 4x400m relay team set the Asian record and qualified to the world finals.

Fact Check:

In the recent past, a video of the Indian Men’s 4x400m relay team which set a new Asian record is viral on different Social Media. Many believe that this is a video of the recent achievement of the Indian team. Upon receiving the posts, we did keyword searches based on the input and we found related posts from various social media. We found an article published by ‘The Hindu’ on August 27, 2023.

According to the article, the Indian team competed in the World Athletics Championship held in Budapest, Hungary. During that time, the team had a very good performance. The Indian team, which consisted of Muhammed Anas Yahiya, Amoj Jacob, Muhammed Ajmal Variyathodi, and Rajesh Ramesh, completed the race in 2:58.47 seconds, coming second after the USA in the event.

The earlier record was 3.00.25 which was set in 2021.

This was a new record in Asia, so it was a historic moment for India. Despite their great success, this video is being reshared with captions that implies this is a recent event, which has raised confusion. We also found various social media posts posted on Aug 26, 2023. We also found the same video posted on the official X account of Prime Minister Narendra Modi, the caption of the post reads, “Incredible teamwork at the World Athletics Championships!

Anas, Amoj, Rajesh Ramesh, and Muhammed Ajmal sprinted into the finals, setting a new Asian Record in the M 4X400m Relay.

This will be remembered as a triumphant comeback, truly historical for Indian athletics.”

This reveals that this is not a recent event but it is from the World World Athletics Championships, August 2023 that happened in Budapest, Hungary.

Conclusion:

The viral video of the recent news about the Indian men’s 4x400m relay team breaking the Asian record is not true. The video was from August 2023 that happened at the World Athletics Championships, Budapest. The Indian team broke the Asian record with 2 minutes 59.05 seconds in second position while the US team obtained first position with a timing of 2 minutes 58.47 seconds. However, the video circulated projecting as a recent event is misleading and false.

- Claim: Recent achievement of the Indian men's 4x400m relay team broke the Asian record and qualified for the World finals.

- Claimed on: X, LinkedIn, Instagram

- Fact Check: Fake & Misleading

Executive Summary:

A viral photo on social media claims to show a ruined bridge in Kerala, India. But, a reality check shows that the bridge is in Amtali, Barguna district, Bangladesh. The reverse image search of this picture led to a Bengali news article detailing the bridge's critical condition. This bridge was built-in 2002 to 2006 over Jugia Khal in Arpangashia Union. It has not been repaired and experiences recurrent accidents and has the potential to collapse, which would disrupt local connectivity. Thus, the social media claims are false and misleading.

Claims:

Social Media users share a photo that shows a ruined bridge in Kerala, India.

Fact Check:

On receiving the posts, we reverse searched the image which leads to a Bengali News website named Manavjamin where the title displays, “19 dangerous bridges in Amtali, lakhs of people in fear”. We found the picture on this website similar to the viral image. On reading the whole article, we found that the bridge is located in Bangladesh's Amtali sub-district of Barguna district.

Taking a cue from this, we then searched for the bridge in that region. We found a similar bridge at the same location in Amtali, Bangladesh.

According to the article, The 40-meter bridge over Jugia Khal in Arpangashia Union, Amtali, was built in 2002 to 2006 and was never repaired. It is in a critical condition, causing frequent accidents and risking collapse. If the bridge collapses it will disrupt communication between multiple villages and the upazila town. Residents have made temporary repairs.

Hence, the claims made by social media users are fake and misleading.

Conclusion:

In conclusion, the viral photo claiming to show a ruined bridge in Kerala is actually from Amtali, Barguna district, Bangladesh. The bridge is in a critical state, with frequent accidents and the risk of collapse threatening local connectivity. Therefore, the claims made by social media users are false and misleading.

- Claim: A viral image shows a ruined bridge in Kerala, India.

- Claimed on: Facebook

- Fact Check: Fake & Misleading

Executive Summary:

A photo claiming that Mr. Rowan Atkinson, the famous actor who played the role of Mr. Bean, lying sick on bed is circulating on social media. However, this claim is false. The image is a digitally altered picture of Mr.Barry Balderstone from Bollington, England, who died in October 2019 from advanced Parkinson’s disease. Reverse image searches and media news reports confirm that the original photo is of Barry, not Rowan Atkinson. Furthermore, there are no reports of Atkinson being ill; he was recently seen attending the 2024 British Grand Prix. Thus, the viral claim is baseless and misleading.

Claims:

A viral photo of Rowan Atkinson aka Mr. Bean, lying on a bed in sick condition.

Fact Check:

When we received the posts, we first did some keyword search based on the claim made, but no such posts were found to support the claim made.Though, we found an interview video where it was seen Mr. Bean attending F1 Race on July 7, 2024.

Then we reverse searched the viral image and found a news report that looked similar to the viral photo of Mr. Bean, the T-Shirt seems to be similar in both the images.

The man in this photo is Barry Balderstone who was a civil engineer from Bollington, England, died in October 2019 due to advanced Parkinson’s disease. Barry received many illnesses according to the news report and his application for extensive healthcare reimbursement was rejected by the East Cheshire Clinical Commissioning Group.

Taking a cue from this, we then analyzed the image in an AI Image detection tool named, TrueMedia. The detection tool found the image to be AI manipulated. The original image is manipulated by replacing the face with Rowan Atkinson aka Mr. Bean.

Hence, it is clear that the viral claimed image of Rowan Atkinson bedridden is fake and misleading. Netizens should verify before sharing anything on the internet.

Conclusion:

Therefore, it can be summarized that the photo claiming Rowan Atkinson in a sick state is fake and has been manipulated with another man’s image. The original photo features Barry Balderstone, the man who was diagnosed with stage 4 Parkinson’s disease and subsequently died in 2019. In fact, Rowan Atkinson seemed perfectly healthy recently at the 2024 British Grand Prix. It is important for people to check on the authenticity before sharing so as to avoid the spreading of misinformation.

- Claim: A Viral photo of Rowan Atkinson aka Mr. Bean, lying on a bed in a sick condition.

- Claimed on: X, Facebook

- Fact Check: Fake & Misleading

.webp)

Executive Summary:

Cyber incidents are evolving along with time, they are designed to attract and lure people through social networking sites and/or messaging services. In the recent past a spate of messages alleging that TRAI is offering ‘3 months free recharge with free voice calls and internet for 4g/5g with 200 GB free data’. These messages display the TRAI logo with attractive offers to trick the users into revealing their personal details. This blog discusses the functioning of this free mobile recharge scheme, its methods and guidelines on how to avoid such fake schemes. This blog explains the importance of vigilance and verification when receiving any links, emphasizing the need to report suspicious activities and educate others to prevent identity theft and protect personal information.

Claim:

The message circulated an enticing offer: free mobile recharge for 3 months which provides unlimited free voice calls with 200GB 4G/5G data with TRAI logo. The key characteristics of the false claims are

- Official Branding: The logo of TRAI has been viewed as a deceptive facade of credibility.

- Unrealistic Offers: It is accompanied by a free recharge , which is intended for an extended period indefinite period, like most fraudsters’ bait.

- Urgency and Exclusivity: The offer is for a limited time to make urgency forcing the receiver to take the offer without confirmation.

The Deceptive Scheme:

Organized systematically, the fraudulent campaign usually proceeds in several steps, all of which aim at extracting the victim’s personal data. Here’s a breakdown of the scheme:

1. Initial Contact: Such messages or calls reach the users’ inboxes or phone numbers through social media applications such as WhatsApp or through text messages. These messages further implies that the user was chosen for the special offer from TRAI, which elicits the interest of the user.

2. Information Request: To claim the purported offer, users are directed to a website or asked to reply with personal details, including:

- Phone number

- State of residence

- SIM provider details

This is useful for the scammers as they harvest information which can be used to conduct identity theft or sold to others on the shady part of the internet known as the ‘Dark Web’.

3. Fake Confirmation: After providing all the information, a congratulatory message appears on the screen showing that their phone number is eligible for the offer. The user is compelled to forward the message to many phone numbers through whatsapp to get the offer.

4. Pressure Tactics: The message often implies a sense of time constraint or fear which psychologically produces pressure to provide all the user information. For example, users are given messages such as that if they do not ‘act now’, they will lose their mobile service.

Analyzing the Fraudulent Campaign

The TRAI fraudulent recharge scheme case depicts that social engineering is used in cyber crimes. Here are some key aspects that characterize this campaign:

- Sophisticated Social Engineering

Scammers take advantage of the holders’ confidence in official bodies such as TRAI. By using official TRAI logos, official language they try to deceive even cautious people.

- Viral Spread

The user is compelled to share the given message to friends and groups; this is an excellent strategy to spread the scam. It not only spreads the fraudulent message but also tries to extract the details of other people.

- Technical Analysis

- Domain Name: SGOFF[.]CYOU

- Registry Domain ID: D472308342-CNIC

- Registrar WHOIS Server: whois.hkdns.hk

- Registrar URL: http://www.hkdns.hk

- Updated Date: 2024-07-24T18:50:48.0Z

- Creation Date: 2024-07-19T18:48:44.0Z

- Registry Expiry Date: 2025-07-19T23:59:59.0Z

- Registrar: West263 International Limited

- Registrar IANA ID: 1915

- Registrant State/Province: Anhui

- Registrant Country: CN

- Name Server: NORMAN.NS.CLOUDFLARE.COM

- Name Server: PAM.NS.CLOUDFLARE.COM

- DNSSEC: unsigned

Cloudflare Inc. is used to cover the scam. The real website always uses the older domain while this url has been registered recently which indicates that this link is a scam.

The graph indicates that some of the communicated files and websites are malicious.

CyberPeace Advisory and Best Practice:

In light of the growing threat posed by such scams, the Research Wing of CyberPeace recommend the following best practices to help users protect themselves:

1. Verify Communications: It is always advisable to visit the official site of the organization or call the official contact numbers of the company to speak to their customer care and clarify about the offers.

2. Do not share personal information: No genuine organization will call the people for personal information. Step carefully and do not provide personal information that will lead to identity theft when dealing with such offers.

3. Report Fraudulent Activity: If one receives any calls or messages that seem to be suspicious, then the user can report cyber crimes to the National Cyber Crime Reporting Portal on www. cybercrime. gov. in or call on 1930. Such scams are reportable and assist the authorities in tracking and fighting the vice.

4. Educate Others : Always raise awareness among friends by sharing these kinds of scams. Educating people helps to avoid them falling prey to such fraudulent schemes.

5. Use Reliable Resources : Always refer to official sources or websites for any kind of offers or promotions.

Conclusion:

The free recharge scheme for 3 months with the logo of TRAI is a fraudulent scam. There is no official information from TRAI or in their official website about this free recharge scheme. Though the scheme looks attractive, it is deceptive. Through this, the scammers are trying to collect personal details of the individual. Before clicking any links, it is necessary to check the authenticity of the information, report these kinds of incidents to spread awareness among people. Always be safe and be vigilant.

Executive Summary:

A video online alleges that people are chanting "India India" as Ohio Senator J.D. Vance meets them at the Republican National Convention (RNC). This claim is not correct. The CyberPeace Research team’s investigation showed that the video was digitally changed to include the chanting. The unaltered video was shared by “The Wall Street Journal” and confirmed via the YouTube channel of “Forbes Breaking News”, which features different music performing while Mr. and Mrs. Usha Vance greeted those present in the gathering. So the claim that participants chanted "India India" is not real.

Claims:

A video spreading on social media shows attendees chanting "India-India" as Ohio Senator J.D. Vance and his wife, Usha Vance greet them at the Republican National Convention (RNC).

Fact Check:

Upon receiving the posts, we did keyword search related to the context of the viral video. We found a video uploaded by The Wall Street Journal on July 16, titled "Watch: J.D. Vance Is Nominated as Vice Presidential Nominee at the RNC," at the time stamp 0:49. We couldn’t hear any India-India chants whereas in the viral video, we can clearly hear it.

We also found the video on the YouTube channel of Forbes Breaking News. In the timestamp at 3:00:58, we can see the same clip as the viral video but no “India-India” chant could be heard.

Hence, the claim made in the viral video is false and misleading.

Conclusion:

The viral video claiming to show "India-India" chants during Ohio Senator J.D. Vance's greeting at the Republican National Convention is altered. The original video, confirmed by sources including “The Wall Street Journal” and “Forbes Breaking News” features different music without any such chants. Therefore, the claim is false and misleading.

Claim: A video spreading on social media shows attendees chanting "India-India" as Ohio Senator J.D. Vance and his wife, Usha Vance greet them at the Republican National Convention (RNC).

Claimed on: X

Fact Check: Fake & Misleading

Executive Summary:

A new threat being uncovered in today’s threat landscape is that while threat actors took an average of one hour and seven minutes to leverage Proof-of-Concept(PoC) exploits after they went public, now the time is at a record low of 22 minutes. This incredibly fast exploitation means that there is very limited time for organizations’ IT departments to address these issues and close the leaks before they are exploited. Cloudflare released the Application Security report which shows that the attack percentage is more often higher than the rate at which individuals invent and develop security countermeasures like the WAF rules and software patches. In one case, Cloudflare noted an attacker using a PoC-based attack within a mere 22 minutes from the moment it was released, leaving almost no time for a remediation window.

Despite the constant growth of vulnerabilities in various applications and systems, the share of exploited vulnerabilities, which are accompanied by some level of public exploit or PoC code, has remained relatively stable over the past several years and fluctuates around 50%. These vulnerabilities with publicly known exploit code, 41% was initially attacked in the zero-day mode while of those with no known code, 84% was first attacked in the same mode.

Modus Operandi:

The modus operandi of the attack involving the rapid weaponization of proof-of-concept (PoC) exploits is characterized by the following steps:

- Vulnerability Identification: Threat actors bring together the exploitation of a system vulnerability that may be in the software or hardware of the system; this may be a code error, design failure, or a configuration error. This is normally achieved using vulnerability scanners and test procedures that have to be performed manually.

- Vulnerability Analysis: After the vulnerability is identified, the attackers study how it operates to determine when and how it can be triggered and what consequences that action will have. This means that one needs to analyze the details of the PoC code or system to find out the connection sequence that leads to vulnerability exploitation.

- Exploit Code Development: Being aware of the weakness, the attackers develop a small program or script denoted as the PoC that addresses exclusively the identified vulnerability and manipulates it in a moderated manner. This particular code is meant to be utilized in showing a particular penalty, which could be unauthorized access or alteration of data.

- Public Disclosure and Weaponization: The PoC exploit is released which is frequently done shortly after the vulnerability has been announced to the public. This makes it easier for the attackers to exploit it while waiting for the software developer to release the patch. To illustrate, Cloudflare has spotted an attacker using the PoC-based exploit 22 minutes after the publication only.

- Attack Execution: The attackers then use the weaponized PoC exploit to attack systems which are known to be vulnerable to it. Some of the actions that are tried in this context are attempts at running remote code, unauthorized access and so on. The pace at which it happens is often much faster than the pace at which humans put in place proper security defense mechanisms, such as the WAF rules or software application fixes.

- Targeted Operations: Sometimes, they act as if it’s a planned operation, where the attackers are selective in the system or organization to attack. For example, exploitation of CVE-2022-47966 in ManageEngine software was used during the espionage subprocess, where to perform such activity, the attackers used the mentioned vulnerability to install tools and malware connected with espionage.

Precautions: Mitigation

Following are the mitigating measures against the PoC Exploits:

1. Fast Patching and New Vulnerability Handling

- Introduce proper patching procedures to address quickly the security released updates and disclosed vulnerabilities.

- Focus should be made on the patching of those vulnerabilities that are observed to be having available PoC exploits, which often risks being exploited almost immediately.

- It is necessary to frequently check for the new vulnerability disclosures and PoC releases and have a prepared incident response plan for this purpose.

2. Leverage AI-Powered Security Tools

- Employ intelligent security applications which can easily generate desirable protection rules and signatures as attackers ramp up the weaponization of PoC exploits.

- Step up use of artificial intelligence (AI) - fueled endpoint detection and response (EDR) applications to quickly detect and mitigate the attempts.

- Integrate Artificial Intelligence based SIEM tools to Detect & analyze Indicators of compromise to form faster reaction.

3. Network Segmentation and Hardening

- Use strong networking segregation to prevent the attacker’s movement across the network and also restrict the effects of successful attacks.

- Secure any that are accessible from the internet, and service or protocols such as RDP, CIFS, or Active directory.

- Limit the usage of native scripting applications as much as possible because cyber attackers may exploit them.

4. Vulnerability Disclosure and PoC Management

- Inform the vendors of the bugs and PoC exploits and make sure there is a common understanding of when they are reported, to ensure fast response and mitigation.

- It is suggested to incorporate mechanisms like digital signing and encryption for managing and distributing PoC exploits to prevent them from being accessed by unauthorized persons.

- Exploits used in PoC should be simple and independent with clear and meaningful variable and function names that help reduce time spent on triage and remediation.

5. Risk Assessment and Response to Incidents

- Maintain constant supervision of the environment with an intention of identifying signs of a compromise, as well as, attempts of exploitation.

- Support a frequent detection, analysis and fighting of threats, which use PoC exploits into the system and its components.

- Regularly communicate with security researchers and vendors to understand the existing threats and how to prevent them.

Conclusion:

The rapid process of monetization of Proof of Concept (POC) exploits is one of the most innovative and constantly expanding global threats to cybersecurity at the present moment. Cyber security experts must react quickly while applying a patch, incorporate AI to their security tools, efficiently subdivide their networks and always heed their vulnerability announcements. Stronger incident response plan would aid in handling these kinds of menaces. Hence, applying measures mentioned above, the organizations will be able to prevent the acceleration of turning PoC exploits into weapons and the probability of neutral affecting cyber attacks.

Reference:

https://www.mayrhofer.eu.org/post/vulnerability-disclosure-is-positive/

https://www.uptycs.com/blog/new-poc-exploit-backdoor-malware

https://www.balbix.com/insights/attack-vectors-and-breach-methods/

https://blog.cloudflare.com/application-security-report-2024-update

Executive Summary:

Viral pictures featuring US Secret Service agents smiling while protecting former President Donald Trump during a planned attempt to kill him in Pittsburgh have been clarified as photoshopped pictures. The pictures making the rounds on social media were produced by AI-manipulated tools. The original image shows no smiling agents found on several websites. The event happened with Thomas Mathew Crooks firing bullets at Trump at an event in Butler, PA on July 13, 2024. During the incident one was deceased and two were critically injured. The Secret Service stopped the shooter, and circulating photos in which smiles were faked have stirred up suspicion. The verification of the face-manipulated image was debunked by the CyberPeace Research Team.

Claims:

Viral photos allegedly show United States Secret Service agents smiling while rushing to protect former President Donald Trump during an attempted assassination in Pittsburgh, Pennsylvania.

Fact Check:

Upon receiving the posts, we searched for any credible source that supports the claim made, we found several articles and images of the incident but in those the images were different.

This image was published by CNN news media, in this image we can see the US Secret Service protecting Donald Trump but not smiling. We then checked for AI Manipulation in the image using the AI Image Detection tool, True Media.

We then checked with another AI Image detection tool named, contentatscale AI image detection, which also found it to be AI Manipulated.

Comparison of both photos:

Hence, upon lack of credible sources and detection of AI Manipulation concluded that the image is fake and misleading.

Conclusion:

The viral photos claiming to show Secret Service agents smiling when protecting former President Donald Trump during an assassination attempt have been proven to be digitally manipulated. The original image found on CNN Media shows no agents smiling. The spread of these altered photos resulted in misinformation. The CyberPeace Research Team's investigation and comparison of the original and manipulated images confirm that the viral claims are false.

- Claim: Viral photos allegedly show United States Secret Service agents smiling while rushing to protect former President Donald Trump during an attempted assassination in Pittsburgh, Pennsylvania.

- Claimed on: X, Thread

- Fact Check: Fake & Misleading

Executive Summary:

Several videos claiming to show bizarre, mutated animals with features such as seal's body and cow's head have gone viral on social media. Upon thorough investigation, these claims were debunked and found to be false. No credible source of such creatures was found and closer examination revealed anomalies typical of AI-generated content, such as unnatural leg movements, unnatural head movements and joined shoes of spectators. AI material detectors confirmed the artificial nature of these videos. Further, digital creators were found posting similar fabricated videos. Thus, these viral videos are conclusively identified as AI-generated and not real depictions of mutated animals.

Claims:

Viral videos show sea creatures with the head of a cow and the head of a Tiger.

Fact Check:

On receiving several videos of bizarre mutated animals, we searched for credible sources that have been covered in the news but found none. We then thoroughly watched the video and found certain anomalies that are generally seen in AI manipulated images.

Taking a cue from this, we checked all the videos in the AI video detection tool named TrueMedia, The detection tool found the audio of the video to be AI-generated. We divided the video into keyframes, the detection found the depicting image to be AI-generated.

In the same way, we investigated the second video. We analyzed the video and then divided the video into keyframes and analyzed it with an AI-Detection tool named True Media.

It was found to be suspicious and so we analyzed the frame of the video.

The detection tool found it to be AI-generated, so we are certain with the fact that the video is AI manipulated. We analyzed the final third video and found it to be suspicious by the detection tool.

The detection tool found the frame of the video to be A.I. manipulated from which it is certain that the video is A.I. manipulated. Hence, the claim made in all the 3 videos is misleading and fake.

Conclusion:

The viral videos claiming to show mutated animals with features like seal's body and cow's head are AI-generated and not real. A thorough investigation by the CyberPeace Research Team found multiple anomalies in AI-generated content and AI-content detectors confirmed the manipulation of A.I. fabrication. Therefore, the claims made in these videos are false.

- Claim: Viral videos show sea creatures with the head of a cow, the head of a Tiger, head of a bull.

- Claimed on: YouTube

- Fact Check: Fake & Misleading