#FactCheck - Viral Videos of Mutated Animals Debunked as AI-Generated

Executive Summary:

Several videos claiming to show bizarre, mutated animals with features such as a seal's body and a cow's head have gone viral on social media. Upon thorough investigation, these claims were found to be false. No credible source reporting such creatures was found, and closer examination revealed anomalies typical of AI-generated content, such as unnatural leg movements, unnatural head movements and spectators' shoes that appear joined together. AI-content detection tools confirmed the artificial nature of these videos. Further, digital creators were found posting similar fabricated videos. Thus, these viral videos are conclusively identified as AI-generated and not real depictions of mutated animals.

Claims:

Viral videos show sea creatures with the head of a cow and the head of a tiger.

Fact Check:

On receiving several videos of bizarre mutated animals, we searched for credible news coverage of such creatures but found none. We then watched the videos closely and found certain anomalies that are generally seen in AI-manipulated imagery.

Taking a cue from this, we checked all the videos in an AI video detection tool named TrueMedia. The detection tool found the audio of the video to be AI-generated. We then divided the video into keyframes, and the tool found the image depicted in them to be AI-generated.

In the same way, we investigated the second video. We divided the video into keyframes and analyzed them with the same AI detection tool, TrueMedia.

It was found to be suspicious and so we analyzed the frame of the video.

The detection tool found it to be AI-generated, so we are certain that the video is AI-manipulated. We then analyzed the third and final video, which the detection tool also found to be suspicious.

The detection tool found the frame of the video to be AI-manipulated, from which it is certain that the video is AI-manipulated. Hence, the claims made in all three videos are misleading and fake.

Conclusion:

The viral videos claiming to show mutated animals with features like a seal's body and a cow's head are AI-generated and not real. A thorough investigation by the CyberPeace Research Team found multiple anomalies typical of AI-generated content, and AI-content detectors confirmed that the videos were fabricated using AI. Therefore, the claims made in these videos are false.

- Claim: Viral videos show sea creatures with the head of a cow, the head of a tiger, and the head of a bull.

- Claimed on: YouTube

- Fact Check: Fake & Misleading

Related Blogs

Introduction

As digital platforms rapidly become repositories of information related to health, YouTube has emerged as a trusted source people look to for answers. To counter rampant health misinformation online, the platform launched YouTube Health, a program aiming to make “high-quality health information available to all” by collaborating with health experts and content creators. While this is an effort in the right direction, the program needs to be tailored to the specificities of the Indian context if it aims to transform healthcare communication in the long run.

The Indian Digital Health Context

India’s growing internet penetration and lack of accessible healthcare infrastructure, especially in rural areas, facilitate a reliance on digital platforms for health information. However, these platforms, especially social media, are rife with misinformation. Compounded by varying literacy levels, access disparities, and a lack of digital awareness, health misinformation can lead to serious negative health outcomes. The report ‘Health Misinformation Vectors in India’ by DataLEADS suggests a growing reluctance surrounding conventional medicine, with people looking for affordable and accessible natural remedies instead. Social media helps facilitate this shift, and media-sharing platforms such as WhatsApp, YouTube, and Facebook host a large chunk of health misinformation. The report identifies cancer, reproductive health, vaccines, and lifestyle diseases as four key areas susceptible to misinformation in India.

YouTube’s Efforts in Promoting Credible Health Content

YouTube Health aims to provide evidence-based health information with “digestible, compelling, and emotionally supportive health videos,” from leading experts to everyone irrespective of who they are or where they live. So far, it executes this vision through:

- Content Curation: The platform has health source information panels and content shelves that highlight videos on 140+ medical conditions from authoritative sources such as the All India Institute of Medical Sciences (AIIMS), the National Institute of Mental Health and Neurosciences (NIMHANS), and Max Healthcare whenever users search for health-related topics.

- Localization Strategies: The platform offers multilingual health content in regional languages such as Hindi, Tamil, Telugu, Marathi, Kannada, Malayalam, Punjabi, and Bengali, apart from English. This is to help health information reach viewers across most of the country.

- Verification of professionals: Healthcare professionals and organisations can apply to YouTube’s health feature for their videos to be authenticated as an authority health source on the platform and for their videos to show up on the ‘Health Sources’ shelf.

Challenges

- Limited Reach: India has a diverse linguistic ecosystem. While health information is made available in over 8 languages, the number is not enough to reach everyone in the country. Efforts to reach more people in vernacular languages need to be ramped up. Further, while there were around 50 billion views of health content on YouTube in 2023, it is difficult to measure the on-ground outcomes of those views.

- Lack of Digital Literacy: Misinformation on digital platforms cannot be entirely curtailed owing to the way algorithms are designed to enhance user engagement. However, uploading authoritative health information as a solution may not be enough, if users lack awareness about misinformation and the need to critically evaluate and trust only credible sources. In India, this critical awareness remains largely underdeveloped.

Conclusion

Considering that India has over 450 million YouTube users, by far the highest number in any country in the world, the platform has recognized that it can play a transformative role in the country’s digital health ecosystem. To accomplish its mission “to combat the societal threat of medical misinformation,” YouTube will have to continue to take several proactive measures. There is scope for strengthening collaborations with Indian public health agencies and trusted public figures, national and regional, to provide credible health information to all. The approach will have to be tailored to India’s vast linguistic diversity by encouraging capacity-building for vernacular creators to produce credible content. Finally, multiple stakeholders will need to come together to promote digital literacy through education campaigns about identifying trustworthy sources.

Sources

- https://indianexpress.com/article/technology/tech-news-technology/youtube-health-dr-garth-graham-interview-9746673/

- https://economictimes.indiatimes.com/news/india/cancer-misinformation-extremely-prevalent-in-india-trust-in-science-medicine-crucial-report/articleshow/115931783.cms?from=mdr

- https://health.youtube/our-mission/

- https://health.youtube/features-application/

- https://backlinko.com/youtube-users

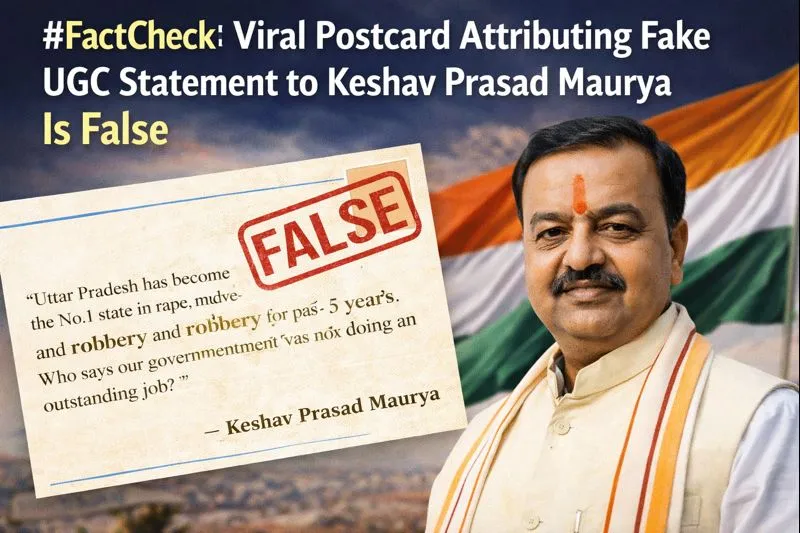

Executive Summary

A postcard claiming that Uttar Pradesh Deputy Chief Minister Keshav Prasad Maurya commented on the Supreme Court’s stay on the new UGC regulations is being widely shared on social media. The viral postcard suggests that Maurya stated the Modi government would “fight till its last breath” to implement the UGC law and appealed to Dalit, backward and tribal communities to trust the government as their true well-wisher. However, research by CyberPeace has found that the viral postcard is fake. Keshav Prasad Maurya has not made any such statement.

Claim

A Facebook user shared the postcard with the caption: “Now read it yourself. Statement of Deputy CM Keshav Prasad Maurya — the Modi government will fight till its last breath to implement the UGC law. An appeal to Dalit, backward and tribal communities to trust the government, calling it their true well-wisher.”

(Archived version of the post available here.)

Fact Check:

During the research, we did not find any credible news reports mentioning such a statement by Deputy Chief Minister Keshav Prasad Maurya regarding the UGC regulations or the Supreme Court’s order. A closer examination of the viral postcard revealed several inconsistencies. Notably, the text on the postcard lacks proper punctuation, such as commas and full stops, which is unusual for professionally designed news graphics. The postcard carries the logo of Navbharat Times (NBT). However, when compared with genuine NBT postcards, the font style used in the viral image does not match NBT’s official design. We also traced the original NBT postcard that appears to have been edited to create the fake one. In the authentic postcard, shared by NBT on January 20, Keshav Prasad Maurya is quoted as saying: “Where the lotus has bloomed, it will continue to bloom, and where it has not, under the guidance of PM Modi and the leadership of Nitin Nabin, the lotus will bloom.”

The original statement was digitally altered, and a fabricated quote was inserted to create the viral postcard.

Conclusion

CyberPeace research clearly establishes that the viral postcard is fake. The original Navbharat Times postcard has been tampered with, and Keshav Prasad Maurya’s actual statement has been replaced with a fabricated quote, which is now being circulated with a misleading claim.

Introduction

In an era when misinformation spreads like wildfire across the digital landscape, the need for effective strategies to counteract these challenges has grown exponentially in a very short period. Prebunking and Debunking are two approaches for countering the growing spread of misinformation online. Prebunking empowers individuals by teaching them to discern between true and false information and acts as a protective layer that comes into play even before people encounter malicious content. Debunking is the correction of false or misleading claims after exposure, aiming to undo or reverse the effects of a particular piece of misinformation. Debunking includes methods such as fact-checking, algorithmic correction on a platform, social correction by an individual or group of online peers, or fact-checking reports by expert organisations or journalists. An integrated approach which involves both strategies can be effective in countering the rapid spread of misinformation online.

Brief Analysis of Prebunking

Prebunking is a proactive practice that seeks to rebut erroneous information before it spreads. The goal is to train people to critically analyse information and develop ‘cognitive immunity’ so that they are less likely to be misled when they do encounter misinformation.

The Prebunking approach, grounded in Inoculation theory, teaches people to recognise, analyse and avoid manipulation and misleading content so that they build resilience against it. Inoculation theory, a social psychology framework, suggests that pre-emptively conferring psychological resistance against malicious persuasion attempts can reduce susceptibility to misinformation across cultures. As the term suggests, the idea is to help the mind develop resistance in the present to influence that it may encounter in the future. Just as medical vaccines or inoculations help the body build resistance to future infections by administering weakened doses of the harmful agent, inoculation theory seeks to teach people to tell fact from fiction through exposure to examples of weak, dichotomous arguments, manipulation tactics like emotionally charged language, case studies that draw parallels between truths and distortions, and so on. In showing people the difference, inoculation theory teaches them to be on the lookout for misinformation and manipulation even, or especially, when they least expect it.

The core difference between Prebunking and Debunking is that while the former is preventative and seeks to provide a broad-spectrum cover against misinformation, the latter is reactive and focuses on specific instances of misinformation. While Debunking is closely tied to fact-checking, Prebunking is tied to a wider range of specific interventions, some of which increase the motivation to be vigilant against misinformation while others increase the ability to exercise that vigilance successfully.

There is much to be said in favour of the Prebunking approach because these interventions build the capacity to identify misinformation and recognise red flags. However, their success in practice may vary. It might be difficult to scale up Prebunking efforts and ensure their reach to a larger audience. Sustainability is critical in ensuring that Prebunking measures maintain their impact over time. Continuous reinforcement and reminders may be required to ensure that individuals retain the skills and information they gained from the Prebunking training activities. Misinformation tactics and strategies are always evolving, so it is critical that Prebunking interventions are also flexible and agile and respond promptly to developing challenges. This may be easier said than done, but with new misinformation and cyber threats developing frequently, it is a challenge that has to be addressed for Prebunking to be a successful long-term solution.

Encouraging people to be actively cautious while interacting with information, acquire critical thinking abilities, and reject the effect of misinformation requires a significant behavioural change over a relatively short period of time. Overcoming ingrained habits and prejudices, and countering a natural reluctance to change is no mean feat. Developing a widespread culture of information literacy requires years of social conditioning and unlearning and may pose a significant challenge to the effectiveness of Prebunking interventions.

Brief Analysis of Debunking

Debunking is a technique for identifying and informing people that certain news items or information are incorrect or misleading. It seeks to lessen the impact of misinformation that has already spread. The most popular kind of Debunking occurs through collaboration between fact-checking organisations and social media businesses. Journalists or other fact-checkers discover inaccurate or misleading material, and social media platforms flag or label it. Debunking is an important strategy for curtailing the spread of misinformation and promoting accuracy in the digital information ecosystem.

Debunking interventions are crucial in combating misinformation. However, there are certain challenges associated with the same. Debunking misinformation entails critically verifying facts and promoting corrected information. However, this is difficult owing to the rising complexity of modern tools used to generate narratives that combine truth and untruth, views and facts. These advanced approaches, which include emotional spectrum elements, deepfakes, audiovisual material, and pervasive trolling, necessitate a sophisticated reaction at all levels: technological, organisational, and cultural.

Furthermore, it is impossible to debunk all misinformation at any given time, which means that it is impossible to protect everyone at all times and that at least some innocent netizens will fall victim to manipulation despite our best efforts. Debunking is inherently reactive, addressing misinformation only after it has spread extensively. From the perspective of total harm done, this reactive method may be less successful than proactive strategies such as Prebunking. Misinformation producers operate swiftly and unpredictably, making it difficult for fact-checkers to keep up with the rapid dissemination of erroneous or misleading information. A single Debunking may not be enough to rectify misinformation; continuous exposure to fact-checks may be needed to prevent erroneous beliefs from forming. Debunking also requires time and resources, and this constraint may cause certain misinformation to go unchecked, perhaps leading to unexpected effects. Misinformation on social media can spread quickly and may go viral faster than Debunking pieces or articles. This leads to a situation in which misinformation spreads like a virus while the antidote, the debunked facts, struggles to catch up.

Prebunking vs Debunking: Comparative Analysis

Prebunking interventions seek to educate people to recognise and reject misinformation before they are exposed to actual manipulation. Prebunking offers tactics for critical examination, lessening the individuals' susceptibility to misinformation in a variety of contexts. On the other hand, Debunking interventions involve correcting specific false claims after they have been circulated. While Debunking can address individual instances of misinformation, its impact on reducing overall reliance on misinformation may be limited by the reactive nature of the approach.


CyberPeace Policy Recommendations for Tech/Social Media Platforms

With the rising threat of online misinformation, tech/social media platforms can adopt an integrated strategy that deploys and supports both Prebunking and Debunking initiatives on all platforms. Prebunking empowers users to recognise manipulative messaging, while Debunking interventions make them aware of the inaccuracy of specific pieces of misinformation.

- Gamified Inoculation: Tech/social media companies can encourage gamified inoculation campaigns, a competence-oriented approach to Prebunking misinformation. Such campaigns can be effective in immunising people against subsequent exposure and can empower them to build the competencies needed to detect misinformation.

- Promotion of Prebunking and Debunking Campaigns through Algorithm Mechanisms: Tech/social media platforms can ensure that their algorithms prioritise the distribution of Prebunking materials to users, boosting educational content that strengthens resistance to misinformation. Platform operators should also incorporate algorithms that prioritise the visibility of Debunking content in order to combat the spread of erroneous information and deliver proper corrections. Together, these measures can help Prebunking and Debunking efforts reach a bigger or more targeted audience.

- User Empowerment to Counter Misinformation: Tech/social media platforms can design user-friendly interfaces that allow people to access Prebunking materials, quizzes, and instructional information to help them improve their critical thinking abilities. Furthermore, they can incorporate simple reporting tools for flagging misinformation, as well as links to fact-checking resources and corrections.

- Partnership with Fact-Checking/Expert Organizations: Tech/social media platforms can facilitate Prebunking and Debunking initiatives/campaigns by collaborating with fact-checking/expert organisations, promoting such initiatives at a larger scale, and ultimately fighting misinformation through joint initiatives.

Conclusion

The threat of online misinformation is growing with every passing day, so deploying effective countermeasures is essential. Prebunking and Debunking are two such interventions. To sum up: Prebunking interventions try to increase resilience to misinformation, proactively lowering susceptibility to erroneous or misleading information and addressing broader patterns of misinformation consumption, while Debunking is effective in correcting a particular piece of misinformation and has a targeted impact on belief in individual false claims. An integrated approach involving both methods, together with joint initiatives by tech/social media platforms and expert organizations, can ultimately help in fighting the rising tide of online misinformation and establishing a resilient online information landscape.

References

- https://mark-hurlstone.github.io/THKE.22.BJP.pdf

- https://futurefreespeech.org/wp-content/uploads/2024/01/Empowering-Audiences-Through-%E2%80%98Prebunking-Michael-Bang-Petersen-Background-Report_formatted.pdf

- https://newsreel.pte.hu/news/unprecedented_challenges_Debunking_disinformation

- https://misinforeview.hks.harvard.edu/article/global-vaccination-badnews/