#FactCheck - "Viral Video Misleadingly Claims Surrender to Indian Army, Actually Shows Bangladesh Army"

Executive Summary:

A viral video circulating on social media falsely claims to show lawbreakers surrendering to the Indian Army. However, our verification shows that the video is of a group surrendering to the Bangladesh Army and is not related to India. The claim that it is related to the Indian Army is false and misleading.

Claims:

A viral video falsely claims that a group of lawbreakers is surrendering to the Indian Army, linking the footage to recent events in India.

Fact Check:

Upon receiving the viral posts, we analysed the keyframes of the video through Google Lens search. The search directed us to credible news sources in Bangladesh, which confirmed that the video was filmed during a surrender event involving criminals in Bangladesh, not India.

We further verified the video by cross-referencing it with official military and news reports from India. None of the sources supported the claim that the video involved the Indian Army. Instead, we found another Bangladeshi media outlet covering the same event.

No credible Indian news outlet covered the video. The viral video was clearly taken out of context and misrepresented to mislead viewers.

Conclusion:

The viral video claiming to show lawbreakers surrendering to the Indian Army is footage from Bangladesh. The CyberPeace Research Team confirms that the video is falsely attributed to India, making the claim misleading.

- Claim: The video shows miscreants surrendering to the Indian Army.

- Claimed on: Facebook, X, YouTube

- Fact Check: False & Misleading

Related Blogs


Introduction

Misinformation has the potential to impact people, communities and institutions alike, and the ramifications can be far-ranging. From influencing voter behaviours and consumer choices to shaping personal beliefs and community dynamics, the information we consume in our daily lives affects every aspect of our existence. And so, when this very information is flawed or incomplete, whether accidentally or deliberately so, it has the potential to confuse and mislead people.

‘Debunking’ is the process of exposing false information or countering inaccuracies and manipulation by presenting actual facts. The goal is to minimise the harmful effects of misinformation by informing and educating people. Debunking initiatives work hard to expose false information and cut down conspiracies, catalogue evidence of false information, clearly identify sources of misinformation vs. accurate information, and assert the truth. Debunking looks at building capacity and educating people both as a strategy and goal.

Debunking is most effective when it comes from trusted sources, provides detailed explanations, and offers guidance and verifiable advice. Debunking is reactive in nature: it focuses on specific instances of misinformation and is closely tied to fact-checking. It aims to mitigate the impact of misinformation that has already spread. As such, the approach is to contain and correct, post-occurrence. The most common method of debunking is collaboration between fact-checking groups and social media companies. When journalists or other fact-checkers identify false or misleading content, social media sites flag or label it as such, so that audiences are alerted. Debunking is an essential method for reducing the impact and incidence of misinformation by providing real facts and increasing the overall accuracy of content in the digital information ecosystem.

Role of Debunking the Misinformation

Debunking fights against false or misleading information by correcting false claims, myths, and misinformation with evidence-based rebuttals. It combats untruths and the spread of misinformation by providing and disseminating debunking evidence to the public. By presenting evidence that contradicts misleading claims, debunking encourages individuals to develop fact-checking habits and proactively check for authenticated sources. Debunking plays a vital role in boosting trust in credible sources by offering evidence-based corrections and enhancing the credibility of online information. By exposing falsehoods and endorsing qualities like information completeness and evidence-backed data and logic, debunking efforts help create a culture of well-informed and constructive public conversations and analytical exchanges. Effectively dispelling myths and misinformation can help create communities and societies that are more educated, resilient, and goal-oriented.

Debunking as a Tailored Strategy to Counter Misinformation

Understanding the information environment and source trustworthiness is critical for developing effective debunking techniques. Successful debunking efforts use clear messages, appealing formats, and targeted distribution to reach a wide range of netizens. Effective debunking includes analysing successful efforts, using fact-checking, relying on reputable sources for corrections, and using scientific communication. Fact-checking plays a critical role in ensuring information accuracy and holding people accountable for making misleading claims. Collaborative efforts and transparent techniques can boost the credibility and efficacy of fact-checking activities, as well as the legitimacy and effectiveness of debunking initiatives at a larger scale. Scientific communication is also critical for debunking myths about different topics and concerns by giving evidence-based information. Clear and understandable framing of scientific knowledge is critical for engaging broad audiences and effectively refuting misinformation.

CyberPeace Policy Recommendations

- Debunking initiatives should highlight core facts, emphasising what is true over what is wrong and establishing a clear contrast between the two. This is crucial as people are more likely to believe familiar information even if they learn later that it is incorrect. Debunking must provide a comprehensive explanation, filling the ‘information gap’ created by the myth. This can be done by explaining things as clearly as possible, as people may stop paying attention if they are faced with an overload of competing information. The use of visuals to illustrate core facts is an effective way to help people understand the issue and clearly tell the difference between information and misinformation.

- Individuals can play a role in debunking misinformation on social media by highlighting inconsistencies, recommending related articles with corrections or sharing trusted sources and debunking reports in their communities.

- Governments and regulatory agencies can improve information openness by demanding explicit source labelling and technical measures to be implemented on platforms. This can increase confidence in information sources and equip people to practice discernment when they consume content online. Governments should also support and encourage independent fact-checking organisations that are working to disprove misinformation. Digital literacy programmes may teach the public how to critically assess information online and spot any misinformation.

- Tech businesses may enhance algorithms for detecting and flagging misinformation, thereby reducing the propagation of misleading information. Offering options for people to report suspicious or doubtful information and misinformation can empower them to play an active role in identifying and rectifying inaccurate information online, and can foster a more responsible information environment on the platforms.

Conclusion

Debunking is an effective strategy to counter widespread misinformation through a combination of fact-checking, scientific evidence, factual explanations, verified facts and corrections. Debunking can play an important role in fostering a culture where people look for authenticity while consuming the information and place a high value on trusted and verified information. A collaborative strategy can increase the legitimacy and reach of debunking efforts, making them more effective in reaching larger audiences and being easy-to-understand for a wide range of demographics. In a complex and ever-evolving digital ecosystem, it is important to build information resilience both at the macro level for the ecosystem as a whole and at the micro level, with the individual consumer. Only then can we ensure a culture of mindful, responsible content creation and consumption.

Introduction:

This report examines ongoing phishing scams that target customers of "State Bank of India (SBI)", India's biggest public bank, using fake SelfKYC APKs to trick people. The image in question is part of a phishing scheme to get users to download bogus APK files by claiming they need to update or confirm their "Know Your Customer (KYC)" info.

Fake Claim:

A picture making the rounds on social media comes with an APK file. It shows a phishing message that says the user's SBI YONO account will stop working because of their "Old PAN card." It then tells the user to install the "WBI APK" APK (Android Application Package) to check documents and keep their account open. This message is fake and aims to get people to download a harmful app.

Key Characteristics of the Scam:

- The messages "URGENTLY REQUIRED" and "Your account will be blocked today" show how scammers try to scare people into acting fast without thinking.

- PAN Card Reference: Crooks often use PAN card verification and KYC updates as a trick because these are normal for Indian bank customers.

- Risky APK Downloads: The message pushes people to get APK files, which can be dangerous. APKs from places other than the Google Play Store often have harmful software.

- Copying the Brand: The message looks a lot like SBI's real words and logos to seem legit.

- Shady Source: You can't find the APK they mention on Google Play or SBI's website, which means you should ignore the app right away.

Modus Operandi:

- Delivery Mechanism: Typically, users of messaging services like "WhatsApp," "SMS," or "email" receive identical messages with an APK link, which is how the scam is distributed.

- APK Installation: Once installed, the phony APK frequently requests extensive permissions, including access to "SMS," "contacts," "calls," and "banking apps."

- Data Theft: Once installed, the program may have the ability to steal card numbers, personal information, OTPs, and banking credentials.

- Remote Access: These APKs may occasionally allow cybercriminals to remotely take control of the victim's device in order to carry out fraudulent financial activities.

When the user installs the application on their device, the following interface opens:

It asks the user to provide the following:

- SMS access, to send and receive info from the bank.

- User details such as username, password, mobile number, and captcha.

Technical Findings of the Application:

Static Analysis:

- File Name: SBI SELF KYC_015850.apk

- Package Name: com.mark.dot.comsbione.krishn

- Scan Date: Sept. 25, 2024, 6:45 a.m.

- App Security Score: 52/100 (MEDIUM RISK)

- Grade: B

File Information:

- File Name: SBI SELF KYC_015850.apk

- Size: 2.88MB

- MD5: 55fdb5ff999656ddbfa0284d0707d9ef

- SHA1: 8821ee6475576beb86d271bc15882247f1e83630

- SHA256: 54bab6a7a0b111763c726e161aa8a6eb43d10b76bb1c19728ace50e5afa40448
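The hashes listed above can be recomputed locally to check whether a suspicious file matches the sample described in this report. The following is a minimal Python sketch using the standard `hashlib` module; the function names `file_digests` and `matches_reported_sample` are illustrative assumptions and not part of the original analysis.

```python
import hashlib
from pathlib import Path


def file_digests(path, chunk_size=65536):
    """Return the MD5, SHA-1 and SHA-256 hex digests of a file."""
    hashers = {
        "md5": hashlib.md5(),
        "sha1": hashlib.sha1(),
        "sha256": hashlib.sha256(),
    }
    # Read in chunks so large files are not loaded into memory at once.
    with Path(path).open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            for hasher in hashers.values():
                hasher.update(chunk)
    return {name: hasher.hexdigest() for name, hasher in hashers.items()}


# SHA-256 reported for the sample in this analysis.
REPORTED_SHA256 = "54bab6a7a0b111763c726e161aa8a6eb43d10b76bb1c19728ace50e5afa40448"


def matches_reported_sample(path):
    """True if the file's SHA-256 equals the hash listed in this report."""
    return file_digests(path)["sha256"] == REPORTED_SHA256
```

A match means the file is byte-for-byte identical to the analysed sample. A mismatch does not mean a file is safe, since repackaged variants of the same scam will have different hashes.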

App Information:

- App Name: SBl Bank

- Package Name: com.mark.dot.comsbione.krishn

- Main Activity: com.mark.dot.comsbione.krishn.MainActivity

- Target SDK: 34

- Min SDK: 24

- Max SDK:

- Android Version Name: 1.0

- Android Version Code: 1

App Components:

- Activities: 8

- Services: 2

- Receivers: 2

- Providers: 1

- Exported Activities: 0

- Exported Services: 1

- Exported Receivers: 2

- Exported Providers: 0
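Exported components matter because other apps or the system can invoke them. As a rough sketch of how such counts could be derived, the Python below scans a decoded `AndroidManifest.xml` for components explicitly marked `android:exported="true"`. It assumes the manifest has already been decoded to plain XML by a separate tool, and the sample manifest and its component names are invented for illustration; they are not taken from the analysed APK.

```python
import xml.etree.ElementTree as ET

ANDROID_NS = "http://schemas.android.com/apk/res/android"
COMPONENT_TAGS = ("activity", "service", "receiver", "provider")


def exported_components(manifest_xml):
    """Map each component type to the names explicitly marked exported."""
    root = ET.fromstring(manifest_xml)
    application = root.find("application")
    result = {tag: [] for tag in COMPONENT_TAGS}
    if application is None:
        return result
    for tag in COMPONENT_TAGS:
        for node in application.findall(tag):
            # Simplification: only an explicit android:exported="true" counts.
            if node.get(f"{{{ANDROID_NS}}}exported") == "true":
                result[tag].append(node.get(f"{{{ANDROID_NS}}}name"))
    return result


# Hypothetical manifest for illustration; component names are invented.
SAMPLE_MANIFEST = """<manifest xmlns:android="http://schemas.android.com/apk/res/android"
    package="com.example.demo">
  <application>
    <activity android:name=".MainActivity" android:exported="false" />
    <service android:name=".SyncService" android:exported="true" />
    <receiver android:name=".SmsReceiver" android:exported="true" />
    <receiver android:name=".BootReceiver" android:exported="true" />
  </application>
</manifest>"""

if __name__ == "__main__":
    for kind, names in exported_components(SAMPLE_MANIFEST).items():
        print(kind, len(names), names)
```

Analysis tools may apply additional rules, such as implicit export through intent filters on older target SDKs, so their counts can differ from this simplified check.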

Certificate Information:

- Binary is signed

- v1 signature: False

- v2 signature: True

- v3 signature: False

- v4 signature: False

- X.509 Subject: CN=PANDEY, OU=PANDEY, O=PANDEY, L=NK, ST=NK, C=91

- Signature Algorithm: rsassa_pkcs1v15

- Valid From: 2024-09-04 07:38:35+00:00

- Valid To: 2049-08-29 07:38:35+00:00

- Issuer: CN=PANDEY, OU=PANDEY, O=PANDEY, L=NK, ST=NK, C=91

- Serial Number: 0x1

- Hash Algorithm: sha256

- md5: 4536ca31b69fb68a34c6440072fca8b5

- sha1: 6f8825341186f39cfb864ba0044c034efb7cb8f4

- sha256: 6bc865a3f1371978e512fa4545850826bc29fa1d79cdedf69723b1e44bf3e23f

- sha512: 05254668e1c12a2455c3224ef49a585b599d00796fab91b6f94d0b85ab48ae4b14868dabf16aa609c3b6a4b7ac14c7c8f753111b4291c4f3efa49f4edf41123d

- PublicKey Algorithm: RSA

- Bit Size: 2048

- Fingerprint: a84f890d7dfbf1514fc69313bf99aa8a826bade3927236f447af63fbb18a8ea6

- Found 1 unique certificate
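A few simple observations can be drawn from the certificate fields above. The Python sketch below, with hypothetical helper names `parse_dn` and `certificate_observations`, compares the subject and issuer, checks the country field, and computes the validity period. Self-signed certificates are normal for Android apps, so these notes are informational hints under stated assumptions and not indicators of malice on their own.

```python
from datetime import datetime, timezone

# Values copied from the certificate details in this report.
SUBJECT = "CN=PANDEY, OU=PANDEY, O=PANDEY, L=NK, ST=NK, C=91"
ISSUER = "CN=PANDEY, OU=PANDEY, O=PANDEY, L=NK, ST=NK, C=91"
VALID_FROM = datetime(2024, 9, 4, 7, 38, 35, tzinfo=timezone.utc)
VALID_TO = datetime(2049, 8, 29, 7, 38, 35, tzinfo=timezone.utc)


def parse_dn(dn):
    """Split a distinguished name such as 'CN=a, O=b' into a dict."""
    fields = {}
    for part in dn.split(","):
        key, _, value = part.strip().partition("=")
        fields[key] = value
    return fields


def certificate_observations(subject, issuer, valid_from, valid_to):
    """Collect simple observations; these are hints, not proof of malice."""
    notes = []
    if parse_dn(subject) == parse_dn(issuer):
        notes.append("self-signed: subject and issuer are identical")
    country = parse_dn(subject).get("C", "")
    # X.509 country values are expected to be two-letter ISO 3166 codes.
    if not (len(country) == 2 and country.isalpha()):
        notes.append(f"country field {country!r} is not a two-letter code")
    days = (valid_to - valid_from).days
    notes.append(f"validity period: {days} days")
    return notes


if __name__ == "__main__":
    for note in certificate_observations(SUBJECT, ISSUER, VALID_FROM, VALID_TO):
        print(note)
```

For this sample the validity period works out to 9125 days, which is exactly 25 times 365, and the country value "91" is not a valid two-letter country code.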

App Permissions

1. Normal Permissions:

- ACCESS_NETWORK_STATE: Allows the app to view the network status of all networks.

- FOREGROUND_SERVICE: Enables regular apps to use foreground services.

- FOREGROUND_SERVICE_DATA_SYNC: Allows data synchronization with foreground services.

- INTERNET: Grants full internet access.

2. Signature Permission:

- BROADCAST_SMS: Sends SMS-received broadcasts. It can be abused by malicious apps to forge incoming SMS messages.

3. Dangerous Permissions:

- READ_PHONE_NUMBERS: Grants access to the device’s phone number(s).

- READ_PHONE_STATE: Reads the phone’s state and identity, including phone features and data.

- READ_SMS: Allows the app to read SMS or MMS messages stored on the device or SIM card. Malicious apps could use this to read confidential messages.

- RECEIVE_SMS: Enables the app to receive and process SMS messages. Malicious apps could monitor or delete messages without showing them to the user.

- SEND_SMS: Allows the app to send SMS messages. Malicious apps could send messages without the user’s confirmation, potentially leading to financial costs.
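The permission groups listed above can be expressed as a small lookup table. The Python sketch below groups requested permissions by the protection levels given in this report and flags one risky combination. The `OTP_RELAY_SET` heuristic and the function names are assumptions made for illustration, not a rule used by any particular analysis tool.

```python
# Protection levels as listed in this report for the sample's permissions.
PROTECTION_LEVELS = {
    "ACCESS_NETWORK_STATE": "normal",
    "FOREGROUND_SERVICE": "normal",
    "FOREGROUND_SERVICE_DATA_SYNC": "normal",
    "INTERNET": "normal",
    "BROADCAST_SMS": "signature",
    "READ_PHONE_NUMBERS": "dangerous",
    "READ_PHONE_STATE": "dangerous",
    "READ_SMS": "dangerous",
    "RECEIVE_SMS": "dangerous",
    "SEND_SMS": "dangerous",
}

# Hypothetical heuristic: reading incoming SMS plus network access is
# enough to forward one-time passwords to a remote server.
OTP_RELAY_SET = {"READ_SMS", "RECEIVE_SMS", "INTERNET"}


def group_by_level(permissions):
    """Group permission names by protection level (case-insensitive)."""
    groups = {}
    for name in permissions:
        key = name.upper()
        level = PROTECTION_LEVELS.get(key, "unknown")
        groups.setdefault(level, []).append(key)
    return groups


def can_relay_sms(permissions):
    """True when the app can read incoming SMS and also reach the internet."""
    requested = {name.upper() for name in permissions}
    return OTP_RELAY_SET <= requested


if __name__ == "__main__":
    requested = list(PROTECTION_LEVELS)
    for level, names in group_by_level(requested).items():
        print(level, names)
    print("can relay SMS:", can_relay_sms(requested))
```

An app that requests every permission in this sample satisfies the heuristic, which is consistent with the OTP and credential theft described in the modus operandi.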

Further analysis on the VirusTotal platform using the file's MD5 hash showed that 24 out of 68 security vendors marked this APK file as malicious. The graph represents the distribution of the malicious file in the environment.
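To put the vendor count in proportion, the Python sketch below computes the detection ratio and shows how a hash lookup request could be prepared for the VirusTotal v3 file-report endpoint. The request is only built, never sent, and the API key is a placeholder. The function names are assumptions, and this is not necessarily how the original lookup was performed.

```python
import urllib.request

VT_FILE_ENDPOINT = "https://www.virustotal.com/api/v3/files/{file_hash}"
SAMPLE_MD5 = "55fdb5ff999656ddbfa0284d0707d9ef"  # MD5 from this report


def build_lookup_request(file_hash, api_key):
    """Prepare (but do not send) a VirusTotal v3 file-report request."""
    url = VT_FILE_ENDPOINT.format(file_hash=file_hash)
    return urllib.request.Request(url, headers={"x-apikey": api_key})


def detection_ratio(flagged, total):
    """Fraction of vendors that flagged the file."""
    if total <= 0:
        raise ValueError("total must be positive")
    return flagged / total


if __name__ == "__main__":
    # 24 of 68 vendors, as stated in this report.
    print(f"{detection_ratio(24, 68):.1%}")
```

With the figures in this report, roughly 35% of vendors flagged the file. Detection counts change over time as vendors update their signatures, so a later lookup may return a different ratio.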

Key Takeaways:

- Normal Permissions: Generally safe for accessing basic functionalities (network state, internet).

- Signature Permissions: May pose risks when misused, especially related to SMS broadcasts.

- Dangerous Permissions: Provide sensitive data access, such as phone numbers and device identity, which can be exploited by malicious apps.

- The dangerous permissions pose risks regarding the reading, receiving, and sending of SMS, which can lead to privacy breaches or financial consequences.

How to Identify the Scam:

- Official Statement: SBI never asks clients to download unauthorized APKs for upgrades related to KYC or other services. All formal correspondence takes place via the SBI YONO app, which is available in official app stores.

- No Immediate Threats: Bank correspondence never employs menacing language or issues harsh deadlines, such as "your account will be blocked today."

- Email Domain and SMS Number: Verified email addresses or phone numbers are used for official SBI correspondence. Generic, unauthorized numbers or addresses are frequently used in scams.

- Links and APK Files: Steer clear of downloading APK files from unreliable sources at all times. For app downloads, visit the Apple App Store or Google Play Store instead.
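The warning signs described above can be turned into a simple text check. The Python sketch below looks for phrases quoted in this report; the phrase list and the function name `red_flags` are illustrative assumptions, and a keyword match is only a prompt for caution, not a reliable scam detector.

```python
import re

# Phrases quoted in this report; the list is illustrative, not exhaustive.
URGENCY_PHRASES = ("urgently required", "account will be blocked")
APK_PATTERN = re.compile(r"\bapk\b", re.IGNORECASE)


def red_flags(message):
    """Return the warning signs found in a message's text."""
    text = message.lower()
    flags = []
    if any(phrase in text for phrase in URGENCY_PHRASES):
        flags.append("urgent or threatening language")
    if APK_PATTERN.search(message):
        flags.append("asks the user to install an APK")
    if "pan card" in text or "kyc" in text:
        flags.append("uses a KYC or PAN card pretext")
    return flags


if __name__ == "__main__":
    sample = (
        "URGENTLY REQUIRED: Your account will be blocked today "
        "due to old PAN card. Install the APK."
    )
    for flag in red_flags(sample):
        print(flag)
```

A message that triggers several of these flags at once, as the sample does, deserves verification through the bank's official channels before any action is taken.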

CyberPeace Advisory:

- The Research team recommends that people should avoid opening such messages sent via social platforms. One must always think before clicking on such links, or downloading any attachments from unauthorised sources.

- Downloading any application from any third party sources instead of the official app store should be avoided. This will greatly reduce the risk of downloading a malicious app, as official app stores have strict guidelines for app developers and review each app before it gets published on the store.

- Even if you download the application from an authorised source, check the app's permissions before you install it. Some malicious apps may request access to sensitive information or resources on your device. If an app is asking for too many permissions, it's best to avoid it.

- Keep your device and the app-store app up to date. This will ensure that you have the latest security updates and bug fixes.

- Falling into such a trap could result in a complete compromise of the system, including access to sensitive information such as microphone recordings, camera footage, text messages, contacts, pictures, videos, and even banking applications and could lead users to financial loss.

- Do not share confidential details such as credentials or banking information in response to such phishing scams.

- Never share or forward fake messages containing links on any social platform without proper verification.

Conclusion:

Fake APK phishing scams increasingly target customers of financial institutions. This report outlines safety steps for SBI customers and ways to spot and steer clear of these cons. Keep in mind that legitimate banks never ask you to get an APK from shady websites or threaten to close your account right away. To stay safe, use SBI's official YONO app and get apps only from trusted places like Google Play or the Apple App Store. Check if the info is true before you do anything, turn on 2FA for all your bank and money accounts, and tell SBI or your local cyber police about any scams you see.

Executive Summary:

A video went viral on social media claiming to show a bridge collapsing in Bihar. The video prompted panic and discussions across various social media platforms. However, an exhaustive inquiry determined that this was not a real video but AI-generated content engineered to look like a real bridge collapse. This is a clear case of misinformation being used to create panic and confusion.

Claim:

The viral video shows a real bridge collapse in Bihar, indicating possible infrastructure failure or a recent incident in the state.

Fact Check:

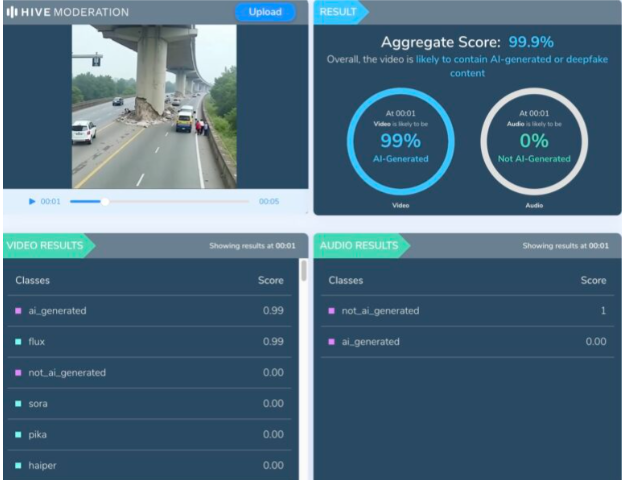

Examination of the viral video revealed various visual anomalies, such as unnatural movements, disappearing people, and unusual debris behavior, which suggested that the footage was artificially generated. We used Hive AI Detector for AI detection, and it confirmed this, labelling the content as 99.9% AI. The environment also lacks realism, and the footage contains abrupt, animation-like effects that would not typically occur in actual footage.

No credible news outlet or government agency reported a recent bridge collapse in Bihar. Taken together, these factors indicate that the video is not real but was created with artificial intelligence to mislead viewers into thinking it showed a real-life disaster.

Conclusion:

The viral video is a fake and confirmed to be AI-generated. It falsely claims to show a bridge collapsing in Bihar. This kind of video fosters misinformation and illustrates a growing concern about using AI-generated videos to mislead viewers.

Claim: A recent viral video captures a real-time bridge failure incident in Bihar.

Claimed On: Social Media

Fact Check: False and Misleading