#FactCheck- Viral video of UP Police patrolling on e-rickshaw is AI-generated

Executive Summary

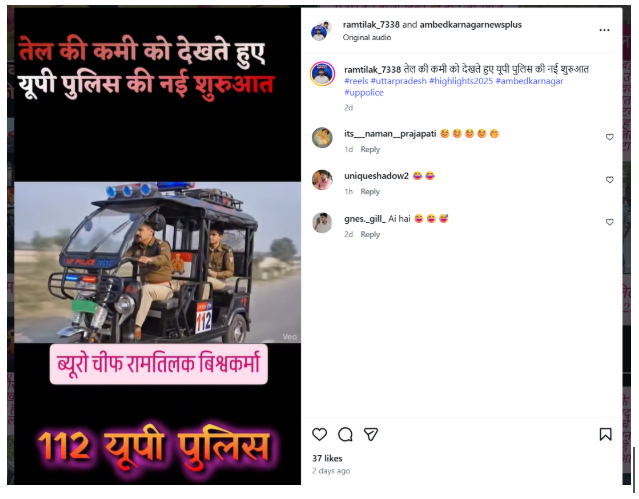

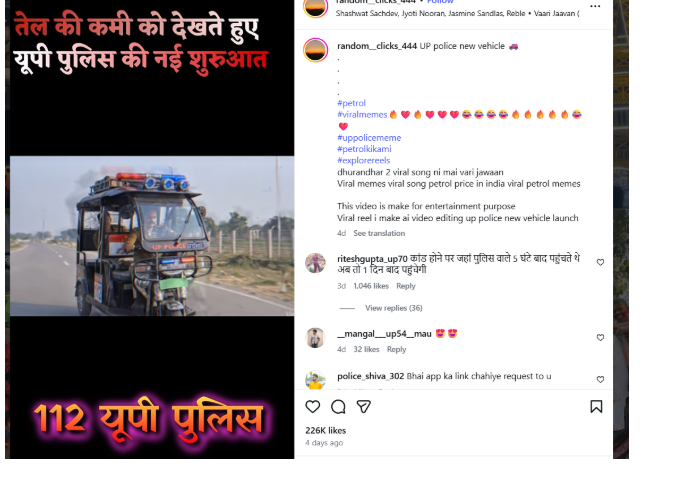

A video is being widely shared on social media showing a police officer driving an e-rickshaw, while two other policemen are seen in the back seat. Users sharing the clip claim that, due to a shortage of petrol, this is a new initiative by the Uttar Pradesh Police. However, research by CyberPeace found the viral claim to be false. Our research also confirms that the video is not real but AI-generated.

Claim

An Instagram user shared the viral video claiming that due to fuel shortages, Uttar Pradesh Police has started patrolling using e-rickshaws.

- Post link: https://www.instagram.com/reel/DWepKWXAeiE/

- Archive: https://archive.ph/QBNXs

Fact Check

To verify the claim, we first conducted a keyword search on Google but found no credible media reports supporting this claim.

Next, we extracted keyframes from the viral video and performed a reverse image search using Google Lens. During this process, we found the same video uploaded on an Instagram channel on March 28, 2026. The uploader clearly mentioned that the video was created purely for entertainment purposes.

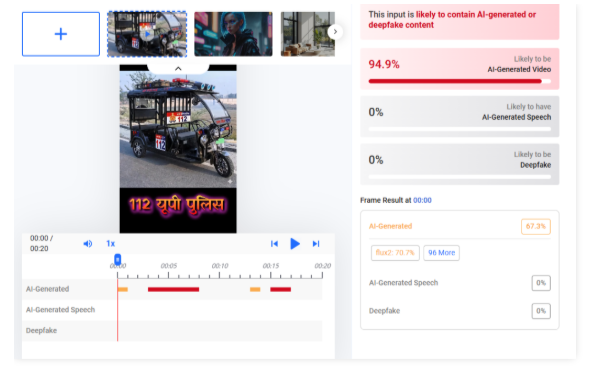

We further analyzed the video using AI detection tools. When scanned with Hive Moderation, the results indicated that the video is approximately 94% AI-generated.

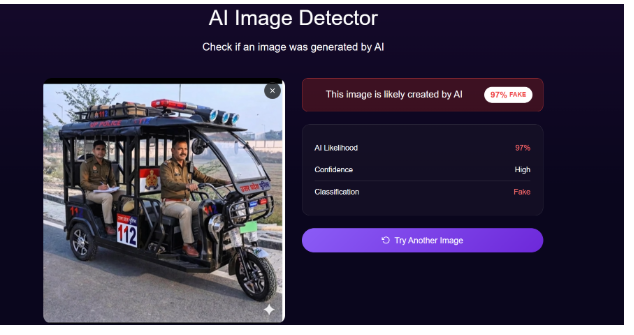

In the next step, we also tested the clip using DeepAI. According to its analysis, the video is about 97% AI-generated.

Conclusion

Our research clearly shows that the viral video is not authentic. It is an AI-generated clip created for entertainment purposes, and the claim that Uttar Pradesh Police has started e-rickshaw patrolling due to petrol shortage is false.

Related Blogs

THREE CENTRES OF EXCELLENCE IN ARTIFICIAL INTELLIGENCE:

India’s Finance Minister, Mrs. Nirmala Sitharaman, with a vision of ‘Make AI for India’ and ‘Make AI work for India,’ announced during the presentation of Union Budget 2023 that the Indian Government is planning to set up three ‘Centres of Excellence’ for Artificial Intelligence in top educational institutions to revolutionise fields such as health, agriculture, etc.

Under the ‘Amrit Kaal’ vision, the 2023 budget is a stepping stone by the government towards a technology-driven, knowledge-based economy. The seven priorities set by the government, called ‘Saptarishi’ (inclusive development, reaching the last mile, infrastructure investment, unleashing potential, green growth, youth power, and the financial sector), will guide the nation in this endeavour, along with leading industry players that will partner in conducting interdisciplinary research and developing cutting-edge applications and scalable solutions in such areas.

The government has already formed the roadmap for AI in the nation through MeitY, NASSCOM, and DRDO, indicating that the government has already started this AI revolution. For AI-related research and development, the Centre for Artificial Intelligence and Robotics (CAIR) has already been formed, and biometric identification, facial recognition, criminal investigation, crowd and traffic management, agriculture, healthcare, education, and other applications of AI are currently being used.

A task force on artificial intelligence (AI) was established as early as August 24, 2017. The government had promised to set up Centres of Excellence (CoEs) for research, education, and skill development in robotics, AI, digital manufacturing, big data analytics, quantum communication, and the Internet of Things (IoT). By announcing them in the current Union Budget, it has taken a step towards fulfilling that promise.

The government has also announced the development of 100 labs in engineering institutions for developing applications using 5G services that will collaborate with various authorities, regulators, banks, and other businesses.

Developing such labs aims to create new business models and employment opportunities. Among other things, the labs will support smart classrooms, precision farming, intelligent transport systems, and healthcare applications. For training teachers, new pedagogy, curriculum, continual professional development, dipstick surveys, and ICT implementation will be introduced.

POSSIBLE ROLES OF AI:

The use of AI in top educational institutions will help students learn at their own pace, with AI algorithms providing customised feedback and recommendations based on their performance. It can also help students identify their strengths and weaknesses, allowing them to focus their study efforts more effectively. Training students in AI will, in turn, help make the country future-ready.

The main area of AI in healthcare, agriculture, and sustainable cities would be researching and developing practical AI applications in these sectors. In healthcare, AI can be effective by helping medical professionals diagnose diseases faster and more accurately by analysing medical images and patient data. It can also be used to identify the most effective treatments for specific patients based on their genetic and medical history.

Artificial Intelligence (AI) has the potential to revolutionise the agriculture industry by improving yields, reducing costs, and increasing efficiency. AI algorithms can collect and analyse data on soil moisture, crop health, and weather patterns to optimise crop management practices, improve yields and the health and well-being of livestock, predict potential health issues, and increase productivity. These algorithms can identify and target weeds and pests, reducing the need for harmful chemicals and increasing sustainability.

ROLE OF AI IN CYBERSPACE:

Artificial Intelligence (AI) plays a crucial role in cyberspace. AI technology can enhance security in cyberspace, prevent cyber-attacks, detect and respond to security threats, and improve overall cybersecurity. Some of the specific applications of AI in cyberspace include:

- Intrusion Detection: AI-powered systems can analyse large amounts of data and detect signs of potential cyber-attacks.

- Threat Analysis: AI algorithms can help identify patterns of behaviour that may indicate a potential threat and then take appropriate action.

- Fraud Detection: AI can identify and prevent fraudulent activities, such as identity theft and phishing, by analysing large amounts of data and detecting unusual behaviour patterns.

- Network Security: AI can monitor and secure networks against potential cyber-attacks by detecting and blocking malicious traffic.

- Data Security: AI can be used to protect sensitive data and ensure that it is only accessible to authorised personnel.
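As a rough illustration of what "detecting unusual behaviour patterns" can mean in practice, the sketch below flags measurements that sit far from a historical baseline using a simple z-score. It is a basic statistical check, not a production AI system. The function name `flag_anomalies`, the threshold of 3.0, and the sample login counts are all assumptions made for illustration.

```python
from statistics import mean, stdev


def flag_anomalies(baseline, observed, threshold=3.0):
    """Return observed values whose z-score against the baseline exceeds threshold.

    baseline: historical "normal" measurements (e.g. hourly failed logins).
    observed: new measurements to check.
    threshold: hypothetical cut-off; real systems tune this on their own data.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A flat baseline: anything different from it is treated as unusual.
        return [x for x in observed if x != mu]
    return [x for x in observed if abs(x - mu) / sigma > threshold]


# Illustrative data only: failed logins per hour on a hypothetical server.
baseline_counts = [4, 6, 5, 7, 5, 6, 4, 5]
new_counts = [5, 6, 48, 7]

print(flag_anomalies(baseline_counts, new_counts))
```

Real intrusion and fraud detection systems typically combine many signals and learned models. The point here is only that "unusual" is defined relative to a baseline of normal behaviour.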

CONCLUSION:

Introducing AI in top educational institutions and partnering with leading industries will prove to be a stepping stone in the development of the country, as Artificial Intelligence (AI) has the potential to improve various sectors and address societal challenges. Overall, we hope to see greater efficiency and productivity across industries, leading to economic growth and job creation; improved delivery of healthcare services through wider access to care and better patient outcomes; and more accessible and effective education. However, it’s important to ensure that AI is developed and used ethically, considering its potential consequences and impact on society.


In the tapestry of our modern digital ecosystem, a silent, pervasive conflict simmers beneath the surface, where the quest for cyber resilience seems Sisyphean at times. It is in this interconnected cyber dance that the obscure orchestrator, StripedFly, emerges as the maestro of stealth and disruption, spinning a complex, mostly unseen web of digital discord. StripedFly is not some abstract concept; it represents a continual battle against the invisible forces that threaten the sanctity of our digital domain.

This saga of StripedFly is not a tale of mere coincidence or fleeting concern. It is emblematic of a fundamental struggle that defines the era of interconnected technology—a struggle that is both unyielding and unforgiving in its scope. Over the past half-decade, StripedFly has slithered its way into over a million devices, creating a clandestine symphony of cybersecurity breaches, data theft, and unintentional complicity in its agenda. Let's delve deep into this grand odyssey to unravel the odious intricacies of StripedFly and assess the reverberations felt across our collective pursuit of cyber harmony.

The StripedFly malware represents the epitome of a digital chameleon, a master of cyber camouflage, masquerading as a mundane cryptocurrency miner while quietly plotting the grand symphony of digital bedlam. Its deceptive sophistication has effortlessly skirted around the conventional tripwires laid by our cybersecurity guardians for years. The Russian cybersecurity giant Kaspersky's encounter with StripedFly in 2017 brought this ghostly figure into the spotlight—hitherto, a phantom whistling past the digital graveyard of past threats.

How Does it work

Distinctive in its composition, StripedFly conceals within its modular framework the potential for vast infiltration—an exploitation toolkit designed to puncture the fortifications of both Linux and Windows systems. In an emboldened maneuver, it utilizes a customized version of the EternalBlue SMBv1 exploit—a technique notoriously linked to the enigmatic Equation Group. Through such nefarious channels, StripedFly not only deploys its malicious code but also tenaciously downloads binary files and executes PowerShell scripts with a sinister adeptness unbeknownst to its victims.

Despite its insidious nature, perhaps its most diabolical trait lies in its array of plugin-like functions. It's capable of exfiltrating sensitive information, erasing its tracks, and uninstalling itself with almost supernatural alacrity, leaving behind a vacuous space where once tangible evidence of its existence resided.

In the intricate chess game of cyber threats, StripedFly plays the long game, prioritizing persistence over temporary havoc. Its tactics are calculated—the meticulous disabling of SMBv1 on compromised hosts, the insidious utilization of pilfered keys to propagate itself across networks via SMB and SSH protocols, and the creation of task scheduler entries on Windows systems or employing various methods to assert its nefarious influence within Linux environments.

The Enigma around the Malware

This dualistic entity couples its espionage with monetary gain, downloading a Monero cryptocurrency miner and utilizing the shadowy veils of DNS over HTTPS (DoH) to camouflage its command and control pool servers. This intricate masquerade serves as a cunning, albeit elaborate, smokescreen, lulling security mechanisms into complacency and blind spots.

StripedFly goes above and beyond in its quest to minimize its digital footprint. Not only does it store its components as encrypted data on code repository platforms, deftly dispersed among the likes of Bitbucket, GitHub, and GitLab, but it also harbors a bespoke, efficient TOR client to communicate with its cloistered C2 server out of sight and reach in the labyrinthine depths of the TOR network.

One might speculate on the genesis of this advanced persistent threat: its nuanced approach to invasion, its parallels to EternalBlue, and the artistic flair that permeates its coding style suggest a sophisticated architect. Indeed, the suggestion of an APT actor at the helm of StripedFly invites a cascade of questions concerning the ultimate objectives of such a refined, enduring campaign.

How to deal with it

To those who stand guard in our ever-shifting cyber landscape, the narrative of StripedFly is a clarion call. It is an objective reminder of the trench warfare we engage in to preserve the oasis of digital peace within a desert of relentless threats. The StripedFly chronicle stands as a persistent, looming testament to the necessity for heeding the sirens of vigilance and precaution in cyber practice.

Reaffirmation is essential in our quest to demystify the shadows cast by StripedFly, as it punctuates the critical mission to nurture a more impregnable digital habitat. Awareness and dedication propel us forward—the acquisition of knowledge regarding emerging threats, the diligent updating and patching of our systems, and the fortification of robust, multilayered defenses are keystones in our architecture of cyber defense. Together, in concert and collaboration, we stand a better chance of shielding our digital frontier from the dim recesses where threats like StripedFly lurk, patiently awaiting their moment to strike.

References:

https://thehackernews.com/2023/11/stripedfly-malware-operated-unnoticed.html?m=1

Introduction

Valentine’s Day celebrates the bond between people, their romantic love, and their deep relationships with others. The increasing use of digital platforms in modern relationships has created a situation where cybercriminals use this time of year to exploit human emotions for money-making schemes. The period around 14 February often sees a rise in online romance scams, phishing attacks, and fake shopping websites that specifically target people who are emotionally vulnerable and active online. People need to be aware of these scams because this awareness helps them protect their personal information and their financial resources.

The Rise of Romance Scams

Modern romance scams have evolved from their original form because criminals now execute their schemes through more advanced methods. Fraudsters create authentic-looking fake identities, which they use to deceive victims through dating applications, social media platforms and networking websites. The profiles use stolen images and fake job histories, together with convincing emotional stories, which help them establish trust with potential victims.

Scammers usually begin their deception after they have built an emotional connection with their targets. Once trust is established, they introduce a crisis or an opportunity that pressures the victim to act quickly. This is often presented as a problem that needs urgent help or a chance that should not be missed, such as:

- A sudden medical emergency that requires money for treatment

- Requests for travel expenses to finally come and meet in person

- Fake investment opportunities that promise quick or guaranteed returns

- Demands for customs, courier, or clearance fees to release a supposed package or gift

They persuade the victim to send money, buy gift cards, or hand over personal banking details. The scam can continue for several weeks or months, until the victim begins to doubt what is happening. The psychological manipulation involved in romance scams causes severe harm: victims lose money, suffer emotional pain, and may see their social standing damaged.

Fake E-Commerce and “Valentine’s Deals”

Valentine's Day marks the beginning of a shopping rush, which leads people to buy various gifts, including flowers, jewellery and customised products, as well as making reservations for events. Cybercriminals create fake websites to exploit this demand by providing fake discounts and temporary promotional offers.

Common warning signs include:

- Newly registered domains that lack valid user reviews

- Websites that contain multiple spelling mistakes and display poor design

- Payment requests through methods that cannot be tracked

- Online platforms that lack secure payment processing systems

Consumers who make purchases on such sites face the risk of losing money while their card information is stolen for future fraudulent activities.
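The warning signs above can be turned into a simple checklist. The sketch below is illustrative only: the function name `shop_warning_signs`, the 90-day cut-off for a "newly registered" domain, and the set of hard-to-trace payment methods are assumptions, and HTTPS is used only as a rough proxy for secure payment handling. The caller supplies the domain age and review count; the code does not look them up, and it does not check spelling or design quality.

```python
from urllib.parse import urlparse


def shop_warning_signs(url, domain_age_days, review_count, payment_methods):
    """Return a list of warning signs for an online shop.

    All inputs other than the URL are supplied by the caller; this sketch
    does not query domain registration or review data itself.
    """
    signs = []
    if urlparse(url).scheme != "https":
        signs.append("no secure (HTTPS) connection")
    if domain_age_days < 90:  # hypothetical threshold for "newly registered"
        signs.append("newly registered domain")
    if review_count == 0:
        signs.append("no user reviews")
    # Hypothetical set of payment methods that are hard to trace or reverse.
    untraceable = {"gift card", "wire transfer", "cryptocurrency"}
    if untraceable & {m.lower() for m in payment_methods}:
        signs.append("untraceable payment method requested")
    return signs


# Example with made-up values for a made-up site.
for sign in shop_warning_signs("http://valentine-deals.example/offer", 12, 0, ["Gift Card"]):
    print("Warning:", sign)
```

An empty result does not prove a site is safe; it only means none of these particular signs were found.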

Phishing in the Name of Love

During the holiday season, phishing campaigns increase their focus on particular targets. Users may receive:

- Valentine's Day discount emails

- Messages that claim to show secret admirer intentions

- Links that lead to supposed romantic surprises

- Delivery notifications that inform about unreceived gifts

Malicious links result in credential theft, malware installation and unauthorised financial transactions. At first glance, these attacks show resemblance to authentic brands and logistics companies, which makes them hard to identify.
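One reason such links are hard to spot is that a brand name can appear anywhere in a URL without the link actually belonging to that brand. The sketch below shows one basic check: compare the link's host with the brand's known domain, rather than looking for the brand name somewhere in the text. The function name `is_official_link` and the example domains are hypothetical, and this check alone does not catch every phishing technique.

```python
from urllib.parse import urlparse


def is_official_link(url, official_domain):
    """True only if the link's host is the official domain or a subdomain of it."""
    host = (urlparse(url).hostname or "").lower()
    official = official_domain.lower()
    return host == official or host.endswith("." + official)


# Made-up examples: only the first link really belongs to courier.example.
links = [
    "https://track.courier.example/parcel/123",
    "https://courier.example.gift-delivery.test/track",
    "https://my-courier.example/login",
]
for link in links:
    print(link, is_official_link(link, "courier.example"))
```

The second and third links contain the brand name but resolve to different domains, which is the pattern many lookalike links rely on.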

Investment and Crypto Romance Fraud

A rising type of romance scam now relies on cryptocurrency and online trading platforms. Scammers who have established trust with their victims convince them to invest in digital assets that appear to generate high returns. Fake dashboards display excellent investment results to persuade victims to commit more funds. The scheme ends when the scammers block all withdrawal requests and cut off contact. This combination of emotional manipulation and financial fraud shows how cybercrime evolves alongside technology.

Why Seasonal Scams Work

Seasonal scams succeed because they match the predictable behaviour patterns that people exhibit during specific times of the year. During Valentine’s season:

- People experience their highest emotional vulnerability

- People shop more frequently through online platforms

- People use digital platforms at increased rates

- Users will decrease their level of scepticism while trying to establish connections with others

Cybercriminals use urgent situations together with emotional ties and social norms as their primary attack methods. The combination of psychological triggers and digital convenience creates fertile ground for deception.

CyberPeace Recommendations for Staying Safe This Valentine’s Season

The digital platforms provide people who search for connections with valuable opportunities to connect with others, yet users must remain careful about their online activities. People can protect themselves from online fraud by following these steps:

- They should confirm identity details before they give away their private data.

- They should not send money to people whom they met only through internet platforms.

- They should verify website ownership and examine customer feedback before making online purchases.

- They should activate multi-factor authentication for their social media accounts and financial accounts.

- People should treat unexpected links with great care, especially those links that create a sense of urgency.

- The Cybercrime reporting portal www.cybercrime.gov.in with 24x7 helpline 1930 is an effective tool at the disposal of victims of cybercrimes to report their complaints.

- In case of any cyber threat, issue or discrepancy, you can also seek assistance from the CyberPeace Helpline at +91 9570000066 or write to us at helpline@cyberpeace.net. Immediate reporting protects victims and helps to combat cybercrime.

Conclusion

Online safety during festive seasons requires shared responsibility among multiple parties. Digital resilience is strengthened through the combined efforts of platforms, financial institutions, regulators, and civil society organisations. The digital ecosystem becomes safer through three essential elements, which include awareness campaigns, stronger verification systems, and timely reporting mechanisms.

Valentine’s Day centres on the building of trust between people who want to connect with each other. To maintain trust in digital environments, users need to practice digital literacy skills, which should be shared by everyone. People who stay updated about cybersecurity threats can celebrate Valentine’s Day more safely, because their expressions of love remain protected from online scams.

References

- https://www.cloudsek.com/blog/valentines-day-cyber-attack-landscape-exploiting-love-through-digital-deception

- https://about.fb.com/news/2025/02/how-avoid-romance-scams-this-valentines-day/

- https://www.fbi.gov/contact-us/field-offices/sanfrancisco/fbi-san-francisco-warns-romance-scams-increasing-across-the-bay-area-this-valentines-day

- https://abc11.com/post/romance-scams-surge-ahead-valentines-day/18581079/

- https://www.moneycontrol.com/technology/5-common-online-scams-you-should-avoid-this-valentine-s-day-article-13820108.html