#FactCheck: Viral Fake Post Claims Central Government Offers Unemployment Allowance Under ‘PM Berojgari Bhatta Yojana’

Executive Summary:

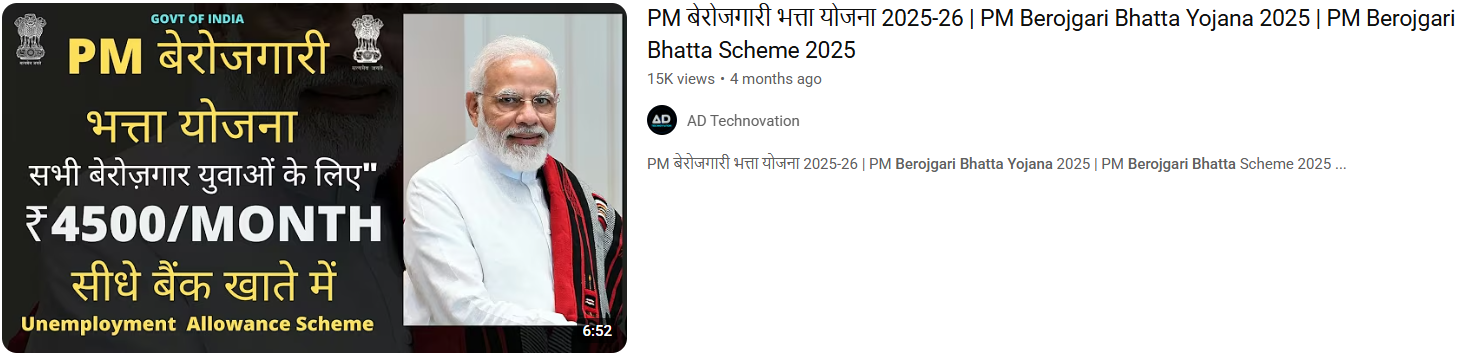

A viral thumbnail and numerous social posts state that the Government of India is giving unemployed youth ₹4,500 a month under a program labeled "PM Berojgari Bhatta Yojana." This claim has been shared on multiple online platforms and has given hope to many job seekers. However, when we independently researched the claim, we found no verified source for the scheme and no government notification.

Claim:

The viral post states: "The Central Government is conducting a scheme called PM Berojgari Bhatta Yojana in which any unemployed youth would be given ₹ 4,500 each month. Eligible candidates can apply online and get benefits." Several videos and posts show suspicious and unverified website links for registration, trying to get the general public to share their personal information.

Fact check:

In the course of our verification, we searched the official government portals, including those of the Ministry of Labour and Employment, PMO India, MyScheme, MyGov, and the Integrated Government Online Directory, which lists all legitimate Schemes, Programmes, Missions, and Applications run by the Government of India. None of them lists any scheme named PM Berojgari Bhatta Yojana.

Numerous YouTube channels appear to be monetizing false narratives by exploiting public sentiment, leading users to misleading websites. The purpose of these scams is typically either to harvest personal data or to drive traffic to pay-per-click ads by getting viewers to believe outrageous claims.

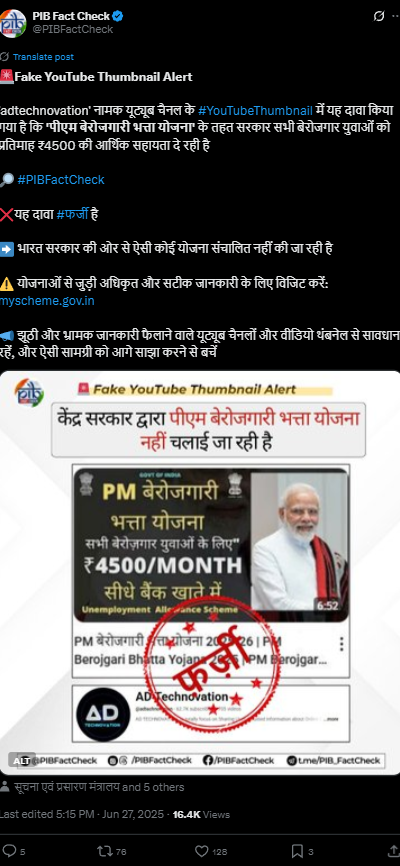

Our research findings were later backed up by PIB Fact Check, which shared a clarification on social media stating: “No such scheme called ‘PM Berojgari Bhatta Yojana’ is in existence. The claim that has gone viral is fake”.

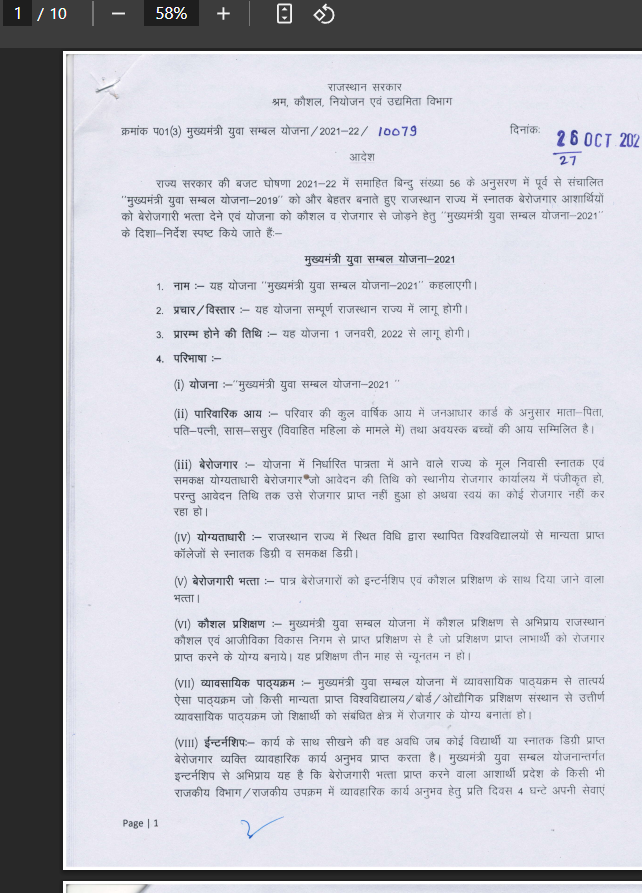

To provide some perspective, in 2021-22, the Rajasthan government launched a state-level program under the Mukhyamantri Udyog Sambal Yojana (MUSY) that provided ₹4,500/month to unemployed women and transgender persons, and ₹4,000/month to unemployed males. This was not a Central Government program, and the current viral claim falsely presents a past, state-level initiative as nationwide policy.

Conclusion:

The claim of a ₹4,500 monthly unemployment benefit under the PM Berojgari Bhatta Yojana is false. Neither the Central Government nor any government department has launched such a scheme. Our finding aligns with PIB Fact Check, which classifies this as a case of misinformation. We encourage everyone to be vigilant and avoid reacting to viral fake news. Verify claims through official sources before sharing or taking action. Let's work together to curb misinformation and protect citizens from false hopes and data fraud.

- Claim: A central policy offers jobless individuals ₹4,500 monthly financial relief

- Claimed On: Social Media

- Fact Check: False and Misleading
