#FactCheck - AI-Generated Image Falsely Linked to Kotdwar Shop Controversy

Executive Summary

A dispute recently emerged in Kotdwar, Uttarakhand, over the name of a shop. During the controversy, a local youth, Deepak Kumar, came forward in support of the shopkeeper. The incident subsequently became a subject of discussion on social media, drawing varied reactions from users. Meanwhile, a photo began circulating on social media showing a burqa-clad woman presenting a bouquet to Deepak Kumar. The image is being shared with the claim that All India Majlis-e-Ittehadul Muslimeen (AIMIM)’s women’s president, Rubina, welcomed “Mohammad Deepak Kumar” by presenting him with a bouquet. However, research conducted by CyberPeace found the viral claim to be false: users are sharing an AI-generated image with a misleading claim.

Claim:

On Instagram, a user shared the viral image, claiming that AIMIM’s women’s president Rubina welcomed “Mohammad Deepak Kumar” by presenting him with a bouquet. The link to the post, its archived version, and a screenshot are provided below.

Fact Check:

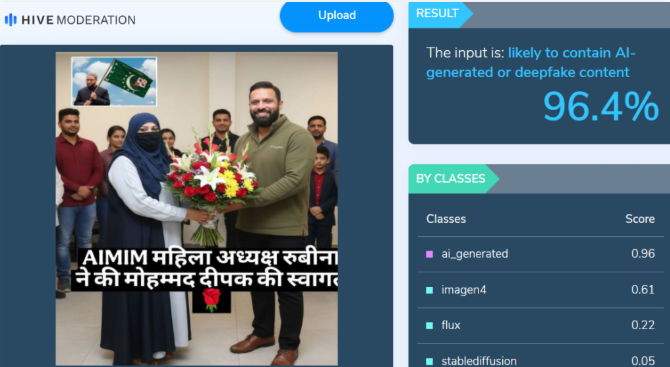

Upon closely examining the viral image, certain inconsistencies raised suspicion that it could be AI-generated. To verify its authenticity, the image was analysed using the AI detection tool Hive Moderation, which indicated a 96 percent probability that the image was AI-generated.

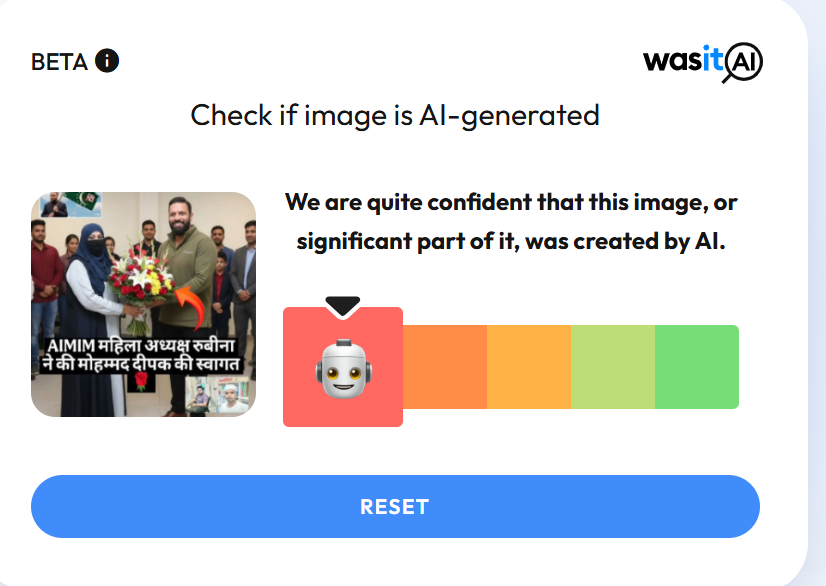

In the next stage of the research, the image was also analysed using another AI detection tool, Wasit AI, which likewise identified the image as AI-generated.

Conclusion

The research establishes that users are circulating an AI-generated image with a misleading claim linking it to the Kotdwar controversy.

Related Blogs


Introduction

The rise of unreliable social media newsgroups on online platforms has significantly altered the way people consume and interact with news, contributing to the spread of misinformation and making unverified and misleading content widely accessible. Social media newsgroups (SMNs) are social media platforms used as sources of news and information. Social media has transformed individuals into active content creators. Unlike traditional news outlets that adhere to journalistic standards, these newsgroups often lack proper fact-checking and editorial oversight, leading to the rapid dissemination of false or distorted information. According to a Pew Research Center survey (July-August 2024), 54% of U.S. adults at least sometimes get news from social media. This rise of SMNs has raised concerns about the integrity of online news and has undermined trust in legitimate news sources. Social media users are advised to consume information and news from authentic sources or channels available on social media platforms.

The Growing Issue of Misinformation in Social Media Newsgroups

Social media newsgroups have become both a source of vital information and a conduit for misinformation. While these platforms allow rapid news sharing and facilitate political and social campaigns, they also carry significant risks of spreading unverified information. Misleading information, often driven by algorithms designed to maximise user engagement, proliferates in these spaces. This creates growing challenges, as SMNs cater to diverse communities with varying political affiliations, gender demographics, and interests. The result is sometimes an echo chamber where information is not critically assessed, amplifying confirmation bias and enabling the unchecked spread of misinformation. A prominent example is the false narratives about COVID-19 vaccines that spread across SMNs, contributing to widespread vaccine hesitancy and public health risks.

Understanding the Susceptibility of Online Newsgroups to Misinformation

Several factors make social media newsgroups particularly susceptible to misinformation. Some of the factors are listed below:

- The lack of robust fact-checking mechanisms in social media newsgroups allows false narratives to spread easily.

- Admins of online newsgroups are often regular users without journalism training, and this lack of expertise can result in inaccurate information being shared. Their primary goal of increasing engagement may overshadow concerns about accuracy and credibility.

- User anonymity exacerbates the problem of misinformation by allowing users to share unverified or misleading content without accountability.

- The viral nature of social media means misinformation can reach vast audiences almost instantly, often outpacing efforts to correct it.

- Unlike traditional media outlets, online newsgroups often lack formal fact-checking processes. This absence allows misinformation to circulate without verification, making it easier for inaccuracies to go unchallenged.

- The sheer volume of user posts makes effective content moderation a significant challenge.

- Social media platforms use algorithms designed to enhance user engagement, and these can inadvertently amplify sensational or emotionally charged content, which is more likely to be false.

Consequences of Misinformation in Newsgroups

The societal impacts of misinformation in SMNs are profound. Misinformation can fuel political polarisation, entrenching one-sided views and creating deep divides in democratic societies. Health risks emerge when false information spreads about critical issues, as seen in anti-vaccine movements and misinformation during public health crises. In the long term, misinformation has the potential to destabilise governments and erode trust in both traditional and social media, undermining democracy. If unaddressed, these consequences could continue to ripple through society, perpetuating false narratives that shape public opinion.

Steps to Mitigate Misinformation in Social Media Newsgroups

- Social media literacy education can empower users to critically assess the information they encounter, reducing the spread of false narratives.

- Introducing stricter platform policies, including penalties for deliberately sharing misinformation, may act as a deterrent against sharing unverified information.

- Collaborative fact-checking initiatives with involvement from social media platforms, independent journalists, and expert organisations can provide a unified front against the spread of false information.

- From a policy perspective, a holistic approach that combines platform responsibility with user education and governmental and industry oversight is essential to curbing the spread of misinformation in social media newsgroups.

Conclusion

The emergence of social media newsgroups has revolutionised the dissemination of information. However, it has also enabled the rapid spread of misinformation, which poses a significant challenge to the integrity of news in the digital age. The problem is further amplified by algorithmic echo chambers and unchecked user engagement, and its societal implications are profound. A multi-faceted approach is required to tackle these issues, combining stringent platform policies, AI-driven moderation, and collaborative fact-checking initiatives. Empowering users through media literacy is an important factor in promoting critical thinking and building cognitive defences. By adopting these measures, we can better navigate the complexities of consuming news from social media newsgroups and preserve the reliability of online information. Furthermore, users need to consume news from authoritative sources available on social media platforms.


Executive Summary

A video of Dr. Samir V. Kamat, Chairman of the Defence Research and Development Organisation (DRDO), is going viral on social media. In the clip, he appears to claim that Prime Minister Narendra Modi instructed scientists to wash the Agni-6 missile with cow urine, and later to use a mixture of cow dung and urine to prevent rusting. Research by the CyberPeace Research Wing found that the video is a deepfake, created by manipulating original footage using AI tools. The video was shared by an account that has previously posted anti-India misinformation and is reportedly banned in India.

Claim

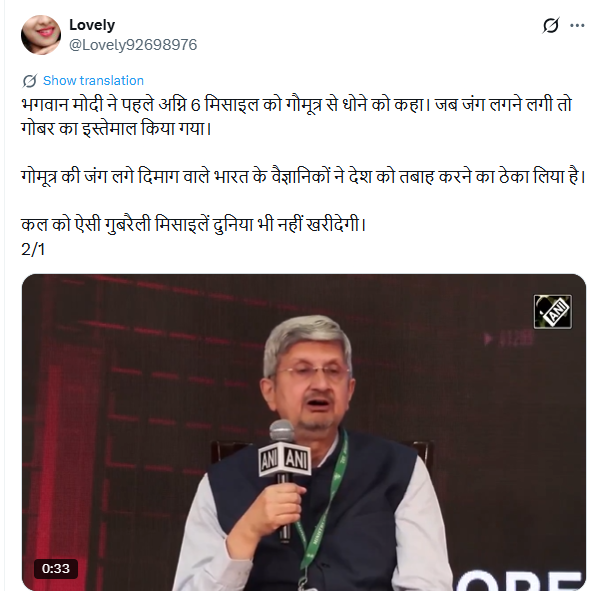

An X user named “Lovely” shared the video on May 1, 2026, alleging that Indian scientists were using cow urine and dung in missile development under government direction. The post used derogatory language and criticized India’s scientific community.

Fact Check

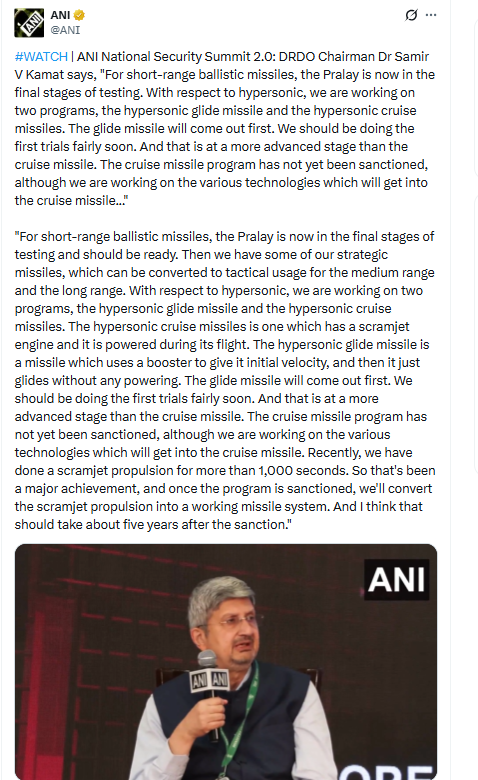

To verify the claim, we searched relevant keywords on Google but found no credible media reports supporting such statements by the DRDO chief. We then extracted keyframes from the viral clip and conducted a reverse image search using Google Lens. This led us to the original video posted by ANI on April 30, 2026. The footage is from the National Security Summit 2.0, where Dr. Kamat spoke about India’s missile development programs.

In the authentic video, Dr. Kamat discusses short-range ballistic missiles such as ‘Pralay’, as well as advancements in hypersonic glide and cruise missile technologies, including scramjet propulsion. There is no mention of cow urine, cow dung, or any such practices.

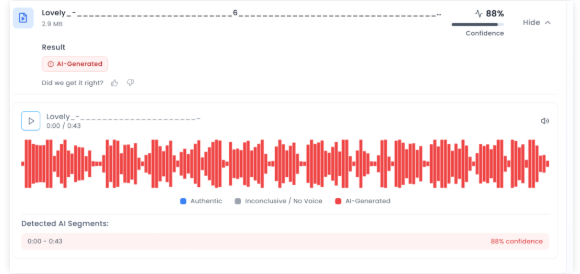

Further analysis using the AI detection tool Aurigin indicated an 88% probability that the viral video was AI-generated or manipulated.

Conclusion

Our research confirms that the viral video is fake and AI-manipulated. Dr. Samir V. Kamat never made any statement about washing missiles with cow urine. The clip is a deepfake created to spread misinformation and mislead viewers.

Introduction

In the digital realm of social media, Meta Platforms, the driving force behind Facebook and Instagram, faces intense scrutiny following The Wall Street Journal's investigative report. This exploration delves deeper into critical issues surrounding child safety on these widespread platforms, unravelling algorithmic intricacies, enforcement dilemmas, and the ethical maze surrounding monetisation features. Instances of "parent-managed minor accounts" leveraging Meta's subscription tools to monetise content featuring young individuals have raised eyebrows. While skirting the line of legality, this practice prompts concerns due to its potential appeal to adults and the associated inappropriate interactions. It's a nuanced issue demanding nuanced solutions.

Failed Algorithms

The very heartbeat of Meta's digital ecosystem, its algorithms, has come under intense scrutiny. These algorithms, designed to curate and deliver content, were found to be actively promoting accounts featuring explicit content to users with known pedophilic interests. The revelation sparks a crucial conversation about the ethical responsibilities tied to the algorithms shaping our digital experiences. Striking the right balance between personalised content delivery and safeguarding users is a delicate task.

While algorithms play a pivotal role in tailoring content to users' preferences, Meta needs to reevaluate the algorithms to ensure they don't inadvertently promote inappropriate content. Stricter checks and balances within the algorithmic framework can help prevent the inadvertent amplification of content that may exploit or endanger minors.

Major Enforcement Challenges

Meta's enforcement challenges have come to light as previously banned parent-run accounts have resurfaced, gaining official verification and accumulating large followings. Meta's difficulty in removing associated backup profiles deepens concerns about the effectiveness of its enforcement mechanisms. It underscores the need for a robust system capable of swift and thorough action against policy violators.

To enhance enforcement mechanisms, Meta should invest in advanced content detection tools and employ a dedicated team for consistent monitoring. This proactive approach can mitigate the risks associated with inappropriate content and reinforce a safer online environment for all users.

The financial dynamics of Meta's ecosystem raise concerns about the exploitation of videos that are eligible for cash gifts from followers. The decision to expand the subscription feature before implementing adequate safety measures poses ethical questions. Prioritising financial gains over user safety risks tarnishing the platform's reputation and trustworthiness. A re-evaluation of this strategy is crucial for maintaining a healthy and secure online environment.

To address safety concerns tied to monetisation features, Meta should consider implementing stricter eligibility criteria for content creators. Verifying the legitimacy and appropriateness of content before allowing it to be monetised can act as a preventive measure against the exploitation of the system.

Meta's Response

In the aftermath of the revelations, Meta's spokesperson, Andy Stone, took centre stage to defend the company's actions. Stone emphasised ongoing efforts to enhance safety measures, asserting Meta's commitment to rectifying the situation. However, critics argue that Meta's response lacks the decisive actions required to align with industry standards observed on other platforms. The debate continues over the delicate balance between user safety and the pursuit of financial gain. A more transparent and accountable approach to addressing these concerns is imperative.

To rebuild trust and credibility, Meta needs to implement concrete and visible changes. This includes transparent communication about the steps taken to address the identified issues, continuous updates on progress, and a commitment to a user-centric approach that prioritises safety over financial interests.

The formation of a task force in June 2023 was a commendable step to tackle child sexualisation on the platform. However, the effectiveness of these efforts remains limited. Persistent challenges in detecting and preventing potential child safety hazards underscore the need for continuous improvement. Legislative scrutiny adds an extra layer of pressure, emphasising the urgency for Meta to enhance its strategies for user protection.

To overcome ongoing challenges, Meta should collaborate with external child safety organisations, experts, and regulators. Open dialogues and partnerships can provide valuable insights and recommendations, fostering a collaborative approach to creating a safer online environment.

Drawing a parallel with competitors such as Patreon and OnlyFans reveals stark differences in child safety practices. While Meta grapples with its challenges, these platforms maintain stringent policies against certain content involving minors. This comparison underscores the need for universal industry standards to safeguard minors effectively. Collaborative efforts within the industry to establish and adhere to such standards can contribute to a safer digital environment for all.

To align with industry standards, Meta should actively participate in cross-industry collaborations and adopt best practices from platforms with successful child safety measures. This collaborative approach ensures a unified effort to protect users across various digital platforms.

Conclusion

Navigating the intricate landscape of child safety concerns on Meta Platforms demands a nuanced and comprehensive approach. The identified algorithmic failures, enforcement challenges, and controversies surrounding monetisation features underscore the urgency for Meta to reassess and fortify its commitment to being a responsible digital space. As the platform faces this critical examination, it has an opportunity not only to rectify the existing issues but also to set a precedent for ethical and secure social media engagement.

This comprehensive exploration aims not only to shed light on the existing issues but also to provide a roadmap for Meta Platforms to evolve into a safer and more responsible digital space. The responsibility lies not just in acknowledging shortcomings but in actively working towards solutions that prioritise the well-being of its users.

References

- https://timesofindia.indiatimes.com/gadgets-news/instagram-facebook-prioritised-money-over-child-safety-claims-report/articleshow/107952778.cms

- https://www.adweek.com/blognetwork/meta-staff-found-instagram-tool-enabled-child-exploitation-the-company-pressed-ahead-anyway/107604/

- https://www.tbsnews.net/tech/meta-staff-found-instagram-subscription-tool-facilitated-child-exploitation-yet-company