#FactCheck - Video Claimed to Show Italian PM Congratulating Hindus on Ram Temple Is Actually a Birthday Thank-You

Executive Summary:

Misinformation is spreading across social media as users share a mistranslated video that purportedly shows Italian Prime Minister Giorgia Meloni congratulating Indian Hindus on the inauguration of the Ram Temple in Ayodhya, Uttar Pradesh. The CyberPeace Research Team's investigation found that the claim is false. In the video, Meloni is actually thanking those who wished her a happy birthday.

Claims:

An X (formerly Twitter) user shared a 13-second video of Italian Prime Minister Giorgia Meloni speaking in Italian, claiming that she was congratulating India on the construction of the Ram Mandir. The caption reads:

“Italian PM Giorgia Meloni Message to Hindus for Ram Mandir #RamMandirPranPratishta. #Translation : Best wishes to the Hindus in India and around the world on the Pran Pratistha ceremony. By restoring your prestige after hundreds of years of struggle, you have set an example for the world. Lots of love.”

Fact Check:

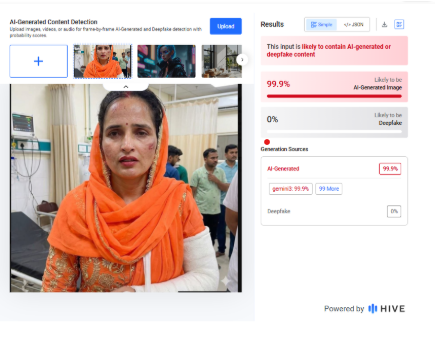

The CyberPeace Research Team first extracted a transcript of the video using an AI transcription tool and then ran that transcript through Google Translate. The result did not match the translation given in the viral caption.

The translation reads, “Thank you all for the birthday wishes you sent me privately with posts on social media, a lot of encouragement which I will treasure, you are my strength, I love you.”

This confirms that the video is not a congratulatory message but a thank-you message to everyone who sent the Prime Minister birthday wishes.
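The mismatch between the two translations can also be illustrated with a simple word-overlap check. The sketch below is only an illustration and not the tool the team used: the function names, the small stopword list, and the choice of Jaccard similarity are all assumptions made for this example. It compares the translation claimed in the viral caption with the machine translation quoted above.

```python
import re

# A small, illustrative stopword list (an assumption, not a standard list).
STOPWORDS = {
    "the", "and", "for", "you", "your", "with", "which", "will",
    "are", "have", "all", "after",
}


def content_words(text):
    """Return the set of lowercase words longer than two letters, minus stopwords."""
    words = re.findall(r"[a-z]+", text.lower())
    return {w for w in words if len(w) > 2 and w not in STOPWORDS}


def jaccard_similarity(text_a, text_b):
    """Share of content words the two texts have in common (0.0 to 1.0)."""
    words_a, words_b = content_words(text_a), content_words(text_b)
    if not words_a or not words_b:
        return 0.0
    return len(words_a & words_b) / len(words_a | words_b)


# Translation claimed in the viral caption.
claimed = (
    "Best wishes to the Hindus in India and around the world on the Pran "
    "Pratistha ceremony. By restoring your prestige after hundreds of years "
    "of struggle, you have set an example for the world. Lots of love."
)

# Machine translation of the video transcript, as quoted in this fact check.
machine = (
    "Thank you all for the birthday wishes you sent me privately with posts "
    "on social media, a lot of encouragement which I will treasure, you are "
    "my strength, I love you."
)

score = jaccard_similarity(claimed, machine)
print(f"Word overlap between claimed and machine translation: {score:.2f}")
print("Shared content words:", sorted(content_words(claimed) & content_words(machine)))
```

A low overlap score on its own does not prove a caption is false, since two honest translations can use different wording. It is only a quick signal that the claimed text and the actual speech may be about different things and that a closer manual check is needed.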

We then ran a reverse image search on frames from the video and found the original video on the Prime Minister's official X handle, uploaded on 15 Jan, 2024 with the caption “Grazie. Siete la mia”, which translates to “Thank you. You are my strength!”

Conclusion:

The 13-second video had a wide reach on X, and many users reshared it with similar captions. A misunderstanding that starts with a single post can spread quickly. The claim made in the X user's caption is misleading and has no connection with what Italian Prime Minister Giorgia Meloni actually says in the video. Hence, the post is fake and misleading.

- Claim: Italian Prime Minister Giorgia Meloni congratulated Hindus in the context of Ram Mandir

- Claimed on: X

- Fact Check: Fake