#FactCheck- Viral Kapil Mishra Video on 50% Attendance Not Recent

Executive Summary

A video of Delhi government cabinet minister Kapil Mishra is being shared on social media. In the clip, he can be heard saying that from the next day, only 50 percent attendance will be allowed in offices, while the remaining 50 percent of employees will work from home. He also states that all institutions must comply with this. Users are sharing the video as a recent development. However, research by CyberPeace found the viral claim to be misleading. Our research revealed that the video is not recent but dates back to December 2025.

Claim:

An Instagram user shared the viral video on March 24, 2026. The link to the post is given below.

Fact Check:

To verify the claim, we conducted a keyword search on Google. During this process, we found a report published on December 17, 2025, on NDTV Hindi. According to the report, the Delhi government had made 50 percent work-from-home mandatory in government offices due to severe air pollution. Additional restrictions were also imposed under GRAP Stage IV.

Further, we found the original video on the official social media handle of BJP Delhi. In this video, Kapil Mishra can be heard stating that 50 percent work-from-home has been made mandatory in all government and private offices in Delhi, while health and other essential services have been exempted from this arrangement.

Conclusion:

Our research found that the viral video is not recent. It is from December 2025 and is being shared with a misleading claim.

Related Blogs

Executive Summary

A video of Prime Minister Narendra Modi is being widely shared on social media, in which he appears to announce that all ration card holders will receive free mobile phones, provided no member of their family is a government employee. However, research by CyberPeace has found this claim to be false. Our research reveals that the viral video is AI-generated and does not reflect any real announcement.

Claim:

An Instagram user shared the viral video with the caption, “If you have a ration card, you will get a free mobile phone.”

- Post link: https://www.instagram.com/reels/DWqDKWxy6lJ/

- Archived link: https://archive.ph/wip/dmpIf

Fact Check

To verify the claim, we first conducted a keyword-based search on Google. However, we did not find any credible media reports supporting such an announcement, raising doubts about the authenticity of the video. We then checked the official government welfare schemes portal, myscheme.gov.in, which provides verified information about central government schemes. No such scheme offering free mobile phones to ration card holders was found on the platform.

Conclusion

Our research confirms that the viral video is fake and AI-generated. There is no official announcement or credible report suggesting that ration card holders will receive free mobile phones under any government scheme. The video has been digitally manipulated using artificial intelligence and is being circulated with a misleading claim. This serves as another example of how AI-generated content can be used to spread misinformation.

Executive Summary

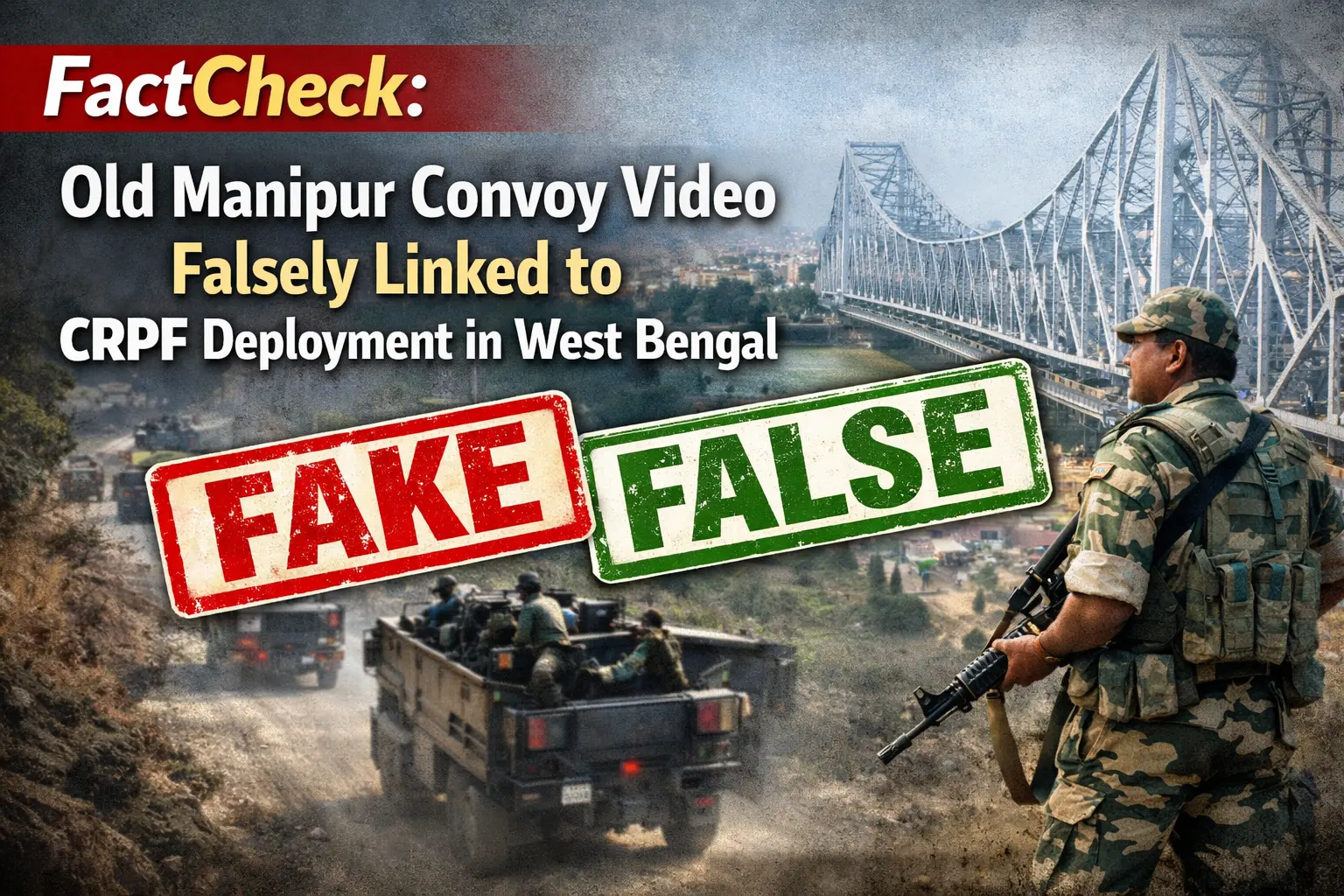

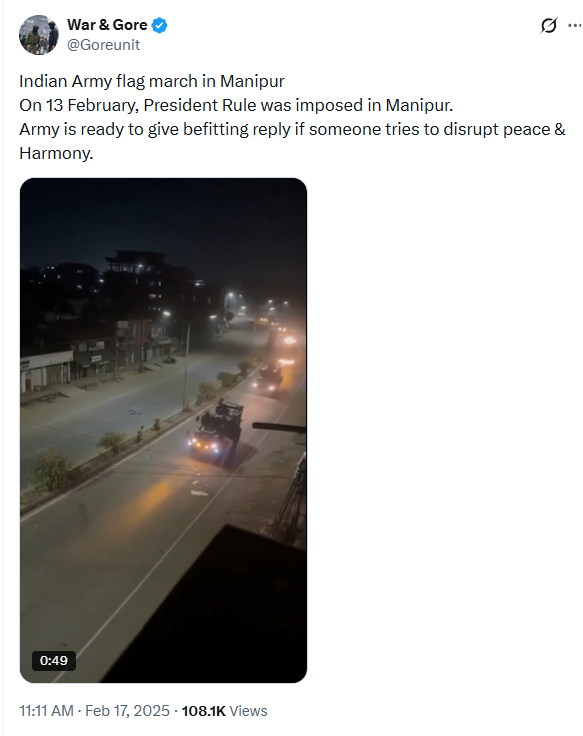

A video showing a military convoy moving along a road is being widely circulated on social media with the claim that the entry of CRPF forces into West Bengal has changed the situation on the ground, suggesting strict action is underway during the ongoing elections. However, research by CyberPeace found the claim to be misleading. The video is not recent and has been available online since February 2025.

Claim

The 12-second viral clip shows multiple heavy vehicles moving in a convoy on a road. It has been shared on X (formerly Twitter) with a caption claiming that CRPF’s entry into West Bengal has led to a shift from dialogue to strong action, along with communal assertions.

Fact Check

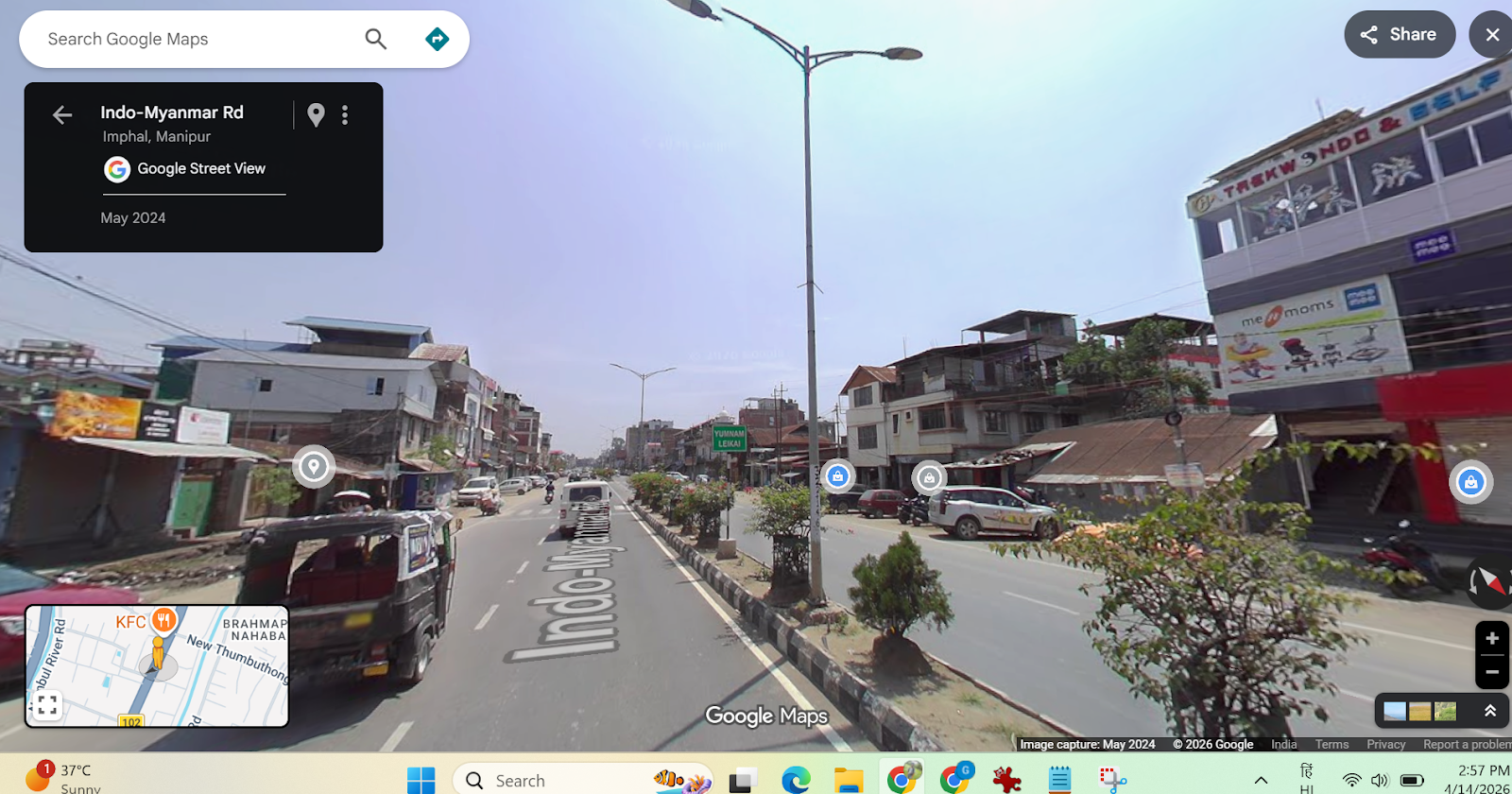

During the verification process, we found that the same video had been posted by several X users around February 17, 2025. In those earlier posts, the video was described as being from Manipur, not West Bengal.

Further analysis revealed that the video contains background audio in the Manipuri language. To confirm this, we contacted a Manipuri journalist, who stated that the audio includes announcements asking people to stay indoors and avoid gathering on the streets. Notably, this audio is missing in the currently viral version of the clip.

Although we could not independently verify the exact date and precise location of the footage, visual elements such as road dividers and streetlight patterns closely resemble those found in Imphal, the capital city of Manipur.

Additionally, reports confirm that central armed police forces have indeed been deployed in West Bengal for election duties in multiple phases. However, there is no evidence linking this specific video to those deployments.

Conclusion

The viral claim is misleading. The video does not show CRPF deployment in West Bengal during the ongoing elections. Instead, it appears to be an older clip from Manipur, likely recorded in early 2025, and has been shared with a false and communal narrative. There is no credible evidence to support the claim made alongside the video. Users are advised to verify content before sharing, especially during sensitive events like elections.

Introduction

Social media has become integral to our lives and livelihoods in today’s digital world. Influencers are now powerful figures who shape trends, views, and consumer behaviour. Because of their significant internet presence, they have become targets for bad actors aiming to abuse their fame. Unfortunately, account hacking has grown more frequent, with significant ramifications for influencers and their followers. The emergence of social media platforms in recent years has paved the way for influencer culture: influencers shape their followers’ ideas, lifestyle choices, and purchase decisions, and brands frequently collaborate with them to leverage their reach, creating a mutually beneficial environment. As a result, the value of influencer accounts has risen dramatically, attracting the attention of hackers trying to exploit them for financial gain or personal advantage.

Instances of recent attacks

Places of worship

Hackers have targeted renowned temples for their malicious activities. A recent attack hit the Khautji Shyam Temple, a famous religious institution with enormous cultural and spiritual value for its adherents. It serves as a place of worship and hosts community events and numerous religious activities. As technology has entered every sector of life, the temple’s online presence has grown, giving worshippers access to information, virtual darshans (holy viewings), and interactive forums. Unfortunately, this digital growth has also left the shrine vulnerable to cyber threats. The hackers compromised the temple’s Facebook page twice in one month. They demanded donations and hacked the cheques that devotees had given to the trust. In the second incident, they posted objectionable images on the page, hurting the sentiments of the devotees. The temple committee has filed an FIR under various charges and is also seeking help from the cyber cell.

Social media Influencers

Influencers enjoy a vast online following worldwide, but their presence is limited to the digital space. Hence every video and photo is important to them. One incident involved leading news anchor and reporter Barkha Dutt, whose YouTube channel was hacked and all the posts made from the channel were deleted. The hackers also replaced the channel’s logo with Tesla’s and streamed a live video on the channel featuring Elon Musk. A similar incident was reported by influencer Tanmay Bhatt, who also lost all the content he had posted on his channel. Hackers use the following methods to con social media influencers:

- Social engineering

- Phishing

- Brute Force Attacks

Such attacks can damage an influencer’s reputation, cause financial loss, and erode the trust of viewers and followers, which in turn affects brand collaborations.
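Of the methods listed above, brute force is the easiest to put into numbers. The sketch below is illustrative only: it assumes an attacker who must try every combination, an 8-letter lowercase password, and a four-word passphrase chosen at random from a hypothetical 7,776-word list. None of these figures come from the incidents described here.

```python
import math


def search_space(symbols: int, length: int) -> int:
    """Worst-case number of guesses when each of `length` positions
    is chosen independently from `symbols` possibilities."""
    return symbols ** length


def entropy_bits(symbols: int, length: int) -> float:
    """The same search space expressed in bits (log base 2)."""
    return length * math.log2(symbols)


# Assumption: 8 random lowercase letters (26 possible symbols per position).
password_guesses = search_space(26, 8)

# Assumption: 4 random words from a hypothetical 7,776-word list.
passphrase_guesses = search_space(7776, 4)

if __name__ == "__main__":
    print(f"8-letter password : {password_guesses:,} guesses")
    print(f"4-word passphrase : {passphrase_guesses:,} guesses")
    print(f"ratio             : {passphrase_guesses / password_guesses:,.0f}x")
```

Under these assumptions the random four-word passphrase leaves an attacker with a far larger space to search than the short password, which is the reasoning behind preferring long passphrases.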

Safeguards

Social media influencers need to be very careful about their cyber security because their presence is primarily online. Influencers on every platform should practise the following safeguards to better protect themselves and their content online:

Secure your accounts

Protecting your accounts with passphrases or strong passwords is the first step. The best strategy for doing this is to create a passphrase, a phrase only you know. We advise choosing a passphrase with at least four words and 15 characters.
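The four-word, 15-character guideline can be checked mechanically. The following is a minimal sketch: the function name `meets_passphrase_rule` is hypothetical, and it assumes that words are separated by whitespace and that spaces count towards the character total. It checks length only and says nothing about how guessable the words themselves are.

```python
def meets_passphrase_rule(passphrase: str,
                          min_words: int = 4,
                          min_chars: int = 15) -> bool:
    """Return True if the passphrase has at least `min_words` words
    and at least `min_chars` characters.

    Assumptions: words are whitespace-separated, and spaces inside
    the passphrase count as characters (leading/trailing ones do not).
    """
    cleaned = passphrase.strip()
    words = cleaned.split()
    return len(words) >= min_words and len(cleaned) >= min_chars


if __name__ == "__main__":
    for candidate in ("a b c d", "sunshine2024", "quiet river paints marble"):
        print(repr(candidate), meets_passphrase_rule(candidate))
```

A check like this could sit in a sign-up form or a team onboarding script, but it is only a floor: a passphrase built from a famous quote passes the rule and is still easy to guess.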

The second step is to enable multi-factor authentication to further secure your accounts.

With it enabled, a hacker would have to guess your password and also provide a second authentication factor (such as a face scan or fingerprint) that matches yours before gaining access to your account.
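To illustrate why a second factor helps, here is a hedged sketch of a login check that requires both a correct password and a matching one-time code. The text above names biometric factors. A time-based one-time code (in the style of RFC 6238) is swapped in here only because it fits in a few lines. The function names and the shared secret are hypothetical, and a real service would rely on its platform's built-in MFA rather than code like this.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time code for a given counter value."""
    message = struct.pack(">Q", counter)            # 8-byte big-endian counter
    digest = hmac.new(secret, message, hashlib.sha1).digest()
    offset = digest[-1] & 0x0F                      # dynamic truncation offset
    chunk = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(chunk % 10 ** digits).zfill(digits)


def totp(secret: bytes, at: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Time-based code: the counter is the number of `step`-second
    intervals since the Unix epoch."""
    now = time.time() if at is None else at
    return hotp(secret, int(now // step), digits)


def login_allowed(password_ok: bool, submitted_code: str,
                  secret: bytes, at: Optional[float] = None) -> bool:
    """Both factors must pass: the password check and the one-time code."""
    expected = totp(secret, at)
    return password_ok and hmac.compare_digest(submitted_code, expected)


if __name__ == "__main__":
    demo_secret = b"hypothetical-shared-secret"     # illustration only
    code = totp(demo_secret)
    print("correct password + correct code:", login_allowed(True, code, demo_secret))
    print("stolen password, wrong code    :", login_allowed(True, "000000", demo_secret))
```

The point of the design is that a leaked or guessed password alone fails `login_allowed`; the attacker would also need the secret held on the account owner's device.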

Be careful about who has access

Many social media influencers collaborate with a team to help generate and post content while building their personal brands.

For some of them, this means relying on team members who write and produce material that the influencer then shares personally. In these situations, the influencer remains the only person with access to the account.

The more people who have access, the more potential weak spots there are, and the more ways a password or account access could fall into the hands of a cybercriminal. Not every staff member will be as cautious about password security as you are.

Stay up-to-date on the threats

What’s the most effective way to combat threats to computer security? Information.

Cybercriminals constantly adapt their methods. It’s crucial to stay informed about these threats and how they can be used against you.

But it’s not just threats that change. Social media platforms and other service providers are likewise updating their offerings to counter them.

Educate yourself to protect yourself. You can keep one step ahead of the hazards that cybercriminals pose by continuously educating yourself.

Preach cybersecurity

As an influencer, you should promote cyber security, no matter your agenda.

This will help your followers adopt best practices for digital hygiene.

It will also boost reporting numbers and increase public awareness, helping to remove such bad actors from our cyberspace.

Acknowledge the risks

Turning a blind eye always undermines safety, because ignorance leads to problems.

Keep risks in mind when setting up your digital routine and netiquette.

Always inform your followers of existing and potential risks.

Monitor threats

Once the risks are acknowledged, it is essential to monitor threats.

Actively looking out for threats helps you understand criminals’ modus operandi and the vulnerabilities they exploit, so that you can avoid them.

Threat monitoring is also a basic responsibility of every netizen, ensuring that threats are reported as they emerge.

Interpret the data

Cyber nodal agencies release data and trends on cybercrime. Understand these trends and address your vulnerabilities accordingly.

Data interpretation can lead to early flagging of threats and issues, thus protecting the cyber ecosystem at large.

Create risk profiles

All influencers should create risk profiles and backup profiles.

This will also help protect one’s data as it can be stored on different profiles.

Risk profiles and having a private profile are essential to safeguard the basic cyber interests of an influencer.

Conclusion

As we go deeper into the digital age, we see more technologies emerging, and along with them a new generation of cyber threats and challenges. The physical world and cyberspace are now intertwined and interdependent. Practising basic cyber security, hygiene, netiquette, and threat monitoring will go a long way in protecting the online interests of influencers, and will encourage their followers to adopt best practices, thus safeguarding the cyber ecosystem at large.