#FactCheck: Edited Broadcast Misused to Spread False Assam Political Rift Claim

Executive Summary:

A video from an India TV news show related to the Assam elections is going viral on social media. In the clip, anchor Meenakshi Joshi is allegedly seen claiming that there is a rift between the BJP and the RSS in Assam. The video further suggests that RSS chief Mohan Bhagwat wrote a letter to Prime Minister Narendra Modi stating that former Congress members have taken over the BJP, and that RSS volunteers would not work for the party in Assam. However, research by CyberPeace found that the viral video is edited and misleading. The original video contains no such claims.

Claim:

A social media user, Ajit Singh, shared the video on X with the caption: “The core idea of today’s BJP is to capture power by any means. We have been saying this for long, and now even RSS has accepted that BJP in Assam has been taken over by Congress mindset.”

Fact Check:

To verify the claim, we searched for relevant keywords about the alleged letter from RSS chief Mohan Bhagwat to Prime Minister Narendra Modi. However, we found no credible media reports supporting this claim. We then checked the YouTube channel of India TV but could not find the viral clip there. During the search, we did find a similar video from Meenakshi Joshi’s show. The portion seen in the viral clip appears at the beginning of that video.

In the original video, the anchor is discussing the announcement of election dates in five states. There is no mention of any rift between the BJP and RSS in Assam.

Conclusion:

The viral India TV video claiming a rift between the BJP and RSS in Assam is edited and misleading. The original broadcast was about election dates in five states and did not include any such claims.

Related Blogs

Introduction

The US National Cybersecurity Strategy was released at the beginning of March this year. Its aim is to build a more defensible and resilient digital ecosystem through broad investment in cybersecurity infrastructure. The strategy emphasises investing in a resilient future, expanding digital diplomacy and private-sector partnerships, regulating crucial industries, and holding software companies accountable if their products let hackers in.

What is the cybersecurity strategy

A cybersecurity strategy is a plan that organisations follow to defend against cyberattacks and cyber threats, and to assess future risks in a resilient way. With such a strategy in place, appropriate defences against cyber threats can be built.

US National Cybersecurity Strategy-

The national cybersecurity strategy rests on five pillars-

- Critical infrastructure- The national cybersecurity strategy intends to defend important infrastructure, for example hospitals and clean energy installations, from cyberattacks. This pillar mainly focuses on the security and resilience of critical systems and services.

- Disrupt and dismantle threat actors- This pillar seeks to identify and disrupt cyber attackers who endanger national security and public safety.

- Shape market forces to drive security and resilience.

- Invest in a resilient future.

- Forging international partnerships to pursue shared goals.

Need for a National cybersecurity strategy in India –

India is becoming more reliant on technology for day-to-day activities such as communication and banking. According to the Indian Computer Emergency Response Team (CERT-In), ransomware attacks in India increased by 50% in 2022. Cybercrimes against individuals are also rising rapidly. To build a safe cyberspace and develop trust and confidence in IT systems, India also requires a national cybersecurity strategy.

Learnings for India-

India already has a cybersecurity strategy, but it could strengthen and implement it along the lines of the one the US has just released. Better threat assessment and more resilient outcomes require reducing cybercrime and cyber threats in India.

Shortcomings of the US National Cybersecurity Strategy-

The implementation of the US National Cybersecurity Strategy has some problems and areas that could be improved. Some of them are as follows:

- Significant difficulties: The cybersecurity strategy has proved difficult for government entities to implement. The guidelines provided do not match the complexity and growth of cyber threats.

- Insufficient to resolve key points: the implementation does not resolve some aspects that the national cybersecurity strategy and its implementation plan were meant to address, for example clearly defined goals and resource allocation.

- Lack of specific objectives: the guidelines should track cybersecurity progress, and the implementation should define specific objectives.

- Implementation alone is insufficient: cyberattacks and cybercrimes are increasing daily, and to meet this danger the US cybersecurity strategy cannot depend on implementation alone. Legislation that supports public-private collaboration, together with technological advancement, is also required.

- The strategy calls for critical infrastructure owners and software companies to meet minimum security standards and be held liable for flaws in their products, but the implementation and enforcement of these standards and liability measures must be clearly defined.

Conclusion

There is a legitimate need for a national cybersecurity strategy to guard against the future consequences of a cyber pandemic and to plan proper strategies and defences. It is crucial to make use of the techniques available under such a strategy. India is increasingly dependent on technology, and cybercrime is rising against individuals as well as in the healthcare and education sectors. Resolving these complexities requires proper implementation.

Executive Summary

A video circulating on social media claims that Iran’s new Supreme Leader Mojtaba Khamenei has passed away, with users attributing the claim to American sources. However, research by CyberPeace found the claim to be false. Our research confirms that Mojtaba Khamenei is alive and in good health.

Claim

A Facebook user shared the viral video, claiming that Iran’s new Supreme Leader Mojtaba Khamenei had died.

Fact Check

To verify the claim, we conducted keyword searches on Google but found no credible media reports confirming his death. Further research led us to a report published on April 10, 2026, by ABP News. According to the report, amid discussions around a ceasefire, Mojtaba Khamenei issued a statement saying that Iran does not seek war with the United States or Israel, but as a nation, it must defend its rights.

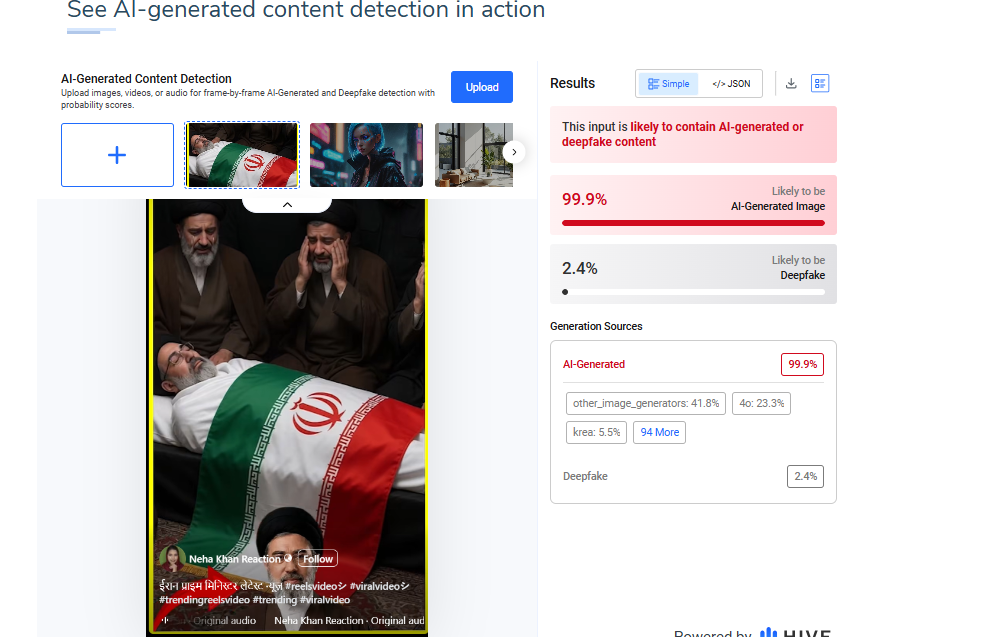

Additionally, the image used in the viral video was analyzed using the AI detection tool HIVE Moderation. The results indicated a 99% probability that the image is AI-generated.

Conclusion

The viral claim is false and misleading. There is no credible evidence to suggest that Mojtaba Khamenei has died. On the contrary, recent verified reports confirm that he is alive and has even issued public statements on ongoing geopolitical developments. The widespread circulation of this claim appears to be driven by misinformation, amplified through social media without verification. The use of AI-generated visuals further adds to the confusion, making the content appear authentic at first glance.

Introduction

Regulatory agencies throughout Europe have stepped up their monitoring of digital communication platforms because of the increased use of artificial intelligence in the digital domain. Messaging services have evolved into more than just messaging systems; they now serve as gateways to AI services, business tools and digital marketplaces. In light of this evolution, the competition authority in Italy has taken action against Meta Platforms and ordered it to cease activities on WhatsApp that are deemed to restrict the ability of other companies to offer AI-based chatbots. This action highlights concerns about gatekeeping power, market foreclosure and the suppression of innovation. The proceeding also raises questions about how competition law applies to dominant digital platforms that leverage their ecosystems to promote their own AI products to the detriment of competitors.

Background of the Case

In December 2025, Italy’s competition authority, the Autorità Garante della Concorrenza e del Mercato (AGCM), ordered Meta Platforms to suspend certain contractual terms governing WhatsApp. These terms allegedly prevented or restricted the operation of third-party AI chatbots on WhatsApp’s platform.

The decision was issued as an interim measure during an ongoing antitrust investigation. According to the AGCM, the disputed terms risked excluding competing AI chatbot providers from accessing a critical digital channel, thereby distorting competition and harming consumer choice.

Why WhatsApp Matters as a Digital Gateway

WhatsApp occupies a unique position in the European digital landscape. It has hundreds of millions of users across the European Union, which makes it an integral part of the infrastructure for communication between consumers and companies, as well as between companies and their service providers. AI chatbot developers depend heavily on WhatsApp because it lets them connect directly with consumers in real time, which is critical to their commercial success.

In the Italian regulator's view, a corporation that controls access to such a popular communication platform has tremendous influence over innovation in that market, because it effectively acts as a gatekeeper between the company creating an innovative service and the consumer using it. If Meta is permitted to block competing AI chatbot developers while promoting its own offerings, those developers will likely be unable to market and distribute their innovative products at sufficient scale to remain competitive.

Alleged Abuse of Dominant Position

Under EU and national competition law, companies holding a dominant market position bear a special responsibility not to distort competition. The AGCM’s concern is that Meta may have abused WhatsApp’s dominance by:

- Restricting market access for rival AI chatbot providers

- Limiting technical development by preventing interoperability

- Strengthening Meta’s own AI ecosystem at the expense of competitors

Such conduct, if proven, could amount to an abuse under Article 102 of the Treaty on the Functioning of the European Union (TFEU). Importantly, the authority emphasised that even contractual terms—rather than explicit bans—can have exclusionary effects when imposed by a dominant platform.

Meta’s Response and Infrastructure Argument

Meta has publicly criticised the Italian ruling as “fundamentally flawed,” arguing that third-party AI chatbots place a major burden on its infrastructure and put the performance, safety, and user experience of WhatsApp at risk.

Although the protection of infrastructure is a valid concern, competition authorities commonly examine whether the justifications for such restrictions are proportionate and non-discriminatory. One of the principal legal issues is whether Meta applied the restrictions uniformly or imposed them selectively in favour of its own AI services. If the restrictions are applied asymmetrically, they may be viewed as anti-competitive rather than as legitimate technical safeguards.

Link to the EU’s Digital Markets Framework

The Italian case fits into a wider EU effort to regulate the conduct of large technology companies through prior (ex-ante) regulation, as set out in the Digital Markets Act (DMA). The DMA imposes obligations on designated gatekeepers to ensure equitable access, interoperability and non-discriminatory treatment for third parties.

While the Italian case has been brought under Italian competition law, its philosophy is consistent with that of the DMA: dominant digital platforms should not use their control over core products and services to prevent other companies from innovating. National regulators in the EU are increasingly willing to act swiftly through interim measures rather than wait years for final decisions.

Implications for AI Developers and Platforms

The Italian order signals to developers of AI-based chatbots that regulators treat competitive access to messaging services as an important issue. It also warns large incumbents that integrating AI into their established messaging platforms will not shield them from competition law.

Additionally, the case reflects a growing consensus among regulatory agencies about the role of competition in the development of AI. If a handful of large companies are allowed to control both the infrastructure and the AI technology operating on top of it, the likely result is closed ecosystems that eliminate or greatly reduce technological diversity.

Conclusion

Italy's move against Meta highlights a significant intersection between competition law and artificial intelligence. By targeting WhatsApp's restrictive terms, the Italian antitrust authority has reinforced the principle that digital gatekeepers cannot use contractual terms to shut out competitors. As AI becomes a larger part of day-to-day digital services, regulatory bodies will likely continue to increase their scrutiny of platform behaviour. The outcome of this investigation will affect not just Meta's AI strategy; it will also set a baseline for how European regulators balance innovation, competition and consumer choice in an increasingly AI-driven digital marketplace.

References

- https://www.reuters.com/sustainability/boards-policy-regulation/italy-watchdog-orders-meta-halt-whatsapp-terms-barring-rival-ai-chatbots-2025-12-24/

- https://techcrunch.com/2025/12/24/italy-tells-meta-to-suspend-its-policy-that-bans-rival-ai-chatbots-from-whatsapp/

- https://www.communicationstoday.co.in/italy-watchdog-orders-meta-to-halt-whatsapp-terms-barring-rival-ai-chatbots/

- https://www.techinasia.com/news/italy-watchdog-orders-meta-halt-whatsapp-terms-ai-bot