#FactCheck - Old Dubai Flood Videos Falsely Shared as Recent Storm Footage

Executive Summary

Amid reports of heavy rainfall and flooding in several cities of the United Arab Emirates, a video is being widely circulated on social media claiming to show recent scenes from Dubai. The clip allegedly depicts severe waterlogging at Dubai Airport and inside shopping malls, with users linking it to a “recent storm.” According to research by CyberPeace, the viral footage is not recent. The video is a compilation of three different clips stitched together, and it dates back to 2024, when Dubai experienced unprecedented flooding following heavy rains.

Claim

The misleading post was shared by an X (formerly Twitter) user named ‘Ruksar Khan’ on March 28, 2026, with a caption suggesting that Dubai had been submerged after just one day of rain. The post attempted to sensationalize the situation by portraying the visuals as current.

Fact Check:

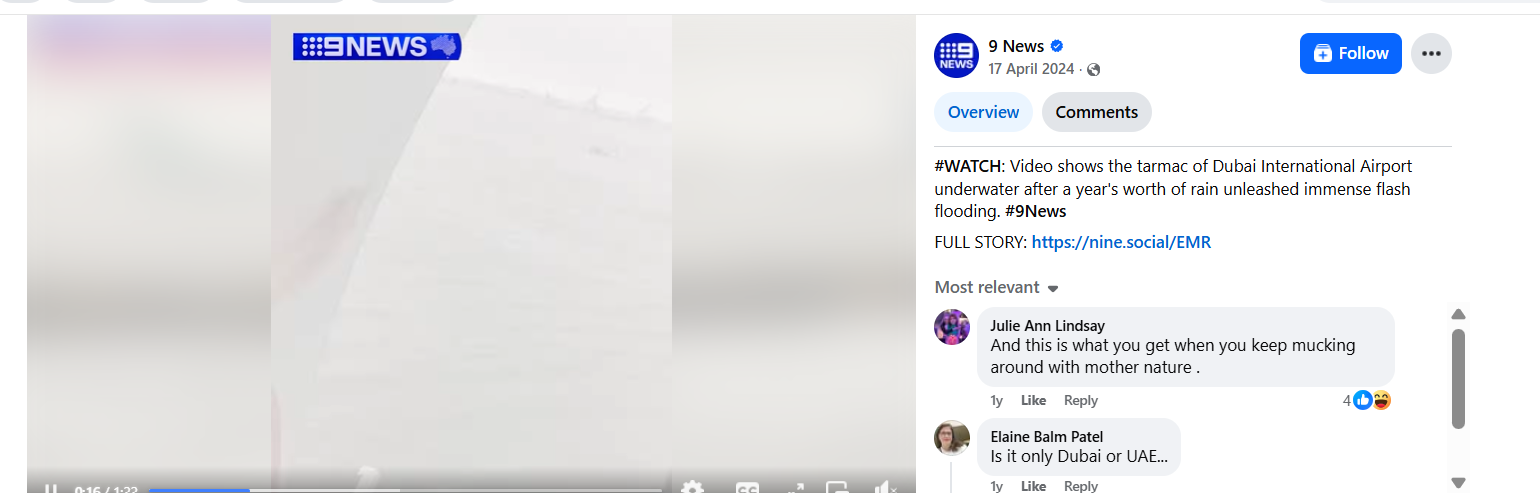

To verify the claim, keyframes from the viral video were extracted using the InVID tool and analyzed through reverse image search. One of the clips was traced to a Facebook post by “9 News,” uploaded on April 17, 2024. The video showed waterlogged runways at Dubai International Airport following intense rainfall and flooding.

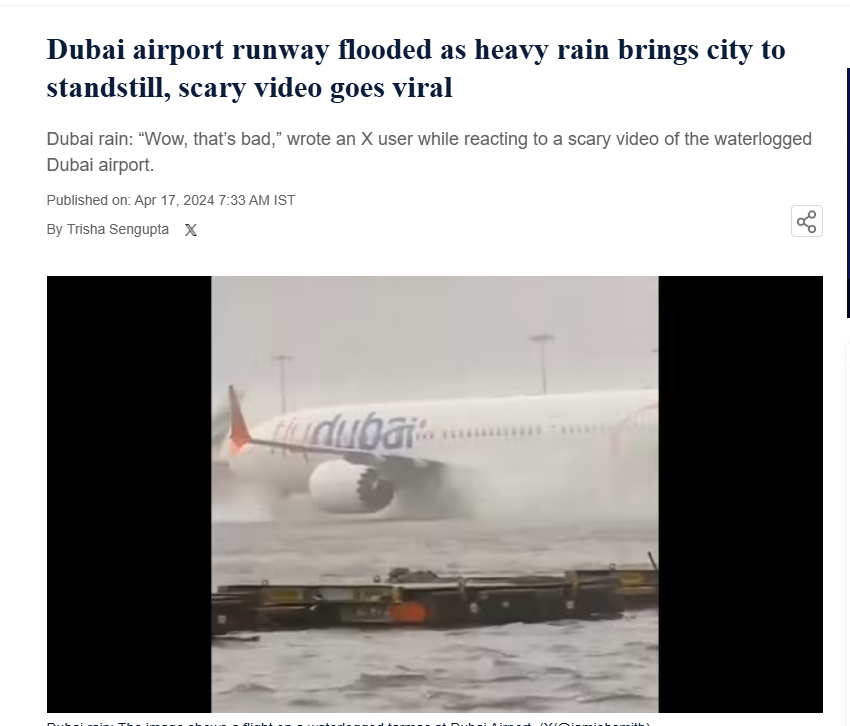

Further verification led to a report published by Hindustan Times on April 17, 2024, which featured similar visuals and confirmed that the footage was from the floods that hit Dubai in 2024.
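Neither InVID nor reverse image search engines such as Google Lens document their matching internals, so the sketch below is not how those tools work. It illustrates one simple, widely used building block for spotting near-duplicate frames, an average hash (aHash): each frame is reduced to a 64-bit fingerprint, and two frames are treated as likely matches when their fingerprints differ in only a few bits. It assumes frames have already been downscaled to 8x8 grayscale grids, and the `max_distance` threshold is a hypothetical value chosen for illustration.

```python
from typing import List

# Assumption: each frame has already been downscaled to an 8x8 grid of
# grayscale values (0-255); real tools handle resizing and colour conversion.
Grid = List[List[int]]


def average_hash(grid: Grid) -> int:
    """Pack one bit per pixel: 1 if the pixel is brighter than the frame's mean."""
    pixels = [p for row in grid for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of bit positions in which two hashes differ."""
    return bin(a ^ b).count("1")


def likely_same_frame(a: Grid, b: Grid, max_distance: int = 10) -> bool:
    # max_distance is a hypothetical threshold for illustration, not a standard.
    return hamming(average_hash(a), average_hash(b)) <= max_distance


# Usage: a synthetic gradient frame, a brightened copy and an inverted copy.
frame = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]
brighter = [[min(255, p + 20) for p in row] for row in frame]
inverted = [[255 - p for p in row] for row in frame]
print(likely_same_frame(frame, brighter))  # True
print(likely_same_frame(frame, inverted))  # False
```

A brightened copy keeps the same fingerprint while an inverted copy does not. In practice a match like this is only a lead; the date of the earliest upload still has to be confirmed against sources such as the 9 News and Hindustan Times posts.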

Conclusion:

The viral claim suggesting that the video shows recent flooding in Dubai is false. The footage is nearly two years old and originates from the 2024 floods in Dubai. It is now being reshared with misleading claims to create confusion around current weather events.

Related Blogs

Executive Summary:

This report examines a recent cyberthreat in the form of a fake message that claims to come from India Post, one of the country’s leading postal services. The scam tells recipients that a delivery failed because of incomplete address information and asks them to click a link (http://iydc[.]in/u/5c0c5939f) to confirm their address. Victims are led through a deceptive process that puts their personal data and device security at risk. Users are strongly advised to exercise caution and not to click on suspicious links or messages.

False Claim:

The fraudsters send an SMS claiming that an India Post package could not be delivered due to incomplete address information. They set a 12-hour deadline for recipients to confirm their address by clicking the given link (http://iydc[.]in/u/5c0c5939f). This misleading message aims to trick people into disclosing personal information or compromising the security of their device.

The Deceptive Journey:

- First Contact: An SMS claiming to be from India Post informs users that their package could not be delivered because of incomplete address information.

- Urgent Request: Recipients are then asked to click the given link (http://iydc[.]in/u/5c0c5939f) to update their address. The message creates panic by giving them only 12 hours to confirm their address through the suspicious link.

- Click the Link: Curious or worried recipients click the link.

- User Data: Clicking the link is suspected to launch remote scripts in the background that collect users’ personal information.

- Device Compromise: In some cases, the website may also try to infect the device with malware or exploit security flaws.
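The warning signs in this journey can be expressed as simple checks. The following is a minimal sketch, not a production filter: the keyword patterns, the `red_flags` helper and the one-entry allowlist of official domains (assumed here to be `indiapost.gov.in`) are hypothetical choices for illustration. It also re-fangs the `[.]` notation used in this report so that the link’s host can be compared with the allowlist.

```python
import re
from typing import List
from urllib.parse import urlparse

# Assumption: allowlist of official domains for the impersonated brand.
# Verify official domains independently before relying on such a list.
OFFICIAL_DOMAINS = {"indiapost.gov.in"}

URL_RE = re.compile(r"https?://\S+")
DEADLINE_RE = re.compile(r"\b\d{1,2}\s*(?:hours?|hrs?)\b", re.IGNORECASE)
PRETEXT_RE = re.compile(r"incomplete address|could not be delivered", re.IGNORECASE)


def refang(text: str) -> str:
    """Undo '[.]' defanging so URLs in the text can be parsed."""
    return text.replace("[.]", ".")


def red_flags(sms: str) -> List[str]:
    """Return warning signs found in an SMS, using hypothetical keyword rules."""
    text = refang(sms)
    flags = []
    if "india post" in text.lower():
        flags.append("claims to be India Post")
    if PRETEXT_RE.search(text):
        flags.append("failed-delivery pretext")
    if DEADLINE_RE.search(text):
        flags.append("short deadline (urgency)")
    for url in URL_RE.findall(text):
        host = (urlparse(url).hostname or "").lower()
        is_official = any(host == d or host.endswith("." + d) for d in OFFICIAL_DOMAINS)
        if not is_official:
            flags.append("link to unofficial domain: " + host)
    return flags


sms = ("India Post: your package could not be delivered due to incomplete "
       "address information. Confirm within 12 hours: http://iydc[.]in/u/5c0c5939f")
for flag in red_flags(sms):
    print("-", flag)
```

Keyword rules like these are easy to evade by rewording, so they complement, rather than replace, checking the sender and the link’s domain against official sources.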

The Analysis:

- Phishing Technique: The scam lures victims with a phishing technique, posing as the India Post team and telling recipients to click a suspicious link to confirm their address because the package cannot be delivered due to an incomplete address.

- Fake Website Creation: Victims are redirected to a fraudulent website when they click on the link (http://iydc[.]in/u/5c0c5939f) to update their address.

- Background Scripts: Scripts performing malicious operations, such as stealing visitor information and distributing viruses, are suspected to run in the background. These scripts can exploit vulnerabilities in the user’s device or browser to extract more information or compromise system security.

- Risk of Data Theft: This type of fraud can lead to data theft because it creates false urgency to lure victims into giving up their personal details. Threat actors can later use this data for illegal purposes such as financial fraud, identity theft and other crimes.

- Domain Analysis: The iydc.in domain was registered on April 5, 2024, only a short time before this analysis. Domains set up for fraud are typically registered shortly before they are used in criminal activity, so a very recent creation date is a warning sign.

- Registrar: The domain is registered through GoDaddy.com, LLC, a reputable registrar.

- DNS: The domain uses Cloudflare’s name servers, chase.ns.cloudflare.com and delilah.ns.cloudflare.com, for domain name resolution.

- Registrant: Apart from the fact that the registrant is in Thailand, little is known about them, probably because WHOIS privacy redaction is in use.

- Domain Name: iydc.in

- Registry Domain ID: DB3669B210FB24236BF5CF33E4FEA57E9-IN

- Registrar URL: www.godaddy.com

- Registrar: GoDaddy.com, LLC

- Registrar IANA ID: 146

- Updated Date: 2024-04-10T02:37:06Z

- Creation Date: 2024-04-05T02:37:05Z (very recently registered)

- Registry Expiry Date: 2025-04-05T02:37:05Z

- Registrant State/Province: errww

- Registrant Country: TH (Thailand)

- Name Server: delilah.ns.cloudflare.com

- Name Server: chase.ns.cloudflare.com

Note: Cybercriminals used Cloudflare technology to mask the actual IP address of the fraudulent website.
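The domain-age reasoning above can be reproduced from the WHOIS fields. This minimal sketch parses “Key: Value” lines (a simplification, since real WHOIS output varies by registry), computes how many days old the domain was on a given date, and checks whether all name servers are Cloudflare’s. The `as_of` date and the 30-day “newly registered” threshold are hypothetical, because this report does not state exactly when the analysis was carried out.

```python
from datetime import datetime, timezone

# Subset of the WHOIS fields listed above.
WHOIS_TEXT = """\
Domain Name: iydc.in
Registrar: GoDaddy.com, LLC
Updated Date: 2024-04-10T02:37:06Z
Creation Date: 2024-04-05T02:37:05Z
Registry Expiry Date: 2025-04-05T02:37:05Z
Registrant Country: TH
Name Server: delilah.ns.cloudflare.com
Name Server: chase.ns.cloudflare.com
"""


def parse_whois(text: str) -> dict:
    """Map each 'Key: Value' line to a list of values (Name Server repeats)."""
    record = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")  # split at the first colon only
        if sep:
            record.setdefault(key.strip(), []).append(value.strip())
    return record


def parse_timestamp(value: str) -> datetime:
    """Parse a UTC timestamp such as 2024-04-05T02:37:05Z."""
    return datetime.strptime(value, "%Y-%m-%dT%H:%M:%SZ").replace(tzinfo=timezone.utc)


def domain_age_days(record: dict, as_of: datetime) -> int:
    """Whole days between the Creation Date and as_of."""
    created = parse_timestamp(record["Creation Date"][0])
    return (as_of - created).days


def is_newly_registered(record: dict, as_of: datetime, threshold_days: int = 30) -> bool:
    # threshold_days is a hypothetical cut-off, not an industry standard.
    return domain_age_days(record, as_of) < threshold_days


def uses_cloudflare_dns(record: dict) -> bool:
    """True only if at least one name server is listed and all are Cloudflare's."""
    servers = record.get("Name Server", [])
    return bool(servers) and all(s.lower().endswith(".ns.cloudflare.com") for s in servers)


record = parse_whois(WHOIS_TEXT)
as_of = datetime(2024, 4, 15, tzinfo=timezone.utc)  # hypothetical analysis date
print(domain_age_days(record, as_of))      # 9
print(is_newly_registered(record, as_of))  # True
print(uses_cloudflare_dns(record))         # True
```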

CyberPeace Advisory:

- Do not open messages received on social platforms if they seem suspicious or unsolicited. Your own discretion is your first line of defence.

- Falling prey to such scams could compromise your entire system, potentially granting unauthorized access to your microphone, camera, text messages, contacts, pictures, videos, banking applications, and more. Keep your cyber world safe against any attacks.

- Never reveal sensitive data, such as your login credentials and banking details, to entities you have not verified as trustworthy.

- Before sharing any content or clicking on links within messages, always verify the legitimacy of the source. Protect not only yourself but also those in your digital circle.

- Verify the authenticity of alluring offers before taking any action.

Conclusion:

The India Post delivery scam is an example of fraud that exploits the name of a trusted postal service to deceive people. The campaign relies on deceptive texts and fake websites to trick recipients into handing over personal information, which can later be used for identity theft, financial fraud or device compromise. Technical analysis reveals the sophisticated tactics the fraudsters use, including phishing, data-harvesting scripts and freshly registered fraudulent domains with little registration history. When you encounter such messages, verify their authenticity through official sources and take proactive steps to protect both your personal information and your devices from cyber threats. Staying informed and following cybersecurity best practices reduces the risk of falling for online scams.

Introduction

The Telecommunications Act, 2023 was passed by Parliament in December 2023, received the President's assent and was published in the official Gazette on December 24, 2023. The Act is divided into 11 chapters, 62 sections and 3 schedules. Sections 1, 2, 10-30, 42-44, 46, 47, 50-58, 61 and 62 came into force on June 26, 2024.

On July 4, 2024, the Centre issued a Gazette notification bringing sections 6-8, 48 and 59(b) into force from July 5, 2024. The Act aims to amend and consolidate the laws relating to telecommunication services, telecommunication networks and spectrum assignment, and it repeals older colonial-era legislation such as the Indian Telegraph Act, 1885 and the Indian Wireless Telegraphy Act, 1933. The new law was enacted in response to technological advances in the telecom sector.
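The staggered commencement is easier to track as a small lookup table. The sketch below encodes the two commencement dates exactly as reported in this article and expands the section ranges; the helper names are hypothetical, and any real compliance check should rely on the Gazette notifications themselves. Clause-level entries such as 59(b) are kept as separate labels, so section 59 as a whole is not treated as in force.

```python
from datetime import date
from typing import Dict, List

# Commencement dates and provisions as reported in this article.
COMMENCEMENT: Dict[date, str] = {
    date(2024, 6, 26): "1, 2, 10-30, 42-44, 46, 47, 50-58, 61, 62",
    date(2024, 7, 5): "6-8, 48, 59(b)",
}


def expand(spec: str) -> List[str]:
    """Expand a spec like '10-30, 48, 59(b)' into individual provision labels."""
    labels = []
    for part in (p.strip() for p in spec.split(",")):
        if "-" in part:
            first, last = part.split("-")
            labels.extend(str(n) for n in range(int(first), int(last) + 1))
        else:
            labels.append(part)  # single section or clause, e.g. "48" or "59(b)"
    return labels


def in_force(provision: str, on: date) -> bool:
    """True if the provision took effect on or before the given date."""
    return any(provision in expand(spec)
               for start, spec in COMMENCEMENT.items() if start <= on)


print(len(expand(COMMENCEMENT[date(2024, 6, 26)])))  # 39
print(in_force("22", date(2024, 6, 26)))  # True
print(in_force("48", date(2024, 7, 1)))   # False
print(in_force("59", date(2024, 7, 5)))   # False: only clause 59(b) was notified
```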

On Thursday, 18 July 2024, the telecom minister, while launching the theme of the India Mobile Congress (IMC), announced that all rules and provisions under the new Telecom Act would be notified within the next 180 days, making the Act fully operational.

Important definitions under Telecommunications Act, 2023

- Authorisation: Under Section 2(d), “authorisation” means a permission, by whatever name called, granted under this Act for (i) providing telecommunication services; (ii) establishing, operating, maintaining or expanding telecommunication networks; or (iii) possessing radio equipment.

- Telecommunication: Under Section 2(p), “telecommunication” means transmission, emission or reception of any messages, by wire, radio, optical or other electro-magnetic systems, whether or not such messages have been subjected to rearrangement, computation or other processes by any means in the course of their transmission, emission or reception.

- Telecommunication Network: Under Section 2(s), “telecommunication network” means a system or series of systems of telecommunication equipment or infrastructure, including terrestrial or satellite networks or submarine networks, or a combination of such networks, used or intended to be used for providing telecommunication services, but does not include such telecommunication equipment as notified by the Central Government.

- Telecommunication Service: Under Section 2(t), “telecommunication service” means any service for telecommunication.

Measures for Cyber Security for the Telecommunication Network/Services

Section 22 of the Telecommunications Act, 2023 deals with the protection of telecommunication networks and telecommunication services. It provides that the Central Government may make rules to ensure the cybersecurity of telecommunication networks and telecommunication services. Such measures may include the collection, analysis and dissemination of traffic data that is generated, transmitted, received or stored in telecommunication networks. ‘Traffic data’ can include any data generated, transmitted, received or stored in telecommunication networks, such as the type, duration or time of a telecommunication.

Section 22 further empowers the central government to declare any telecommunication network, or part thereof, as Critical Telecommunication Infrastructure. It may further provide for standards, security practices, upgradation requirements and procedures to be implemented for such Critical Telecommunication Infrastructure.

CyberPeace Policy Wing Outlook:

The Telecommunications Act, 2023 marks a significant shift in the telecom sector, providing a robust regulatory framework, encouraging research and development, promoting infrastructure development and introducing consumer-protection measures. The Central Government is empowered to authorize individuals for (a) providing telecommunication services, (b) establishing, operating, maintaining or expanding telecommunication networks, or (c) possessing radio equipment. Section 48 of the Act provides that no person shall possess or use any equipment that blocks telecommunication unless permitted by the Central Government.

The Central Government will protect users through measures such as requiring users’ prior consent to receive specified messages, preparing and maintaining a “Do Not Disturb” register so that users do not receive specified messages or classes of specified messages without prior consent, and providing a mechanism for users to report any malware or specified messages they receive. Authorised entities providing telecommunication services will also be required to set up an online platform for users’ grievances relating to telecommunication services.
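The consent and “Do Not Disturb” measures can be pictured as a delivery check. Because the detailed rules were still to be notified when this article was written, the sketch below is a hypothetical model, not the mechanism the rules prescribe: a subscriber lists the message classes blocked through the DND register, and a message in a blocked class is delivered only if the subscriber has given that sender prior consent. The class names, sender IDs and the `may_deliver` helper are illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class Subscriber:
    # Hypothetical model of one user's entries; the actual rules may differ.
    number: str
    dnd_blocked_classes: Set[str] = field(default_factory=set)  # opted out via the DND register
    consents: Set[str] = field(default_factory=set)             # senders given prior consent


def may_deliver(subscriber: Subscriber, sender: str, message_class: str) -> bool:
    """Deliver a message in a DND-blocked class only if the sender has prior consent."""
    if message_class not in subscriber.dnd_blocked_classes:
        return True
    return sender in subscriber.consents


user = Subscriber("hypothetical-number", dnd_blocked_classes={"promotional"}, consents={"BANK01"})
print(may_deliver(user, "SHOP42", "promotional"))    # False: blocked class, no consent
print(may_deliver(user, "BANK01", "promotional"))    # True: prior consent given
print(may_deliver(user, "SHOP42", "transactional"))  # True: class not blocked
```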

In certain limited circumstances, such as national security, disaster management and public safety, the Act empowers the Government to take temporary possession of telecommunication services or networks from an authorised entity and to direct the interception or disclosure of messages, with the details to be specified through rules. This gives the government additional powers in emergencies to ensure security and public order. However, these powers must be balanced with appropriate safeguards that protect individual privacy rights and prevent arbitrary action.

To strengthen cybersecurity in the telecommunication sector, the Act empowers the government to prescribe standards for the cybersecurity of telecommunication services and telecommunication networks, and for encryption and data processing in telecommunication.

The Act also supports research and development and pilot projects through the Digital Bharat Nidhi, and it adopts a “digital by design” approach by introducing online dispute resolution and other frameworks. Overall, the government’s approach is noteworthy: it recognises the need to replace colonial-era legislation in light of technological advances and to keep pace with the digital revolution in the telecommunication sector.

References:

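- The Telecommunications Act, 2023: https://acrobat.adobe.com/id/urn:aaid:sc:AP:88cb04ff-2cce-4663-ad41-88aafc81a416

- Press Information Bureau, press release (PRID 2031057): https://pib.gov.in/PressReleasePage.aspx?PRID=2031057

- Press Information Bureau, press release (PRID 2027941): https://pib.gov.in/PressReleaseIframePage.aspx?PRID=2027941

- The Economic Times, report on the new telecom act being notified within 180 days: https://economictimes.indiatimes.com/industry/telecom/telecom-news/new-telecom-act-will-be-notified-in-180-days-bsnl-4g-rollout-is-monitored-on-a-daily-basis-scindia/articleshow/111851845.cms?from=mdr

- AZB & Partners, Update: Staggered Enforcement of Telecommunications Act, 2023: https://www.azbpartners.com/wp-content/uploads/2024/06/Update-Staggered-Enforcement-of-Telecommunications-Act-2023.pdf

- ETTelecom, Analysing the impact of Telecommunications Act, 2023 on Digital India Mission: https://telecom.economictimes.indiatimes.com/blog/analysing-the-impact-of-telecommunications-act-2023-on-digital-india-mission/111828226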

Executive Summary:

Assembly elections are underway in several Indian states, including West Bengal, Assam, Kerala, Tamil Nadu, and Puducherry. While voting has already taken place in Assam, Kerala, and Puducherry, polling is still pending in West Bengal. In view of the elections, central security forces have been deployed across West Bengal. Amid this, a video showing a group of people pelting stones at a security vehicle is being widely shared on social media. Some users claim that the incident took place in West Bengal and allege that Muslims attacked an army vehicle. However, research by CyberPeace found the claim to be false. The viral video is from Pakistan and has no connection to West Bengal.

Claim

A social media user shared the video on April 5, 2026, claiming that an army vehicle was attacked in West Bengal.


Fact Check

To verify the claim, we extracted keyframes from the viral video and conducted a reverse image search using Google Lens. This led us to a video posted on a Facebook page on October 13, 2025. The caption of that post indicated that the video was from Lahore, showing clashes between members of Tehreek-e-Labbaik and the police.

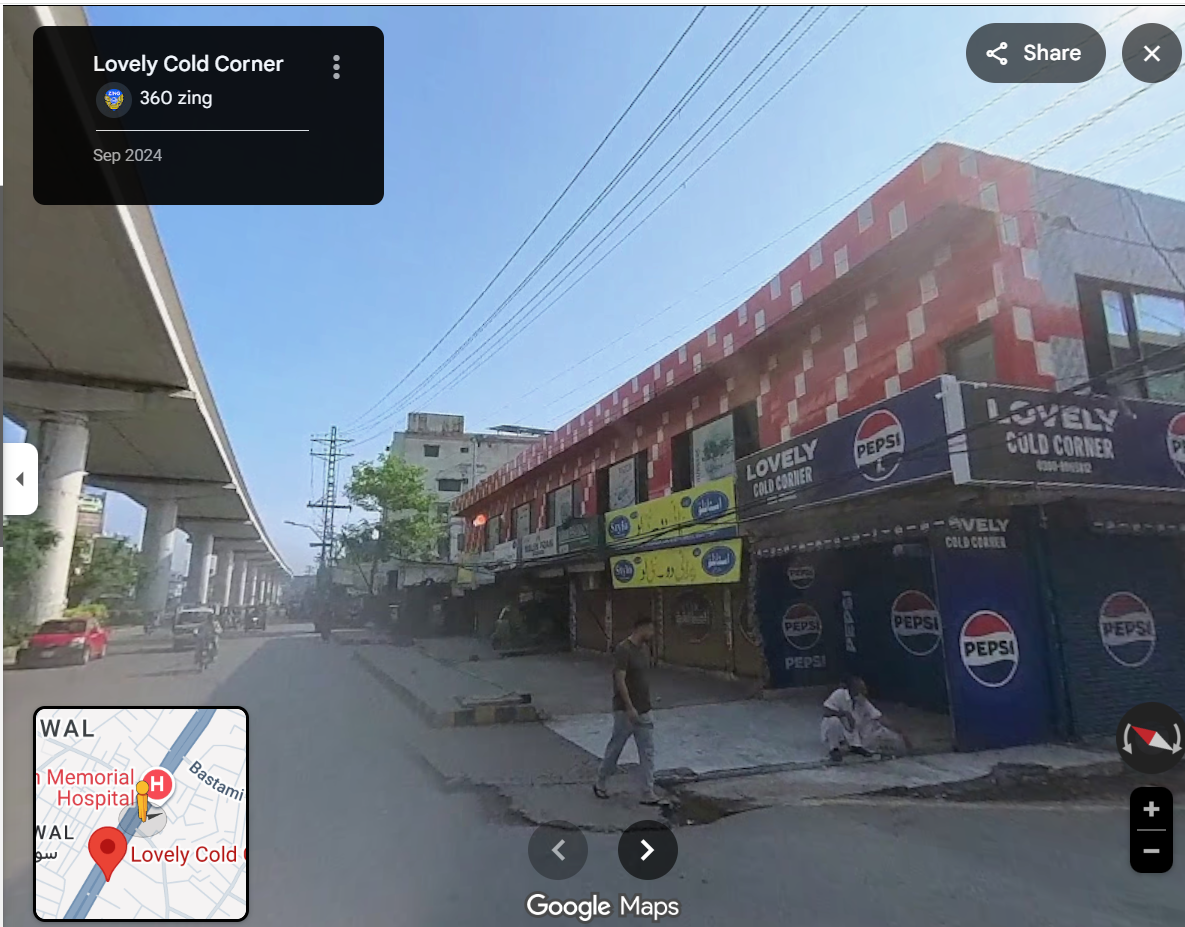

Further clues in the video also pointed to Pakistan. A shop sign reading “Lovely Drink Corner” is visible in the footage. A Google search confirmed that this establishment is located in Lahore, Pakistan.

Conclusion

The viral claim is misleading. Although central forces have been deployed in West Bengal for the ongoing elections, the video showing stone-pelting on a security vehicle is not from the state. It is an old video from Lahore, Pakistan, and is being falsely shared with a communal angle to mislead users.