#FactCheck - Old Video of Khamenei Manipulated With AI Voice, Viral Claim Misleading

Executive Summary

Claims are circulating that Iran’s Supreme Leader Ayatollah Ali Khamenei was killed in a major attack allegedly carried out by Israel and the United States. Amid these claims, a video is being widely shared on social media in which Khamenei can be heard saying, “Beware of fake news, I am alive.” Research conducted by CyberPeace has found the viral claim to be false. Our research revealed that the video being shared is old and that Khamenei’s voice has been altered using artificial intelligence to support a misleading narrative.

Claim

On March 1, 2026, an Instagram user shared the viral video in which Ayatollah Ali Khamenei is heard saying, “Beware of fake news, I am alive.” The link to the post and its archived version are provided above along with a screenshot.

Fact Check:

To verify the authenticity of the claim, we extracted key frames from the viral video and conducted a reverse image search using Google Lens. During the research, we found the same video on the YouTube channel of Sky News Australia, published on June 19, 2025. In the approximately 43-minute-long video, the portion used in the viral clip appears around the 10-minute mark.

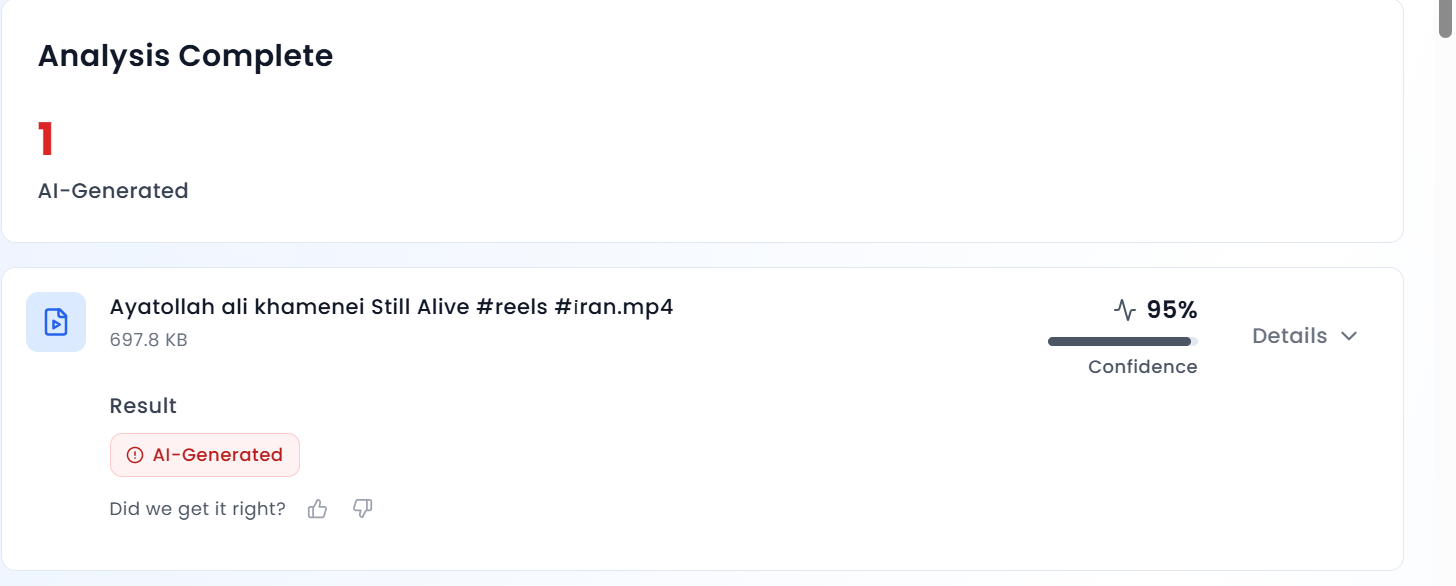

According to Sky News Australia’s report, Iran’s Supreme Leader Ayatollah Ali Khamenei had rejected US President Donald Trump’s demand for unconditional surrender. The Ayatollah regime also warned that any American military intervention would be accompanied by “irreparable damage.” Upon closely listening to the viral clip, we noticed that Khamenei’s voice sounded robotic, raising suspicion that it may have been AI-generated. We then analyzed the video using the AI detection tool AURGIN AI. The results indicated that the viral clip had been generated using artificial intelligence.

Conclusion

Our research establishes that the viral video is old and has been digitally manipulated. Ayatollah Ali Khamenei’s voice has been altered using artificial intelligence and the clip is being shared with a misleading claim.

Related Blogs

Executive Summary:

A viral post circulating on various social media platforms claims that Reliance Jio is offering a ₹700 Holi gift to its users, accompanied by a link for individuals to claim the offer. The post has gained significant traction, with many users engaging with it in good faith, believing it to be a legitimate promotional offer. However, after careful investigation, we confirmed that this post is a phishing scam designed to steal personal and financial information from unsuspecting users. This report examines the facts surrounding the viral claim, confirms its fraudulent nature, and provides recommendations to minimize the risk of falling victim to such scams.

Claim:

Reliance Jio is offering a ₹700 reward as part of a Holi promotional campaign, accessible through a shared link.

Fact Check:

Upon review, we verified that this claim is misleading. Reliance Jio has not announced any promotional offer for Holi at this time. The link being forwarded is a phishing lure intended to steal users’ personal and financial details. There are no reports of this offer on Jio’s official website or verified social media accounts. The URL included in the message does not belong to the official Jio domain, indicating a fake website. The website asks visitors for personal information, which could then be misused for cybercrime. Additionally, we checked the link with the ScamAdviser website, which flagged it as suspicious and unsafe.
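The domain check described above can be sketched in a few lines of Python. This is only an illustration, not the procedure used in the fact check: it assumes `jio.com` is the official domain, the function name `is_official_host` is hypothetical, and the sample URLs are invented. A passing check does not prove a link is safe; a failing one is simply a strong warning sign.

```python
from urllib.parse import urlsplit

# Assumption for illustration: "jio.com" is the brand's official domain.
OFFICIAL_DOMAINS = {"jio.com"}


def is_official_host(url: str, official_domains=OFFICIAL_DOMAINS) -> bool:
    """Return True only if the URL's host is an official domain or a subdomain of one."""
    # urlsplit needs a scheme ("https://...") to recognise the host part;
    # without one, hostname is None and the check fails closed.
    host = (urlsplit(url).hostname or "").lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in official_domains)


if __name__ == "__main__":
    samples = [
        "https://www.jio.com/offers",               # official host
        "https://jio.com.holi-gift.example/claim",  # brand name used as a subdomain of another site
        "https://www.jio.com@prize.example/claim",  # brand name hidden in the userinfo part
        "https://notjio.com/holi",                  # look-alike domain
    ]
    for url in samples:
        print(url, "->", "official" if is_official_host(url) else "NOT official")
```

Comparing the parsed host, rather than searching the raw string for "jio", is the design choice that matters here: phishing links often contain the brand name somewhere in the URL while pointing to a different site.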

Conclusion:

The viral post claiming that Reliance Jio is offering a ₹700 Holi gift is a phishing scam. There is no legitimate offer from Jio, and the link provided leads to a fraudulent website designed to steal personal and financial information. Users are advised not to click on the link and to report any suspicious content. Always verify promotions through official channels to protect personal data from cybercriminal activities.

- Claim: Users can claim ₹700 by participating in Jio's Holi offer.

- Claimed On: Social Media

- Fact Check: False and Misleading

Introduction

Social media has become integral to our lives and livelihoods in today’s digital world. Influencers are now powerful figures who shape trends, views, and consumer behaviour. Because of their significant internet presence, they have become targets for bad actors aiming to abuse their fame. Unfortunately, account hacking has become increasingly frequent, with significant ramifications for influencers and their followers. The rise of social media platforms in recent years has paved the way for influencer culture. Influencers exert power over their followers’ ideas, lifestyle choices, and purchase decisions. Influencers and brands frequently collaborate to make use of that reach, resulting in a mutually beneficial environment. As a result, the value of influencer accounts has risen dramatically, attracting the attention of hackers trying to abuse their potential for financial gain or personal advantage.

Instances of recent attacks

Places of worship

Hackers have targeted renowned temples to carry out their malicious activities. A recent attack hit The Khautji Shyam Temple, a famous religious institution with enormous cultural and spiritual value for its adherents. It serves as a place of worship and hosts community events and numerous religious activities. As technology has reached all sectors of life, the temple’s online presence has grown, giving worshippers access to information, virtual darshans (holy viewings), and interactive forums. Unfortunately, this digital growth has also left the shrine vulnerable to cyber threats. The hackers compromised the temple’s Facebook page twice in one month, demanding donations and targeting the cheques that devotees had given to the trust. In the second incident, they posted objectionable images on the page, hurting the sentiments of the devotees. The temple committee has filed an FIR under various charges and is also seeking help from the cyber cell.

Social media Influencers

Influencers enjoy a vast online following worldwide, but their presence is limited to the digital space. Hence every video and photo is important to them. In one incident, the YouTube channel of leading news anchor and reporter Barkha Dutt was hacked, and all the posts made from the channel were deleted. The hackers also replaced the channel’s logo with Tesla’s and streamed a live video featuring Elon Musk on the channel. A similar incident was reported by influencer Tanmay Bhatt, who also lost all the content he had posted on his channel. Hackers use the following methods to con social media influencers:

- Social engineering

- Phishing

- Brute Force Attacks

Such attacks can harm an influencer’s reputation, cause financial loss, and erode the trust of viewers and followers, which in turn affects brand collaborations.

Safeguards

Social media influencers need to be very careful about their cyber security, since their presence is primarily online. Influencers on every platform should practise the following safeguards to better protect themselves and their content online.

Secure your accounts

Protecting your accounts with passphrases or strong passwords is the first step. The best strategy for doing this is to create a passphrase, a phrase only you know. We advise choosing a passphrase with at least four words and 15 characters.
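The guideline above (at least four words and 15 characters) is easy to check mechanically. The following is a minimal sketch under stated assumptions: the function names and the tiny word list are hypothetical, words are taken to be separated by spaces, and the character count includes those spaces. A real generator would draw from a list of several thousand words.

```python
import secrets

# Hypothetical word list for illustration only; far too small for real use.
WORDS = ["maple", "river", "candle", "orbit", "velvet", "thunder", "pepper", "lantern"]


def meets_passphrase_guideline(passphrase: str, min_words: int = 4, min_chars: int = 15) -> bool:
    """Check the 'four words, 15 characters' guideline (spaces count as characters)."""
    words = passphrase.split()
    return len(words) >= min_words and len(passphrase) >= min_chars


def generate_passphrase(n_words: int = 4) -> str:
    """Pick words with the cryptographically secure 'secrets' module and join them with spaces."""
    return " ".join(secrets.choice(WORDS) for _ in range(n_words))


if __name__ == "__main__":
    for candidate in ["sunset42", "a b c d", "correct horse battery staple"]:
        print(repr(candidate), "->", meets_passphrase_guideline(candidate))
    print("example:", generate_passphrase())
```

The check says nothing about how guessable the words are, so a phrase built from personal details can pass it and still be weak.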

The second step is to enable multi-factor authentication, which further secures your accounts.

With it enabled, a hacker would have to both guess your password and provide a second authentication factor (such as a face scan or fingerprint) that matches yours.
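To make the "two factors" idea concrete, here is a minimal sketch of a login check that requires both a correct password and a time-based one-time code. The text above mentions biometrics; one-time codes are swapped in here only because they can be shown in a few lines of standard-library Python. The code computation follows the published TOTP scheme (RFC 6238, HMAC-SHA1), while the function names and the `login_allowed` wrapper are hypothetical and not any platform's actual API.

```python
import hashlib
import hmac
import struct


def totp(secret: bytes, unix_time: int, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password per RFC 6238, using HMAC-SHA1."""
    counter = unix_time // step  # number of 30-second steps since the epoch
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation offset (RFC 4226)
    number = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(number % 10 ** digits).zfill(digits)


def login_allowed(password_ok: bool, submitted_code: str, secret: bytes, unix_time: int) -> bool:
    """Hypothetical check: both the password and the current code must match."""
    code_ok = hmac.compare_digest(submitted_code, totp(secret, unix_time))
    return password_ok and code_ok


if __name__ == "__main__":
    shared_secret = b"12345678901234567890"  # test secret from the RFC, never use in practice
    now = 59
    print("current code:", totp(shared_secret, now))
    print("password only:", login_allowed(True, "000000", shared_secret, now))
    print("password + code:", login_allowed(True, totp(shared_secret, now), shared_secret, now))
```

A stolen password alone fails this check, which is the point the paragraph above makes about multi-factor authentication.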

Be careful about who has access

Many social media influencers collaborate with a team to help generate and post content while building their personal brands.

For some influencers, this means relying on team members who write and produce material that the influencers then share themselves. In these situations, the influencer remains the only person with access to the account.

The more people who have access, the more potential weak spots there are, and the more ways a password or account access could fall into the hands of a cybercriminal. Not every staff member will be as cautious about password security as you are.

Stay up-to-date on the threats

What’s the most effective way to combat threats to computer security? Information.

Cybercriminals constantly adapt their methods. It’s crucial to stay informed about these threats and how they can be used against you.

But it’s not just the threats that change. Social media platforms and other service providers are likewise updating their offerings to address these challenges.

Educate yourself to protect yourself. By continuously educating yourself, you can stay one step ahead of the hazards that cybercriminals pose.

Preach cybersecurity

As an influencer, you should promote cyber security, no matter your agenda.

This will encourage your followers to adopt best practices for digital hygiene.

It will also boost reporting numbers and increase public awareness, helping to drive such bad actors out of our cyberspace.

Acknowledge the risks

Turning a blind eye will always hurt safety, as ignorance always causes issues.

Risks should be kept in mind while creating your digital routine and netiquette.

Always inform your followers of existing and potential risks.

Monitor threats

After the acknowledgement, it is essential to monitor threats.

Actively looking out for threats will help you understand criminals’ modus operandi and the vulnerabilities they exploit, so that you can avoid them.

Monitoring threats is also a basic responsibility of every netizen, ensuring that threats are reported as they emerge.

Interpret the data

All cyber nodal agencies release data and trends on cybercrimes. Understand these trends and use them to protect your vulnerabilities.

Data interpretation can lead to an early flagging of threats and issues, thus protecting the cyber ecosystem by and large.

Create risk profiles

All influencers should create risk profiles and backup profiles.

This will also help protect one’s data as it can be stored on different profiles.

Risk profiles and having a private profile are essential to safeguard the basic cyber interests of an influencer.

Conclusion

As we go deeper into the digital age, we see more technologies emerging, but along with them, we see a new generation of cyber threats and challenges. The physical world and cyberspace are now intertwined and interdependent. Practising basic cyber security, hygiene, netiquette, and threat monitoring will go a long way in protecting the online interests of influencers and will encourage their followers to adopt best practices, thus safeguarding the cyber ecosystem at large.

Introduction

As India moves full steam ahead towards a trillion-dollar digital economy, how user data is gathered, processed and safeguarded is under the spotlight. One of the most pervasive but least understood technologies used to gather user data is the cookie. Cookies are used by nearly every website and application to improve functionality, measure usage and customize content. But they also present serious privacy threats, particularly when used without explicit user approval.

In 2023, India passed the Digital Personal Data Protection Act (DPDP) to give strong legal protection to data privacy. Though the Act does not refer to cookies by name, its language leaves no doubt that it covers any technology that gathers or processes personal information, which puts cookie regulation at the centre of digital compliance in India. This blog covers what cookies are, how international legislation such as the GDPR has addressed them, and how India's DPDP will regulate their use.

What Are Cookies and Why Do They Matter?

Cookies are simply small pieces of data that a website stores in the browser. They were originally designed to help websites remember useful information about users, such as your login session or what is in your shopping cart. Netscape initially built them in 1994 to make web surfing more efficient.

Cookies exist in various types. Session cookies are temporary and are deleted when the browser is shut down, whereas persistent cookies are stored on the device and can be used to monitor users over a period of time. First-party cookies are set by the site one is visiting, while third-party cookies come from other domains and are usually used for advertisements or analytics. Special cookies, such as secure cookies, zombie cookies and tracking cookies, differ in intent and danger. Cookies can gather information such as IP addresses, device IDs and browsing history. Because this information is associated with a person, it counts as personal data under the majority of data protection regulations.
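The difference between a session cookie and a persistent cookie comes down to whether the cookie carries an expiry attribute. The sketch below uses Python's standard `http.cookies` module to build one of each; the cookie names and values are invented for illustration, and `is_persistent` is a hypothetical helper, not part of the library.

```python
from http.cookies import Morsel, SimpleCookie


def is_persistent(morsel: Morsel) -> bool:
    """A cookie with Max-Age or Expires outlives the browser session."""
    return bool(morsel["max-age"] or morsel["expires"])


jar = SimpleCookie()

# Session cookie: no expiry attribute, so the browser drops it when it closes.
jar["session_id"] = "abc123"
jar["session_id"]["secure"] = True    # only sent over HTTPS
jar["session_id"]["httponly"] = True  # hidden from page scripts

# Persistent cookie: Max-Age keeps it on the device for 30 days.
jar["theme"] = "dark"
jar["theme"]["max-age"] = 30 * 24 * 60 * 60

if __name__ == "__main__":
    for name, morsel in jar.items():
        kind = "persistent" if is_persistent(morsel) else "session"
        print(f"{name}: {kind} | Set-Cookie: {morsel.OutputString()}")
```

Whether a cookie is first-party or third-party is not an attribute of the cookie itself; it depends on whether the domain that set it matches the site the user is visiting.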

A Brief Overview of the GDPR and Cookie Policy

The GDPR regulates how personal data can be processed in general and does not deal with cookies as such. However, if a cookie collects personal data (like IP addresses or identifiers that can track a person), the GDPR applies to it, because it sets the rules on how that personal data may be processed, what lawful bases are required, and what rights the user has.

The ePrivacy Directive (also called the “Cookie Law”) specifically regulates how cookies and similar technologies can be used. Article 5(3) of the ePrivacy Directive says that storing or accessing information (such as cookies) on a user’s device requires prior, informed consent, unless the cookie is strictly necessary for providing the service requested by the user.

In the seminal Planet49 decision, the Court of Justice of the European Union held that pre-ticked boxes do not represent valid consent. Another prominent enforcement saw Amazon fined €35 million by France's CNIL for using tracking cookies without user consent.

Cookies and India’s Digital Personal Data Protection Act (DPDP), 2023

India's Digital Personal Data Protection Act, 2023 does not refer to cookies specifically, but its provisions necessarily come into play when cookies harvest personal data like user activity, IP addresses, or device data. Under the DPDP Act, personal data is to be processed for legitimate purposes with the individual's consent. The consent has to be free, informed, clear and unambiguous. Individuals have to be informed of what data is collected and how it will be processed. The Act also forbids behavioural monitoring and targeted advertising in the case of children.

The Ministry of Electronics and IT released the Business Requirements Document for Consent Management Systems (BRDCMS) in June 2025. Although it is not binding by law, it provides operational advice on cookie consent. It recommends that websites use cookie banners with "Accept," "Reject," and "Customize" choices. Users must be able to withdraw or change their consent at any moment. Multi-language handling and automatic expiry of cookie preferences are also suggested to suit accessibility and privacy requirements.
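The banner choices, withdrawal and automatic expiry described above can be modelled as a small consent record. This is a minimal sketch under stated assumptions, not a format prescribed by the BRDCMS or the DPDP Act: the category names, the 180-day lifetime and all function names are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import Optional, Set

CATEGORIES = ("necessary", "analytics", "ads")  # example categories, not mandated by any rule
CONSENT_LIFETIME = timedelta(days=180)          # hypothetical expiry window


@dataclass
class ConsentRecord:
    granted: Set[str] = field(default_factory=lambda: {"necessary"})
    recorded_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


def record_choice(choice: str, now: datetime, custom: Optional[Set[str]] = None) -> ConsentRecord:
    """Translate a banner click ('accept', 'reject', 'customize') into a record."""
    if choice == "accept":
        granted = set(CATEGORIES)
    elif choice == "reject":
        granted = {"necessary"}
    elif choice == "customize":
        granted = {"necessary"} | (set(custom or ()) & set(CATEGORIES))
    else:
        raise ValueError(f"unknown choice: {choice!r}")
    return ConsentRecord(granted=granted, recorded_at=now)


def withdraw(record: ConsentRecord, category: str) -> ConsentRecord:
    """Withdraw consent for one optional category at any time."""
    remaining = (record.granted - {category}) | {"necessary"}
    return ConsentRecord(granted=remaining, recorded_at=record.recorded_at)


def active_categories(record: ConsentRecord, now: datetime) -> Set[str]:
    """Once the record expires, fall back to strictly necessary cookies until the user is asked again."""
    if now - record.recorded_at >= CONSENT_LIFETIME:
        return {"necessary"}
    return set(record.granted)


if __name__ == "__main__":
    t0 = datetime(2025, 7, 1, tzinfo=timezone.utc)
    rec = record_choice("customize", t0, {"analytics"})
    print(sorted(active_categories(rec, t0)))
    print(sorted(active_categories(withdraw(rec, "analytics"), t0)))
    print(sorted(active_categories(rec, t0 + timedelta(days=200))))
```

Treating an expired record the same as "reject" means the site errs towards collecting less until the user makes a fresh choice.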

The DPDP Act and the BRDCMS together create a robust user-rights model, even in the absence of a special cookie law.

What Should Indian Websites Do?

For the purposes of staying compliant, Indian websites and online platforms need to act promptly to harmonise their use of cookies with DPDP principles. This begins with a transparent and simple cookie banner providing users with an opportunity to accept or decline non-essential cookies. Consent needs to be meaningful; coercive tactics such as cookie walls must not be employed. Websites need to classify cookies (e.g., necessary, analytics and ads) and describe each category's function in plain terms under the privacy policy. Users must be given the option to modify cookie settings anytime using a Consent Management Platform (CMP). Monitoring children or their behavioural information must be strictly off-limits.
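One way to picture the classification step is a catalogue that maps each cookie to a category and a plain-language purpose, plus a gate that decides which cookies may be set for a given visitor. The sketch below is illustrative only: the cookie names, descriptions and the `is_child` flag are hypothetical, and blocking every non-essential cookie for children is a deliberately conservative assumption rather than a reading of the Act.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical catalogue: cookie name -> (category, plain-language purpose).
COOKIE_CATALOGUE: Dict[str, Tuple[str, str]] = {
    "session_id": ("necessary", "Keeps you signed in during your visit."),
    "visit_stats": ("analytics", "Counts page views so we can measure usage."),
    "ad_profile": ("ads", "Selects advertisements based on your browsing."),
}


def cookies_allowed(consented: Set[str], is_child: bool = False) -> List[str]:
    """Return the cookies that may be set for this visitor."""
    allowed = []
    for name, (category, _purpose) in COOKIE_CATALOGUE.items():
        if category == "necessary":
            allowed.append(name)  # needed to deliver the requested service
        elif is_child:
            continue              # assumption: no optional cookies for children
        elif category in consented:
            allowed.append(name)  # optional cookie with explicit consent
    return allowed


def policy_text() -> str:
    """Render the catalogue as lines suitable for a privacy policy."""
    return "\n".join(f"{name} ({cat}): {purpose}" for name, (cat, purpose) in COOKIE_CATALOGUE.items())


if __name__ == "__main__":
    print(cookies_allowed(set()))
    print(cookies_allowed({"analytics"}))
    print(cookies_allowed({"analytics", "ads"}, is_child=True))
    print(policy_text())
```

Because the site still works with only the necessary cookies, declining the optional ones never locks the visitor out, which is the opposite of a cookie wall.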

These steps are not only about complying with the law; they are also about ethical data stewardship and building user trust.

What Should Users Do?

Cookies need to be understood and controlled by users to maintain online personal privacy. Begin by reading cookie notices thoroughly and declining unnecessary cookies, particularly those associated with tracking or advertising. The majority of browsers today support blocking third-party cookies altogether or deleting them periodically.

It is also advisable to check and adjust privacy settings on websites and mobile applications. Browser add-ons such as ad blockers or privacy extensions can help minimise surveillance. Users should also avoid blindly clicking "accept all" in cookie notices and instead choose "customise" or "reject" for any cookies they do not need.

Finally, keeping abreast of data rights under Indian law, such as the right to withdraw consent or to have data deleted, will enable people to reclaim control over their online presence.

Conclusion

Cookies are a fundamental component of the modern web, but they raise significant concerns about individual privacy. India's DPDP Act, 2023, though not explicitly referring to cookies, contains an effective legal framework that regulates any data collection activity involving personal data, including those facilitated by cookies.

As India continues to make progress towards comprehensive rulemaking and regulation, companies need to implement privacy-first practices today. Users, too, must take an active role in their own digital lives. Collectively, compliance, transparency and awareness can build a more secure and ethical internet ecosystem where privacy is prioritised by design.
