#FactCheck - AI-Generated Image of Abhishek Bachchan and Aishwarya Rai Falsely Linked to Kedarnath Visit

A photo featuring Bollywood actor Abhishek Bachchan and actress Aishwarya Rai is being widely shared on social media. In the image, the Kedarnath Temple is clearly visible in the background. Users are claiming that the couple recently visited the Kedarnath shrine for darshan.

Cyber Peace Foundation’s research found the viral claim to be false. The image of Abhishek Bachchan and Aishwarya Rai is not a real photograph; it is AI-generated and is being misleadingly shared as genuine.

Claim

On January 14, 2026, a user on X (formerly Twitter) shared the viral image with a caption suggesting that all rumours had ended and that the couple had restarted their life together. The post further claimed that both actors were seen smiling after a long time, implying that the image was taken during their visit to Kedarnath Temple.

The post has since been widely circulated on social media platforms.

Fact Check

To verify the claim, we first conducted a keyword search on Google related to Abhishek Bachchan, Aishwarya Rai, and a Kedarnath visit. However, we did not find any credible media reports confirming such a visit.

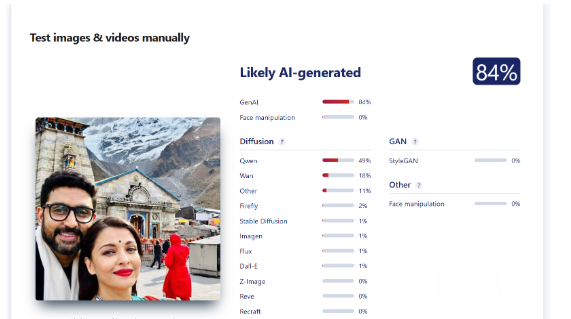

On closer examination, the viral image showed several visual inconsistencies that raised suspicion of it being artificially generated. To confirm this, we scanned the image using the AI detection tool Sightengine. The tool rated the image as 84 percent likely to be AI-generated.

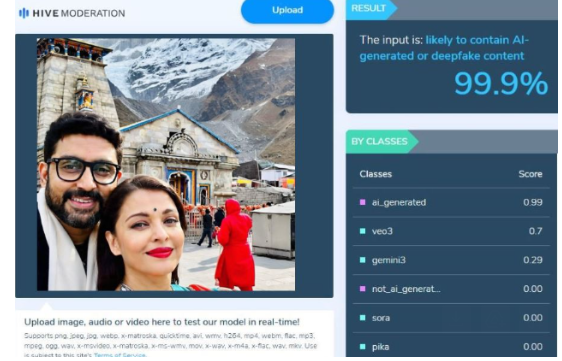

Additionally, we scanned the same image using another AI detection tool, HIVE Moderation. The result was an even stronger indication: the tool rated the image as 99 percent likely to be AI-generated.
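For readers who want to reproduce this kind of cross-check, the sketch below shows one way the two detector readings could be combined into a single cautious verdict. It is an illustration only: the `DetectorScore` class, the `summarize` function, and the 0.8 threshold are assumptions made for this example, not part of either tool or of the method used in this fact check. Only the two scores are taken from the results above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DetectorScore:
    """One detector's estimate that an image is AI-generated (0.0 to 1.0)."""
    tool: str
    score: float


def summarize(scores, threshold=0.8):
    """Return a cautious verdict from several detector scores.

    `threshold` is an illustrative cut-off chosen for this sketch,
    not a value published by any detection tool.
    """
    if not scores:
        raise ValueError("at least one detector score is required")
    for s in scores:
        if not 0.0 <= s.score <= 1.0:
            raise ValueError(f"score out of range for {s.tool}: {s.score}")

    # Tools whose score meets or exceeds the assumed threshold.
    flagged = [s.tool for s in scores if s.score >= threshold]
    if len(flagged) == len(scores):
        return "likely AI-generated"
    if flagged:
        return "inconclusive"
    return "no strong AI signal"


# Scores reported in this fact check, expressed as fractions.
reported = [
    DetectorScore("Sightengine", 0.84),
    DetectorScore("HIVE Moderation", 0.99),
]
print(summarize(reported))  # likely AI-generated
```

Requiring every tool to agree before calling an image AI-generated is a deliberately conservative choice. Detector scores are estimates, so they are best read alongside other evidence such as the absence of credible media reports.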

Conclusion

Our research confirms that the viral image showing Abhishek Bachchan and Aishwarya Rai at Kedarnath Temple is not authentic. The picture is AI-generated and is being falsely shared on social media to mislead users.