#FactCheck - AI-Generated Image Falsely Linked to Mira–Bhayandar Bridge

Executive Summary

Mumbai’s Mira–Bhayandar bridge has recently been in the news because of its unusual design. In this context, a photograph showing a bus seemingly stuck on the bridge is going viral on social media. Some users are also sharing the image with the claim that it is from the Sonpur subdivision in Bihar. However, research by CyberPeace has found that the viral image is not real. The bridge shown in the image is indeed the Mira–Bhayandar bridge, which is under discussion because its design narrows abruptly from four lanes to two. That said, the bridge is not yet operational, and the viral image showing a bus stuck on it was created using Artificial Intelligence (AI).

Claim

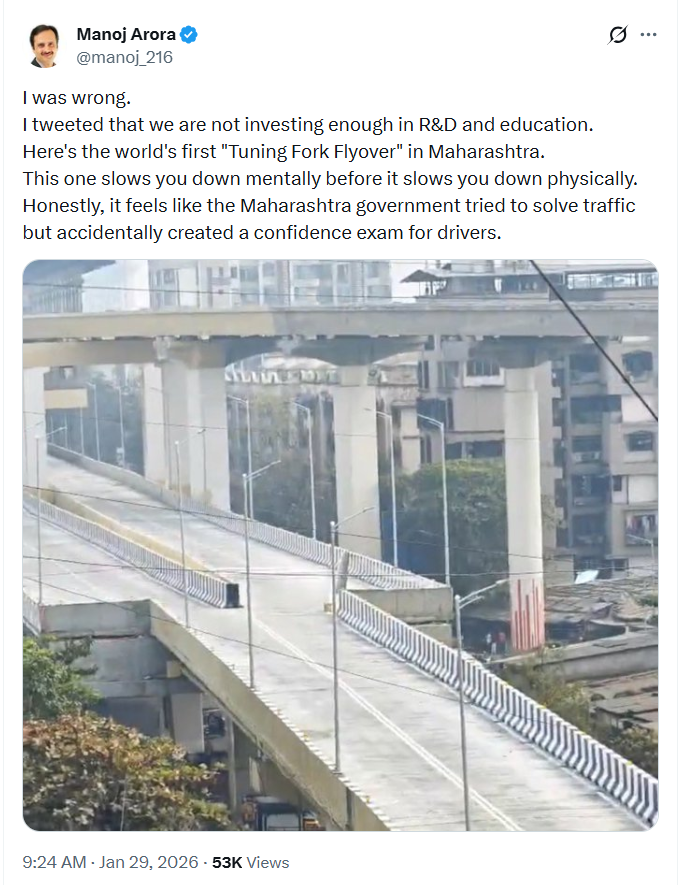

An Instagram user shared the viral image on January 29, 2026, with the caption: “Are Indian taxpayers happy to see that this is funded by their money?” The link, archive link, and screenshot of the post can be seen below.

Fact Check

To verify the claim, we first conducted a Google Lens reverse image search. This led us to a post shared by X (formerly Twitter) user Manoj Arora on January 29. While the bridge structure in that image matches the viral photo, no bus is visible in the original post. This raised suspicion that the viral image had been digitally manipulated.

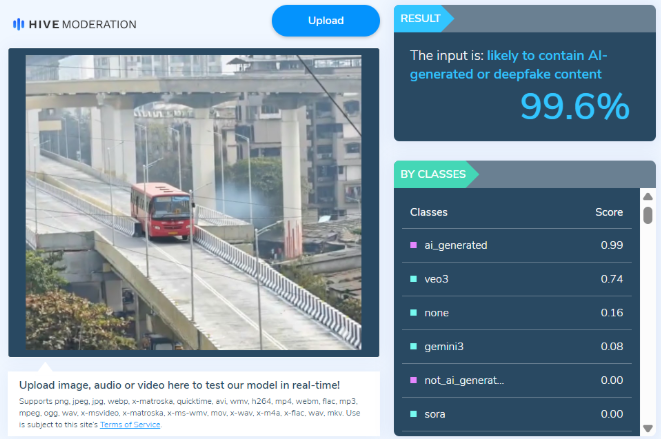

We then ran the viral image through the AI detection tool Hive Moderation, which flagged it as over 99% likely to be AI-generated.

Conclusion

The CyberPeace research confirms that while the Mira–Bhayandar bridge is real and has been in the news due to its design, the viral image showing a bus stuck on the bridge has been created using AI tools. Therefore, the image circulating on social media is misleading.

Related Blogs

Introduction

The advent of AI-driven deepfake technology has made it easier to create counterfeit explicit videos and images, and there has been an alarming increase in the use of Artificial Intelligence to produce such content for sextortion.

What are AI Sextortion and Deepfake Technology?

AI sextortion refers to the use of artificial intelligence (AI) technology, particularly deepfake algorithms, to create counterfeit explicit videos or images for the purpose of harassing, extorting, or blackmailing individuals. Deepfake technology utilises AI algorithms to manipulate or replace faces and bodies in videos, making them appear realistic and often indistinguishable from genuine footage. This enables malicious actors to create explicit content that falsely portrays individuals engaging in sexual activities, even if they never participated in such actions.

Background on the Alarming Increase in AI Sextortion Cases

Recently, there has been a significant increase in AI sextortion cases. Advancements in AI and deepfake technology have made it easier for perpetrators to create highly convincing fake explicit videos or images. The algorithms behind these technologies have become more sophisticated, allowing for more seamless and realistic manipulations. In addition, AI tools and resources have become more accessible, with open-source software and cloud-based services readily available to anyone. This accessibility has lowered the barrier to entry, enabling individuals with malicious intent to exploit these technologies for sextortion purposes.

The proliferation of content sharing on social media

The proliferation of social media platforms and the widespread sharing of personal content online have provided perpetrators with a vast pool of potential victims’ images and videos. By utilising these readily available resources, perpetrators can create deepfake explicit content that closely resembles the victims, increasing the likelihood of success in their extortion schemes.

Furthermore, the anonymity and wide reach of the internet and social media platforms allow perpetrators to distribute manipulated content quickly and easily. They can target individuals specifically or upload the content to public forums and pornographic websites, amplifying the impact and humiliation experienced by victims.

What are law agencies doing?

The alarming increase in AI sextortion cases has prompted concern among law enforcement agencies, advocacy groups, and technology companies. It is high time to make strong efforts to raise awareness about the risks of AI sextortion, develop detection and prevention tools, and strengthen legal frameworks to address these emerging threats to individuals’ privacy, safety, and well-being.

There is a need for technological solutions: developing and deploying advanced AI-based detection tools to identify and flag AI-generated deepfake content on platforms and services, and collaborating with technology companies to integrate such solutions.

Collaboration with social media platforms is also needed. Social media platforms and technology companies can revise and enforce community guidelines and policies against disseminating AI-generated explicit content, and can cooperate in developing robust content moderation systems and reporting mechanisms.

There is a need to strengthen legal frameworks to address AI sextortion, including laws that specifically criminalise the creation, distribution, and possession of AI-generated explicit content, with adequate penalties for offenders and provisions for cross-border cooperation.

Proactive measures to combat AI-driven sextortion

Prevention and Awareness: Proactive measures raise awareness about AI sextortion, helping individuals recognise risks and take precautions.

Early Detection and Reporting: Proactive measures employ advanced detection tools to identify AI-generated deepfake content early, enabling prompt intervention and support for victims.

Legal Frameworks and Regulations: Proactive measures strengthen legal frameworks to criminalise AI sextortion, facilitate cross-border cooperation, and impose penalties on offenders.

Technological Solutions: Proactive measures focus on developing tools and algorithms to detect and remove AI-generated explicit content, making it harder for perpetrators to carry out their schemes.

International Cooperation: Proactive measures foster collaboration among law enforcement agencies, governments, and technology companies to combat AI sextortion globally.

Support for Victims: Proactive measures provide comprehensive support services, including counselling and legal assistance, to help victims recover from emotional and psychological trauma.

Implementing these proactive measures will help create a safer digital environment for all.

Misuse of Technology

Misusing technology, particularly AI-driven deepfake technology, in the context of sextortion raises serious concerns.

Exploitation of Personal Data: Perpetrators exploit personal data and images available online, such as social media posts or captured video chats, to create AI-manipulated content. This manipulation violates privacy rights and exploits the vulnerability of individuals who trust that their personal information will be used responsibly.

Facilitation of Extortion: AI sextortion often involves perpetrators demanding monetary payments, sexually themed images or videos, or other favours under the threat of releasing manipulated content to the public or to the victims’ friends and family. The realistic nature of deepfake technology increases the effectiveness of these extortion attempts, placing victims under significant emotional and financial pressure.

Amplification of Harm: Perpetrators use deepfake technology to create explicit videos or images that appear realistic, thereby increasing the potential for humiliation, harassment, and psychological trauma suffered by victims. The wide distribution of such content on social media platforms and pornographic websites can perpetuate victimisation and cause lasting damage to their reputation and well-being.

Targeting Teenagers: The targeting of teenagers with extortion demands is a particularly alarming aspect of AI sextortion. Teenagers are especially vulnerable because of their increased use of social media platforms for sharing personal information and images. Perpetrators exploit this vulnerability to manipulate and coerce them.

Erosion of Trust: Misusing AI-driven deepfake technology erodes trust in digital media and online interactions. As deepfake content becomes more convincing, it becomes increasingly challenging to distinguish between real and manipulated videos or images.

Proliferation of Pornographic Content: The misuse of AI technology in sextortion contributes to the proliferation of non-consensual pornography (also known as “revenge porn”) and the availability of explicit content featuring unsuspecting individuals. This perpetuates a culture of objectification, exploitation, and non-consensual sharing of intimate material.

Conclusion

Addressing the concern of AI sextortion requires a multi-faceted approach, including technological advancements in detection and prevention, legal frameworks to hold offenders accountable, awareness about the risks, and collaboration between technology companies, law enforcement agencies, and advocacy groups to combat this emerging threat and protect the well-being of individuals online.

Introduction

In the contemporary information environment, misinformation has emerged as a subtle yet powerful force capable of shaping public perception, influencing behavior, and undermining institutional credibility. Unlike overt falsehoods, misinformation often gains traction because it appears authentic, familiar, and authoritative. The rapid circulation of content through digital platforms has intensified this challenge, allowing altered or misleading material to reach wide audiences before verification mechanisms can respond. When misinformation mimics official communication, its impact becomes especially concerning, as citizens tend to place implicit trust in documents that carry the appearance of state authority. This growing vulnerability of public information systems was illustrated by the calendar incident in Himachal Pradesh in January 2026.

The calendar incident of Himachal Pradesh in January 2026 shows how a small lie can lead to large social and governance problems. A person whose identity is still unknown posted a modified version of the Government Calendar 2026, changing the official dates and resulting in public confusion and reputational damage to the Printing and Stationery Department. The incident may not appear very serious at first sight, but it indicates a deeper systemic issue. Misinformation is posing increasing dangers to public information ecosystems, especially when official documents are misrepresented and disseminated through digital platforms.

Misinformation as a Governance Challenge

Government calendars and official documents are necessary for public awareness and administrative coordination, and their manipulation undermines the credibility of institutions and the trustworthiness of governance. In Himachal Pradesh, the modified dates might have led to confusion regarding public holidays, disruption of school and administrative planning, and misinformation among the people. Such misinformation directly undermines the social contract between citizens and the State, where accurate information is the foundation of trust, compliance, and participation.

Impact on Citizens: Confusion, Distrust, and Digital Fatigue

For the general public, the spread of fake government information causes confusion and, at the same time, erodes trust in government communication channels. Someone who repeatedly sees altered or misleading information presented as credible will eventually find it hard to tell truth from falsehood.

This results in:

- Decision paralysis, in which people postpone or refrain from action because of their doubts

- Erosion of trust, not only in one department but in government communication as a whole

- Digital fatigue, in which people stop following public information altogether because they assume any content may be unreliable

Misinformation in a digital society is not confined to a single platform. It spreads quickly through direct messaging apps, community groups, and social networks, creating wider confusion before official clarifications can reach the same audience.

Institutional Harm and Reputational Damage

The intentional tampering with official documents is not only unethical but also a criminal act that damages governance. The Printing and Stationery Department noted that such practices tarnish the public image of government bodies, whose standing rests on accuracy, neutrality, and trust.

When false material is taken to be official content:

- Departments have to communicate reactively.

- Money and manpower that could have gone to routine administrative work are instead spent on managing the situation.

The registration of a First Information Report (FIR) in this matter indicates a gradual shift in how law enforcement agencies perceive misinformation: not as a prank but as a technology-assisted crime with serious consequences.

The Role of Verifiable Information and Trusted Sources

Such occurrences underline the need to place trustworthy information and verified sources at the centre of the digital era. Authorities should encourage and enable citizens to rely on official websites, verified social media accounts, government portals, and press releases for authentication.
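One way an authority could make such authentication concrete is to publish a cryptographic digest of each official document next to the download, so that a circulated copy can be checked against it. The sketch below is purely illustrative: the source does not say that any department publishes digests, and the function names and placeholder document bytes are hypothetical.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the given bytes as a lowercase hex string."""
    return hashlib.sha256(data).hexdigest()


def matches_published_digest(data: bytes, published_hex: str) -> bool:
    """Check a document's bytes against a digest taken from a trusted source.

    Assumption: the digest was obtained from an official channel
    (for example, a government portal), not from the message that
    carried the document itself.
    """
    expected = published_hex.strip().lower()
    return hmac.compare_digest(sha256_hex(data), expected)


# Placeholder bytes standing in for an official file (hypothetical content).
official = b"Government Calendar 2026 (placeholder bytes)"
published = sha256_hex(official)

# A copy with even one altered date produces a different digest.
tampered = official.replace(b"2026", b"2025")

print(matches_published_digest(official, published))
print(matches_published_digest(tampered, published))
```

A digest only proves that a copy is byte-identical to the published file. It does not help if the digest itself is taken from an untrusted source, which is why the official channel still matters.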

Platform Responsibility and Digital Literacy

The spread of misinformation poses a significant challenge for social media platforms, which frequently amplify highly engaging content. Platforms can limit the damage in several ways: labelling unverified material, limiting its sharing, and working with authorities on fact-checking support. Equally crucial is public awareness of how digital platforms work, as even the unintentional dissemination of fake “official” materials can lead to legal and social repercussions. The Himachal state government's advice is a welcome step, but the public still needs to be informed on a continuing basis.

Legal Accountability as a Deterrent

The active participation of the Cyber Crime Cells clearly signals that digital misinformation, especially involving government documents, will face severe consequences. Establishing legal responsibility acts as a deterrent and reinforces the principle that the right to free expression does not cover a right to lie or to undermine public institutions. Nonetheless, to be effective, enforcement has to be accompanied by preventive measures such as good communication, strong governance, and public trust-building. Consistent enforcement against digital misinformation can contribute to greater accountability within society. Digital literacy programs should be conducted periodically for netizens and institutions.

Conclusion

The fake calendar incident in Himachal Pradesh served as a signal for the authorities to adopt accurate communication strategies. Misinformation can be countered only through the shared participation of governments, digital platforms, citizens, and civil society. The main goal is to maintain public trust and to safeguard the dissemination of information in democratic processes.

Executive Summary:

A video circulating on social media falsely claims to show Indian Air Chief Marshal AP Singh admitting that India lost six jets and a Heron drone during Operation Sindoor in May 2025. The footage was found to have been digitally manipulated by inserting an AI-generated voice clone of Air Chief Marshal Singh into his recent speech, which was streamed live on August 9, 2025.

Claim:

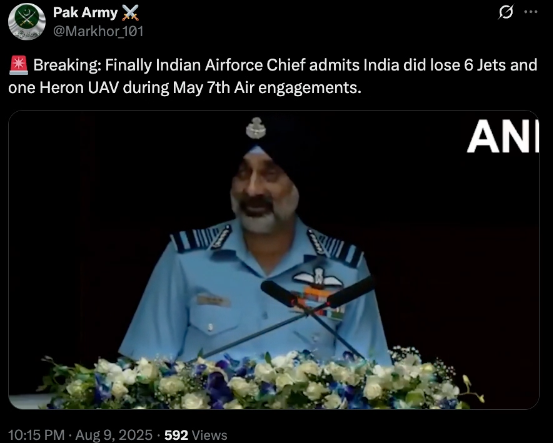

A viral video (archived video) (another link) shared by an X user carries the caption “Breaking: Finally Indian Airforce Chief admits India did lose 6 Jets and one Heron UAV during May 7th Air engagements.” The video purports to show the Air Chief Marshal admitting the aforementioned losses during Operation Sindoor.

Fact Check:

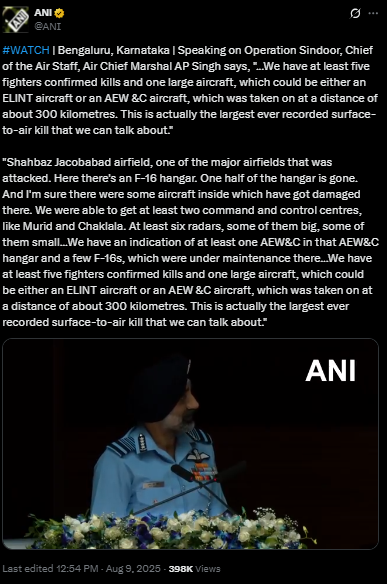

By conducting a reverse image search on key frames from the video, we found a clip posted by ANI’s official X handle. After watching the full clip, we found no mention of the alleged claim.

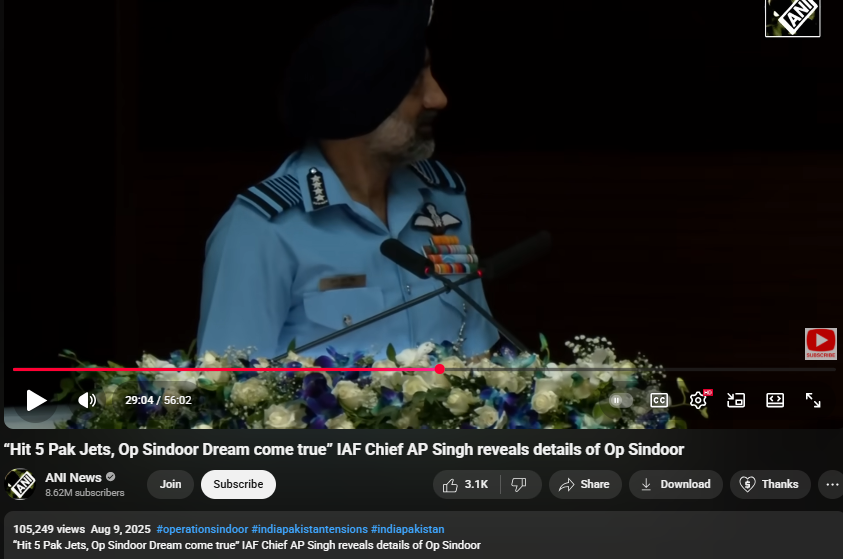

On further research, we found an extended version of the video on ANI’s official YouTube channel, published on August 9, 2025. At the 16th Air Chief Marshal L.M. Katre Memorial Lecture in Marathahalli, Bengaluru, Air Chief Marshal AP Singh did not mention any loss of six jets or a drone in relation to the conflict with Pakistan. The discrepancies observed in the viral clip suggest that portions of the audio may have been digitally manipulated.

The audio in the viral video, particularly the segment at the 29:05 minute mark alleging the loss of six Indian jets, appeared to be manipulated and displayed noticeable inconsistencies in tone and clarity.

Conclusion:

The viral video claiming that Air Chief Marshal AP Singh admitted to the loss of six jets and a Heron UAV during Operation Sindoor is misleading. A reverse image search traced the footage to a clip posted by ANI’s official X handle, in which no such remarks were made. Further, an extended version on ANI’s official YouTube channel confirmed that, during the 16th Air Chief Marshal L.M. Katre Memorial Lecture, no reference was made to the alleged losses. Additionally, the viral video’s audio, particularly around the 29:05 mark, showed signs of manipulation with noticeable inconsistencies in tone and clarity.

- Claim: Viral Video Claiming IAF Chief Acknowledged Loss of Jets Found Manipulated

- Claimed On: Social Media

- Fact Check: False and Misleading