#FactCheck: False Social Media Claim That Six Army Personnel Were Killed in a Retaliatory Attack by ULFA in Myanmar

Executive Summary:

A widely circulated claim on social media states that six soldiers of the Assam Rifles were killed in a retaliatory attack carried out by a Myanmar-based breakaway faction of the United Liberation Front of Asom (Independent), or ULFA (I). The post included a photograph of coffins draped in Indian flags, presented as those of the six soldiers allegedly killed by ULFA (I). Although the post was widely shared, our fact-check confirms that the photograph is old and unrelated, and that no trustworthy reports indicate any such incident took place. The claim is therefore false and misleading.

Claim:

Social media users claimed that the banned militant outfit ULFA (I) killed six Assam Rifles personnel in retaliation for an alleged drone and missile strike by Indian forces on its camp in Myanmar. The posts carried the caption “Six Indian Army Assam Rifles soldiers have reportedly been killed in a retaliatory attack by the Myanmar-based ULFA group.” The claim was accompanied by a viral image showing coffins of Indian soldiers, which added emotional weight and perceived authenticity to the narrative.

Fact Check:

We began our research with a reverse image search of the photograph of flag-draped coffins that was shared with the viral claim. The image can be traced to August 2013. It appears in The Washington Post, which confirms that the viral photograph is from a past incident in which five Indian Army soldiers were killed by Pakistani intruders in Poonch, Jammu and Kashmir, on August 6, 2013.

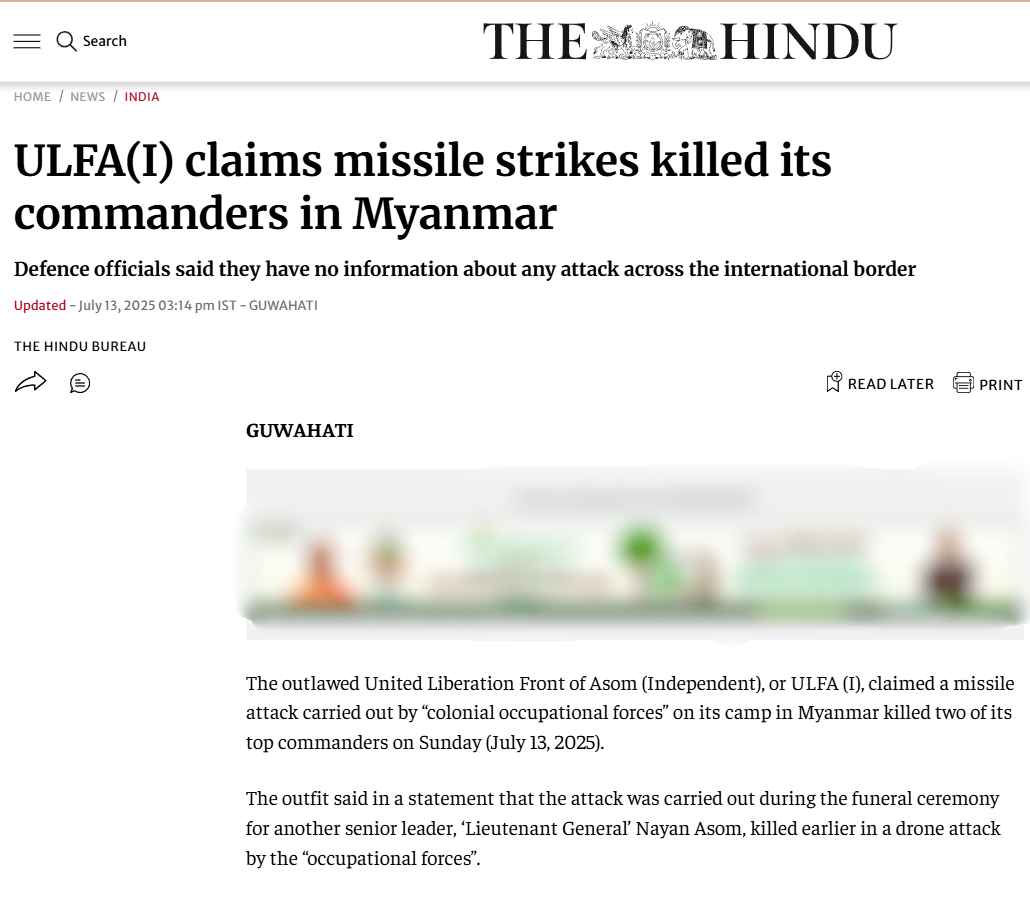

Reports in The Hindu and India Today also offered no confirmation of the death of six Assam Rifles personnel. ULFA (I) did, however, issue a statement dated July 13, 2025, claiming that three of its leaders had been killed in a drone strike by Indian forces.

A search on Shutterstock further shows that the coffin image is old and does not represent any current action by the United Liberation Front of Asom (ULFA).

The Indian Army denied carrying out the alleged drone strike, with Defence PRO Lt Col Mahendra Rawat telling reporters there were "no inputs" of such an operation. Assam Chief Minister Himanta Biswa Sarma also rejected the suggestion that there had been any cross-border military action. Therefore, the viral claim is false and misleading.

Conclusion:

The assertion that ULFA (I) killed six Assam Rifles soldiers in a retaliatory strike is incorrect. The viral image used in these posts is from a 2013 incident in Jammu and Kashmir and has no relevance to the present. There have been no verified reports of any such killings, and both the Indian Army and the Assam government have categorically denied conducting or knowing of any cross-border operation. This false narrative serves only to spread fear and misinformation, so please disregard it.

- Claim: Report confirms the death of six Assam Rifles personnel in an ULFA-led attack.

- Claimed On: Social Media

- Fact Check: False and Misleading