#FactCheck: Viral image shows the Maldives mocking India with a "SURRENDER" sign on photo of Prime Minister Narendra Modi

Executive Summary:

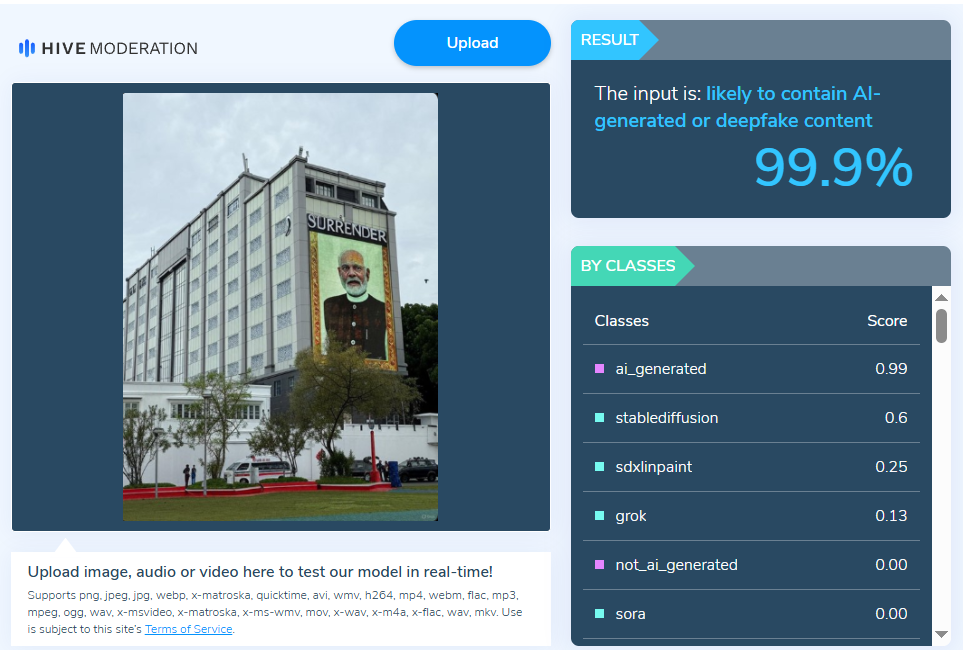

A photo of a Maldivian building, purportedly showing an oversized portrait of Indian Prime Minister Narendra Modi alongside the word "SURRENDER", went viral on social media. People responded with fear, indignation, and anxiety. Our research, however, showed that the image was manipulated and not authentic.

Claim:

A viral image claims that the Maldives displayed a huge portrait of PM Narendra Modi on a building front, along with the phrase “SURRENDER,” implying an act of national humiliation or submission.

Fact Check:

After a thorough examination of the viral post, we found that it had been altered. While the image shows the same building, the claim that it displayed Prime Minister Modi’s portrait together with the word “SURRENDER”, as in the viral version, is false. We also checked the image with the Hive AI Detector, which marked it as 99.9% fake. This further supports the finding that the viral image had been digitally altered.

During our research, we also found several images from Prime Minister Modi’s visit, including one of the same building displaying his portrait, shared by the official X handle of the Maldives National Defence Force (MNDF). The post read: “His Excellency Prime Minister Shri @narendramodi was warmly welcomed by His Excellency President Dr.@MMuizzu at Republic Square, where he was honored with a Guard of Honor by #MNDF on his state visit to Maldives.” This image, captured from a different angle, also does not feature the word “surrender.”

Conclusion:

The claim that the Maldives showed a picture of PM Modi with a surrender message is incorrect and misleading. The image is altered and is being spread to mislead people and stir up controversy. Users should check the authenticity of photos before sharing.

- Claim: Viral image shows the Maldives mocking India with a surrender sign

- Claimed On: Social Media

- Fact Check: False and Misleading

Related Blogs

Introduction

Emerging technologies have made inroads into many domains, including the aviation industry. A 2022 report by Cranfield University and Inmarsat made the case that digitalization is powering a revival of the aviation industry. Several airport authorities are now harnessing emerging technologies such as Artificial Intelligence (AI) across airport infrastructure to give travelers a smooth and fast air travel experience.

The Perils of Juice-Jacking

Today, Universal Serial Bus (USB) charging ports are ubiquitous and a convenient way for travelers to keep their devices powered up. In their busy lives, people routinely use public charging facilities while travelling. However, cybersecurity experts have warned that charging in public areas could lead to data being taken from a device or malware being installed on it, and they have urged people to stay away from USB charging ports at airports and other public areas. Fraudsters can exploit unsuspecting users in this way through an attack known as juice jacking.

Investigative journalist Brian Krebs coined the term “juice jacking” in 2011. It is a form of cyber attack in which a public USB charging port is tampered with, through hardware and software changes, to steal data from or install malware on devices connected to it. In the term, “juice” is slang for electric power, and “jacking” (from “hijacking”) indicates unauthorized access to a device.

The primary purpose of juice jacking is usually to steal sensitive information, such as passwords and payment card details, from connected devices. Attackers can then exploit this stolen information to gain unauthorized access to your financial accounts. If the attacker installs malware on the device during the attack, the attacker may continue to monitor the individual's activity even after the device has been disconnected from the USB port. Overall, the hazards of juice jacking include malware infection, data theft, financial loss, and damage to an individual's reputation.

Red Flags from Agencies

In 2023, the Federal Bureau of Investigation (FBI) warned travelers against using charging stations in public places such as hotels, airports, and shopping malls, because malicious actors attempt to use public USB ports to introduce monitoring software and malware into devices. The U.S. Federal Communications Commission (FCC) has also issued an advisory on “juice jacking” and its potential to launch a silent cyber attack against a mobile device while the phone is charging through a USB cord. Similarly, according to research from International Business Machines (IBM) Security, many nation-state hackers are currently training their sights on travelers.

RBI Advisory

In 2024, the Reserve Bank of India (RBI) likewise issued a warning to mobile phone users, urging them not to charge their devices using public ports. The RBI also stressed the importance of safeguarding private and financial data while using mobile devices. Juice jacking is further cited as one of the scams in the RBI booklet on the modus operandi of financial fraudsters.

Preventing juice jacking attacks

To avoid juice jacking, keep track of your USB devices, avoid public charging ports, update your phone software regularly, and enable the device's built-in security measures. Charge from a wall outlet or a backup battery, or use a USB pass-through device; never use unknown charging cables, and use only trusted security apps. It is also important to avoid cables left behind by other travelers in public spaces. Users can also turn off their devices before connecting to a suspicious charging port. The absence of documented cases does not mean that users cannot be targeted by such an attack, so caution is still advised when charging personal devices that hold sensitive data, even with standard cables. Using a virtual private network (VPN) and ensuring that devices have the latest security updates installed can also help mitigate the danger of cyber attacks. It is equally important to enable the security features of your device, such as passcodes, fingerprints, or facial recognition, as a supplementary layer of protection.

Conclusion

In the contemporary digital age, individuals need to be vigilant about “cybersecurity hygiene” and avoid accessing sensitive data or conducting financial transactions on unsecured networks. Mobile phones and other devices should run the latest operating system, and antivirus software should be kept up to date to mitigate potential security vulnerabilities.

References

- https://www.forbes.com/sites/suzannerowankelleher/2023/04/20/juice-jacking-malware-phone-airports-hotels/?sh=47adab7e82ed

- https://www.businessairportinternational.com/features/how-ai-is-improving-business-aviation-operations.html

- https://www.news18.com/business/juice-jacking-attack-scam-bank-frauds-india-8412037.html

- https://www.comparitech.com/blog/information-security/juice-jacking/

- https://blogs.blackberry.com/en/2023/04/juice-jacking-advisory

- https://www.thehindubusinessline.com/info-tech/juice-jacking-rbi-issues-warning-against-charging-mobile-phones-using-public-ports/article67895091.ece

- https://www.thehindu.com/sci-tech/technology/juice-jacking-how-hackers-target-smartphones-tethered-to-public-charging-points/article67026433.ece

- https://www.forbes.com/sites/suzannerowankelleher/2019/05/21/why-you-should-never-use-airport-usb-charging-stations/?sh=630f026a5955

- https://edition.cnn.com/2023/04/12/tech/fbi-public-charging-port-warning/index.html

- https://social-innovation.hitachi/en-in/knowledge-hub/hitachi-voice/digital-transformation/

- https://www.inmarsat.com/en/insights/aviation/2022/future-aviation-connectivity.html

Introduction

Twitter is a popular social media platform with millions of users all around the world. Twitter’s blue tick system, which verifies the identity of high-profile accounts, has been under intense scrutiny in recent years. The platform has faced backlash from users and brands who have accused it of bias, inaccuracy, and inconsistency in its verification process. This blog post explores the questions raised about the verification process and its impact on users and big brands.

What is Twitter’s blue tick system?

The blue tick system was introduced in 2009 to help users identify authentic accounts of well-known public figures, politicians, celebrities, sportspeople, and big brands. The system verifies the identity of high-profile accounts and displays a blue badge next to their usernames.

According to a survey, there are roughly 294,000 verified Twitter accounts, meaning they carry a blue tick badge and have also paid the subscription for the service, which costs about $7.99 per month. Subscribers who paid that amount and still lost their blue badge may well feel cheated.

The Controversy

Despite its initial aim, the blue tick system has received much criticism from consumers and brands. Twitter’s inconsistent and non-transparent verification procedure has sparked accusations of prejudice and inaccuracy. Many Twitter users have complained that the network’s verification process is arbitrary and favours accounts with huge followings or celebrity status. Others have criticised the platform for verifying accounts that promote harmful or controversial content.

Furthermore, the verification mechanism has generated user confusion, as many do not understand the significance of the blue tick badge. Some users have concluded that the blue tick symbol represents a Twitter endorsement or that the account is trustworthy. This confusion has resulted in users following and engaging with verified accounts that promote misleading or inaccurate information, undermining the platform’s credibility.

How did the Blue Tick Row start in India?

The row began on 21 May 2021, when the government asked Twitter to remove the blue badge from the profiles of several high-profile Indian politicians, including Indian National Congress Party Vice-President Mr Rahul Gandhi.

The blue badge gives the users an authenticated identity. Many celebrities, including Amitabh Bachchan, popularly known as Big B, Vir Das, Prakash Raj, Virat Kohli, and Rohit Sharma, have lost their blue tick despite being verified handles.

What is the Twitter policy on blue tick?

According to Twitter’s policy, blue verification badges may be removed from accounts if the account holder violates the company’s verification policy or terms of service. In such circumstances, Twitter typically notifies the account holder of the removal of the verification badge and the reason for it. In the instance of the “Twitter blue badge row” in India, however, it appears that Twitter did not notify the impacted politicians or their representatives before revoking their verification badges. Twitter’s lack of communication has exacerbated the controversy around the episode, with some critics accusing the company of acting arbitrarily and not following due process.

Is there a solution?

The “Twitter blue badge row” has no simple answer since it involves a complex convergence of concerns about free expression, social media policies, and government regulation. However, here are some possible approaches:

- Establish clear guidelines: Twitter should develop and consistently implement clear guidelines and policies for the verification process. All users, including politicians and government officials, would benefit from greater transparency and clarity.

- Increase transparency: Twitter’s decision-making process for removing or restoring verification badges should be more open. This could include providing explicit reasons for badge removal, notifying impacted users promptly, and offering an appeals mechanism for those who believe their badges were removed unfairly.

- Engage in constructive dialogue: Twitter should engage in constructive dialogue with government authorities and other stakeholders to address concerns about the platform’s content moderation procedures. This could contribute to a more collaborative approach to managing online content, leading to more effective and accepted policies.

- Follow local rules and regulations: Twitter should collaborate with the Indian government to ensure it conforms to local laws and regulations while maintaining freedom of expression. This could involve adopting more precise standards for handling requests for content removal or other actions from governments and other organisations.

Conclusion

To sum up, the “Twitter blue tick row” in India has highlighted the complex challenges that social media platforms face daily in balancing the conflicting interests of free expression, government rules, and their own content moderation procedures. While Twitter’s decision to withdraw the blue verification badges of several prominent Indian politicians drew anger from the government and some members of the public, it also raised questions about the transparency and consistency of Twitter’s verification procedure. To deal with this issue, Twitter must establish clear verification procedures and norms, promote transparency in its decision-making process, participate in constructive communication with stakeholders, and adhere to local laws and regulations. Furthermore, the Indian government should collaborate with social media platforms to create more effective and acceptable laws that balance the need for free expression with the protection of citizens’ rights. The row is just one example of the challenges platforms face in managing online content, and it emphasises the need for greater collaboration among platforms, governments, and civil society organisations to develop solutions that protect both.

Introduction

In the age of digital technology, the concept of net neutrality has become more crucial for preserving the equity and openness of the internet. Under net neutrality, all internet traffic is treated equally, without discrimination or preferential treatment. This principle lets users freely access and distribute content, which promotes innovation, competition, and the democratisation of knowledge. India has seen controversy over net neutrality, which has led to a legal battle to protect an open internet. In this blog post, we’ll look at the legal challenges and the efforts made to safeguard net neutrality in India.

Background on Net Neutrality in India

Net neutrality became a hot topic in India after a major telecom service provider proposed charging different fees for accessing different parts of the internet. Internet users, activists, and organisations in favour of an open internet raised concerns over this. The consultation paper published by the Telecom Regulatory Authority of India (TRAI) in 2015 drew millions of comments, highlighting the significance of net neutrality for the country’s internet users.

Legal Battle and Regulatory Interventions

The battle for net neutrality in India gained prominence when TRAI released the “Prohibition of Discriminatory Tariffs for Data Services Regulations” in 2016. These regulations, often known as the “Free Basics” prohibition, were created to put an end to the use of zero-rating platforms, which exempt specific websites or services from data charges. The regulations ensured that all data on the internet would be handled uniformly, regardless of where it originated.

But the legal conflict didn’t end there. The telecom industry challenged TRAI’s regulations, resulting in a flurry of legal disputes in numerous courts around the country. The Telecom Regulatory Authority of India Act, and the provisions in it that govern TRAI’s ability to regulate internet services, were at the heart of the legal dispute.

The Indian judicial system contributed greatly to the protection of net neutrality. The importance of non-discriminatory internet access was highlighted in 2018 when the Telecom Disputes Settlement and Appellate Tribunal (TDSAT) upheld the TRAI regulations and ruled in favour of net neutrality. The TDSAT ruling created a crucial precedent for net neutrality in India. In 2019, after several rounds of litigation, the Supreme Court of India backed the principles of net neutrality, declaring that it is a fundamental idea that must be protected. The ruling by the top court bolstered the nation’s legal framework for preserving a free and open internet.

Ongoing Challenges and the Way Forward

Even though India has made great strides towards upholding net neutrality, challenges persist. Because of the rapid advancement of technology and the emergence of new services and platforms, net neutrality must be safeguarded continually. Some practices, such as “zero-rating” schemes and service-specific data plans, continue to raise questions about potential violations of net neutrality principles. Regulators must be proactive and vigilant to address these concerns. The regulator, TRAI, is responsible for monitoring for and responding to breaches of the net neutrality principles. It’s crucial to strike a balance between promoting innovation and competition and maintaining a free and open internet.

Additionally, public awareness and education on the issue are crucial for the continuation of net neutrality. Informing users of their rights and encouraging them to take part in the conversation makes for a more inclusive and democratic decision-making process. Civil society organisations and advocacy groups can play an effective role in educating the public about net neutrality and gaining their support.

Conclusion

The legal battle for net neutrality in India has been a significant turning point in the campaign to preserve an open and neutral internet. A robust framework for net neutrality in the country has been established thanks to regulatory initiatives and judicial decisions. However, due to ongoing challenges and the dynamic nature of technology, maintaining net neutrality calls for vigilant oversight and strong action. An open and impartial internet is crucial for fostering innovation, promoting free speech, and providing equal access to information. India’s efforts to uphold net neutrality can serve as an example for other nations dealing with similar issues. All parties, including politicians, must work together to protect the principles of net neutrality and ensure that the internet is accessible to everyone.