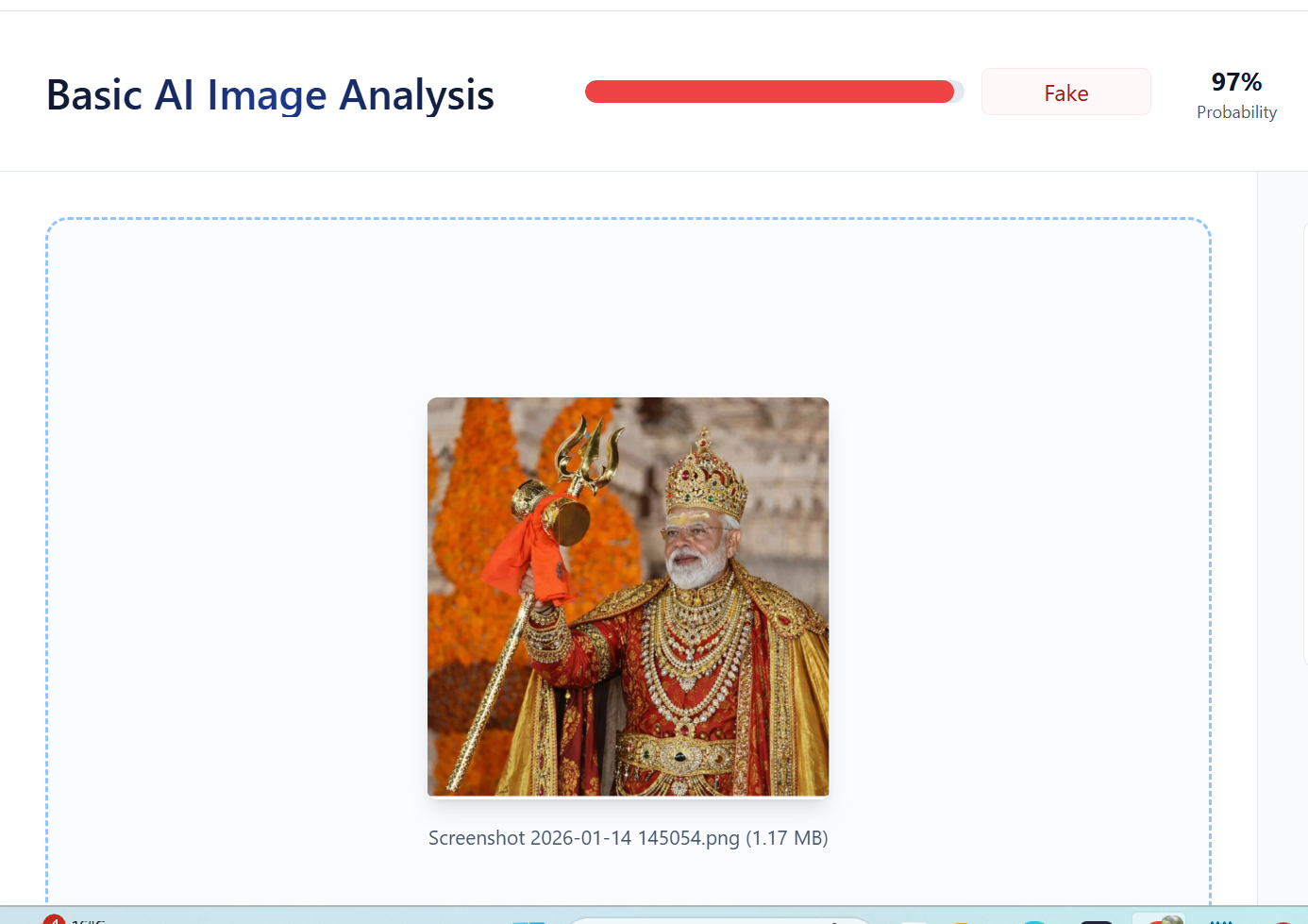

#FactCheck: No, PM Modi Did Not Appear in Royal Attire, Image Is AI-Generated

A photograph showing Prime Minister Narendra Modi holding a trident and dressed in royal attire is being widely shared on social media. Users circulating the image are claiming that it shows PM Modi in a regal outfit.

However, a verification by the CyberPeace Foundation's Research Desk found the claim to be false. The investigation established that the viral image is not authentic and was generated using Artificial Intelligence (AI).

Claim:

On January 11, 2026, several Instagram users shared the image with captions describing it as a photograph of Prime Minister Modi in royal attire.

Links and archived versions of the posts, along with screenshots, are provided below.

Fact Check:

To verify the claim, relevant keywords such as “PM Modi holding trishul” were searched on Google. This led to a report published by Navbharat Times on January 10, 2026, featuring photographs of Prime Minister Modi holding a trident during his visit to Somnath Temple. However, in the original images he is wearing regular attire, not the royal clothing seen in the viral image.

In the next step of the investigation, the original photograph was traced to the official Instagram account of BJP Gujarat, where it was posted on January 11, 2026. The post clearly identifies the image as being from Somnath Temple. Link: https://www.instagram.com/p/DTVlb-9Da1V

A close examination of the viral image raised suspicion about digital manipulation. The image was then analysed using the AI detection tool TruthScan. The tool’s assessment indicated a 97 percent likelihood that the image was AI-generated.

Further comparison between the viral image and the original photograph revealed that all visual elements match except the clothing, confirming that the attire was digitally altered using AI tools.

Conclusion

The claim that Prime Minister Narendra Modi appeared in royal attire is false. The CyberPeace Foundation's research confirms that the viral image was created by using AI to alter the clothing in an original photograph taken during PM Modi's visit to Somnath Temple. The manipulated image was shared online to mislead users.

Related Blogs

Background

On 20th October 2022, the Competition Commission of India (CCI) imposed a penalty of Rs. 1,337.76 crore on Google for abusing its dominant position in multiple markets in the Android mobile device ecosystem, in addition to issuing cease-and-desist orders. The CCI also directed Google to modify its conduct within a defined timeline. Smart mobile devices need an operating system (OS) to run applications (apps) and programs. Android is one such mobile operating system, which Google acquired in 2005. In this matter, the CCI examined various practices of Google with respect to the licensing of the Android mobile operating system and of various proprietary Google mobile applications (e.g., Play Store, Google Search, Google Chrome, YouTube).

The Issue

Google was penalised for misusing its dominant position in the tech market. In its defence, Google argued that it faced competitive constraints from Apple. In assessing the extent of competition between Google's Android ecosystem and Apple's iOS ecosystem, the CCI noted that the two business models differ in ways that shape the incentives behind business decisions. Apple's business is primarily based on a vertically integrated smart device ecosystem that focuses on the sale of high-end smart devices with state-of-the-art software components. In contrast, Google's business was found to be driven by the ultimate aim of increasing the number of users on its platforms so that they interact with its revenue-earning service, online search, which directly drives Google's sale of online advertising services.

The CCI found that Google had entrenched its dominance over Android phone manufacturers by requiring them to pre-install a set of Google apps on their devices, increasing users' dependence on Google services. Google countered that Apple follows the same practice. The Commission responded that Apple manufactures both its phones and its apps and pre-installs Apple-owned apps only on Apple devices, whereas Google had imposed a de facto mandate on Android manufacturers to pre-install Google apps, a practice capable of disrupting the market equilibrium and contrary to fair market practices. In penalising Google Rs. 1,337.76 crore for abusing its dominant position in multiple markets in India, the CCI delineated the following five relevant markets:

- The market for licensable OS for smart mobile devices in India

- The market for app store for Android smart mobile OS in India

- The market for general web search services in India

- The market for non-OS specific mobile web browsers in India

- The market for online video hosting platforms (OVHP) in India

Supreme Court's Opinion

In October 2022, the Competition Commission of India (CCI) ruled that Google, owned by Alphabet Inc., had exploited its dominant position in Android, and told it to remove restrictions on device makers, including those related to the pre-installation of apps and the exclusivity of its search. Google's challenge in the Supreme Court to block the directives failed: the court refused to stay the penalty and gave Google seven days to comply. The Supreme Court also said that the National Company Law Appellate Tribunal (NCLAT), where Google first challenged the Android directives, could continue to hear the company's appeal and must rule by March 31, 2023.

Counterpoint Research estimates that about 97% of India's 600 million smartphones run on Android, while Apple has just a 3% share. Hoping to block the implementation of the CCI directives, Google challenged the CCI order in the Supreme Court, warning that the directives could stall the growth of the Android ecosystem. It also said it would be forced to alter arrangements with more than 1,100 device manufacturers and thousands of app developers if the directives took effect. Google has been concerned about India's decision because the steps are seen as more sweeping than those imposed in the European Commission's 2018 ruling, in which Google was fined for putting in place what the Commission called unlawful restrictions on Android mobile device makers. Google is still challenging the record $4.3 billion fine in that case. In Europe, Google later made changes, including letting Android device users pick their default search engine, and said device makers would be able to license the Google mobile application suite separately from the Google Search app or the Chrome browser.

Conclusion

As the world moves deeper into cyberspace, big tech companies gain more control over the industry and the markets, but this control should not turn into anarchy in global markets. Tech giants need to be made aware that compliance is the foremost duty of every company and that the law of the land will be enforced regardless. India earlier lacked policies and legislation to govern cyberspace, but the government has recently taken a proactive stance and introduced several new rules and bills, among them the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021, which lay down the obligations and duties of companies operating as intermediaries in the country. Such measures, coupled with crucial judgments against tech giants, will act as a deterrent to other tech companies that try to flout the rules and avoid compliance.

In the vast, uncharted territories of the digital world, a sinister phenomenon is proliferating at an alarming rate. It's a world where artificial intelligence (AI) and human vulnerability intertwine in a disturbing combination, creating a shadowy realm of non-consensual pornography. This is the world of deepfake pornography, a burgeoning industry that is as lucrative as it is unsettling.

According to a recent assessment, at least 100,000 deepfake porn videos are readily available on the internet, with hundreds, if not thousands, being uploaded daily. This staggering statistic prompts a chilling question: what is driving the creation of such a vast number of fakes? Is it merely for amusement, or is there a more sinister motive at play?

Recent Trends and Developments

An investigation by India Today's Open-Source Intelligence (OSINT) team reveals that deepfake pornography is rapidly morphing into a thriving business. AI enthusiasts, creators, and experts are lending their expertise, investors are injecting money, and services ranging from small financial companies to tech giants like Google, VISA, Mastercard, and PayPal are being misused in this dark trade. Synthetic porn has existed for years, but advances in AI and the increasing availability of the technology have made it easier, and more profitable, to create and distribute non-consensual sexually explicit material. The 2023 State of Deepfake report by Home Security Heroes reveals a staggering 550% increase in the number of deepfakes compared to 2019.

What’s the Matter with Fakes?

But why should we be concerned about these fakes? The answer lies in the real-world harm they cause. India has already seen cases of extortion carried out using deepfake technology. An elderly man in Ghaziabad, Uttar Pradesh, for instance, was tricked into paying Rs 74,000 after receiving a deepfake video of a police officer. The situation could have been even more serious had the perpetrators decided to create deepfake porn of the victim.

The danger is particularly severe for women. The 2023 State of Deepfake Report estimates that at least 98 percent of all deepfakes are pornographic and that 99 percent of the victims are women. A study by Harvard University refrained from using the term “pornography” to describe creating, sharing, or threatening to create or share sexually explicit images and videos of a person without their consent. “It is abuse and should be understood as such,” it states.

Based on interviews with victims of deepfake porn conducted last year, the study found that 63 percent of participants described experiences of “sexual deepfake abuse” and reported that their sexual deepfakes had been monetised online. It also found “sexual deepfake abuse to be particularly harmful because of the fluidity and co-occurrence of online offline experiences of abuse, resulting in endless reverberations of abuse in which every aspect of the victim’s life is permanently disrupted”.

Creating deepfake porn is disturbingly easy. There are broadly two types of deepfakes: those featuring the faces of real people and those featuring computer-generated, hyper-realistic faces of people who do not exist. The first category is particularly concerning; these fakes are created by superimposing the faces of real people onto existing pornographic images and videos, a task AI tools have made simple.

The investigation found platforms hosting deepfake porn of film stars such as Jennifer Lawrence, Emma Stone, Jennifer Aniston, Aishwarya Rai and Rashmika Mandanna, as well as TV actors and influencers such as Aanchal Khurana, Ahsaas Channa, Sonam Bajwa and Anveshi Jain. It takes a few minutes and as little as Rs 40 for a user to create a high-quality, 15-second fake porn video on platforms like FakeApp and FaceSwap.

The Modus Operandi

These platforms brazenly flaunt their business associations and hide behind flimsy disclaimers such as: the content is “meant solely for entertainment” and “not intended to harm or humiliate anyone”. The irony of these disclaimers is not lost on anyone, especially when the same platforms host thousands of non-consensual deepfake pornographic videos.

As fake porn content and its consumers surge, deepfake porn sites are rushing to forge collaborations with generative AI service providers and have integrated their interfaces for enhanced interoperability. The promise and potential of making quick bucks have given birth to step-by-step guides, video tutorials, and websites that offer tools and programs, recommendations, and ratings.

Nearly 90 per cent of all deepfake porn is hosted on dedicated platforms that charge for long-duration premium fake content and for creating custom porn of whoever a user wants, including celebrities on request. To further encourage creators, these platforms let them monetise their content.

One such website, Civitai, has a system in place that pays “rewards” to creators of AI models that generate “images of real people”, including ordinary people. It also enables users to post AI images, prompts, model data, and the LoRA (low-rank adaptation) files used to fine-tune the models that generate the images. Models designed for adult content are gaining popularity on the platform, and they do not target only celebrities: ordinary people are equally susceptible.

Access to premium fake porn, like any other content, requires payment. But how can a payment gateway process payments for sexual content created without consent? Financial institutions and banks appear not to be paying much attention to this legal question. The investigation found many such websites accepting payments through services like VISA, Mastercard, and Stripe.

Websites that have failed to register or partner with these fintech giants have found workarounds. While some direct users to third-party sites, others manually collect money through the personal PayPal accounts of their employees or stakeholders, which potentially violates PayPal's terms of use banning the sale of “sexually oriented digital goods or content delivered through a digital medium.”

Among others, the MakeNude.ai web app, which lets users “view any girl without clothing” in “just a single click”, has an interesting method of circumventing restrictions around the sale of non-consensual pornography. The platform has partnered with Ukraine-based Monobank and Dublin's BetaTransfer Kassa, which operates in “high-risk markets”.

BetaTransfer Kassa admits to serving “clients who have already contacted payment aggregators and received a refusal to accept payments, or aggregators stopped payments altogether after the resource was approved or completely freeze your funds”. To make payment processing easy, MakeNude.ai seems to be exploiting the donation ‘jar’ facility of Monobank, which is often used by people to donate money to Ukraine to support it in the war against Russia.

The Indian Scenario

India is currently working on dedicated legislation to address issues arising from deepfakes. In the meantime, existing general laws requiring platforms to remove offensive content also apply to deepfake porn. However, prosecuting and convicting offenders is extremely difficult for law enforcement agencies because it is a borderless crime that sometimes involves several countries.

A victim can register a police complaint under Sections 66E and 66D of the Information Technology Act, 2000. The recently enacted Digital Personal Data Protection Act, 2023 aims to protect users' digital personal data. The Union Government also recently issued an advisory to social media intermediaries to identify misinformation and deepfakes. The comprehensive law promised by Union IT Minister Ashwini Vaishnaw is expected to address these challenges.

Conclusion

In the end, the unsettling dance of AI and human vulnerability continues in the dark web of deepfake pornography. It's a dance that is as disturbing as it is fascinating, a dance that raises questions about the ethical use of technology, the protection of individual rights, and the responsibility of financial institutions. It's a dance that we must all be aware of, for it is a dance that affects us all.

References

- https://www.indiatoday.in/india/story/deepfake-porn-artificial-intelligence-women-fake-photos-2471855-2023-12-04

- https://www.hindustantimes.com/opinion/the-legal-net-to-trap-peddlers-of-deepfakes-101701520933515.html

- https://indianexpress.com/article/opinion/columns/with-deepfakes-getting-better-and-more-alarming-seeing-is-no-longer-believing/

Introduction

Charity and donation scams persist and have been amplified in the digital era, as messages spread rapidly through WhatsApp, email, and social media. In these schemes, threat actors impersonate legitimate charities, government appeals, or social causes to solicit funds. Apart from targeting the general public, such scams also affect entities such as reputable tech firms and national institutions. Victims are tricked into transferring money or sharing personal information, often under the guise of urgent humanitarian efforts or causes.

A recent incident involves a fake WhatsApp message claiming to be from the Indian Ministry of Defence. The message urged users to donate to a fund for “modernising the Indian Army.” The government later confirmed this message was entirely fabricated and part of a larger scam. It emphasised that no such appeal had been issued by the Ministry, and urged citizens to verify such claims through official government portals before responding.

Tech Industry and Donation-Related Scams

Large corporations are also falling prey. According to media reports, an American IT company recently terminated around 700 Indian employees after uncovering a donation-related fraud. At least 200 of them were reportedly involved in a scheme linked to Telugu organisations in the US. The scam echoed a similar situation that had previously affected Apple, where Indian employees were fired after being implicated in donation fraud tied to the Telugu Association of North America (TANA). Investigations revealed that employees had made questionable donations to these groups in exchange for benefits such as visa support or employment favours.

Common People Targeted

While organisational scandals grab headlines, ordinary people remain equally or even more vulnerable. In a recent incident, a man lost over ₹1 lakh after clicking on a WhatsApp link asking for donations to a charity. Once he engaged with the link, the fraudsters used social engineering to manipulate him into making repeated transfers under various pretexts, ranging from processing fees to refund-related transactions. Scammers typically rely on the same set of tactics: urgency, emotional appeal, and impersonation of credible platforms.

Cautionary Steps

CyberPeace recommends adopting a cautious and informed approach when making charitable donations, especially online. Here are some key safety measures to follow:

- Verify Before You Donate. Always double-check the legitimacy of donation appeals. Use official government portals or the official websites of charities. Be wary of unfamiliar phone numbers, email addresses, or WhatsApp forwards asking for money.

- Avoid Clicking on Suspicious Links. Never click on links or download attachments from unknown or unverified sources. These could be phishing links or malware designed to steal your data or access your bank accounts.

- Be Sceptical of Urgency. Scammers rely on creating a false sense of urgency to pressure victims into donating quickly. Take the time to evaluate an appeal before responding.

- Use Secure Payment Channels. Donate only through platforms that are secure, trusted, and verified, such as official UPI handles and government-backed portals (like PM CARES or Bharat Kosh).

- Report Suspected Fraud. If you receive suspicious messages or fall victim to a scam, report it to the cybercrime authorities via cybercrime.gov.in or the helpline 1930, or to the local police, as prompt reporting can prevent further fraud.

Conclusion

Charity should never come at the cost of trust and safety. While donating to a good cause is noble, doing it mindfully is essential in today’s scam-prone environment. Always remember: a little caution today can save a lot tomorrow.

References

- https://economictimes.indiatimes.com/news/defence/misleading-message-circulating-on-whatsapp-related-to-donation-for-armys-modernisation-govt/articleshow/120672806.cms?from=mdr

- https://timesofindia.indiatimes.com/technology/tech-news/american-company-sacks-700-of-these-200-in-donation-scam-related-to-telugu-organisations-similar-to-firing-at-apple/articleshow/120075189.cms

- https://timesofindia.indiatimes.com/city/hyderabad/apple-fires-some-indians-over-donation-fraud-tana-under-scrutiny/articleshow/117034457.cms

- https://www.indiatoday.in/technology/news/story/man-gets-link-for-donation-and-charity-on-whatsapp-loses-over-rs-1-lakh-after-clicking-on-it-2688616-2025-03-04