#FactCheck: Viral AI Video Showing Finance Minister of India endorsing an investment platform offering high returns.

Executive Summary:

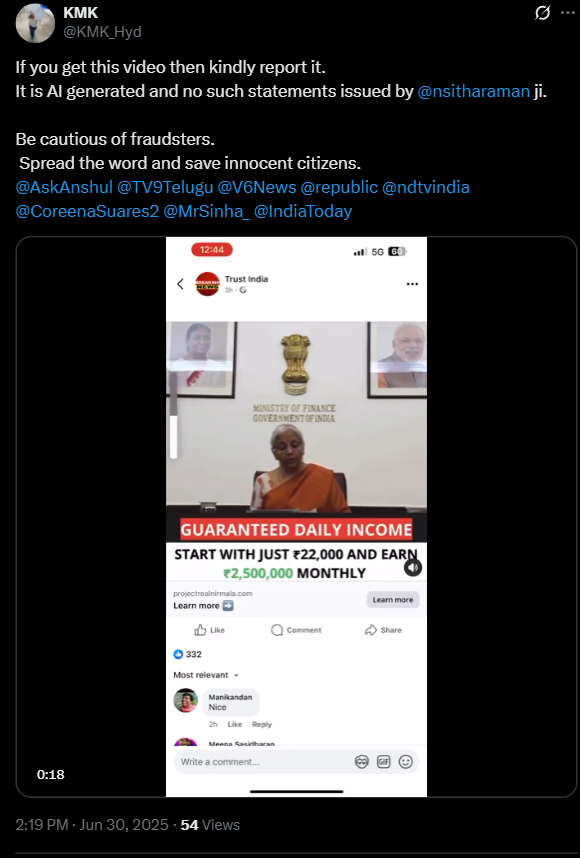

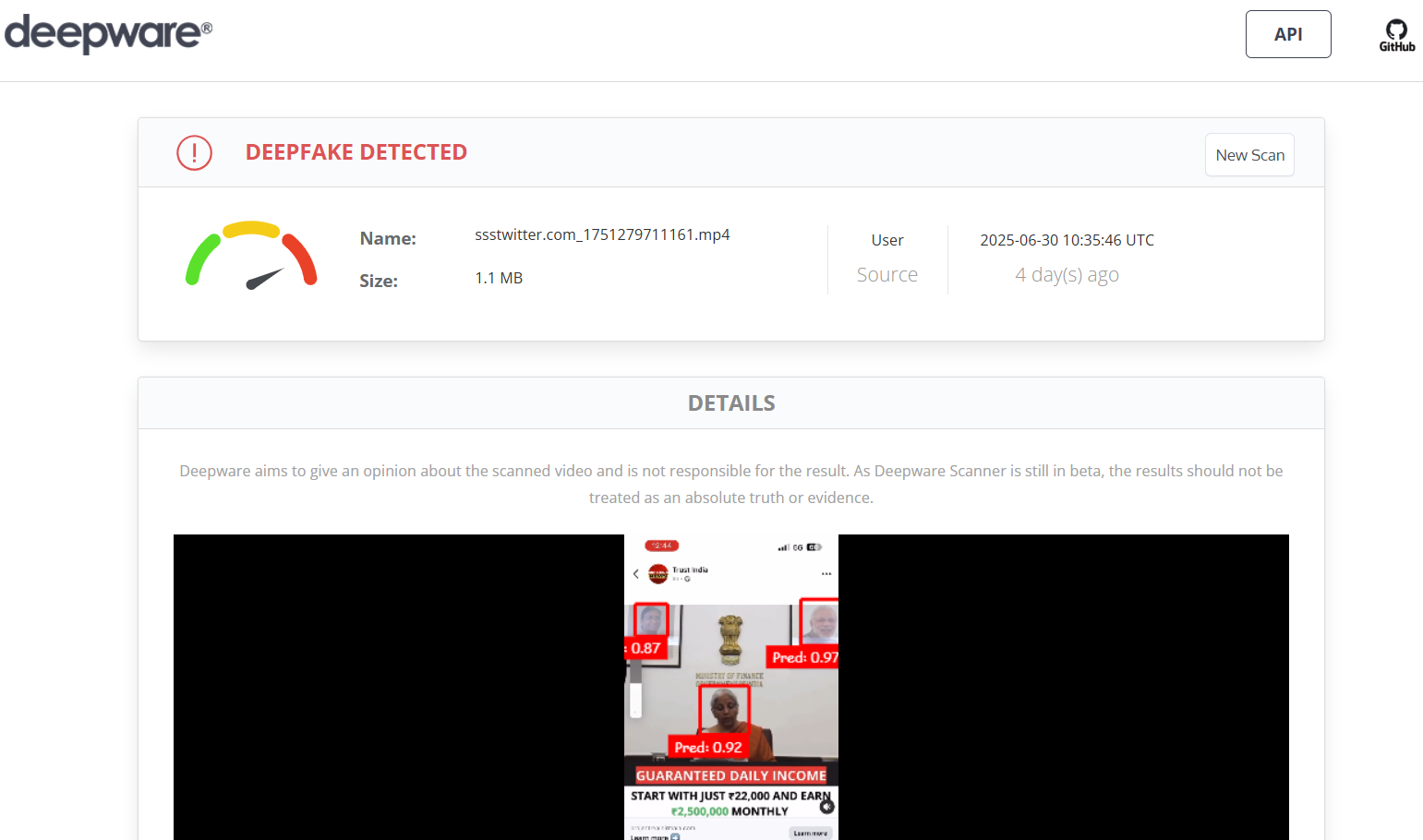

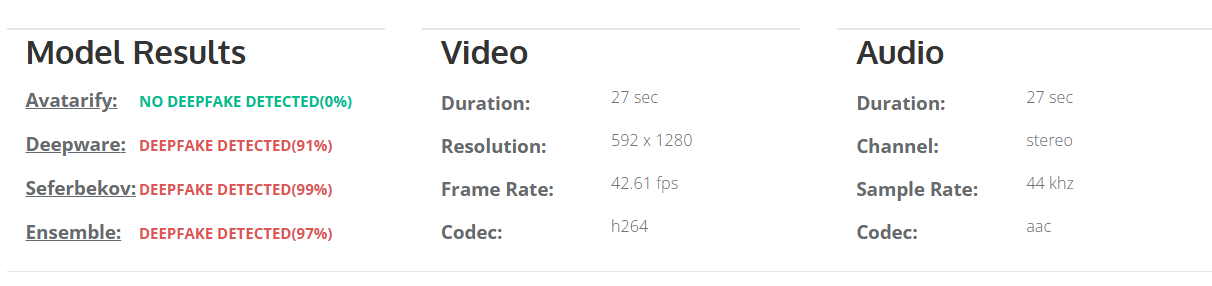

A video circulating on social media falsely claims that India’s Finance Minister, Smt. Nirmala Sitharaman, has endorsed an investment platform promising unusually high returns. Upon investigation, it was confirmed that the video is a deepfake—digitally manipulated using artificial intelligence. The Finance Minister has made no such endorsement through any official platform. This incident highlights a concerning trend of scammers using AI-generated videos to create misleading and seemingly legitimate advertisements to deceive the public.

Claim:

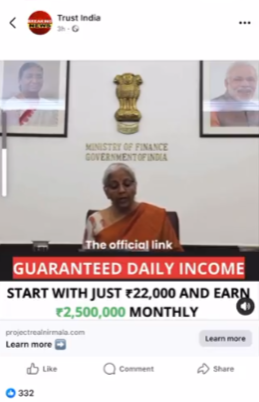

A viral video falsely claims that the Finance Minister of India, Smt. Nirmala Sitharaman, is endorsing an investment platform, promoting it as a secure and highly profitable scheme for Indian citizens. The video alleges that individuals can start with an investment of ₹22,000 and earn up to ₹25 lakh per month as guaranteed daily income.
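To put the advertised figures in perspective, the short sketch below works out the return implied by the claim. It uses only the two figures quoted in the viral video (₹22,000 invested, up to ₹25 lakh per month) and the standard conversion of 1 lakh to 100,000; the function and variable names are illustrative.

```python
LAKH = 100_000  # 1 lakh = 100,000


def implied_monthly_multiple(investment_inr: float, monthly_payout_inr: float) -> float:
    """Return how many times the initial investment is paid out each month."""
    if investment_inr <= 0:
        raise ValueError("investment must be positive")
    return monthly_payout_inr / investment_inr


investment = 22_000          # figure quoted in the viral video
monthly_payout = 25 * LAKH   # "up to Rs 25 lakh per month" as claimed

multiple = implied_monthly_multiple(investment, monthly_payout)
print(f"Implied payout: {multiple:.1f}x the investment every month")
print(f"Implied monthly return: {(multiple - 1) * 100:,.0f}%")
```

The claimed payout works out to more than a hundred times the initial investment every month. A promised return on that scale is a strong warning sign on its own, before any video analysis.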

Fact check:

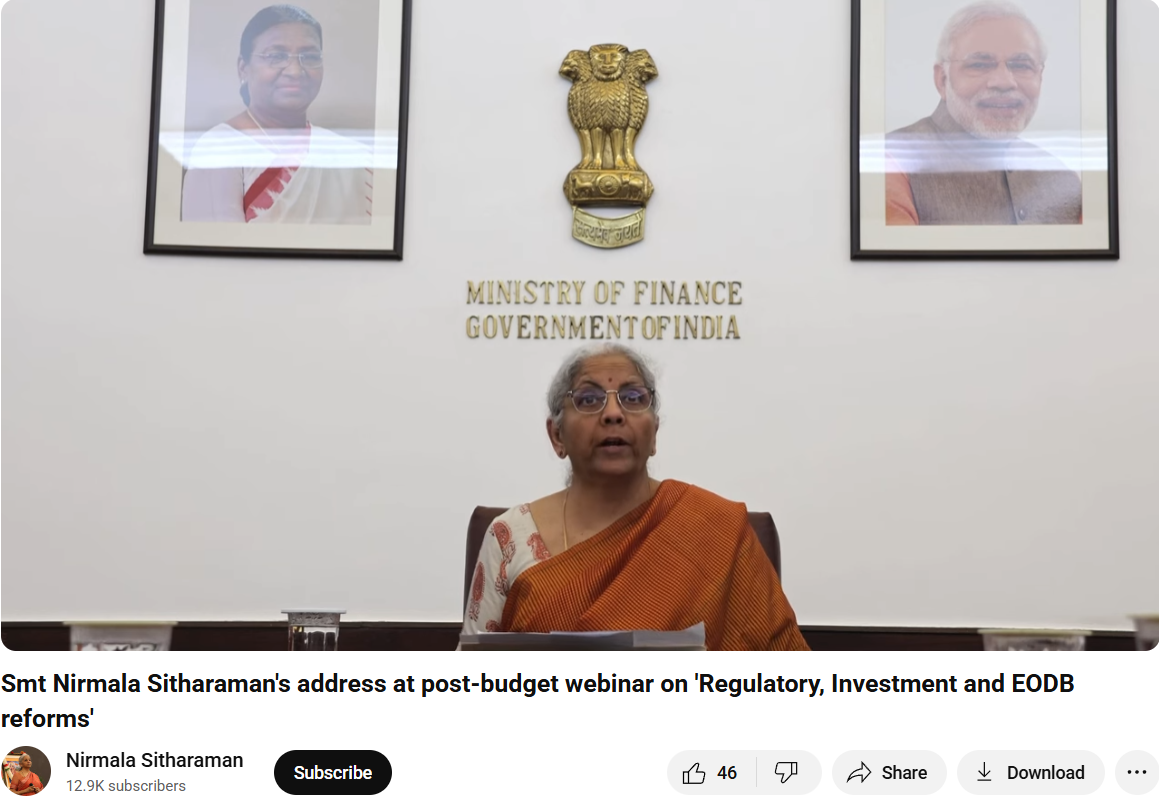

A reverse image search on key frames from the viral video led us to the original YouTube clip, in which the Finance Minister of India delivers a speech at a webinar on 'Regulatory, Investment and EODB reforms'. Nowhere in the original video does she mention the investment scheme promoted in the viral clip.

The manipulated video adds an AI-generated voice and scripted text to the original footage to make it appear that she has approved an investment platform.

Deepfakes can look fairly realistic in their facial movements. On close inspection, however, this video shows mismatched lip-syncing and unnatural visual transitions, which supports the finding that it was manipulated.

In addition, no legitimate government website or credible news outlet has reported any such endorsement. The video is fabricated misinformation that attempts to scam viewers by exploiting the image of a trusted public figure.

Conclusion:

The viral video showing the Finance Minister of India, Smt. Nirmala Sitharaman, promoting an investment platform is fake and AI-generated. This is a clear case of deepfake misuse aimed at misleading the public and luring individuals into fraudulent schemes. Citizens are advised to exercise caution, verify any such claims through official government channels, and refrain from clicking on unknown investment links circulating on social media.

- Claim: Nirmala Sitharaman promoted an investment app in a viral video.

- Claimed On: Social Media

- Fact Check: False and Misleading

Related Blogs

Introduction

The G7 nations, a group of the most powerful economies, have recently turned their attention to the critical issues of cybercrime and Artificial Intelligence (AI). The G7 summit has provided an essential platform for discussing the threats and crimes arising from AI and from weak cybersecurity. These nations have united to share their expertise, resources, diplomatic efforts and strategies to fight cybercrime. In this blog, we investigate the recent developments and initiatives undertaken by the G7 nations, exploring their joint efforts to combat cybercrime and navigate the evolving landscape of artificial intelligence. We also explore new and emerging trends in cybersecurity, providing insights into ongoing challenges and the innovative approaches adopted by the G7 nations and the wider international community.

G7 Nations and AI

Each of these nations has launched cooperative efforts and measures to combat cybercrime. They intend to increase their collective capacity to detect, prevent, and respond to cyber attacks by exchanging intelligence, best practices, and experience. The G7 nations are attempting to develop a strong cybersecurity architecture capable of countering increasingly complex cyber attacks through information-sharing platforms, collaborative training programs, and joint exercises.

The G7 Summit provided an important forum for in-depth debates on the role of artificial intelligence (AI) in cybersecurity. Recognising AI’s transformational potential, the G7 nations have held extensive discussions to examine its advantages and address the related concerns, with the aim of ensuring responsible research and use. The nations also recognise the ethical, legal, and security considerations of deploying AI in cybersecurity.

Worldwide Rise of Ransomware

High-profile ransomware attacks have drawn global attention, emphasising the need to combat this expanding threat. These attacks have harmed organisations of all sizes and industries, leading to data breaches, operational outages, and, in some circumstances, the loss of sensitive information. The implications of such attacks go beyond financial loss, frequently resulting in reputational harm, legal penalties, and service delays that affect consumers, clients, and the public. Cybercriminals have adopted a multi-faceted approach to ransomware attacks, combining spear-phishing, exploit kits, and supply chain hacks to obtain unauthorised access to networks and spread the ransomware. This degree of expertise and flexibility presents a substantial challenge to organisations attempting to protect against such attacks.

Focusing On AI and Upcoming Threats

During the G7 summit, one of the key topics for discussion is the role of Artificial Intelligence (AI) in shaping the future. Leaders and policymakers discuss the benefits and dangers of AI adoption in cybersecurity. Recognising AI’s revolutionary capacity, they investigate its potential to improve defence capabilities, predict future threats, and secure vital infrastructure. Furthermore, the G7 countries emphasise the necessity of international collaboration in reaping the advantages of AI while reducing the hazards. They recognise that cyber dangers transcend national borders and must be combated together. Collaboration in areas such as exchanging threat intelligence, developing shared standards, and promoting best practices is emphasised to boost global cybersecurity defences. The G7 conference hopes to set a global agenda that encourages responsible AI research and deployment by emphasising the role of AI in cybersecurity. The summit’s sessions present a path for maximising AI’s promise while tackling the problems and dangers connected with its implementation.

As the G7 countries navigate the complicated convergence of AI and cybersecurity, their emphasis on collaboration, responsible practices, and innovation lays the groundwork for a joint international response to growing cyber threats. By collaboratively leveraging the potential of AI, the G7 countries aspire to establish robust and secure digital environments that defend essential infrastructure, protect individuals’ privacy, and encourage trust in the digital sphere.

Promoting Responsible AI development and usage

The G7 conference will focus on developing frameworks that encourage ethical AI development. This includes fostering transparency, accountability, and fairness in AI systems. The emphasis is on eliminating biases in data and algorithms and ensuring that AI technologies are inclusive and do not perpetuate or magnify existing societal imbalances.

Furthermore, the G7 nations recognise the necessity of privacy protection in the context of AI. Because AI systems frequently rely on massive volumes of personal data, summit speakers emphasise the importance of stringent data privacy legislation and protections. Discussions centre around finding the correct balance between using data for AI innovation, respecting individuals’ privacy rights, and protecting data security. In addition to responsible development, the G7 meeting emphasises the importance of responsible AI use. Leaders emphasise the importance of transparent and responsible AI governance frameworks, which may include regulatory measures and standards to ensure AI technology’s ethical and legal application. The goal is to defend individuals’ rights, limit the potential exploitation of AI, and retain public trust in AI-driven solutions.

The G7 nations support collaboration among governments, businesses, academia, and civil society to foster responsible AI development and use. They stress the significance of sharing best practices, exchanging information, and developing international standards to promote ethical AI concepts and responsible practices across borders. By fostering responsible AI development and usage, the G7 nations hope to shape the global AI environment in a way that prioritises human values, protects individual rights, and develops trust in AI technology. They work together to ensure that AI is a force for good while reducing risks and resolving social issues related to its implementation.

Challenges on the way

While the G7 countries are committed to combating cybercrime and promoting responsible AI development, they confront several hurdles in their efforts. Some of them are:

A Rapidly Changing Cyber Threat Environment: Cybercriminals’ strategies and methods are always developing, as is the nature of cyber threats. The G7 countries must keep up with new threats and ensure their cybersecurity safeguards remain effective and adaptable.

Cross-Border Coordination: Cybercrime knows no borders, and successful cybersecurity necessitates international collaboration. However, coordinating activities among nations with different legal structures, regulatory environments, and agendas can be difficult. Harmonising rules, exchanging information, and building trust across states are crucial for effective collaboration.

Talent Shortage and Skills Gap: Cybersecurity and AI require highly qualified personnel. However, skilled individuals in these fields are in short supply. The G7 nations must attract and nurture talent, provide training programs, and support research and innovation to narrow the skills gap.

Keeping Up with Technological Advancements: Technology changes at a rapid rate, and cyber attacks are becoming more complex. The G7 nations must ensure that their laws, regulations, and cybersecurity plans stay relevant and adaptive to keep up with emerging technologies such as AI, quantum computing, and IoT, which may both empower and challenge cybersecurity efforts.

Conclusion

To combat cyber threats effectively, support responsible AI development, and establish a robust cybersecurity ecosystem, the G7 nations must constantly analyse and adjust their strategy. By aggressively tackling these concerns, the G7 nations can improve their collective cybersecurity capabilities and defend their citizens’ and global stakeholders’ digital infrastructure and interests.

Introduction

The insurance industry is a target for cybercriminals due to the sensitive nature of the information it holds. This makes it essential for insurance companies to have robust cybersecurity measures to protect their data and customers’ personal information.

Cyber fraud in India’s insurance industry is increasing. It is reported that the Indian insurance sector has witnessed a surge in cyber-attacks, with several instances of data breaches, identity thefts, and financial fraud being reported. These cybercrimes not only pose a significant threat to the financial stability of the insurance industry but also to the privacy and security of policyholders.

Cyber Frauds in the Insurance Industry

The insurance industry in India has been the target of increasing cyber fraud in recent years. With the growing trend of digital transformation, insurance companies have become increasingly vulnerable to cyber-attacks. Cyber frauds in the insurance industry are initiated by hackers who use various techniques such as phishing, malware, ransomware, and social engineering to gain unauthorised access to policyholders’ personal data and sensitive information.

Kinds of cyber frauds in the insurance industry

It is essential for insurers and policyholders alike to be aware of these kinds of cyber-attacks on insurance companies in today’s digital age. Staying educated about these threats can help prevent them from happening in the future.

Identity theft- One common type of cyber fraud that occurs in the insurance industry is identity theft. In this type of fraud, criminals steal personal information such as name, address, date of birth and social security numbers through phishing emails or fraudulent websites. They then use this information to open fraudulent policies or access existing ones.

Payment fraud- Another type of cyber fraud that is on the rise is payment fraud. In this type of fraud, hackers intercept electronic payments made by policyholders or agents using fake bank accounts or compromised payment gateways. The money is then siphoned into untraceable accounts, making it difficult for law enforcement agencies to identify and arrest the perpetrators.

Phishing attacks- Fraudsters pose as company officials and send emails to policyholders requesting their account details. Unsuspecting customers fall for the scam and share their sensitive information, which is then used to access their accounts and steal funds.

Hacking- Hackers breach the company’s system to gain access to policyholder data. They steal personal records, including names, addresses, phone numbers, social security numbers, and financial information, which they later sell on the dark web.

Fake policies scam- Fraudsters create fake policies using stolen identities and collect premiums from innocent customers. The insurer then voids these policies because of the fraudulent activity, leaving those people without valid coverage when they need it most. The victims suffer significant financial losses as a result.

Fake Insurance Websites- Fraudsters create deceptive websites that imitate well-known insurance companies. Unsuspecting individuals who provide their personal details on these sites are exposed to identity theft or financial losses.

Prevention of Cyber Frauds in the Insurance Industry- Best practices to follow

Prevention is better than cure, which also holds true in the case of cyber fraud in the insurance industry. The industry must take proactive steps to prevent such frauds from occurring in the first place. One of the most effective ways to do so is by investing in cybersecurity measures that are specifically designed for the insurance sector.

Insurance companies must conduct regular employee training programs on cybersecurity best practices. This includes educating employees on how to identify and avoid phishing emails, create strong passwords, and recognise potential cyber threats. Companies should also establish a reporting mechanism for employees to report suspicious activity or incidents immediately.

Having proper access controls in place is also necessary. This means limiting access to sensitive data only to those employees who need it, implementing two-factor authentication, and regularly monitoring user activity logs. Regular audits can also provide an extra layer of protection against potential threats by identifying vulnerabilities that may have been overlooked during routine security checks.
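One common way to implement the second factor mentioned above is a time-based one-time password (TOTP), as specified in RFC 6238. The sketch below is a minimal illustration using only the Python standard library. It assumes SHA-1, a 30-second time step and 6-digit codes, and the helper name `verify_second_factor` is hypothetical. A production system would also need secure secret storage, rate limiting and tolerance for clock drift.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226)."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation offset
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at_time: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238) using SHA-1."""
    now = time.time() if at_time is None else at_time
    return hotp(secret, int(now // step), digits)


def verify_second_factor(secret: bytes, submitted: str,
                         at_time: Optional[float] = None) -> bool:
    """Compare the submitted code with the expected one in constant time."""
    expected = totp(secret, at_time)
    return hmac.compare_digest(expected.encode("utf-8"), submitted.encode("utf-8"))


# RFC 6238 test vector: with this shared secret, the 8-digit SHA-1 code
# at t = 59 seconds is 94287082.
rfc_secret = b"12345678901234567890"
print(totp(rfc_secret, at_time=59, digits=8))
```

Because the code depends on both the shared secret and the current time, a password stolen through phishing is not enough on its own to log in.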

Another essential step is encrypting all data transmitted between different systems and devices. Encryption scrambles data into unreadable codes that can only be deciphered using a decryption key, making it difficult for hackers to intercept or steal information in transit.
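The idea that ciphertext is unreadable without the key can be shown with a toy one-time pad, sketched below with the Python standard library. This is an illustration only, not what insurers deploy: real systems rely on vetted protocols such as TLS. The message text and function names are made up for the example.

```python
import secrets


def generate_key(length: int) -> bytes:
    """Create a random key exactly as long as the message (one-time pad)."""
    return secrets.token_bytes(length)


def xor_bytes(data: bytes, key: bytes) -> bytes:
    """XOR each data byte with the matching key byte.

    The same operation both encrypts and decrypts.
    """
    if len(key) != len(data):
        raise ValueError("key must be exactly as long as the data")
    return bytes(d ^ k for d, k in zip(data, key))


# Hypothetical message, used only for illustration.
message = "Policy 12345: premium received".encode("utf-8")
key = generate_key(len(message))

ciphertext = xor_bytes(message, key)    # unreadable without the key
recovered = xor_bytes(ciphertext, key)  # the same key restores the message

print(ciphertext.hex())
print(recovered.decode("utf-8"))
```

An eavesdropper who intercepts only `ciphertext` sees random-looking bytes. The original message can be recovered only with `key`, which must never be reused for another message.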

Legal Framework for Cyber Frauds in the Insurance Industry

The legal framework for cyber fraud in the insurance industry is critical to preventing such crimes. The Insurance Regulatory and Development Authority of India (IRDAI) has issued guidelines for insurers to establish a cybersecurity framework. The guidelines require insurers to conduct regular risk assessments, implement security measures, and ensure compliance with data privacy laws.

The Information Technology Act, 2000 is another significant piece of legislation dealing with cyber fraud in India. The Act defines offences such as unauthorised access to a computer system, hacking, and tampering with data. It also provides for stringent penalties and imprisonment for those found guilty of such offences.

The IRDAI’s guidelines provide insurers with a roadmap to establish robust cybersecurity measures to help prevent cyber fraud in the insurance industry. Stringent implementation of these guidelines will go a long way in safeguarding sensitive customer information from falling into the wrong hands.

Best Practices for Insurers and Policyholders

Insurers:

Implementing Strong Authentication: Use multi-factor authentication and secure login processes to safeguard customer accounts and prevent unauthorised access.

Regular Employee Training: Conduct cybersecurity awareness programs to educate employees about the latest threats and preventive measures.

Investing in Advanced Technologies: Use robust cybersecurity tools and systems to promptly detect and mitigate potential cyber threats.

Policyholders:

Vigilance and Awareness: Policyholders must stay vigilant while sharing personal information online and verify the authenticity of insurance websites and communication channels.

Regular Updates and Patches: Keep devices and software up to date to minimise vulnerabilities that cybercriminals can exploit.

Secure Online Practices: Use strong and unique passwords, avoid sharing sensitive information on unsecured networks, and exercise caution when clicking on suspicious links or attachments.
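As an illustration of the "strong and unique passwords" advice, the sketch below generates a random password with Python's `secrets` module. The 12-character minimum and the requirement for four character classes are assumptions chosen for the example, not rules taken from any insurer or regulator.

```python
import secrets
import string

ALPHABET = string.ascii_letters + string.digits + string.punctuation


def generate_password(length: int = 16) -> str:
    """Return a random password containing lower, upper, digit and symbol characters."""
    if length < 12:  # assumed minimum for this example
        raise ValueError("use at least 12 characters")
    while True:
        candidate = "".join(secrets.choice(ALPHABET) for _ in range(length))
        if (any(c.islower() for c in candidate)
                and any(c.isupper() for c in candidate)
                and any(c.isdigit() for c in candidate)
                and any(c in string.punctuation for c in candidate)):
            return candidate


print(generate_password())
```

Generating a separate password for every account, ideally stored in a password manager, means that a breach at one service does not expose the others.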

Conclusion

As the Indian insurance industry embraces digitisation, the risk of cyber scams and data breaches becomes a significant concern. Insurers and policyholders must collaborate to ensure robust cybersecurity measures are in place to protect sensitive information and financial interests.

It is essential for insurance companies to invest in robust cybersecurity measures that can detect and prevent fraud attempts. Additionally, educating employees on the dangers of cyber fraud and implementing strict compliance measures can go a long way in mitigating risks. With these efforts, the insurance industry can continue to provide trustworthy and reliable services to its customers while protecting against cyber threats. As technology continues to evolve, it is imperative that the insurance industry adapts accordingly and remains vigilant against emerging threats.

Executive Summary:

A video has gone viral that claims to show the Hon'ble Minister of Home Affairs, Shri Amit Shah, stating that the BJP-led Central Government intends to end quotas for scheduled castes (SCs), scheduled tribes (STs), and other backward classes (OBCs). On further investigation, the claim turned out to be false. We found the original clip from an official source, in which Shah, delivering a speech in Telangana, spoke about religion-based reservations, with specific reference to Muslim reservations. The viral video is digitally altered, and the claim is therefore false.

Claims:

A video that has gone viral on social media platforms allegedly shows the Hon'ble Minister of Home Affairs, Shri Amit Shah, saying that the reservation quotas for scheduled castes (SCs), scheduled tribes (STs) and other backward classes (OBCs) will be terminated if the BJP government is formed again.

English Translation: If the BJP government is formed again we will cancel ST, SC reservations: Hon'ble Minister of Home Affairs, Shri Amit Shah

Fact Check:

When we received the video, we closely observed the name of the news channel shown in it, which was V6 News. We divided the video into keyframes and ran a reverse image search on them. For one of the keyframes, we found a similar video with the caption “Union Minister Amit Shah Comments Muslim Reservations | V6 Weekend Teenmaar”, uploaded by V6 News Telugu’s verified YouTube channel on April 23, 2023. Taking a cue from this, we also ran keyword searches to find other relevant sources. At the 2:38 timestamp of that video, the Hon'ble Minister of Home Affairs, Shri Amit Shah, talks about religion-based reservations, calling the Muslim reservation ‘unconstitutional’ and saying that the Government will remove it.

He goes on to say that the SCs, STs, and OBCs are fully entitled to their quotas, but that the Muslim reservation is not valid.

During the reverse image search, we found many other videos uploaded by media outlets such as ANI, Hindustan Times and The Economic Times about ending Muslim reservations in Telangana state, but we found no evidence supporting the viral claim about removing the SC, ST and OBC quota system. After further analysis for signs of alteration, we found that the viral video had been edited and that the original statement was different. Hence, the claim is misleading and false.
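The keyframe comparison used in this fact check can be sketched with a simple perceptual hash. The article does not say which tools were used, and commercial reverse image search engines are proprietary, so the code below only illustrates the general idea of matching a viral frame to a source frame. It assumes frames have already been extracted, converted to grayscale and downscaled to the same small grid; all names and the distance threshold are hypothetical.

```python
from typing import List

Frame = List[List[int]]  # rows of grayscale pixel values (0-255)


def average_hash(frame: Frame) -> int:
    """Build a bit string: 1 where a pixel is brighter than the frame's mean."""
    pixels = [p for row in frame for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Count the bit positions in which two hashes differ."""
    return bin(a ^ b).count("1")


def looks_like_same_scene(a: Frame, b: Frame, max_distance: int = 2) -> bool:
    """Treat two equally sized frames as a match if their hashes nearly agree."""
    return hamming_distance(average_hash(a), average_hash(b)) <= max_distance


# Tiny 4x4 stand-ins for downscaled keyframes.
original = [[10, 200, 10, 200], [200, 10, 200, 10],
            [10, 200, 10, 200], [200, 10, 200, 10]]
reuploaded = [[p + 5 for p in row] for row in original]  # slight brightness shift
unrelated = [[10, 10, 200, 200], [10, 10, 200, 200],
             [200, 200, 10, 10], [200, 200, 10, 10]]

print(looks_like_same_scene(original, reuploaded))
print(looks_like_same_scene(original, unrelated))
```

A frame match only shows where the footage came from. Establishing that the audio or wording was changed still requires comparing the viral clip against the original statement, as done above.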

Conclusion:

The claim that the Hon'ble Minister of Home Affairs, Shri Amit Shah, announced the removal of the SC, ST and OBC reservation quotas if the BJP government is formed again in the ongoing Lok Sabha election is debunked. After careful analysis, we found that the video was edited to misrepresent his actual statement. The original footage, published on the V6 News Telugu YouTube channel, shows him speaking about religion-based reservations, particularly Muslim reservations in Telangana. He did not mention ending SC, ST, and OBC reservations.

- Claim: A viral video shows the Hon'ble Minister of Home Affairs, Shri Amit Shah, asserting that the BJP government will soon remove reservation quotas for scheduled castes (SCs), scheduled tribes (STs), and other backward classes (OBCs).

- Claimed on: X (formerly known as Twitter)

- Fact Check: Fake & Misleading