#FactCheck- No, Iran’s Supreme Leader Mojtaba Khamenei Is Not Dead—Viral Video Debunked

Executive Summary

A video circulating on social media claims that Iran’s new Supreme Leader Mojtaba Khamenei has passed away, with users attributing the claim to American sources. However, research by CyberPeace found the claim to be false. Our research confirms that Mojtaba Khamenei is alive and in good health.

Claim

A Facebook user shared the viral video, claiming that Iran’s new Supreme Leader Mojtaba Khamenei had died.

Fact Check

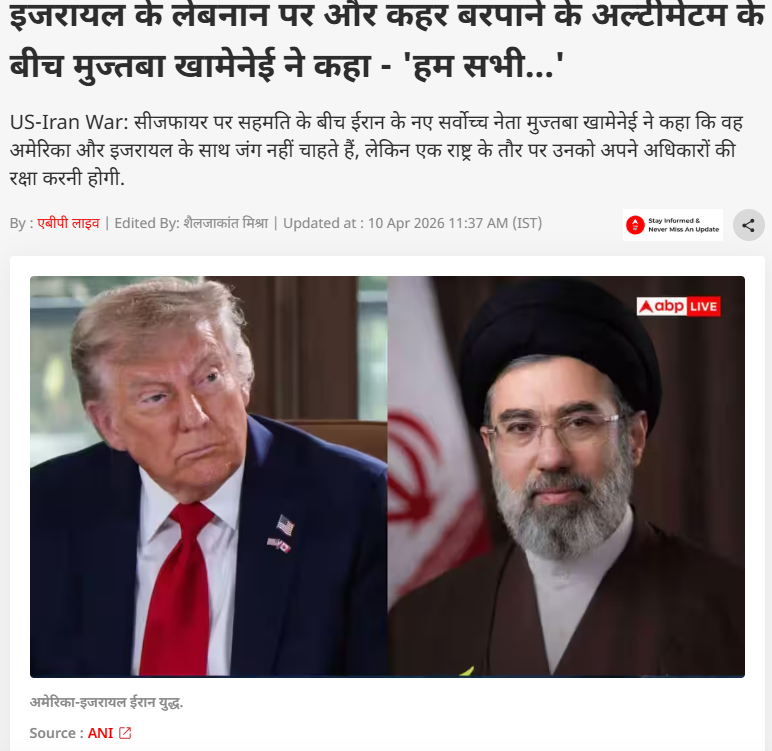

To verify the claim, we conducted keyword searches on Google but found no credible media reports confirming his death. Further research led us to a report published on April 10, 2026, by ABP News. According to the report, amid discussions around a ceasefire, Mojtaba Khamenei issued a statement saying that Iran does not seek war with the United States or Israel, but as a nation, it must defend its rights.

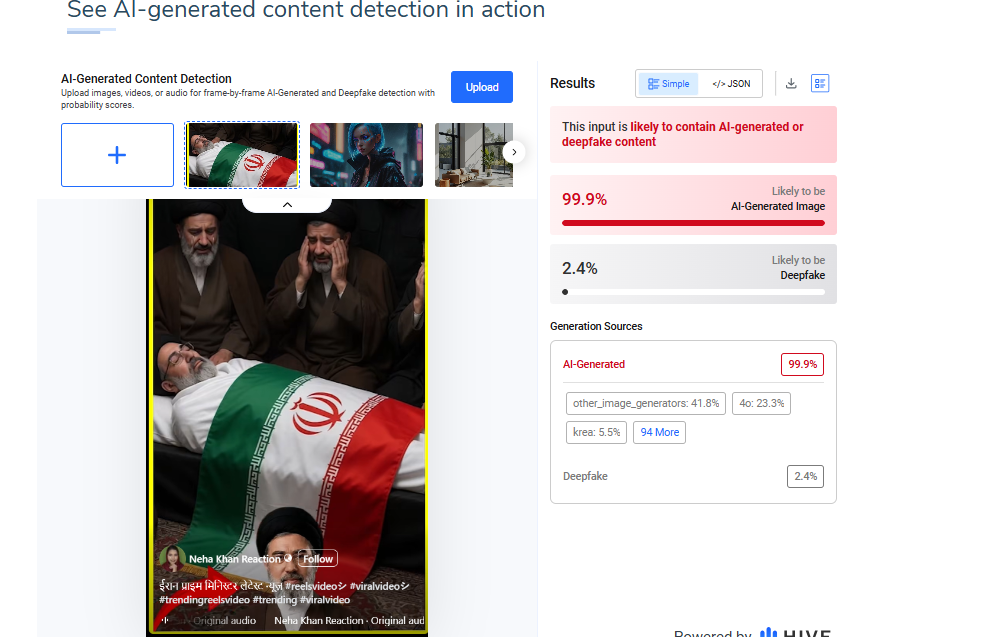

Additionally, the image used in the viral video was analyzed using the AI detection tool HIVE Moderation. The results indicated a 99% probability that the image is AI-generated.

Conclusion

The viral claim is false and misleading. There is no credible evidence to suggest that Mojtaba Khamenei has died. On the contrary, recent verified reports confirm that he is alive and has even issued public statements on ongoing geopolitical developments. The widespread circulation of this claim appears to be driven by misinformation, amplified through social media without verification. The use of AI-generated visuals further adds to the confusion, making the content appear authentic at first glance.

Related Blogs

Introduction

Over the past few months, cybercriminals have upped the ante with increasingly sophisticated methods targeting unsuspecting users. One such new scam targets WhatsApp users in India and around the world. A seemingly harmless picture message is the entry point for stealing money and data: downloading such an image via WhatsApp can unknowingly install malware on your smartphone. This malicious software can compromise your banking applications, steal passwords, and expose your personal identity. With such malware-laced messages now making headlines, netizens are advised to exercise extreme caution when handling media received on messaging platforms.

How Does the WhatsApp Photo Scam Work?

Cybercriminals have begun embedding malicious code in images shared on WhatsApp. Here is how the attack typically works:

- The user receives a WhatsApp message from an unknown number with an image.

- The image may appear harmless—a greeting, meme, or holiday card—but it's packed with hidden malware.

- When the user taps to download the image, the malware is silently installed on the phone.

- Once installed, the malware can capture keystrokes, read messages, access banking applications, steal credentials, and even hijack device functionality.

- Advanced versions can reportedly bypass two-factor authentication (2FA) and make unauthorised transactions.
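One narrow defensive check related to the steps above can be sketched in Python. This is a minimal illustration, not a malware scanner: it only tests whether a file's leading bytes match the image type its name claims, which can flag an executable or archive disguised with an image extension. It will not detect a payload hidden inside a genuinely valid image. The function name `looks_like_claimed_image` and the `SIGNATURES` table are hypothetical names introduced for this sketch; the byte signatures themselves are the standard file headers for JPEG, PNG, and GIF.

```python
from pathlib import Path

# Standard leading bytes ("magic numbers") for common image formats.
# The table name and its layout are assumptions made for this sketch.
SIGNATURES = {
    ".jpg": (b"\xff\xd8\xff",),
    ".jpeg": (b"\xff\xd8\xff",),
    ".png": (b"\x89PNG\r\n\x1a\n",),
    ".gif": (b"GIF87a", b"GIF89a"),
}


def looks_like_claimed_image(filename: str, data: bytes) -> bool:
    """Return True if `data` starts with a signature matching the file's extension.

    Unknown extensions return False, so anything that is not a
    recognised image type is treated as suspicious by default.
    """
    suffix = Path(filename).suffix.lower()
    signatures = SIGNATURES.get(suffix)
    if signatures is None:
        return False
    return any(data.startswith(sig) for sig in signatures)


if __name__ == "__main__":
    # A real PNG header passes; Windows executable bytes named .jpg do not.
    print(looks_like_claimed_image("card.png", b"\x89PNG\r\n\x1a\n\x00\x00"))
    print(looks_like_claimed_image("meme.jpg", b"MZ\x90\x00"))
```

A mismatch is a reason to delete the file rather than open it. A match does not prove the file is safe, so the precautions described in this article still apply.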

Who Is Being Targeted?

This scam targets both Android and iPhone users, with a focus on vulnerable groups like senior citizens, busy workers during peak seasons, and members of WhatsApp groups flooded with forwarded messages. Experts warn that a single careless click is enough to compromise an entire device.

What Can the Malware Do?

Once installed, the malware gives attackers an alarming level of access. They can:

- Track user activity via keylogging or screen capture.

- Pilfer banking credentials and initiate fund transfers automatically.

- Obtain SMS or app-based 2FA codes, evading security layers.

- Clone identity information, such as Aadhaar details, digital wallets, and email access.

- Control device operations, including the camera and microphone.

This level of intrusion can result in not just financial loss but long-term digital impersonation or blackmail.

Safety Measures for WhatsApp Users

- Never Download Media from Suspicious Numbers

Do not download any files or pictures, even if the content appears familiar, unless you trust the source. Share this advice with family members, particularly older relatives.

- Turn off Auto-Download in WhatsApp Settings

Navigate to Settings > Storage and Data > Media Auto-Download. Switch off auto-download for mobile data, Wi-Fi, and roaming.

- Install and Update Mobile Security Apps

Ensure your phone has a reputable antivirus or mobile security app installed, and keep it up to date.

- Block and Report Potential Scammers

WhatsApp makes it easy to block and report senders. Reporting alerts the platform and helps protect other users as well.

- Educate Your Community

Share your knowledge of cyber hygiene with family, friends, and colleagues. Many people fall victim simply because they aren't aware of the risks. Staying informed and spreading the word can make a big difference.

Advisories and Response

The Indian Cybercrime Coordination Centre (I4C) and state cyber cells have released several alerts on increasing fraud via messaging platforms. Law enforcement agencies are appealing to the public not only to be vigilant but also to report any incident at once through the National Cybercrime Reporting Portal (cybercrime.gov.in).

Conclusion

The WhatsApp photo scam is a stark reminder that not all dangers come with a warning. A picture can now be a Trojan horse, propagating silently from device to device and draining personal funds. Do not engage with unsolicited media, and keep your privacy and security settings up to date. Cybercriminals thrive on neglect and ignorance, but through digital hygiene and vigilance, we can fight back against these emerging threats.

Introduction

The world has been riding a wave of technological advancement and innovation for the past decade, and much of it comes down to one device: the mobile phone. For mobile users, the primary choices of operating system are Android and iOS. Android, first released commercially by Google in 2008, is supported by most brands, including OnePlus, Mi, OPPO, Vivo, and Motorola, and is one of the most widely used operating systems. iOS was developed by Apple and introduced with its first phone, the iPhone, in 2007. Both operating systems emerged when mobile phone penetration was still low globally, so the scope for expansion and advancement was always in their favor.

The Evolution

iOS

iOS has seen many changes since the iPhone debuted in 2007. The current version is iOS 16. Over successive releases, Apple has introduced features such as the App Store, Touch ID and Face ID, Apple Music, Podcasts, augmented reality, and contact exposure notifications, many of which later became features of Android phones as well. Apple is one of the oldest tech and gadget developers in the world, and most of its devices have received global recognition; as a result, Apple serves a huge global user base.

Android

Android has been famous for naming its versions after sweet treats, such as Pie, Oreo, Nougat, KitKat, and Eclair. From Android 10 onwards, new versions have been denoted by number. The most recent version is Android 13, which is known for its practicality and flexibility. In 2012, Android became the most popular operating system for mobile devices, surpassing Apple’s iOS, and as of 2020, about 75 percent of mobile devices ran Android.

Android vs. iOS

1. USER INTERFACE

One of the most noticeable differences between Android and the iPhone is the user interface. Android devices have a more customizable interface, with options to change the home screen, app icons, and overall theme. The iPhone, on the other hand, has a more uniform interface with less room for customization. Android lets users customize the home screen by adding widgets and changing the layout of app icons, which is useful for people who want quick access to certain functions or information. iOS has historically been more restrictive here: home screen widgets arrived only with iOS 14, and app icons are otherwise organized mainly through folders for easier navigation.

2. APP SELECTION

Another factor to consider when choosing between Android and iOS is app selection. Both platforms offer a wide range of apps, but there are some differences. Android has a larger selection of apps overall, including a larger selection of free apps. However, some popular apps, such as certain music streaming apps and games, may be released first on the iPhone or be available only there. iOS also has a more curated app store, meaning that all apps must go through a review process before being accepted for download. This can result in a higher quality of apps overall, but it can also mean that new apps take longer to become available on the platform.

3. PERFORMANCE

When it comes to performance, both Android and the iPhone have their own strengths and weaknesses. Android devices often have more processing power and RAM, which can make them faster and more capable of handling multiple tasks simultaneously. However, this can also give Android devices shorter battery life than iPhones. iPhones often have less processing power and RAM but are generally more efficient in their use of resources. This can result in longer battery life, but it may also mean that iPhones are slower at handling multiple tasks or running resource-intensive apps.

4. SECURITY

Security is an important consideration for any smartphone user, and Android and the iPhone each have their own measures to protect user data. Android devices are generally seen as less secure than iPhones because of their open nature. Android allows users to install apps from sources other than the Google Play Store, which can increase the risk of downloading malicious apps. However, Android has made improvements in recent years to address this issue, including the introduction of Google Play Protect, which scans apps for malware before they are downloaded. The iPhone, on the other hand, has a more closed ecosystem, with all apps required to go through Apple’s review process before being available for download. This helps reduce the risk of downloading malicious apps, but it can also limit the platform’s flexibility.

Conclusion

The debate over which OS is better has been going on for some time, and it looks set to become more wide-ranging in the times to come. As netizens go deeper into cyberspace, they will become more aware and critical of their uses and demands, which will allow them to opt for the OS that suits them best. Although Android, due to its open nature, is more vulnerable to security threats than iOS, no software is completely secure today. What makes it secure is how it is used; hence netizens and platforms alike need to increase their awareness and knowledge to safeguard themselves and cyberspace as a whole.

A video circulating on social media shows a man allegedly rolling out bhature on his stomach and then frying them in a pan. The clip is being shared with a communal narrative, with users making derogatory remarks while falsely linking the act to a particular community.

CyberPeace Foundation’s research found the viral claim to be false. Our probe confirms that the video is not real but has been created using artificial intelligence (AI) tools and is being shared online with a misleading and communal angle.

Claim

On January 5, 2025, several users shared the viral video on the social media platform X (formerly Twitter). One such post carried a communal caption suggesting that the person shown in the video does not belong to a particular community and making offensive remarks about hygiene and food practices.

- Post link: https://x.com/RightsForMuslim/status/2008035811804291381

- Archive Link: https://archive.ph/lKnX5

Fact Check

A close examination of the viral video revealed several visual inconsistencies and unnatural movements, raising suspicion about its authenticity. These anomalies are commonly associated with AI-generated or digitally manipulated content.

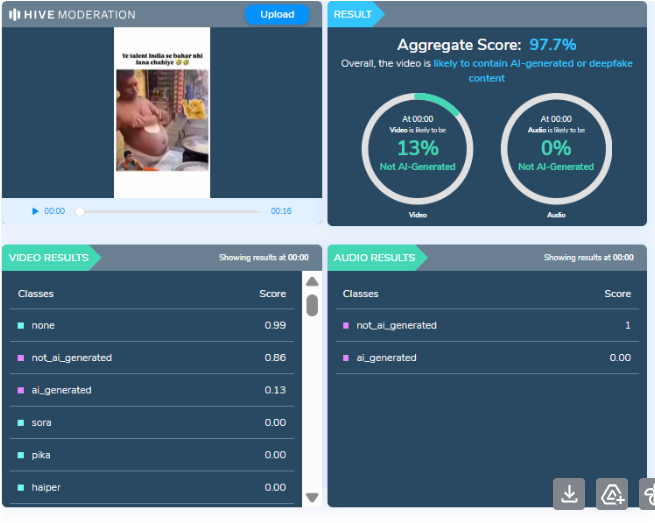

To verify this, the video was analysed using the AI detection tool HIVE Moderation. The tool rated the video as 97 percent likely to be AI-generated, strongly indicating that it was not recorded in real life but synthetically created.

Conclusion

CyberPeace Foundation’s research clearly establishes that the viral video is AI-generated and does not depict a real incident. The clip is being deliberately shared with a false and communal narrative to mislead users and spread misinformation on social media. Users are advised to exercise caution and verify content before sharing such sensational and divisive material online.