#FactCheck: AI-Generated Photo Shared to Claim Boycott of Hindu Sammelan

Executive Summary:

A photo circulating on social media shows a stage with the words “Hindu Sammelan” (Hindu Conference) written in large letters. In front of the stage, most of the chairs are empty, with only a few people seated.

Users sharing the image claim that the event, held under the banner of a “Hindu Sammelan,” was in fact a “Brahmin Sammelan,” and that indigenous communities chose to stay away, resulting in poor attendance.

It is noteworthy that, on the occasion of the centenary year of the Rashtriya Swayamsevak Sangh (RSS), various “Hindu Sammelan” events are being organized across the country. The viral image is being linked to this broader context.

However, research conducted by CyberPeace found the viral claim to be false. Our research revealed that the image being shared on social media is not authentic but AI-generated, and that it is being circulated with a misleading narrative.

Claim

On February 21, 2026, a Facebook user shared the viral image. The original and archived links are provided below:

- https://www.facebook.com/photo?fbid=935049042540479&set=gm.2425972001215469&idorvanity=465387370607285

- https://ghostarchive.org/archive/sxC6d

Fact Check:

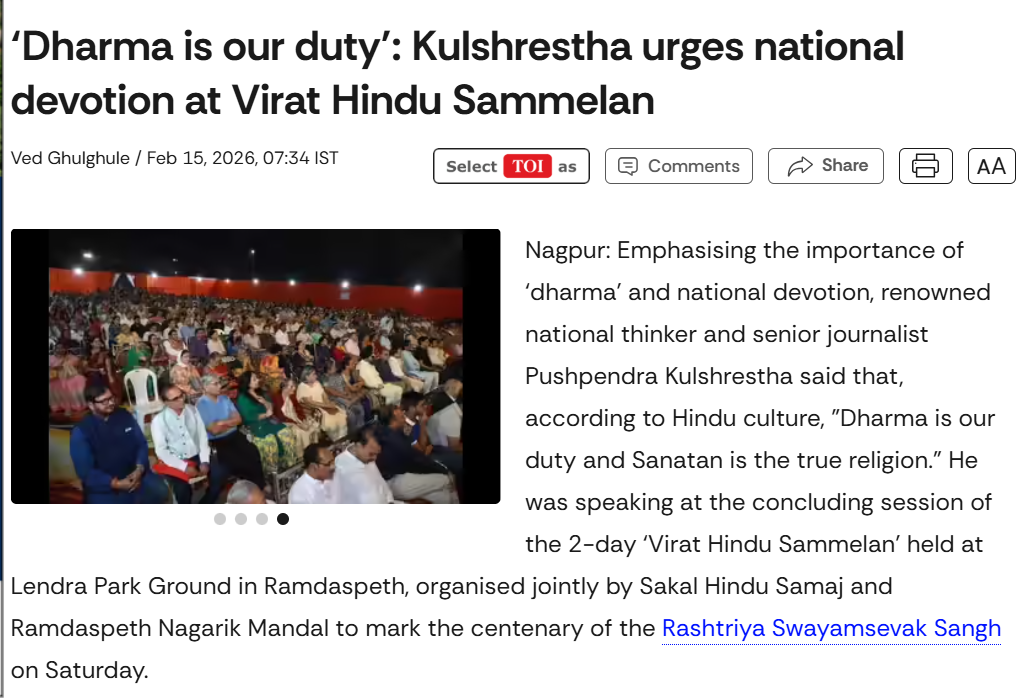

A keyword search on Google confirmed that several “Hindu Sammelan” events have indeed been organized across the country as part of the RSS centenary year. For instance, media reports have covered such events in different cities, including Nagpur.

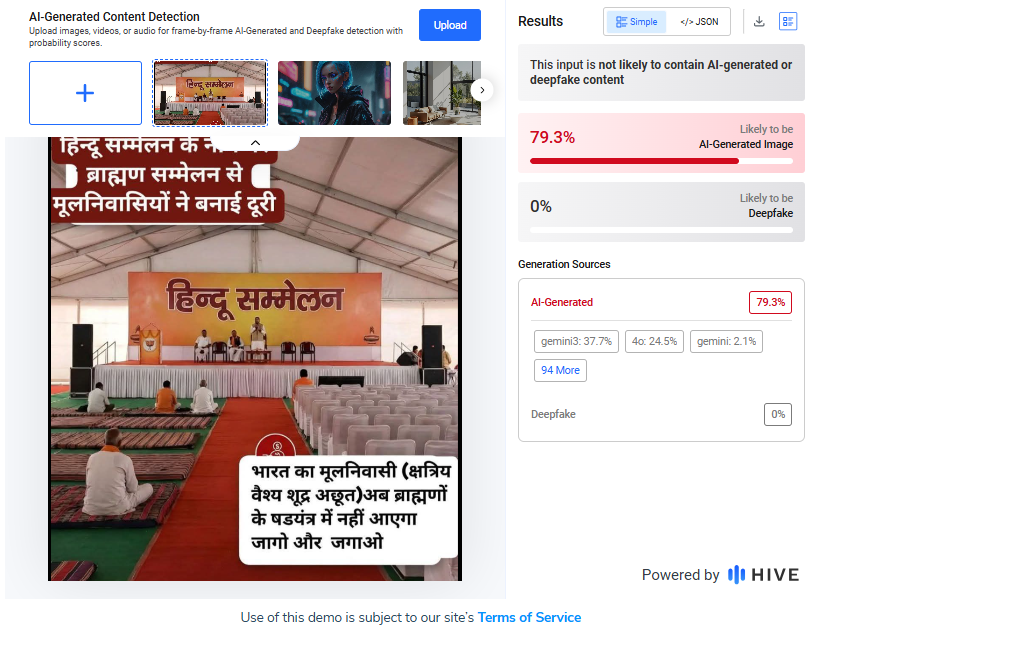

However, upon closely examining the viral image, we observed certain visual inconsistencies and unnatural elements that raised suspicion of AI generation. We first analyzed the image using the AI detection tool Hive Moderation, which indicated a 79.3 percent probability that the image was AI-generated.

To further verify, we scanned the image using another AI detection platform, Sightengine. The results showed a 97 percent likelihood that the image was AI-generated.

Conclusion

Our research confirms that the image circulating on social media is not genuine. It has been artificially created using AI technology and is being shared with a misleading claim.

Related Blogs

Introduction

Cybersecurity threats have been prevalent globally for quite some time. All nations, organisations and individuals are at risk from new and emerging cybersecurity threats, which put finances, privacy, data, identities and sometimes human lives at stake. The latest Data Breach Report by IBM revealed that a staggering 83% of organisations experienced more than one data breach during 2022. As per the 2022 Data Breach Investigations Report by Verizon, the total number of global ransomware attacks surged by 13%, a rise as large as that of the last five years combined. These statistics show how the future is filled with potential threats as we advance further into the digital age.

Who is Okta?

Okta is a secure identity cloud that links all your apps, logins and devices into a unified digital fabric. Founded in 2009 and based in San Francisco, USA, Okta has been one of the leading service providers in the United States. The company found early success on the strength of the high-quality services and products it brought to market. Although Okta is not as well known as the big tech companies, it plays a vital role in large organisations' cybersecurity systems. More than 18,000 users of the identity management company's products rely on it to give them a single login for the several platforms that a particular business uses. For instance, Zoom leverages Okta to provide "seamless" access to its Google Workspace, ServiceNow, VMware, and Workday systems with only one login, which shows how fundamental Okta is to reducing human effort across platforms. In the digital age, such organisations are instrumental in leading the way to innovation and entrepreneurship.

The Okta Breach

On Friday, 20 October, Okta reported a hack of its support system, which caused chaos within the organisation. The impact of the hack can be seen in the massive losses Okta's shares incurred on the stock exchange.

Since the attack, the company's market value has dropped by more than $2 billion. The incident is the most recent in a long line of events connected to Okta or its products, including a wave of casino intrusions that caused days-long disruptions to hotel rooms in Las Vegas. Casino giants Caesars and MGM were both affected by hacks reported earlier this year. Both attacks, which targeted MGM’s and Caesars’ Okta installations, used sophisticated social engineering that went through IT help desks.

What can be done to prevent this?

Cybersecurity attacks on organisations have become very common since the pandemic and are rampant across the globe. Major big tech companies have been successful in setting up SOPs, safeguards and precautionary measures to protect their companies, digital assets and interests. However, micro, small and medium business owners are the most vulnerable to such unknown high-intensity attacks. The governments of various nations have established Computer Emergency Response Teams to monitor and investigate such massive-scale cyberattacks on both organisations and individuals. The issue of cybersecurity can be better addressed by building the following aspects into our daily digital routines:

- Team Upskilling: Organisations need to be critical in creating upskilling avenues for employees pertaining to cybersecurity and threats. These campaigns should be run periodically, focusing on both the individual and organisational impact of any threat.

- Reporting Mechanism for Employees and Customers: Business owners and organisations need to deploy robust, sustainable and efficient reporting mechanisms for both employees and customers. Such a mechanism is fundamental in pinpointing potential grey areas and threats in the cybersecurity setup. Many governments around the world now mandate a dedicated reporting mechanism, as it showcases transparency and natural justice in terms of legal remedies.

- Preventive, Precautionary and Recovery Policies: Organisations need to create and deploy respective preventive, precautionary and recovery policies in regard to different forms of cyber attacks and threats. This will be helpful in a better understanding of threats and faster response in cases of emergencies and attacks. These policies should be updated regularly, keeping in mind the emerging technologies. Efficient deployment of the policies can be done by conducting mock drills and threat assessment activities.

- Global Dialogue Forums: Organisations and the industry should create a community of cybersecurity enthusiasts from diverse backgrounds to address the growing issues of cyberspace. This can be done by creating and conducting global dialogue forums, which will act as a beacon for sharing best practices, advisories, threat assessment reports, and information on potential threats and attacks, thus establishing better inter-agency and inter-organisation communication and coordination.

- Data Anonymisation and Encryption: Organisations should have data management/processing policies in place for transparency and should always store data in an encrypted and anonymous manner, thus creating a blanket of safety in case of any data breach.

- Critical infrastructure: Industry leaders should push the limits of innovation by setting up state-of-the-art critical cyber infrastructure to foster employment, innovation and an entrepreneurial spirit among the youth, thus creating a whole new generation of cyber-ready professionals and dedicated netizens. Critical infrastructure is essential in creating a safe, secure and resilient digital ecosystem.

- Cybersecurity Audits & Sandboxing: All organisations should establish periodic cybersecurity audits, by both internal and external entities, to find any issues or grey areas in their security systems. This will create a more robust and adaptive cybersecurity mechanism for the organisation and its employees. All companies that develop and test technology need to conduct proper sandboxing exercises for any new tech or software to identify its shortcomings and flaws.
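As a rough illustration of the data anonymisation point in the list above, the sketch below replaces a direct identifier with a keyed hash before a record is stored. It is only a minimal sketch under stated assumptions: the record layout, the field names and the `pseudonymise` helper are hypothetical, and keyed hashing is pseudonymisation rather than full anonymisation or encryption. Encrypting stored data would additionally need a vetted cryptography library and proper key management.

```python
import hashlib
import hmac
import secrets


def pseudonymise(value: str, key: bytes) -> str:
    """Return a keyed SHA-256 digest (hex) standing in for the raw identifier."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


# Hypothetical secret key; in practice it would live in a secrets manager,
# never alongside the data it protects.
key = secrets.token_bytes(32)

# Hypothetical customer record with one direct identifier.
record = {"email": "user@example.com", "plan": "premium"}

# Store the record with the identifier replaced by its pseudonym.
stored = {**record, "email": pseudonymise(record["email"], key)}
```

Because the same key always maps the same input to the same digest, records can still be linked internally, while a leaked copy of `stored` does not directly reveal the email address.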

Conclusion

In view of the rising cybersecurity attacks on organisations, especially small and medium companies, a lot has been done, and a lot more needs to be done, to establish safety and security for companies, employees and customers. The impact of the Okta breach clearly shows how cyberattacks can cause massive repercussions for any organisation in the form of monetary loss, loss of business, damage to reputation and many other factors. We should treat such instances as lessons and prepare our organisations to combat similar threats, ultimately working towards preventing them and eradicating the influence of bad actors from our digital ecosystem altogether.

References:

- https://hbr.org/2023/05/the-devastating-business-impacts-of-a-cyber-breach#:~:text=In%202022%2C%20the%20global%20average,legal%20fees%2C%20and%20audit%20fees.

- https://www.okta.com/intro-to-okta/#:~:text=Okta%20is%20a%20secure%20identity,use%20to%20work%2C%20instantly%20available.

- https://www.cyberpeace.org/resources/blogs/mgm-resorts-shuts-down-it-systems-after-cyberattack

Introduction

Social media has become integral to our lives and livelihoods in today’s digital world. Influencers are now powerful figures who shape trends, views, and consumer behaviour. Because of their significant internet presence, influencers have become targets for bad actors aiming to abuse their fame. Unfortunately, account hacking has grown more frequent, with significant ramifications for influencers and their followers. The emergence of social media platforms in recent years has also paved the way for influencer culture. Influencers exert power over their followers’ ideas, lifestyle choices, and purchase decisions. Influencers and brands frequently collaborate to make use of this reach, resulting in a mutually beneficial environment. As a result, the value of influencer accounts has risen dramatically, attracting the attention of hackers trying to abuse their potential for financial gain or personal advantage.

Instances of recent attacks

Places of worship

Hackers have targeted renowned temples to carry out their malicious activities. The most recent attack was on the Khautji Shyam Temple, a famous religious institution with enormous cultural and spiritual value for its adherents. It serves as a place of worship, community events, and numerous religious activities. As technology has entered all sectors of life, the temple’s online presence has grown, giving worshippers access to information, virtual darshans (holy viewings), and interactive forums. Unfortunately, this digital growth has also made the shrine vulnerable to cyber threats. The hackers hacked the temple’s Facebook page twice in a month, demanded donations and targeted the cheques that devotees gave to the trust. In the second incident, they posted objectionable images on the page, hurting the sentiments of the devotees. The temple committee has filed an FIR under various charges and is also seeking help from the cyber cell.

Social media Influencers

Influencers enjoy a vast online following worldwide, but their presence is limited to the digital space. Hence every video and photo is important to them. One such incident involved leading news anchor and reporter Barkha Dutt, whose YouTube channel was hacked and all of its posts deleted. The hackers also replaced the channel’s logo with Tesla’s and streamed a live video featuring Elon Musk on the channel. A similar incident was reported by influencer Tanmay Bhatt, who also lost all the content he had posted on his channel. Hackers use the following methods to con social media influencers:

- Social engineering

- Phishing

- Brute Force Attacks

Such attacks can harm influencers’ reputations, cause financial loss, and even cost them the trust of their viewers and followers, which in turn affects their collaborations.

Safeguards

Social media influencers need to be very careful about their cyber security, as their presence is primarily online. Influencers on all platforms should practise the following safeguards to better protect themselves and their content online:

Secure your accounts

Protecting your accounts with passphrases or strong passwords is the first step. The best strategy for doing this is to create a passphrase, a phrase only you know. We advise choosing a passphrase with at least four words and 15 characters.
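The passphrase guideline above (at least four words and 15 characters) can be sketched as a simple check. This is a minimal sketch, not a full strength meter: the function name is hypothetical, and counting spaces towards the 15 characters is an assumption, since the guideline does not say either way.

```python
def meets_passphrase_guideline(passphrase: str,
                               min_words: int = 4,
                               min_chars: int = 15) -> bool:
    """Check the guideline: at least `min_words` words and `min_chars` characters.

    Assumption: words are separated by whitespace, and spaces count
    towards the character total.
    """
    words = passphrase.split()
    return len(words) >= min_words and len(passphrase) >= min_chars


# A four-word phrase of 28 characters passes; a single long word does not.
ok = meets_passphrase_guideline("correct horse battery staple")
too_few_words = meets_passphrase_guideline("supercalifragilistic")
```

A check like this only enforces length and word count; it cannot tell whether the phrase is something only you know.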

The second step is to enable multi-factor authentication to further secure your accounts.

With it enabled, a hacker must not only guess your password but also provide a second authentication factor (such as a face scan or fingerprint) that matches yours.

Be careful about who has access

Many social media influencers collaborate with a team to help generate and post content while building their personal brands.

According to some influencers, this means relying on team members who write and produce material that the influencers then share themselves. In these situations, the influencer remains the only person with access to the account.

The more people who have access, the more potential weak spots there are. It also increases the number of ways a password or account access could fall into the hands of a cybercriminal. Not all staff members will be as cautious about password security as you are.

Stay up-to-date on the threats

What’s the most significant way to combat threats to computer security? Information.

Cybercriminals constantly adapt their methods. It’s crucial to stay informed about these threats and how they can be utilised against you.

But it’s not just threats that change. Social media platforms and other service providers are likewise changing their offerings to address these challenges.

Educate yourself to protect yourself. By continuously educating yourself, you can stay one step ahead of the hazards that cybercriminals pose.

Preach cybersecurity

As an influencer, you should preach cyber security, no matter your agenda.

This will also enable users to inculcate best practices for digital hygiene.

This will also boost the reporting numbers and increase population awareness, thus eradicating such bad actors from our cyberspace.

Acknowledge the risks

Turning a blind eye to risks always hurts safety, as ignorance causes issues.

Risks should be kept in mind while creating your digital routine and netiquette.

Always inform your followers of existing and potential risks.

Monitor threats

After the acknowledgement, it is essential to monitor threats.

Actively looking out for threats will help you understand criminals’ modus operandi and the vulnerabilities they exploit, so that you can avoid them.

Threat monitoring is also a basic responsibility of every netizen, ensuring that threats are reported as they emerge.

Interpret the data

All cyber nodal agencies release data and trends on cybercrimes. Understand these trends and protect your vulnerabilities.

Data interpretation can lead to an early flagging of threats and issues, thus protecting the cyber ecosystem by and large.

Create risk profiles

All influencers should create risk profiles and backup profiles.

This will also help protect one’s data as it can be stored on different profiles.

Risk profiles and having a private profile are essential to safeguard the basic cyber interests of an influencer.

Conclusion

As we go deeper into the digital age, we see more technologies emerging, and along with them a new generation of cyber threats and challenges. Physical space and cyberspace are now intertwined and interdependent. Practising basic cyber security, hygiene, netiquette and monitoring best practices will go a long way in protecting the online interests of influencers and will encourage their followers to adopt best practices, thus safeguarding the cyber ecosystem at large.

Introduction

Today, we can access any information in seconds from the comfort of our homes or offices. The internet and its applications have substantially eased access to information, but the biggest question remains unanswered: which information is legitimate and which is fake? As netizens, we must be critical of what information we access and how.

Influence of Bad actors

Bad actors are one of the biggest threats to our cyberspace, as they fill the online world with fear and with activities that directly affect users’ financial or emotional well-being by exploiting their vulnerabilities and attacking them through social engineering. One such issue is website spoofing. In website spoofing, bad actors create a website that closely resembles the original website of a reputed brand. The similarity is so uncanny that first-time or occasional visitors find it very difficult to tell the two websites apart. This is essentially an attempt to access sensitive information, such as personal and financial information, and in some cases to spread malware onto the user’s system to facilitate other forms of cybercrime. Such websites carry very lucrative offers or deals, making it easier for people to fall prey to them. In turn, the bad actors can obtain sensitive information directly from users without even calling or messaging them.
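One way to picture how a spoofed address differs from a genuine one is to compare a link’s hostname against a short list of known official domains. The sketch below is illustrative only: the `TRUSTED_DOMAINS` list, the `classify_url` helper and the 0.8 similarity threshold are all assumptions, and real protection relies on far more signals than string similarity.

```python
from difflib import SequenceMatcher
from urllib.parse import urlparse

# Hypothetical allow-list; a real one would hold the brand's official domains.
TRUSTED_DOMAINS = {"example-brand.com"}


def classify_url(url: str, threshold: float = 0.8) -> str:
    """Label a URL as 'trusted', 'lookalike' or 'unknown' by its hostname."""
    host = (urlparse(url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    if host in TRUSTED_DOMAINS:
        return "trusted"
    # A near-identical hostname that is not on the list is suspicious.
    for trusted in TRUSTED_DOMAINS:
        if SequenceMatcher(None, host, trusted).ratio() >= threshold:
            return "lookalike"
    return "unknown"


genuine = classify_url("https://www.example-brand.com/support")
spoofed = classify_url("https://examp1e-brand.com/offer")
```

Here the spoofed hostname differs from the genuine one by a single character, which is exactly the kind of difference an occasional visitor is unlikely to notice by eye.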

The Incident

A Noida-based senior citizen couple had a problem with their dishwasher and, to get it fixed, looked up the customer care number in their web browser. The couple came across a customer care number, 1800258821, for IFB, an electronics company. They dialled the number and reached a fake customer care representative who, upon hearing the couple’s issue, directed them to a supposedly senior official of the company. The senior official spoke to the lady and, although the call dropped a few times, was adamant about staying in touch with her. Once he had established trust, he asked her to download an app, which he portrayed as an app for registering complaints and carrying out quick actions. The fake senior official asked the lady to share her location and to grant a few access permissions to the application, along with a four-digit OTP, which looked harmless. He further asked the lady to make a transaction of Rs 10 as a complaint processing fee. Until this moment, the couple was under the impression that their complaint had been registered and the issue with their dishwasher would be rectified soon.

Later that night, the couple received a message from their bank informing them that Rs 2.25 lakh had been debited from their joint bank account. The following morning, they saw yet another text message informing them of a further debit of Rs 5.99 lakh from their account. The couple immediately understood that they had become victims of cyber fraud. They immediately lodged a complaint with the cyber fraud helpline 1930 and with their bank. An FIR has been registered with the Noida Cyber Cell.

How can senior citizens prevent such frauds?

Senior citizens can be particularly vulnerable to cyber frauds due to their lack of familiarity with technology and potential cognitive decline. Here are some safeguards that can help protect them from cyber frauds:

- Educate seniors on common cyber frauds: It’s important to educate seniors about the most common types of cyber frauds, such as phishing, smishing, vishing, and scams targeting seniors.

- Use strong passwords: Encourage seniors to use strong and unique passwords for their online accounts and to change them regularly.

- Beware of suspicious emails and messages: Teach seniors to be wary of suspicious emails and messages that ask for personal or financial information, even if they appear to be from legitimate sources.

- Verify before clicking: Encourage seniors to verify the legitimacy of links before clicking on them, especially in emails or messages.

- Keep software updated: Ensure seniors keep their software, including antivirus and operating system, up to date.

- Avoid public Wi-Fi: Discourage seniors from using public Wi-Fi for sensitive transactions, such as online banking or shopping.

- Check financial statements: Encourage seniors to regularly check their bank and credit card statements for any suspicious transactions.

- Secure devices: Help seniors secure their devices with antivirus and anti-malware software and ensure that their devices are password protected.

- Use trusted sources: Encourage seniors to use trusted sources when making online purchases or providing personal information online.

- Seek help: Advise seniors to seek help if they suspect they have fallen victim to a cyber fraud. They should contact their bank or credit card company, or report the fraud to the relevant authorities. Calling 1930 should be the first and primary step.

Conclusion

Cyberspace is a new space for people of all generations. The older population is a little more vulnerable in this space, as they have not used gadgets or the internet for most of their lives; they now depend on devices and applications for convenience but still do not understand the technology and its dark side. As netizens, we are responsible for safeguarding the youth and the older population to create a wholesome, safe, secure and sustainable cyber ecosystem. It’s time to put the youth’s understanding of tech and the life experience of the older population in synergy to create SOPs and best practices for eradicating such cyber frauds from our cyberspace. CyberPeace Foundation has created a CyberPeace Helpline for victims, where they will be given timely assistance in resolving their issues; victims can reach the helpline on +91 95700 00066 and can also email their issues to helpline@cyberpeace.net.