#FactCheck: A digitally altered video of actor Sebastian Stan shows him changing a ‘Tell Modi’ poster to one that reads ‘I Told Modi’ on a display panel.

Executive Summary:

A widely circulated video showing a poster with the words "I Told Modi" has gone viral, with posts falsely connecting it to the April 2025 Pahalgam attack, in which terrorists killed 26 civilians. The altered Marvel Studios clip allegedly mocks Operation Sindoor, the counterterrorism operation India initiated in response to the attack. Misinformation of this kind spreads misleading propaganda and draws attention away from real events, which underlines how crucial it is to verify information before sharing it online.

Claim:

A man can be seen changing a poster that says "Tell Modi" to one that says "I Told Modi" in a widely shared viral video. This video allegedly makes reference to Operation Sindoor in India, which was started in reaction to the Pahalgam terrorist attack on April 22, 2025, in which militants connected to The Resistance Front (TRF) killed 26 civilians.

Fact check:

On further research, we found the original post from Marvel Studios' official X handle, confirming that the circulating video has been altered using AI and does not reflect the authentic content.

By using Hive Moderation to detect AI manipulation in the video, we have determined that this video has been modified with AI-generated content, presenting false or misleading information that does not reflect real events.

Furthermore, we found a Hindustan Times article discussing the mysterious reveal involving Hollywood actor Sebastian Stan.

Conclusion:

It is untrue to say that the "I Told Modi" poster is a component of a public demonstration. The text has been digitally changed to deceive viewers, and the video is manipulated footage from a Marvel film. The content should be ignored as it has been identified as false information.

- Claim: A viral video shows a man changing a "Tell Modi" poster to one that reads "I Told Modi", in reference to Operation Sindoor.

- Claimed On: Social Media

- Fact Check: False and Misleading

Related Blogs


Introduction

In the fast-paced digital age, misinformation often spreads faster than actual news. This was seen recently when posts on social media falsely claimed that the Election Commission of India (ECI) had taken down e-voter rolls for some states from its website overnight. The rumour confused the public and triggered political debate in states like Maharashtra, MP, Bihar, UP and Haryana. The ECI quickly called the viral claim "fake news" and assured voters that the electoral rolls of all States and Union Territories remained available at voters.eci.gov.in. The incident shows how misinformation can undermine electoral information and how important it is to verify the authenticity of a claim before sharing it.

The Incident and Allegations

On August 7, 2025, social media posts on platforms like X and WhatsApp claimed that the Election Commission of India had removed e-voter lists from its website. The posts appeared after public allegations about irregularities in certain constituencies. However, the claims about the removal of voter lists were unverified.

The Election Commission’s Response

In a formal post on X, the ECI stated categorically:

“This is a fake news. Anyone can download the Electoral Roll for any of 36 States/UTs through this link: https://voters.eci.gov.in/download-eroll.”

The Commission clarified that no deletion had taken place and that all voter rolls are intact and accessible to the public. In keeping with the spirit of transparency, the ECI reaffirmed its practice of keeping electoral information open to public inspection.

Importance of Timely Clarifications

By countering factually incorrect information as soon as it began to spread widely, the ECI prevented possible harm to public trust. Election officials depend on public trust, and any speculation about their integrity can harm democracy. Such prompt action stops false information from becoming entrenched in public discourse.

Misinformation in the Electoral Space

- How False Narratives Gain Traction

Election misinformation thrives in politically charged environments. Social media, confirmation bias, and heightened emotions during elections help rumours spread. On this occasion, the unfounded report struck a chord with widespread political distrust, so people readily believed and shared it without checking whether it was true.

- Risks to Democratic Integrity

When misinformation impacts election procedures, the consequences can be profound:

- Erosion of Trust: People can lose faith in the neutrality of election administrators quite easily.

- Polarization: Untrue assertions tend to reinforce political divides, rendering constructive communication more difficult.

- The Role of Media Literacy

Combating such mis/disinformation requires more than official statements. Media literacy training can equip individuals to recognise warning signs in suspect messages. Even basic actions, like checking official sources before sharing, can go a long way in keeping untruths from spreading.
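As a rough illustration of "checking official sources", the sketch below tests whether a link points to an allowlisted official domain before it is trusted or shared. The allowlist contents and the function name `is_official_source` are assumptions made for this example, not an official tool or list.

```python
from urllib.parse import urlparse

# Hypothetical allowlist for illustration only; a real one would need to be
# maintained and verified independently.
OFFICIAL_DOMAINS = {"eci.gov.in", "pib.gov.in"}


def is_official_source(url: str) -> bool:
    """Return True if the URL's host is an allowlisted domain or a subdomain of one."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in OFFICIAL_DOMAINS)


if __name__ == "__main__":
    print(is_official_source("https://voters.eci.gov.in/download-eroll"))
    print(is_official_source("https://eci.gov.in.example.com/download-eroll"))
```

Matching on the parsed hostname rather than on a substring of the URL is deliberate: a lookalike address such as `eci.gov.in.example.com` contains the official name but is not a subdomain of it.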

Strategies to Counter Electoral Misinformation

Multi-Stakeholder Action

Effectively countering electoral disinformation requires coordination among election officials, fact-checkers, media, and platforms. Suggested actions include:

- Rapid Response Protocols: Institutions should maintain dedicated monitoring teams for quick rebuttals.

- Verified Channels of Communication: Official sites and pages should be designated for authentic electoral news.

- Proactive Transparency: Regular publication of electoral process updates can pre-empt rumours.

- Platform Accountability: Social media sites must label or limit the visibility of information found to be false by credentialed fact-checkers.

Conclusion

The recent allegations of e-voter roll deletion underscore the susceptibility of contemporary democracies to mis/disinformation. Although the ECI's swift and unambiguous denial restored order, the incident emphasises the need for preventive steps to maintain faith in elections. Fact-checking alone might not work in an information space that is growing more polarised and algorithm-driven, so the long-term solution is to build a robust democratic culture in which every individual, organisation, and platform values the truth over clickbait. The lesson is clear: in the age of instant news, accurate communication is not a luxury but a necessity for maintaining democratic integrity.

References

- https://www.newsonair.gov.in/election-commission-dismisses-fake-news-on-removal-of-e-voter-rolls/

- https://economictimes.indiatimes.com/news/india/eci-dismisses-claims-of-removing-e-voter-rolls-from-its-website-calls-it-fake-news/articleshow/123190662.cms

- https://www.thehindu.com/news/national/vote-theft-claim-of-congress-factually-incorrect-election-commission/article69921742.ece

- https://www.thehindu.com/opinion/editorial/a-crisis-of-trust-on-the-election-commission-of-india/article69893682.ece

Introduction

In a world where social media shapes public perception and AI-generated content blurs the line between fact and fiction, mis/disinformation has become a national cybersecurity threat. Today, disinformation campaigns are designed for specific effects, including political manipulation, interference in public health, financial fraud, and even community violence. India, with its 900+ million internet users, is especially susceptible to this online distortion. The advent of deepfakes, AI-generated text, and hyper-personalised propaganda has made disinformation more plausible and more difficult to identify than ever.

What is Misinformation?

Misinformation is false or inaccurate information shared without intent to deceive. Disinformation, on the other hand, is content intentionally created and disseminated to mislead, harm, or manipulate. Both contribute to what experts have termed an "infodemic", a deluge of false information that overwhelms people and hinders their ability to make decisions.

Examples of impactful mis/disinformation are:

- COVID-19 vaccine conspiracy theories (e.g., infertility or microchips)

- Election-related false news (e.g., so-called EVM hacking)

- Social disinformation (e.g., manipulated videos of riots)

- Financial scams (e.g., bogus UPI cashbacks or RBI refund plans)

How Misinformation Spreads

Misinformation goes viral because of both technology design and human psychology. Social media sites such as Facebook, X (formerly Twitter), Instagram, and WhatsApp are designed to amplify messages that elicit strong emotional reactions, and these are usually polarising, sensationalist, or fear-mongering posts. As a result, falsehoods often get much more attention and engagement than authentic facts, and virality is prioritised over truth.

Another major factor is the misuse of generative AI and deepfakes. Applications like ChatGPT, Midjourney, and ElevenLabs can be used to generate highly convincing fake news stories, audio recordings, or videos imitating public figures. These synthetic media assets are increasingly being misused by bad actors for political impersonation, propagating fabricated news reports, and even carrying out voice-based scams.

Adding to this danger are coordinated disinformation efforts, commonly operated by foreign or domestic actors with specific political or ideological objectives. These efforts employ bot networks on social media, deceptive hashtags, and fabricated images to sway public opinion, especially during politically sensitive events such as elections, protests, or foreign wars. Such efforts are usually automated with the help of bots and meme-driven propaganda, which makes them scalable and hard to trace.

Why Misinformation is Dangerous

Mis/disinformation is a significant threat to democratic stability, public health, and personal security. Perhaps one of the most pernicious threats is that it undermines public trust. If it goes unchecked, then it destroys trust in core institutions like the media, judiciary, and electoral system. This erosion of public trust has the potential to destabilise democracies and heighten political polarisation.

In India, false information has had terrible real-world consequences, especially in inciting violence. Misleading WhatsApp messages about child kidnappers have resulted in mob lynchings in rural areas. Communal riots have also been sparked by manipulated religious videos, and false terrorist warnings have created public panic.

The COVID-19 pandemic also showed how misinformation can be lethal. Misinformation regarding vaccine safety, miracle cures, and the origin of the virus resulted in mass vaccine hesitancy, the use of dangerous treatments, and even avoidable deaths.

Aside from health and safety, mis/disinformation has also been used in financial scams. Cybercriminals take advantage of the fear and curiosity of the people by promoting false investment opportunities, phishing URLs, and impersonation cons. Victims get tricked into sharing confidential information or remitting money using seemingly official government or bank websites, leading to losses in crypto Ponzi schemes, UPI scams, and others.

India’s Response to Misinformation

- PIB Fact Check Unit

The Press Information Bureau (PIB) operates a fact-checking service to debunk viral false information, particularly on government policies. In 3 years, the unit identified more than 1,500 misinformation posts across media.

- Indian Cybercrime Coordination Centre (I4C)

Working under the MHA, I4C has collaborated with social media platforms to identify sources of viral misinformation. Through the Cyber Tipline, citizens can report misleading content by calling 1930 or visiting cybercrime.gov.in.

- IT Rules: The Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021 [updated as on 6.4.2023]

The Information Technology (Intermediary Guidelines) Rules were updated to enable the government to address the following aspects:

- Removal of unlawful content

- Platform accountability

- Detection Tools

Certain detection tools act as shields, assisting fact-checkers and enforcement bodies to:

- Identify synthetic voice and video scams through technical measures.

- Track misinformation networks.

- Label manipulated media in real-time.

CyberPeace View: Solutions for a Misinformation-Resilient Bharat

- Scale Digital Literacy

Run "Think Before You Share" programs in rural schools to teach students to check sources, identify clickbait, and avoid resharing fake news.

- Platform Accountability

Technology platforms need to:

- Flag manipulated media.

- Offer algorithmic transparency.

- Mark AI-created media.

- Provide localised fact-checking across diverse Indian languages.

- Community-Led Verification

Establish WhatsApp and Telegram "Fact Check Hubs" headed by expert organisations, industry experts, journalists, and digital volunteers who can report fake content at the grassroots level.

- Legal Framework for Deepfakes

Formulate targeted legislation under the Bharatiya Nyaya Sanhita (BNS) and other relevant laws to make the malicious use of deepfakes and synthetic media a criminal offence for:

- Electoral manipulation.

- Defamation.

- Financial scams.

- AI Counter-Misinformation Infrastructure

Invest in public sector AI models trained specifically to identify:

- Coordinated disinformation patterns.

- Botnet-driven hashtag campaigns.

- Real-time viral fake news bursts.
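To make the idea of spotting coordinated patterns concrete, here is a minimal rule-based sketch, not an AI model, that flags a message when several distinct accounts post near-identical text within a short time window. The function name, the `(account, timestamp, text)` input shape, and the thresholds are all assumptions chosen for illustration; real systems would need far richer signals.

```python
from collections import defaultdict


def find_coordinated_bursts(posts, min_accounts=3, window_seconds=600):
    """Flag texts posted by many distinct accounts in a short window.

    posts: iterable of (account, timestamp_seconds, text) tuples.
    Returns the set of normalised texts that at least `min_accounts`
    distinct accounts posted within `window_seconds` of each other.
    """
    by_text = defaultdict(list)
    for account, ts, text in posts:
        # Normalise case and whitespace so trivial edits still match.
        key = " ".join(text.lower().split())
        by_text[key].append((ts, account))

    flagged = set()
    for key, items in by_text.items():
        items.sort()
        start = 0
        for end in range(len(items)):
            # Shrink the window until it spans at most window_seconds.
            while items[end][0] - items[start][0] > window_seconds:
                start += 1
            accounts = {acc for _, acc in items[start:end + 1]}
            if len(accounts) >= min_accounts:
                flagged.add(key)
                break
    return flagged


if __name__ == "__main__":
    sample = [
        ("acct_a", 0, "Voter rolls DELETED!"),
        ("acct_b", 60, "voter rolls  deleted!"),
        ("acct_c", 120, "Voter rolls deleted!"),
        ("acct_d", 5000, "Good morning"),
    ]
    print(find_coordinated_bursts(sample))
```

Counting distinct accounts rather than total posts means one user repeating a message does not trigger the flag, while a burst across many accounts does.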

Conclusion

Mis/disinformation is more than just a content issue; it is a public health, cybersecurity, and democratic stability challenge. As India enters the digitally empowered world, building a secure, informed, and resilient information ecosystem is no longer a choice but an imperative. Fighting misinformation demands a whole-of-society effort combining AI innovation, public education, regulatory overhaul, and tech responsibility. The danger is there, but so is the opportunity to guide the world toward a fact-first, trust-based digital age. It's time to act.

References

- https://www.pib.gov.in/factcheck.aspx

- https://www.meity.gov.in/static/uploads/2024/02/Information-Technology-Intermediary-Guidelines-and-Digital-Media-Ethics-Code-Rules-2021-updated-06.04.2023-.pdf

- https://www.cyberpeace.org

- https://www.bbc.com/news/topics/cezwr3d2085t

- https://www.logically.ai

- https://www.altnews.in

Executive Summary

Prime Minister Narendra Modi recently appealed to citizens to reduce the consumption of gold and edible oil for a year. He also urged people to conserve petrol, diesel, and cooking gas amid the crisis arising from the ongoing tensions in West Asia involving the United States, Israel, and Iran. Against this backdrop, a purported image of Union Home Minister Amit Shah and his son Jay Shah, who currently serves as Chairman of the International Cricket Council, has gone viral on social media. The image shows them seated inside a chartered aircraft along with two other individuals. Several social media users shared the picture as genuine while targeting the central government and Amit Shah.

However, research by the CyberPeace Research Wing found that the viral image is fake and was generated using Artificial Intelligence (AI).

Claim

An Instagram user named “Om Prakash Shukla” shared the viral image on May 24, 2026, with the caption: “People are sacrificing their desires for the nation, while they are enjoying luxury.”

https://www.instagram.com/p/DYuFqnmCh7T

Fact Check

To verify the authenticity of the viral image, we conducted a Google Lens search. However, the image was not found on any credible news platform, which raised suspicion about its authenticity. We also performed keyword-based searches related to the image using Google Search, but found no authentic reports or media coverage associated with the picture.

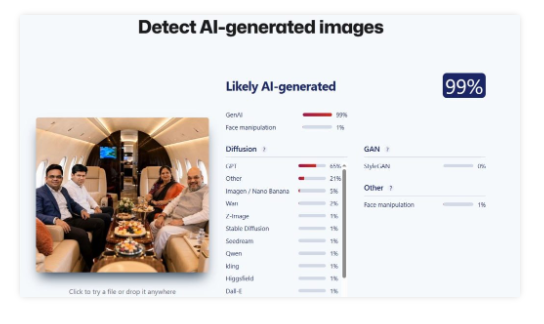

Further research involved analysing the image using AI detection tools. We first examined the picture using the “Sight Engine” AI detection tool, which indicated a 99 percent probability that the image was AI-generated.

We also tested the image using another AI detection platform called “TruthScan.” This tool likewise suggested, with around 97 percent probability, that the image had been created using artificial intelligence.
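The step of cross-checking several detectors can be sketched as a simple decision rule. The snippet below does not call any real detection service; the `DetectorResult` type, the `assess` function, and the 0.9 threshold are assumptions for illustration, with the two scores taken from the results reported above.

```python
from dataclasses import dataclass


@dataclass
class DetectorResult:
    tool: str
    ai_probability: float  # 0.0 to 1.0, as reported by the tool


def assess(results, threshold=0.9):
    """Combine detector scores with an illustrative rule (not a standard).

    All tools at or above the threshold -> "likely AI-generated";
    only some -> "inconclusive"; none -> "no strong AI signal".
    """
    if not results:
        return "no evidence"
    flagged = [r for r in results if r.ai_probability >= threshold]
    if len(flagged) == len(results):
        return "likely AI-generated"
    if flagged:
        return "inconclusive"
    return "no strong AI signal"


if __name__ == "__main__":
    reported = [
        DetectorResult("Sight Engine", 0.99),
        DetectorResult("TruthScan", 0.97),
    ]
    print(assess(reported))
```

Requiring agreement between independent tools is a cautious design choice, since any single detector can produce false positives; detector output is best treated as supporting evidence alongside reverse image and keyword searches.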

Conclusion

The research found that the viral image featuring Union Home Minister Amit Shah and ICC Chairman Jay Shah inside a chartered aircraft is AI-generated. The image was digitally created using AI tools, and the viral claim associated with it is false.