#FactCheck - AI-Generated Flyover Collapse Video Shared With Misleading Claims

Executive Summary

A video showing a flyover collapse is going viral on social media. The clip shows a flyover and a road passing beneath it, with vehicles moving normally. Suddenly, a portion of the flyover appears to collapse and fall onto the road below, with some vehicles seemingly caught beneath it. The video has been widely shared by users online. However, research by CyberPeace found the viral claim to be false. The probe revealed that the video is not real but was created using artificial intelligence.

Claim:

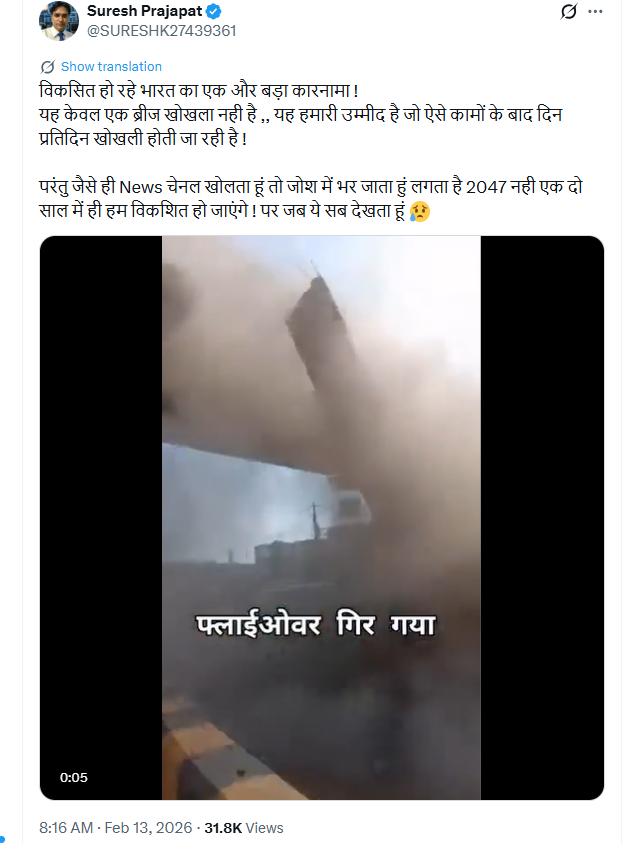

On X (formerly Twitter), a user shared the viral video on February 13, 2026, claiming it showed the reality of India’s infrastructure development and criticizing ongoing projects. The post quickly gained traction, with several users sharing it as a real incident. Similarly, another user shared the same video on Facebook on February 13, 2026, making a similar claim.

Fact Check:

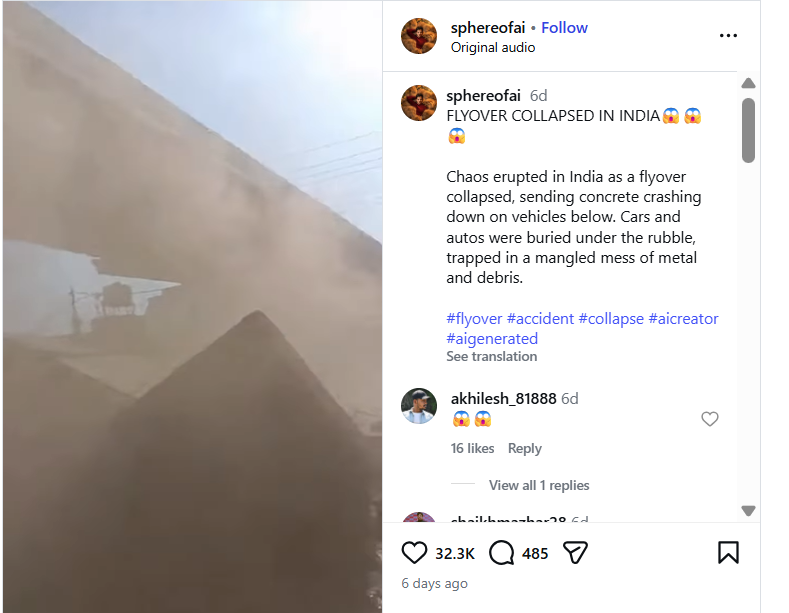

To verify the claim, key frames from the viral video were extracted and searched using Google Lens. During the search, the video was traced to an account named “sphereofai” on Instagram, where it had been posted on February 9. The post included hashtags such as “AI Creator” and “AI Generated,” clearly indicating that the video was created using AI. Further examination of the account showed that the user identifies themselves as an AI content creator.

To confirm the findings, the viral video was also analysed using Hive Moderation. The tool’s analysis suggested a 99 percent probability that the video was AI-generated.

Conclusion:

The research established that the viral flyover collapse video is not authentic. It is an AI-generated clip being circulated online with misleading claims.

Related Blogs

Executive Summary:

A viral video circulating on social media appears to show comedian Samay Raina casually making a lighthearted joke about actress Rekha in the presence of host Amitabh Bachchan, leaving him visibly unsettled, during the shoot of a Kaun Banega Crorepati (KBC) Influencer Special episode. The joke alludes to the gossip and rumors of unspoken tensions between the two Bollywood legends. Our research found that the video is artificially manipulated and is not genuine content: the specific joke in the video does not appear in the original KBC episode. This incident highlights the growing misuse of AI technology in creating and spreading misinformation, emphasizing the need for increased public vigilance and awareness in verifying online information.

Claim:

The claim in the video suggests that during a recent "Influencer Special" episode of KBC, Samay Raina humorously asked Amitabh Bachchan, "What do you and a circle have in common?" and then delivered the punchline, "Neither of you and circle have Rekha (line)," playing on the Hindi word "rekha," which means 'line'.

Fact Check:

To check the genuineness of the claim, the full Influencer Special episode of Kaun Banega Crorepati (KBC), which is also available on the Sony Set India YouTube channel, was carefully reviewed. Our analysis showed that no part of the episode has comedian Samay Raina cracking a joke about actress Rekha. Technical analysis using Hive Moderation further indicated that the viral clip is AI-made.

Conclusion:

The viral video that shows Samay Raina making a joke about Rekha during KBC is completely AI-generated and false. Such content poses a serious risk of online manipulation, which makes it all the more important to fact-check any news against credible sources before sharing it. With the growing danger of AI-generated content being misused, promoting media literacy will be key to combating misinformation.

- Claim: Fake AI Video: Samay Raina’s Rekha Joke Goes Viral

- Claimed On: X (formerly known as Twitter)

- Fact Check: False and Misleading

Executive Summary

Iran’s official news agencies have denied claims that senior officials, including Foreign Minister Abbas Araghchi and Parliament Speaker Mohammad Bagher Ghalibaf, have arrived in Pakistan for talks. A senior official told Iran’s Tasnim News Agency that Tehran is considering Pakistan’s proposal for peace talks, but any dialogue would depend on the United States fulfilling its commitment to halt military actions on all fronts.

Notably, the United States and Iran had agreed to a two-week ceasefire on April 8, 2026, with discussions reportedly scheduled for April 11 in Islamabad. Amid this backdrop, a video showing fighter jets escorting a large aircraft is being widely circulated on social media. Users claim that Pakistan deployed these jets to escort an Iranian delegation into the country.

However, research by CyberPeace found the claim to be false. The viral video is not recent and dates back to 2019.

Claim

An X (formerly Twitter) user shared the video claiming that Pakistan Air Force jets were escorting an Iranian delegation into Pakistan.

Fact Check

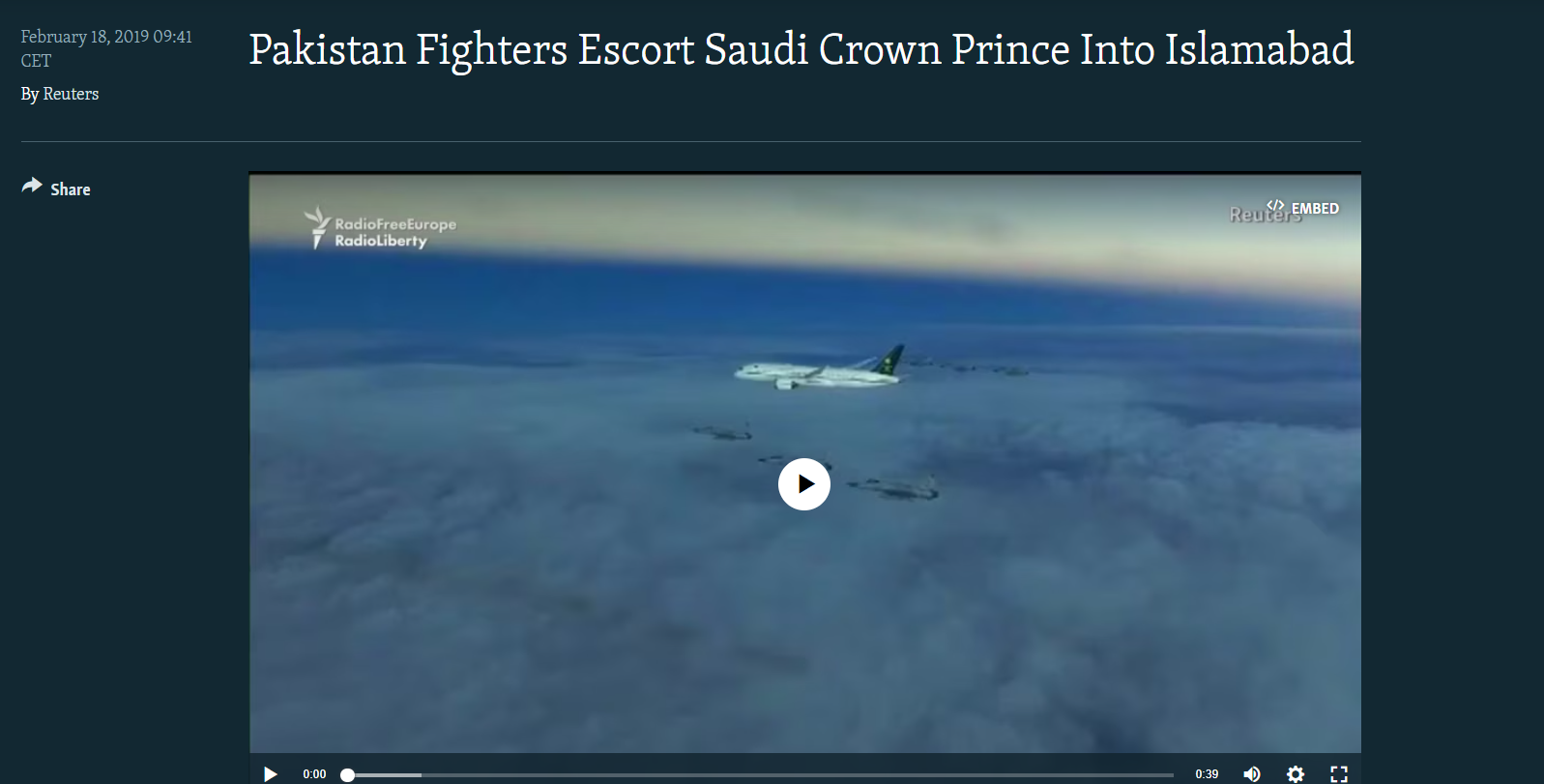

Reverse image search of keyframes from the viral video led us to a February 18, 2019 report by Radio Free Europe/Radio Liberty. The report stated that the fighter jets were deployed by Pakistan to escort the aircraft of Saudi Crown Prince Mohammed bin Salman during his visit to Pakistan on February 17, 2019.

Further verification led us to the same footage uploaded on YouTube by the channel “SCMP Archive” on July 6, 2020. At the time, Pakistan’s Air Force had described the escort as part of a ceremonial welcome tradition for visiting dignitaries.

Conclusion

The viral claim is misleading. The video does not show Pakistani fighter jets escorting an Iranian delegation amid ongoing ceasefire talks. Instead, it is an old clip from 2019, when Pakistan deployed JF-17 fighter jets to welcome Saudi Crown Prince Mohammed bin Salman during his official visit. There is no evidence linking the video to current geopolitical developments involving Iran and Pakistan. The footage has been taken out of context and reshared with a false narrative to mislead viewers.

Introduction

With the increasing frequency and severity of cyber-attacks on critical sectors, the government of India has formulated the National Cyber Security Reference Framework (NCRF) 2023, which aims to address cybersecurity concerns in India. In today’s digital age, the security of critical sectors is paramount due to the ever-evolving landscape of cyber threats. Cybersecurity measures are crucial for protecting essential sectors such as banking, energy, healthcare, telecommunications, transportation, strategic enterprises, and government enterprises. The framework is an essential step towards safeguarding these sectors and preparing them for the cyber threats they face. Protecting critical sectors is an urgent priority that requires the development of robust cybersecurity practices and the implementation of effective measures to mitigate risks.

Overview of the National Cyber Security Policy 2013

The National Cyber Security Policy of 2013 was the first attempt to address cybersecurity concerns in India. However, it had several drawbacks that limited its effectiveness in mitigating cyber risks in the contemporary digital age. The policy’s outdated guidelines, insufficient prevention and response measures, and lack of legal implications hindered its ability to protect critical sectors adequately. Moreover, the policy did not keep pace with the rapidly evolving cyber threat landscape and emerging technologies, leaving organisations vulnerable to new and sophisticated attacks and in need of updated guidelines to combat them.

As a result, an updated and more comprehensive policy, the National Cyber Security Reference Framework 2023, was necessary to address emerging challenges and provide strategic guidance for protecting critical sectors against cyber threats.

Highlights of NCRF 2023

Strategic Guidance: NCRF 2023 has been developed to provide organisations with strategic guidance to address their cybersecurity concerns in a structured manner.

Common but Differentiated Responsibility (CBDR): The policy is based on a CBDR approach, recognising that different organisations have varying levels of cybersecurity needs and responsibilities.

Update of National Cyber Security Policy 2013: NCRF supersedes the National Cyber Security Policy 2013, which was due for an update to align with the evolving cyber threat landscape and emerging challenges.

Different from CERT-In Directives: NCRF is distinct from the directives that the Indian Computer Emergency Response Team (CERT-In) published in April 2023. It provides a comprehensive framework rather than specific directives for reporting cyber incidents.

Combination of robust strategies: National Cyber Security Reference Framework 2023 will provide strategic guidance, a revised structure, and a proactive approach to cybersecurity, enabling organisations to better tackle the growing number of cyberattacks in India and safeguard critical sectors.

Rising incidents of malware attacks on critical sectors

In recent years, there has been a significant increase in malware attacks targeting critical sectors. These sectors, including banking, energy, healthcare, telecommunications, transportation, strategic enterprises, and government enterprises, play a crucial role in the functioning of economies and the well-being of societies. The escalating incidents of malware attacks on these sectors have raised concerns about the security and resilience of critical infrastructure.

Banking: The banking sector handles sensitive financial data and is a prime target for cybercriminals due to the potential for financial fraud and theft.

Energy: The energy sector, including power grids and oil companies, is critical for the functioning of economies, and disruptions can have severe consequences for national security and public safety.

Healthcare: The healthcare sector holds valuable patient data, and cyber-attacks can compromise patient privacy and disrupt healthcare services. Malware attacks on healthcare organisations can result in the theft of patient records, ransomware incidents that cripple healthcare operations, and compromised medical devices.

Telecommunications: Telecommunications infrastructure is vital for reliable communication, and attacks targeting this sector can lead to communication disruptions and compromise the privacy of transmitted data. The interconnectedness of telecommunications networks globally presents opportunities for cybercriminals to launch large-scale attacks, such as Distributed Denial-of-Service (DDoS) attacks.

Transportation: Malware attacks on transportation systems can lead to service disruptions, compromise control systems, and pose safety risks.

Strategic Enterprises: Strategic enterprises, including defence, aerospace, intelligence agencies, and other sectors vital to national security, face sophisticated malware attacks with potentially severe consequences. Cyber adversaries target these enterprises to gain unauthorised access to classified information, compromise critical infrastructure, or sabotage national security operations.

Government Enterprises: Government organisations hold a vast amount of sensitive data and provide essential services to citizens, making them targets for data breaches and attacks that can disrupt critical services.

Conclusion

The sectors of banking, energy, healthcare, telecommunications, transportation, strategic enterprises, and government enterprises face unique vulnerabilities and challenges in the face of cyber-attacks. By recognising the significance of safeguarding these sectors, we can emphasise the need for proactive cybersecurity measures and collaborative efforts between public and private entities. Strengthening regulatory frameworks, sharing threat intelligence, and adopting best practices are essential to ensure our critical infrastructure’s resilience and security. Through these concerted efforts, we can create a safer digital environment for these sectors, protecting vital services and preserving the integrity of our economy and society.

The rising incidents of malware attacks on critical sectors emphasise the urgent need for an updated cybersecurity policy, enhanced cybersecurity measures, collaboration between public and private entities, and the development of proactive defence strategies. National Cyber Security Reference Framework 2023 will help address the evolving cyber threat landscape, protect critical sectors, fill the gaps in sector-specific best practices, promote collaboration, establish a regulatory framework, and address the challenges posed by emerging technologies. By providing strategic guidance, this framework will enhance organisations’ cybersecurity posture and ensure the protection of critical infrastructure in an increasingly digitised world.