#FactCheck: Old US Troops Homecoming Video Falsely Linked to Iran Ceasefire

Executive Summary

Ceasefire talks between the United States and Iran, reportedly held in Islamabad on Saturday, ended without a resolution. Meanwhile, a video is circulating on social media with the claim that it shows US troops returning home following a ceasefire in the Middle East conflict.

However, research by CyberPeace found the claim to be false. The viral video is not linked to any recent ceasefire. It dates back to March and shows Iowa National Guard troops returning home after months of deployment in the Middle East.

Claim

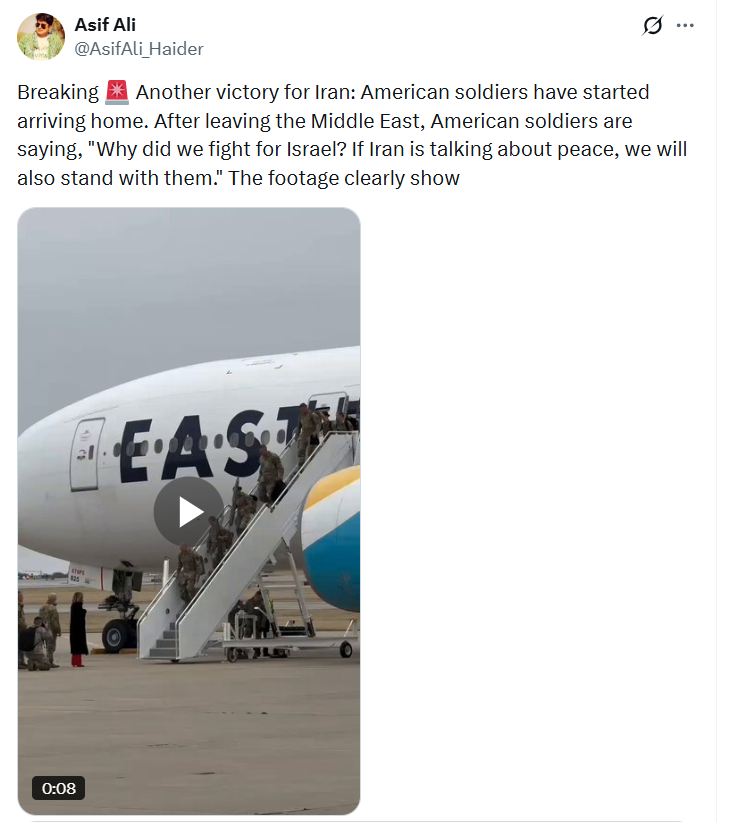

An X (formerly Twitter) user posted the video on April 7, 2026, claiming: “Another victory for Iran: American soldiers have started arriving home. After leaving the Middle East, American soldiers are saying, ‘Why did we fight for Israel? If Iran is talking about peace, we will also stand with them.’”

Fact Check

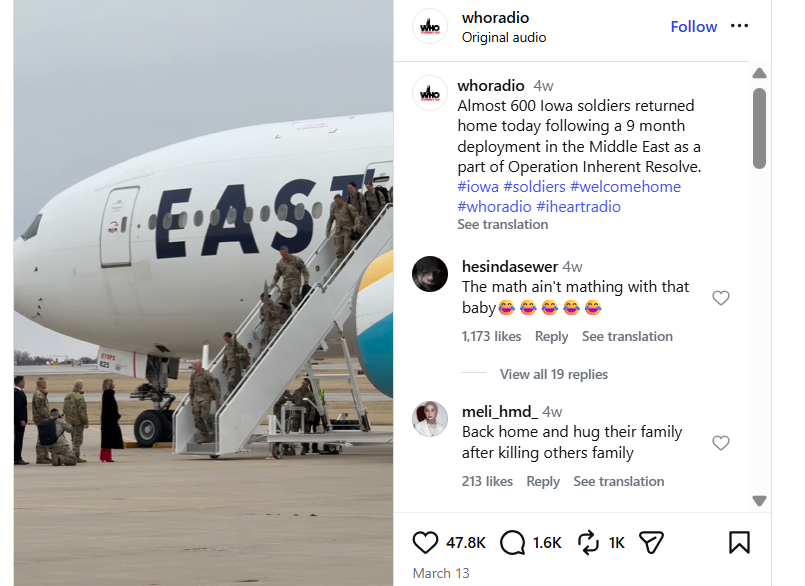

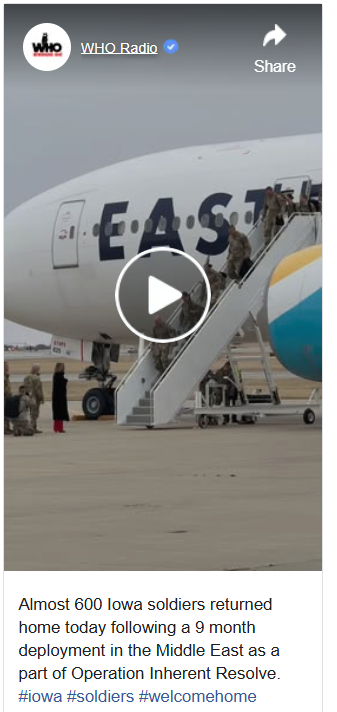

To verify the claim, we extracted keyframes from the viral video and ran a reverse image search using Google Lens. This led us to posts by Newsradio 1040 WHO, which had shared the same footage on Facebook and Instagram on March 12.

In its caption, the radio station stated that nearly 600 Iowa soldiers had returned home after a nine-month deployment in the Middle East as part of Operation Inherent Resolve. The segment, narrated by journalist Claire Burnett, explained that the soldiers belonged to the 2nd Brigade Combat Team, 34th Infantry Division, and had been deployed to Iraq and Syria. The footage was recorded at the 132nd Wing base of the Iowa Air National Guard in Des Moines.

For further confirmation, a March 12 report by KCCI 8 News showed the same aircraft and troops, confirming the authenticity and timeline of the footage.

Operation Inherent Resolve, launched in 2014, is a US-led campaign aimed at supporting local forces in the fight against the Islamic State (ISIS) and ensuring its lasting defeat.

https://www.kcci.com/article/iowans-welcome-national-guard-unit-home-from-deployment-in-middle-east/70729105

Conclusion

The viral claim is false and misleading. The video does not show US troops returning because of any recent ceasefire between the United States and Iran. Instead, it captures the routine March homecoming of Iowa National Guard soldiers who had completed a scheduled deployment in the Middle East. There is no evidence linking the footage to current geopolitical developments or to any ceasefire agreement. The video has been taken out of context and shared with a misleading narrative that creates confusion around ongoing international events.