#FactCheck- Old CCTV Video Falsely Shared as Killing of LeT’s Amir Hamza

Executive Summary

A CCTV video showing a man being shot is being widely circulated on social media with the claim that it depicts the killing of Lashkar-e-Taiba terrorist Amir Hamza in Pakistan. However, research by the CyberPeace Research Wing found that the claim is misleading. The viral video existed online even before the reported attack on Amir Hamza.

Claim

Social media users are sharing a CCTV clip claiming that Lashkar-e-Taiba terrorist Amir Hamza was shot dead in Pakistan.

Fact Check

To verify the claim, we first searched relevant keywords such as “Maulana Amir Hamza firing Lahore.” This led us to a report published on April 17, 2026, by The Hindu. Citing Pakistani channel 24 News HD TV, the report stated that unidentified attackers opened fire on the car of TV host Justice Nazir Ahmed Ghazi. Amir Hamza was injured in the incident, not killed.

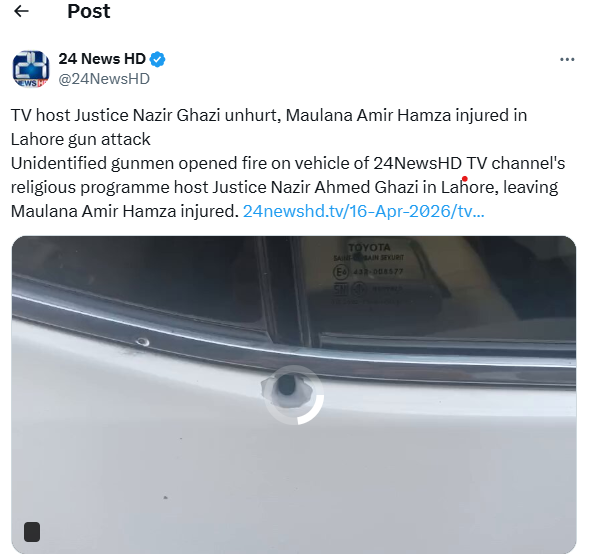

We also reviewed the official social media accounts of 24 News HD TV. A post on its X handle (@24NewsHD) confirmed that Justice Ghazi was safe, while Amir Hamza sustained injuries in the firing incident in Lahore.

For further verification, we extracted keyframes from the viral video and performed a reverse image search. The same clip was found uploaded on March 28, 2026, on a Pakistani Facebook page. According to the post, the CCTV footage was linked to the killing of an individual named Saifullah Malakhel.

Although we could not independently verify the exact origin of the video, our findings clearly indicate that the footage predates the recent attack on Amir Hamza and is unrelated to the incident.
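The timeline reasoning above can be expressed as simple date arithmetic. This is only an illustrative sketch: both dates come from this fact check, the publication date of The Hindu report is used as a stand-in for the date of the attack (an assumption), and `predates` is a hypothetical helper name.

```python
from datetime import date


def predates(upload: date, reference: date) -> bool:
    """Return True if the footage was already online before the reference date."""
    return upload < reference


# Dates taken from this fact check.
facebook_upload = date(2026, 3, 28)   # earliest upload found via reverse image search
report_published = date(2026, 4, 17)  # The Hindu report on the firing incident

# Number of days the clip was online before the report appeared.
gap_days = (report_published - facebook_upload).days
print(predates(facebook_upload, report_published), gap_days)
```

A clip that was online well before the reported incident cannot show that incident, whatever its true origin.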

Conclusion

The viral claim is false. Amir Hamza was not killed but reportedly injured in the firing incident, as per credible media reports. The CCTV video being shared in this context is old and unrelated, and has been circulated with a misleading narrative.

Related Blogs

Introduction

With the rise of AI deepfakes and manipulated media, it has become difficult for the average internet user to know what they can trust online. Synthetic media can have serious consequences, from the viral spread of election disinformation or medical misinformation to revenge porn and financial fraud. Recently, a Pune man lost ₹43 lakh when he invested money based on a deepfake video of Infosys founder Narayana Murthy. In another case, that of Babydoll Archi, a woman from Assam had her likeness deepfaked by an ex-boyfriend to create revenge porn.

Image or video manipulation used to leave observable traces. Online sources may advise examining the edges of objects in the image, checking for inconsistent patterns, lighting differences, observing the lip movements of the speaker in a video or counting the number of fingers on a person’s hand. Unfortunately, as the technology improves, such folk advice might not always help users identify synthetic and manipulated media.

The Coalition for Content Provenance and Authenticity (C2PA)

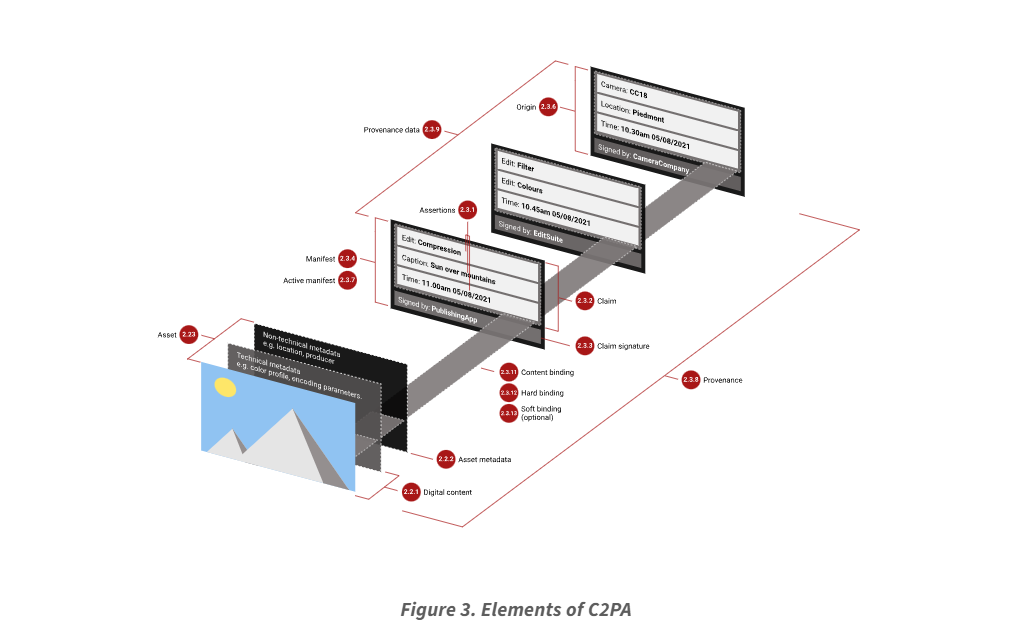

One interesting project in the area of trust-building under these circumstances has been the Coalition for Content Provenance and Authenticity (C2PA). Started in 2019 by Adobe and Microsoft, C2PA is a collaboration between major players in AI, social media, journalism, and photography, among others. It set out to create a standard that lets publishers prove the authenticity of digital media and track changes as they occur.

When photos and videos are captured, they generally store metadata such as the date and time of capture, the location, and the device they were taken on. C2PA developed a standard for sharing and checking the validity of this metadata, and for adding further layers of metadata whenever a user makes edits. This creates a digital record of every change made. Additionally, the original media is bundled with this metadata, which makes it easy to verify the source of the image and check whether the edits change the meaning or impact of the media. The standard allows different validation software, content publishers and content creation tools to interoperate in maintaining and displaying proof of authenticity.
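The idea of layering a new record on top of the existing ones for every edit can be sketched as a hash chain. This is a simplified illustration, not the actual C2PA manifest format: the field names (`action`, `actor`, `media_hash`, `prev`, `record_hash`) and the helpers `add_record` and `verify` are all hypothetical, and the sketch leaves out the digital signatures that the real standard relies on.

```python
import hashlib
import json


def digest(data: bytes) -> str:
    """SHA-256 hex digest of raw bytes."""
    return hashlib.sha256(data).hexdigest()


def add_record(chain: list, media: bytes, action: str, actor: str) -> list:
    """Return a new chain with one more record describing the latest version."""
    prev = chain[-1]["record_hash"] if chain else None
    record = {"action": action, "actor": actor,
              "media_hash": digest(media), "prev": prev}
    # Hash the record body so later tampering with any field is detectable.
    record["record_hash"] = digest(json.dumps(record, sort_keys=True).encode())
    return chain + [record]


def verify(chain: list, media: bytes) -> bool:
    """Check that the chain is intact and that it ends at the given media bytes."""
    prev = None
    for record in chain:
        body = {k: v for k, v in record.items() if k != "record_hash"}
        if record["prev"] != prev:
            return False
        if digest(json.dumps(body, sort_keys=True).encode()) != record["record_hash"]:
            return False
        prev = record["record_hash"]
    return bool(chain) and chain[-1]["media_hash"] == digest(media)


original = b"raw pixels from camera"
cropped = b"cropped pixels"
chain = add_record([], original, "captured", "camera")
chain = add_record(chain, cropped, "cropped", "photo editor")

print(verify(chain, cropped))        # True
print(verify(chain, b"screenshot"))  # False
```

Because each record stores the hash of the one before it, changing any earlier entry breaks every later link, and media whose bytes do not match the last record fails the check.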

The standard is intended to be used on an opt-in basis and can be likened to a nutrition label for digital media. Importantly, it does not limit the creativity of fledgling photo editors or generative AI enthusiasts; it simply provides consumers with more information about the media they come across.

Could C2PA be Useful in an Indian Context?

The World Economic Forum’s Global Risks Report 2024 identifies India as a significant hotspot for misinformation. The recent AI Regulation report by MeitY indicates an interest in tools for watermarking AI-based synthetic content to make harmful outcomes easier to detect and track. C2PA may be useful in this regard, as it takes a holistic approach to tracking media manipulation, even in cases where AI is not the medium.

Currently, 26 India-based organisations, such as the Times of India and Truefy AI, have signed up to the Content Authenticity Initiative (CAI), a community that contributes to the development and adoption of tools and standards like C2PA. However, people increasingly rely on WhatsApp and Instagram as sources of information, and these platforms, both owned by Meta, have not yet implemented the standard in their products.

India also has low digital literacy rates and low resistance to misinformation. Part of the challenge would be showing people how to read this nutrition label, to empower people to make better decisions online. As such, C2PA is just one part of an online trust-building strategy. It is crucial that education around digital literacy and policy around organisational adoption of the standard are also part of the strategy.

The standard is also not foolproof. Current iterations may still struggle when presented with screenshots of digital media and other non-technical digital manipulation. Linking media to their creator may also put journalists and whistleblowers at risk. Actual use in context will show us more about how to improve future versions of digital provenance tools, though these improvements are not guarantees of a safer internet.

The largest advantage of C2PA adoption would be the democratisation of fact-checking infrastructure. Since media is shared at a significantly faster rate than it can be verified by professionals, putting the verification tools in the hands of people makes the process a lot more scalable. It empowers citizen journalists and leaves a public trail for any media consumer to look into.

Conclusion

From basic colour filters that make a scene more engaging, to removing a crowd from a social media post, to editing together videos of a politician to make it sound like they are singing a song, we are accustomed to seeing the media we consume altered in some way. C2PA is just one way to bring transparency to how media is altered. It is not a one-stop solution, but it is a viable starting point for creating a fairer and more democratic internet and increasing trust online. While there are risks to its adoption, it is promising to see that organisations across different sectors are collaborating on this project to be more transparent about the media we consume.

References

- https://c2pa.org/

- https://contentauthenticity.org/

- https://indianexpress.com/article/technology/tech-news-technology/kate-middleton-9-signs-edited-photo-9211799/

- https://photography.tutsplus.com/articles/fakes-frauds-and-forgeries-how-to-detect-image-manipulation--cms-22230

- https://www.media.mit.edu/projects/detect-fakes/overview/

- https://www.youtube.com/watch?v=qO0WvudbO04&pp=0gcJCbAJAYcqIYzv

- https://www3.weforum.org/docs/WEF_The_Global_Risks_Report_2024.pdf

- https://indianexpress.com/article/technology/tech-news-technology/ai-law-may-not-prescribe-penal-consequences-for-violations-9457780/

- https://thesecretariat.in/article/meity-s-ai-regulation-report-ambitious-but-no-concrete-solutions

- https://www.ndtv.com/lifestyle/assam-what-babydoll-archi-viral-fame-says-about-india-porn-problem-8878689

- https://www.meity.gov.in/static/uploads/2024/02/9f6e99572739a3024c9cdaec53a0a0ef.pdf

Introduction

According to a new McAfee survey, 88% of American consumers believe that cybercriminals will use artificial intelligence to "create compelling online scams" over the festive period. Meanwhile, 31% believe it will be more difficult to determine whether messages from merchants or delivery services are genuine, while 57% believe phishing emails and texts will be more credible. The study, which was conducted in September 2023 in the United States, Australia, India, the United Kingdom, France, Germany, and Japan, yielded 7,100 responses. Some people may decide to cut back on their online shopping as a result of their worries about AI; among those surveyed, 19% stated they would do so this year.

In 2024, McAfee predicts a rise in AI-driven scams on social media, with cybercriminals using advanced tools to create convincing fake content, exploiting celebrity and influencer identities. Deepfake technology may worsen cyberbullying, enabling the creation of realistic fake content. Charity fraud is expected to rise, leveraging AI to set up fake charity sites. AI's use by cybercriminals will accelerate the development of advanced malware, phishing, and voice/visual cloning scams targeting mobile devices. The 2024 Olympic Games are seen as a breeding ground for scams, with cybercriminals targeting fans for tickets, travel, and exclusive content.

AI Scams' Increase on Social Media

Cybercriminals are expected to use powerful artificial intelligence tools to exploit social media in 2024. Because these tools make it possible to create realistic images, videos, and audio, social platforms become goldmines for scammers. Expect cybercriminals to exploit the identities of influencers and other popular figures.

AI-powered Deepfakes and the Rise in Cyberbullying

One trend to be concerned about in 2024 is the darker turn that cyberbullying could take with deepfake technology. These tools are freely accessible to young people, who can use them to produce eerily convincing synthetic content that compromises victims' privacy, identity, and well-being.

In addition to spreading false information, cyberbullies can alter public photographs and re-share the edited versions, which worsens the suffering of children and their families. The study warns that deepfake technology will probably make online harassment worse: young people can now use these sophisticated tools not just for fun but to generate frighteningly realistic synthetic content. As such deceptive images and words grow more severe, they can cause serious, long-lasting harm to children and their families, damaging their identity, privacy, and overall well-being.

Evolution of GenAI Fraud in 2023

Persistent scams and fake emails show no sign of going away. People in general are now fairly adept at recognizing the ones that are used widely. But as scams become more targeted, for example by using AI-generated audio that sounds like a loved one's distress call or by including information that is highly personal to the victim, users should be much more cautious. The rise in popularity of generative AI brings a new wrinkle, as hackers can use these systems to refine their attacks:

- Writing messages more skillfully in order to deceive consumers into sending sensitive information, clicking on a link, or uploading a file.

- Recreating emails and business websites as realistically as possible to avoid arousing the suspicion of victims.

- Cloning people's faces and voices to create audio or visual deepfakes that the target audience cannot detect, a problem that has the potential to greatly amplify schemes like CEO fraud.

- Holding conversations with victims and responding to them efficiently, since generative AI can now sustain a dialogue.

- Conducting psychological manipulation campaigns more quickly and at lower cost, with greater sophistication that makes them harder to detect. Generative AI tools already on the market can write text, clone voices, generate images, and program websites.

AI Hastens the Development of Malware and Scams

Although artificial intelligence (AI) has many legitimate uses, it is also making cybercriminals more and more dangerous. AI facilitates the rapid creation of sophisticated malware, malicious web pages, and plausible phishing emails and smishing texts. As these capabilities become more accessible, mobile devices will be attacked more frequently, with a particular emphasis on audio and visual impersonation schemes.

Olympic Games: A Haven for Scammers

The 2024 Olympic Games are seen as a breeding ground for scams, with cybercriminals targeting fans for tickets, travel, and exclusive content. Cybercriminals are skilled at profiting from big occasions, and the worldwide buzz surrounding the 2024 Olympic Games will make it an ideal time for scams. Con artists will take advantage of the excitement by focusing on fans who are ready to purchase tickets, arrange travel, obtain special content, and take part in giveaways. During this prominent event, vigilance is essential to avoid the compromise of personal and financial data.

McAfee’s Own Bot to Help Users Screen the Messages They Receive for Potential Scams

McAfee is developing precisely this kind of technology. It is important to emphasize that solving the problem is a continuous process. Bad actors are also using AI, and one trick they can pull off is to use data on which ruses consumers fall for as signals to train more advanced algorithms. Con artists can thus deploy these tools, test them on large user bases, and improve them over time.

Conclusion

According to the McAfee report, 88% of American consumers are concerned about AI-driven online scams targeting them around the holidays. Social media poses a growing threat to users' privacy. In 2024, cybercriminals are expected to exploit AI capabilities and use deepfake technology to exacerbate harassment. By mimicking voices and faces for intricate schemes, generative AI enables more complex fraud. The surge in charity fraud has both social and financial effects, and the 2024 Olympic Games could serve as a haven for scammers. McAfee's development of a screening bot highlights the ongoing struggle against evolving AI threats and the need for continuous adaptation and greater user awareness in order to combat increasingly complex cyber deception.

References

- https://www.fonearena.com/blog/412579/deepfake-surge-ai-scams-2024.html

- https://cxotoday.com/press-release/mcafee-reveals-2024-cybersecurity-predictions-advancement-of-ai-shapes-the-future-of-online-scams/#:~:text=McAfee%20Corp.%2C%20a%20global%20leader,and%20increasingly%20sophisticated%20cyber%20scams.

- https://timesofindia.indiatimes.com/gadgets-news/deep-fakes-ai-scams-and-other-tools-cybercriminals-could-use-to-steal-your-money-and-personal-details-in-2024/articleshow/106126288.cms

- https://digiday.com/media-buying/mcafees-cto-on-ai-and-the-cat-and-mouse-game-with-holiday-scams/

Digital vulnerabilities like cyber-attacks and data breaches proliferate rapidly in today's hyper-connected world. These vulnerabilities can compromise sensitive data such as personal information, financial data, and intellectual property, and can threaten businesses of all sizes and in all sectors. Hence, it has become important to inform all stakeholders about any breach or attack so that they can be well-prepared for the consequences of such an incident.

Failure to report a breach can result in heavy fines in many parts of the world. Data breaches caused by malicious acts are crimes and need proper investigation. Organisations that fail to report such incidents may face significant penalties, huge financial setbacks, and legal complications. Understanding the regulatory framework that governs data breaches is the first step towards understanding why transparency is vital.

The Current Indian Regulatory Framework on Data Breach Disclosure

A data breach is, essentially, the unauthorised processing or accidental disclosure of personal data, which may occur through its acquisition, sharing, use, alteration, destruction, or loss of access. Such incidents can compromise the affected data’s confidentiality, integrity, or availability. In India, the Information Technology Act of 2000 and the Digital Personal Data Protection Act of 2023 are the primary legislation that tackles cybercrimes like data breaches.

- Under the DPDP Act, neither materiality thresholds nor express timelines have been prescribed for the reporting requirement. Data Fiduciaries are required to report incidents of personal data breach, regardless of their sensitivity or impact on the Data Principal.

- The IT (Indian Computer Emergency Response Team and Manner of Performing Functions and Duties) Rules, 2013 and the IT (Reasonable Security Practices and Procedures and Sensitive Personal Data or Information) Rules, 2011, together with the Cyber Security Directions issued in 2022 under section 70B(6) of the IT Act, 2000 (relating to information security practices, procedure, prevention, response and reporting of cyber incidents for Safe & Trusted Internet), impose mandatory notification requirements on service providers, intermediaries, data centres and corporate entities upon the occurrence of certain cybersecurity incidents.

- These laws and regulations obligate companies to report any breach and any incident to regulators such as the CERT-In and the Data Protection Board.

The Consequences of Non-Disclosure

Non-disclosure of a data breach has manifold consequences. They are as follows:

- Legal and financial penalties are the immediate consequence of failing to disclose a data breach in India. The DPDP Act prescribes a fine of up to Rs 250 crore, and companies may also face civil suits from the affected parties and regulatory scrutiny. Non-compliance can also attract action from CERT-In, leading to further reputational damage.

- In the long term, failure to disclose data breaches can erode customer trust as they are less likely to engage with a brand that is deemed unreliable. Investor confidence may potentially waver due to concerns about governance and security, leading to stock price drops or reduced funding opportunities. Brand reputation can be significantly tarnished, and companies may struggle with retaining and attracting customers and employees. This can affect long-term profitability and growth.

- Companies such as BigBasket and Jio in 2020 and Haldiram in 2022 have suffered from data breaches recently. Poor transparency and delay in disclosures led to significant reputational damage, legal scrutiny, and regulatory actions for the companies.

Measures for Improvement: Building Corporate Reputation via Transparency

Transparency is critical when disclosing data breaches. A company that visibly prioritises its stakeholders' data privacy earns greater trust and loyalty. Transparency also mitigates backlash and demonstrates a company’s willingness to cooperate with authorities. A farsighted approach instils confidence in all stakeholders by showcasing a company's resilience and commitment to governance. These measures can be further improved upon by:

- Offering actionable steps for companies to establish robust data breach policies, including regular audits, prompt notifications, and clear communication strategies.

- Highlighting the importance of cooperation with regulatory bodies and how to ensure compliance with the DPDP Act and other relevant laws.

- Sharing best public communications practices post-breach to manage reputational and legal risks.

Conclusion

Maintaining transparency when a data breach happens is more than a legal obligation. It is also a sound strategy for protecting corporate reputation. Companies can mitigate the potential legal, financial and reputational risks by proactively informing stakeholders and cooperating with regulatory bodies. In an era where digital vulnerabilities are ever-present, clear communication and compliance with data protection laws such as the DPDP Act build trust, enhance corporate governance, and secure long-term business success. Proactive measures, including audits, breach policies, and effective public communication, are critical in reinforcing resilience and fostering stakeholder confidence in the face of cyber threats.

References

- https://www.meity.gov.in/writereaddata/files/Digital%20Personal%20Data%20Protection%20Act%202023.pdf

- https://www.cert-in.org.in/PDF/CERT-In_Directions_70B_28.04.2022.pdf

- https://chawdamrunal.medium.com/the-dark-side-of-covering-up-data-breaches-why-transparency-is-crucial-fe9ed10aac27

- https://www.dlapiperdataprotection.com/index.html?t=breach-notification&c=IN