#FactCheck - Viral Video Falsely Linked to India; Actually from Bangladesh

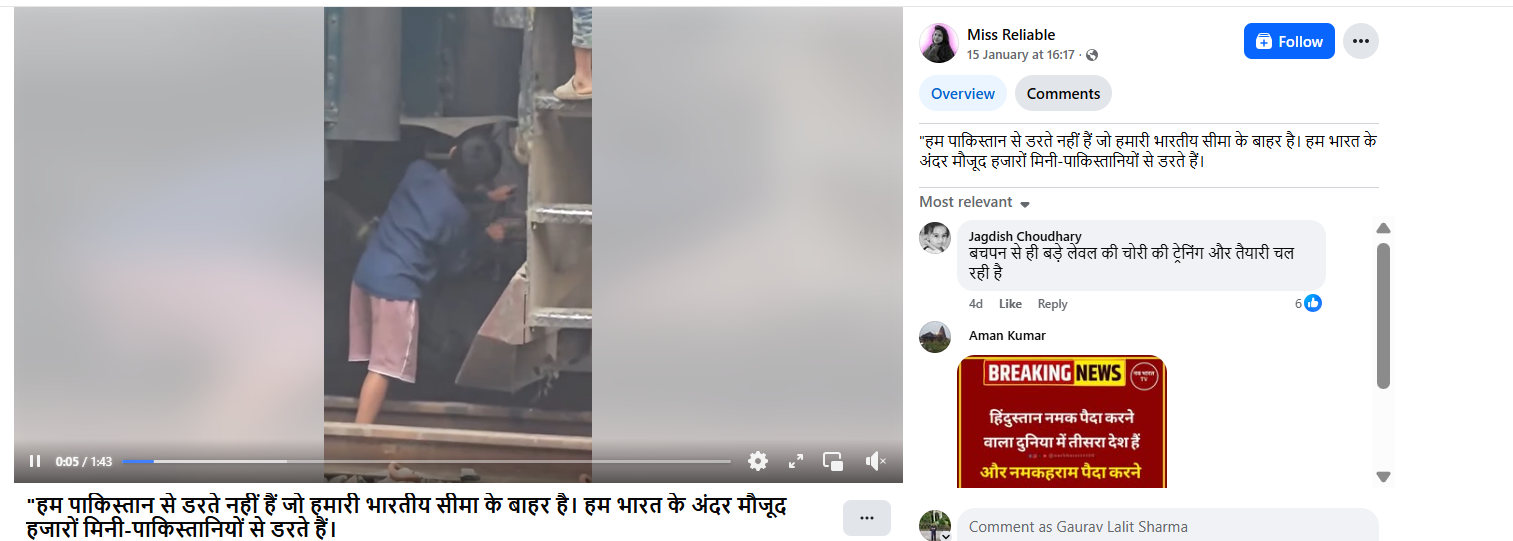

A video circulating widely on social media shows a child throwing stones at a moving train, while a few other children can also be seen climbing onto the engine. The video is being shared with a communal narrative, with claims that the incident took place in India.

CyberPeace Foundation’s research found the viral claim to be misleading: the video is not from India but from Bangladesh, and it is being falsely linked to India on social media.

Claim:

On January 15, 2026, a Facebook user shared the viral video claiming it depicted an incident from India. The post carried a provocative caption stating, “We are not afraid of Pakistan outside our borders. We are afraid of the thousands of mini-Pakistans within India.” The post has been widely circulated, amplifying communal sentiments.

Fact Check:

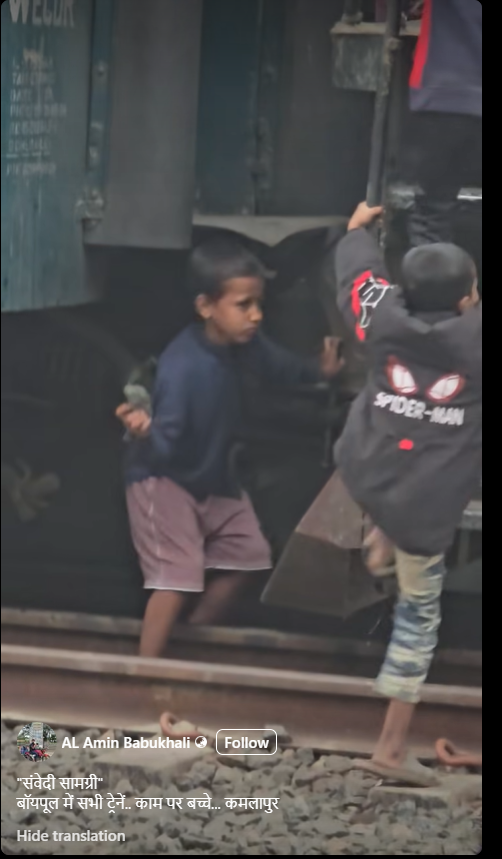

To verify the video, we extracted keyframes from the viral clip and ran a reverse image search on them using Google Lens. This led us to the same video, uploaded on December 28, 2025, by a Bangladeshi Facebook account named AL Amin Babukhali. The caption of the original post mentions Kamalapur, a well-known railway station in Dhaka, Bangladesh. This strongly indicates that the incident did not occur in India.

Further analysis shows that the train engine carries the marking “BR” along with text in Bengali. “BR” stands for Bangladesh Railway, which confirms the origin of the train. To corroborate this, we searched Google for images of Bangladesh Railway locomotives and found multiple images on Getty Images showing engines with the same design and markings as the one in the viral video. This visual match establishes that the train belongs to Bangladesh Railway.

Conclusion

Our research confirms that the viral video is from Bangladesh, not India. It is being shared on social media with a false and misleading claim that links it to India in order to give it a communal angle.

Related Blogs

Introduction

In September 2025, social media feeds were flooded with strikingly vintage-style saree portraits. These images were not taken by professional photographers; they were generated by AI. More than a million people turned to Google Gemini's "Nano Banana" AI tool, uploading ordinary selfies and watching them turn into cinematic, 1990s Bollywood-style posters. The trend's popularity is evident, but so are the concerns of law enforcement agencies and cybersecurity experts about privacy infringement, unauthorised data sharing, and deepfake misuse.

What is the Trend?

The AI saree trend uses Google Gemini's Nano Banana image-editing tool, which edits and morphs uploaded selfies into glitzy vintage portraits in traditional Indian attire. A user uploads a clear photograph of a single subject and enters prompts describing cinematic backgrounds, flowing chiffon sarees, golden-hour lighting, and grainy film texture reminiscent of classic Bollywood imagery. Since its launch, the tool has processed over 500 million images, and the saree trend is among its most popular uses. The uploaded photograph is processed by an AI model that uses machine learning to alter it according to the user's description. Users then share the transformed portraits on Instagram, WhatsApp, and other social media platforms, which fuels the trend's viral spread.

Law Enforcement Agency Warnings

- Several Indian police agencies have issued strong advisories against participating in such trends. IPS Officer VC Sajjanar warned the public: "The uploading of just one personal photograph can make greedy operators go from clicking their fingers to joining hands with criminals and emptying one's bank account." His advisory further warned that sharing personal information through trending apps can lead to various scams and frauds.

- Jalandhar Rural Police issued a comprehensive warning that uploading personal pictures to such applications puts users at risk of identity theft and online fraud. A senior police officer stated: "Once sensitive facial data is uploaded, it can be stored, analysed, and even potentially misused to open the way for cyber fraud, impersonation, and digital identity crimes."

- The Cyber Crime Police also posted warnings on social media that photo applications may seem entertaining but can pose serious risks to user privacy. They specifically warned that uploaded selfies can lead to data misuse, deepfake creation, and the generation of fake profiles, offences punishable under Sections 66C (identity theft) and 66D (cheating by personation using a computer resource) of the IT Act, 2000.

Consequences of Such Trends

The mass adoption of AI photo trends has serious consequences for individual users and for society as a whole. Identity fraud and theft are the main issues, as attackers can use uploaded biometric information to create fake identities that evade security checks or enable financial fraud. Facial data shared through these trends remains a digital asset that could be abused years after the trend has passed. Deepfake production is another major threat, because personal images shared on AI platforms can be used to create non-consensual synthetic media. Studies have found that more than 95,000 deepfake videos circulated online in 2023 alone, a 550% increase from 2019. Uploaded images can be used to produce embarrassing or harmful content that damages a person's reputation, relationships, and career prospects.

Financial exploitation occurs when fake applications posing as genuine AI tools harvest users' personal data and financial details. These malicious platforms often imitate well-known services to trick users into divulging sensitive information. Long-term privacy infringement can also result when AI firms retain personal biometric data permanently and possibly exploit it commercially, even after users close their accounts.

Privacy Risks

A few months earlier, the Ghibli-style image trend went viral; now this new trend has taken over. Such trends can expose users to several layers of privacy threats that go far beyond the instant gratification of a flattering image. Biometric data harvesting is the most critical issue, since facial data posted on these sites becomes inextricably linked to users' identities. Under Google's privacy policy for Gemini tools, uploaded images may be stored temporarily for processing and may be kept longer if used for feedback purposes or feature development.

Unauthorised data sharing happens when AI platforms pass user-uploaded content to third parties without user consent. A 2023 Mozilla Foundation study found that 80% of popular AI apps either had non-transparent data policies or obscured users' ability to opt out of data collection. This creates opportunities for personal photographs to be shared with unknown entities for commercial use. Training-data exploitation involves using uploaded personal photos to improve AI models without notifying or compensating users. Although Google lets users turn off data sharing in its privacy settings, most users are unaware of these options.

Cross-platform data integration compounds these threats when AI applications draw on data from linked social media profiles, building detailed user profiles that can be exploited for targeted manipulation or fraud. Inadequate informed consent remains a major problem, as users join trends without understanding the full context in which their information is shared. Studies show that 68% of people are concerned about the misuse of AI app data, yet 42% use these apps without reading the terms and conditions.

CyberPeace Expert Recommendations

While Google Gemini's image-editing feature operates under its own terms and conditions, many other tools and applications let users generate similar content. Not every platform can be trusted without scrutiny, so users who join such trends should use only trustworthy platforms and make informed choices. Above all, following cybersecurity best practices and digital security principles remains essential.

Here are some best practices:

1. Immediate Protection Measures for Users

Protecting personal information begins with not uploading high-resolution personal photos to AI-based applications, especially those built for facial recognition. Instead, users can experiment with stock images or non-identifiable pictures, which let them enjoy the app's creative features without compromising their biometric security. Users should also apply strong privacy settings on every social media platform and AI app to limit who can access their data and content.

2. Organisational Safeguards

Organisational AI governance frameworks should set out policies on employees' use of AI tools, particularly regarding the upload of personal data. Before adopting a commercially available AI product, companies should carry out due diligence to confirm that its privacy and security standards meet their requirements. Employee training should also cover deepfake technology.

3. Technical Protection Strategies

Deepfake detection software should be used. Tools such as Microsoft Video Authenticator, Intel FakeCatcher, and Sensity AI offer real-time detection, with reported accuracy above 95%. Blockchain-based content verification can create tamper-proof records of original digital assets, making it much harder to pass off deepfake content as original.
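To make the blockchain-based verification idea more concrete, here is a minimal Python sketch of the core mechanism such systems typically rely on: the SHA-256 fingerprint of each original asset is appended to a hash-chained record, so altering either the asset or a stored record becomes detectable. It is an illustration under stated assumptions, not any specific product: it runs on a single machine with no replication or consensus (unlike a real blockchain), and the `ProvenanceLedger` class and its method names are hypothetical.

```python
import hashlib
import json
import time
from dataclasses import dataclass, field


@dataclass
class ProvenanceLedger:
    """Hypothetical append-only ledger of content fingerprints.

    Each record stores the SHA-256 digest of an original asset and the hash
    of the previous record, so editing an earlier record breaks the chain.
    Assumption: single-node sketch; a real blockchain would also replicate
    records across parties and use a consensus protocol.
    """
    records: list = field(default_factory=list)

    @staticmethod
    def _record_hash(record: dict) -> str:
        # Hash a canonical JSON encoding so dictionary key order is irrelevant.
        payload = json.dumps(record, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def register(self, asset: bytes, owner: str) -> str:
        """Append a record for an original asset and return its digest."""
        digest = hashlib.sha256(asset).hexdigest()
        prev_hash = self._record_hash(self.records[-1]) if self.records else "0" * 64
        self.records.append({
            "content_sha256": digest,
            "owner": owner,
            "timestamp": time.time(),
            "prev_hash": prev_hash,
        })
        return digest

    def is_registered(self, asset: bytes) -> bool:
        """True only if these exact bytes were registered as an original."""
        digest = hashlib.sha256(asset).hexdigest()
        return any(r["content_sha256"] == digest for r in self.records)

    def chain_is_intact(self) -> bool:
        """Check that every record still points at its predecessor's hash.

        The newest record is protected only once another record is chained
        after it (or its hash is published somewhere external).
        """
        return all(
            self.records[i]["prev_hash"] == self._record_hash(self.records[i - 1])
            for i in range(1, len(self.records))
        )


if __name__ == "__main__":
    ledger = ProvenanceLedger()
    photo = b"bytes of an original photo"  # placeholder content
    ledger.register(photo, owner="newsroom")
    ledger.register(b"bytes of a second asset", owner="newsroom")
    print(ledger.is_registered(photo))            # True
    print(ledger.is_registered(photo + b"edit"))  # False: any change alters the digest
    ledger.records[0]["owner"] = "attacker"       # tamper with a stored record
    print(ledger.chain_is_intact())               # False
```

One design limit is worth noting: exact hashing only proves that a file is byte-identical to a registered original, so even a harmlessly re-compressed copy fails the check. Practical provenance systems therefore usually attach signed metadata to such records rather than relying on raw hashes alone.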

4. Policy and Awareness Initiatives

For high-risk transactions, especially in banking and identity verification systems, authentication should include voice and face liveness checks to ensure that the person is real and not using fake or manipulated media. Digital literacy programmes should equip users with knowledge of AI threats, deepfake detection techniques, and safe digital practices. Companies should also work with law enforcement by reporting suspected AI-enabled crimes, helping to combat malicious uses of synthetic media technology.

5. Addressing Data Transparency and Cross-Border AI Security

Regulatory frameworks should require AI applications to be transparent about their data policies and give users rights and choices over their biometric and other personal data. Indigenous AI development that addresses India-specific privacy concerns should be promoted, so that AI models are built in a secure, transparent, and accountable manner. To address cross-border AI security concerns, international cooperation is needed to set common standards for the ethical design, development, and use of AI.

The viral spread of AI phenomena such as the saree-editing trend shows both the potential and the hazards of today's artificial intelligence. While such tools offer new opportunities, they also raise grave privacy and security concerns that users, organisations, and policymakers should have addressed long ago. By putting comprehensive protection mechanisms in place and staying vigilant about digital privacy, individuals and institutions can reap the benefits of AI innovation without falling prey to malicious exploitation.

References

- https://www.hindustantimes.com/trending/amid-google-gemini-nano-banana-ai-trend-ips-officer-warns-people-about-online-scams-101757980904282.html

- https://www.moneycontrol.com/news/india/viral-banana-ai-saree-selfies-may-risk-fraud-warn-jalandhar-rural-police-13549443.html

- https://www.parliament.nsw.gov.au/researchpapers/Documents/Sexually%20explicit%20deepfakes.pdf

- https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai-in-2023-generative-ais-breakout-year

- https://socradar.io/top-10-ai-deepfake-detection-tools-2025/

Introduction

India’s telecommunications infrastructure is one of the world’s largest and most complex, serving over a billion users across urban and rural areas. With rapid digitisation and rising mobile penetration, telecom networks have become far more vulnerable to cyber threats. On April 24, 2025, the Ministry of Communications (MOC) released a draft of the “Telecommunications (Telecom Cyber Security) Amendment Rules, 2025,” which updates the Telecommunications (Telecom Cyber Security) Rules, 2024, to improve cybersecurity and fortify network security in India's telecom industry. The draft has been opened for public consultation, with comments and recommendations due to the department by July 24, 2025. The rules are framed under the Telecommunications Act, 2023, to enhance national cybersecurity in the telecom domain, and aim to prevent misuse of telecom networks and reinforce data and infrastructure protection mechanisms across service providers.

Safeguarding the Spectrum: Unpacking the 2025 Cybersecurity Revisions

Fraudulent SIM cards add a fresh dimension to cyber threats. The rise in digital scams can also be attributed to unverified or fake mobile numbers. Fraudulent SIM cards have often been linked to cybercrimes such as phishing, vishing, SIM swapping and identity theft, and the easy availability of pre-activated SIM cards and weak KYC enforcement have worsened the situation. In a recent example, reported on June 28, 2025, the Uttarakhand Special Task Force (STF) found that the accused was running a criminal network that used fake documents and the Aadhaar credentials of law-abiding locals to activate numerous SIM cards. After activation, the SIMs were either transferred to other telecom carriers for further exploitation or sold illegally. This raises serious data-protection concerns for vulnerable individuals, especially in rural areas, whose credentials have been compromised.

Given the poor state of cybersecurity in the telecom industry, the Telecommunications (Telecom Cyber Security) Rules, 2024, were notified on 22 November 2024. They obliged telecom entities to actively prevent cybersecurity threats by adopting policies that mitigate cybersecurity risks and notifying these to the Central Government. The 2024 rules were a significant step in fortifying India’s telecom infrastructure against cyber threats, but they focused primarily on licensed telecom service providers, leaving a large segment of digital platforms that operate outside the traditional telecom framework largely unregulated.

Expanding the Net: Key Revisions Under the 2025 Cybersecurity Amendment Rules

The 2025 amendment rules seek to close the regulatory blind spot created by the rapid expansion of online services, fintech apps, OTT platforms and social media networks, which often rely on telecom identifiers such as mobile numbers for user onboarding and service delivery. This blind spot has been exploited for fraud, impersonation and other cybercrimes, especially in the absence of standardised identity verification mechanisms. The proposed rules would give the government the authority to require private companies to verify the identity of clients who use a mobile number with their services. Businesses may also undertake such verification voluntarily, for a fee. The draft rules introduce a new category, “Telecommunication Identifier User Entities” (TIUEs), extending cybersecurity compliance obligations to any entity that uses telecom identifiers to deliver digital services. They also create a unified, government-backed verification framework that enables better interoperability and uniform user-identification norms across sectors.

While the Telecommunications (Telecom Cyber Security) Amendment Rules, 2025, aim to strengthen national digital security, they create considerable uncertainty and compliance difficulties, especially for private digital platforms. The broad definition of Telecommunication Identifier User Entities (TIUEs) could bring a wide range of businesses, including e-commerce services, fintech apps and OTT platforms, under mandatory mobile number verification. Since many platforms already have advanced internal processes to verify users, this uncertainty over scope raises significant concerns about operational clarity.

Conclusion

The Telecommunications (Telecom Cyber Security) Amendment Rules, 2025, represent a necessary evolution in India’s effort to secure its telecom ecosystem amid growing cyber threats. By broadening the legal scope to cover Telecommunication Identifier User Entities (TIUEs), the draft regulations recognise the evolving landscape of digital services. Although the goal of a strong, transparent and accountable framework is admirable, the vague scope and the potential compliance burden on digital platforms call for further clarification and stakeholder consultation. A truly durable telecom cybersecurity regime will need to strike the right balance between security, viability and privacy.

References

- https://www.cyberpeace.org/resources/blogs/the-government-enforces-key-sections-of-the-telecommunication-act-2023

- https://www.cyberpeace.org/resources/blogs/govt-notifies-the-telecommunications-telecom-cyber-security-rules-2024

- https://the420.in/uttarakhand-stf-busts-fake-sim-racket-linked-to-cyber-crimes-and-nepal-network/

- https://www.thehindu.com/business/dot-puts-out-draft-rules-to-enable-mobile-user-validation/article69741367.ece

- https://www.scconline.com/blog/post/2025/06/28/dot-telecom-cyber-security-draft-policy-update/

Executive Summary:

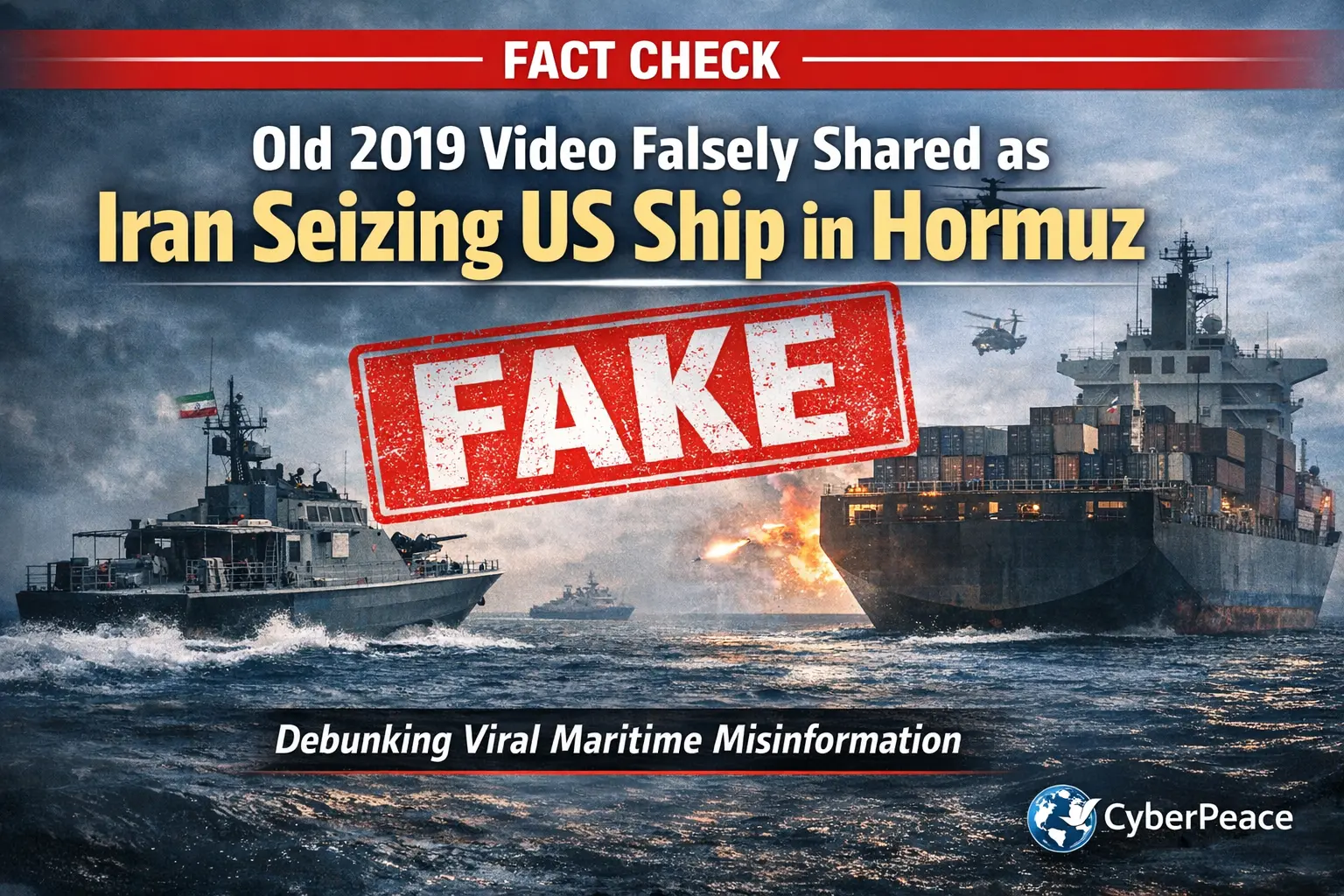

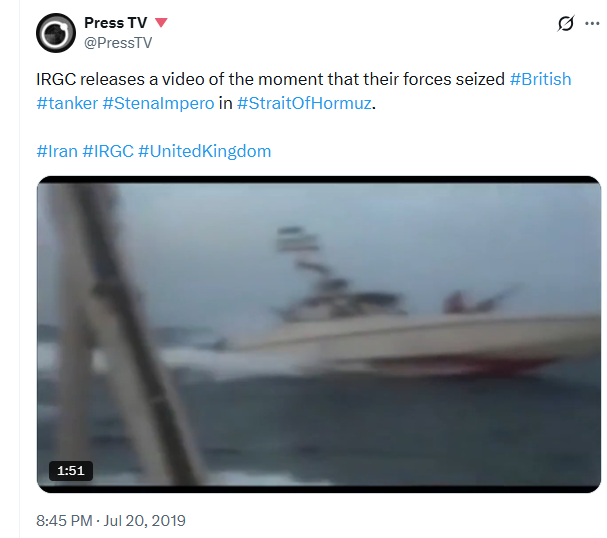

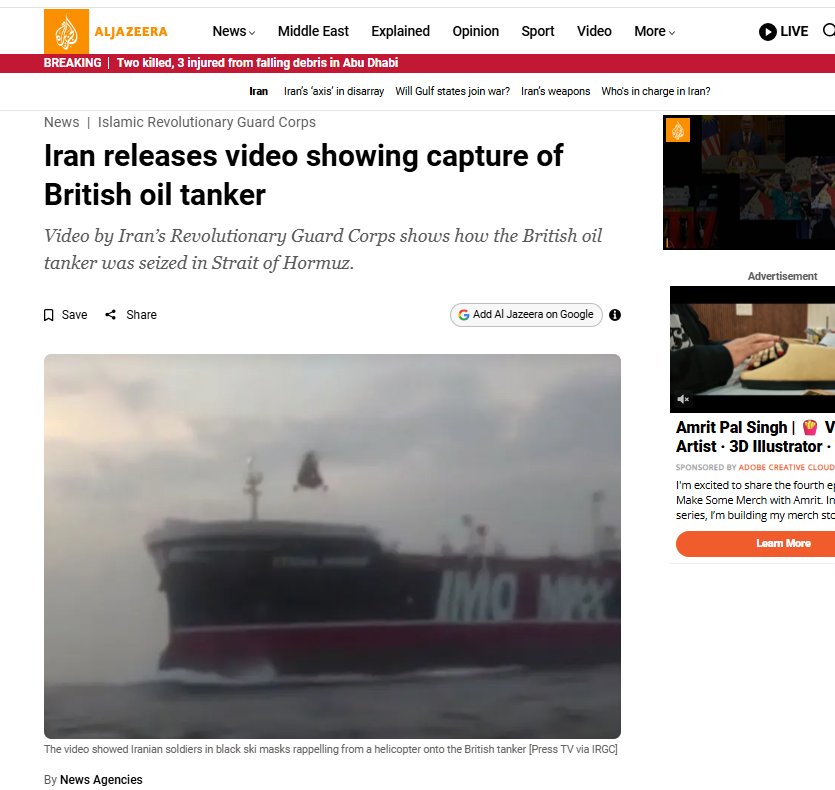

Amid the ongoing tensions in West Asia, a video is being widely circulated on social media with the claim that Iran has seized a US ship in the Strait of Hormuz. However, research by CyberPeace found that the claim is false. The video is from 2019 and is unrelated to the current situation: it shows Iran’s Islamic Revolutionary Guard Corps (IRGC) seizing the British-flagged tanker Stena Impero. The conflict involving the United States, Israel and Iran, ongoing since late February, has raised concerns over global energy supplies. The Strait of Hormuz, located between Iran and Oman, is a key route for global oil and maritime trade. Rising tensions in the region have affected this route, although Iran has stated that the strait has not been completely closed.

Claim:

Users on X (formerly Twitter) are sharing the video as breaking news, claiming that Iran has captured a US ship in the Strait of Hormuz. The posts suggest that the move is a direct warning to the United States.

Fact Check:

To verify the claim, we extracted keyframes from the viral video and conducted a reverse image search. This led us to the same video posted on the X handle of Iran’s Press TV on July 20, 2019.

Link:

- https://x.com/PressTV/status/1152597789362262016?s=20

The caption of the post stated that the footage showed the moment when IRGC forces seized the British oil tanker Stena Impero in the Strait of Hormuz. Further, we found a July 2019 report by Al Jazeera that included visuals matching the viral video. According to the report, Iran’s IRGC had intercepted the British-flagged tanker on July 19, 2019, after which the footage was released.

https://www.aljazeera.com/news/2019/7/20/iran-releases-video-showing-capture-of-british-oil-tanker

Conclusion:

The viral claim is misleading. The video is not recent and does not show Iran capturing a US ship. It is from 2019 and depicts the seizure of the British tanker Stena Impero by Iran’s IRGC.