#FactCheck - AI-Generated Video of Peacock ‘Rescue’ Falsely Shared as Real

Executive Summary:

A video showing a peacock allegedly trapped in ice has been going viral on social media. In the clip, the peacock appears to be frozen in a snow-covered area. Moments later, a man is seen approaching with a hammer and breaking the ice to rescue the bird. Social media users are sharing the video as a real-life incident, praising the peacock’s resilience and describing the scene as inspiring. However, CyberPeace research found the viral claim to be misleading. Our research revealed that the video was created using Artificial Intelligence (AI) and is being falsely circulated as a real incident.

Claim:

Facebook user ‘Ras Bihari Pathak’ shared the viral video on January 25, 2026, with the caption: “This peacock is not standing on ice, but on courage. It reminds us that no matter how harsh the circumstances are, hope always returns in colours.” The archived version of the post can be accessed here.

Fact Check:

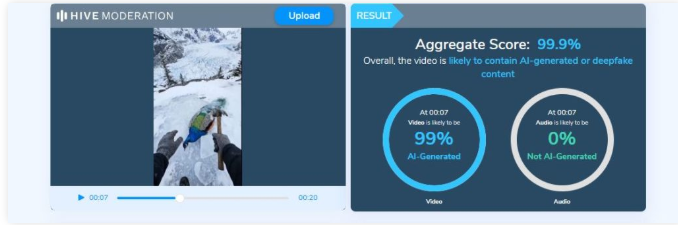

To verify the claim, we first conducted a keyword search on Google to check whether any such real incident involving a peacock trapped in ice had been reported. However, no credible or verified media reports were found. Next, we closely examined the viral video. Upon observation, the peacock’s movements and reactions appeared unnatural and artificial. The motion lacked realistic physical behaviour, raising suspicion that the video might have been digitally generated. To confirm this, we analysed the clip using the AI video detection tool Hive Moderation, which indicated a 99 per cent or higher likelihood that the video was AI-generated.

Conclusion:

CyberPeace research confirms that the viral video showing a peacock allegedly trapped in ice is not real. The clip has been created using Artificial Intelligence and is being shared on social media with a false and misleading claim.

Related Blogs

Introduction

Regulatory agencies throughout Europe have stepped up their monitoring of digital communication platforms because of the increased use of artificial intelligence in the digital domain. Messaging services have evolved into more than just messaging systems; they now serve as a gateway for AI services, business tools and digital marketplaces. In light of this evolution, the competition authority in Italy has taken action against Meta Platforms and ordered it to cease activities on WhatsApp that are deemed to restrict the ability of other companies to offer AI-based chatbots. This action highlights concerns about gatekeeping power, market foreclosure and innovation suppression. The proceeding also raises questions about how competition law applies to dominant digital platforms that leverage their own ecosystems to promote their own AI products to the detriment of competitors.

Background of the Case

In December 2025, Italy’s competition authority, the Autorità Garante della Concorrenza e del Mercato (AGCM), ordered Meta Platforms to suspend certain contractual terms governing WhatsApp. These terms allegedly prevented or restricted the operation of third-party AI chatbots on WhatsApp’s platform.

The decision was issued as an interim measure during an ongoing antitrust investigation. According to the AGCM, the disputed terms risked excluding competing AI chatbot providers from accessing a critical digital channel, thereby distorting competition and harming consumer choice.

Why WhatsApp Matters as a Digital Gateway

WhatsApp occupies a unique position within the European digital landscape. It has hundreds of millions of users throughout the European Union, making it an integral part of the infrastructure that supports communication between individual consumers and companies, as well as between companies and their service providers. AI chatbot developers depend heavily on WhatsApp because it lets them connect directly with consumers in real time, which is critical to the success of their business offerings.

In the Italian regulator's view, a corporation that controls access to such a popular communication platform has tremendous influence over innovation in that market, because it effectively operates as a gatekeeper between the company creating an innovative service and the consumer using it. If Meta is permitted to shut out competing AI chatbot developers while promoting its own offerings, those developers will likely be unable to market and distribute their innovative products at sufficient scale to remain competitive.

Alleged Abuse of Dominant Position

Under EU and national competition law, companies holding a dominant market position bear a special responsibility not to distort competition. The AGCM’s concern is that Meta may have abused WhatsApp’s dominance by:

- Restricting market access for rival AI chatbot providers

- Limiting technical development by preventing interoperability

- Strengthening Meta’s own AI ecosystem at the expense of competitors

Such conduct, if proven, could amount to an abuse under Article 102 of the Treaty on the Functioning of the European Union (TFEU). Importantly, the authority emphasised that even contractual terms—rather than explicit bans—can have exclusionary effects when imposed by a dominant platform.

Meta’s Response and Infrastructure Argument

Meta has openly condemned the Italian ruling as “fundamentally flawed,” arguing that third-party AI chatbots represent a major economic burden to the infrastructure and risk the performance, safety, and user enjoyment of WhatsApp.

Although the protection of infrastructure is a valid issue of concern, competition authorities commonly look at whether the justifications for such restrictions are appropriate and non-discriminatory. One of the principal legal issues is whether the restrictions imposed by Meta were applied in a uniform manner or whether they were selectively imposed in favour of Meta's AI services. If the restrictions are asymmetrical in application, they may be viewed as anti-competitive rather than as legitimate technical safeguards.

Link to the EU’s Digital Markets Framework

The Italian case fits into the wider EU effort to regulate the conduct of large technology companies through prior (ex-ante) regulation, as set out in the Digital Markets Act (DMA). The DMA places obligations on designated gatekeepers to make their core services available to third parties on a non-discriminatory basis, in order to maintain equitable access and interoperability.

While the Italian case has been brought under competition law, its philosophy is consistent with that of the DMA: dominant digital platforms should not use their control over their core products and services to prevent other companies from innovating. Some EU national regulators are increasingly willing to take swift action through interim measures rather than wait many years for final decisions.

Implications for AI Developers and Platforms

The Italian order signals to developers of AI-based chatbots that regulators treat competitive access to messaging services as an important issue. It also serves as a warning to large incumbents that are integrating AI into their established messaging platforms: they will not be shielded from competition law.

Additionally, the overall case showcases the growing consensus amongst regulatory agencies regarding the role of competition in the development of AI. If a handful of large companies are allowed to control both the infrastructure and the AI technology being operated on top of that infrastructure, the result will likely be the development of closed ecosystems that eliminate or greatly reduce the potential for technology diversity.

Conclusion

Italy's move against Meta highlights a significant intersection between competition law and artificial intelligence. By targeting WhatsApp's restrictive terms, the Italian antitrust authority has reinforced the principle that digital gatekeepers cannot use contractual methods to shut out competitors. As AI becomes a larger part of our day-to-day digital services, regulatory bodies will likely continue to increase their scrutiny of platform behaviour. The outcome of this investigation will affect not just Meta's AI strategy; it will also set a baseline for how European regulators balance innovation, competition and consumer choice in an increasingly AI-driven digital marketplace.

References

- https://www.reuters.com/sustainability/boards-policy-regulation/italy-watchdog-orders-meta-halt-whatsapp-terms-barring-rival-ai-chatbots-2025-12-24/

- https://techcrunch.com/2025/12/24/italy-tells-meta-to-suspend-its-policy-that-bans-rival-ai-chatbots-from-whatsapp/

- https://www.communicationstoday.co.in/italy-watchdog-orders-meta-to-halt-whatsapp-terms-barring-rival-ai-chatbots/

- https://www.techinasia.com/news/italy-watchdog-orders-meta-halt-whatsapp-terms-ai-bot

The more the internet slithers into our lives with ease and dependency, the more obscure parasites linger on with it, menacing our privacy and data. Among these digital parasites, cyber espionage, hacking, and ransom attacks have never failed to grab the headlines. These hostilities, carried out by cyber criminals, corporate juggernauts and several state and non-state actors, give them access to customers' data, damaging the digital fabric and wellbeing of netizens.

As technology continues to evolve, so does the need for robust safety measures. To tackle these emerging challenges, Korea-based Samsung Electronics has introduced a cutting-edge security tool called Auto Blocker. Introduced in the One UI 6 update, Auto Blocker offers an array of additional security features, giving users the ability to customize their device's security as per their requirements. It includes protection also known as an ‘advanced sandbox’ or ‘virtual quarantine’. Sandboxing is a safety measure that separates running programs to prevent the spread of digital vulnerabilities. It prohibits automatic execution of malicious code embedded in images. This shield now extends to third-party apps like WhatsApp and Facebook Messenger, providing better resilience against cyber-attacks in all Samsung devices.

Matter of Choice

Dr. Seungwon Shin, EVP & Head of Security Team, Mobile eXperience Business at Samsung Electronics, emphasizes the significance of user safety. He stated “At Samsung, we constantly strive to keep our users safe from security attacks, and with the introduction of Auto Blocker, users can continue to enjoy the benefits of our open ecosystem, knowing that their mobile experience is secured.”

Auto Blocker is a matter of choice. It's not a cookie-cutter solution; instead, its USP is the ability to customize the security measures of your device. Auto Blocker can be accessed through the device's settings and is activated via a toggle.

Your personal Digital Armor

One of Auto Blocker's salient features is its ability to prevent apps, including bloatware (unnecessary apps), from being installed on the device from unknown sources, a practice known as sideloading. While sideloading provides greater scope of control and better customization, it also exposes users to potential threats, such as malicious file downloads. The proactive approach of Auto Blocker disables sideloading by default. Auto Blocker serves as an extra line of defense, especially against social engineering attacks such as voice phishing (vishing). The app has an essential tool called ‘Message Guard’, engineered to combat zero-click attacks. These sophisticated attacks are executed when a message containing a malicious image is received, without any interaction from the user.

Auto Blocker also offers a wide variety of new controls to enhance the device's safety, including security scans to detect malware. Additionally, Auto Blocker prevents the installation of malware via USB cable. This ensures the device's security even when someone gains physical access to it, such as when the device is being charged in a public place.

Raising the Bar for Cyber Security

Auto Blocker testifies to Samsung's commitment to the safety and privacy of its users. It acts as an essential part of Samsung's security suite and privacy innovations, improving the overall mobile experience within the Galaxy ecosystem. It provides a safer mobile experience while allowing users greater control over their device's protection. In comparison, Apple offers a more standardized approach to privacy and security, with an emphasis on user-friendly design and a closed ecosystem. Samsung disables sideloading to combat threats, while Apple is more flexible in this regard on macOS.

In this dynamic digital space, Auto Blocker offers a tool to maintain cyber peace and resilience. It protects from a broad spectrum of digital hostilities while allowing us to embrace the new digital ecosystem crafted by Galaxy. It's a security feature that puts you in control, allowing you to determine how you fortify your digital fort to safeguard your device against digital specters like zero-click attacks, voice phishing (vishing) and malware downloads.

Samsung’s new feature emerges as armor shielding users against cyber hostilities. As a customizable security feature within the Galaxy ecosystem, it allows users to exercise greater control over their digital space, promoting a more secure and peaceful cyberspace.

Reference:

HT News Desk. (2023, November 1). Samsung unveils new Auto Blocker feature to protect devices. How does it work? Hindustan Times. https://www.hindustantimes.com/technology/samsung-unveils-new-auto-blocker-feature-to-protect-devices-how-does-it-work-101698805574773.html

Executive Summary

A video clip bearing the logo of News18 is being widely shared on social media with the claim that a serving Indian Army brigadier and his son were attacked in Delhi by an RSS-supporting mob for criticising the government over “Operation Sindoor.” The clip features an anchor allegedly explaining the motive behind the assault. However, research by the CyberPeace Research Wing found the claim to be false. The viral video has been digitally manipulated, with its audio altered to include misleading information.

Claim

An X user (@Mohammad776157) shared a video clip from Network18 on April 13, claiming that a serving Indian Army brigadier and his son were attacked in Delhi by an RSS-supporting mob for criticising the government over “Operation Sindoor.”

- https://x.com/Mohammad776157/status/2043691737609347166?s=20

- https://archive.ph/5EpbJ

To verify the claim, we extracted multiple keyframes from the viral video using the InVid tool and conducted reverse image searches via Google Lens. The same clip was found circulating across several social media platforms with similar claims.

- https://www.facebook.com/reel/2397972117364665

- https://www.instagram.com/reels/DXE4FFdjcnq/

- https://archive.ph/hjG3b

- https://archive.ph/9IkTY

Fact Check

Since the video carried the News18 logo, we examined the outlet’s official social media handles. We found the original video on its X account, where the visuals matched the viral clip. However, a detailed analysis of the original footage showed that the anchor never stated that the brigadier and his son were attacked for criticising the government over “Operation Sindoor.”

In the authentic version, the anchor reported that the assault took place in Delhi’s Vasant Enclave after the brigadier objected to two individuals consuming alcohol inside a car parked outside his residence. This clearly indicates that the audio in the viral clip was tampered with to insert a false narrative.

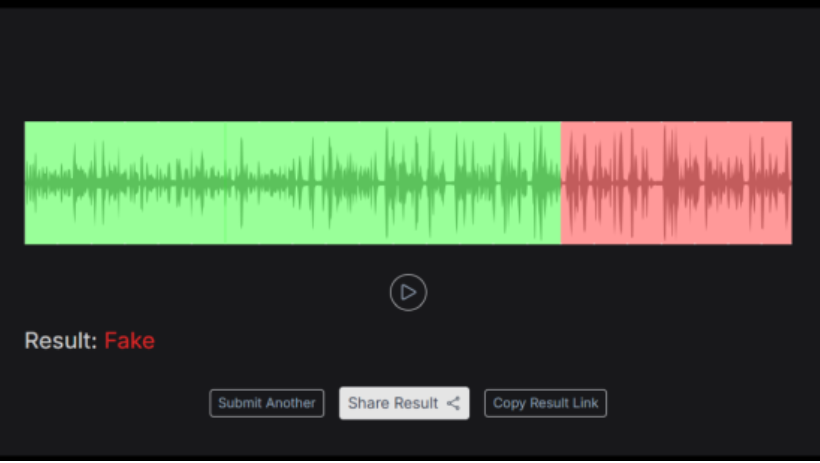

For further verification, we extracted the audio segment from the viral clip and analysed it using Resemble AI. The tool indicated that the portion describing the motive behind the attack had been digitally manipulated.

Conclusion

The viral claim is false. The video has been altered by modifying its audio to mislead viewers. In reality, the assault was not related to “Operation Sindoor” but occurred after the brigadier objected to public drinking near his residence.