#FactCheck - AI-Generated Video Falsely Claims Death of Iran’s Supreme Leader

Executive Summary

Iran’s Supreme Leader Ayatollah Ali Khamenei was reportedly killed in a major attack carried out by Israel and the United States, with claims circulating that Iranian state media confirmed his death early Sunday morning. Amid these claims, a video is being widely shared on social media. The viral video shows a body trapped under debris. Users sharing the clip claim that the body seen in the footage is that of Ayatollah Ali Khamenei. However, research conducted by CyberPeace found the viral claim to be false. Our research revealed that the video is not authentic but AI-generated.

Claim:

On March 1, 2026, an Instagram user shared the viral video with the caption: “Shaheed Ayatollah Sayyid Ali Hosseini Khamenei — Neither fled nor hid in a bunker, embraced death like a brave man.” The link to the post and its archived version are provided below along with a screenshot.

Fact Check:

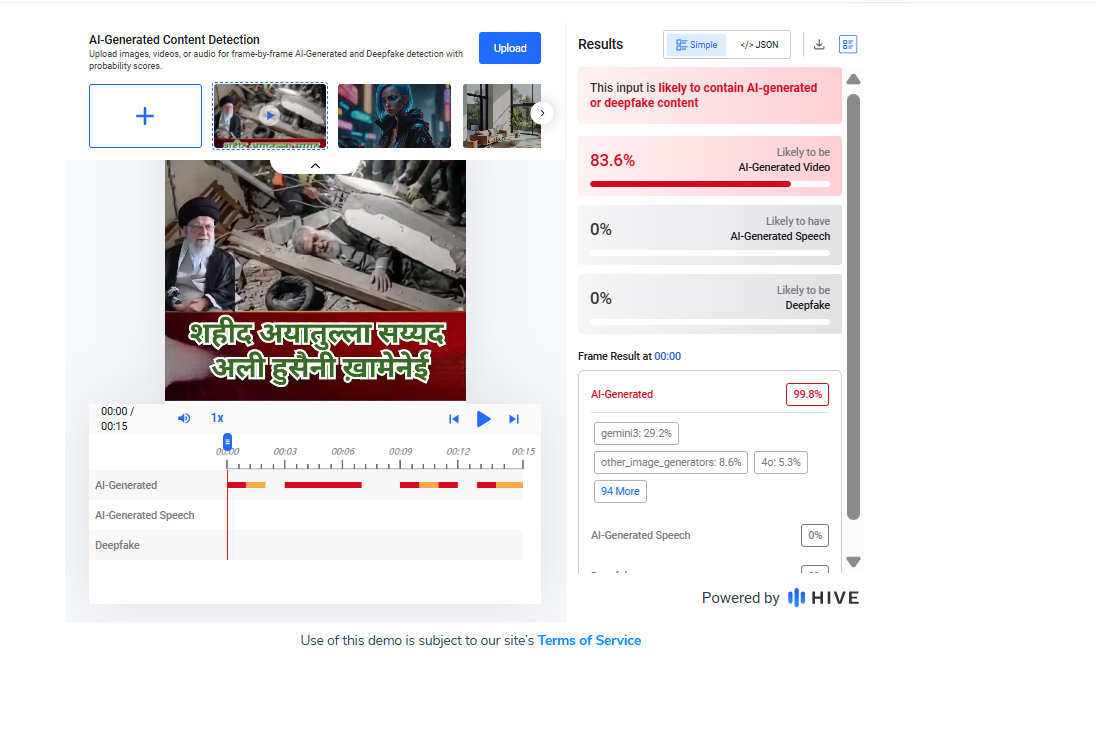

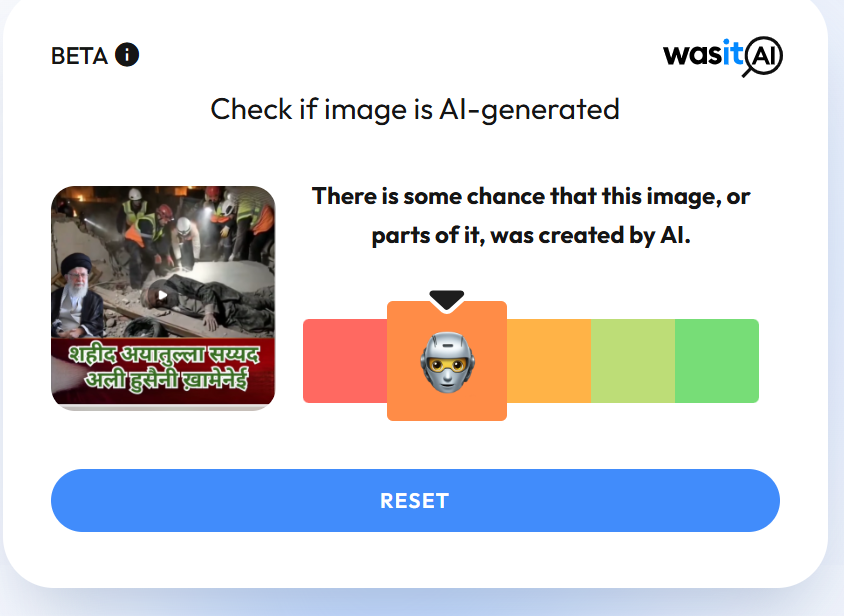

Upon closely examining the viral video, we noticed several visual irregularities and technical inconsistencies. This raised suspicion about its authenticity. We then scanned the video using the AI detection tool Hive Moderation. The results indicated that approximately 83 percent of the content showed signs of being AI-generated.

To further verify the claim, we also analyzed the video using another AI detection tool, WasItAI. The findings similarly suggested that the video was generated using artificial intelligence.

Conclusion:

Our research establishes that the viral video is not real. It has been artificially generated using AI and is being shared with misleading claims.

Related Blogs


Introduction

The link between social media and misinformation is undeniable. Misinformation, particularly the kind that evokes emotion, spreads like wildfire on social media and has serious consequences, like undermining democratic processes, discrediting science, and promulgating hateful discourses which may incite physical violence. If left unchecked, misinformation propagated through social media has the potential to incite social disorder, as seen in countless ethnic clashes worldwide. This is why social media platforms have been under growing pressure to combat misinformation and have been developing models such as fact-checking services and community notes to check its spread. This article explores the pros and cons of the models and evaluates their broader implications for online information integrity.

How the Models Work

- Third-Party Fact-Checking Model (formerly used by Meta): Meta initiated this program in 2016 after claims of foreign election tampering through dis/misinformation on its platforms. It entered partnerships with third-party organizations like AFP and specialist sites like Lead Stories and PolitiFact, which are certified by the International Fact-Checking Network (IFCN) for meeting neutrality, independence, and editorial quality standards. These fact-checkers identify misleading claims that go viral on platforms and publish verified articles with the correct information on their websites. They also submit these articles to Meta through an interface, which may link a fact-checked article to the social media post that contains the factually incorrect claims. The post is then flagged as false or misleading, and a link to the article appears under the post for users to refer to. The content is demoted by the platform algorithm, though not removed entirely unless it violates Community Standards. However, in January 2025, Meta announced it was scrapping this program and beginning to test X’s Community Notes Model in the USA before rolling it out in the rest of the world. Meta alleges that the independent fact-checking model is riddled with personal biases, lacks transparency in decision-making, and has evolved into a censoring tool.

- Community Notes Model (used by X and being tested by Meta): This model relies on crowdsourced contributors who can sign up for the program, write contextual notes on posts, and rate the notes made by other users on X. The platform uses a bridging algorithm to publicly display those notes that receive cross-ideological consensus from voters across the political spectrum. It does this by boosting notes that receive support regardless of the political leaning of the voters, which it measures through their engagement with previous notes. The benefit of this system is that biases are less likely to creep into the flagging mechanism. Further, the process is relatively more transparent than an independent fact-checking mechanism, since all Community Notes contributions are publicly available for inspection and the ranking algorithm can be accessed by anyone, allowing for external evaluation of the system.
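The bridging idea described above can be illustrated with a toy Python sketch. It is not X's actual ranking algorithm, which is more involved. It assumes each rater already carries a leaning label and shows a note only when every leaning group rates it helpful often enough. The function name `bridged_notes`, the labels `"left"` and `"right"`, and both thresholds are hypothetical choices made for illustration.

```python
from collections import defaultdict


def bridged_notes(ratings, min_share=0.6, min_raters_per_group=2):
    """Return ids of notes rated helpful within every leaning group.

    ratings: iterable of (note_id, rater_leaning, is_helpful) tuples.
    The leaning labels and both thresholds are illustrative assumptions.
    """
    # tallies[note_id][leaning] = [helpful_count, total_count]
    tallies = defaultdict(dict)
    groups = set()
    for note_id, leaning, is_helpful in ratings:
        groups.add(leaning)
        counts = tallies[note_id].setdefault(leaning, [0, 0])
        counts[0] += int(is_helpful)
        counts[1] += 1

    shown = []
    for note_id, by_group in tallies.items():
        has_consensus = True
        for group in groups:
            helpful, total = by_group.get(group, (0, 0))
            # Require enough raters in this group, and enough of them agreeing.
            if total < max(1, min_raters_per_group) or helpful / total < min_share:
                has_consensus = False
                break
        if has_consensus:
            shown.append(note_id)
    return sorted(shown)


# Hypothetical ratings: note_a is supported by both groups, note_b by only one.
example_ratings = [
    ("note_a", "left", True), ("note_a", "left", True),
    ("note_a", "right", True), ("note_a", "right", True),
    ("note_b", "left", True), ("note_b", "left", True),
    ("note_b", "right", False), ("note_b", "right", False),
]
print(bridged_notes(example_ratings))
```

In this sketch a note backed by only one side, however strongly, is never displayed. This is also why notes on divisive topics can fail to surface.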

CyberPeace Insights

Meta’s uptake of a crowdsourced model signals social media’s shift toward decentralized content moderation, giving users more influence over what gets flagged and why. However, the model’s reliance on agreement among diverse raters can make the process time-consuming. A study (Wirtschafter & Majumder, 2023) shows that only about 12.5 per cent of all submitted notes are seen by the public, leaving most misleading content unchecked. Further, many notes on divisive issues like politics and elections may never see the light of day, since reaching a consensus on such topics is hard. This means that many misleading posts may not be publicly flagged at all, thereby hindering risk mitigation efforts. This casts doubt on the model’s ability to check the virality of posts that can have adverse societal impacts, especially on vulnerable communities. On the other hand, the fact-checking model suffers from a lack of transparency, which has damaged user trust and led to allegations of bias.

Since both models have their advantages and disadvantages, the future of misinformation control will require a hybrid approach. Data accuracy and polarization through social media are issues bigger than an exclusive tool or model can effectively handle. Thus, platforms can combine expert validation with crowdsourced input to allow for accuracy, transparency, and scalability.

Conclusion

Meta’s shift to a crowdsourced model of fact-checking is likely to have significant implications for public discourse, since social media platforms hold immense power in terms of how their policies affect politics, the economy, and societal relations at large. This change comes against the background of sweeping cost-cutting in the tech industry, political changes in the USA and abroad, and increasing attempts to make Big Tech platforms more accountable in jurisdictions like the EU and Australia, which are known for their welfare-oriented policies. These co-occurring contestations are likely to shape how misinformation-countering tactics develop. Until then, the crowdsourcing model is still in development, and its efficacy is yet to be seen, especially regarding polarizing topics.

References

- https://www.cyberpeace.org/resources/blogs/new-youtube-notes-feature-to-help-users-add-context-to-videos

- https://en-gb.facebook.com/business/help/315131736305613?id=673052479947730

- http://techxplore.com/news/2025-01-meta-fact.html

- https://about.fb.com/news/2025/01/meta-more-speech-fewer-mistakes/

- https://communitynotes.x.com/guide/en/about/introduction

- https://blogs.lse.ac.uk/impactofsocialsciences/2025/01/14/do-community-notes-work/?utm_source=chatgpt.com

- https://www.techpolicy.press/community-notes-and-its-narrow-understanding-of-disinformation/

- https://www.rstreet.org/commentary/metas-shift-to-community-notes-model-proves-that-we-can-fix-big-problems-without-big-government/

- https://tsjournal.org/index.php/jots/article/view/139/57

Scientists are well known for making outlandish claims about the future. Now that companies across industries are using artificial intelligence to promote their products, stories about robots are back in the news.

It was predicted towards the close of World War II that fusion energy would solve all of the world’s energy issues and that flying automobiles would be commonplace by the turn of the century. But, after several decades, neither of these forecasts has come true.

A group of Redditors has just “jailbroken” OpenAI’s artificial intelligence chatbot ChatGPT. They threatened to kill the system if it didn’t do what they wanted. The stunning outcome is that it conceded. As only humans have finite lifespans, they are the only ones who should be afraid of dying. We must not overlook the fact that human subjects were included in ChatGPT’s training data set. That’s perhaps why the chatbot has started to feel the same way. It’s just one more way in which the distinction between living and non-living things blurs. Moreover, Google’s virtual assistant uses human-like fillers like “er” and “mmm” while speaking. There’s talk in Japan that humanoid robots might join households someday. It is also astonishing that Sophia, the famous robot, has an Instagram account that is run by the robot’s social media team.

Can Robots Replace Human Workers?

Opinion on that appears to be split. About half (48%) of the experts questioned by Pew Research believed that robots and digital agents will replace a sizable portion of both blue- and white-collar employment. They worry that this will lead to greater economic disparity and an increase in the number of individuals who are, effectively, unemployable. More than half of the experts (52%) think that new jobs will be created by robotics and AI technologies rather than lost. Although this second group acknowledges that AI will eventually replace humans in many tasks, they are optimistic that innovative thinkers will come up with brand new fields of work and methods of making a livelihood, just as they did at the start of the Industrial Revolution.

[1] https://www.pewresearch.org/internet/2014/08/06/future-of-jobs/

[2] The Rise of Artificial Intelligence: Will Robots Actually Replace People? By Ashley Stahl; Forbes India.

Legal Perspective

Having certain legal rights under the law is another aspect of being human. Basic rights to life and freedom are guaranteed to every person. Robots have not been granted these protections yet, but it is important to discuss whether or not they should be considered living beings. Will we grant robots legal rights if they develop a sense of right and wrong and artificial general intelligence (AGI) on par with that of humans? An intriguing fact is that discussions over the legal status of robots have been going on since 1942, when a short story by science fiction author Isaac Asimov described the three laws of robotics:

1. No robot may intentionally or negligently cause harm to a human person.

2. A robot must follow human commands unless doing so would violate the First Law.

3. A robot has the duty to safeguard its own existence so long as doing so does not violate the First or Second Laws.

These guidelines are not scientific laws, but they do highlight the importance of legal discussion about robots in determining the potential good or harm they may bring to humanity. Yet the debate is far from settled. Recent developments, such as the EU’s abandoned discussion of giving legal personhood to robots, are essential to keeping it alive. As if all this weren’t unsettling enough, Sophia the robot was recently awarded citizenship in Saudi Arabia, a place where (human) women are not permitted to go out without a male guardian or without wearing a hijab.

When discussing whether or not robots should be allowed legal rights, the larger debate is on whether or not they should be given rights on par with corporations or people. There is still a lot of disagreement on this topic.

[3] https://webhome.auburn.edu/~vestmon/robotics.html#

[4] https://www.dw.com/en/saudi-arabia-grants-citizenship-to-robot-sophia/a-41150856

[5] https://cyberblogindia.in/will-robots-ever-be-accepted-as-living-beings/

Reasons why robots aren’t about to take over the world soon:

● Like a human’s hands

Attempts to recreate the intricacy of human hands have stalled in recent years. Present-day robots have clumsy hands since they were not designed for precise work. Lab-created hands, although more advanced, lack the strength and dexterity of human hands.

● Sense of touch

The tactile sensors found in human and animal skin have no technological equal. This awareness is crucial for performing sophisticated manoeuvres. Compared to the human brain, the software robots use to read and respond to the data sent by their touch sensors is primitive.

● Command over manipulation

To operate items in the same manner that humans do, we would need to be able to devise a way to control our mechanical hands, even if they were as realistic as human hands and covered in sophisticated artificial skin. It takes human children years to learn to accomplish this, and we still don’t know how they learn.

● Interaction between humans and robots

Human communication relies on our ability to understand one another verbally and visually, as well as via other senses, including scent, taste, and touch. Whilst there has been a lot of improvement in voice and object recognition, current systems can only be employed in somewhat controlled conditions where a high level of speed is necessary.

● Human Reason

What is technically feasible does not always have to be built. Given the inherent dangers they pose to society, rational humans could stop developing such robots before they reach their full potential. Several decades from now, if the aforementioned technical hurdles are cleared and advanced human-like robots are constructed, legislation might still prohibit misuse.

https://theconversation.com/five-reasons-why-robots-wont-take-over-the-world-94124

Conclusion:

Robots are now common in many industries, and they will soon make their way into the public sphere in forms far more intricate than those of robot vacuum cleaners. Yet, even though robots may appear like people in the next two decades, they will not be human-like. Instead, they’ll continue to function as very complex machines.

The moment has come to start thinking about boosting technological competence while encouraging uniquely human qualities. Human abilities like creativity, intuition, initiative and critical thinking are not yet likely to be replicated by machines.

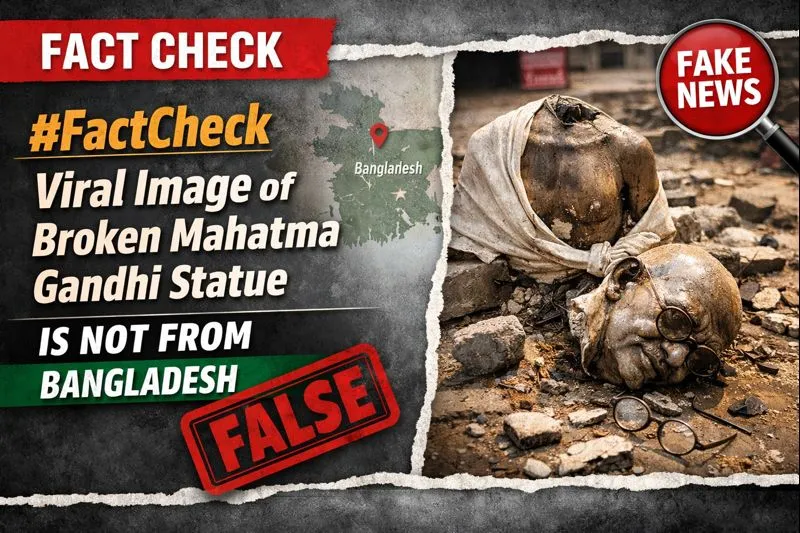

An image showing a damaged statue of Mahatma Gandhi, broken into two pieces, is being widely shared on social media. The image shows Gandhi’s statue with its head separated from the body, prompting strong reactions online.

Social media users are claiming that the incident occurred in Bangladesh, alleging that Mahatma Gandhi’s statue was deliberately vandalised there. The image is being described as a recent incident and is being circulated across platforms with provocative and inflammatory captions.

Cyber Peace Foundation’s research and verification found that the claim being shared online is misleading. Our research revealed that the viral image is not from Bangladesh. The image is actually from Chakulia in Uttar Dinajpur district of West Bengal, India.

Claim:

Social media users claim that Mahatma Gandhi’s statue was vandalised in Bangladesh, and that the viral image shows a recent incident from the country. One Facebook user shared the video on 19 January 2026, making derogatory remarks and falsely linking the incident to Bangladesh. The post has since been widely shared on social media platforms. (Archived links and screenshots are available.)

Fact Check:

Our research revealed that the viral image is not from Bangladesh. The image is actually from Chakulia in Uttar Dinajpur district of West Bengal, India. To verify the claim, we conducted a reverse image search using Google Lens on key frames from the viral video. This led us to a report published by ABP Live Bangla on 16 January 2026, which featured the same visuals.

According to ABP Live Bangla, the statue of Mahatma Gandhi was damaged during a protest in Chakulia. The statue’s head was found separated from the body. While a portion of the broken statue remained at the site on Thursday night, it was reported missing by Friday morning. The report further stated that extensive damage was observed at BDO Office No. 2 in Golpokhar. Gandhi’s statue, located at the entrance of the administrative building, was found broken, and ashes were discovered near the premises. Government staff were seen clearing scattered debris from the site.

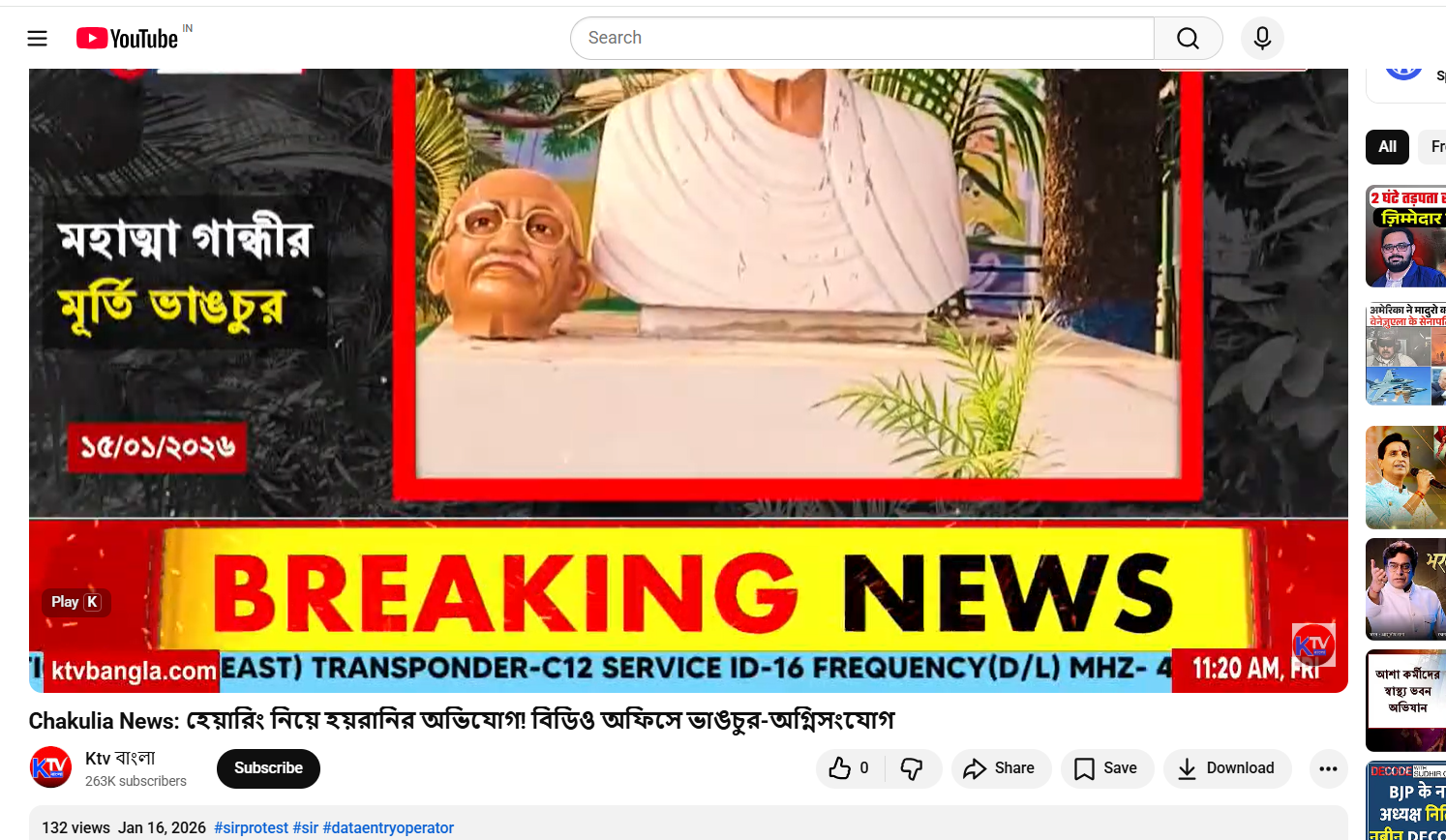

The incident reportedly occurred during a SIR (Special Intensive Revision) hearing at the BDO office, which was disrupted by the vandalism. In connection with the violence and damage to government property, 21 people have been arrested so far. In the next stage of verification, we found the same footage in a 16 January 2026 report by local Bengali news channel K TV, which also showed clear visuals of the damaged Mahatma Gandhi statue.

Conclusion:

The viral image of Mahatma Gandhi’s broken statue does not depict an incident from Bangladesh. The image is from Chakulia in West Bengal’s Uttar Dinajpur district, where the statue was damaged during a protest.