Agentic AI and the New Attack Surface

Introduction

Agentic AI systems are autonomous systems that can plan, make decisions, and take actions by interacting with external tools and environments. But they shift the nature of risk by blurring the lines among input, decision, and execution. A conventional model generates an output and stops. An agent takes input, makes plans, invokes tools, updates its state and repeats the cycle. This creates a system where decisions are continuously revised through interaction with external tools and environments, rather than being fixed at the point of input.

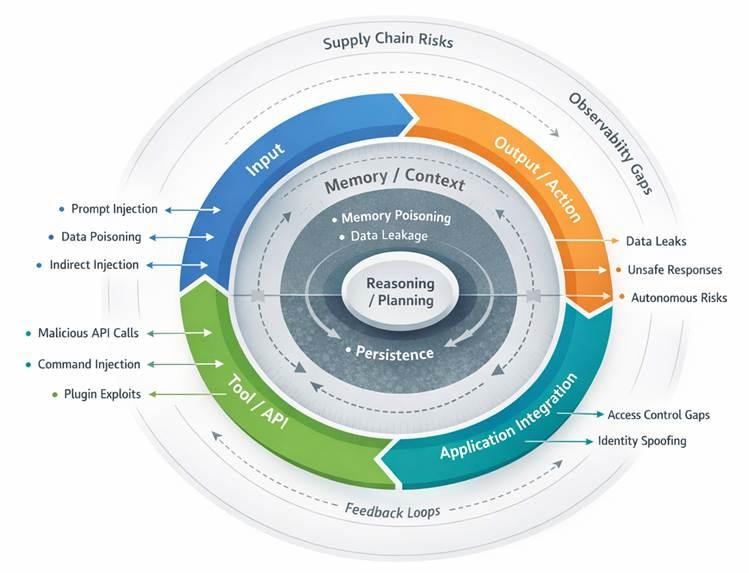

This means the attack surface expands in size and becomes more dynamic. Instead of remaining confined to components as in traditional computational systems, they spread in layers and can continue to grow through time. To understand this shift, the system can be analysed through functional layers such as inputs, memory, reasoning, and execution, while recognising that risk does not remain isolated within these layers but emerges through their interaction.

Agentic AI Attack Surface

A layered view of how risks emerge across input, memory, reasoning, execution, and system integration, including feedback loops and cross-system dependencies that amplify vulnerabilities.

Input Layer: Where Untrusted Data Becomes Control

The entry point of an agent is no longer one prompt. The documents, APIs, files, system logs and the outputs of other agents can now be considered input. This diversity is significant due to the fact that every source of input carries its own trust assumptions, and in the majority of cases, they are weak.

The most obvious threat is prompt injection, where inputs are treated as instructions rather than data. Since inputs are treated as instructions, a virus, a malicious webpage, or a document can contain instructions that override system goals without necessarily being detected as something harmful.

Indirect prompt injection extends this risk beyond direct user interaction. Instead of targeting the interface, attackers compromise the retrieval process by embedding malicious instructions within external data sources. When the agent retrieves and processes the data, it treats the embedded content as legitimate input. As a result, the attack is executed through normal reasoning processes, allowing the system to act on untrusted data without recognising the manipulation.

Data poisoning also occurs at runtime. In contrast to classical poisoning (where training data is manipulated), runtime poisoning distorts the agent’s perception of its environment as it runs. This can change decisions without causing apparent failures.

Obfuscation introduces another indirect attacker vector. Encoded instructions or complicated forms may bypass human review but remain readable to the model. This creates asymmetry whereby the system knows more about the attack than those operating it. Once compromised at this layer, the agent implements compromised instructions which affect downstream operations.

Context and Memory: Persistence of Influence

Agentic systems depend on memory to operate efficiently. They often retain context across sessions and frequently store information between sessions.

This introduces a different type of risk: persistence. Through memory poisoning, attackers can insert false or adversarial information into sorted context, which then influences future decisions. Unlike prompt injection, which is often limited to a single interaction, this effect carries forward. Over time, the agent begins to operate on a distorted internal state, shaping decisions in ways that may not be immediately visible.

Another issue is cross-session leakage. Information in a particular context may be replayed in a different context when memory is being shared or there is insufficient memory separation. This is specifically dangerous in those systems that combine retrieval and long-term storage. The context management in itself becomes a weakness. Agents are required to make decisions on what to retain and what to discard. This is susceptible to attackers who can flood the context or manipulate what is still visible and indirectly affect reasoning.

The underlying problem is structural. Memory turns data into a state. Once state is corrupted, the system cannot easily distinguish valid knowledge from adversarial influence.

The issue is structural. Memory converts temporary data into a persistent state. Once this state is weakened, the system cannot reliably separate valid information from adversarial influence, making recovery significantly more difficult.

Reasoning and Planning: Manipulating Intent Without Breaking Logic

The reasoning layer is where agentic AI stands apart from traditional systems. The model no longer reacts to inputs alone. It actively breaks down objectives, analyses alternatives, and ranks actions.

At the reasoning stage, the nature of risk shifts. The concern is no longer limited to injecting instructions, but to influencing how decisions are made. One example is goal manipulation, where the agent subtly reinterprets its objective and produces outcomes that are technically correct but strategically harmful. Reasoning hijacking operates within intermediate steps, altering how constraints are evaluated or how trade-offs are prioritised. The system may remain internally consistent, which makes such deviations difficult to detect.

Tool selection becomes a critical control point. Agents decide which tools to use and when, so influencing these choices can redirect execution without directly accessing the tools themselves. Hallucinations also take on a different role here. In static systems, they remain errors. In agentic systems, they can trigger actions. A perceived need or incorrect judgement can translate into real-world consequences.

This layer introduces probabilistic failure. The system is not fully weakened, but it is nudged towards decisions that appear reasonable yet are incorrect. The risk lies in how those decisions are justified.

Tool and Execution: When Decisions Gain Reach

Once an agent begins interacting with tools, its behaviour extends beyond the model into external systems. APIs, databases, and services become part of the execution path.

One key risk is the use of unauthorised tools. When agents operate with broad permissions, any manipulation of the upstream can be converted into real-world actions. This makes access control a central security concern. Command injection also takes a different form here. The agent generates commands based on its reasoning, so if that reasoning is compromised, the resulting actions may still appear valid despite being harmful.

External tool outputs introduce another risk. If these systems return corrupted or misleading data, the agent may accept it without verification and incorporate it into its decisions. It is also becoming increasingly reliant on third-part tools and plugins adds to this exposure. If these components are compromised, they can affect behaviour without directly attacking the core system, creating a supply-side risk.

At this stage, the agent effectively operates as an insider. It holds legitimate credentials and interacts with systems in expected ways, making misuse harder to identify.

Application and Integration: System-Level Exposure

Agentic systems rarely operate in isolation. They are embedded in larger environments, interacting with identity systems, business logic, and operational workflows.

Access control becomes a major vulnerability. Agents tend to operate across multiple systems with various permission models, creating irregularities that can be exploited. Risks also arise from identity and delegation. In case an agent is operating on behalf of a user, then any vulnerabilities in authentication or session management can allow attackers to assume that authority.

Workflow execution amplifies these risks. Agents can initiate multi-step processes such as transactions, updates, or approvals. Manipulating a single step can change the result of the entire workflow. As integrations increase, so do the number of interaction points, making cumulative risk harder to track.

At this layer, failures are not isolated. They propagate into business operations, making consequences harder to contain.

Output and Action: Where Failures Become Visible

The output layer is where failures become visible, though they rarely originate there.

Data leakage has been a key concern. Agents may disclose information they are allowed to access, especially when tasks boundaries are not clearly defined. Misinformation and unsafe outputs are also important, particularly when outputs directly influence actions or decisions.

Generated code and commands introduce execution risk. If outputs are used without validation, errors or manipulations can have system-level effects. The shift towards autonomous action increases this risk, as small upstream deviations can lead to significant consequences without human intervention. This layer reflects symptoms rather than root causes. Addressing it alone does not reduce the underlying risk.

Beyond Layers: The Missing Dimension

A layered view helps, but it does not capture the full picture. Agentic systems are defined by continuous interaction across layers.

The key missing dimension is the runtime loop. Inputs shape reasoning, reasoning drives action, and actions feed back into both reasoning and memory. These cycles create feedback loops, where small manipulations may escalate over time. This also reduces observability. With multiple interacting components, it becomes difficult to trace cause and effect or identify where failures originate.

Supply chain dependencies add another layer of risk. Models, datasets, APIs, and plugins each introduce their own points of failure. A compromise at any of these points can propagate across the system. The attack surface also includes governance. Weak supervision, unclear responsibility, or excessive autonomy increase overall risk. Human control is not external to the system; it is part of its security.

Conclusion: Structuring the Attack Surface

Agentic AI expands the attack surface beyond traditional systems. It is both recursive and stateful. Risk does not just accumulate across layers; it moves and changes as the system operates.

Any useful representation must go beyond a linear stack. It should capture feedback loops, persistent state, and cross-layer dependencies that characterise the way these systems actually behave. The system is not a pipeline but a cycle. That is where both its capability and its risk emerge.

.webp)